Feiyue Huang

ACFD: Asymmetric Cartoon Face Detector

Jul 02, 2020

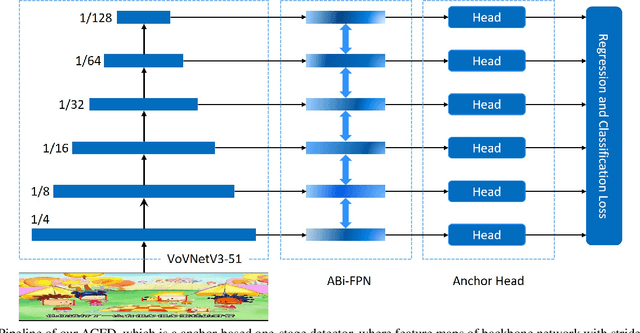

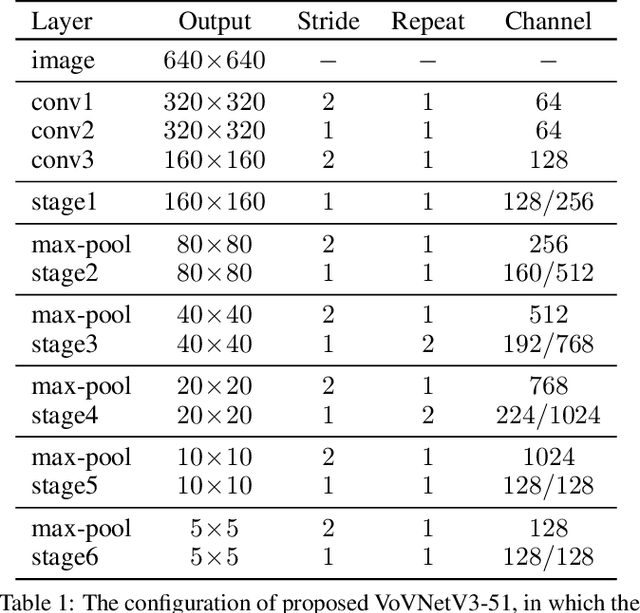

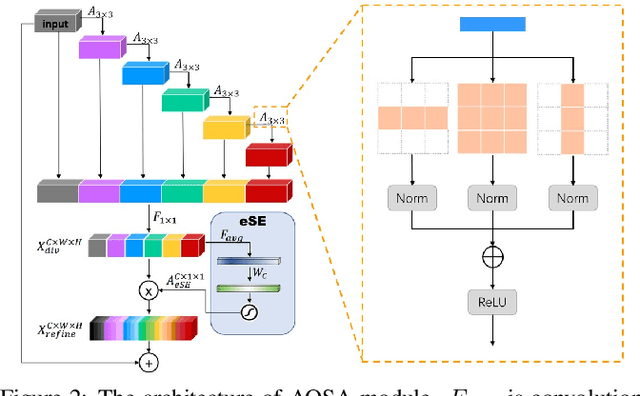

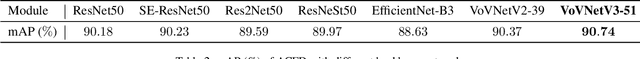

Abstract: Cartoon face detection is more challenging than human face detection because it involves many difficult scenarios. Targeting the characteristics of cartoon faces, such as the large variation among faces, we propose an asymmetric cartoon face detector named ACFD. It consists of the following modules: a novel backbone, VoVNetV3, composed of several asymmetric one-shot aggregation (AOSA) modules; an asymmetric bi-directional feature pyramid network (ABi-FPN); a dynamic anchor match (DAM) strategy; and the corresponding margin binary classification (MBC) loss. In particular, to generate features with diverse receptive fields, VoVNetV3 extracts multi-scale pyramid features, which ABi-FPN then fuses and enhances simultaneously to handle faces in extreme poses and with disparate aspect ratios. In addition, DAM matches enough high-quality anchors to each face, and MBC provides strong discriminative power. With these modules, ACFD achieves 1st place on the detection track of the 2020 iCartoon Face Challenge under the constraints of a 200MB model size, a 50ms inference time per image, and no pretrained models.

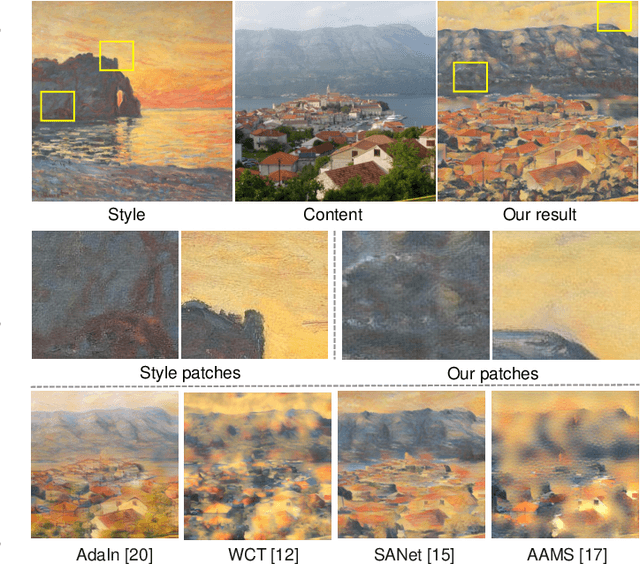

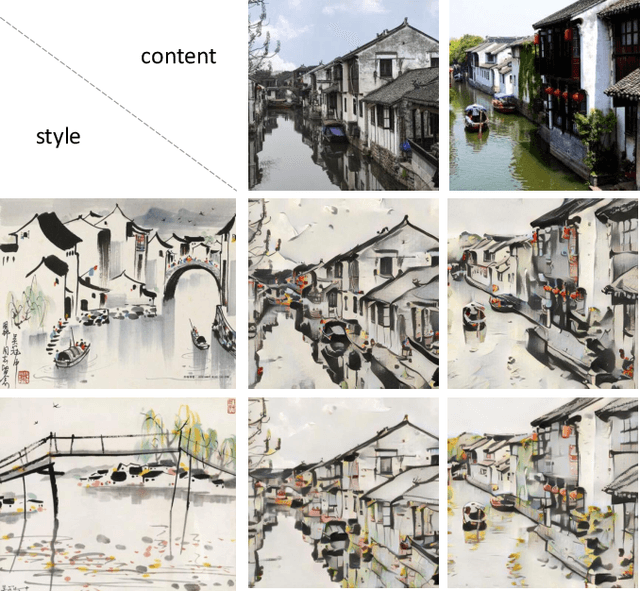

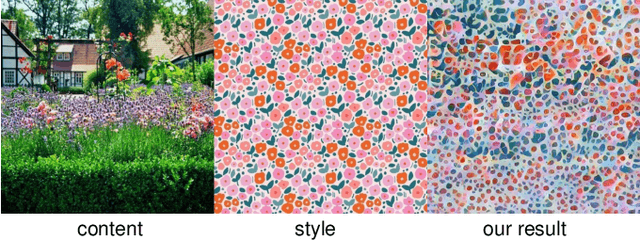

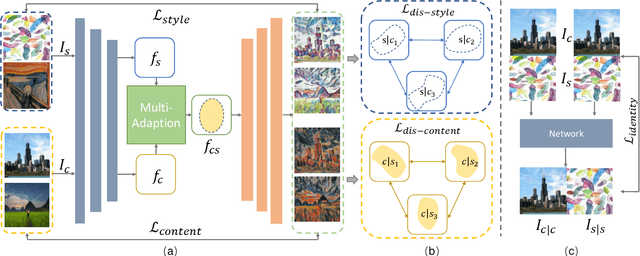

Arbitrary Style Transfer via Multi-Adaptation Network

May 27, 2020

Abstract: Arbitrary style transfer is a significant topic with both research value and application prospects. Given a content image and a reference style painting, a desirable style transfer renders the content image with the color tone and vivid stroke patterns of the style painting while simultaneously maintaining the detailed content structure. Commonly, style transfer approaches first learn content and style representations of the content and style references and then generate the stylized images guided by these representations. In this paper, we propose the multi-adaptation network, which involves two Self-Adaptation (SA) modules and one Co-Adaptation (CA) module. The SA modules adaptively disentangle the content and style representations: the content SA module uses position-wise self-attention to enhance the content representation, and the style SA module uses channel-wise self-attention to enhance the style representation. The CA module rearranges the distribution of the style representation according to the distribution of the content representation by calculating the local similarity between the disentangled content and style features in a non-local fashion. Moreover, a new disentanglement loss function enables our network to extract the main style patterns to adapt to various content images and to extract exact content features to adapt to various style images. Various qualitative and quantitative experiments demonstrate that the proposed multi-adaptation network leads to better results than state-of-the-art style transfer methods.

DGD: Densifying the Knowledge of Neural Networks with Filter Grafting and Knowledge Distillation

Apr 26, 2020

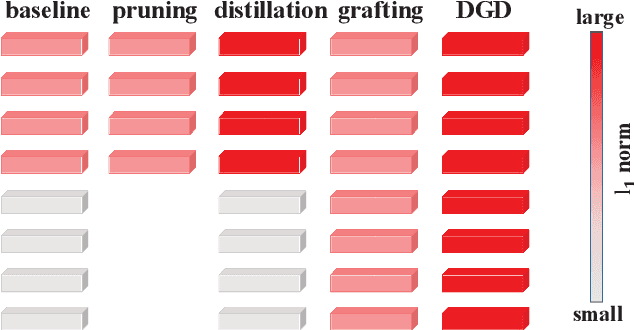

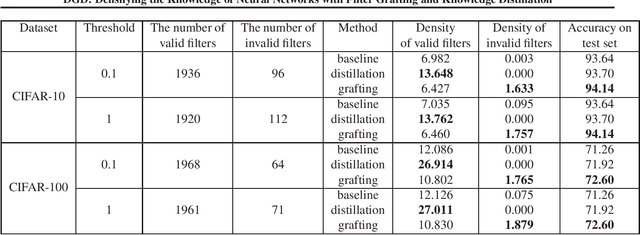

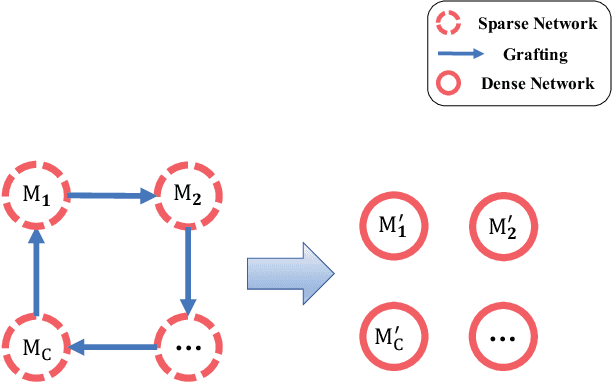

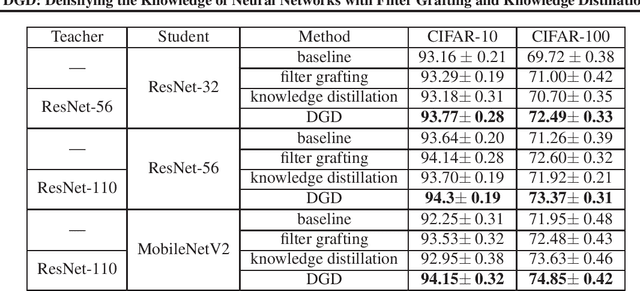

Abstract: With a fixed model structure, knowledge distillation and filter grafting are two effective ways to boost single-model accuracy. However, the working mechanisms of distillation and grafting, and the differences between them, have not been fully unveiled. In this paper, we evaluate the effect of distillation and grafting at the filter level, and find that the impacts of the two techniques are surprisingly complementary: distillation mostly enhances the knowledge of valid filters, while grafting mostly reactivates invalid filters. This observation guides us to design a unified training framework called DGD, where distillation and grafting are naturally combined to increase the knowledge density inside the filters given a fixed model structure. Through extensive experiments, we show that the knowledge-densified network in DGD shares the advantages of both distillation and grafting, lifting the model accuracy to a higher level.
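
As an illustration of the distillation half of this idea only, the following is a minimal sketch of a generic soft-target distillation loss. The abstract does not specify the loss used in DGD, so the temperature-scaled KL form, the function names, and the default temperature are all assumptions made here for illustration; grafting is not shown.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """KL(teacher || student) on temperature-softened class distributions.

    Assumption: a generic soft-target formulation, not necessarily DGD's.
    The T^2 factor keeps gradient magnitudes comparable across temperatures.
    """
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(teacher, student) if p > 0)
    return temperature ** 2 * kl
```

The loss is zero when the student reproduces the teacher's logits and grows as the two softened distributions diverge.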

CurricularFace: Adaptive Curriculum Learning Loss for Deep Face Recognition

Apr 01, 2020

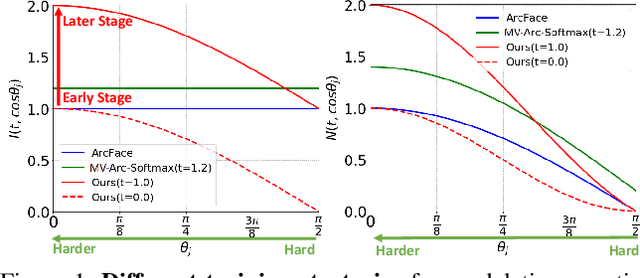

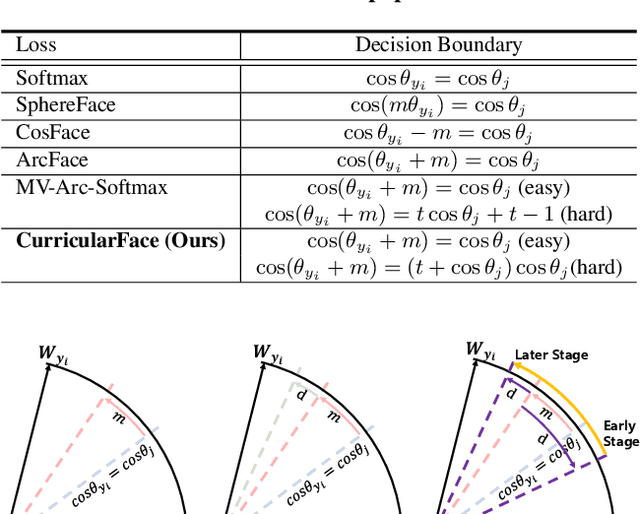

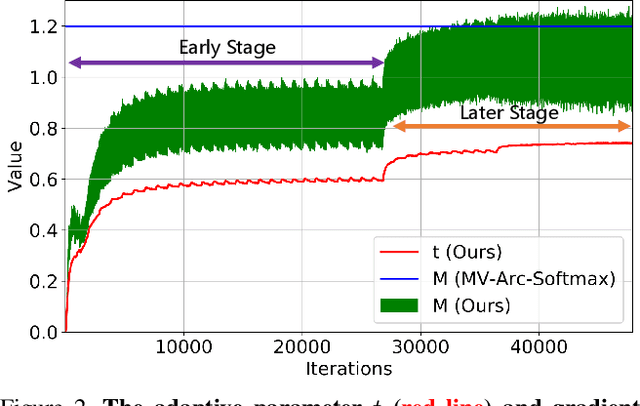

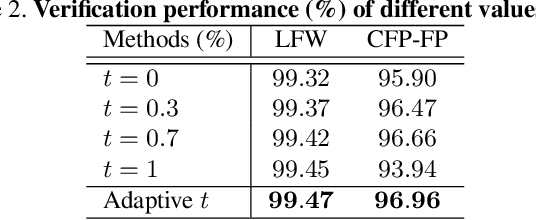

Abstract: As an emerging topic in face recognition, designing margin-based loss functions can increase the feature margin between different classes for enhanced discriminability. More recently, mining-based strategies have been adopted to emphasize misclassified samples, achieving promising results. However, throughout the training process, prior methods either do not explicitly emphasize samples based on their importance, which leaves the hard samples not fully exploited, or explicitly emphasize semi-hard/hard samples even at the early training stage, which may lead to convergence issues. In this work, we propose a novel Adaptive Curriculum Learning loss (CurricularFace) that embeds the idea of curriculum learning into the loss function to achieve a novel training strategy for deep face recognition, which mainly addresses easy samples in the early training stage and hard ones in the later stage. Specifically, our CurricularFace adaptively adjusts the relative importance of easy and hard samples during different training stages. In each stage, different samples are assigned different importance according to their difficulty. Extensive experimental results on popular benchmarks demonstrate the superiority of our CurricularFace over state-of-the-art competitors.
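
The abstract does not state the loss formula, so the sketch below is only one way such a curriculum could be expressed. It assumes that a negative-class cosine similarity is left unchanged when the sample is easy and is rescaled by a stage-dependent factor `t + cos_neg` when the sample is hard, with `t` tracked as a moving average of the positive similarities. The function names, the moving-average form, and the `momentum` value are hypothetical.

```python
def modulate_negative(cos_neg, cos_pos_with_margin, t):
    """Reweight one negative-class cosine similarity.

    Easy sample (negative below the margin-adjusted positive): unchanged.
    Hard sample: scaled by (t + cos_neg), which is small while t is near 0
    (early training) and larger once t has grown (late training).
    """
    if cos_pos_with_margin >= cos_neg:
        return cos_neg
    return cos_neg * (t + cos_neg)


def update_t(t, mean_positive_cos, momentum=0.99):
    """Moving average of the mean positive similarity (assumed schedule)."""
    return momentum * t + (1.0 - momentum) * mean_positive_cos


# Same hard negative at two hypothetical training stages.
early = modulate_negative(0.5, 0.3, t=0.0)  # de-emphasized
late = modulate_negative(0.5, 0.3, t=0.8)   # emphasized
```

Under these assumptions the same hard negative contributes less than its raw similarity early in training and more than its raw similarity late in training, which matches the easy-first, hard-later behavior the abstract describes.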

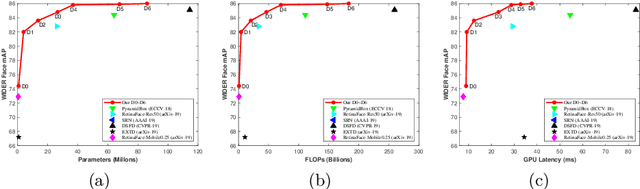

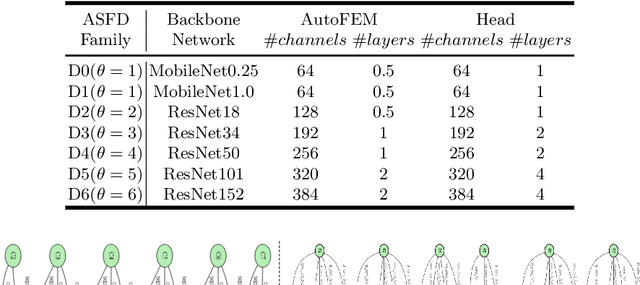

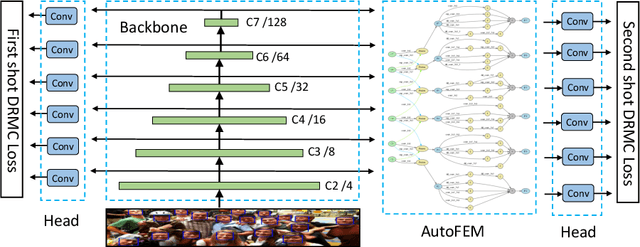

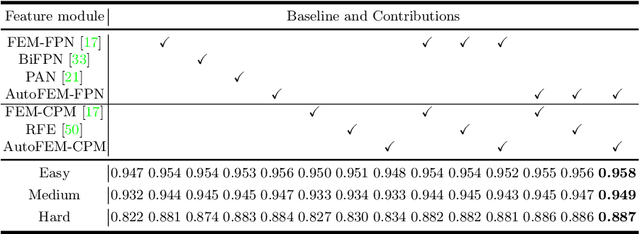

ASFD: Automatic and Scalable Face Detector

Mar 31, 2020

Abstract: In this paper, we propose a novel Automatic and Scalable Face Detector (ASFD), which combines neural architecture search techniques with a new loss design. First, we propose an automatic feature enhancement module named Auto-FEM, obtained by improved differential architecture search, which allows efficient multi-scale feature fusion and context enhancement. Second, we use a Distance-based Regression and Margin-based Classification (DRMC) multi-task loss to predict accurate bounding boxes and learn highly discriminative deep features. Third, we adopt compound scaling methods and uniformly scale the backbone, feature modules, and head networks to develop a family of ASFD detectors, which are consistently more efficient than state-of-the-art face detectors. Extensive experiments conducted on popular benchmarks, e.g., WIDER FACE and FDDB, demonstrate that our ASFD-D6 outperforms prior strong competitors, and our lightweight ASFD-D0 runs at more than 120 FPS with MobileNet for VGA-resolution images.

Architecture Disentanglement for Deep Neural Networks

Mar 30, 2020

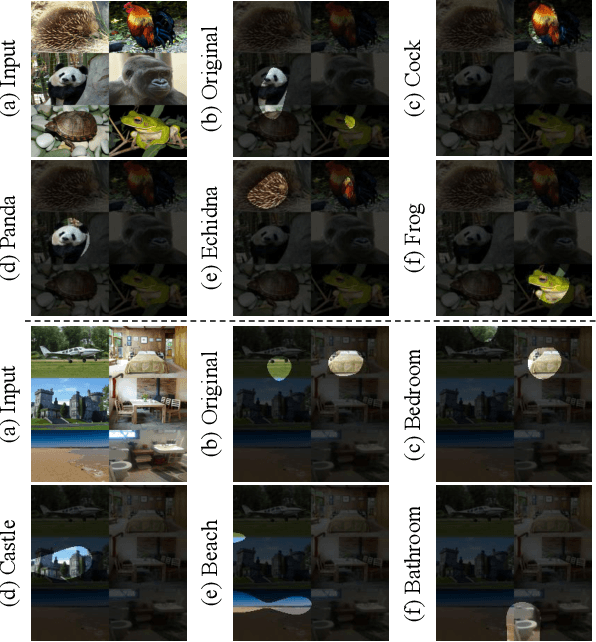

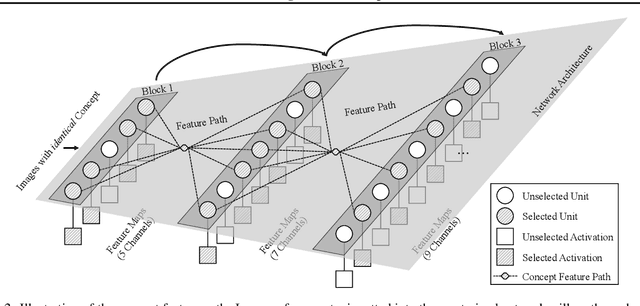

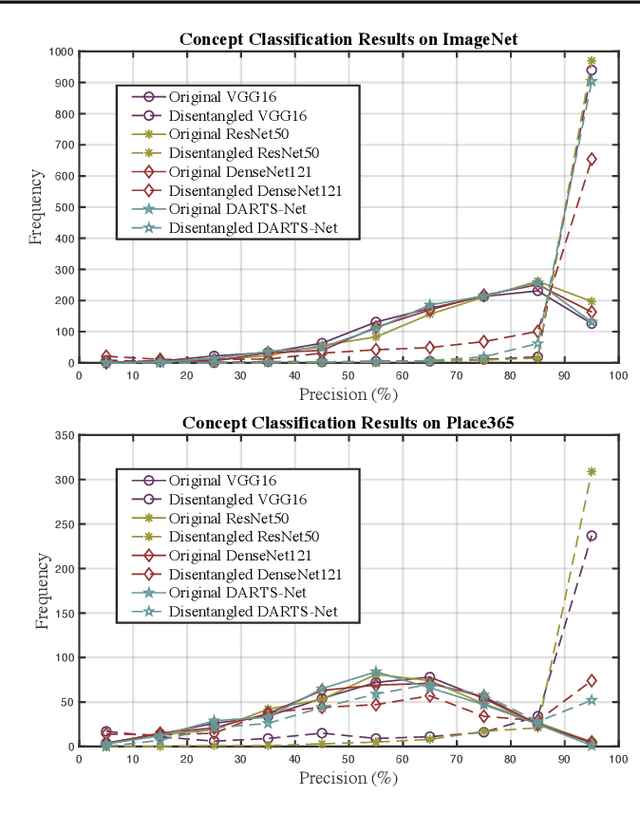

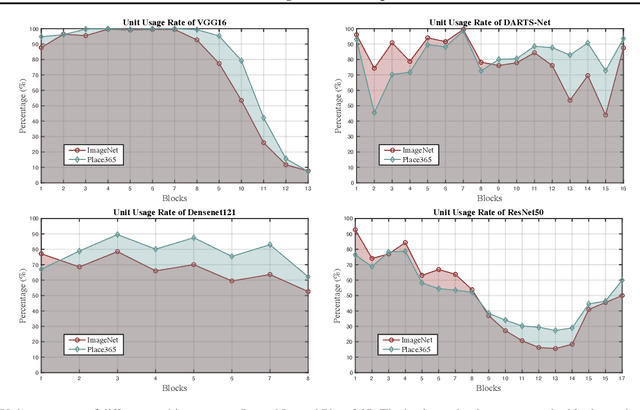

Abstract: Deep Neural Networks (DNNs) are central to deep learning, and understanding their internal working mechanism is crucial if they are to be used for emerging applications in medical and industrial AI. To this end, the current line of research typically involves linking semantic concepts to a DNN's units or layers. However, this fails to capture the hierarchical inference procedure throughout the network. To address this issue, we introduce the novel concept of Neural Architecture Disentanglement (NAD) in this paper. Specifically, we disentangle a pre-trained network into hierarchical paths corresponding to specific concepts, forming the concept feature paths, i.e., the paths along which a concept flows from the bottom to the top layers of a DNN. Such paths further enable us to quantify the interpretability of DNNs according to the learned diversity of human concepts. We select four types of representative architectures ranging from handcrafted to AutoML-based, and conduct extensive experiments on object-based and scene-based datasets. Our NAD sheds important light on the information flow of semantic concepts in DNNs, and provides a fundamental metric that will facilitate the design of interpretable network architectures. Code will be available at: https://github.com/hujiecpp/NAD.

Towards Palmprint Verification On Smartphones

Mar 30, 2020

Abstract: With the rapid development of mobile devices, smartphones have gradually become an indispensable part of people's lives. Meanwhile, biometric authentication has been shown to be an effective method for establishing a person's identity with high confidence. Hence, biometric technologies for smartphones have recently become increasingly sophisticated and popular. It is noteworthy, however, that the application potential of palmprints for smartphones is seriously underestimated. Studies in the past two decades have shown that palmprints have outstanding merits in uniqueness and permanence, and have high user acceptance. Yet studies specializing in palmprint verification for smartphones are still quite sporadic, especially when compared to face- or fingerprint-oriented ones. In this paper, aiming to fill this research gap, we conducted a thorough study of palmprint verification on smartphones, and our contributions are twofold. First, to facilitate the study of palmprint verification on smartphones, we established an annotated palmprint dataset named MPD, which was collected with smartphones of multiple brands in two separate sessions with various backgrounds and illumination conditions. As the largest dataset in this field, MPD contains 16,000 palm images collected from 200 subjects. Second, we built a DCNN-based palmprint verification system named DeepMPV+ for smartphones. In DeepMPV+, two key steps, ROI extraction and ROI matching, are both formulated as learning problems and then solved naturally by modern DCNN models. The efficiency and efficacy of DeepMPV+ have been corroborated by extensive experiments. To make our results fully reproducible, the labeled dataset and the relevant source code have been made publicly available at https://cslinzhang.github.io/MobilePalmPrint/.

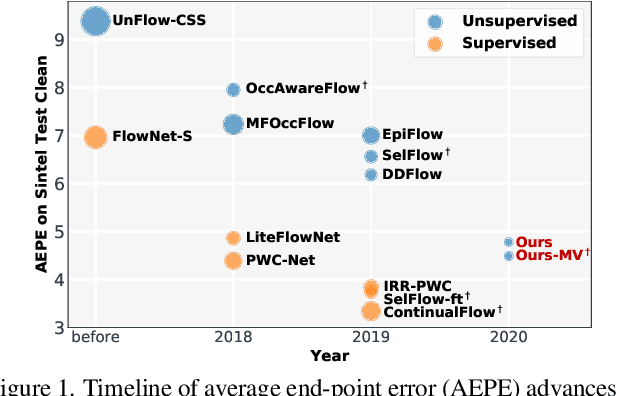

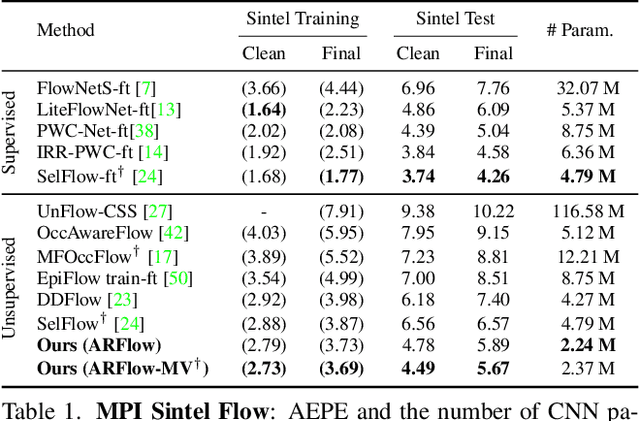

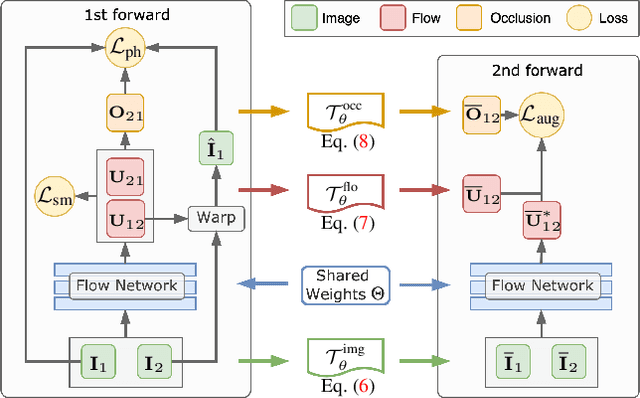

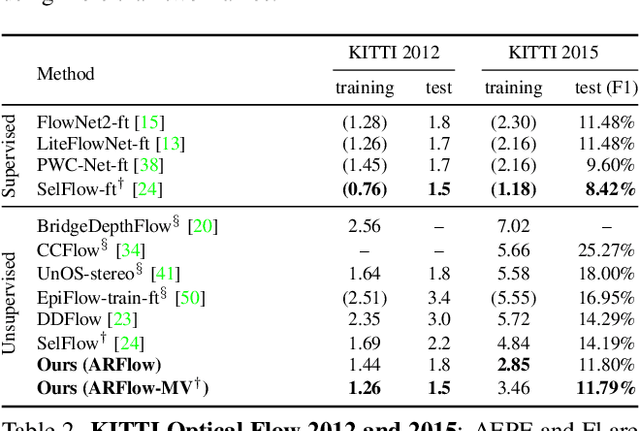

Learning by Analogy: Reliable Supervision from Transformations for Unsupervised Optical Flow Estimation

Mar 29, 2020

Abstract: Unsupervised learning of optical flow, which leverages supervision from view synthesis, has emerged as a promising alternative to supervised methods. However, the objective of unsupervised learning is likely to be unreliable in challenging scenes. In this work, we present a framework that uses more reliable supervision from transformations. It simply modifies the general unsupervised learning pipeline by running another forward pass with transformed data from augmentation, and using transformed predictions of the original data as the self-supervision signal. In addition, we introduce a lightweight multi-frame network with a highly shared flow decoder. Our method consistently achieves a large performance gain on several benchmarks, with the best accuracy among deep unsupervised methods. It also achieves results competitive with recent fully supervised methods while using far fewer parameters.
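
The second forward pass described here can be sketched for a single transformation. The example below assumes a horizontal flip as the augmentation and a mean L1 distance as the consistency measure; the function names and the `predict_flow` callable are hypothetical stand-ins, not the paper's implementation. The key detail is that transforming a flow field means moving the vectors and also changing them: a horizontal flip mirrors positions and negates the horizontal component.

```python
def hflip_image(image):
    """Mirror a 2D image (list of rows) left to right."""
    return [row[::-1] for row in image]


def hflip_flow(flow):
    """Mirror a flow field of (u, v) vectors and negate u, since
    rightward motion becomes leftward motion after the flip."""
    return [[(-u, v) for (u, v) in row[::-1]] for row in flow]


def mean_l1(flow_a, flow_b):
    """Mean per-pixel L1 distance between two flow fields."""
    total, count = 0.0, 0
    for row_a, row_b in zip(flow_a, flow_b):
        for (ua, va), (ub, vb) in zip(row_a, row_b):
            total += abs(ua - ub) + abs(va - vb)
            count += 1
    return total / count


def augmentation_consistency_loss(predict_flow, frame1, frame2):
    """Compare the prediction on transformed frames with the transformed
    prediction on the original frames (the latter acts as a fixed target)."""
    target = hflip_flow(predict_flow(frame1, frame2))
    prediction = predict_flow(hflip_image(frame1), hflip_image(frame2))
    return mean_l1(prediction, target)
```

A model that always predicts rightward motion regardless of its input is penalized by this loss, because after flipping the frames the correct motion points left.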

Distribution Distillation Loss: Generic Approach for Improving Face Recognition from Hard Samples

Feb 10, 2020

Abstract: Large facial variations are the main challenge in face recognition. To address them, previous variation-specific methods make full use of task-related priors to design special network losses, which typically do not generalize across tasks and scenarios. In contrast, existing generic methods focus on improving feature discriminability by minimizing the intra-class distance while maximizing the inter-class distance; they perform well on easy samples but fail on hard samples. To improve performance on hard samples for general tasks, we propose a novel Distribution Distillation Loss to narrow the performance gap between easy and hard samples, which is simple, effective, and generic across various types of facial variations. Specifically, we first adopt state-of-the-art classifiers such as ArcFace to construct two similarity distributions: a teacher distribution from easy samples and a student distribution from hard samples. Then, we propose a novel distribution-driven loss to constrain the student distribution to approximate the teacher distribution, which leads to a smaller overlap between the positive and negative pairs in the student distribution. We have conducted extensive experiments on both generic large-scale face benchmarks and benchmarks with diverse variations in race, resolution, and pose. The quantitative results demonstrate the superiority of our method over strong baselines, e.g., ArcFace and CosFace.
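
To make the teacher and student distributions concrete, the sketch below builds a normalized histogram of cosine similarities for each group and measures how far the student is from the teacher. The abstract says only that the student distribution is constrained to approximate the teacher distribution; the hard histogram, the KL divergence, the bin count, and all function names are assumptions for illustration. A hard histogram is not differentiable, so an actual training loss would need a smooth estimate instead.

```python
import math


def similarity_histogram(similarities, bins=10, eps=1e-6):
    """Normalized histogram of cosine similarities in [-1, 1].

    eps keeps every bin strictly positive so the KL divergence is finite.
    """
    counts = [eps] * bins
    for s in similarities:
        index = min(int((s + 1.0) / 2.0 * bins), bins - 1)
        counts[index] += 1.0
    total = sum(counts)
    return [c / total for c in counts]


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions with positive entries."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


def distribution_gap(teacher_sims, student_sims, bins=10):
    """Gap between easy-sample (teacher) and hard-sample (student)
    similarity distributions; zero when the histograms coincide."""
    teacher = similarity_histogram(teacher_sims, bins)
    student = similarity_histogram(student_sims, bins)
    return kl_divergence(teacher, student)
```

For example, positive-pair similarities clustered near 0.9 for easy samples and near 0.4 for hard samples give a large gap, which shrinks as the hard-sample similarities move toward the easy-sample ones.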

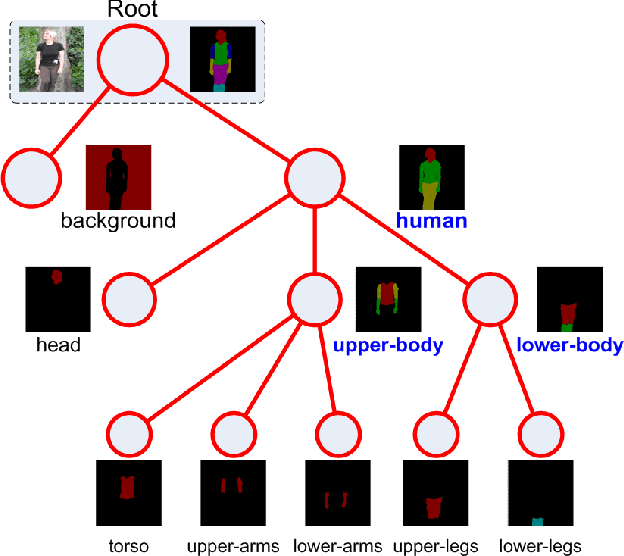

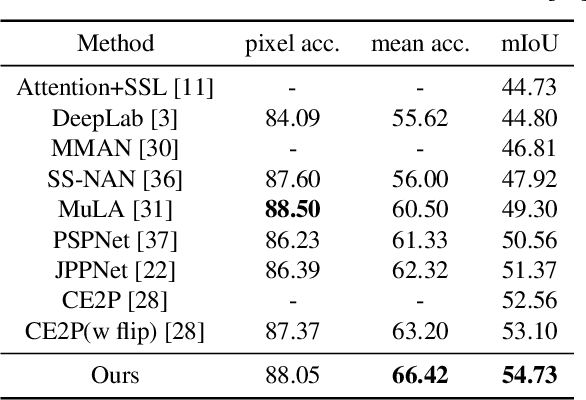

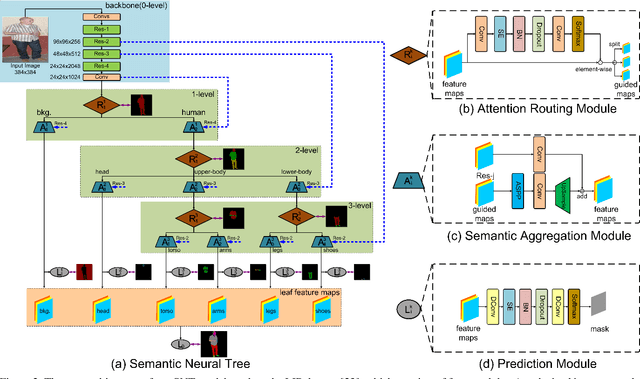

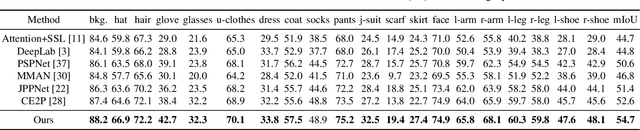

Learning Semantic Neural Tree for Human Parsing

Dec 20, 2019

Abstract: The majority of existing human parsing methods formulate the task as semantic segmentation, which treats every semantic category equally and fails to exploit the intrinsic physiological structure of the human body, resulting in inaccurate results. In this paper, we design a novel semantic neural tree for human parsing, which uses a tree architecture to encode the physiological structure of the human body and a coarse-to-fine cascade process to generate accurate results. Specifically, the semantic neural tree is designed to segment human regions into multiple semantic subregions (e.g., face, arms, and legs) in a hierarchical way using a newly designed attention routing module. Meanwhile, we introduce a semantic aggregation module that combines multiple hierarchical features to exploit more context information for better performance. Our semantic neural tree can be trained in an end-to-end fashion by standard stochastic gradient descent (SGD) with back-propagation. Several experiments conducted on four challenging datasets for both single and multiple human parsing, i.e., LIP, PASCAL-Person-Part, CIHP and MHP-v2, demonstrate the effectiveness of the proposed method. Code can be found at https://isrc.iscas.ac.cn/gitlab/research/sematree.
