Panoptic Segmentation

Panoptic segmentation is a computer vision task that combines semantic segmentation and instance segmentation to provide a comprehensive understanding of a scene. The goal is to segment the image into semantically meaningful regions while also detecting and distinguishing individual object instances within those regions. In a given image, every pixel is assigned a semantic label, and pixels belonging to thing classes (countable objects with instances, like cars and people) are additionally assigned unique instance IDs.

Papers and Code

Condition-Aware Multimodal Fusion for Robust Semantic Perception of Driving Scenes

Oct 14, 2024

Leveraging multiple sensors is crucial for robust semantic perception in autonomous driving, as each sensor type has complementary strengths and weaknesses. However, existing sensor fusion methods often treat sensors uniformly across all conditions, leading to suboptimal performance. By contrast, we propose a novel, condition-aware multimodal fusion approach for robust semantic perception of driving scenes. Our method, CAFuser, uses an RGB camera input to classify environmental conditions and generate a Condition Token that guides the fusion of multiple sensor modalities. We further introduce modality-specific feature adapters to align diverse sensor inputs into a shared latent space, enabling efficient integration with a single, shared pre-trained backbone. By dynamically adapting sensor fusion based on the actual condition, our model significantly improves robustness and accuracy, especially in adverse-condition scenarios. We set the new state of the art with CAFuser on the MUSES dataset with 59.7 PQ for multimodal panoptic segmentation and 78.2 mIoU for semantic segmentation, ranking first on the public benchmarks.
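PQ in the abstract above stands for panoptic quality. As a rough sketch of the standard definition for a single class, a predicted and a ground-truth segment are matched when their IoU exceeds 0.5, and PQ is the sum of matched IoUs divided by TP + 0.5 FP + 0.5 FN. The function name and the set-of-pixels representation are assumptions made for illustration; benchmark toolkits average this per-class value over all classes and usually report it as a percentage.

```python
def panoptic_quality(pred_segments, gt_segments):
    """PQ for one class; each segment is a non-empty set of (row, col) pixels."""
    matched_gt = set()
    iou_sum = 0.0
    true_pos = 0
    for pred in pred_segments:
        for idx, gt in enumerate(gt_segments):
            if idx in matched_gt:
                continue
            iou = len(pred & gt) / len(pred | gt)
            # With non-overlapping segments, IoU > 0.5 makes the match unique.
            if iou > 0.5:
                matched_gt.add(idx)
                iou_sum += iou
                true_pos += 1
                break
    false_pos = len(pred_segments) - true_pos  # unmatched predictions
    false_neg = len(gt_segments) - true_pos    # unmatched ground truth
    denom = true_pos + 0.5 * false_pos + 0.5 * false_neg
    return iou_sum / denom if denom else 0.0


# Toy example: one good match (IoU 0.75), one spurious prediction,
# and one missed ground-truth segment.
gt = [{(0, 0), (0, 1), (0, 2), (0, 3)}, {(1, 0), (1, 1)}]
pred = [{(0, 0), (0, 1), (0, 2)}, {(2, 0)}]
pq = panoptic_quality(pred, gt)
```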

Configurable Embodied Data Generation for Class-Agnostic RGB-D Video Segmentation

Oct 16, 2024

This paper presents a method for generating large-scale datasets to improve class-agnostic video segmentation across robots with different form factors. Specifically, we consider the question of whether video segmentation models trained on generic segmentation data could be more effective for particular robot platforms if robot embodiment is factored into the data generation process. To answer this question, a pipeline is formulated for using 3D reconstructions (e.g. from HM3DSem) to generate segmented videos that are configurable based on a robot's embodiment (e.g. sensor type, sensor placement, and illumination source). A resulting massive RGB-D video panoptic segmentation dataset (MVPd) is introduced for extensive benchmarking with foundation and video segmentation models, as well as to support embodiment-focused research in video segmentation. Our experimental findings demonstrate that using MVPd for finetuning can lead to performance improvements when transferring foundation models to certain robot embodiments, such as specific camera placements. These experiments also show that using 3D modalities (depth images and camera pose) can lead to improvements in video segmentation accuracy and consistency. The project webpage is available at https://topipari.com/projects/MVPd

PanoSLAM: Panoptic 3D Scene Reconstruction via Gaussian SLAM

Dec 31, 2024

Understanding geometric, semantic, and instance information in 3D scenes from sequential video data is essential for applications in robotics and augmented reality. However, existing Simultaneous Localization and Mapping (SLAM) methods generally focus on either geometric or semantic reconstruction. In this paper, we introduce PanoSLAM, the first SLAM system to integrate geometric reconstruction, 3D semantic segmentation, and 3D instance segmentation within a unified framework. Our approach builds upon 3D Gaussian Splatting, modified with several critical components to enable efficient rendering of depth, color, semantic, and instance information from arbitrary viewpoints. To achieve panoptic 3D scene reconstruction from sequential RGB-D videos, we propose an online Spatial-Temporal Lifting (STL) module that transfers 2D panoptic predictions from vision models into 3D Gaussian representations. This STL module addresses the challenges of label noise and inconsistencies in 2D predictions by refining the pseudo labels across multi-view inputs, creating a coherent 3D representation that enhances segmentation accuracy. Our experiments show that PanoSLAM outperforms recent semantic SLAM methods in both mapping and tracking accuracy. For the first time, it achieves panoptic 3D reconstruction of open-world environments directly from the RGB-D video. (https://github.com/runnanchen/PanoSLAM)

Dynamic Prompting of Frozen Text-to-Image Diffusion Models for Panoptic Narrative Grounding

Sep 12, 2024

Panoptic narrative grounding (PNG), whose core target is fine-grained image-text alignment, requires a panoptic segmentation of referred objects given a narrative caption. Previous discriminative methods achieve only weak or coarse-grained alignment by panoptic segmentation pretraining or CLIP model adaptation. Given the recent progress of text-to-image Diffusion models, several works have shown their capability to achieve fine-grained image-text alignment through cross-attention maps and improved general segmentation performance. However, the direct use of phrase features as static prompts to apply frozen Diffusion models to the PNG task still suffers from a large task gap and insufficient vision-language interaction, yielding inferior performance. Therefore, we propose an Extractive-Injective Phrase Adapter (EIPA) bypass within the Diffusion UNet to dynamically update phrase prompts with image features and inject the multimodal cues back, which leverages the fine-grained image-text alignment capability of Diffusion models more sufficiently. In addition, we also design a Multi-Level Mutual Aggregation (MLMA) module to reciprocally fuse multi-level image and phrase features for segmentation refinement. Extensive experiments on the PNG benchmark show that our method achieves new state-of-the-art performance.

3rd Place Solution for PVUW Challenge 2024: Video Panoptic Segmentation

Jun 07, 2024

Video panoptic segmentation is an advanced task that extends panoptic segmentation to video sequences. To address the challenge of video panoptic segmentation in diverse conditions, we utilize DVIS++ as our baseline model and enhance it with a comprehensive approach centered on the query-wise ensemble, supplemented by additional techniques. Our proposed approach achieved a VPQ score of 57.01 on the VIPSeg test set and ranked 3rd in the VPS track of the 3rd Pixel-level Video Understanding in the Wild Challenge.

@Bench: Benchmarking Vision-Language Models for Human-centered Assistive Technology

Sep 21, 2024

As Vision-Language Models (VLMs) advance, human-centered Assistive Technologies (ATs) for helping People with Visual Impairments (PVIs) are evolving into generalists, capable of performing multiple tasks simultaneously. However, benchmarking VLMs for ATs remains under-explored. To bridge this gap, we first create a novel AT benchmark (@Bench). Guided by a pre-design user study with PVIs, our benchmark includes the five most crucial vision-language tasks: Panoptic Segmentation, Depth Estimation, Optical Character Recognition (OCR), Image Captioning, and Visual Question Answering (VQA). Besides, we propose a novel AT model (@Model) that addresses all tasks simultaneously and can be expanded to more assistive functions for helping PVIs. Our framework exhibits outstanding performance across tasks by integrating multi-modal information, and it offers PVIs a more comprehensive assistance. Extensive experiments prove the effectiveness and generalizability of our framework.

Strike a Balance in Continual Panoptic Segmentation

Jul 23, 2024

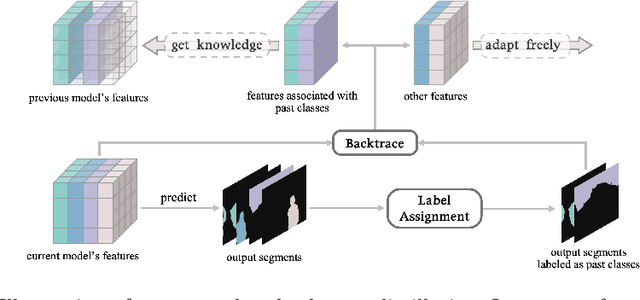

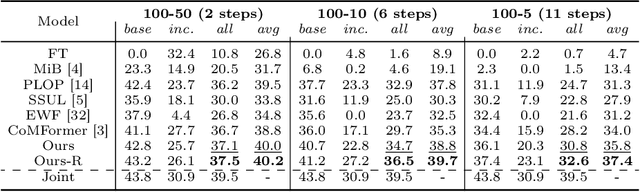

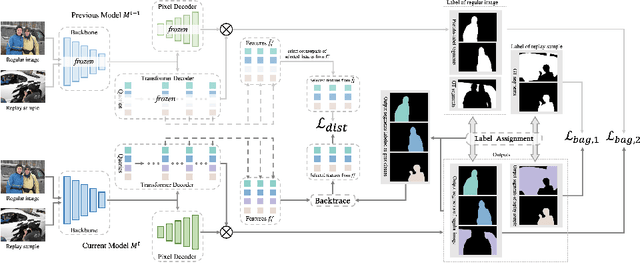

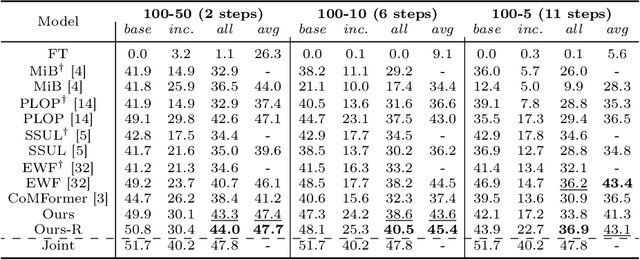

This study explores the emerging area of continual panoptic segmentation, highlighting three key balances. First, we introduce past-class backtrace distillation to balance the stability of existing knowledge with the adaptability to new information. This technique retraces the features associated with past classes based on the final label assignment results, performing knowledge distillation targeting these specific features from the previous model while allowing other features to flexibly adapt to new information. Additionally, we introduce a class-proportional memory strategy, which aligns the class distribution in the replay sample set with that of the historical training data. This strategy maintains a balanced class representation during replay, enhancing the utility of the limited-capacity replay sample set in recalling prior classes. Moreover, recognizing that replay samples are annotated only for the classes of their original step, we devise balanced anti-misguidance losses, which combat the impact of incomplete annotations without incurring classification bias. Building upon these innovations, we present a new method named Balanced Continual Panoptic Segmentation (BalConpas). Our evaluation on the challenging ADE20K dataset demonstrates its superior performance compared to existing state-of-the-art methods. The official code is available at https://github.com/jinpeng0528/BalConpas.
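To make the idea of a class-proportional replay memory concrete, here is a minimal sketch that splits a fixed number of replay slots across classes in proportion to their frequency in past training data. It is not the BalConpas implementation: the function name `allocate_memory`, the per-class counts, and the largest-remainder rounding are all assumptions chosen for illustration.

```python
def allocate_memory(class_counts, capacity):
    """Split `capacity` replay slots across classes in proportion to class_counts."""
    total = sum(class_counts.values())
    # Ideal (fractional) share of the memory for each class.
    exact = {c: capacity * n / total for c, n in class_counts.items()}
    # Start from the rounded-down shares.
    slots = {c: int(share) for c, share in exact.items()}
    leftover = capacity - sum(slots.values())
    # Hand the remaining slots to the classes with the largest remainders.
    by_remainder = sorted(exact, key=lambda c: exact[c] - slots[c], reverse=True)
    for c in by_remainder[:leftover]:
        slots[c] += 1
    return slots


# Hypothetical class frequencies observed in earlier training steps.
history = {"wall": 600, "chair": 300, "lamp": 100}
slots = allocate_memory(history, 10)
```

With these made-up counts, a 10-slot memory mirrors the 6:3:1 class ratio of the historical data.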

Resolving Inconsistent Semantics in Multi-Dataset Image Segmentation

Sep 15, 2024

Leveraging multiple training datasets to scale up image segmentation models is beneficial for increasing robustness and semantic understanding. Individual datasets have well-defined ground truth with non-overlapping mask layouts and mutually exclusive semantics. However, merging them for multi-dataset training disrupts this harmony and leads to semantic inconsistencies; for example, the class "person" in one dataset and class "face" in another will require multilabel handling for certain pixels. Existing methods struggle with this setting, particularly when evaluated on label spaces mixed from the individual training sets. To overcome these issues, we introduce a simple yet effective multi-dataset training approach by integrating language-based embeddings of class names and label space-specific query embeddings. Our method maintains high performance regardless of the underlying inconsistencies between training datasets. Notably, on four benchmark datasets with label space inconsistencies during inference, we outperform previous methods by 1.6% mIoU for semantic segmentation, 9.1% PQ for panoptic segmentation, 12.1% AP for instance segmentation, and 3.0% in the newly proposed PIQ metric.

Panoptic-Depth Forecasting

Sep 18, 2024

Forecasting the semantics and 3D structure of scenes is essential for robots to navigate and plan actions safely. Recent methods have explored semantic and panoptic scene forecasting; however, they do not consider the geometry of the scene. In this work, we propose the panoptic-depth forecasting task for jointly predicting the panoptic segmentation and depth maps of unobserved future frames, from monocular camera images. To facilitate this work, we extend the popular KITTI-360 and Cityscapes benchmarks by computing depth maps from LiDAR point clouds and leveraging sequential labeled data. We also introduce a suitable evaluation metric that quantifies both the panoptic quality and depth estimation accuracy of forecasts in a coherent manner. Furthermore, we present two baselines and propose the novel PDcast architecture that learns rich spatio-temporal representations by incorporating a transformer-based encoder, a forecasting module, and task-specific decoders to predict future panoptic-depth outputs. Extensive evaluations demonstrate the effectiveness of PDcast across two datasets and three forecasting tasks, consistently addressing the primary challenges. We make the code publicly available at https://pdcast.cs.uni-freiburg.de.

Panoptic Segmentation of Galactic Structures in LSB Images

Jul 10, 2024

We explore the use of deep learning to localise galactic structures in low surface brightness (LSB) images. LSB imaging reveals many interesting structures, though these are frequently confused with galactic dust contamination, due to a strong local visual similarity. We propose a novel unified approach to multi-class segmentation of galactic structures and of extended amorphous image contaminants. Our panoptic segmentation model combines Mask R-CNN with a contaminant specialised network and utilises an adaptive preprocessing layer to better capture the subtle features of LSB images. Further, a human-in-the-loop training scheme is employed to augment ground truth labels. These different approaches are evaluated in turn, and together greatly improve the detection of both galactic structures and contaminants in LSB images.
