Yan Zhang

Fellow, IEEE

Incomplete Graph Learning: A Comprehensive Survey

Feb 18, 2025Abstract:Graph learning is a prevalent field that operates on ubiquitous graph data. Effective graph learning methods can extract valuable information from graphs. However, these methods are non-robust and affected by missing attributes in graphs, resulting in sub-optimal outcomes. This has led to the emergence of incomplete graph learning, which aims to process and learn from incomplete graphs to achieve more accurate and representative results. In this paper, we conducted a comprehensive review of the literature on incomplete graph learning. Initially, we categorize incomplete graphs and provide precise definitions of relevant concepts, terminologies, and techniques, thereby establishing a solid understanding for readers. Subsequently, we classify incomplete graph learning methods according to the types of incompleteness: (1) attribute-incomplete graph learning methods, (2) attribute-missing graph learning methods, and (3) hybrid-absent graph learning methods. By systematically classifying and summarizing incomplete graph learning methods, we highlight the commonalities and differences among existing approaches, aiding readers in selecting methods and laying the groundwork for further advancements. In addition, we summarize the datasets, incomplete processing modes, evaluation metrics, and application domains used by the current methods. Lastly, we discuss the current challenges and propose future directions for incomplete graph learning, with the aim of stimulating further innovations in this crucial field. To our knowledge, this is the first review dedicated to incomplete graph learning, aiming to offer valuable insights for researchers in related fields.We developed an online resource to follow relevant research based on this review, available at https://github.com/cherry-a11y/Incomplete-graph-learning.git

Baichuan-M1: Pushing the Medical Capability of Large Language Models

Feb 18, 2025

Abstract:The current generation of large language models (LLMs) is typically designed for broad, general-purpose applications, while domain-specific LLMs, especially in vertical fields like medicine, remain relatively scarce. In particular, the development of highly efficient and practical LLMs for the medical domain is challenging due to the complexity of medical knowledge and the limited availability of high-quality data. To bridge this gap, we introduce Baichuan-M1, a series of large language models specifically optimized for medical applications. Unlike traditional approaches that simply continue pretraining on existing models or apply post-training to a general base model, Baichuan-M1 is trained from scratch with a dedicated focus on enhancing medical capabilities. Our model is trained on 20 trillion tokens and incorporates a range of effective training methods that strike a balance between general capabilities and medical expertise. As a result, Baichuan-M1 not only performs strongly across general domains such as mathematics and coding but also excels in specialized medical fields. We have open-sourced Baichuan-M1-14B, a mini version of our model, which can be accessed through the following links.

Can you pass that tool?: Implications of Indirect Speech in Physical Human-Robot Collaboration

Feb 17, 2025Abstract:Indirect speech acts (ISAs) are a natural pragmatic feature of human communication, allowing requests to be conveyed implicitly while maintaining subtlety and flexibility. Although advancements in speech recognition have enabled natural language interactions with robots through direct, explicit commands--providing clarity in communication--the rise of large language models presents the potential for robots to interpret ISAs. However, empirical evidence on the effects of ISAs on human-robot collaboration (HRC) remains limited. To address this, we conducted a Wizard-of-Oz study (N=36), engaging a participant and a robot in collaborative physical tasks. Our findings indicate that robots capable of understanding ISAs significantly improve human's perceived robot anthropomorphism, team performance, and trust. However, the effectiveness of ISAs is task- and context-dependent, thus requiring careful use. These results highlight the importance of appropriately integrating direct and indirect requests in HRC to enhance collaborative experiences and task performance.

Mitigating the Impact of Prominent Position Shift in Drone-based RGBT Object Detection

Feb 13, 2025Abstract:Drone-based RGBT object detection plays a crucial role in many around-the-clock applications. However, real-world drone-viewed RGBT data suffers from the prominent position shift problem, i.e., the position of a tiny object differs greatly in different modalities. For instance, a slight deviation of a tiny object in the thermal modality will induce it to drift from the main body of itself in the RGB modality. Considering RGBT data are usually labeled on one modality (reference), this will cause the unlabeled modality (sensed) to lack accurate supervision signals and prevent the detector from learning a good representation. Moreover, the mismatch of the corresponding feature point between the modalities will make the fused features confusing for the detection head. In this paper, we propose to cast the cross-modality box shift issue as the label noise problem and address it on the fly via a novel Mean Teacher-based Cross-modality Box Correction head ensemble (CBC). In this way, the network can learn more informative representations for both modalities. Furthermore, to alleviate the feature map mismatch problem in RGBT fusion, we devise a Shifted Window-Based Cascaded Alignment (SWCA) module. SWCA mines long-range dependencies between the spatially unaligned features inside shifted windows and cascaded aligns the sensed features with the reference ones. Extensive experiments on two drone-based RGBT object detection datasets demonstrate that the correction results are both visually and quantitatively favorable, thereby improving the detection performance. In particular, our CBC module boosts the precision of the sensed modality ground truth by 25.52 aSim points. Overall, the proposed detector achieves an mAP_50 of 43.55 points on RGBTDronePerson and surpasses a state-of-the-art method by 8.6 mAP50 on a shift subset of DroneVehicle dataset. The code and data will be made publicly available.

Implicit Communication of Contextual Information in Human-Robot Collaboration

Feb 09, 2025Abstract:Implicit communication is crucial in human-robot collaboration (HRC), where contextual information, such as intentions, is conveyed as implicatures, forming a natural part of human interaction. However, enabling robots to appropriately use implicit communication in cooperative tasks remains challenging. My research addresses this through three phases: first, exploring the impact of linguistic implicatures on collaborative tasks; second, examining how robots' implicit cues for backchanneling and proactive communication affect team performance and perception, and how they should adapt to human teammates; and finally, designing and evaluating a multi-LLM robotics system that learns from human implicit communication. This research aims to enhance the natural communication abilities of robots and facilitate their integration into daily collaborative activities.

Long-VITA: Scaling Large Multi-modal Models to 1 Million Tokens with Leading Short-Context Accuray

Feb 07, 2025Abstract:Establishing the long-context capability of large vision-language models is crucial for video understanding, high-resolution image understanding, multi-modal agents and reasoning. We introduce Long-VITA, a simple yet effective large multi-modal model for long-context visual-language understanding tasks. It is adept at concurrently processing and analyzing modalities of image, video, and text over 4K frames or 1M tokens while delivering advanced performances on short-context multi-modal tasks. We propose an effective multi-modal training schema that starts with large language models and proceeds through vision-language alignment, general knowledge learning, and two sequential stages of long-sequence fine-tuning. We further implement context-parallelism distributed inference and logits-masked language modeling head to scale Long-VITA to infinitely long inputs of images and texts during model inference. Regarding training data, Long-VITA is built on a mix of $17$M samples from public datasets only and demonstrates the state-of-the-art performance on various multi-modal benchmarks, compared against recent cutting-edge models with internal data. Long-VITA is fully reproducible and supports both NPU and GPU platforms for training and testing. We hope Long-VITA can serve as a competitive baseline and offer valuable insights for the open-source community in advancing long-context multi-modal understanding.

Baichuan-Omni-1.5 Technical Report

Jan 26, 2025

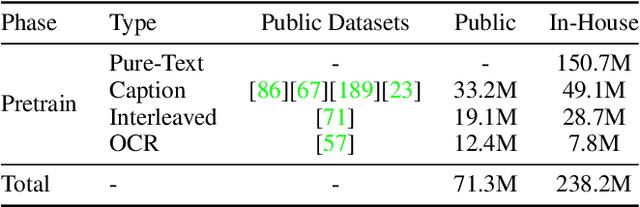

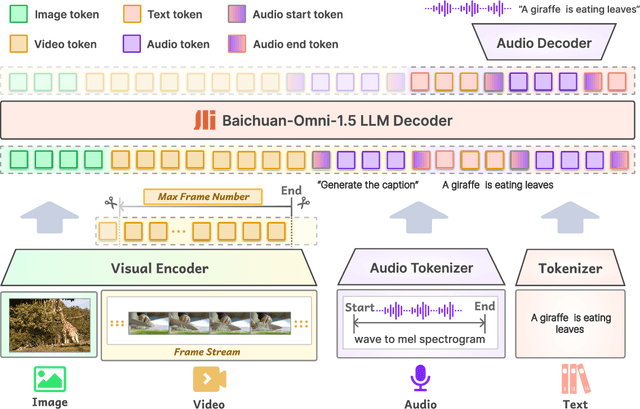

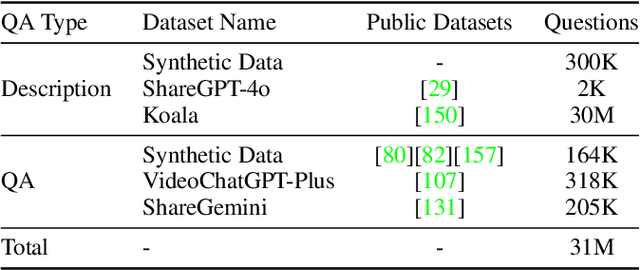

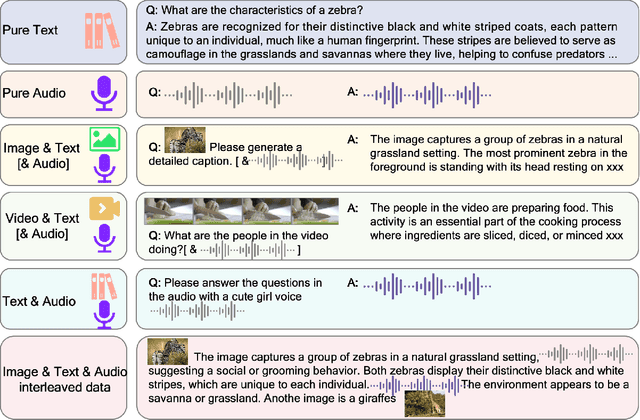

Abstract:We introduce Baichuan-Omni-1.5, an omni-modal model that not only has omni-modal understanding capabilities but also provides end-to-end audio generation capabilities. To achieve fluent and high-quality interaction across modalities without compromising the capabilities of any modality, we prioritized optimizing three key aspects. First, we establish a comprehensive data cleaning and synthesis pipeline for multimodal data, obtaining about 500B high-quality data (text, audio, and vision). Second, an audio-tokenizer (Baichuan-Audio-Tokenizer) has been designed to capture both semantic and acoustic information from audio, enabling seamless integration and enhanced compatibility with MLLM. Lastly, we designed a multi-stage training strategy that progressively integrates multimodal alignment and multitask fine-tuning, ensuring effective synergy across all modalities. Baichuan-Omni-1.5 leads contemporary models (including GPT4o-mini and MiniCPM-o 2.6) in terms of comprehensive omni-modal capabilities. Notably, it achieves results comparable to leading models such as Qwen2-VL-72B across various multimodal medical benchmarks.

Joint System Latency and Data Freshness Optimization for Cache-enabled Mobile Crowdsensing Networks

Jan 24, 2025

Abstract:Mobile crowdsensing (MCS) networks enable large-scale data collection by leveraging the ubiquity of mobile devices. However, frequent sensing and data transmission can lead to significant resource consumption. To mitigate this issue, edge caching has been proposed as a solution for storing recently collected data. Nonetheless, this approach may compromise data freshness. In this paper, we investigate the trade-off between re-using cached task results and re-sensing tasks in cache-enabled MCS networks, aiming to minimize system latency while maintaining information freshness. To this end, we formulate a weighted delay and age of information (AoI) minimization problem, jointly optimizing sensing decisions, user selection, channel selection, task allocation, and caching strategies. The problem is a mixed-integer non-convex programming problem which is intractable. Therefore, we decompose the long-term problem into sequential one-shot sub-problems and design a framework that optimizes system latency, task sensing decision, and caching strategy subproblems. When one task is re-sensing, the one-shot problem simplifies to the system latency minimization problem, which can be solved optimally. The task sensing decision is then made by comparing the system latency and AoI. Additionally, a Bayesian update strategy is developed to manage the cached task results. Building upon this framework, we propose a lightweight and time-efficient algorithm that makes real-time decisions for the long-term optimization problem. Extensive simulation results validate the effectiveness of our approach.

Test-Time Code-Switching for Cross-lingual Aspect Sentiment Triplet Extraction

Jan 24, 2025Abstract:Aspect Sentiment Triplet Extraction (ASTE) is a thriving research area with impressive outcomes being achieved on high-resource languages. However, the application of cross-lingual transfer to the ASTE task has been relatively unexplored, and current code-switching methods still suffer from term boundary detection issues and out-of-dictionary problems. In this study, we introduce a novel Test-Time Code-SWitching (TT-CSW) framework, which bridges the gap between the bilingual training phase and the monolingual test-time prediction. During training, a generative model is developed based on bilingual code-switched training data and can produce bilingual ASTE triplets for bilingual inputs. In the testing stage, we employ an alignment-based code-switching technique for test-time augmentation. Extensive experiments on cross-lingual ASTE datasets validate the effectiveness of our proposed method. We achieve an average improvement of 3.7% in terms of weighted-averaged F1 in four datasets with different languages. Additionally, we set a benchmark using ChatGPT and GPT-4, and demonstrate that even smaller generative models fine-tuned with our proposed TT-CSW framework surpass ChatGPT and GPT-4 by 14.2% and 5.0% respectively.

ROSAnnotator: A Web Application for ROSBag Data Analysis in Human-Robot Interaction

Jan 13, 2025Abstract:Human-robot interaction (HRI) is an interdisciplinary field that utilises both quantitative and qualitative methods. While ROSBags, a file format within the Robot Operating System (ROS), offer an efficient means of collecting temporally synched multimodal data in empirical studies with real robots, there is a lack of tools specifically designed to integrate qualitative coding and analysis functions with ROSBags. To address this gap, we developed ROSAnnotator, a web-based application that incorporates a multimodal Large Language Model (LLM) to support both manual and automated annotation of ROSBag data. ROSAnnotator currently facilitates video, audio, and transcription annotations and provides an open interface for custom ROS messages and tools. By using ROSAnnotator, researchers can streamline the qualitative analysis process, create a more cohesive analysis pipeline, and quickly access statistical summaries of annotations, thereby enhancing the overall efficiency of HRI data analysis. https://github.com/CHRI-Lab/ROSAnnotator

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge