Song Chen

An FPGA Implementation of Displacement Vector Search for Intra Pattern Copy in JPEG XS

Mar 11, 2026

Abstract:Recently, progress has been made on the Intra Pattern Copy (IPC) tool for JPEG XS, an image compression standard designed for low-latency and low-complexity coding. IPC performs wavelet-domain intra compensation predictions to reduce spatial redundancy in screen content. A key module of IPC is the displacement vector (DV) search, which aims to find the optimal prediction reference offset. However, the DV search process is computationally intensive, posing challenges for practical hardware deployment. In this paper, we propose an efficient pipelined FPGA architecture for the DV search module to promote the practical deployment of IPC. An optimized memory organization, which leverages the computational characteristics of IPC and its inherent data reuse patterns, is further introduced to enhance performance. Experimental results show that our proposed architecture achieves a throughput of 38.3 Mpixels/s with a power consumption of 277 mW, demonstrating its feasibility for practical hardware implementation in IPC and other predictive coding tools, and providing a promising foundation for ASIC deployment.
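To make the idea of a DV search concrete, the following is a minimal software sketch of an exhaustive search over candidate offsets. It is not the architecture from the paper: the cost function (sum of absolute differences), the purely horizontal leftward search, the block size, and the names `dv_search`, `block`, and `max_dx` are all assumptions chosen for illustration.

```python
import numpy as np

def dv_search(coeffs, top, left, block=4, max_dx=8):
    """Return (dx, cost) for the leftward offset with the lowest SAD.

    Assumptions (not taken from the abstract): SAD as the matching cost,
    a 1-D horizontal search, and reference blocks that do not overlap
    the target block.
    """
    target = coeffs[top:top + block, left:left + block].astype(np.int64)
    best_dv, best_cost = None, None
    # Start at dx = block so the reference never overlaps the target.
    for dx in range(block, max_dx + 1):
        ref_left = left - dx
        if ref_left < 0:
            break  # candidate would fall outside the coefficient array
        ref = coeffs[top:top + block, ref_left:ref_left + block].astype(np.int64)
        cost = int(np.abs(target - ref).sum())
        if best_cost is None or cost < best_cost:
            best_dv, best_cost = dx, cost
    return best_dv, best_cost

# Toy data: the same 4x4 pattern appears at columns 2..5 and 10..13.
pattern = np.arange(16).reshape(4, 4) + 1
coeffs = np.zeros((4, 16), dtype=np.int64)
coeffs[:, 2:6] = pattern
coeffs[:, 10:14] = pattern
print(dv_search(coeffs, top=0, left=10, block=4, max_dx=10))  # (8, 0)
```

Every candidate costs one block-sized difference and accumulation, which is why the search dominates the computation and why consecutive candidates, which share most of their reference columns, benefit from data reuse in memory.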

SecAgent: Efficient Mobile GUI Agent with Semantic Context

Mar 09, 2026

Abstract:Mobile Graphical User Interface (GUI) agents powered by multimodal large language models have demonstrated promising capabilities in automating complex smartphone tasks. However, existing approaches face two critical limitations: the scarcity of high-quality multilingual datasets, particularly for non-English ecosystems, and inefficient history representation methods. To address these challenges, we present SecAgent, an efficient mobile GUI agent at 3B scale. We first construct a human-verified Chinese mobile GUI dataset with 18k grounding samples and 121k navigation steps across 44 applications, along with a Chinese navigation benchmark featuring multi-choice action annotations. Building upon this dataset, we propose a semantic context mechanism that distills history screenshots and actions into concise natural language summaries, significantly reducing computational costs while preserving task-relevant information. Through supervised and reinforcement fine-tuning, SecAgent outperforms similar-scale baselines and achieves performance comparable to 7B-8B models on both our benchmark and public navigation benchmarks. We will open-source the training dataset, benchmark, model, and code to advance research in multilingual mobile GUI automation.

SASQ: Static Activation Scaling for Quantization-Aware Training in Large Language Models

Dec 16, 2025

Abstract:Large language models (LLMs) excel at natural language tasks but face deployment challenges because their growing size outpaces advances in GPU memory. Model quantization mitigates this issue by lowering weight and activation precision, but existing solutions face fundamental trade-offs: dynamic quantization incurs high computational overhead and poses deployment challenges on edge devices, while static quantization sacrifices accuracy. Existing quantization-aware training (QAT) approaches further suffer from the cost of training weights. We propose SASQ, a lightweight QAT framework specifically tailored for activation quantization factors. SASQ optimizes only the quantization factors (without changing the pre-trained weights), enabling static inference with high accuracy while maintaining deployment efficiency. SASQ adaptively truncates some outliers, thereby reducing the difficulty of quantization while preserving the distributional characteristics of the activations. SASQ not only surpasses existing SOTA quantization schemes but also outperforms the corresponding FP16 models. On LLaMA2-7B, it achieves 5.2% lower perplexity than QuaRot and 4.7% lower perplexity than the FP16 model on WikiText2.
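As a rough illustration of what static activation quantization means at inference time, the sketch below applies a fixed, precomputed scale factor and clips whatever falls outside the integer range. It does not reproduce SASQ's training procedure, which the abstract does not detail: the symmetric int8 scheme and the names `quantize_static` and `dequantize` are assumptions.

```python
import numpy as np

def quantize_static(x, scale, bits=8):
    """Symmetric quantization with a fixed (static) scale factor.

    Values whose scaled magnitude exceeds the integer range are clipped,
    which is how a small scale truncates outliers. Assumes bits <= 8 so
    the result fits in int8.
    """
    qmax = 2 ** (bits - 1) - 1
    q = np.clip(np.round(x / scale), -qmax - 1, qmax)
    return q.astype(np.int8)

def dequantize(q, scale):
    """Map integer codes back to approximate real values."""
    return q.astype(np.float32) * scale

acts = np.array([0.0, 1.0, -1.0, 100.0], dtype=np.float32)
q = quantize_static(acts, scale=0.5)
print(q.tolist())                    # [0, 2, -2, 127]
print(dequantize(q, 0.5).tolist())   # [0.0, 1.0, -1.0, 63.5]
```

Because the scale is fixed ahead of time, no per-batch statistics are computed during inference. The trade-off is visible in the last element: the outlier 100.0 is truncated to 63.5, while the typical values are represented exactly.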

Ocean-OCR: Towards General OCR Application via a Vision-Language Model

Jan 26, 2025

Abstract:Multimodal large language models (MLLMs) have shown impressive capabilities across various domains, excelling in processing and understanding information from multiple modalities. Despite the rapid progress made previously, insufficient OCR ability hinders MLLMs from excelling in text-related tasks. In this paper, we present Ocean-OCR, a 3B MLLM with state-of-the-art performance on various OCR scenarios and comparable understanding ability on general tasks. We employ Native Resolution ViT to enable variable resolution input and utilize a substantial collection of high-quality OCR datasets to enhance the model performance. We demonstrate the superiority of Ocean-OCR through comprehensive experiments on open-source OCR benchmarks and across various OCR scenarios. These scenarios encompass document understanding, scene text recognition, and handwriting recognition, highlighting the robust OCR capabilities of Ocean-OCR. Note that Ocean-OCR is the first MLLM to outperform professional OCR models such as TextIn and PaddleOCR.

Baichuan-Omni-1.5 Technical Report

Jan 26, 2025

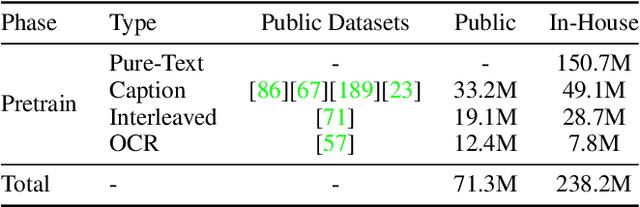

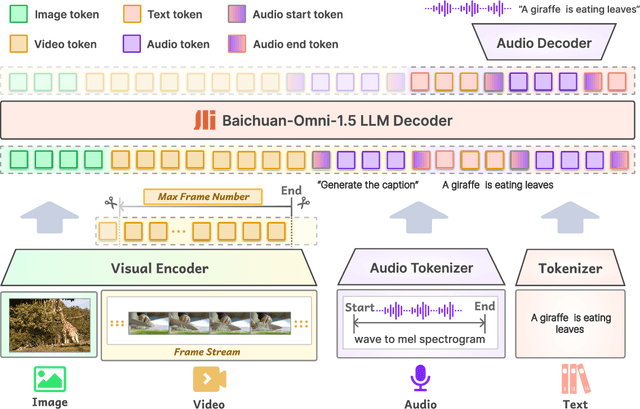

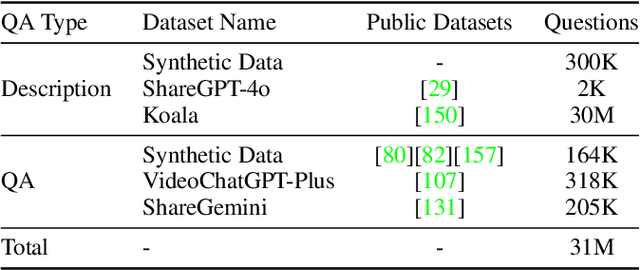

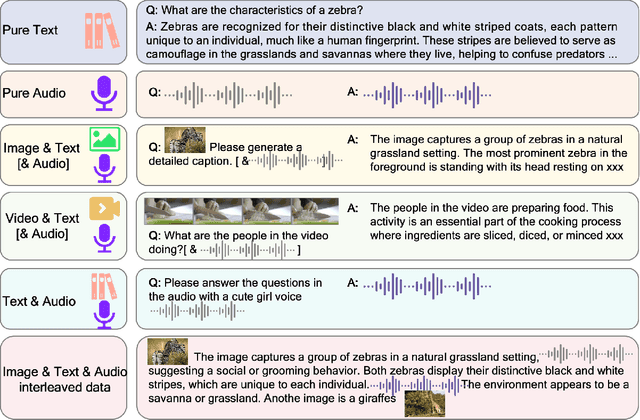

Abstract:We introduce Baichuan-Omni-1.5, an omni-modal model that not only has omni-modal understanding capabilities but also provides end-to-end audio generation capabilities. To achieve fluent and high-quality interaction across modalities without compromising the capabilities of any modality, we prioritized optimizing three key aspects. First, we establish a comprehensive data cleaning and synthesis pipeline for multimodal data, obtaining about 500B high-quality data (text, audio, and vision). Second, an audio-tokenizer (Baichuan-Audio-Tokenizer) has been designed to capture both semantic and acoustic information from audio, enabling seamless integration and enhanced compatibility with MLLM. Lastly, we designed a multi-stage training strategy that progressively integrates multimodal alignment and multitask fine-tuning, ensuring effective synergy across all modalities. Baichuan-Omni-1.5 leads contemporary models (including GPT4o-mini and MiniCPM-o 2.6) in terms of comprehensive omni-modal capabilities. Notably, it achieves results comparable to leading models such as Qwen2-VL-72B across various multimodal medical benchmarks.

Baichuan-Omni Technical Report

Oct 11, 2024

Abstract:The salient multimodal capabilities and interactive experience of GPT-4o highlight its critical role in practical applications, yet it lacks a high-performing open-source counterpart. In this paper, we introduce Baichuan-Omni, the first open-source 7B Multimodal Large Language Model (MLLM) adept at concurrently processing and analyzing the modalities of image, video, audio, and text, while delivering an advanced multimodal interactive experience and strong performance. We propose an effective multimodal training schema that starts with a 7B model and proceeds through two stages of multimodal alignment and multitask fine-tuning across the audio, image, video, and text modalities. This approach equips the language model with the ability to handle visual and audio data effectively. Demonstrating strong performance across various omni-modal and multimodal benchmarks, we aim for this contribution to serve as a competitive baseline for the open-source community in advancing multimodal understanding and real-time interaction.

PiRD: Physics-informed Residual Diffusion for Flow Field Reconstruction

Apr 12, 2024

Abstract:The use of machine learning in fluid dynamics is becoming more common as a way to expedite computation when solving forward and inverse problems of partial differential equations. Yet, a notable challenge with existing convolutional neural network (CNN)-based methods for data fidelity enhancement is their reliance on specific low-fidelity data patterns and distributions during the training phase. In addition, CNN-based methods essentially treat the flow reconstruction task as a computer vision task that prioritizes element-wise precision, which lacks a physical and mathematical explanation. This dependence can dramatically affect the models' effectiveness in real-world scenarios, especially when the low-fidelity input deviates from the training data or contains noise not accounted for during training. The introduction of diffusion models in this context shows promise for improving performance and generalizability. Unlike a direct mapping from a specific low-fidelity distribution to a high-fidelity one, diffusion models learn to transition from any low-fidelity distribution towards a high-fidelity one. Our proposed model, Physics-informed Residual Diffusion (PiRD), demonstrates the capability to elevate the quality of data from standard low-fidelity inputs, low-fidelity inputs with injected Gaussian noise, and randomly collected samples. By integrating physics-based insights into the objective function, it further refines the accuracy and fidelity of the inferred high-quality data. Experimental results show that our approach can effectively reconstruct high-quality outcomes for two-dimensional turbulent flows from a range of low-fidelity input conditions without requiring retraining.

Graph Attention-Based Symmetry Constraint Extraction for Analog Circuits

Dec 22, 2023

Abstract:In recent years, analog circuits have received extensive attention and are widely used in many emerging applications. The high demand for analog circuits necessitates shorter circuit design cycles. To achieve the desired performance and specifications, various geometrical symmetry constraints must be carefully considered during the analog layout process. However, the manual labeling of these constraints by experienced analog engineers is a laborious and time-consuming process. To handle the costly runtime issue, we propose a graph-based learning framework to automatically extract symmetric constraints in analog circuit layout. The proposed framework leverages the connection characteristics of circuits and the devices' information to learn the general rules of symmetric constraints, which effectively facilitates the extraction of device-level constraints on circuit netlists. The experimental results demonstrate that, compared to state-of-the-art symmetric constraint detection approaches, our framework achieves higher accuracy and a lower false positive rate.

AiDAC: A Low-Cost In-Memory Computing Architecture with All-Analog Multi-Bit Compute and Interconnect

Dec 21, 2023

Abstract:Analog in-memory computing (AiMC) is an emerging technology that shows substantial performance advantages for neural network acceleration. However, as the computational bit-width and scale increase, high-precision data conversion and long-distance data routing result in unacceptable energy and latency overheads in the AiMC system. In this work, we focus on the potential of in-charge computing and in-time interconnection and present an innovative AiMC architecture, named AiDAC, with three key contributions: (1) AiDAC enhances multibit computing efficiency and reduces data conversion times through a capacitor-grouping technique; (2) AiDAC is the first to adopt row drivers and column time accumulators to achieve large-scale AiMC array integration while minimizing the energy cost of data movement; (3) AiDAC is the first work to support large-scale all-analog multibit vector-matrix multiplication (VMM) operations. The evaluation shows that AiDAC maintains high-precision calculation (less than 0.79% total computing error) while also offering high parallelism (up to 26.2 TOPS), low latency (<20 ns/VMM), and high energy efficiency (123.8 TOPS/W) for 8-bit VMM with 1024 input channels.

NicePIM: Design Space Exploration for Processing-In-Memory DNN Accelerators with 3D-Stacked-DRAM

May 30, 2023

Abstract:With the widespread use of deep neural networks (DNNs) in intelligent systems, DNN accelerators with high performance and energy efficiency are in great demand. As one of the feasible processing-in-memory (PIM) architectures, the 3D-stacked-DRAM-based PIM (DRAM-PIM) architecture enables large-capacity memory and low-cost memory access, making it a promising solution for DNN accelerators with better performance and energy efficiency. However, the low-cost characteristics of stacked DRAM and the distributed manner of memory access and data storage require us to rebalance the hardware design and DNN mapping. In this paper, we propose NicePIM, which consists of three key components (PIM-Tuner, PIM-Mapper, and Data-Scheduler), to efficiently explore the design space of hardware architecture and DNN mapping for DRAM-PIM accelerators. PIM-Tuner optimizes the hardware configurations, leveraging a DNN model for classifying area-compliant architectures and a deep kernel learning model for identifying better hardware parameters. PIM-Mapper explores a variety of DNN mapping configurations, including parallelism between branches of the DNN, DNN layer partitioning, DRAM capacity allocation, and the data layout pattern in DRAM, to generate high-hardware-utilization DNN mapping schemes for various hardware configurations. Data-Scheduler employs an integer-linear-programming-based data scheduling algorithm to alleviate the inter-PIM-node communication overhead of data sharing brought by DNN layer partitioning. Experimental results demonstrate that NicePIM can optimize hardware configurations for DRAM-PIM systems effectively and can generate high-quality DNN mapping schemes, with latency and energy cost reduced by 37% and 28% on average, respectively, compared to the baseline method.
