Tao Liu

Bipedal-Walking-Dynamics Model on Granular Terrains

Apr 13, 2026

Abstract: Bipeds have demonstrated high agility and mobility in unstructured environments such as sand. The yielding of such granular media causes significant sinkage and slip of the bipedal feet, leading to uncertainty and instability in walking locomotion. We present a new dynamics-modeling approach to capture and predict bipedal-walking locomotion on granular media. A dynamic foot-terrain interaction model is integrated to compute the ground reaction force (GRF). The proposed granular dynamic model has three additional degrees of freedom (DoFs) to estimate foot sinkage and slip, which are critical to capturing robot-walking kinematics and kinetics such as cost of transport (CoT). Using the new model, we analyze bipedal kinetics, CoT, and foot-terrain rolling and intrusion effects. Experiments are conducted using a biped robotic walker on sand to validate the proposed dynamic model with robot-gait profiles, media-intrusion prediction, and GRF estimates. This new dynamics model can further serve as an enabling tool for locomotion control and optimization of bipedal robots to walk efficiently on granular terrains.
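For readers unfamiliar with the CoT metric mentioned in the abstract, the following is a minimal sketch of the standard dimensionless definition, CoT = E / (m g d). The function name, argument names, and example values are illustrative assumptions; the abstract does not state which CoT variant the paper uses.

```python
def cost_of_transport(energy_j, mass_kg, distance_m, g=9.81):
    """Dimensionless cost of transport: energy used per unit weight per unit distance.

    Standard textbook definition CoT = E / (m * g * d). The paper may use a
    variant (e.g. power over weight times speed); this is only an illustration.
    """
    if mass_kg <= 0 or distance_m <= 0 or g <= 0:
        raise ValueError("mass, distance, and g must be positive")
    return energy_j / (mass_kg * g * distance_m)


if __name__ == "__main__":
    # Hypothetical numbers: a 10 kg walker spending 98.1 J to travel 1 m.
    print(cost_of_transport(98.1, 10.0, 1.0))
```

A lower value means less energy is spent to move a given weight over a given distance, which is why sinkage and slip on sand would be expected to raise it.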

FEDBUD: Joint Incentive and Privacy Optimization for Resource-Constrained Federated Learning

Apr 12, 2026

Abstract: Federated learning has become a popular paradigm for privacy protection and edge-based machine learning. However, defending against differential attacks and devising incentive strategies remain significant bottlenecks in this field. Despite recent work on privacy-aware incentive mechanism design for federated learning, few studies consider both data volume and noise level. In this paper, we propose a novel federated learning system called FEDBUD, which combines privacy and economic concerns by considering the joint influence of data volume and noise level on incentive strategy determination. In this system, the cloud server controls monetary payments to edge nodes, while edge nodes control the data volume and noise level that potentially impact the model performance of the cloud server. To determine the mutually optimal strategies for both sides, we model FEDBUD as a two-stage Stackelberg Game and derive the Nash Equilibrium using the mean-field estimator and virtual queue. Experimental results on real-world datasets demonstrate the outstanding performance of FEDBUD.

Satellite-Free Training for Drone-View Geo-Localization

Apr 02, 2026

Abstract: Drone-view geo-localization (DVGL) aims to determine the location of drones in GPS-denied environments by retrieving the corresponding geotagged satellite tile from a reference gallery given UAV observations of a location. In many existing formulations, these observations are represented by a single oblique UAV image. In contrast, our satellite-free setting is designed for multi-view UAV sequences, which are used to construct a geometry-normalized UAV-side location representation before cross-view retrieval. Existing approaches rely on satellite imagery during training, either through paired supervision or unsupervised alignment, which limits practical deployment when satellite data are unavailable or restricted. In this paper, we propose a satellite-free training (SFT) framework that converts drone imagery into cross-view compatible representations through three main stages: drone-side 3D scene reconstruction, geometry-based pseudo-orthophoto generation, and satellite-free feature aggregation for retrieval. Specifically, we first reconstruct dense 3D scenes from multi-view drone images using 3D Gaussian splatting and project the reconstructed geometry into pseudo-orthophotos via PCA-guided orthographic projection. This rendering stage operates directly on reconstructed scene geometry without requiring camera parameters at rendering time. Next, we refine these orthophotos with lightweight geometry-guided inpainting to obtain texture-complete drone-side views. Finally, we extract DINOv3 patch features from the generated orthophotos, learn a Fisher vector aggregation model solely from drone data, and reuse it at test time to encode satellite tiles for cross-view retrieval. Experimental results on University-1652 and SUES-200 show that our SFT framework substantially outperforms satellite-free generalization baselines and narrows the gap to methods trained with satellite imagery.

PoiCGAN: A Targeted Poisoning Based on Feature-Label Joint Perturbation in Federated Learning

Mar 24, 2026

Abstract: Federated Learning (FL), as a popular distributed learning paradigm, has shown outstanding performance in improving computational efficiency and protecting data privacy, and is widely applied in industrial image classification. However, due to its distributed nature, FL is vulnerable to threats from malicious clients, with poisoning attacks being a common threat. A major limitation of existing poisoning attack methods is their difficulty in bypassing model performance tests and defense mechanisms based on model anomaly detection. This often results in the detection and removal of poisoned models, which undermines their practical utility. To preserve industrial image classification performance while keeping the attack effective, we propose a targeted poisoning attack, PoiCGAN, based on feature-label collaborative perturbation. Our method modifies the inputs of the discriminator and generator in the Conditional Generative Adversarial Network (CGAN) to influence the training process, generating an ideal poison generator. This generator not only produces specific poisoned samples but also automatically performs label flipping. Experiments across various datasets show that our method achieves an attack success rate 83.97% higher than baseline methods, with a reduction of less than 8.87% in main-task accuracy. Moreover, the poisoned samples and malicious models exhibit high stealthiness.

No Labels, No Look-Ahead: Unsupervised Online Video Stabilization with Classical Priors

Feb 26, 2026

Abstract: We propose a new unsupervised framework for online video stabilization. Unlike methods based on deep learning that require paired stable and unstable datasets, our approach instantiates the classical stabilization pipeline with three stages and incorporates a multithreaded buffering mechanism. This design addresses three longstanding challenges in end-to-end learning: limited data, poor controllability, and inefficiency on hardware with constrained resources. Existing benchmarks focus mainly on handheld videos with a forward view in visible light, which restricts the applicability of stabilization to domains such as UAV nighttime remote sensing. To fill this gap, we introduce a new multimodal UAV aerial video dataset (UAV-Test). Experiments show that our method consistently outperforms state-of-the-art online stabilizers in both quantitative metrics and visual quality, while achieving performance comparable to offline methods.

Vision-Based Reasoning with Topology-Encoded Graphs for Anatomical Path Disambiguation in Robot-Assisted Endovascular Navigation

Feb 23, 2026

Abstract: Robotic-assisted percutaneous coronary intervention (PCI) is constrained by the inherent limitations of 2D Digital Subtraction Angiography (DSA). Unlike physicians, who can directly manipulate guidewires and integrate tactile feedback with their prior anatomical knowledge, teleoperated robotic systems must rely solely on 2D projections. This mode of operation, simultaneously lacking spatial context and tactile sensation, may give rise to projection-induced ambiguities at vascular bifurcations. To address this challenge, we propose a two-stage framework (SCAR-UNet-GAT) for real-time robotic path planning. In the first stage, SCAR-UNet, a spatial-coordinate-attention-regularized U-Net, is employed for accurate coronary vessel segmentation. The integration of multi-level attention mechanisms enhances the delineation of thin, tortuous vessels and improves robustness against imaging noise. From the resulting binary masks, vessel centerlines and bifurcation points are extracted, and geometric descriptors (e.g., branch diameter, intersection angles) are fused with local DSA patches to construct node features. In the second stage, a Graph Attention Network (GAT) reasons over the vessel graph to identify anatomically consistent and clinically feasible trajectories, effectively distinguishing true bifurcations from projection-induced false crossings. On a clinical DSA dataset, SCAR-UNet achieved a Dice coefficient of 93.1%. For path disambiguation, the proposed GAT-based method attained a success rate of 95.0% and a target-arrival success rate of 90.0%, substantially outperforming conventional shortest-path planning (60.0% and 55.0%) and heuristic-based planning (75.0% and 70.0%). Validation on a robotic platform further confirmed the practical feasibility and robustness of the proposed framework.
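The Dice coefficient reported for the segmentation stage can be illustrated with a minimal sketch of the standard definition, 2|A ∩ B| / (|A| + |B|), on flattened binary masks. The function name and the toy masks are assumptions for illustration only and are unrelated to the paper's data or evaluation code.

```python
def dice_coefficient(pred, target):
    """Dice overlap between two flattened binary masks of equal length.

    Returns 1.0 when both masks are empty (a common convention, assumed here).
    """
    if len(pred) != len(target):
        raise ValueError("masks must have the same length")
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        return 1.0
    return 2.0 * intersection / total


if __name__ == "__main__":
    # Toy masks: prediction marks two pixels, ground truth marks one of them.
    print(dice_coefficient([1, 1, 0, 0], [1, 0, 0, 0]))
```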

IESR: Efficient MCTS-Based Modular Reasoning for Text-to-SQL with Large Language Models

Feb 05, 2026

Abstract: Text-to-SQL is a key natural language processing task that maps natural language questions to SQL queries, enabling intuitive interaction with web-based databases. Although current methods perform well on benchmarks like BIRD and Spider, they struggle with complex reasoning, domain knowledge, and hypothetical queries, and remain costly in enterprise deployment. To address these issues, we propose a framework named IESR (Information Enhanced Structured Reasoning) for lightweight large language models that (i) leverages LLMs for key information understanding and schema linking while decoupling mathematical computation from SQL generation, (ii) integrates a multi-path reasoning mechanism based on Monte Carlo Tree Search (MCTS) with majority voting, and (iii) introduces a trajectory consistency verification module with a discriminator model to ensure accuracy and consistency. Experimental results demonstrate that IESR achieves state-of-the-art performance on the complex reasoning benchmark LogicCat (24.28 EX) and the Archer dataset (37.28 EX) using only compact models without fine-tuning. Furthermore, our analysis reveals that current coder models exhibit notable biases and deficiencies in physical knowledge, mathematical computation, and common-sense reasoning, highlighting important directions for future research. We released the code at https://github.com/Ffunkytao/IESR-SLM.
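The majority-voting step over multiple reasoning paths can be sketched as follows. This is only an illustration of voting over candidate SQL strings, not the IESR implementation: the MCTS search and the discriminator are omitted, and `normalize_sql` and `vote_sql` are hypothetical helper names.

```python
from collections import Counter


def normalize_sql(query):
    """Crude voting key: lowercase, collapse whitespace, drop a trailing semicolon.

    Lowercasing also changes string literals, so this key is only used for
    grouping candidates; the original query text is what gets returned.
    """
    return " ".join(query.lower().split()).rstrip(";").strip()


def vote_sql(candidates):
    """Return the first candidate whose normalized form is the most frequent."""
    if not candidates:
        raise ValueError("no candidates to vote on")
    keys = [normalize_sql(q) for q in candidates]
    counts = Counter(keys)
    best = max(counts.values())
    for query, key in zip(candidates, keys):
        if counts[key] == best:
            return query


if __name__ == "__main__":
    # Hypothetical outputs from three reasoning paths.
    paths = ["SELECT a FROM t;", "select a  from t", "SELECT b FROM t"]
    print(vote_sql(paths))
```

Voting on execution results instead of query text is a common alternative, since two syntactically different queries can return the same rows.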

AGILE: Hand-Object Interaction Reconstruction from Video via Agentic Generation

Feb 04, 2026

Abstract: Reconstructing dynamic hand-object interactions from monocular videos is critical for dexterous manipulation data collection and creating realistic digital twins for robotics and VR. However, current methods face two prohibitive barriers: (1) reliance on neural rendering often yields fragmented, non-simulation-ready geometries under heavy occlusion, and (2) dependence on brittle Structure-from-Motion (SfM) initialization leads to frequent failures on in-the-wild footage. To overcome these limitations, we introduce AGILE, a robust framework that shifts the paradigm from reconstruction to agentic generation for interaction learning. First, we employ an agentic pipeline where a Vision-Language Model (VLM) guides a generative model to synthesize a complete, watertight object mesh with high-fidelity texture, independent of video occlusions. Second, bypassing fragile SfM entirely, we propose a robust anchor-and-track strategy. We initialize the object pose at a single interaction onset frame using a foundation model and propagate it temporally by leveraging the strong visual similarity between our generated asset and video observations. Finally, a contact-aware optimization integrates semantic, geometric, and interaction stability constraints to enforce physical plausibility. Extensive experiments on HO3D, DexYCB, and in-the-wild videos reveal that AGILE outperforms baselines in global geometric accuracy while demonstrating exceptional robustness on challenging sequences where prior art frequently collapses. By prioritizing physical validity, our method produces simulation-ready assets validated via real-to-sim retargeting for robotic applications.

ProFit: Leveraging High-Value Signals in SFT via Probability-Guided Token Selection

Jan 14, 2026

Abstract: Supervised fine-tuning (SFT) is a fundamental post-training strategy to align Large Language Models (LLMs) with human intent. However, traditional SFT often ignores the one-to-many nature of language by forcing alignment with a single reference answer, leading to the model overfitting to non-core expressions. Although our empirical analysis suggests that introducing multiple reference answers can mitigate this issue, the prohibitive data and computational costs necessitate a strategic shift: prioritizing the mitigation of single-reference overfitting over the costly pursuit of answer diversity. To achieve this, we reveal the intrinsic connection between token probability and semantic importance: high-probability tokens carry the core logical framework, while low-probability tokens are mostly replaceable expressions. Based on this insight, we propose ProFit, which selectively masks low-probability tokens to prevent surface-level overfitting. Extensive experiments confirm that ProFit consistently outperforms traditional SFT baselines on general reasoning and mathematical benchmarks.
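The idea of masking low-probability tokens in the SFT loss can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the ProFit implementation: it assumes the probabilities are those the model assigns to each reference token, and the fixed `threshold` value is hypothetical (the abstract does not say how tokens are selected).

```python
import math


def masked_sft_loss(token_probs, threshold=0.5):
    """Mean negative log-likelihood over reference tokens with prob >= threshold.

    token_probs: model probability of each reference token (assumed input).
    Tokens below the threshold are masked out and contribute nothing.
    """
    if not 0.0 < threshold <= 1.0:
        raise ValueError("threshold must be in (0, 1]")
    kept = [p for p in token_probs if p >= threshold]
    if not kept:
        return 0.0  # every token was masked, so there is no loss signal
    return -sum(math.log(p) for p in kept) / len(kept)


if __name__ == "__main__":
    # Hypothetical per-token probabilities; the 0.1 token is masked out.
    print(masked_sft_loss([0.9, 0.1, 0.8], threshold=0.5))
```

In a real training loop the same mask would be applied to the per-token cross-entropy tensor before averaging, so masked tokens produce no gradient.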

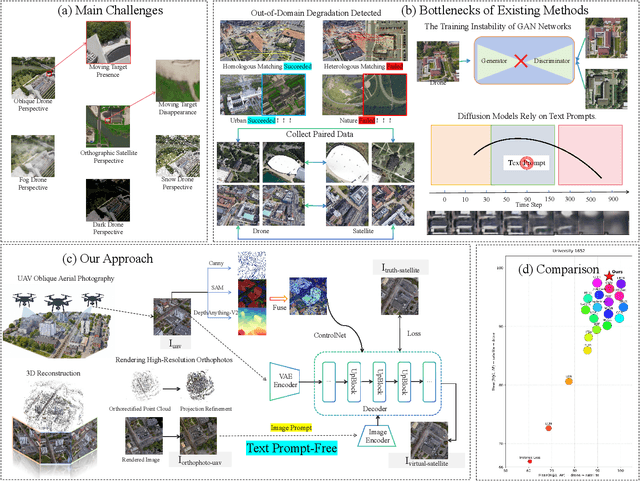

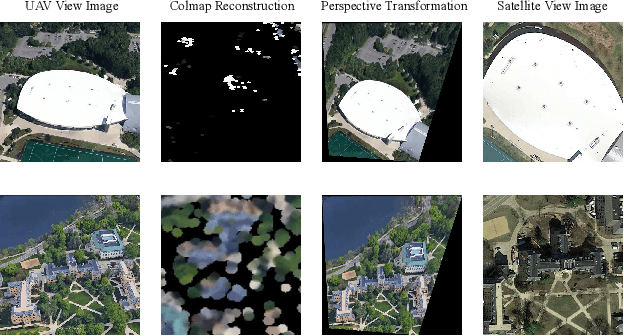

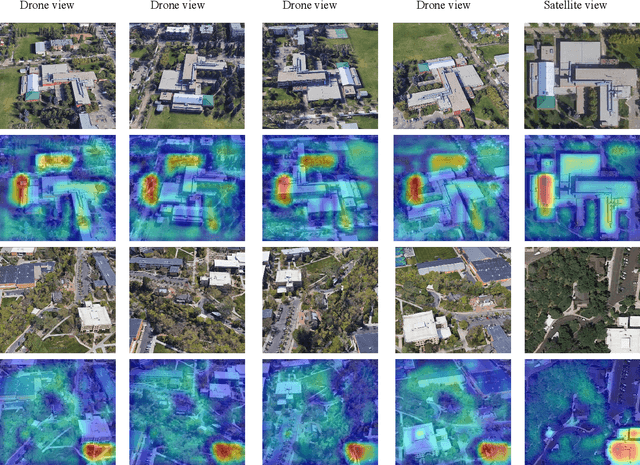

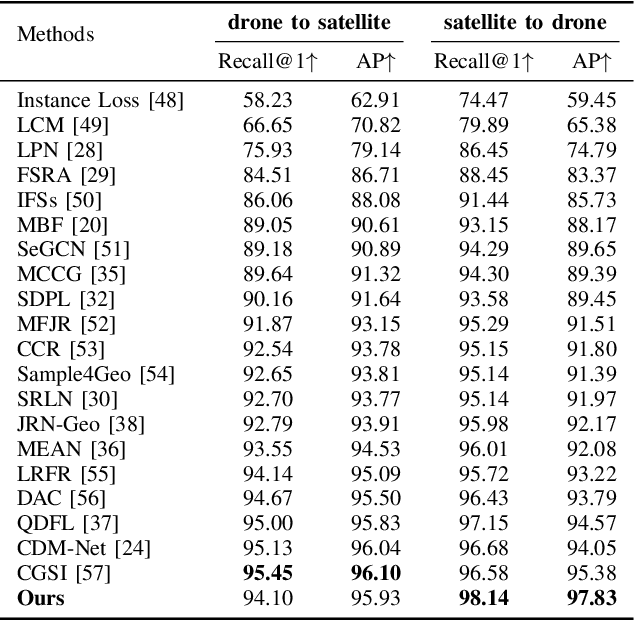

DiffusionUavLoc: Visually Prompted Diffusion for Cross-View UAV Localization

Nov 09, 2025

Abstract: With the rapid growth of the low-altitude economy, unmanned aerial vehicles (UAVs) have become key platforms for measurement and tracking in intelligent patrol systems. However, in GNSS-denied environments, localization schemes that rely solely on satellite signals are prone to failure. Cross-view image retrieval-based localization is a promising alternative, yet substantial geometric and appearance domain gaps exist between oblique UAV views and nadir satellite orthophotos. Moreover, conventional approaches often depend on complex network architectures, text prompts, or large amounts of annotation, which hinders generalization. To address these issues, we propose DiffusionUavLoc, a cross-view localization framework that is image-prompted, text-free, diffusion-centric, and employs a VAE for unified representation. We first use training-free geometric rendering to synthesize pseudo-satellite images from UAV imagery as structural prompts. We then design a text-free conditional diffusion model that fuses multimodal structural cues to learn features robust to viewpoint changes. At inference, descriptors are computed at a fixed time step t and compared using cosine similarity. On University-1652 and SUES-200, the method performs competitively for cross-view localization, especially for satellite-to-drone retrieval on University-1652. Our data and code will be published at the following URL: https://github.com/liutao23/DiffusionUavLoc.git.
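The retrieval step described at inference time, comparing descriptors by cosine similarity, can be sketched as below. The descriptor values, tile names, and the `rank_gallery` helper are illustrative assumptions; extracting descriptors from the diffusion model is not shown.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_gallery(query, gallery):
    """Return gallery keys sorted from most to least similar to the query."""
    scores = {name: cosine_similarity(query, desc) for name, desc in gallery.items()}
    return sorted(scores, key=scores.get, reverse=True)


if __name__ == "__main__":
    # Hypothetical 2-D descriptors for three satellite tiles and one UAV query.
    gallery = {"tile_a": [1.0, 0.0], "tile_b": [0.0, 1.0], "tile_c": [1.0, 1.0]}
    print(rank_gallery([2.0, 0.1], gallery))
```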
