"Time": models, code, and papers

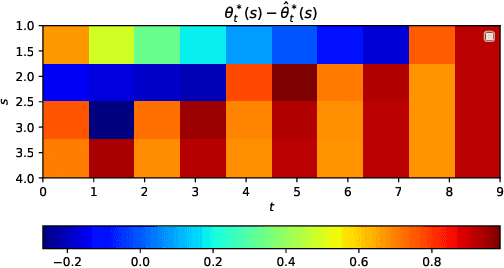

Online Estimation and Optimization of Utility-Based Shortfall Risk

Nov 16, 2021

Utility-Based Shortfall Risk (UBSR) is a risk metric that is increasingly popular in financial applications, owing to certain desirable properties that it enjoys. We consider the problem of estimating UBSR in a recursive setting, where samples from the underlying loss distribution are available one at a time. We cast the UBSR estimation problem as a root-finding problem, and propose stochastic approximation-based estimation schemes. We derive non-asymptotic bounds on the estimation error in the number of samples. We also consider the problem of UBSR optimization within a parameterized class of random variables. We propose a stochastic gradient descent based algorithm for UBSR optimization, and derive non-asymptotic bounds on its convergence.
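The root-finding view of UBSR estimation can be illustrated with a minimal sketch. It assumes the common definition in which the UBSR of a loss X is the root t of E[l(X - t)] = λ for an increasing convex loss function l, and it uses a plain Robbins-Monro update. The function name `estimate_ubsr`, the step-size schedule, and the Gaussian test case are illustrative assumptions, not the paper's exact scheme.

```python
import math
import random


def estimate_ubsr(sample_loss, loss_fn, lam, n_samples,
                  step_scale=2.0, offset=10.0, t0=0.0):
    """Robbins-Monro iteration for the root of g(t) = E[l(X - t)] - lam.

    sample_loss: callable returning one sample of the loss X (hypothetical name).
    loss_fn:     increasing convex loss function l.
    """
    t = t0
    for n in range(1, n_samples + 1):
        x = sample_loss()                    # samples arrive one at a time
        step = step_scale / (n + offset)     # diminishing step size (assumed schedule)
        # g is decreasing in t, so t moves up when l(x - t) exceeds lam.
        t += step * (loss_fn(x - t) - lam)
    return t


# Check against a case with a known answer: exponential loss and Gaussian X.
# With l(u) = exp(beta * u) and lam = 1, the root is mu + beta * sigma^2 / 2.
rng = random.Random(0)
beta, mu, sigma = 0.5, 0.0, 1.0
estimate = estimate_ubsr(
    sample_loss=lambda: rng.gauss(mu, sigma),
    loss_fn=lambda u: math.exp(beta * u),
    lam=1.0,
    n_samples=200_000,
)
closed_form = mu + beta * sigma ** 2 / 2
print(estimate, closed_form)
```

The update only needs the current iterate and the newest sample, which matches the recursive setting described in the abstract.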

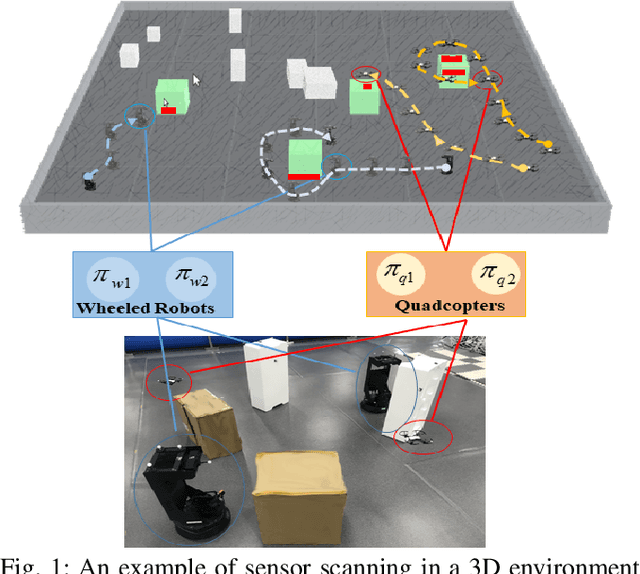

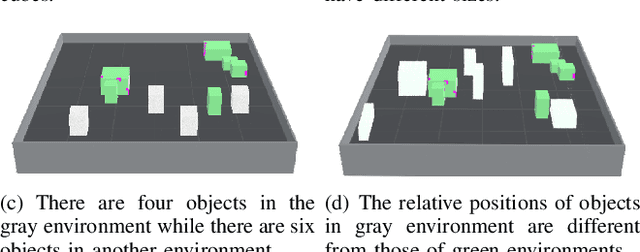

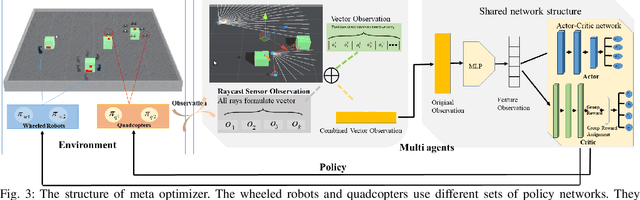

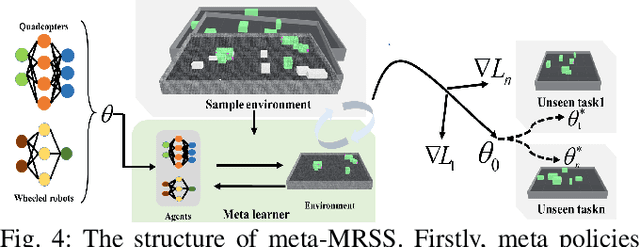

Meta Reinforcement Learning Based Sensor Scanning in 3D Uncertain Environments for Heterogeneous Multi-Robot Systems

Sep 28, 2021

We study a novel problem that tackles learning-based sensor scanning in 3D and uncertain environments with heterogeneous multi-robot systems. Our motivation is two-fold. First, 3D environments are complex, and heterogeneous multi-robot systems can intuitively facilitate sensor scanning by taking full advantage of sensors with different capabilities. Second, in uncertain environments (e.g. rescue), time is of great significance. Since the learning process normally takes time to train and adapt to a new environment, we need to find an effective way to explore and adapt quickly. To this end, we present a meta-learning approach to improve the exploration and adaptation capabilities. The experimental results demonstrate that our method can outperform other methods by approximately 15%-27% on success rate and 70%-75% on adaptation speed.

Neural Controlled Differential Equations for Irregular Time Series

May 18, 2020

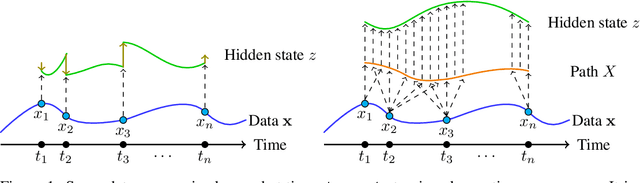

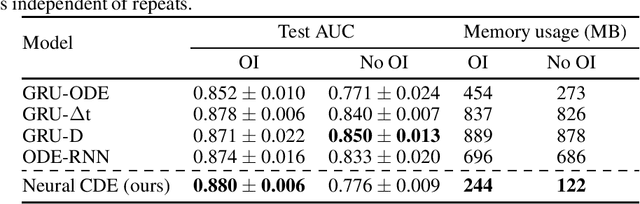

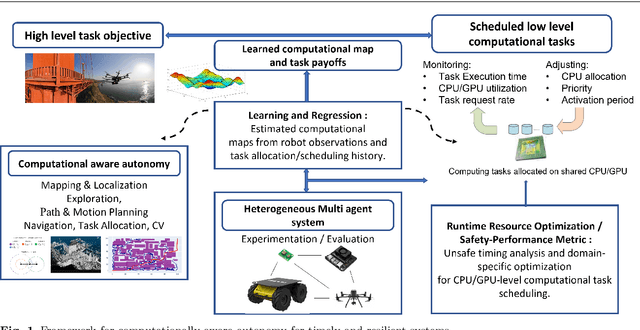

Neural ordinary differential equations are an attractive option for modelling temporal dynamics. However, a fundamental issue is that the solution to an ordinary differential equation is determined by its initial condition, and there is no mechanism for adjusting the trajectory based on subsequent observations. Here, we demonstrate how this may be resolved through the well-understood mathematics of *controlled differential equations*. The resulting *neural controlled differential equation* model is directly applicable to the general setting of partially-observed irregularly-sampled multivariate time series, and (unlike previous work on this problem) it may utilise memory-efficient adjoint-based backpropagation even across observations. We demonstrate that our model achieves state-of-the-art performance against similar (ODE or RNN based) models in empirical studies on a range of datasets. Finally we provide theoretical results demonstrating universal approximation, and that our model subsumes alternative ODE models.
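To make the idea concrete, here is a rough sketch of how a controlled differential equation dz = f(z) dX lets later observations steer the hidden state. It assumes a piecewise-linear path through the observations and an explicit Euler step, with time included as the first channel so that irregular sampling is visible to the model. The helper names `make_vector_field` and `cde_euler`, and the fixed random weights, are hypothetical; the actual model may use a different interpolation, a learned vector field, and a proper ODE solver.

```python
import math
import random


def make_vector_field(hidden, channels, seed=0):
    """Return f: R^hidden -> R^(hidden x channels) with fixed random weights.

    A stand-in for a trained network; the weights here are not learned.
    """
    rng = random.Random(seed)
    weights = [[[rng.uniform(-0.5, 0.5) for _ in range(hidden)]
                for _ in range(channels)]
               for _ in range(hidden)]

    def f(z):
        return [[math.tanh(sum(w * zk for w, zk in zip(weights[i][j], z)))
                 for j in range(channels)]
                for i in range(hidden)]

    return f


def cde_euler(f, z0, path):
    """Explicit Euler scheme: z_{k+1} = z_k + f(z_k) (X_{k+1} - X_k)."""
    z = list(z0)
    for prev, cur in zip(path, path[1:]):
        dx = [c - p for p, c in zip(prev, cur)]   # increment of the control path
        field = f(z)                              # hidden x channels matrix
        z = [zi + sum(fij * dxj for fij, dxj in zip(row, dx))
             for zi, row in zip(z, field)]
    return z


f = make_vector_field(hidden=3, channels=2)
z0 = [0.1, -0.2, 0.3]
# Each observation is (time, value); the time stamps are irregular.
path_a = [[0.0, 1.0], [0.4, 1.5], [1.0, 2.0]]
path_b = [[0.0, 1.0], [0.4, 1.5], [1.0, 0.5]]   # differs only in the last value
flat = [[0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]     # no change in the control path
print(cde_euler(f, z0, path_a))
print(cde_euler(f, z0, path_b))
```

Unlike an ODE driven only by its initial condition, the final state here changes when a later observation changes, and it stays at `z0` when the control path does not move.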

Towards Computational Awareness in Autonomous Robots: An Empirical Study of Computational Kernels

Dec 20, 2021

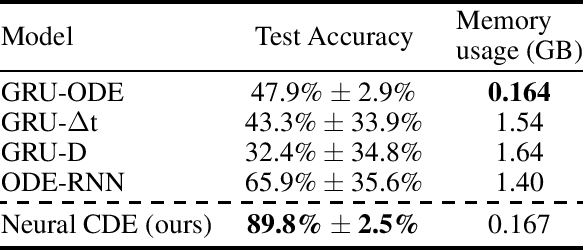

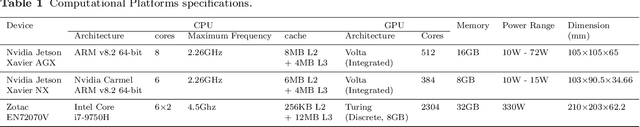

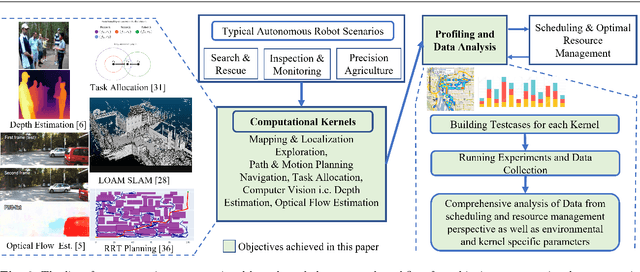

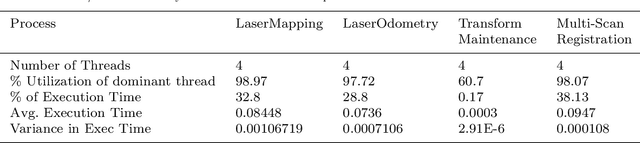

The potential impact of autonomous robots on everyday life is evident in emerging applications such as precision agriculture, search and rescue, and infrastructure inspection. However, such applications necessitate operation in unknown and unstructured environments with a broad and sophisticated set of objectives, all under strict computation and power limitations. We therefore argue that the computational kernels enabling robotic autonomy must be scheduled and optimized to guarantee timely and correct behavior, while allowing for reconfiguration of scheduling parameters at run time. In this paper, we consider a necessary first step towards this goal of computational awareness in autonomous robots: an empirical study of a base set of computational kernels from the resource management perspective. Specifically, we conduct a data-driven study of the timing, power, and memory performance of kernels for localization and mapping, path planning, task allocation, depth estimation, and optical flow, across three embedded computing platforms. We profile and analyze these kernels to provide insight into scheduling and dynamic resource management for computation-aware autonomous robots. Notably, our results show that there is a correlation of kernel performance with a robot's operational environment, justifying the notion of computation-aware robots and why our work is a crucial step towards this goal.

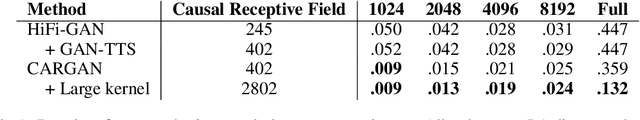

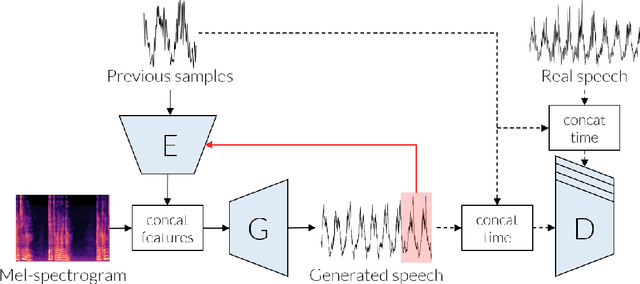

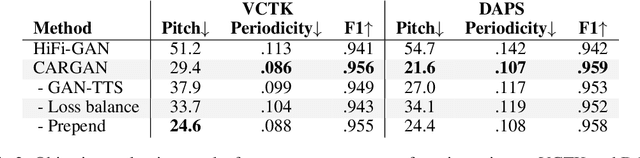

Chunked Autoregressive GAN for Conditional Waveform Synthesis

Oct 19, 2021

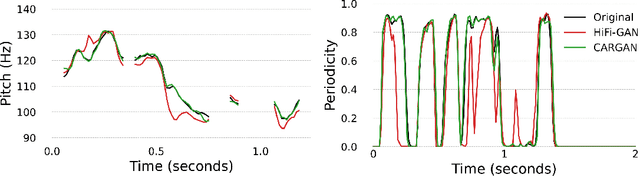

Conditional waveform synthesis models learn a distribution of audio waveforms given conditioning such as text, mel-spectrograms, or MIDI. These systems employ deep generative models that model the waveform via either sequential (autoregressive) or parallel (non-autoregressive) sampling. Generative adversarial networks (GANs) have become a common choice for non-autoregressive waveform synthesis. However, state-of-the-art GAN-based models produce artifacts when performing mel-spectrogram inversion. In this paper, we demonstrate that these artifacts correspond to an inability of the generator to learn accurate pitch and periodicity. We show that simple pitch and periodicity conditioning is insufficient for reducing this error relative to using autoregression. We discuss the inductive bias that autoregression provides for learning the relationship between instantaneous frequency and phase, and show that this inductive bias holds even when autoregressively sampling large chunks of the waveform during each forward pass. Relative to prior state-of-the-art GAN-based models, our proposed model, Chunked Autoregressive GAN (CARGAN), reduces pitch error by 40-60%, reduces training time by 58%, maintains a fast generation speed suitable for real-time or interactive applications, and maintains or improves subjective quality.

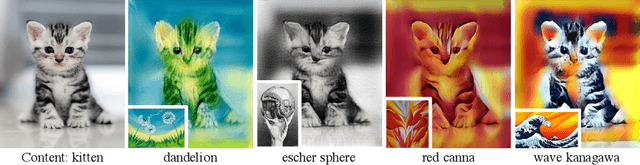

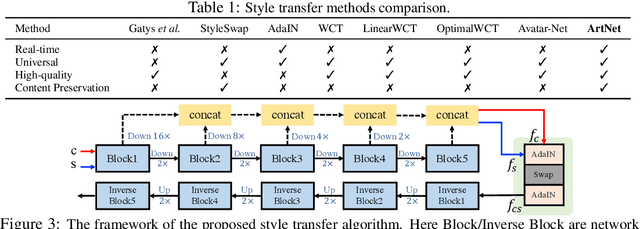

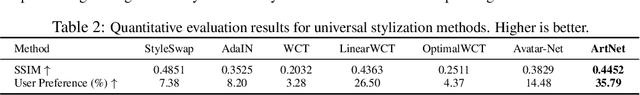

Real-time Universal Style Transfer on High-resolution Images via Zero-channel Pruning

Jun 23, 2020

Extracting effective deep features to represent content and style information is the key to universal style transfer. Most existing algorithms use VGG19 as the feature extractor, which incurs a high computational cost and impedes real-time style transfer on high-resolution images. In this work, we propose a lightweight alternative architecture, ArtNet, which is based on GoogLeNet and then pruned by a novel channel pruning method named Zero-channel Pruning, specially designed for style transfer approaches. In addition, we propose a theoretically sound sandwich swap transform (S2) module to transfer deep features, which can create a pleasing holistic appearance and good local textures with an improved content preservation ability. By using ArtNet and S2, our method is 2.3 to 107.4 times faster than state-of-the-art approaches. The comprehensive experiments demonstrate that ArtNet can achieve universal, real-time, and high-quality style transfer on high-resolution images simultaneously (68.03 FPS on 512 × 512 images).

Densely-Populated Traffic Detection using YOLOv5 and Non-Maximum Suppression Ensembling

Aug 27, 2021

Vehicular object detection is the heart of any intelligent traffic system. It is essential for urban traffic management. R-CNN, Fast R-CNN, Faster R-CNN and YOLO were some of the earlier state-of-the-art models. Region-based CNN methods suffer from high inference time, which makes them unrealistic to use in real time. YOLO, on the other hand, struggles to detect small objects that appear in groups. In this paper, we propose a method that can locate and classify vehicular objects from a given densely crowded image using YOLOv5. The shortcoming of YOLO was solved by ensembling 4 different models. Our proposed model performs well on images taken from both top view and side view of the street in both day and night. The performance of our proposed model was measured on the Dhaka AI dataset, which contains densely crowded vehicular images. Our experiment shows that our model achieved mAP@0.5 of 0.458 with an inference time of 0.75 sec, which outperforms other state-of-the-art models on performance. Hence, the model can be implemented in the street for real-time traffic detection, which can be used for traffic control and data collection.
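One simple way to combine several detectors with non-maximum suppression is to pool their detections and run greedy NMS over the pooled set. The sketch below is class-agnostic and uses an assumed box format `(x1, y1, x2, y2, score)`; the function names and the 0.5 IoU threshold are illustrative choices, not details taken from the paper.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2, ...)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms_ensemble(per_model_detections, iou_threshold=0.5):
    """Pool detections from all models, then apply greedy NMS.

    per_model_detections: list of lists of (x1, y1, x2, y2, score) tuples.
    """
    pooled = [det for dets in per_model_detections for det in dets]
    pooled.sort(key=lambda det: det[4], reverse=True)   # highest score first
    kept = []
    for det in pooled:
        # Keep a box only if it does not overlap too much with a kept box.
        if all(iou(det, k) < iou_threshold for k in kept):
            kept.append(det)
    return kept


model_a = [(0, 0, 10, 10, 0.9)]
model_b = [(1, 1, 11, 11, 0.8), (20, 20, 30, 30, 0.7)]
merged = nms_ensemble([model_a, model_b])
print(merged)
```

In this example the two overlapping boxes (IoU of 81/119, about 0.68) collapse to the higher-scoring one, while the distant box found by only one model is retained.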

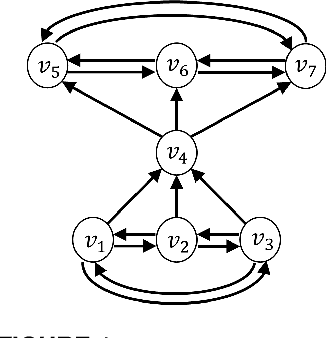

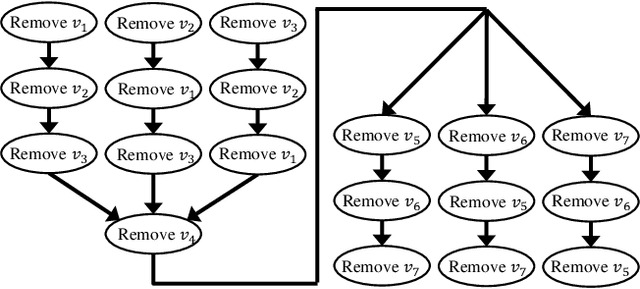

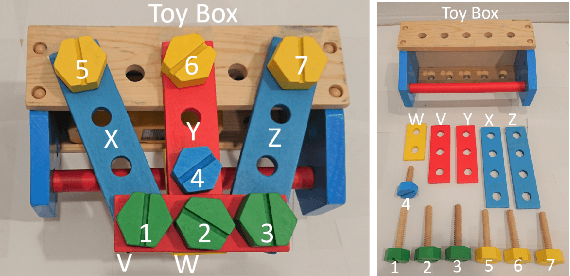

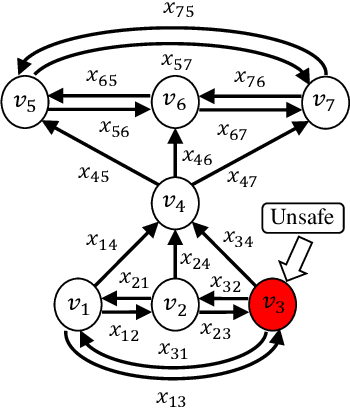

A Real-Time Receding Horizon Sequence Planner for Disassembly in A Human-Robot Collaboration Setting

Jul 06, 2020

Product disassembly is a labor-intensive process and is far from being automated. Typically, disassembly is not robust enough to handle product varieties from different shapes, models, and physical uncertainties due to component imperfections, damage throughout component usage, or insufficient product information. To overcome these difficulties and to automate the disassembly procedure through human-robot collaboration without excessive computational cost, this paper proposes a real-time receding horizon sequence planner that distributes tasks between the robot and the human operator while taking real-time human motion into consideration. The sequence planner aims to address several issues in the disassembly line, such as varying orientations, safety constraints of human operators, uncertainty of human operation, and the computational cost of a large number of disassembly tasks. The proposed disassembly sequence planner identifies both the positions and orientations of the to-be-disassembled items, as well as the location of the human operator, and obtains an optimal disassembly sequence that follows disassembly rules and safety constraints for human operation. Experimental tests have been conducted to validate the proposed planner: the robot can locate and disassemble the components following the optimal sequence, explicitly consider the human operator's real-time motion, and collaborate with the human operator without violating safety constraints.

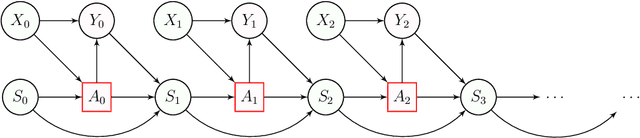

Stateful Offline Contextual Policy Evaluation and Learning

Oct 19, 2021

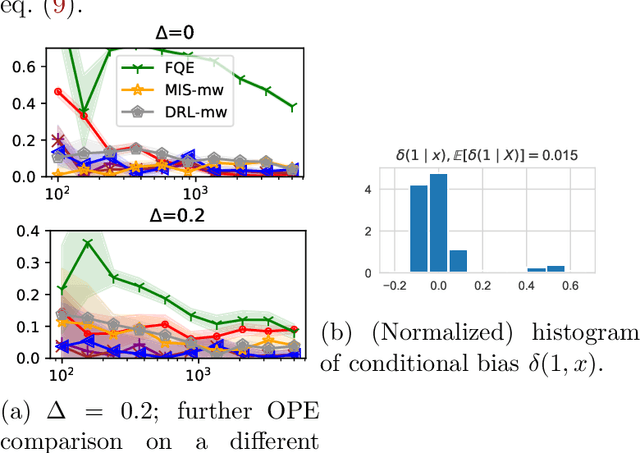

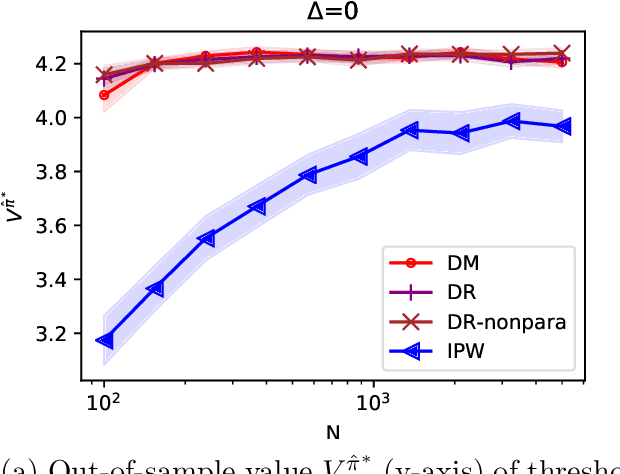

We study off-policy evaluation and learning from sequential data in a structured class of Markov decision processes that arise from repeated interactions with an exogenous sequence of arrivals with contexts, which generate unknown individual-level responses to agent actions. This model can be thought of as an offline generalization of contextual bandits with resource constraints. We formalize the relevant causal structure of problems such as dynamic personalized pricing and other operations management problems in the presence of potentially high-dimensional user types. The key insight is that an individual-level response is often not causally affected by the state variable and can therefore easily be generalized across timesteps and states. When this is true, we study implications for (doubly robust) off-policy evaluation and learning by instead leveraging single time-step evaluation, estimating the expectation over a single arrival via data from a population, for fitted-value iteration in a marginal MDP. We study sample complexity and analyze error amplification that leads to the persistence, rather than attenuation, of confounding error over time. In simulations of dynamic and capacitated pricing, we show improved out-of-sample policy performance in this class of relevant problems.
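For readers unfamiliar with doubly robust evaluation, the single time-step building block can be sketched as follows. This is the standard contextual-bandit doubly robust estimator for a deterministic target policy, not the paper's full procedure for the marginal MDP. The log format `(context, action, reward, propensity)` and all function names are assumptions made for illustration.

```python
def doubly_robust_value(logs, policy, reward_model):
    """Single-step doubly robust estimate of a deterministic policy's value.

    logs:         list of (context, action, reward, propensity) tuples, where
                  propensity is the logging probability of the logged action.
    policy:       callable mapping a context to the target policy's action.
    reward_model: callable (context, action) -> predicted reward.
    """
    total = 0.0
    for x, a, r, p in logs:
        target = policy(x)
        estimate = reward_model(x, target)          # model-based term
        if a == target:
            # Importance-weighted correction of the model's residual.
            estimate += (r - reward_model(x, a)) / p
        total += estimate
    return total / len(logs)


# Toy logs: two contexts, two actions, uniform logging policy (p = 0.5).
logs = [(0, 0, 1.0, 0.5), (0, 1, 0.0, 0.5), (1, 1, 2.0, 0.5), (1, 0, 0.0, 0.5)]
true_rewards = {(0, 0): 1.0, (0, 1): 0.0, (1, 1): 2.0, (1, 0): 0.0}


def match_context(x):
    return x                      # target policy: choose the action equal to x


def zero_model(x, a):
    return 0.0                    # a deliberately useless reward model


def perfect_model(x, a):
    return true_rewards[(x, a)]   # a reward model that is exactly right


print(doubly_robust_value(logs, match_context, zero_model))
print(doubly_robust_value(logs, match_context, perfect_model))
```

Both calls recover the same value on this toy data, which shows the "doubly robust" property in miniature: the estimate is correct when either the propensities or the reward model are right.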

A Bayesian Treatment of Real-to-Sim for Deformable Object Manipulation

Dec 09, 2021

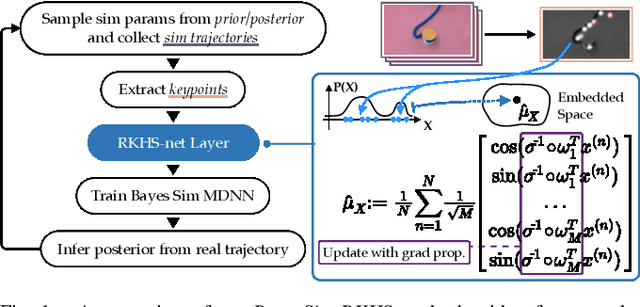

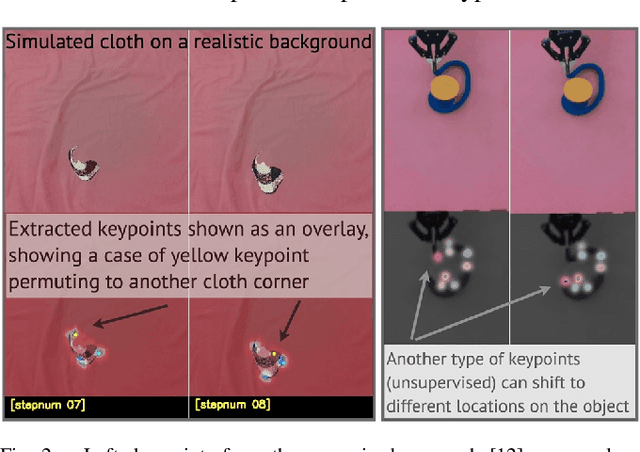

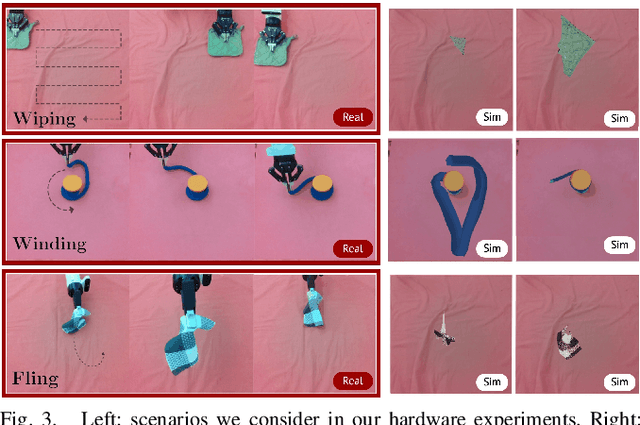

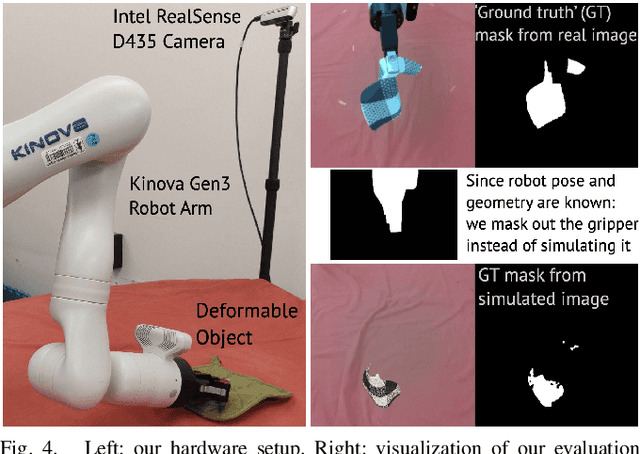

Deformable object manipulation remains a challenging task in robotics research. Conventional techniques for parameter inference and state estimation typically rely on a precise definition of the state space and its dynamics. While this is appropriate for rigid objects and robot states, it is challenging to define the state space of a deformable object and how it evolves in time. In this work, we pose the problem of inferring physical parameters of deformable objects as a probabilistic inference task defined with a simulator. We propose a novel methodology for extracting state information from image sequences via a technique to represent the state of a deformable object as a distribution embedding. This allows us to incorporate noisy state observations directly into modern Bayesian simulation-based inference tools in a principled manner. Our experiments confirm that we can estimate posterior distributions of physical properties, such as elasticity, friction and scale, of highly deformable objects such as cloth and ropes. Overall, our method addresses the real-to-sim problem probabilistically and helps to better represent the evolution of the state of deformable objects.
