Xu Li

Britton Chance Center for Biomedical Photonics, Wuhan National Laboratory for Optoelectronics-Huazhong University of Science and Technology, China

Low-Resource Domain Adaptation for Speech LLMs via Text-Only Fine-Tuning

Jun 06, 2025

Abstract:Recent advances in automatic speech recognition (ASR) have combined speech encoders with large language models (LLMs) through projection, forming Speech LLMs with strong performance. However, adapting them to new domains remains challenging, especially in low-resource settings where paired speech-text data is scarce. We propose a text-only fine-tuning strategy for Speech LLMs using unpaired target-domain text without requiring additional audio. To preserve speech-text alignment, we introduce a real-time evaluation mechanism during fine-tuning. This enables effective domain adaptation while maintaining source-domain performance. Experiments on LibriSpeech, SlideSpeech, and Medical datasets show that our method achieves competitive recognition performance, with minimal degradation compared to full audio-text fine-tuning. It also improves generalization to new domains without catastrophic forgetting, highlighting the potential of text-only fine-tuning for low-resource domain adaptation of ASR.

Fewer Hallucinations, More Verification: A Three-Stage LLM-Based Framework for ASR Error Correction

May 30, 2025

Abstract:Automatic Speech Recognition (ASR) error correction aims to correct recognition errors while preserving accurate text. Although traditional approaches demonstrate moderate effectiveness, LLMs offer a paradigm that eliminates the need for training and labeled data. However, directly using LLMs will encounter hallucinations problem, which may lead to the modification of the correct text. To address this problem, we propose the Reliable LLM Correction Framework (RLLM-CF), which consists of three stages: (1) error pre-detection, (2) chain-of-thought sub-tasks iterative correction, and (3) reasoning process verification. The advantage of our method is that it does not require additional information or fine-tuning of the model, and ensures the correctness of the LLM correction under multi-pass programming. Experiments on AISHELL-1, AISHELL-2, and Librispeech show that the GPT-4o model enhanced by our framework achieves 21%, 11%, 9%, and 11.4% relative reductions in CER/WER.

MM-Prompt: Cross-Modal Prompt Tuning for Continual Visual Question Answering

May 26, 2025Abstract:Continual Visual Question Answering (CVQA) based on pre-trained models(PTMs) has achieved promising progress by leveraging prompt tuning to enable continual multi-modal learning. However, most existing methods adopt cross-modal prompt isolation, constructing visual and textual prompts separately, which exacerbates modality imbalance and leads to degraded performance over time. To tackle this issue, we propose MM-Prompt, a novel framework incorporating cross-modal prompt query and cross-modal prompt recovery. The former enables balanced prompt selection by incorporating cross-modal signals during query formation, while the latter promotes joint prompt reconstruction through iterative cross-modal interactions, guided by an alignment loss to prevent representational drift. Extensive experiments show that MM-Prompt surpasses prior approaches in accuracy and knowledge retention, while maintaining balanced modality engagement throughout continual learning.

AutoGEEval: A Multimodal and Automated Framework for Geospatial Code Generation on GEE with Large Language Models

May 19, 2025Abstract:Geospatial code generation is emerging as a key direction in the integration of artificial intelligence and geoscientific analysis. However, there remains a lack of standardized tools for automatic evaluation in this domain. To address this gap, we propose AutoGEEval, the first multimodal, unit-level automated evaluation framework for geospatial code generation tasks on the Google Earth Engine (GEE) platform powered by large language models (LLMs). Built upon the GEE Python API, AutoGEEval establishes a benchmark suite (AutoGEEval-Bench) comprising 1325 test cases that span 26 GEE data types. The framework integrates both question generation and answer verification components to enable an end-to-end automated evaluation pipeline-from function invocation to execution validation. AutoGEEval supports multidimensional quantitative analysis of model outputs in terms of accuracy, resource consumption, execution efficiency, and error types. We evaluate 18 state-of-the-art LLMs-including general-purpose, reasoning-augmented, code-centric, and geoscience-specialized models-revealing their performance characteristics and potential optimization pathways in GEE code generation. This work provides a unified protocol and foundational resource for the development and assessment of geospatial code generation models, advancing the frontier of automated natural language to domain-specific code translation.

YuE: Scaling Open Foundation Models for Long-Form Music Generation

Mar 11, 2025Abstract:We tackle the task of long-form music generation--particularly the challenging \textbf{lyrics-to-song} problem--by introducing YuE, a family of open foundation models based on the LLaMA2 architecture. Specifically, YuE scales to trillions of tokens and generates up to five minutes of music while maintaining lyrical alignment, coherent musical structure, and engaging vocal melodies with appropriate accompaniment. It achieves this through (1) track-decoupled next-token prediction to overcome dense mixture signals, (2) structural progressive conditioning for long-context lyrical alignment, and (3) a multitask, multiphase pre-training recipe to converge and generalize. In addition, we redesign the in-context learning technique for music generation, enabling versatile style transfer (e.g., converting Japanese city pop into an English rap while preserving the original accompaniment) and bidirectional generation. Through extensive evaluation, we demonstrate that YuE matches or even surpasses some of the proprietary systems in musicality and vocal agility. In addition, fine-tuning YuE enables additional controls and enhanced support for tail languages. Furthermore, beyond generation, we show that YuE's learned representations can perform well on music understanding tasks, where the results of YuE match or exceed state-of-the-art methods on the MARBLE benchmark. Keywords: lyrics2song, song generation, long-form, foundation model, music generation

NoT: Federated Unlearning via Weight Negation

Mar 07, 2025Abstract:Federated unlearning (FU) aims to remove a participant's data contributions from a trained federated learning (FL) model, ensuring privacy and regulatory compliance. Traditional FU methods often depend on auxiliary storage on either the client or server side or require direct access to the data targeted for removal-a dependency that may not be feasible if the data is no longer available. To overcome these limitations, we propose NoT, a novel and efficient FU algorithm based on weight negation (multiplying by -1), which circumvents the need for additional storage and access to the target data. We argue that effective and efficient unlearning can be achieved by perturbing model parameters away from the set of optimal parameters, yet being well-positioned for quick re-optimization. This technique, though seemingly contradictory, is theoretically grounded: we prove that the weight negation perturbation effectively disrupts inter-layer co-adaptation, inducing unlearning while preserving an approximate optimality property, thereby enabling rapid recovery. Experimental results across three datasets and three model architectures demonstrate that NoT significantly outperforms existing baselines in unlearning efficacy as well as in communication and computational efficiency.

Capturing Rich Behavior Representations: A Dynamic Action Semantic-Aware Graph Transformer for Video Captioning

Feb 19, 2025Abstract:Existing video captioning methods merely provide shallow or simplistic representations of object behaviors, resulting in superficial and ambiguous descriptions. However, object behavior is dynamic and complex. To comprehensively capture the essence of object behavior, we propose a dynamic action semantic-aware graph transformer. Firstly, a multi-scale temporal modeling module is designed to flexibly learn long and short-term latent action features. It not only acquires latent action features across time scales, but also considers local latent action details, enhancing the coherence and sensitiveness of latent action representations. Secondly, a visual-action semantic aware module is proposed to adaptively capture semantic representations related to object behavior, enhancing the richness and accurateness of action representations. By harnessing the collaborative efforts of these two modules,we can acquire rich behavior representations to generate human-like natural descriptions. Finally, this rich behavior representations and object representations are used to construct a temporal objects-action graph, which is fed into the graph transformer to model the complex temporal dependencies between objects and actions. To avoid adding complexity in the inference phase, the behavioral knowledge of the objects will be distilled into a simple network through knowledge distillation. The experimental results on MSVD and MSR-VTT datasets demonstrate that the proposed method achieves significant performance improvements across multiple metrics.

Baichuan-Omni-1.5 Technical Report

Jan 26, 2025

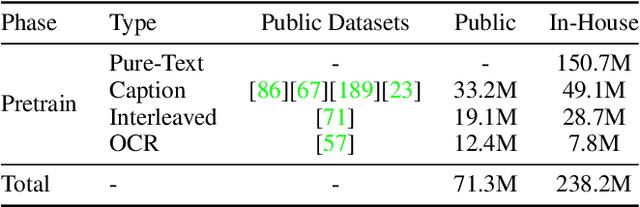

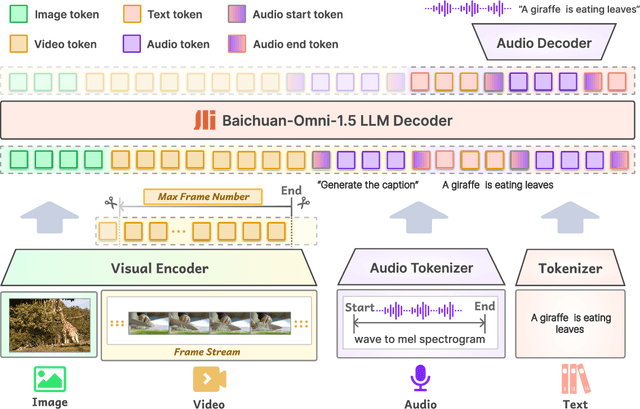

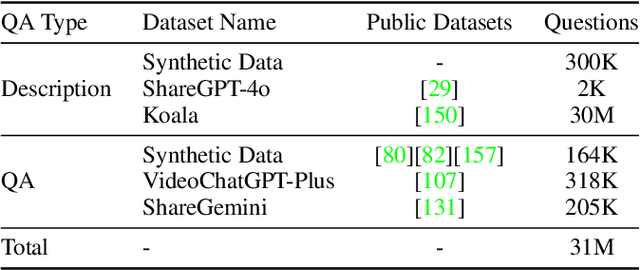

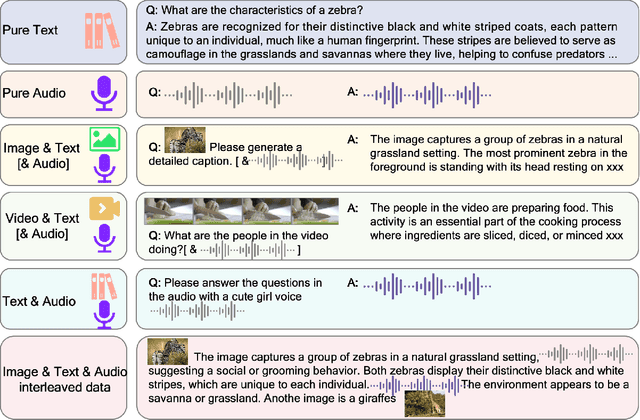

Abstract:We introduce Baichuan-Omni-1.5, an omni-modal model that not only has omni-modal understanding capabilities but also provides end-to-end audio generation capabilities. To achieve fluent and high-quality interaction across modalities without compromising the capabilities of any modality, we prioritized optimizing three key aspects. First, we establish a comprehensive data cleaning and synthesis pipeline for multimodal data, obtaining about 500B high-quality data (text, audio, and vision). Second, an audio-tokenizer (Baichuan-Audio-Tokenizer) has been designed to capture both semantic and acoustic information from audio, enabling seamless integration and enhanced compatibility with MLLM. Lastly, we designed a multi-stage training strategy that progressively integrates multimodal alignment and multitask fine-tuning, ensuring effective synergy across all modalities. Baichuan-Omni-1.5 leads contemporary models (including GPT4o-mini and MiniCPM-o 2.6) in terms of comprehensive omni-modal capabilities. Notably, it achieves results comparable to leading models such as Qwen2-VL-72B across various multimodal medical benchmarks.

Complementary Subspace Low-Rank Adaptation of Vision-Language Models for Few-Shot Classification

Jan 25, 2025

Abstract:Vision language model (VLM) has been designed for large scale image-text alignment as a pretrained foundation model. For downstream few shot classification tasks, parameter efficient fine-tuning (PEFT) VLM has gained much popularity in the computer vision community. PEFT methods like prompt tuning and linear adapter have been studied for fine-tuning VLM while low rank adaptation (LoRA) algorithm has rarely been considered for few shot fine-tuning VLM. The main obstacle to use LoRA for few shot fine-tuning is the catastrophic forgetting problem. Because the visual language alignment knowledge is important for the generality in few shot learning, whereas low rank adaptation interferes with the most informative direction of the pretrained weight matrix. We propose the complementary subspace low rank adaptation (Comp-LoRA) method to regularize the catastrophic forgetting problem in few shot VLM finetuning. In detail, we optimize the low rank matrix in the complementary subspace, thus preserving the general vision language alignment ability of VLM when learning the novel few shot information. We conduct comparison experiments of the proposed Comp-LoRA method and other PEFT methods on fine-tuning VLM for few shot classification. And we also present the suppression on the catastrophic forgetting problem of our proposed method against directly applying LoRA to VLM. The results show that the proposed method surpasses the baseline method by about +1.0\% Top-1 accuracy and preserves the VLM zero-shot performance over the baseline method by about +1.3\% Top-1 accuracy.

Global Semantic-Guided Sub-image Feature Weight Allocation in High-Resolution Large Vision-Language Models

Jan 24, 2025Abstract:As the demand for high-resolution image processing in Large Vision-Language Models (LVLMs) grows, sub-image partitioning has become a popular approach for mitigating visual information loss associated with fixed-resolution processing. However, existing partitioning methods uniformly process sub-images, resulting in suboptimal image understanding. In this work, we reveal that the sub-images with higher semantic relevance to the entire image encapsulate richer visual information for preserving the model's visual understanding ability. Therefore, we propose the Global Semantic-guided Weight Allocator (GSWA) module, which dynamically allocates weights to sub-images based on their relative information density, emulating human visual attention mechanisms. This approach enables the model to focus on more informative regions, overcoming the limitations of uniform treatment. We integrate GSWA into the InternVL2-2B framework to create SleighVL, a lightweight yet high-performing model. Extensive experiments demonstrate that SleighVL outperforms models with comparable parameters and remains competitive with larger models. Our work provides a promising direction for more efficient and contextually aware high-resolution image processing in LVLMs, advancing multimodal system development.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge