Shiwen Mao

Sherman

Rethinking Wireless Communications through Formal Mathematical AI Reasoning

Apr 28, 2026

Abstract:Mathematical analysis has long underpinned wireless communication theory, yet the growing complexity of next-generation systems demands increasingly sophisticated reasoning from domain experts. Recent advances in AI mathematical reasoning, from formal theorem proving to large language model (LLM)-based derivation, offer a promising but largely unexplored path forward. Here we argue that wireless communications is a uniquely structured domain for formal AI reasoning, and propose a three-layer framework of verification, derivation, and discovery to rethink how wireless mathematical knowledge is established.

A Geometric Algebra-informed NeRF Framework for Generalizable Wireless Channel Prediction

Apr 13, 2026

Abstract:In this paper, we propose the geometric algebra-informed neural radiance fields (GAI-NeRF), a novel framework for wireless channel prediction that leverages geometric algebra attention mechanisms to capture ray-object interactions in complex propagation environments. Our approach incorporates global token representations, drawing inspiration from transformer architectures in language and vision domains, to aggregate learned spatial-electromagnetic features and enhance scene understanding. We identify limitations in conventional static ray tracing modules that hinder model generalization and address this challenge through a new ray tracing architecture. This design enables effective generalization across diverse wireless scenarios while maintaining computational efficiency. Experimental results demonstrate that GAI-NeRF achieves superior performance in channel prediction tasks by combining geometric algebra principles with neural scene representations, offering a promising direction for next-generation wireless communication systems. Moreover, GAI-NeRF greatly outperforms existing methods across multiple wireless scenarios. To ensure comprehensive assessment, we further evaluate our approach against multiple benchmarks using newly collected real-world indoor datasets tailored for single-scene downstream tasks and generalization testing, confirming its robust performance in unseen environments and establishing its high efficacy for wireless channel prediction.

Semantic Communication with an LLM-enabled Knowledge Base

Apr 07, 2026

Abstract:Semantic communication (SC) can achieve superior coding and transmission performance based on the knowledge contained in the semantic knowledge base (KB). However, conventional KBs consist of source KBs and channel KBs, whose data are often costly to obtain and limited in scale. Fortunately, large language models (LLMs) have recently emerged with extensive knowledge and generative capabilities. Therefore, this paper proposes an SC system with LLM-enabled knowledge base (SC-LMKB), which utilizes the generation ability of LLMs to significantly enrich the KB of SC systems. In particular, we first design an LLM-enabled generation mechanism with a prompt engineering strategy for source data generation (SDG) and a cross-attention alignment method for channel data generation (CDG). However, hallucinations from LLMs may cause semantic noise, thus degrading SC performance. To mitigate the hallucination issue, a cross-domain fusion codec (CDFC) framework with a hallucination filtering phase and a cross-domain fusion phase is then proposed for SDG. In particular, the first phase filters out new data generated by the LMKB that is irrelevant to the original data based on semantic similarity. Then, a cross-domain fusion phase is proposed, which fuses source data with LLM-generated data based on their semantic importance, thereby enhancing task performance. Besides, a joint training objective that combines cross-entropy loss and reconstruction loss is proposed to reduce the impact of hallucination on CDG. Experimental results on three cross-modality retrieval tasks demonstrate that the proposed SC-LMKB can achieve up to 72.6% and 90.7% performance gains compared to conventional SC systems and LLM-enabled SC systems, respectively.
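
The hallucination filtering phase described in this abstract can be illustrated with a minimal sketch. It assumes that each data item already has an embedding vector and that relevance is measured by cosine similarity against a fixed threshold; the function names, the threshold value, and the choice of cosine similarity are illustrative assumptions, not details taken from the paper.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def filter_generated(original_emb, generated, threshold=0.5):
    """Keep generated items whose embedding is similar enough to the original.

    `generated` is a list of (item, embedding) pairs. The threshold is a
    hypothetical tuning parameter, not a value reported in the abstract.
    """
    return [
        item
        for item, emb in generated
        if cosine_similarity(original_emb, emb) >= threshold
    ]


# Toy usage with hand-made 2-D "embeddings".
candidates = [("a", [0.9, 0.1]), ("b", [0.0, 1.0]), ("c", [-1.0, 0.0])]
kept = filter_generated([1.0, 0.0], candidates)
```

In this toy run only item "a" points in roughly the same direction as the original embedding, so it is the only one retained.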

Low-Complexity Distributed Combining Design for Near-Field Cell-Free XL-MIMO Systems

Feb 03, 2026

Abstract:In this paper, we investigate the low-complexity distributed combining scheme design for near-field cell-free extremely large-scale multiple-input-multiple-output (CF XL-MIMO) systems. Firstly, we construct the uplink spectral efficiency (SE) performance analysis framework for CF XL-MIMO systems over centralized and distributed processing schemes. Notably, we derive the centralized minimum mean-square error (CMMSE) and local minimum mean-square error (LMMSE) combining schemes over arbitrary channel estimators. Then, focusing on the CMMSE and LMMSE combining schemes, we propose five low-complexity distributed combining schemes based on the matrix approximation methodology or the symmetric successive over relaxation (SSOR) algorithm. More specifically, we propose two matrix approximation methodology-aided combining schemes: Global Statistics & Local Instantaneous information-based MMSE (GSLI-MMSE) and Statistics matrix Inversion-based LMMSE (SI-LMMSE). These two schemes are derived by approximating the global instantaneous information in the CMMSE combining and the local instantaneous information in the LMMSE combining with the global and local statistics information by asymptotic analysis and matrix expectation approximation, respectively. Moreover, by applying the low-complexity SSOR algorithm to iteratively solve the matrix inversion in the LMMSE combining, we derive three distributed SSOR-based LMMSE combining schemes, distinguished by the applied information and initial values.
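
As background for the SSOR-based schemes mentioned in this abstract, the following is a minimal sketch of the generic SSOR iteration for a linear system A x = b, which avoids forming an explicit matrix inverse. It uses a small real symmetric positive-definite matrix for readability; the paper's matrices are complex-valued and its initial values differ, so the function name, the relaxation factor `omega`, and the toy data are assumptions for illustration only.

```python
def ssor_solve(A, b, omega=1.0, iters=50, x0=None):
    """Approximately solve A x = b with symmetric successive over-relaxation.

    A is a list of rows of a symmetric positive-definite matrix; the method
    converges for 0 < omega < 2. Each iteration is one forward SOR sweep
    followed by one backward SOR sweep.
    """
    n = len(b)
    x = list(x0) if x0 is not None else [0.0] * n
    for _ in range(iters):
        # Forward sweep: update entries in increasing index order.
        for i in range(n):
            sigma = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x[i] = (1.0 - omega) * x[i] + omega * (b[i] - sigma) / A[i][i]
        # Backward sweep: update entries in decreasing index order.
        for i in reversed(range(n)):
            sigma = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x[i] = (1.0 - omega) * x[i] + omega * (b[i] - sigma) / A[i][i]
    return x


# Toy system whose exact solution is x = [1/11, 7/11].
x = ssor_solve([[4.0, 1.0], [1.0, 3.0]], [1.0, 2.0])
```

A better starting point `x0` reduces the number of sweeps needed, which is consistent with the abstract distinguishing its SSOR-based schemes partly by their initial values.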

A Survey on Reconfigurable Intelligent Surfaces in Practical Systems: Security and Privacy Perspectives

Dec 12, 2025

Abstract:Reconfigurable Intelligent Surfaces (RIS) have emerged as a transformative technology capable of reshaping wireless environments through dynamic manipulation of electromagnetic waves. While extensive research has explored their theoretical benefits for communication and sensing, practical deployments in smart environments such as homes, vehicles, and industrial settings remain limited and under-examined, particularly from security and privacy perspectives. This survey provides a comprehensive examination of RIS applications in real-world systems, with a focus on the security and privacy threats, vulnerabilities, and defensive strategies relevant to practical use. We analyze scenarios with two types of systems (with and without legitimate RIS) and two types of attackers (with and without malicious RIS), and demonstrate how RIS may introduce new attacks to practical systems, including eavesdropping, jamming, and spoofing attacks. In response, we review defenses against RIS-related attacks in these systems, such as applying additional security algorithms, disrupting attackers, and early detection of unauthorized RIS. We also discuss scenarios in which the legitimate user applies an additional RIS to defend against attacks. To support future research, we also provide a collection of open-source tools, datasets, demos, and papers at: https://awesome-ris-security.github.io/. By highlighting RIS's functionality and its security/privacy challenges and opportunities, this survey aims to guide researchers and engineers toward the development of secure, resilient, and privacy-preserving RIS-enabled practical wireless systems and environments.

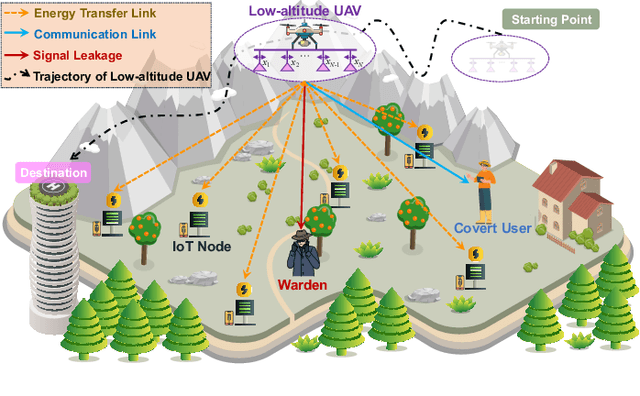

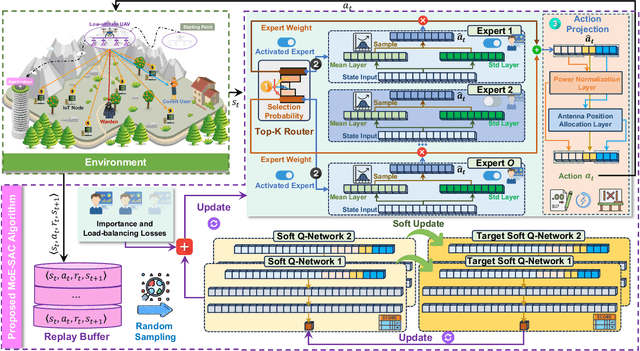

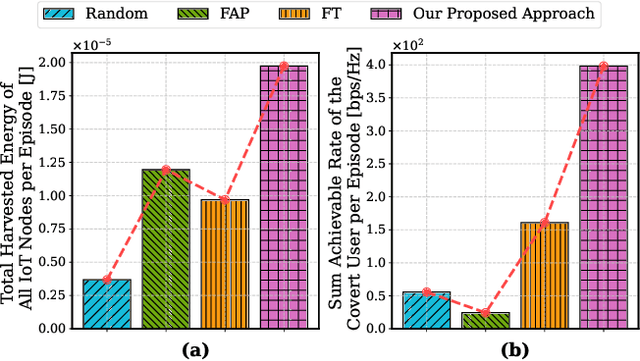

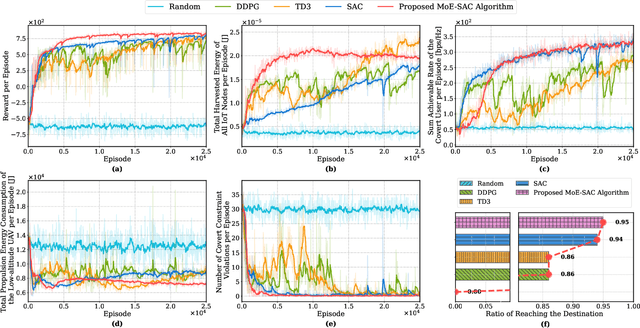

Low-Altitude UAV-Carried Movable Antenna for Joint Wireless Power Transfer and Covert Communications

Oct 30, 2025

Abstract:The proliferation of Internet of Things (IoT) networks has created an urgent need for sustainable energy solutions, particularly for battery-constrained, spatially distributed IoT nodes. While low-altitude uncrewed aerial vehicles (UAVs) equipped with wireless power transfer (WPT) capabilities offer a promising solution, the line-of-sight channels that facilitate efficient energy delivery also expose sensitive operational data to adversaries. This paper proposes a novel low-altitude UAV-carried movable antenna-enhanced transmission system for joint WPT and covert communications, which simultaneously supplies energy to IoT nodes and establishes transmission links with a covert user by leveraging wireless energy signals as a natural cover. Then, we formulate a multi-objective optimization problem that jointly maximizes the total harvested energy of IoT nodes and the sum achievable rate of the covert user, while minimizing the propulsion energy consumption of the low-altitude UAV. To address the non-convex and temporally coupled optimization problem, we propose a mixture-of-experts-augmented soft actor-critic (MoE-SAC) algorithm that employs a sparse Top-K gated mixture-of-shallow-experts architecture to represent multimodal policy distributions arising from the conflicting optimization objectives. We also incorporate an action projection module that explicitly enforces per-time-slot power budget constraints and antenna position constraints. Simulation results demonstrate that the proposed approach significantly outperforms baseline approaches and other state-of-the-art deep reinforcement learning algorithms.
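
The sparse Top-K gating idea named in this abstract can be sketched generically as follows. This is the standard pattern of keeping the K largest gate logits, normalizing them with a softmax, and combining only the selected experts; it is not the MoE-SAC implementation, and the scalar toy experts, function names, and gate logits are hypothetical.

```python
import math


def top_k_gate(logits, k):
    """Return {expert_index: weight} for the k largest logits (softmax over those k)."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_output(x, experts, gate_logits, k=2):
    """Weighted sum of the outputs of the k selected experts; the others are never evaluated."""
    weights = top_k_gate(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Four toy scalar "experts"; only the two with the largest gate logits are used.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
weights = top_k_gate([2.0, 0.0, 2.0, -1.0], k=2)
y = moe_output(1.0, experts, [2.0, 0.0, 2.0, -1.0], k=2)
```

Because unselected experts are skipped entirely, the compute cost per input scales with K rather than with the total number of experts.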

Optimizing Communication and Device Clustering for Clustered Federated Learning with Differential Privacy

Jul 09, 2025

Abstract:In this paper, a secure and communication-efficient clustered federated learning (CFL) design is proposed. In our model, several base stations (BSs) with heterogeneous task-handling capabilities and multiple users with non-independent and identically distributed (non-IID) data jointly perform CFL training incorporating differential privacy (DP) techniques. Since each BS can process only a subset of the learning tasks and has limited wireless resource blocks (RBs) to allocate to users for federated learning (FL) model parameter transmission, it is necessary to jointly optimize RB allocation and user scheduling for CFL performance optimization. Meanwhile, our considered CFL method requires devices to use their limited data and FL model information to determine their task identities, which may introduce additional communication overhead. We formulate an optimization problem whose goal is to minimize the training loss of all learning tasks while considering device clustering, RB allocation, DP noise, and FL model transmission delay. To solve the problem, we propose a novel dynamic penalty function assisted value decomposed multi-agent reinforcement learning (DPVD-MARL) algorithm that enables distributed BSs to independently determine their connected users, RBs, and DP noise of the connected users but jointly minimize the training loss of all learning tasks across all BSs. Different from the existing MARL methods that assign a large penalty for invalid actions, we propose a novel penalty assignment scheme that assigns penalty depending on the number of devices that cannot meet communication constraints (e.g., delay), which can guide the MARL scheme to quickly find valid actions, thus improving the convergence speed. Simulation results show that the DPVD-MARL can improve the convergence rate by up to 20% and the ultimate accumulated rewards by 15% compared to independent Q-learning.
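
The contrast this abstract draws between a single large penalty and a penalty that depends on the number of violating devices can be sketched as below. The function names, the per-device penalty, and the flat penalty value are illustrative assumptions; the abstract does not give the actual penalty formula used by DPVD-MARL.

```python
def flat_penalty(delays, max_delay, large_penalty=100.0):
    """Conventional style: one large penalty if any device violates the delay limit."""
    return -large_penalty if any(d > max_delay for d in delays) else 0.0


def graded_penalty(delays, max_delay, unit_penalty=1.0):
    """Graded style: the penalty grows with the number of devices exceeding the limit."""
    violations = sum(1 for d in delays if d > max_delay)
    return -unit_penalty * violations


# Two actions that are both invalid, but to different degrees.
nearly_valid = [1.0, 2.0, 5.0]   # one device over the limit of 4.0
badly_invalid = [6.0, 7.0, 5.0]  # all three devices over the limit
flat = (flat_penalty(nearly_valid, 4.0), flat_penalty(badly_invalid, 4.0))
graded = (graded_penalty(nearly_valid, 4.0), graded_penalty(badly_invalid, 4.0))
```

The flat scheme scores both actions identically, while the graded scheme ranks the nearly valid action higher, which gives the learner a direction to move in when searching for valid actions.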

From Ground to Sky: Architectures, Applications, and Challenges Shaping Low-Altitude Wireless Networks

Jun 14, 2025

Abstract:In this article, we introduce a novel low-altitude wireless network (LAWN), which is a reconfigurable, three-dimensional (3D) layered architecture. In particular, the LAWN integrates connectivity, sensing, control, and computing across aerial and terrestrial nodes that enable seamless operation in complex, dynamic, and mission-critical environments. Different from the conventional aerial communication systems, LAWN's distinctive feature is its tight integration of functional planes in which multiple functionalities continually reshape themselves to operate safely and efficiently in the low-altitude sky. With the LAWN, we discuss several enabling technologies, such as integrated sensing and communication (ISAC), semantic communication, and fully-actuated control systems. Finally, we identify potential applications and key cross-layer challenges. This article offers a comprehensive roadmap for future research and development in the low-altitude airspace.

LLM-guided DRL for Multi-tier LEO Satellite Networks with Hybrid FSO/RF Links

May 17, 2025

Abstract:Despite significant advancements in terrestrial networks, inherent limitations persist in providing reliable coverage to remote areas and maintaining resilience during natural disasters. Multi-tier networks with low Earth orbit (LEO) satellites and high-altitude platforms (HAPs) offer promising solutions, but face challenges from high mobility and dynamic channel conditions that cause unstable connections and frequent handovers. In this paper, we design a three-tier network architecture that integrates LEO satellites, HAPs, and ground terminals with hybrid free-space optical (FSO) and radio frequency (RF) links to maximize coverage while maintaining connectivity reliability. This hybrid approach leverages the high bandwidth of FSO for satellite-to-HAP links and the weather resilience of RF for HAP-to-ground links. We formulate a joint optimization problem to simultaneously balance downlink transmission rate and handover frequency by optimizing network configuration and satellite handover decisions. The problem is highly dynamic and non-convex with time-coupled constraints. To address these challenges, we propose a novel large language model (LLM)-guided truncated quantile critics algorithm with dynamic action masking (LTQC-DAM) that utilizes dynamic action masking to eliminate unnecessary exploration and employs LLMs to adaptively tune hyperparameters. Simulation results demonstrate that the proposed LTQC-DAM algorithm outperforms baseline algorithms in terms of convergence, downlink transmission rate, and handover frequency. We also reveal that compared to other state-of-the-art LLMs, DeepSeek delivers the best performance through gradual, contextually-aware parameter adjustments.
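
Action masking, as referred to in this abstract, is commonly realized for discrete choices by giving invalid actions zero probability before sampling. The sketch below shows that common pattern only; it assumes a discrete set of candidate actions (for example, candidate satellites) and is not a description of how LTQC-DAM builds or applies its masks. The function name and toy values are hypothetical.

```python
import math


def masked_softmax(logits, valid_mask):
    """Softmax over logits in which masked-out (invalid) actions get probability 0."""
    if not any(valid_mask):
        raise ValueError("at least one action must be valid")
    masked = [l if ok else -math.inf for l, ok in zip(logits, valid_mask)]
    peak = max(masked)  # finite, since at least one action is valid
    exps = [math.exp(l - peak) for l in masked]  # exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]


# The second action has the highest logit but is currently invalid,
# e.g. a candidate that is out of coverage in this time slot.
probs = masked_softmax([1.0, 5.0, 1.0], [True, False, True])
```

Recomputing the mask at every time step is what makes the masking dynamic: the agent never spends exploration on actions that are infeasible in the current state.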

Decentralization of Generative AI via Mixture of Experts for Wireless Networks: A Comprehensive Survey

Apr 28, 2025

Abstract:Mixture of Experts (MoE) has emerged as a promising paradigm for scaling model capacity while preserving computational efficiency, particularly in large-scale machine learning architectures such as large language models (LLMs). Recent advances in MoE have facilitated its adoption in wireless networks to address the increasing complexity and heterogeneity of modern communication systems. This paper presents a comprehensive survey of the MoE framework in wireless networks, highlighting its potential in optimizing resource efficiency, improving scalability, and enhancing adaptability across diverse network tasks. We first introduce the fundamental concepts of MoE, including various gating mechanisms and the integration with generative AI (GenAI) and reinforcement learning (RL). Subsequently, we discuss the extensive applications of MoE across critical wireless communication scenarios, such as vehicular networks, unmanned aerial vehicles (UAVs), satellite communications, heterogeneous networks, integrated sensing and communication (ISAC), and mobile edge networks. Furthermore, key applications in channel prediction, physical layer signal processing, radio resource management, network optimization, and security are thoroughly examined. Additionally, we present a detailed overview of open-source datasets that are widely used in MoE-based models to support diverse machine learning tasks. Finally, this survey identifies crucial future research directions for MoE, emphasizing the importance of advanced training techniques, resource-aware gating strategies, and deeper integration with emerging 6G technologies.
