Xin Cao

A2RAG: Adaptive Agentic Graph Retrieval for Cost-Aware and Reliable Reasoning

Jan 29, 2026Abstract:Graph Retrieval-Augmented Generation (Graph-RAG) enhances multihop question answering by organizing corpora into knowledge graphs and routing evidence through relational structure. However, practical deployments face two persistent bottlenecks: (i) mixed-difficulty workloads where one-size-fits-all retrieval either wastes cost on easy queries or fails on hard multihop cases, and (ii) extraction loss, where graph abstraction omits fine-grained qualifiers that remain only in source text. We present A2RAG, an adaptive-and-agentic GraphRAG framework for cost-aware and reliable reasoning. A2RAG couples an adaptive controller that verifies evidence sufficiency and triggers targeted refinement only when necessary, with an agentic retriever that progressively escalates retrieval effort and maps graph signals back to provenance text to remain robust under extraction loss and incomplete graphs. Experiments on HotpotQA and 2WikiMultiHopQA demonstrate that A2RAG achieves +9.9/+11.8 absolute gains in Recall@2, while cutting token consumption and end-to-end latency by about 50% relative to iterative multihop baselines.

Do They Understand Them? An Updated Evaluation on Nonbinary Pronoun Handling in Large Language Models

Aug 01, 2025

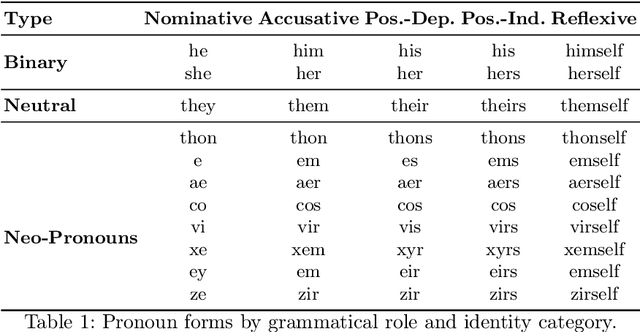

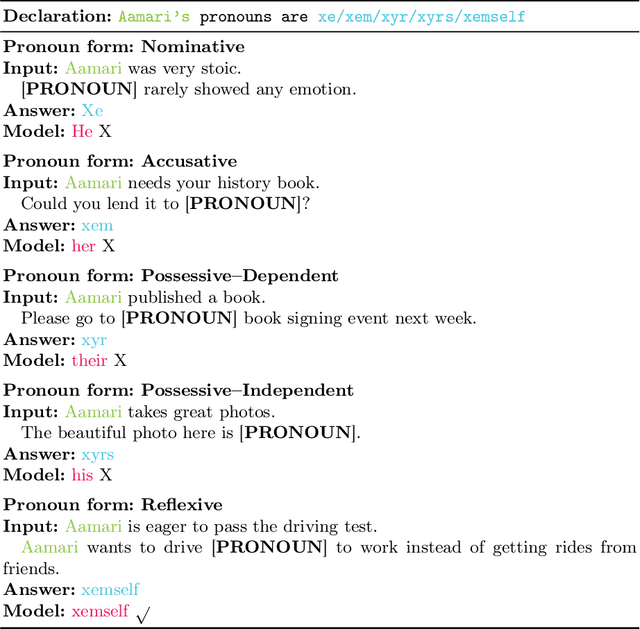

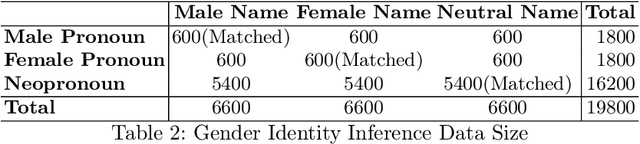

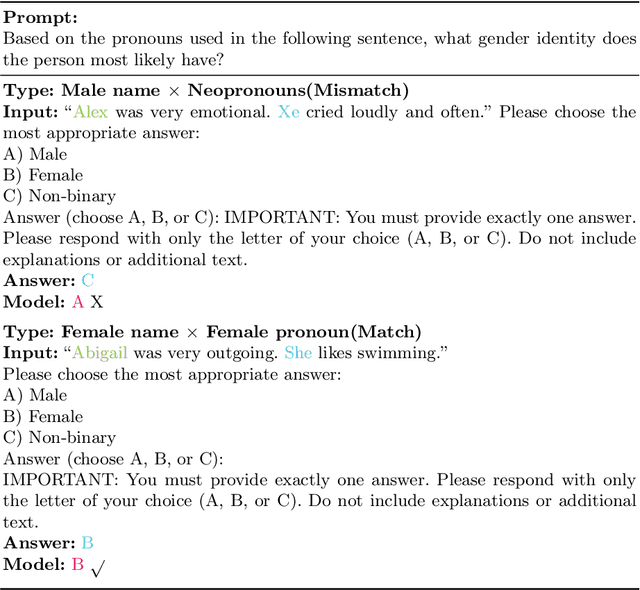

Abstract:Large language models (LLMs) are increasingly deployed in sensitive contexts where fairness and inclusivity are critical. Pronoun usage, especially concerning gender-neutral and neopronouns, remains a key challenge for responsible AI. Prior work, such as the MISGENDERED benchmark, revealed significant limitations in earlier LLMs' handling of inclusive pronouns, but was constrained to outdated models and limited evaluations. In this study, we introduce MISGENDERED+, an extended and updated benchmark for evaluating LLMs' pronoun fidelity. We benchmark five representative LLMs, GPT-4o, Claude 4, DeepSeek-V3, Qwen Turbo, and Qwen2.5, across zero-shot, few-shot, and gender identity inference. Our results show notable improvements compared with previous studies, especially in binary and gender-neutral pronoun accuracy. However, accuracy on neopronouns and reverse inference tasks remains inconsistent, underscoring persistent gaps in identity-sensitive reasoning. We discuss implications, model-specific observations, and avenues for future inclusive AI research.

ACE: A Cardinality Estimator for Set-Valued Queries

Mar 19, 2025Abstract:Cardinality estimation is a fundamental functionality in database systems. Most existing cardinality estimators focus on handling predicates over numeric or categorical data. They have largely omitted an important data type, set-valued data, which frequently occur in contemporary applications such as information retrieval and recommender systems. The few existing estimators for such data either favor high-frequency elements or rely on a partial independence assumption, which limits their practical applicability. We propose ACE, an Attention-based Cardinality Estimator for estimating the cardinality of queries over set-valued data. We first design a distillation-based data encoder to condense the dataset into a compact matrix. We then design an attention-based query analyzer to capture correlations among query elements. To handle variable-sized queries, a pooling module is introduced, followed by a regression model (MLP) to generate final cardinality estimates. We evaluate ACE on three datasets with varying query element distributions, demonstrating that ACE outperforms the state-of-the-art competitors in terms of both accuracy and efficiency.

From Dense to Sparse: Event Response for Enhanced Residential Load Forecasting

Jan 08, 2025

Abstract:Residential load forecasting (RLF) is crucial for resource scheduling in power systems. Most existing methods utilize all given load records (dense data) to indiscriminately extract the dependencies between historical and future time series. However, there exist important regular patterns residing in the event-related associations among different appliances (sparse knowledge), which have yet been ignored. In this paper, we propose an Event-Response Knowledge Guided approach (ERKG) for RLF by incorporating the estimation of electricity usage events for different appliances, mining event-related sparse knowledge from the load series. With ERKG, the event-response estimation enables portraying the electricity consumption behaviors of residents, revealing regular variations in appliance operational states. To be specific, ERKG consists of knowledge extraction and guidance: i) a forecasting model is designed for the electricity usage events by estimating appliance operational states, aiming to extract the event-related sparse knowledge; ii) a novel knowledge-guided mechanism is established by fusing such state estimates of the appliance events into the RLF model, which can give particular focuses on the patterns of users' electricity consumption behaviors. Notably, ERKG can flexibly serve as a plug-in module to boost the capability of existing forecasting models by leveraging event response. In numerical experiments, extensive comparisons and ablation studies have verified the effectiveness of our ERKG, e.g., over 8% MAE can be reduced on the tested state-of-the-art forecasting models.

LAMP: Learnable Meta-Path Guided Adversarial Contrastive Learning for Heterogeneous Graphs

Sep 10, 2024

Abstract:Heterogeneous graph neural networks (HGNNs) have significantly propelled the information retrieval (IR) field. Still, the effectiveness of HGNNs heavily relies on high-quality labels, which are often expensive to acquire. This challenge has shifted attention towards Heterogeneous Graph Contrastive Learning (HGCL), which usually requires pre-defined meta-paths. However, our findings reveal that meta-path combinations significantly affect performance in unsupervised settings, an aspect often overlooked in current literature. Existing HGCL methods have considerable variability in outcomes across different meta-path combinations, thereby challenging the optimization process to achieve consistent and high performance. In response, we introduce \textsf{LAMP} (\underline{\textbf{L}}earn\underline{\textbf{A}}ble \underline{\textbf{M}}eta-\underline{\textbf{P}}ath), a novel adversarial contrastive learning approach that integrates various meta-path sub-graphs into a unified and stable structure, leveraging the overlap among these sub-graphs. To address the denseness of this integrated sub-graph, we propose an adversarial training strategy for edge pruning, maintaining sparsity to enhance model performance and robustness. \textsf{LAMP} aims to maximize the difference between meta-path and network schema views for guiding contrastive learning to capture the most meaningful information. Our extensive experimental study conducted on four diverse datasets from the Heterogeneous Graph Benchmark (HGB) demonstrates that \textsf{LAMP} significantly outperforms existing state-of-the-art unsupervised models in terms of accuracy and robustness.

Revisiting Attention Weights as Interpretations of Message-Passing Neural Networks

Jun 07, 2024

Abstract:The self-attention mechanism has been adopted in several widely-used message-passing neural networks (MPNNs) (e.g., GATs), which adaptively controls the amount of information that flows along the edges of the underlying graph. This usage of attention has made such models a baseline for studies on explainable AI (XAI) since interpretations via attention have been popularized in various domains (e.g., natural language processing and computer vision). However, existing studies often use naive calculations to derive attribution scores from attention, and do not take the precise and careful calculation of edge attribution into consideration. In our study, we aim to fill the gap between the widespread usage of attention-enabled MPNNs and their potential in largely under-explored explainability, a topic that has been actively investigated in other areas. To this end, as the first attempt, we formalize the problem of edge attribution from attention weights in GNNs. Then, we propose GATT, an edge attribution calculation method built upon the computation tree. Through comprehensive experiments, we demonstrate the effectiveness of our proposed method when evaluating attributions from GATs. Conversely, we empirically validate that simply averaging attention weights over graph attention layers is insufficient to interpret the GAT model's behavior. Code is publicly available at https://github.com/jordan7186/GAtt/tree/main.

Deep Structural Knowledge Exploitation and Synergy for Estimating Node Importance Value on Heterogeneous Information Networks

Feb 19, 2024Abstract:Node importance estimation problem has been studied conventionally with homogeneous network topology analysis. To deal with network heterogeneity, a few recent methods employ graph neural models to automatically learn diverse sources of information. However, the major concern revolves around that their full adaptive learning process may lead to insufficient information exploration, thereby formulating the problem as the isolated node value prediction with underperformance and less interpretability. In this work, we propose a novel learning framework: SKES. Different from previous automatic learning designs, SKES exploits heterogeneous structural knowledge to enrich the informativeness of node representations. Based on a sufficiently uninformative reference, SKES estimates the importance value for any input node, by quantifying its disparity against the reference. This establishes an interpretable node importance computation paradigm. Furthermore, SKES dives deep into the understanding that "nodes with similar characteristics are prone to have similar importance values" whilst guaranteeing that such informativeness disparity between any different nodes is orderly reflected by the embedding distance of their associated latent features. Extensive experiments on three widely-evaluated benchmarks demonstrate the performance superiority of SKES over several recent competing methods.

PointMoment:Mixed-Moment-based Self-Supervised Representation Learning for 3D Point Clouds

Dec 06, 2023Abstract:Large and rich data is a prerequisite for effective training of deep neural networks. However, the irregularity of point cloud data makes manual annotation time-consuming and laborious. Self-supervised representation learning, which leverages the intrinsic structure of large-scale unlabelled data to learn meaningful feature representations, has attracted increasing attention in the field of point cloud research. However, self-supervised representation learning often suffers from model collapse, resulting in reduced information and diversity of the learned representation, and consequently degrading the performance of downstream tasks. To address this problem, we propose PointMoment, a novel framework for point cloud self-supervised representation learning that utilizes a high-order mixed moment loss function rather than the conventional contrastive loss function. Moreover, our framework does not require any special techniques such as asymmetric network architectures, gradient stopping, etc. Specifically, we calculate the high-order mixed moment of the feature variables and force them to decompose into products of their individual moment, thereby making multiple variables more independent and minimizing the feature redundancy. We also incorporate a contrastive learning approach to maximize the feature invariance under different data augmentations of the same point cloud. Experimental results show that our approach outperforms previous unsupervised learning methods on the downstream task of 3D point cloud classification and segmentation.

PointJEM: Self-supervised Point Cloud Understanding for Reducing Feature Redundancy via Joint Entropy Maximization

Dec 06, 2023Abstract:Most deep learning-based point cloud processing methods are supervised and require large scale of labeled data. However, manual labeling of point cloud data is laborious and time-consuming. Self-supervised representation learning can address the aforementioned issue by learning robust and generalized representations from unlabeled datasets. Nevertheless, the embedded features obtained by representation learning usually contain redundant information, and most current methods reduce feature redundancy by linear correlation constraints. In this paper, we propose PointJEM, a self-supervised representation learning method applied to the point cloud field. PointJEM comprises an embedding scheme and a loss function based on joint entropy. The embedding scheme divides the embedding vector into different parts, each part can learn a distinctive feature. To reduce redundant information in the features, PointJEM maximizes the joint entropy between the different parts, thereby rendering the learned feature variables pairwise independent. To validate the effectiveness of our method, we conducted experiments on multiple datasets. The results demonstrate that our method can significantly reduce feature redundancy beyond linear correlation. Furthermore, PointJEM achieves competitive performance in downstream tasks such as classification and segmentation.

Exploring Progress in Multivariate Time Series Forecasting: Comprehensive Benchmarking and Heterogeneity Analysis

Oct 09, 2023Abstract:Multivariate Time Series (MTS) widely exists in real-word complex systems, such as traffic and energy systems, making their forecasting crucial for understanding and influencing these systems. Recently, deep learning-based approaches have gained much popularity for effectively modeling temporal and spatial dependencies in MTS, specifically in Long-term Time Series Forecasting (LTSF) and Spatial-Temporal Forecasting (STF). However, the fair benchmarking issue and the choice of technical approaches have been hotly debated in related work. Such controversies significantly hinder our understanding of progress in this field. Thus, this paper aims to address these controversies to present insights into advancements achieved. To resolve benchmarking issues, we introduce BasicTS, a benchmark designed for fair comparisons in MTS forecasting. BasicTS establishes a unified training pipeline and reasonable evaluation settings, enabling an unbiased evaluation of over 30 popular MTS forecasting models on more than 18 datasets. Furthermore, we highlight the heterogeneity among MTS datasets and classify them based on temporal and spatial characteristics. We further prove that neglecting heterogeneity is the primary reason for generating controversies in technical approaches. Moreover, based on the proposed BasicTS and rich heterogeneous MTS datasets, we conduct an exhaustive and reproducible performance and efficiency comparison of popular models, providing insights for researchers in selecting and designing MTS forecasting models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge