Wai Lam

A Survey on Proactive Dialogue Systems: Problems, Methods, and Prospects

May 09, 2023Abstract:Proactive dialogue systems, related to a wide range of real-world conversational applications, equip the conversational agent with the capability of leading the conversation direction towards achieving pre-defined targets or fulfilling certain goals from the system side. It is empowered by advanced techniques to progress to more complicated tasks that require strategical and motivational interactions. In this survey, we provide a comprehensive overview of the prominent problems and advanced designs for conversational agent's proactivity in different types of dialogues. Furthermore, we discuss challenges that meet the real-world application needs but require a greater research focus in the future. We hope that this first survey of proactive dialogue systems can provide the community with a quick access and an overall picture to this practical problem, and stimulate more progresses on conversational AI to the next level.

Decoder-Only or Encoder-Decoder? Interpreting Language Model as a Regularized Encoder-Decoder

Apr 08, 2023

Abstract:The sequence-to-sequence (seq2seq) task aims at generating the target sequence based on the given input source sequence. Traditionally, most of the seq2seq task is resolved by the Encoder-Decoder framework which requires an encoder to encode the source sequence and a decoder to generate the target text. Recently, a bunch of new approaches have emerged that apply decoder-only language models directly to the seq2seq task. Despite the significant advancements in applying language models to the seq2seq task, there is still a lack of thorough analysis on the effectiveness of the decoder-only language model architecture. This paper aims to address this gap by conducting a detailed comparison between the encoder-decoder architecture and the decoder-only language model framework through the analysis of a regularized encoder-decoder structure. This structure is designed to replicate all behaviors in the classical decoder-only language model but has an encoder and a decoder making it easier to be compared with the classical encoder-decoder structure. Based on the analysis, we unveil the attention degeneration problem in the language model, namely, as the generation step number grows, less and less attention is focused on the source sequence. To give a quantitative understanding of this problem, we conduct a theoretical sensitivity analysis of the attention output with respect to the source input. Grounded on our analysis, we propose a novel partial attention language model to solve the attention degeneration problem. Experimental results on machine translation, summarization, and data-to-text generation tasks support our analysis and demonstrate the effectiveness of our proposed model.

Product Question Answering in E-Commerce: A Survey

Feb 16, 2023Abstract:Product question answering (PQA), aiming to automatically provide instant responses to customer's questions in E-Commerce platforms, has drawn increasing attention in recent years. Compared with typical QA problems, PQA exhibits unique challenges such as the subjectivity and reliability of user-generated contents in E-commerce platforms. Therefore, various problem settings and novel methods have been proposed to capture these special characteristics. In this paper, we aim to systematically review existing research efforts on PQA. Specifically, we categorize PQA studies into four problem settings in terms of the form of provided answers. We analyze the pros and cons, as well as present existing datasets and evaluation protocols for each setting. We further summarize the most significant challenges that characterize PQA from general QA applications and discuss their corresponding solutions. Finally, we conclude this paper by providing the prospect on several future directions.

TRIP: Triangular Document-level Pre-training for Multilingual Language Models

Dec 15, 2022

Abstract:Despite the current success of multilingual pre-training, most prior works focus on leveraging monolingual data or bilingual parallel data and overlooked the value of trilingual parallel data. This paper presents \textbf{Tri}angular Document-level \textbf{P}re-training (\textbf{TRIP}), which is the first in the field to extend the conventional monolingual and bilingual pre-training to a trilingual setting by (i) \textbf{Grafting} the same documents in two languages into one mixed document, and (ii) predicting the remaining one language as the reference translation. Our experiments on document-level MT and cross-lingual abstractive summarization show that TRIP brings by up to 3.65 d-BLEU points and 6.2 ROUGE-L points on three multilingual document-level machine translation benchmarks and one cross-lingual abstractive summarization benchmark, including multiple strong state-of-the-art (SOTA) scores. In-depth analysis indicates that TRIP improves document-level machine translation and captures better document contexts in at least three characteristics: (i) tense consistency, (ii) noun consistency and (iii) conjunction presence.

From Clozing to Comprehending: Retrofitting Pre-trained Language Model to Pre-trained Machine Reader

Dec 09, 2022Abstract:We present Pre-trained Machine Reader (PMR), a novel method to retrofit Pre-trained Language Models (PLMs) into Machine Reading Comprehension (MRC) models without acquiring labeled data. PMR is capable of resolving the discrepancy between model pre-training and downstream fine-tuning of existing PLMs, and provides a unified solver for tackling various extraction tasks. To achieve this, we construct a large volume of general-purpose and high-quality MRC-style training data with the help of Wikipedia hyperlinks and design a Wiki Anchor Extraction task to guide the MRC-style pre-training process. Although conceptually simple, PMR is particularly effective in solving extraction tasks including Extractive Question Answering and Named Entity Recognition, where it shows tremendous improvements over previous approaches especially under low-resource settings. Moreover, viewing sequence classification task as a special case of extraction task in our MRC formulation, PMR is even capable to extract high-quality rationales to explain the classification process, providing more explainability of the predictions.

On the Effectiveness of Parameter-Efficient Fine-Tuning

Nov 28, 2022

Abstract:Fine-tuning pre-trained models has been ubiquitously proven to be effective in a wide range of NLP tasks. However, fine-tuning the whole model is parameter inefficient as it always yields an entirely new model for each task. Currently, many research works propose to only fine-tune a small portion of the parameters while keeping most of the parameters shared across different tasks. These methods achieve surprisingly good performance and are shown to be more stable than their corresponding fully fine-tuned counterparts. However, such kind of methods is still not well understood. Some natural questions arise: How does the parameter sparsity lead to promising performance? Why is the model more stable than the fully fine-tuned models? How to choose the tunable parameters? In this paper, we first categorize the existing methods into random approaches, rule-based approaches, and projection-based approaches based on how they choose which parameters to tune. Then, we show that all of the methods are actually sparse fine-tuned models and conduct a novel theoretical analysis of them. We indicate that the sparsity is actually imposing a regularization on the original model by controlling the upper bound of the stability. Such stability leads to better generalization capability which has been empirically observed in a lot of recent research works. Despite the effectiveness of sparsity grounded by our theory, it still remains an open problem of how to choose the tunable parameters. To better choose the tunable parameters, we propose a novel Second-order Approximation Method (SAM) which approximates the original problem with an analytically solvable optimization function. The tunable parameters are determined by directly optimizing the approximation function. The experimental results show that our proposed SAM model outperforms many strong baseline models and it also verifies our theoretical analysis.

McQueen: a Benchmark for Multimodal Conversational Query Rewrite

Oct 23, 2022

Abstract:The task of query rewrite aims to convert an in-context query to its fully-specified version where ellipsis and coreference are completed and referred-back according to the history context. Although much progress has been made, less efforts have been paid to real scenario conversations that involve drawing information from more than one modalities. In this paper, we propose the task of multimodal conversational query rewrite (McQR), which performs query rewrite under the multimodal visual conversation setting. We collect a large-scale dataset named McQueen based on manual annotation, which contains 15k visual conversations and over 80k queries where each one is associated with a fully-specified rewrite version. In addition, for entities appearing in the rewrite, we provide the corresponding image box annotation. We then use the McQueen dataset to benchmark a state-of-the-art method for effectively tackling the McQR task, which is based on a multimodal pre-trained model with pointer generator. Extensive experiments are performed to demonstrate the effectiveness of our model on this task\footnote{The dataset and code of this paper are both available in \url{https://github.com/yfyuan01/MQR}

Towards Generalizable and Robust Text-to-SQL Parsing

Oct 23, 2022

Abstract:Text-to-SQL parsing tackles the problem of mapping natural language questions to executable SQL queries. In practice, text-to-SQL parsers often encounter various challenging scenarios, requiring them to be generalizable and robust. While most existing work addresses a particular generalization or robustness challenge, we aim to study it in a more comprehensive manner. In specific, we believe that text-to-SQL parsers should be (1) generalizable at three levels of generalization, namely i.i.d., zero-shot, and compositional, and (2) robust against input perturbations. To enhance these capabilities of the parser, we propose a novel TKK framework consisting of Task decomposition, Knowledge acquisition, and Knowledge composition to learn text-to-SQL parsing in stages. By dividing the learning process into multiple stages, our framework improves the parser's ability to acquire general SQL knowledge instead of capturing spurious patterns, making it more generalizable and robust. Experimental results under various generalization and robustness settings show that our framework is effective in all scenarios and achieves state-of-the-art performance on the Spider, SParC, and CoSQL datasets. Code can be found at https://github.com/AlibabaResearch/DAMO-ConvAI/tree/main/tkk.

Retrofitting Multilingual Sentence Embeddings with Abstract Meaning Representation

Oct 18, 2022

Abstract:We introduce a new method to improve existing multilingual sentence embeddings with Abstract Meaning Representation (AMR). Compared with the original textual input, AMR is a structured semantic representation that presents the core concepts and relations in a sentence explicitly and unambiguously. It also helps reduce surface variations across different expressions and languages. Unlike most prior work that only evaluates the ability to measure semantic similarity, we present a thorough evaluation of existing multilingual sentence embeddings and our improved versions, which include a collection of five transfer tasks in different downstream applications. Experiment results show that retrofitting multilingual sentence embeddings with AMR leads to better state-of-the-art performance on both semantic textual similarity and transfer tasks. Our codebase and evaluation scripts can be found at \url{https://github.com/jcyk/MSE-AMR}.

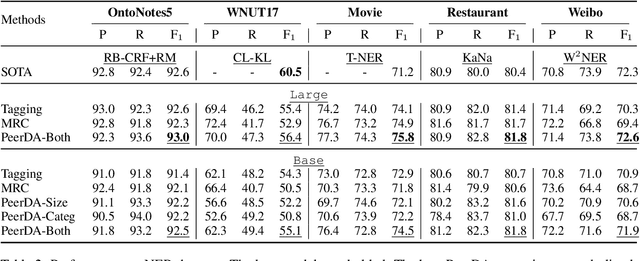

PeerDA: Data Augmentation via Modeling Peer Relation for Span Identification Tasks

Oct 17, 2022

Abstract:Span Identification (SpanID) is a family of NLP tasks that aims to detect and classify text spans. Different from previous works that merely leverage Subordinate (\textsc{Sub}) relation about \textit{if a span is an instance of a certain category} to train SpanID models, we explore Peer (\textsc{Pr}) relation, which indicates that \textit{the two spans are two different instances from the same category sharing similar features}, and propose a novel \textbf{Peer} \textbf{D}ata \textbf{A}ugmentation (PeerDA) approach to treat span-span pairs with the \textsc{Pr} relation as a kind of augmented training data. PeerDA has two unique advantages: (1) There are a large number of span-span pairs with the \textsc{Pr} relation for augmenting the training data. (2) The augmented data can prevent over-fitting to the superficial span-category mapping by pushing SpanID models to leverage more on spans' semantics. Experimental results on ten datasets over four diverse SpanID tasks across seven domains demonstrate the effectiveness of PeerDA. Notably, seven of them achieve state-of-the-art results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge