Vijay Kumar

Beyond Robustness: A Taxonomy of Approaches towards Resilient Multi-Robot Systems

Sep 25, 2021

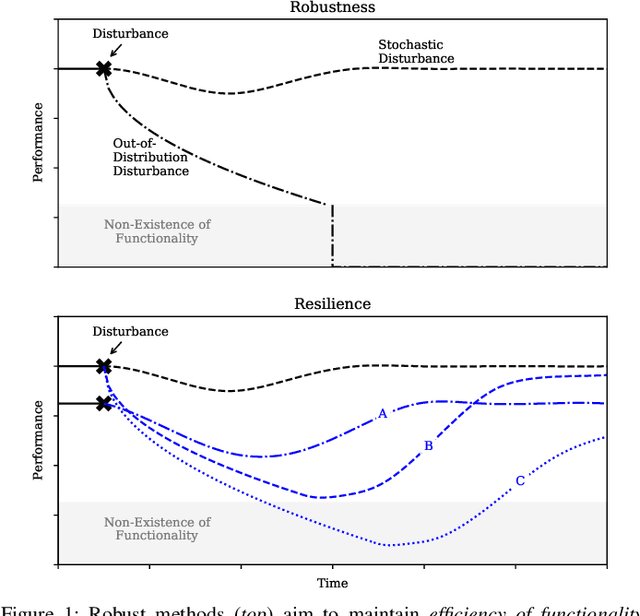

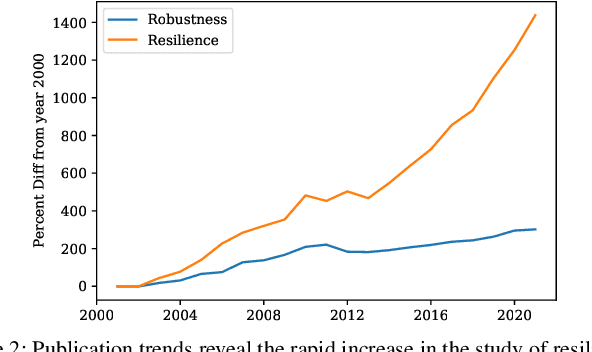

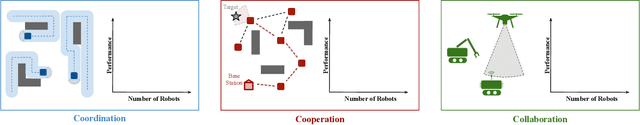

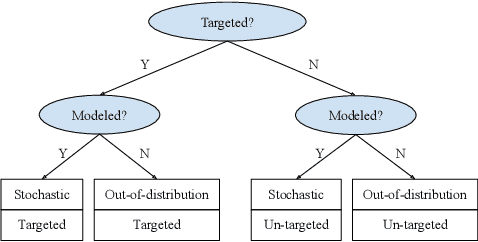

Abstract: Robustness is key to engineering, automation, and science as a whole. However, the property of robustness is often underpinned by costly requirements such as over-provisioning, known uncertainty and predictive models, and known adversaries. These conditions are idealistic, and often not satisfiable. Resilience on the other hand is the capability to endure unexpected disruptions, to recover swiftly from negative events, and bounce back to normality. In this survey article, we analyze how resilience is achieved in networks of agents and multi-robot systems that are able to overcome adversity by leveraging system-wide complementarity, diversity, and redundancy - often involving a reconfiguration of robotic capabilities to provide some key ability that was not present in the system a priori. As society increasingly depends on connected automated systems to provide key infrastructure services (e.g., logistics, transport, and precision agriculture), providing the means to achieve resilient multi-robot systems is paramount. By enumerating the consequences of a system that is not resilient (fragile), we argue that resilience must become a central engineering design consideration. Towards this goal, the community needs to gain clarity on how it is defined, measured, and maintained. We address these questions across foundational robotics domains, spanning perception, control, planning, and learning. One of our key contributions is a formal taxonomy of approaches, which also helps us discuss the defining factors and stressors for a resilient system. Finally, this survey article gives insight into how resilience may be achieved. Importantly, we highlight open problems that remain to be tackled in order to reap the benefits of resilient robotic systems.

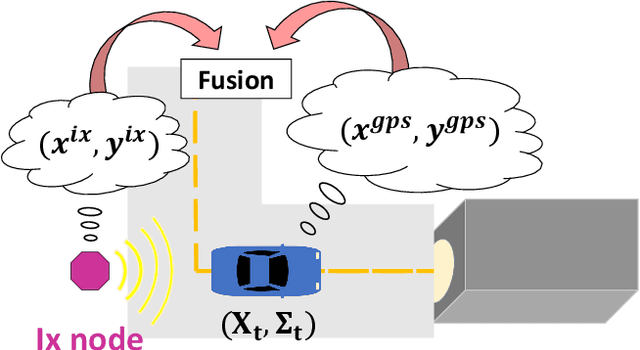

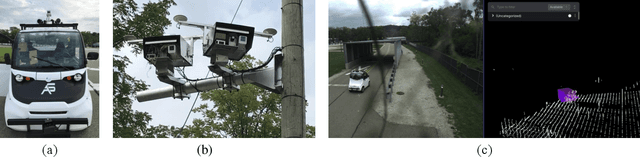

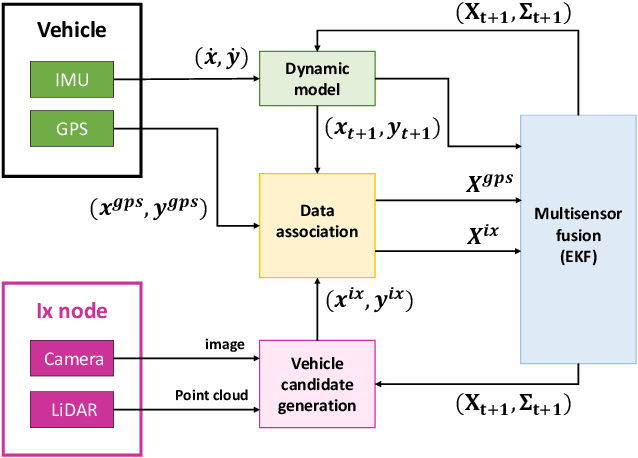

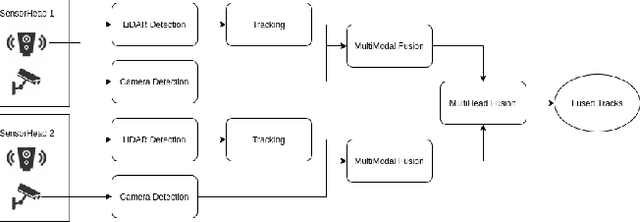

Infrastructure Node-based Vehicle Localization for Autonomous Driving

Sep 21, 2021

Abstract: Vehicle localization is essential for autonomous vehicle (AV) navigation and Advanced Driver Assistance Systems (ADAS). Accurate vehicle localization is often achieved via expensive inertial navigation systems or by employing compute-intensive vision processing (LiDAR/camera) to augment the low-cost and noisy inertial sensors. Here we have developed a framework for fusing the information obtained from a smart infrastructure node (ix-node) with the autonomous vehicle's on-board localization engine to obtain a robust and accurate pose estimate of the ego-vehicle even with cheap inertial sensors. A smart ix-node is typically used to augment the perception capability of an autonomous vehicle, especially when the onboard perception sensors of AVs are blocked by dynamic and static objects in the environment, rendering them ineffectual. In this work, we utilize this perception output from an ix-node to increase the localization accuracy of the AV. The fusion of ix-node perception output with the vehicle's low-cost inertial sensors allows us to perform reliable vehicle localization without relying on expensive inertial navigation systems or compute-intensive vision processing onboard the AVs. The proposed approach has been tested on real-world datasets collected from a test track in Ann Arbor, Michigan. Detailed analysis of the experimental results shows that incorporating ix-node data improves localization performance.

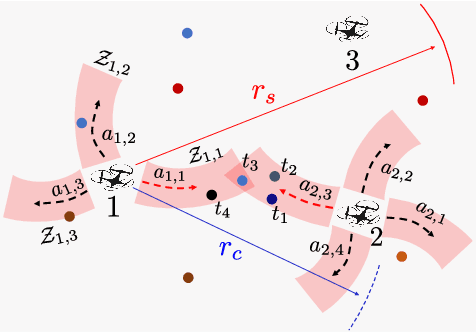

Robust Multi-Robot Active Target Tracking Against Sensing and Communication Attacks

Sep 20, 2021

Abstract: The problem of multi-robot target tracking asks for actively planning the joint motion of robots to track targets. In this paper, we focus on such target tracking problems in adversarial environments, where attacks or failures may deactivate robots' sensors and communications. In contrast to the previous works that consider no attacks or sensing attacks only, we formalize the first robust multi-robot tracking framework that accounts for any fixed number of worst-case sensing and communication attacks. To secure against such attacks, we design the first robust planning algorithm, named Robust Active Target Tracking (RATT), which approximates the communication attacks as equivalent sensing attacks and then optimizes against the approximated and original sensing attacks. We show that RATT provides provable suboptimality bounds on the tracking quality for any non-decreasing objective function. Our analysis utilizes the notions of curvature for set functions introduced in combinatorial optimization. In addition, RATT runs in polynomial time and terminates with the same running time as state-of-the-art algorithms for (non-robust) target tracking. Finally, we evaluate RATT with both qualitative and quantitative simulations across various scenarios. In the evaluations, RATT exhibits a tracking quality that is near-optimal and superior to various non-robust heuristics. We also demonstrate RATT's superiority and robustness against varying attack models (e.g., worst-case and bounded rational attacks).

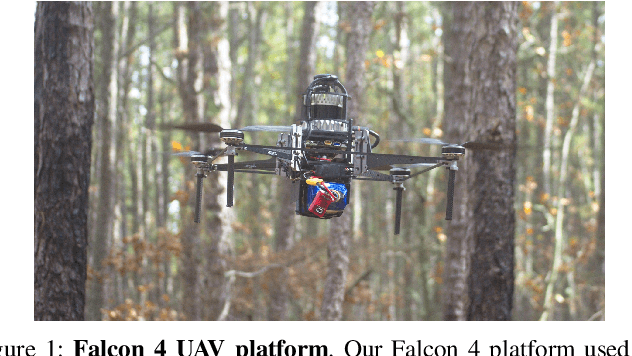

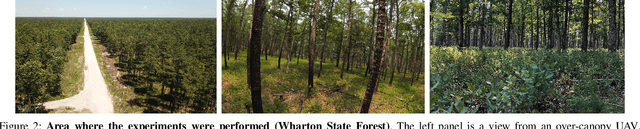

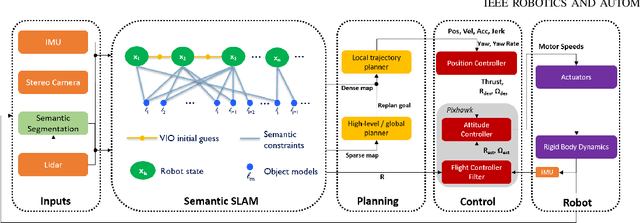

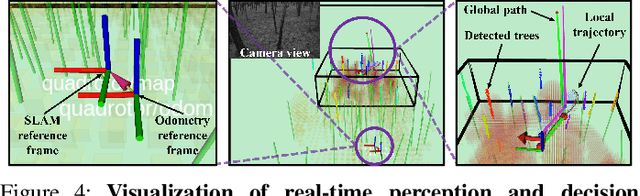

Large-scale Autonomous Flight with Real-time Semantic SLAM under Dense Forest Canopy

Sep 19, 2021

Abstract: In this letter, we propose an integrated autonomous flight and semantic SLAM system that can perform long-range missions and real-time semantic mapping in highly cluttered, unstructured, and GPS-denied under-canopy environments. First, tree trunks and ground planes are detected from LIDAR scans. We use a neural network and an instance extraction algorithm to enable semantic segmentation in real time onboard the UAV. Second, detected tree trunk instances are modeled as cylinders and associated across the whole LIDAR sequence. This semantic data association constrains both robot poses and trunk landmark models. The output of semantic SLAM is used in state estimation, planning, and control algorithms in real time. The global planner relies on a sparse map to plan the shortest path to the global goal, and the local trajectory planner uses a small but finely discretized robot-centric map to plan a dynamically feasible and collision-free trajectory to the local goal. Both the global path and local trajectory lead to drift-corrected goals, thus helping the UAV execute its mission accurately and safely.

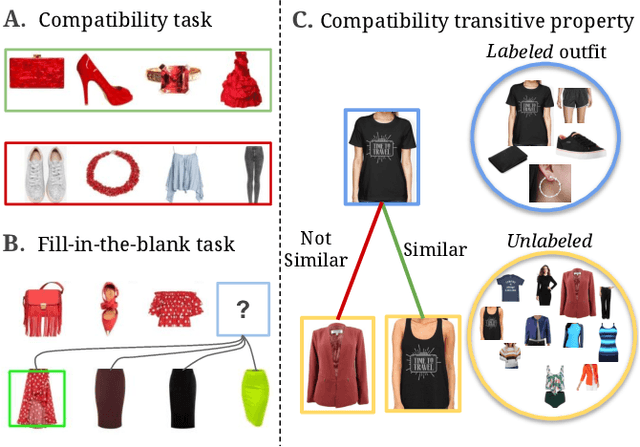

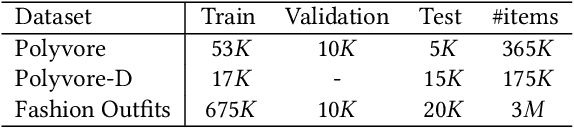

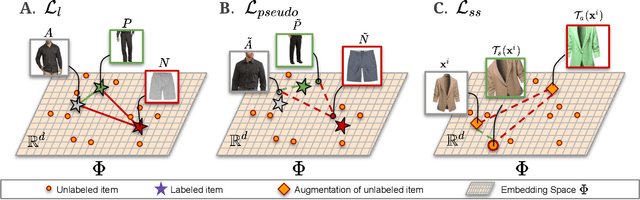

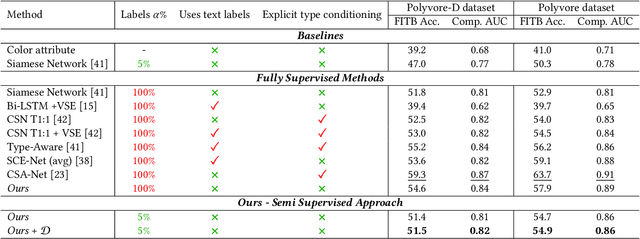

Semi-Supervised Visual Representation Learning for Fashion Compatibility

Sep 16, 2021

Abstract: We consider the problem of complementary fashion prediction. Existing approaches focus on learning an embedding space where fashion items from different categories that are visually compatible are closer to each other. However, creating such labeled outfits is labor-intensive, and it is not feasible to generate all possible outfit combinations, especially with large fashion catalogs. In this work, we propose a semi-supervised learning approach where we leverage a large unlabeled fashion corpus to create pseudo-positive and pseudo-negative outfits on the fly during training. For each labeled outfit in a training batch, we obtain a pseudo-outfit by matching each item in the labeled outfit with unlabeled items. Additionally, we introduce consistency regularization to ensure that the representations of the original images and their transformations are consistent, implicitly incorporating colour and other important attributes through self-supervision. We conduct extensive experiments on Polyvore, Polyvore-D and our newly created large-scale Fashion Outfits datasets, and show that our approach with only a fraction of labeled examples performs on par with completely supervised methods.

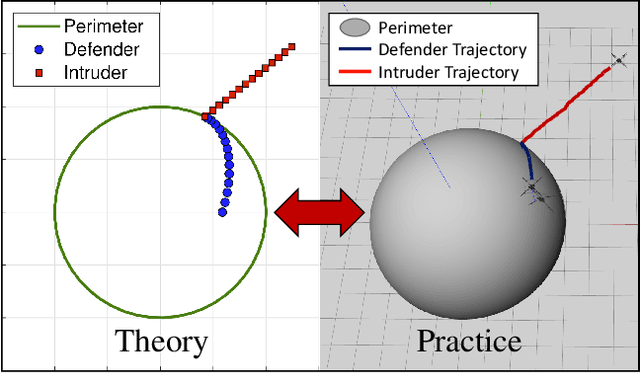

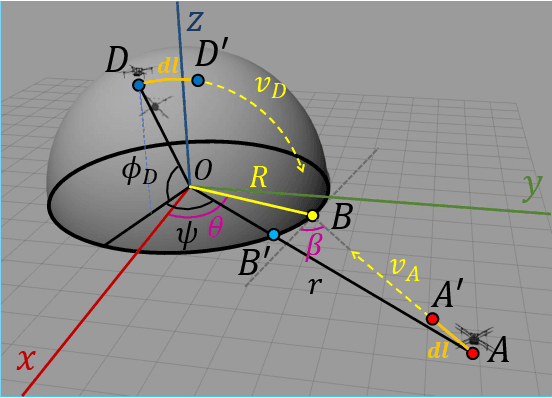

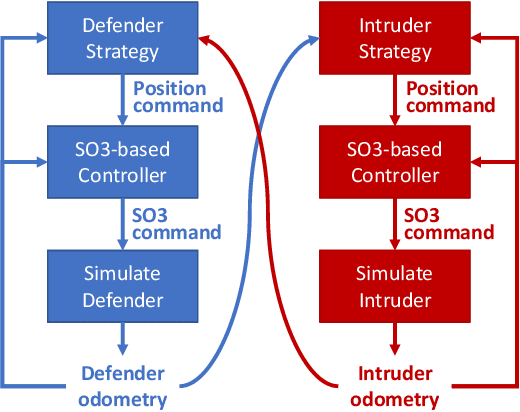

Defending a Perimeter from a Ground Intruder Using an Aerial Defender: Theory and Practice

Sep 07, 2021

Abstract: The perimeter defense game has received interest in recent years as a variant of the pursuit-evasion game. A number of previous works have solved this game to obtain the optimal strategies for defender and intruder, but the derived theory considers the players as point particles with first-order assumptions. In this work, we aim to apply the theory derived from the perimeter defense problem to robots with realistic models of actuation and sensing and observe the performance discrepancy that results from relaxing the first-order assumptions. In particular, we focus on the hemisphere perimeter defense problem where a ground intruder tries to reach the base of a hemisphere while an aerial defender constrained to move on the hemisphere aims to capture the intruder. The transition from theory to practice is detailed, and the designed system is simulated in Gazebo. Two metrics for parametric analysis and comparative study are proposed to evaluate the performance discrepancy.

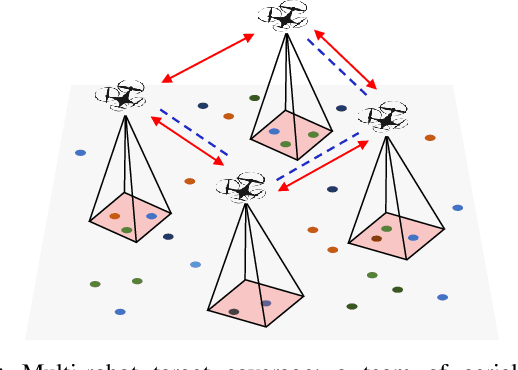

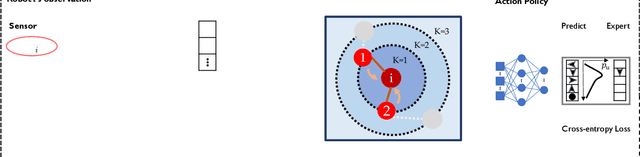

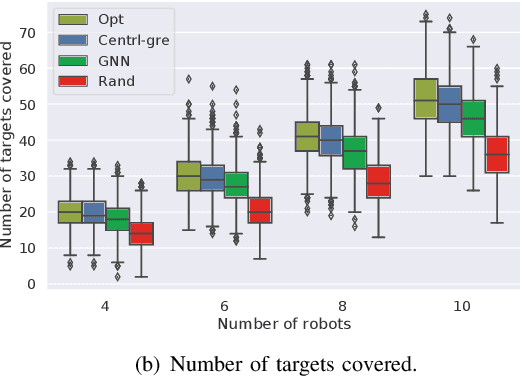

Graph Neural Networks for Decentralized Multi-Robot Submodular Action Selection

May 18, 2021

Abstract: In this paper, we develop a learning-based approach for decentralized submodular maximization. We focus on applications where robots are required to jointly select actions, e.g., motion primitives, to maximize team submodular objectives with local communications only. Such applications are essential for large-scale multi-robot coordination such as multi-robot motion planning for area coverage, environment exploration, and target tracking. However, current decentralized submodular maximization algorithms either require assumptions on the inter-robot communication or lose some suboptimality guarantees. In this work, we propose a general-purpose learning architecture towards submodular maximization at scale, with decentralized communications. In particular, our learning architecture leverages a graph neural network (GNN) to capture local interactions of the robots and learns decentralized decision-making for the robots. We train the learning model by imitating an expert solution and implement the resulting model for decentralized action selection involving local observations and communications only. We demonstrate the performance of our GNN-based learning approach in a scenario of active target coverage with large networks of robots. The simulation results show our approach nearly matches the coverage performance of the expert algorithm, and yet runs several orders of magnitude faster with more than 30 robots. The results also exhibit our approach's generalization capability in previously unseen scenarios, e.g., larger environments and larger networks of robots.
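To make the action-selection problem in this abstract concrete, here is a minimal sketch of sequential greedy selection for a coverage objective, a classic centralized baseline for this kind of submodular problem. It is an illustration only: the abstract does not say which expert algorithm is imitated, and the robot names, action names, and target IDs below are hypothetical.

```python
from typing import Dict, Set, Tuple

# Hypothetical data layout: actions[robot][action] = set of target IDs covered.
Actions = Dict[str, Dict[str, Set[int]]]


def sequential_greedy(actions: Actions) -> Tuple[Dict[str, str], Set[int]]:
    """Each robot, in a fixed order, picks the action with the largest marginal coverage gain."""
    covered: Set[int] = set()
    choice: Dict[str, str] = {}
    for robot in sorted(actions):
        candidates = actions[robot]
        # Marginal gain = number of newly covered targets; ties go to the
        # alphabetically first action because max() keeps the first maximum.
        best = max(sorted(candidates), key=lambda a: len(candidates[a] - covered))
        choice[robot] = best
        covered |= candidates[best]
    return choice, covered


if __name__ == "__main__":
    demo: Actions = {
        "r1": {"left": {1, 2}, "right": {3}},
        "r2": {"left": {1, 2}, "right": {3, 4}},
    }
    print(sequential_greedy(demo))
```

The coverage count is monotone and submodular, which is why picking by marginal gain is a natural baseline. Note that this sketch uses global knowledge of what earlier robots covered, whereas the setting in the abstract allows only local communication.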

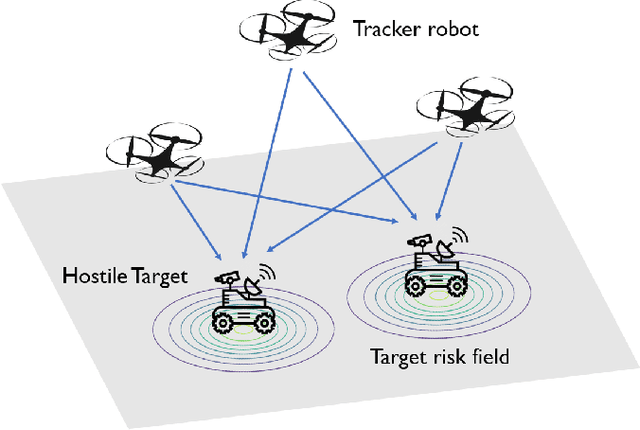

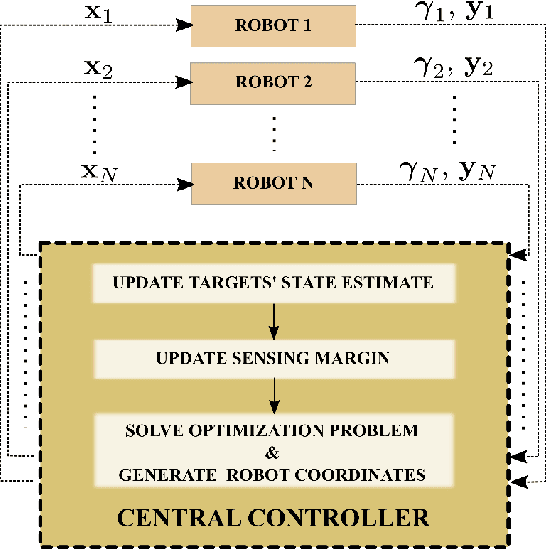

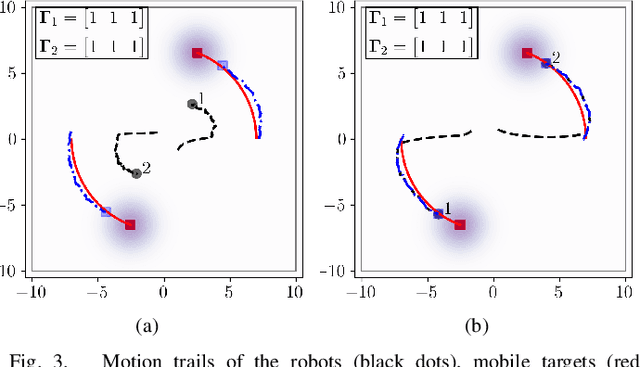

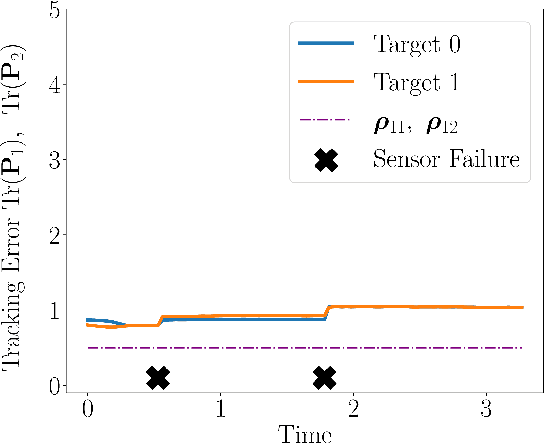

Adaptive and Risk-Aware Target Tracking with Heterogeneous Robot Teams

May 09, 2021

Abstract: We consider a scenario where a team of robots with heterogeneous sensors must track a set of hostile targets which induce sensory failures on the robots. In particular, the likelihood of failures depends on the proximity between the targets and the robots. We propose a control framework that implicitly addresses the competing objectives of performance maximization and sensor preservation (which impacts the future performance of the team). Our framework consists of a predictive component, which accounts for the risk of being detected by the target, and a reactive component, which maximizes the performance of the team regardless of the failures that have already occurred. Based on a measure of the abundance of sensors in the team, our framework can generate aggressive and risk-averse robot configurations to track the targets. Crucially, the heterogeneous sensing capabilities of the robots are explicitly considered in each step, allowing for a more expressive risk-performance trade-off. Simulated experiments with induced sensor failures demonstrate the efficacy of the proposed approach.

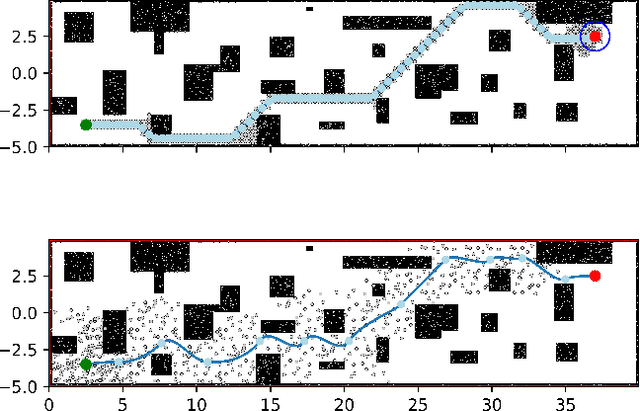

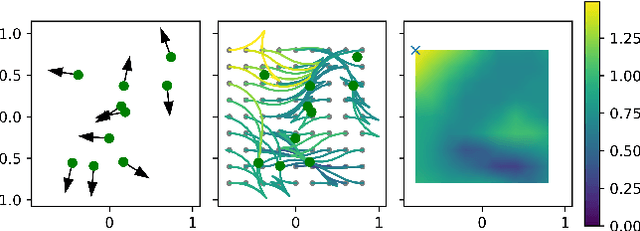

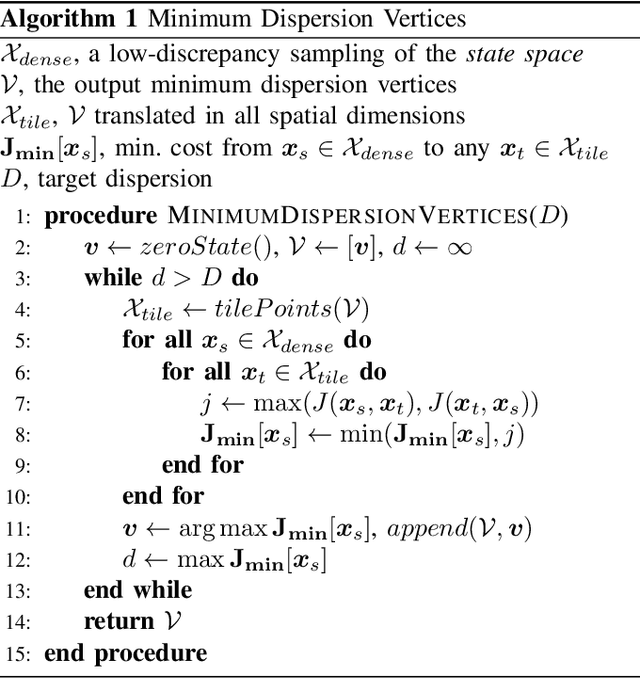

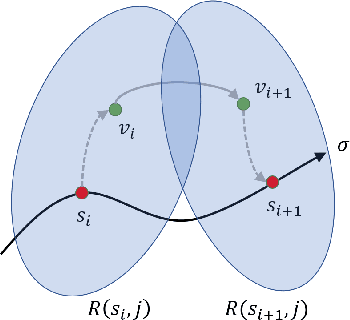

Dispersion-Minimizing Motion Primitives for Search-Based Motion Planning

Mar 26, 2021

Abstract: Search-based planning with motion primitives is a powerful motion planning technique that can provide dynamic feasibility, optimality, and real-time computation on size, weight, and power-constrained platforms in unstructured environments. However, optimal design of the motion planning graph, while crucial to the performance of the planner, has not been a main focus of prior work. This paper proposes to address this by introducing a method of choosing vertices and edges in a motion primitive graph that is grounded in sampling theory and leads to theoretical guarantees on planner completeness. By minimizing dispersion of the graph vertices in the metric space induced by trajectory cost, we optimally cover the space of feasible trajectories with our motion primitive graph. In comparison with baseline motion primitives defined by uniform input space sampling, our motion primitive graphs have lower dispersion, find a plan with fewer iterations of the graph search, and have only one parameter to tune.
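As a rough illustration of the dispersion measure mentioned in this abstract, the sketch below approximates the dispersion of a vertex set, i.e. the largest distance from any point in the space to its nearest vertex, over a finite sample of the space. It assumes a Euclidean metric for simplicity, whereas the paper uses the metric induced by trajectory cost. The farthest-point selection routine is a common greedy heuristic for lowering dispersion and is included as an assumption, not as the paper's method.

```python
import math
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, ...]
Metric = Callable[[Point, Point], float]


def dispersion(vertices: Sequence[Point], space_samples: Sequence[Point],
               dist: Metric = math.dist) -> float:
    """Approximate sup over the space of the distance to the nearest vertex."""
    return max(min(dist(x, p) for p in vertices) for x in space_samples)


def farthest_point_selection(candidates: Sequence[Point], k: int,
                             dist: Metric = math.dist) -> List[Point]:
    """Greedily pick up to k candidates, each farthest from those already chosen."""
    remaining = list(candidates)
    if not remaining or k <= 0:
        return []
    chosen = [remaining.pop(0)]  # arbitrary seed: the first candidate
    while remaining and len(chosen) < k:
        idx = max(range(len(remaining)),
                  key=lambda i: min(dist(remaining[i], p) for p in chosen))
        chosen.append(remaining.pop(idx))
    return chosen


if __name__ == "__main__":
    corners = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
    picked = farthest_point_selection(corners, 2)
    print(picked, dispersion(picked, corners))
```

Swapping `dist` for a trajectory-cost function would bring the sketch closer to the setting described in the abstract, provided that function behaves like a metric on the states of interest.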

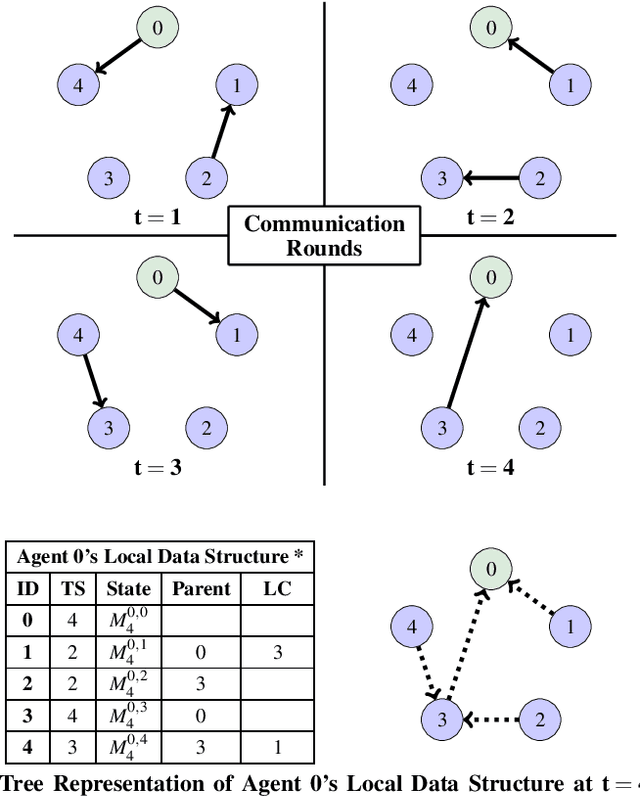

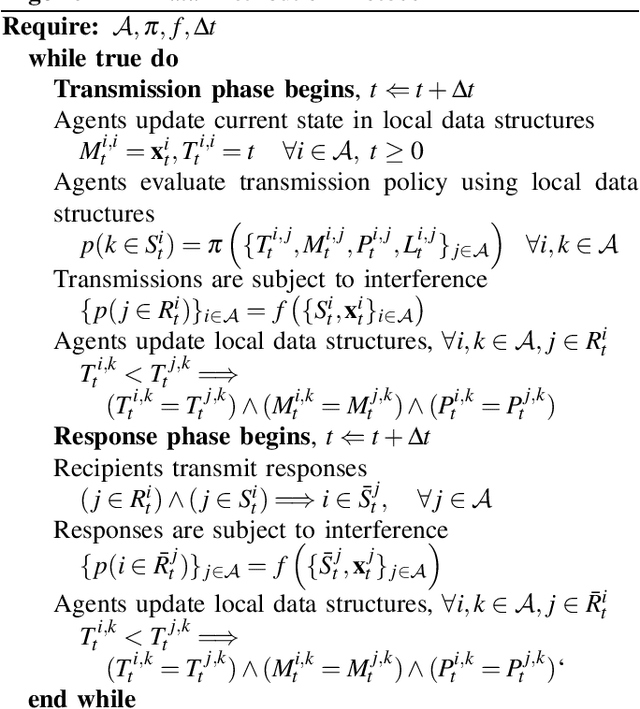

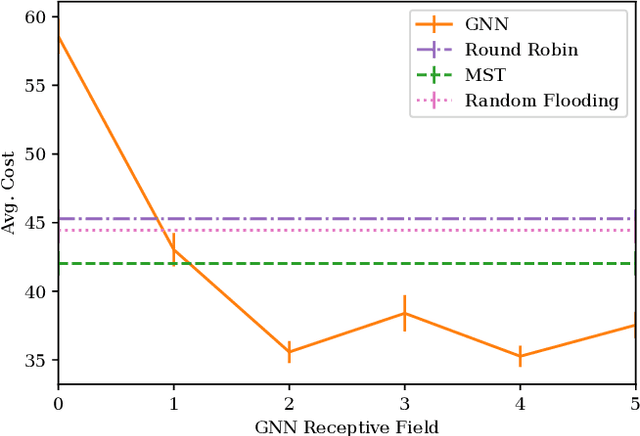

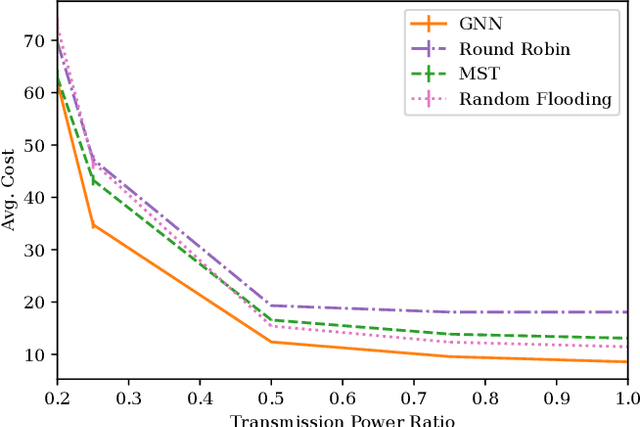

Learning Connectivity for Data Distribution in Robot Teams

Mar 08, 2021

Abstract: Many algorithms for control of multi-robot teams operate under the assumption that low-latency, global state information necessary to coordinate agent actions can readily be disseminated among the team. However, in harsh environments with no existing communication infrastructure, robots must form ad-hoc networks, forcing the team to operate in a distributed fashion. To overcome this challenge, we propose a task-agnostic, decentralized, low-latency method for data distribution in ad-hoc networks using Graph Neural Networks (GNN). Our approach enables multi-agent algorithms based on global state information to function by ensuring it is available at each robot. To do this, agents glean information about the topology of the network from packet transmissions and feed it to a GNN running locally which instructs the agent when and where to transmit the latest state information. We train the distributed GNN communication policies via reinforcement learning using the average Age of Information as the reward function and show that it improves training stability compared to task-specific reward functions. Our approach performs favorably compared to industry-standard methods for data distribution such as random flooding and round robin. We also show that the trained policies generalize to larger teams of both static and mobile agents.
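The average Age of Information used as the training signal in this abstract can be illustrated with a small sketch. It assumes a hypothetical table `last_update[i][j]` holding the timestamp of the most recent state of agent `j` known to agent `i`, and it assumes the reward is the negated average age so that fresher information scores higher. The abstract does not specify either detail.

```python
from typing import Sequence


def average_age_of_information(last_update: Sequence[Sequence[float]], now: float) -> float:
    """Mean of (now - timestamp) over every (receiver, source) pair in the table."""
    ages = [now - t for row in last_update for t in row]
    return sum(ages) / len(ages)


def reward(last_update: Sequence[Sequence[float]], now: float) -> float:
    """Assumed sign convention: lower average age means higher reward."""
    return -average_age_of_information(last_update, now)


if __name__ == "__main__":
    # Two agents at time 10: each knows its own state exactly (age 0),
    # agent 0 last heard from agent 1 at t=7, agent 1 from agent 0 at t=4.
    table = [[10.0, 7.0], [4.0, 10.0]]
    print(average_age_of_information(table, now=10.0))
```

Because this quantity depends only on how stale each agent's knowledge is, it does not reference any particular downstream task, which fits the task-agnostic framing in the abstract.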
