Elijah S. Lee

Graph Neural Networks for Decentralized Multi-Agent Perimeter Defense

Jan 23, 2023

Abstract:In this work, we study the problem of decentralized multi-agent perimeter defense that asks for computing actions for defenders with local perceptions and communications to maximize the capture of intruders. One major challenge for practical implementations is to make perimeter defense strategies scalable for large-scale problem instances. To this end, we leverage graph neural networks (GNNs) to develop an imitation learning framework that learns a mapping from defenders' local perceptions and their communication graph to their actions. The proposed GNN-based learning network is trained by imitating a centralized expert algorithm such that the learned actions are close to those generated by the expert algorithm. We demonstrate that our proposed network performs closer to the expert algorithm and is superior to other baseline algorithms by capturing more intruders. Our GNN-based network is trained at a small scale and can be generalized to large-scale cases. We run perimeter defense games in scenarios with different team sizes and configurations to demonstrate the performance of the learned network.

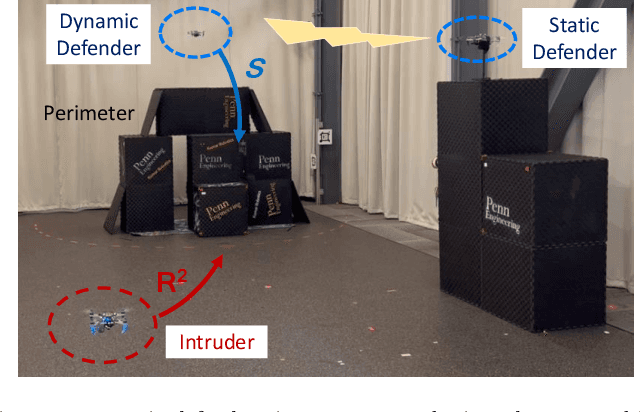

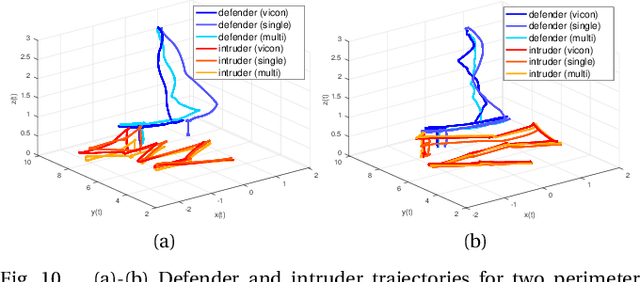

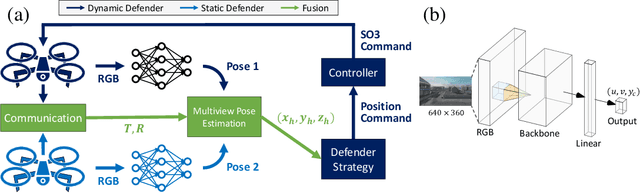

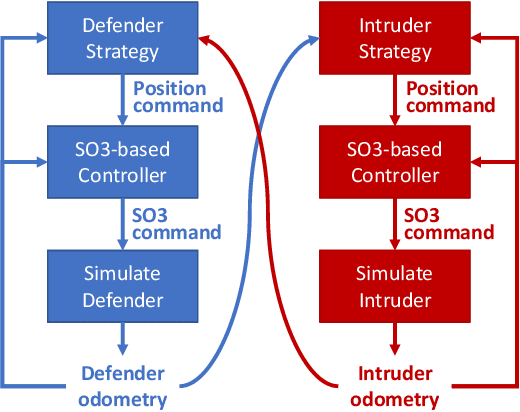

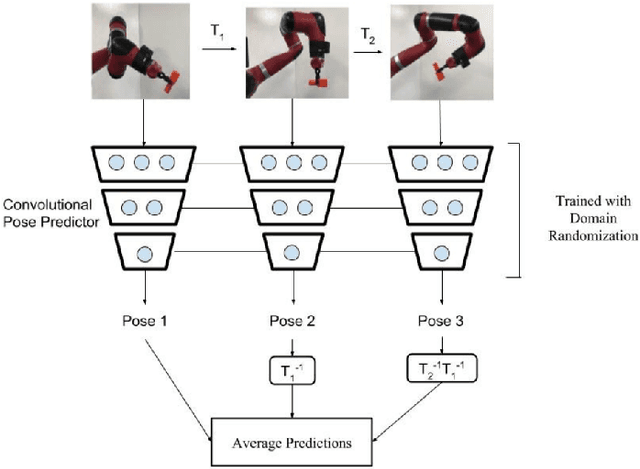

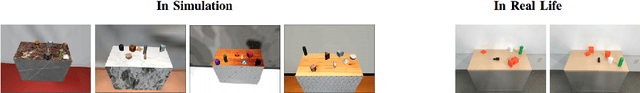

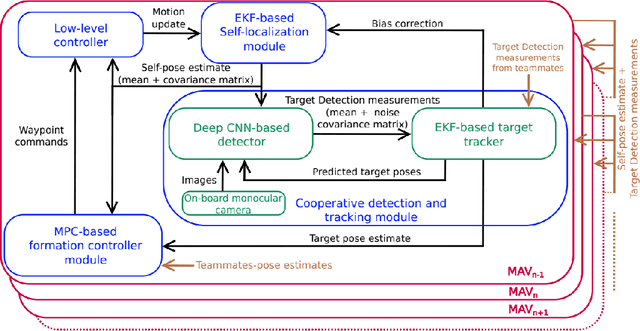

Vision-based Perimeter Defense via Multiview Pose Estimation

Sep 25, 2022

Abstract:Previous studies in the perimeter defense game have largely focused on the fully observable setting where the true player states are known to all players. However, this is unrealistic for practical implementation since defenders may have to perceive the intruders and estimate their states. In this work, we study the perimeter defense game in a photo-realistic simulator and the real world, requiring defenders to estimate intruder states from vision. We train a deep machine learning-based system for intruder pose detection with domain randomization. The system aggregates multiple views to reduce state estimation errors, and we adapt the defensive strategy to account for these errors. We introduce new performance metrics to evaluate vision-based perimeter defense. Through extensive experiments, we show that our approach improves state estimation and, eventually, perimeter defense performance in both 1-defender-vs-1-intruder games and 2-defenders-vs-1-intruder games.

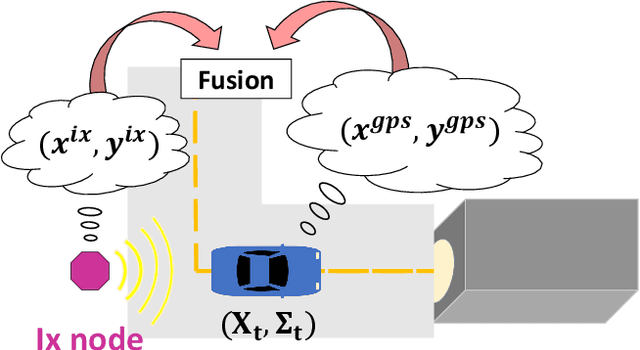

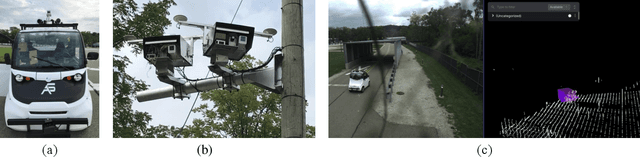

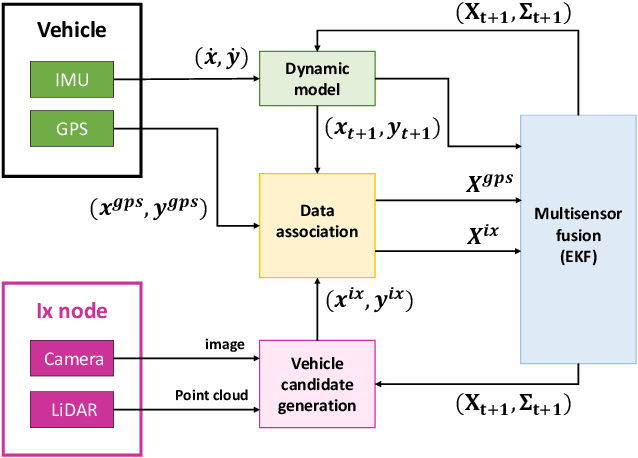

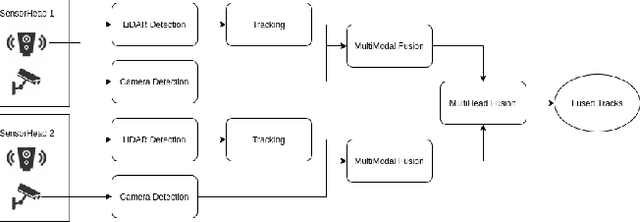

Infrastructure Node-based Vehicle Localization for Autonomous Driving

Sep 21, 2021

Abstract:Vehicle localization is essential for autonomous vehicle (AV) navigation and Advanced Driver Assistance Systems (ADAS). Accurate vehicle localization is often achieved via expensive inertial navigation systems or by employing compute-intensive vision processing (LiDAR/camera) to augment the low-cost and noisy inertial sensors. Here we have developed a framework for fusing the information obtained from a smart infrastructure node (ix-node) with the autonomous vehicle's on-board localization engine to obtain a robust and accurate pose estimate of the ego-vehicle even with cheap inertial sensors. A smart ix-node is typically used to augment the perception capability of an autonomous vehicle, especially when the onboard perception sensors of AVs are blocked by dynamic and static objects in the environment, rendering them ineffectual. In this work, we utilize this perception output from an ix-node to increase the localization accuracy of the AV. The fusion of ix-node perception output with the vehicle's low-cost inertial sensors allows us to perform reliable vehicle localization without relying on expensive inertial navigation systems or compute-intensive vision processing onboard the AVs. The proposed approach has been tested on real-world datasets collected from a test track in Ann Arbor, Michigan. Detailed analysis of the experimental results shows that incorporating ix-node data improves localization performance.

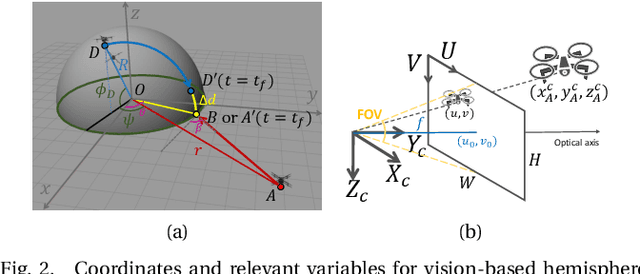

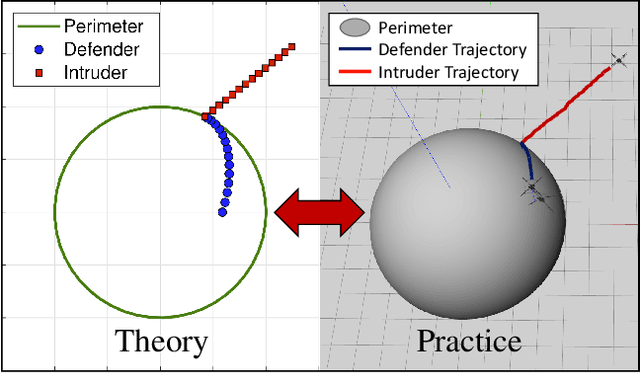

Defending a Perimeter from a Ground Intruder Using an Aerial Defender: Theory and Practice

Sep 07, 2021

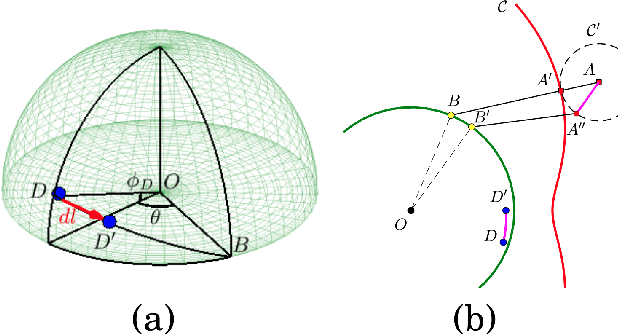

Abstract:The perimeter defense game has received interest in recent years as a variant of the pursuit-evasion game. A number of previous works have solved this game to obtain the optimal strategies for defender and intruder, but the derived theory considers the players as point particles with first-order assumptions. In this work, we aim to apply the theory derived from the perimeter defense problem to robots with realistic models of actuation and sensing and observe performance discrepancy in relaxing the first-order assumptions. In particular, we focus on the hemisphere perimeter defense problem where a ground intruder tries to reach the base of a hemisphere while an aerial defender constrained to move on the hemisphere aims to capture the intruder. The transition from theory to practice is detailed, and the designed system is simulated in Gazebo. Two metrics for parametric analysis and comparative study are proposed to evaluate the performance discrepancy.

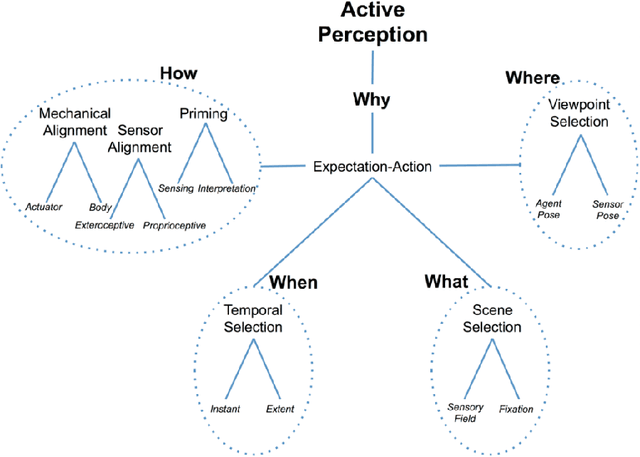

Active Perception with Neural Networks

Sep 06, 2021

Abstract:Active perception has been employed in many domains, particularly in the field of robotics. The idea of active perception is to utilize the input data to predict the next action that can help robots improve their performance. The main challenge lies in understanding the input data to be coupled with the action, and gathering meaningful information about the environment efficiently is both necessary and desirable. With recent developments in neural networks, interpreting the perceived data has become possible at the semantic level, and real-time interpretation based on deep learning has enabled the efficient closing of the perception-action loop. This report highlights recent progress in employing active perception based on neural networks for single and multi-agent systems.

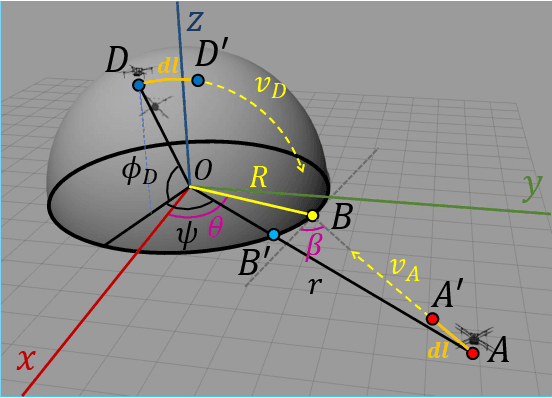

Perimeter-defense Game between Aerial Defender and Ground Intruder

Dec 29, 2020

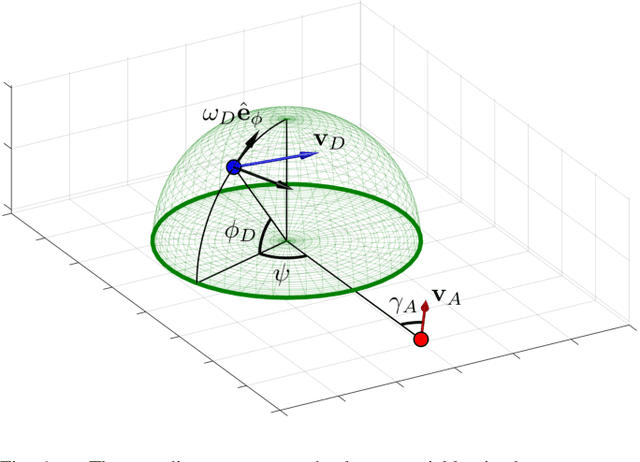

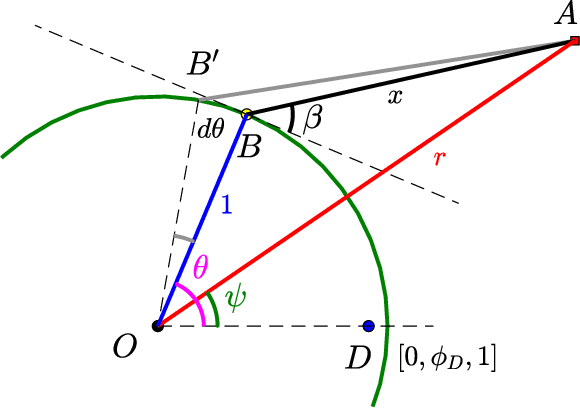

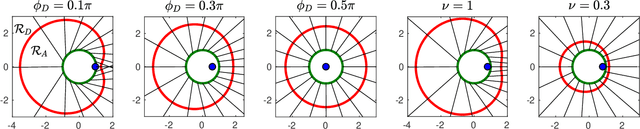

Abstract:We study a variant of the pursuit-evasion game in the context of perimeter defense. In this problem, the intruder aims to reach the base plane of a hemisphere without being captured by the defender, while the defender tries to capture the intruder. The perimeter-defense game was previously studied under the assumption that the defender moves on a circle. We extend the problem to the case where the defender moves on a hemisphere. To solve this problem, we analyze the strategies based on the breaching point at which the intruder tries to reach the target and predict the goal position, defined as the optimal breaching point, that is achieved by the optimal strategies of both players. We provide the barrier that divides the state space into defender-winning and intruder-winning regions and prove that the optimal strategies for both players are to move towards the optimal breaching point. Simulation results are presented to demonstrate that the optimality of the game is given as a Nash equilibrium.

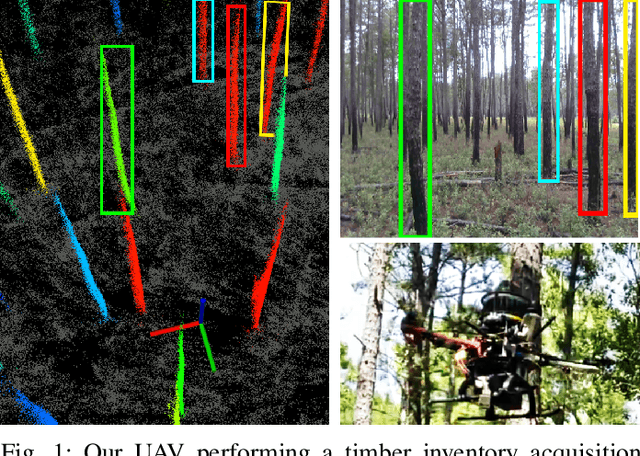

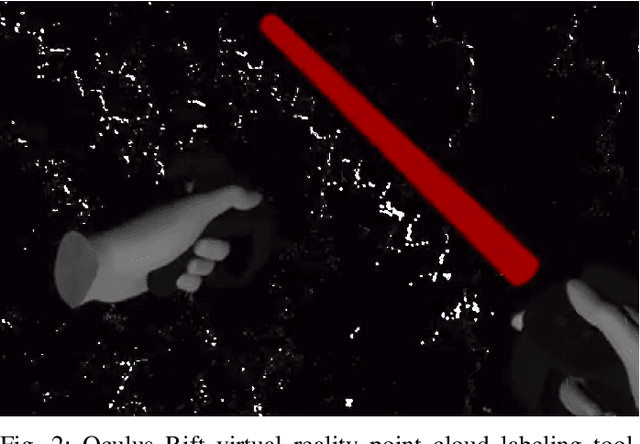

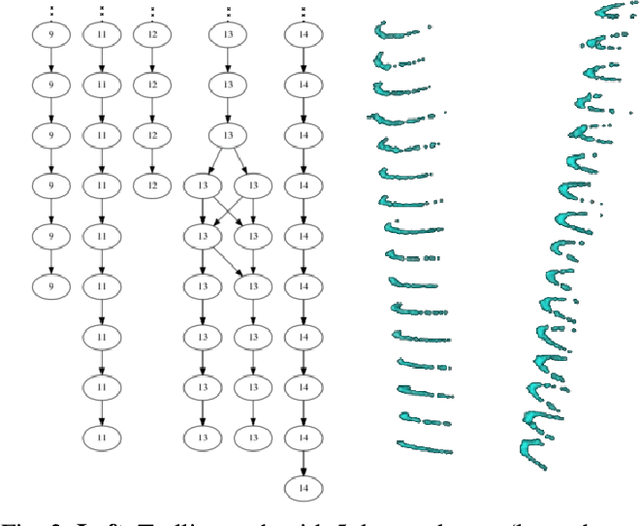

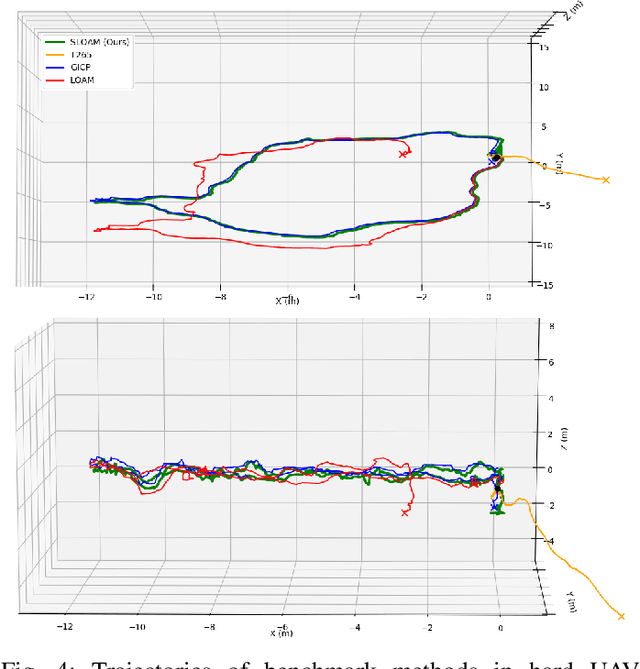

SLOAM: Semantic Lidar Odometry and Mapping for Forest Inventory

Dec 29, 2019

Abstract:This paper describes an end-to-end pipeline for tree diameter estimation based on semantic segmentation and lidar odometry and mapping. Accurate mapping of this type of environment is challenging since the ground and the trees are surrounded by leaves, thorns and vines, and the sensor typically experiences extreme motion. We propose a semantic feature based pose optimization that simultaneously refines the tree models while estimating the robot pose. The pipeline utilizes a custom virtual reality tool for labeling 3D scans that is used to train a semantic segmentation network. The masked point cloud is used to compute a trellis graph that identifies individual instances and extracts relevant features that are used by the SLAM module. We show that traditional lidar and image based methods fail in the forest environment on both Unmanned Aerial Vehicle (UAV) and hand-carry systems, while our method is more robust, scalable, and automatically generates tree diameter estimations.

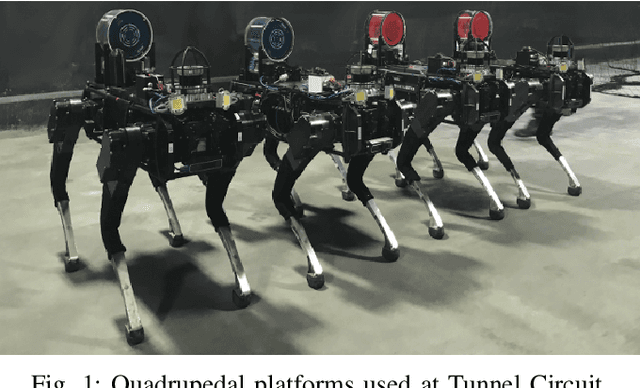

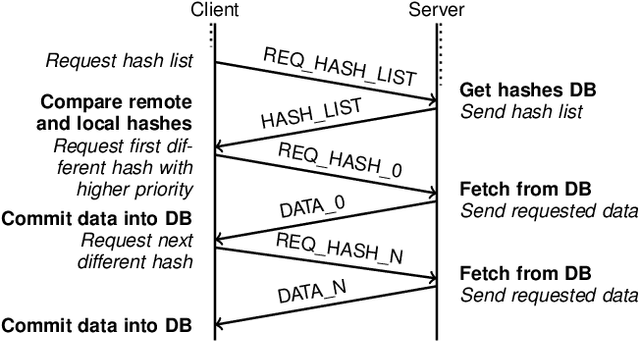

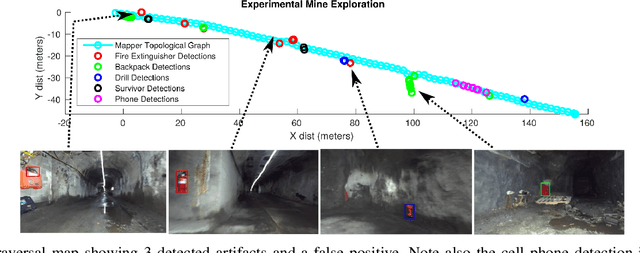

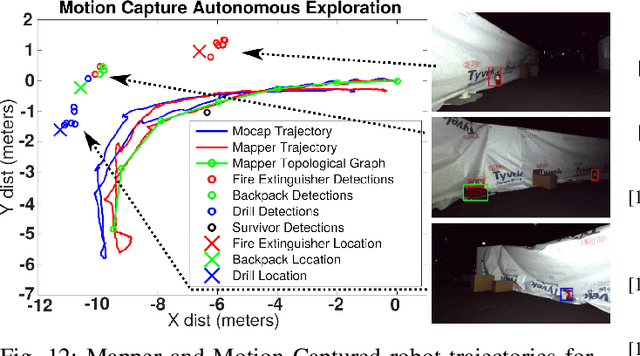

Mine Tunnel Exploration using Multiple Quadrupedal Robots

Sep 20, 2019

Abstract:Robotic exploration of underground environments is a particularly challenging problem due to communication, endurance, and traversability constraints, which necessitate high degrees of autonomy and agility. These challenges are compounded by the need to minimize human intervention for practical applications. While legged robots have the ability to traverse extremely challenging terrain, they also engender further inherent challenges for planning, estimation, and control. In this work, we describe a fully autonomous system for multi-robot mine exploration and mapping using legged quadrupeds, as well as a distributed database mesh networking system for reporting data. In addition, we show results from the DARPA Subterranean Challenge (SubT) Tunnel Circuit demonstrating localization of artifacts after traversals of hundreds of meters. To our knowledge, these experiments represent the first fully autonomous exploration of an unknown GNSS-denied environment undertaken by legged robots.
