Lu Li

Culture-Aware Humorous Captioning: Multimodal Humor Generation across Cultural Contexts

Apr 20, 2026

Abstract: Recent multimodal large language models have shown promising ability in generating humorous captions for images, yet they still lack stable control over explicit cultural context, making it difficult to jointly maintain image relevance, contextual appropriateness, and humor quality under a specified cultural background. To address this limitation, we introduce a new multimodal generation task, culture-aware humorous captioning, which requires a model to generate a humorous caption conditioned on both an input image and a target cultural context. Captions generated under different cultural contexts are not expected to share the same surface form, but should remain grounded in similar visual situations or humorous rationales. To support this task, we establish a six-dimensional evaluation framework covering image relevance, contextual fit, semantic richness, reasonableness, humor, and creativity. We further propose a staged alignment framework that first initializes the model with high-resource supervision under the Western cultural context, then performs multi-dimensional preference alignment via judge-based GRPO with a Degradation-aware Prototype Repulsion Constraint to mitigate reward hacking in open-ended generation, and finally adapts the model to the Eastern cultural context with a small amount of supervision. Experimental results show that our method achieves stronger overall performance under the proposed evaluation framework, with particularly large gains in contextual fit and a better balance between image relevance and humor under cultural constraints.

SignalClaw: LLM-Guided Evolutionary Synthesis of Interpretable Traffic Signal Control Skills

Apr 07, 2026

Abstract: Traffic signal control (TSC) requires strategies that are both effective and interpretable for deployment, yet reinforcement learning produces opaque neural policies while program synthesis depends on restrictive domain-specific languages. We present SignalClaw, a framework that uses large language models (LLMs) as evolutionary skill generators to synthesize and refine interpretable control skills for adaptive TSC. Each skill includes rationale, selection guidance, and executable code, making policies human-inspectable and self-documenting. At each generation, evolution signals from simulation metrics such as queue percentiles, delay trends, and stagnation are translated into natural language feedback to guide improvement. SignalClaw also introduces event-driven compositional evolution: an event detector identifies emergency vehicles, transit priority, incidents, and congestion via TraCI, and a priority dispatcher selects specialized skills. Each skill is evolved independently, and a priority chain enables runtime composition without retraining. We evaluate SignalClaw on routine and event-injected SUMO scenarios against four baselines. On routine scenarios, it achieves an average delay of 7.8 to 9.2 seconds, within 3 to 10 percent of the best method, with low variance across random seeds. Under event scenarios, it yields the lowest emergency delay (11.2 to 18.5 seconds, versus 42.3 to 72.3 for MaxPressure and 78.5 to 95.3 for DQN) and the lowest transit person delay (9.8 to 11.5 seconds, versus 38.7 to 45.2 for MaxPressure). In mixed events, the dispatcher composes skills effectively while maintaining stable overall delay. The evolved skills progress from simple linear rules to conditional strategies with multi-feature interactions, while remaining fully interpretable and directly modifiable by traffic engineers.
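The priority-chain dispatch described in this abstract can be illustrated with a minimal sketch. Everything named here is an assumption made for illustration: the event labels, their ordering, the observation keys, and the two toy skills are hypothetical and are not taken from the SignalClaw code.

```python
from typing import Callable, Dict, List, Set

# Hypothetical event labels, ordered from highest to lowest priority.
PRIORITY_CHAIN: List[str] = ["emergency", "transit", "incident", "congestion"]

# A skill maps observed features to the index of the phase to serve.
Skill = Callable[[Dict[str, float]], int]


def routine_skill(obs: Dict[str, float]) -> int:
    # Toy rule: serve the approach with the longer queue (0 = NS, 1 = EW).
    return 0 if obs["queue_ns"] >= obs["queue_ew"] else 1


def emergency_skill(obs: Dict[str, float]) -> int:
    # Toy rule: serve the approach an emergency vehicle is arriving on.
    return int(obs["emergency_approach"])


def dispatch(active_events: Set[str], skills: Dict[str, Skill], default: Skill) -> Skill:
    """Return the skill for the highest-priority active event, else the default."""
    for event in PRIORITY_CHAIN:
        if event in active_events and event in skills:
            return skills[event]
    return default


skills: Dict[str, Skill] = {"emergency": emergency_skill}
obs = {"queue_ns": 3.0, "queue_ew": 8.0, "emergency_approach": 0.0}
chosen = dispatch({"congestion", "emergency"}, skills, routine_skill)
phase = chosen(obs)
```

Because each skill is an independent function and the dispatcher only walks a fixed ordering, adding a new event type in this sketch means registering one more entry in `skills`, which mirrors the abstract's claim of runtime composition without retraining.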

SACRED: A Faithful Annotated Multimedia Multimodal Multilingual Dataset for Classifying Connectedness Types in Online Spirituality

Mar 28, 2026

Abstract: In religion and theology studies, spirituality has garnered significant research attention because it not only transcends culture but also offers a unique experience to each individual. However, social scientists often rely on limited datasets, which are largely unavailable online. In this study, we collaborated with social scientists to develop a high-quality multimedia, multimodal dataset, SACRED, in which the faithfulness of classification is guaranteed. Using SACRED, we evaluated the performance of 13 popular LLMs as well as traditional rule-based and fine-tuned approaches. The results suggest that the DeepSeek-V3 model performs well in classifying such abstract concepts (i.e., 79.19% accuracy on the Quora test set), and that the GPT-4o-mini model surpassed the other models in the vision tasks (63.99% F1 score). To our knowledge, this is the first annotated multimodal dataset from online spirituality communication. Our study also identified a new type of connectedness that is valuable for communication science studies.

QualiTeacher: Quality-Conditioned Pseudo-Labeling for Real-World Image Restoration

Mar 09, 2026

Abstract: Real-world image restoration (RWIR) is a highly challenging task due to the absence of clean ground-truth images. Many recent methods resort to pseudo-label (PL) supervision, often within a Mean-Teacher (MT) framework. However, these methods face a critical paradox: unconditionally trusting the often imperfect, low-quality PLs forces the student model to learn undesirable artifacts, while discarding them severely limits data diversity and impairs model generalization. In this paper, we propose QualiTeacher, a novel framework that transforms pseudo-label quality from a noisy liability into a conditional supervisory signal. Instead of filtering, QualiTeacher explicitly conditions the student model on the quality of the PLs, estimated by an ensemble of complementary no-reference image quality assessment (NR-IQA) models spanning low-level distortion and semantic-level assessment. This strategy teaches the student network to learn a quality-graded restoration manifold, enabling it to understand what constitutes different quality levels. Consequently, it can not only avoid mimicking artifacts from low-quality labels but also extrapolate to generate results of higher quality than the teacher itself. To ensure the robustness and accuracy of this quality-driven learning, we further enhance the process with a multi-augmentation scheme to diversify the PL quality spectrum, a score-based preference optimization strategy inspired by Direct Preference Optimization (DPO) to enforce a monotonically ordered quality separation, and a cropped consistency loss to prevent adversarial over-optimization (reward hacking) of the IQA models. Experiments on standard RWIR benchmarks demonstrate that QualiTeacher can serve as a plug-and-play strategy to improve the quality of existing pseudo-labeling frameworks, establishing a new paradigm for learning from imperfect supervision. Code will be released.

What Makes Value Learning Efficient in Residual Reinforcement Learning?

Feb 11, 2026

Abstract: Residual reinforcement learning (RL) enables stable online refinement of expressive pretrained policies by freezing the base and learning only bounded corrections. However, value learning in residual RL poses unique challenges that remain poorly understood. In this work, we identify two key bottlenecks: cold start pathology, where the critic lacks knowledge of the value landscape around the base policy, and structural scale mismatch, where the residual contribution is dwarfed by the base action. Through systematic investigation, we uncover the mechanisms underlying these bottlenecks, revealing that simple yet principled solutions suffice: base-policy transitions serve as an essential value anchor for implicit warmup, and critic normalization effectively restores representation sensitivity for discerning value differences. Based on these insights, we propose DAWN (Data-Anchored Warmup and Normalization), a minimal approach targeting efficient value learning in residual RL. By addressing these bottlenecks, DAWN demonstrates substantial efficiency gains across diverse benchmarks, policy architectures, and observation modalities.
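The idea of a frozen base policy plus a bounded learned correction can be sketched as follows. The abstract does not say how the bound is enforced, so the `tanh` squashing and the `scale` parameter here are assumptions chosen for illustration, not the DAWN formulation.

```python
import math
from typing import List, Sequence


def residual_action(
    base_action: Sequence[float],
    raw_residual: Sequence[float],
    scale: float = 0.1,
) -> List[float]:
    """Add a correction bounded to [-scale, scale] per dimension to a frozen base action.

    `raw_residual` stands in for the unbounded output of a learned residual
    policy; tanh squashing is an assumed way of bounding it.
    """
    return [a + scale * math.tanh(r) for a, r in zip(base_action, raw_residual)]


base = [0.50, -0.20]
corrected = residual_action(base, [1e6, -3.0], scale=0.1)
```

With a small `scale`, the correction is tiny relative to the base action, which gives a concrete picture of the scale mismatch the abstract describes: the critic has to distinguish actions that differ only within that narrow band.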

LLaTTE: Scaling Laws for Multi-Stage Sequence Modeling in Large-Scale Ads Recommendation

Jan 27, 2026

Abstract: We present LLaTTE (LLM-Style Latent Transformers for Temporal Events), a scalable transformer architecture for production ads recommendation. Through systematic experiments, we demonstrate that sequence modeling in recommendation systems follows predictable power-law scaling similar to LLMs. Crucially, we find that semantic features bend the scaling curve: they are a prerequisite for scaling, enabling the model to effectively utilize the capacity of deeper and longer architectures. To realize the benefits of continued scaling under strict latency constraints, we introduce a two-stage architecture that offloads the heavy computation of large, long-context models to an asynchronous upstream user model. We demonstrate that upstream improvements transfer predictably to downstream ranking tasks. Deployed as the largest user model at Meta, this multi-stage framework drives a 4.3% conversion uplift on Facebook Feed and Reels with minimal serving overhead, establishing a practical blueprint for harnessing scaling laws in industrial recommender systems.
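A power-law scaling relationship of the form y = a · x^b is commonly estimated by ordinary least squares in log-log space. The sketch below shows that generic procedure on synthetic data; the numbers are invented for illustration and are not results from the LLaTTE paper, and the paper's own fitting method is not stated in the abstract.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Fit y = a * x**b by least squares on (log x, log y); returns (a, b)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((u - mean_x) ** 2 for u in lx)
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
    b = sxy / sxx                      # slope in log-log space = exponent
    a = math.exp(mean_y - b * mean_x)  # intercept in log-log space = log(a)
    return a, b


# Synthetic, noise-free data generated from loss = 2.0 * compute**-0.1.
compute = [1e3, 1e4, 1e5, 1e6]
loss = [2.0 * c ** -0.1 for c in compute]
a, b = fit_power_law(compute, loss)
```

On a log-log plot a pure power law is a straight line with slope `b`, so a curve that "bends" when a feature set is added shows up as a change in the fitted exponent between the two configurations.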

Nodule-DETR: A Novel DETR Architecture with Frequency-Channel Attention for Ultrasound Thyroid Nodule Detection

Jan 05, 2026

Abstract: Thyroid cancer is the most common endocrine malignancy, and its incidence is rising globally. While ultrasound is the preferred imaging modality for detecting thyroid nodules, its diagnostic accuracy is often limited by challenges such as low image contrast and blurred nodule boundaries. To address these issues, we propose Nodule-DETR, a novel detection transformer (DETR) architecture designed for robust thyroid nodule detection in ultrasound images. Nodule-DETR introduces three key innovations: a Multi-Spectral Frequency-domain Channel Attention (MSFCA) module that leverages frequency analysis to enhance features of low-contrast nodules; a Hierarchical Feature Fusion (HFF) module for efficient multi-scale integration; and Multi-Scale Deformable Attention (MSDA) to flexibly capture small and irregularly shaped nodules. We conducted extensive experiments on a clinical dataset of real-world thyroid ultrasound images. The results demonstrate that Nodule-DETR achieves state-of-the-art performance, outperforming the baseline model by a significant margin of 0.149 in mAP@0.5:0.95. The superior accuracy of Nodule-DETR highlights its significant potential for clinical application as an effective tool in computer-aided thyroid diagnosis. The code is available at https://github.com/wjj1wjj/Nodule-DETR.

Autonomously Unweaving Multiple Cables Using Visual Feedback

Dec 13, 2025

Abstract: Many cable management tasks involve separating out the different cables and removing tangles. Automating this task is challenging because cables are deformable and can have combinations of knots and multiple interwoven segments. Prior works have focused on untying knots in one cable, which is one subtask of cable management. However, in this paper, we focus on a different subtask called multi-cable unweaving, which refers to removing the intersections among multiple interwoven cables to separate them and facilitate further manipulation. We propose a method that utilizes visual feedback to unweave a bundle of loosely entangled cables. We formulate cable unweaving as a pick-and-place problem, where the grasp position is selected from discrete nodes in a graph-based cable state representation. Our cable state representation encodes both topological and geometric information about the cables from the visual image. To predict future cable states and identify valid actions, we present a novel state transition model that takes into account the straightening and bending of cables during manipulation. Using this state transition model, we select between two high-level action primitives and calculate predicted immediate costs to optimize the lower-level actions. We experimentally demonstrate that iterating the above perception-planning-action process enables unweaving electric cables and shoelaces with an 84% success rate on average.

Scaling Latent Reasoning via Looped Language Models

Oct 29, 2025

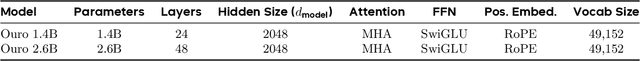

Abstract: Modern LLMs are trained to "think" primarily via explicit text generation, such as chain-of-thought (CoT), which defers reasoning to post-training and under-leverages pre-training data. We present and open-source Ouro, named after the recursive Ouroboros, a family of pre-trained Looped Language Models (LoopLM) that instead build reasoning into the pre-training phase through (i) iterative computation in latent space, (ii) an entropy-regularized objective for learned depth allocation, and (iii) scaling to 7.7T tokens. The Ouro 1.4B and 2.6B models achieve performance that matches the results of SOTA LLMs of up to 12B parameters across a wide range of benchmarks. Through controlled experiments, we show this advantage stems not from increased knowledge capacity, but from superior knowledge manipulation capabilities. We also show that LoopLM yields reasoning traces more aligned with final outputs than explicit CoT. We hope our results show the potential of LoopLM as a novel scaling direction in the reasoning era. Our models can be found at http://ouro-llm.github.io.

Bag-of-Word-Groups (BoWG): A Robust and Efficient Loop Closure Detection Method Under Perceptual Aliasing

Oct 26, 2025

Abstract: Loop closure is critical in Simultaneous Localization and Mapping (SLAM) systems to reduce accumulative drift and ensure global mapping consistency. However, conventional methods struggle in perceptually aliased environments, such as narrow pipes, due to vector quantization, feature sparsity, and repetitive textures, while existing solutions often incur high computational costs. This paper presents Bag-of-Word-Groups (BoWG), a novel loop closure detection method that achieves superior precision-recall, robustness, and computational efficiency. The core innovation lies in the introduction of word groups, which capture the spatial co-occurrence and proximity of visual words to construct an online dictionary. Additionally, drawing inspiration from probabilistic transition models, we incorporate temporal consistency directly into similarity computation with an adaptive scheme, substantially improving precision-recall performance. The method is further strengthened by a feature distribution analysis module and dedicated post-verification mechanisms. To evaluate the effectiveness of our method, we conduct experiments on both public datasets and a confined-pipe dataset we constructed. Results demonstrate that BoWG surpasses state-of-the-art methods, including both traditional and learning-based approaches, in terms of precision-recall and computational efficiency. Our approach also exhibits excellent scalability, achieving an average processing time of 16 ms per image across 17,565 images in the Bicocca25b dataset.
