Fabio Ramos

NVIDIA, University of Sydney

Signatures Meet Dynamic Programming: Generalizing Bellman Equations for Trajectory Following

Dec 09, 2023

Abstract:Path signatures have been proposed as a powerful representation of paths that efficiently captures the path's analytic and geometric characteristics, having useful algebraic properties including fast concatenation of paths through tensor products. Signatures have recently been widely adopted in machine learning problems for time series analysis. In this work we establish connections between value functions typically used in optimal control and intriguing properties of path signatures. These connections motivate our novel control framework with signature transforms that efficiently generalizes the Bellman equation to the space of trajectories. We analyze the properties and advantages of the framework, termed signature control. In particular, we demonstrate that (i) it can naturally deal with varying/adaptive time steps; (ii) it propagates higher-level information more efficiently than value function updates; (iii) it is robust to dynamical system misspecification over long rollouts. As a specific case of our framework, we devise a model predictive control method for path tracking. This method generalizes integral control, being suitable for problems with unknown disturbances. The proposed algorithms are tested in simulation, with differentiable physics models including typical control and robotics tasks such as point-mass, curve following for an ant model, and a robotic manipulator.

Stein Variational Belief Propagation for Multi-Robot Coordination

Nov 28, 2023

Abstract:Decentralized coordination for multi-robot systems involves planning in challenging, high-dimensional spaces. The planning problem is particularly challenging in the presence of obstacles and different sources of uncertainty such as inaccurate dynamic models and sensor noise. In this paper, we introduce Stein Variational Belief Propagation (SVBP), a novel algorithm for performing inference over nonparametric marginal distributions of nodes in a graph. We apply SVBP to multi-robot coordination by modelling a robot swarm as a graphical model and performing inference for each robot. We demonstrate our algorithm on a simulated multi-robot perception task, and on a multi-robot planning task within a Model-Predictive Control (MPC) framework, on both simulated and real-world mobile robots. Our experiments show that SVBP represents multi-modal distributions better than sampling-based or Gaussian baselines, resulting in improved performance on perception and planning tasks. Furthermore, we show that SVBP's ability to represent diverse trajectories for decentralized multi-robot planning makes it less prone to deadlock scenarios than leading baselines.

cuRobo: Parallelized Collision-Free Minimum-Jerk Robot Motion Generation

Nov 03, 2023

Abstract:This paper explores the problem of collision-free motion generation for manipulators by formulating it as a global motion optimization problem. We develop a parallel optimization technique to solve this problem and demonstrate its effectiveness on massively parallel GPUs. We show that combining simple optimization techniques with many parallel seeds leads to solving difficult motion generation problems within 50ms on average, 60x faster than state-of-the-art (SOTA) trajectory optimization methods. We achieve SOTA performance by combining L-BFGS step direction estimation with a novel parallel noisy line search scheme and a particle-based optimization solver. To further aid trajectory optimization, we develop a parallel geometric planner that plans within 20ms and also introduce a collision-free IK solver that can solve over 7000 queries/s. We package our contributions into a state-of-the-art GPU-accelerated motion generation library, cuRobo, and release it to enrich the robotics community. Additional details are available at https://curobo.org

STAMP: Differentiable Task and Motion Planning via Stein Variational Gradient Descent

Oct 03, 2023

Abstract:Planning for many manipulation tasks, such as using tools or assembling parts, often requires both symbolic and geometric reasoning. Task and Motion Planning (TAMP) algorithms typically solve these problems by conducting a tree search over high-level task sequences while checking for kinematic and dynamic feasibility. While performant, most existing algorithms are highly inefficient as their time complexity grows exponentially with the number of possible actions and objects. Additionally, they only find a single solution to problems in which many feasible plans may exist. To address these limitations, we propose a novel algorithm called Stein Task and Motion Planning (STAMP) that leverages parallelization and differentiable simulation to efficiently search for multiple diverse plans. STAMP relaxes discrete-and-continuous TAMP problems into continuous optimization problems that can be solved using variational inference. Our algorithm builds upon Stein Variational Gradient Descent, a gradient-based variational inference algorithm, and parallelized differentiable physics simulators on the GPU to efficiently obtain gradients for inference. Further, we employ imitation learning to introduce action abstractions that reduce the inference problem to lower dimensions. We demonstrate our method on two TAMP problems and empirically show that STAMP is able to: 1) produce multiple diverse plans in parallel; and 2) search for plans more efficiently compared to existing TAMP baselines.

Path Signatures for Diversity in Probabilistic Trajectory Optimisation

Aug 08, 2023

Abstract:Motion planning can be cast as a trajectory optimisation problem where a cost is minimised as a function of the trajectory being generated. In complex environments with several obstacles and complicated geometry, this optimisation problem is usually difficult to solve and prone to local minima. However, recent advancements in computing hardware allow for parallel trajectory optimisation where multiple solutions are obtained simultaneously, each initialised from a different starting point. Unfortunately, without a strategy preventing two solutions from collapsing onto each other, naive parallel optimisation can suffer from mode collapse, diminishing the efficiency of the approach and the likelihood of finding a global solution. In this paper we leverage recent advances in the theory of rough paths to devise an algorithm for parallel trajectory optimisation that promotes diversity over the range of solutions, therefore avoiding mode collapses and achieving better global properties. Our approach builds on path signatures and Hilbert space representations of trajectories, and connects parallel variational inference for trajectory estimation with diversity-promoting kernels. We empirically demonstrate that this strategy achieves lower average costs than competing alternatives on a range of problems, from 2D navigation to robotic manipulators operating in cluttered environments.

Learning to Simulate Tree-Branch Dynamics for Manipulation

Jun 06, 2023

Abstract:We propose to use a simulation-driven inverse inference approach to model the joint dynamics of tree branches under manipulation. Learning branch dynamics and gaining the ability to manipulate deformable vegetation can help with occlusion-prone tasks, such as fruit picking in dense foliage, as well as moving overhanging vines and branches for navigation in dense vegetation. The underlying deformable tree geometry is encapsulated as coarse spring abstractions executed on parallel, non-differentiable simulators. The implicit statistical model defined by the simulator, reference trajectories obtained by actively probing the ground truth, and the Bayesian formalism, together guide the spring parameter posterior density estimation. Our non-parametric inference algorithm, based on Stein Variational Gradient Descent, incorporates biologically motivated assumptions into the inference process as neural-network-driven learnt joint priors; moreover, it leverages the finite difference scheme for gradient approximations. Real and simulated experiments confirm that our model can predict deformation trajectories, quantify the estimation uncertainty, and perform better when baselined against other inference algorithms, particularly from the Monte Carlo family. The model displays strong robustness properties in the presence of heteroscedastic sensor noise; furthermore, it can generalise to unseen grasp locations.

IndustReal: Transferring Contact-Rich Assembly Tasks from Simulation to Reality

May 26, 2023

Abstract:Robotic assembly is a longstanding challenge, requiring contact-rich interaction and high precision and accuracy. Many applications also require adaptivity to diverse parts, poses, and environments, as well as low cycle times. In other areas of robotics, simulation is a powerful tool to develop algorithms, generate datasets, and train agents. However, simulation has had a more limited impact on assembly. We present IndustReal, a set of algorithms, systems, and tools that solve assembly tasks in simulation with reinforcement learning (RL) and successfully achieve policy transfer to the real world. Specifically, we propose 1) simulation-aware policy updates, 2) signed-distance-field rewards, and 3) sampling-based curricula for robotic RL agents. We use these algorithms to enable robots to solve contact-rich pick, place, and insertion tasks in simulation. We then propose 4) a policy-level action integrator to minimize error at policy deployment time. We build and demonstrate a real-world robotic assembly system that uses the trained policies and action integrator to achieve repeatable performance in the real world. Finally, we present hardware and software tools that allow other researchers to reproduce our system and results. For videos and additional details, please see http://sites.google.com/nvidia.com/industreal .

DefGraspNets: Grasp Planning on 3D Fields with Graph Neural Nets

Mar 28, 2023

Abstract:Robotic grasping of 3D deformable objects is critical for real-world applications such as food handling and robotic surgery. Unlike rigid and articulated objects, 3D deformable objects have infinite degrees of freedom. Fully defining their state requires 3D deformation and stress fields, which are exceptionally difficult to analytically compute or experimentally measure. Thus, evaluating grasp candidates for grasp planning typically requires accurate but slow 3D finite element method (FEM) simulation. Sampling-based grasp planning is often impractical, as it requires evaluation of a large number of grasp candidates. Gradient-based grasp planning can be more efficient, but requires a differentiable model to synthesize optimal grasps from initial candidates. Differentiable FEM simulators may fill this role, but are typically no faster than standard FEM. In this work, we propose learning a predictive graph neural network (GNN), DefGraspNets, to act as our differentiable model. We train DefGraspNets to predict 3D stress and deformation fields based on FEM-based grasp simulations. DefGraspNets not only runs up to 1500 times faster than the FEM simulator, but also enables fast gradient-based grasp optimization over 3D stress and deformation metrics. We design DefGraspNets to align with real-world grasp planning practices and demonstrate generalization across multiple test sets, including real-world experiments.

Batch Bayesian optimisation via density-ratio estimation with guarantees

Sep 22, 2022

Abstract:Bayesian optimisation (BO) algorithms have shown remarkable success in applications involving expensive black-box functions. Traditionally BO has been set as a sequential decision-making process which estimates the utility of query points via an acquisition function and a prior over functions, such as a Gaussian process. Recently, however, a reformulation of BO via density-ratio estimation (BORE) allowed reinterpreting the acquisition function as a probabilistic binary classifier, removing the need for an explicit prior over functions and increasing scalability. In this paper, we present a theoretical analysis of BORE's regret and an extension of the algorithm with improved uncertainty estimates. We also show that BORE can be naturally extended to a batch optimisation setting by recasting the problem as approximate Bayesian inference. The resulting algorithm comes equipped with theoretical performance guarantees and is assessed against other batch BO baselines in a series of experiments.

Renaissance Robot: Optimal Transport Policy Fusion for Learning Diverse Skills

Jul 03, 2022

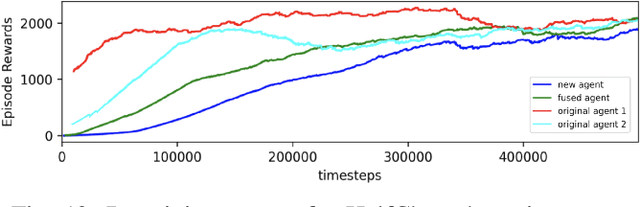

Abstract:Deep reinforcement learning (RL) is a promising approach to solving complex robotics problems. However, the process of learning through trial-and-error interactions is often highly time-consuming, despite recent advancements in RL algorithms. Additionally, the success of RL is critically dependent on how well the reward-shaping function suits the task, which is also time-consuming to design. As agents trained on a variety of robotics problems continue to proliferate, the ability to reuse their valuable learning for new domains becomes increasingly significant. In this paper, we propose a post-hoc technique for policy fusion using Optimal Transport theory as a robust means of consolidating the knowledge of multiple agents that have been trained on distinct scenarios. We further demonstrate that this provides an improved weights initialisation of the neural network policy for learning new tasks, requiring less time and computational resources than either retraining the parent policies or training a new policy from scratch. Ultimately, our results on diverse agents commonly used in deep RL show that specialised knowledge can be unified into a "Renaissance agent", allowing for quicker learning of new skills.
