Michael A. Lin

Isaac Lab: A GPU-Accelerated Simulation Framework for Multi-Modal Robot Learning

Nov 06, 2025

Abstract: We present Isaac Lab, the natural successor to Isaac Gym, which extends the paradigm of GPU-native robotics simulation into the era of large-scale multi-modal learning. Isaac Lab combines high-fidelity GPU parallel physics, photorealistic rendering, and a modular, composable architecture for designing environments and training robot policies. Beyond physics and rendering, the framework integrates actuator models, multi-frequency sensor simulation, data collection pipelines, and domain randomization tools, unifying best practices for reinforcement and imitation learning at scale within a single extensible platform. We highlight its application to a diverse set of challenges, including whole-body control, cross-embodiment mobility, contact-rich and dexterous manipulation, and the integration of human demonstrations for skill acquisition. Finally, we discuss upcoming integration with the differentiable, GPU-accelerated Newton physics engine, which promises new opportunities for scalable, data-efficient, and gradient-based approaches to robot learning. We believe Isaac Lab's combination of advanced simulation capabilities, rich sensing, and data-center scale execution will help unlock the next generation of breakthroughs in robotics research.

Navigation and 3D Surface Reconstruction from Passive Whisker Sensing

Jun 10, 2024

Abstract: Whiskers provide a way to sense surfaces in the immediate environment without disturbing it. In this paper we present a method for using highly flexible, curved, passive whiskers mounted along a robot arm to gather sensory data as they brush past objects during normal robot motion. The information is useful both for guiding the robot in cluttered spaces and for reconstructing the exposed faces of objects. Surface reconstruction depends on accurate localization of contact points along each whisker. We present an algorithm based on Bayesian filtering that rapidly converges to within 1 mm of the actual contact locations. The piecewise-continuous history of contact locations from each whisker allows for accurate reconstruction of curves on object surfaces. Employing multiple whiskers and traces, we are able to produce an occupancy map of proximal objects.
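The abstract does not spell out the filter, so the following is only a minimal sketch of the general idea of Bayesian filtering for contact localization, not the authors' algorithm. It assumes a discrete grid of candidate contact positions along one whisker and a hypothetical linear sensor model (`predicted_signal`) with Gaussian noise; the grid spacing, signal gain, and noise level are all illustrative values.

```python
import math

def bayes_update(belief, positions, measurement, predict, sigma):
    """One measurement update of a discrete Bayes filter over contact positions."""
    posterior = []
    for prob, s in zip(belief, positions):
        err = measurement - predict(s)
        # Gaussian likelihood of the measurement given contact at position s
        likelihood = math.exp(-0.5 * (err / sigma) ** 2)
        posterior.append(prob * likelihood)
    total = sum(posterior)
    if total == 0.0:
        return list(belief)  # measurement uninformative everywhere; keep the prior
    return [w / total for w in posterior]

def expected_position(belief, positions):
    """Posterior mean of the contact position."""
    return sum(b * s for b, s in zip(belief, positions))

# Hypothetical setup: candidate contact points every 1 mm along a 100 mm whisker.
positions = [float(i) for i in range(101)]

def predicted_signal(s):
    # Assumed sensor model: the signal grows linearly with contact distance.
    return 0.02 * s

belief = [1.0 / len(positions)] * len(positions)  # uniform prior
true_contact = 40.0
for _ in range(20):
    z = predicted_signal(true_contact)  # noise-free reading keeps the demo deterministic
    belief = bayes_update(belief, positions, z, predicted_signal, sigma=0.05)

contact_estimate = expected_position(belief, positions)
print(f"estimated contact position: {contact_estimate:.2f} mm")
```

Each update multiplies the belief by the measurement likelihood and renormalizes, so repeated consistent readings concentrate the probability mass around the position that best explains them. A real whisker would also need a motion model to track the contact point as it slides.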

IndustReal: Transferring Contact-Rich Assembly Tasks from Simulation to Reality

May 26, 2023

Abstract: Robotic assembly is a longstanding challenge, requiring contact-rich interaction and high precision and accuracy. Many applications also require adaptivity to diverse parts, poses, and environments, as well as low cycle times. In other areas of robotics, simulation is a powerful tool to develop algorithms, generate datasets, and train agents. However, simulation has had a more limited impact on assembly. We present IndustReal, a set of algorithms, systems, and tools that solve assembly tasks in simulation with reinforcement learning (RL) and successfully achieve policy transfer to the real world. Specifically, we propose 1) simulation-aware policy updates, 2) signed-distance-field rewards, and 3) sampling-based curricula for robotic RL agents. We use these algorithms to enable robots to solve contact-rich pick, place, and insertion tasks in simulation. We then propose 4) a policy-level action integrator to minimize error at policy deployment time. We build and demonstrate a real-world robotic assembly system that uses the trained policies and action integrator to achieve repeatable performance in the real world. Finally, we present hardware and software tools that allow other researchers to reproduce our system and results. For videos and additional details, please see http://sites.google.com/nvidia.com/industreal .
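As a rough illustration of what a signed-distance-field reward can look like, the sketch below scores how well a held part overlaps its goal pose by querying the goal shape's SDF at points on the part. It is an assumption-laden toy, not the IndustReal formulation: the part is an axis-aligned box, only translation is considered, the sample points are the eight box corners, and the names `box_sdf` and `sdf_reward` are hypothetical.

```python
import math

def box_sdf(point, half_extents):
    """Signed distance from a point to an axis-aligned box centered at the origin."""
    q = [abs(p) - h for p, h in zip(point, half_extents)]
    outside = math.sqrt(sum(max(c, 0.0) ** 2 for c in q))  # distance when outside
    inside = min(max(q), 0.0)                               # negative depth when inside
    return outside + inside

def sdf_reward(offset, half_extents=(0.02, 0.02, 0.05)):
    """Negative mean |SDF| of the part's corners, evaluated in the goal-pose SDF.

    `offset` is the translation (in meters) of the part from its goal pose.
    The reward is 0 when the part sits exactly at the goal and decreases
    as the corners move away from the goal surface.
    """
    hx, hy, hz = half_extents
    corners = [
        (sx * hx + offset[0], sy * hy + offset[1], sz * hz + offset[2])
        for sx in (-1, 1) for sy in (-1, 1) for sz in (-1, 1)
    ]
    mean_dist = sum(abs(box_sdf(c, half_extents)) for c in corners) / len(corners)
    return -mean_dist

aligned = sdf_reward((0.0, 0.0, 0.0))
near = sdf_reward((0.0, 0.0, 0.01))
far = sdf_reward((0.0, 0.0, 0.02))
print(aligned, near, far)
```

Because the SDF varies smoothly with distance to the goal surface, a reward built on it gives a dense learning signal even when the part is close to, but not yet at, its target pose.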

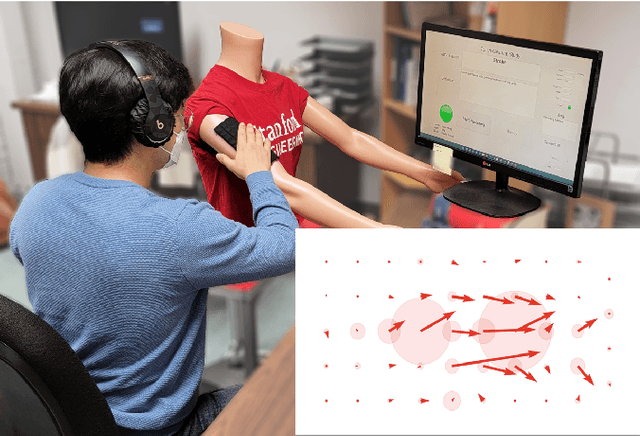

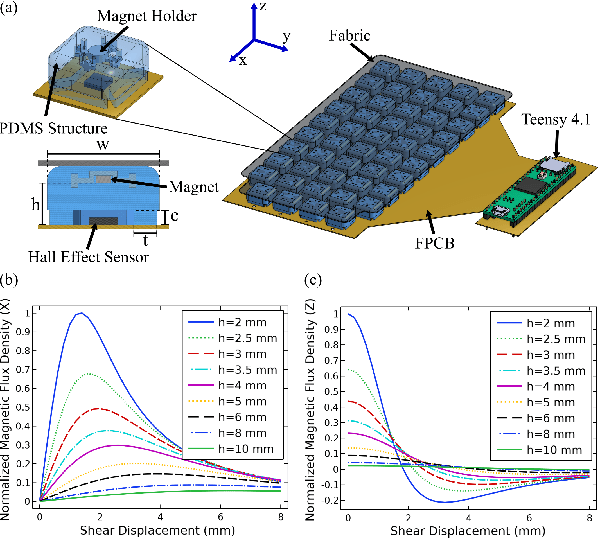

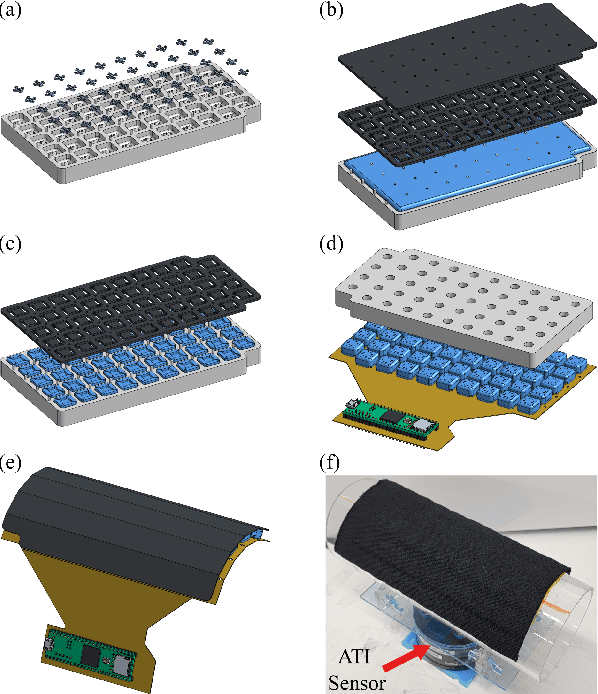

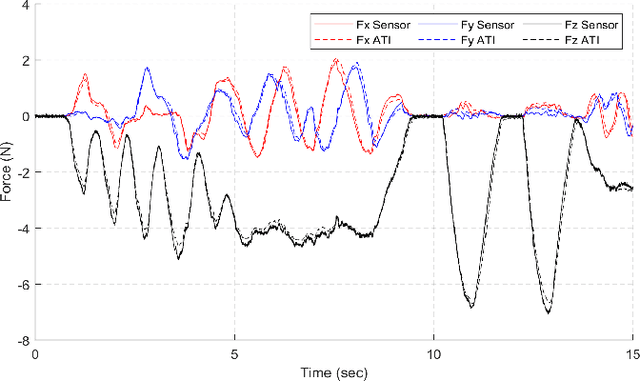

Deep Learning Classification of Touch Gestures Using Distributed Normal and Shear Force

Sep 30, 2022

Abstract: When humans socially interact with another agent (e.g., human, pet, or robot) through touch, they do so by applying varying amounts of force with different directions, locations, contact areas, and durations. While previous work on touch gesture recognition has focused on the spatio-temporal distribution of normal forces, we hypothesize that the addition of shear forces will permit more reliable classification. We present a soft, flexible skin with an array of tri-axial tactile sensors for the arm of a person or robot. We use it to collect data on 13 touch gesture classes through user studies and train a Convolutional Neural Network (CNN) to learn spatio-temporal features from the recorded data. The network achieved a recognition accuracy of 74% with normal and shear data, compared to 66% using only normal force data. Adding distributed shear data improved classification accuracy for 11 out of 13 touch gesture classes.
