Xuanyi Liu

Aligning LLM Uncertainty with Human Disagreement in Subjectivity Analysis

May 11, 2026

Abstract:Large language models for subjectivity analysis are typically trained with aggregated labels, which compress variations in human judgment into a single supervision signal. This paradigm overlooks the intrinsic uncertainty of low-agreement samples and often induces overconfident predictions, undermining reliability and generalization in complex subjective settings. In this work, we advocate uncertainty-aware subjectivity analysis, where models are expected to make predictions while expressing uncertainty that reflects human disagreement. To operationalize this perspective, we propose a two-phase Disagreement Perception and Uncertainty Alignment (DPUA) framework. Specifically, DPUA jointly models label prediction, rationale generation, and uncertainty expression under an uncertainty-aware setting. In the disagreement perception phase, adaptive decoupled learning enhances the model's sensitivity to disagreement-related cues while preserving task performance. In the uncertainty alignment phase, GRPO-based reward optimization further improves uncertainty-aware reasoning and aligns the model's confidence expression with the human disagreement distribution. Experiments on three subjectivity analysis tasks show that DPUA preserves task performance while better aligning model uncertainty with human disagreement, mitigating overconfidence on boundary samples, and improving out-of-distribution generalization.
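The abstract does not say how human disagreement is quantified, so the following is only an illustrative sketch. It assumes disagreement is measured as the normalized entropy of annotator votes and compares it with a model-stated uncertainty in [0, 1]. The function names and the absolute-gap measure are hypothetical and are not taken from DPUA.

```python
import math
from collections import Counter


def label_distribution(votes):
    """Turn a list of annotator votes into an empirical label distribution."""
    counts = Counter(votes)
    total = len(votes)
    return {label: n / total for label, n in counts.items()}


def normalized_entropy(dist, num_classes):
    """Shannon entropy of `dist`, scaled to [0, 1] by log(num_classes)."""
    if num_classes < 2:
        return 0.0
    h = -sum(p * math.log(p) for p in dist.values() if p > 0)
    return h / math.log(num_classes)


def alignment_gap(model_uncertainty, votes, num_classes):
    """Absolute gap between stated uncertainty and annotator disagreement.

    Assumption: both quantities live on the same [0, 1] scale.
    """
    disagreement = normalized_entropy(label_distribution(votes), num_classes)
    return abs(model_uncertainty - disagreement)


if __name__ == "__main__":
    # Unanimous annotators: an overconfident-looking answer is actually fine here.
    print(alignment_gap(0.0, ["pos", "pos", "pos", "pos"], num_classes=2))
    # Evenly split annotators: the same confident answer is maximally misaligned.
    print(alignment_gap(0.0, ["pos", "neg", "pos", "neg"], num_classes=2))
```

Under these assumptions, a smaller gap on low-agreement samples would correspond to the reduced overconfidence on boundary samples that the abstract reports.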

ARGUS: Policy-Adaptive Ad Governance via Evolving Reinforcement with Adversarial Umpiring

May 04, 2026

Abstract:Online advertising governance faces significant challenges due to the non-stationary nature of regulatory policies, where emerging mandates (e.g., restrictions on education or aesthetic anxiety) create severe label inconsistencies and reasoning ambiguities in historical datasets. In this paper, we propose ARGUS, a policy-adaptive governance system that enables evolving reinforcement through multi-agent adversarial umpiring. ARGUS addresses the sparsity of new policy data by employing a three-stage framework: (1) Policy Seeding for initial perception; (2) Adversarial Label Rectification, which utilizes a "Prosecutor-Defender-Umpire" architecture to resolve conflicts between stale labels and new mandates; and (3) Latent Knowledge Discovery, which employs a tripartite dialectical discussion to unearth sophisticated, "gray-area" violations. By leveraging RAG-enhanced policy knowledge and Chain-of-Thought synthesis as dynamic rewards for reinforcement learning, ARGUS synchronizes its reasoning pathways with evolving regulations. Extensive experiments on both industrial and public datasets demonstrate that ARGUS significantly outperforms traditional fine-tuning baselines, achieving superior policy-adaptive learning with minimal gold data.

StreamCacheVGGT: Streaming Visual Geometry Transformers with Robust Scoring and Hybrid Cache Compression

Apr 16, 2026

Abstract:Reconstructing dense 3D geometry from continuous video streams requires stable inference under a constant memory budget. Existing $O(1)$ frameworks primarily rely on a "pure eviction" paradigm, which suffers from significant information destruction due to binary token deletion and evaluation noise from localized, single-layer scoring. To address these bottlenecks, we propose StreamCacheVGGT, a training-free framework that reimagines cache management through two synergistic modules: Cross-Layer Consistency-Enhanced Scoring (CLCES) and Hybrid Cache Compression (HCC). CLCES mitigates activation noise by tracking token importance trajectories across the Transformer hierarchy, employing order-statistical analysis to identify sustained geometric salience. Leveraging these robust scores, HCC transcends simple eviction by introducing a three-tier triage strategy that merges moderately important tokens into retained anchors via nearest-neighbor assignment on the key-vector manifold. This approach preserves essential geometric context that would otherwise be lost. Extensive evaluations on five benchmarks (7-Scenes, NRGBD, ETH3D, Bonn, and KITTI) demonstrate that StreamCacheVGGT sets a new state-of-the-art, delivering superior reconstruction accuracy and long-term stability while strictly adhering to constant-cost constraints.
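The three-tier triage described in the abstract (keep, merge, evict) can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration: the tier sizes `n_keep` and `n_merge`, squared Euclidean distance between key vectors as the nearest-neighbor measure, and a running mean as the merge rule. The abstract does not specify these details, and the function name `hybrid_compress` is hypothetical.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def hybrid_compress(keys, values, scores, n_keep, n_merge):
    """Compress a key/value cache to exactly `n_keep` entries.

    Tier 1: the `n_keep` highest-scoring tokens are retained as anchors.
    Tier 2: the next `n_merge` tokens are merged into their nearest anchor.
    Tier 3: all remaining tokens are evicted.
    """
    if n_keep <= 0:
        return [], []

    # Rank token indices from most to least important.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    keep = order[:n_keep]
    merge = order[n_keep:n_keep + n_merge]

    new_keys = [list(keys[i]) for i in keep]
    new_values = [list(values[i]) for i in keep]
    counts = [1] * len(keep)

    for m in merge:
        # Assign the token to the anchor whose original key is closest.
        j = min(range(len(keep)), key=lambda a: sq_dist(keys[m], keys[keep[a]]))
        counts[j] += 1
        # Running-mean update (an assumed merge rule).
        new_keys[j] = [k + (x - k) / counts[j] for k, x in zip(new_keys[j], keys[m])]
        new_values[j] = [v + (x - v) / counts[j] for v, x in zip(new_values[j], values[m])]

    return new_keys, new_values


if __name__ == "__main__":
    keys = [[0.0, 0.0], [10.0, 10.0], [1.0, 1.0], [9.0, 9.0], [5.0, 0.0]]
    values = [[1.0], [2.0], [3.0], [4.0], [5.0]]
    scores = [0.9, 0.8, 0.5, 0.4, 0.1]
    print(hybrid_compress(keys, values, scores, n_keep=2, n_merge=2))
```

The output size depends only on `n_keep`, which is what keeps the cache at a constant budget. Unlike pure eviction, the middle tier still contributes to the retained anchors.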

Large Language Model for Discrete Optimization Problems: Evaluation and Step-by-step Reasoning

Mar 08, 2026

Abstract:This work investigated the capabilities of different models, including the Llama-3 series of models and ChatGPT, with different forms of expression in solving discrete optimization problems by testing on natural language datasets. In contrast to formal datasets with a limited scope of parameters, our dataset included a variety of problem types in discrete optimization and featured a wide range of parameter magnitudes, including instances with large parameter sets, integrated with augmented data. It aimed to (1) provide an overview of LLMs' ability on large-scale problems, (2) offer suggestions to those who want to solve discrete optimization problems automatically, and (3) serve as a benchmark for future research. These datasets included original, expanded, and augmented datasets. Among these three datasets, the original and augmented ones were intended for evaluation, while the expanded one may help fine-tune a new model. In the experiments, comparisons were made between strong and weak models, and between CoT and No-CoT methods, on various datasets. The results showed that stronger models performed better, as expected. Contrary to common belief, they also showed that the CoT technique was not always effective with respect to model capability, and that disordered datasets improved model performance on easy-to-understand problems, although sometimes with high variance, a manifestation of instability. Therefore, those who seek to improve the automatic resolution of discrete optimization problems are advised to consult the results, including the line charts presented in the Appendix, as well as the conclusions drawn in this study.

Unlocking the Power of SAM 2 for Few-Shot Segmentation

May 21, 2025

Abstract:Few-Shot Segmentation (FSS) aims to learn class-agnostic segmentation on few classes to segment arbitrary classes, but at the risk of overfitting. To address this, some methods use the well-learned knowledge of foundation models (e.g., SAM) to simplify the learning process. Recently, SAM 2 has extended SAM by supporting video segmentation, whose class-agnostic matching ability is useful to FSS. A simple idea is to encode support foreground (FG) features as memory, with which query FG features are matched and fused. Unfortunately, the FG objects in different frames of SAM 2's video data are always the same identity, while those in FSS are different identities, i.e., the matching step is incompatible. Therefore, we design Pseudo Prompt Generator to encode pseudo query memory, matching with query features in a compatible way. However, the memories can never be as accurate as the real ones, i.e., they are likely to contain incomplete query FG, and some unexpected query background (BG) features, leading to wrong segmentation. Hence, we further design Iterative Memory Refinement to fuse more query FG features into the memory, and devise a Support-Calibrated Memory Attention to suppress the unexpected query BG features in memory. Extensive experiments have been conducted on PASCAL-5$^i$ and COCO-20$^i$ to validate the effectiveness of our design, e.g., the 1-shot mIoU can be 4.2% better than the best baseline.

POPEN: Preference-Based Optimization and Ensemble for LVLM-Based Reasoning Segmentation

Apr 01, 2025

Abstract:Existing LVLM-based reasoning segmentation methods often suffer from imprecise segmentation results and hallucinations in their text responses. This paper introduces POPEN, a novel framework designed to address these issues and achieve improved results. POPEN includes a preference-based optimization method to finetune the LVLM, aligning it more closely with human preferences and thereby generating better text responses and segmentation results. Additionally, POPEN introduces a preference-based ensemble method for inference, which integrates multiple outputs from the LVLM using a preference-score-based attention mechanism for refinement. To better adapt to the segmentation task, we incorporate several task-specific designs in our POPEN framework, including a new approach for collecting segmentation preference data with a curriculum learning mechanism, and a novel preference optimization loss to refine the segmentation capability of the LVLM. Experiments demonstrate that our method achieves state-of-the-art performance in reasoning segmentation, exhibiting minimal hallucination in text responses and the highest segmentation accuracy compared to previous advanced methods like LISA and PixelLM. Project page is https://lanyunzhu.site/POPEN/

X as Supervision: Contending with Depth Ambiguity in Unsupervised Monocular 3D Pose Estimation

Nov 20, 2024

Abstract:Recent unsupervised methods for monocular 3D pose estimation have endeavored to reduce dependence on limited annotated 3D data, but most are solely formulated in 2D space, overlooking the inherent depth ambiguity issue. Due to the information loss in 3D-to-2D projection, multiple potential depths may exist, yet only some of them are plausible in human structure. To tackle depth ambiguity, we propose a novel unsupervised framework featuring a multi-hypothesis detector and multiple tailored pretext tasks. The detector extracts multiple hypotheses from a heatmap within a local window, effectively managing the multi-solution problem. Furthermore, the pretext tasks harness 3D human priors from the SMPL model to regularize the solution space of pose estimation, aligning it with the empirical distribution of 3D human structures. This regularization is partially achieved through a GCN-based discriminator within the discriminative learning, and is further complemented with synthetic images through rendering, ensuring plausible estimations. Consequently, our approach demonstrates state-of-the-art unsupervised 3D pose estimation performance on various human datasets. Further evaluations on data scale-up and one animal dataset highlight its generalization capabilities. Code will be available at https://github.com/Charrrrrlie/X-as-Supervision.

Hybrid Mamba for Few-Shot Segmentation

Sep 29, 2024

Abstract:Many few-shot segmentation (FSS) methods use cross attention to fuse support foreground (FG) into query features, despite its quadratic complexity. A recent advance, Mamba, can also capture intra-sequence dependencies well, yet its complexity is only linear. Hence, we aim to devise a cross (attention-like) Mamba to capture inter-sequence dependencies for FSS. A simple idea is to scan on support features to selectively compress them into the hidden state, which is then used as the initial hidden state to sequentially scan query features. Nevertheless, it suffers from (1) a support forgetting issue: query features will also gradually be compressed when scanning on them, so the support features in the hidden state keep reducing, and many query pixels cannot fuse sufficient support features; (2) an intra-class gap issue: query FG is essentially more similar to itself than to support FG, i.e., query may prefer to fuse not support features but its own features from the hidden state, yet the success of FSS relies on the effective use of support information. To tackle them, we design a hybrid Mamba network (HMNet), including (1) a support recapped Mamba to periodically recap the support features when scanning query, so the hidden state can always contain rich support information; (2) a query intercepted Mamba to forbid the mutual interactions among query pixels, and encourage them to fuse more support features from the hidden state. Consequently, the support information is better utilized, leading to better performance. Extensive experiments have been conducted on two public benchmarks, showing the superiority of HMNet. The code is available at https://github.com/Sam1224/HMNet.

Proposal-Level Unsupervised Domain Adaptation for Open World Unbiased Detector

Nov 04, 2023

Abstract:Open World Object Detection (OWOD) combines open-set object detection with incremental learning capabilities to handle the challenge of the open and dynamic visual world. Existing works assume that a foreground predictor trained on the seen categories can be directly transferred to identify the unseen categories' locations by selecting the top-k most confident foreground predictions. However, the assumption is hardly valid in practice. This is because the predictor is inevitably biased to the known categories, and fails under the shift in the appearance of the unseen categories. In this work, we aim to build an unbiased foreground predictor by re-formulating the task under Unsupervised Domain Adaptation, where the current biased predictor helps form the domains: the seen object locations and confident background locations as the source domain, and the rest ambiguous ones as the target domain. Then, we adopt the simple and effective self-training method to learn a predictor based on the domain-invariant foreground features, hence achieving unbiased prediction robust to the shift in appearance between the seen and unseen categories. Our approach's pipeline can adapt to various detection frameworks and UDA methods, empirically validated by OWOD evaluation, where we achieve state-of-the-art performance.

Ultrafast Parallel LiDAR with Time-encoding and Spectral Scanning: Breaking the Time-of-flight Limit

Mar 09, 2021

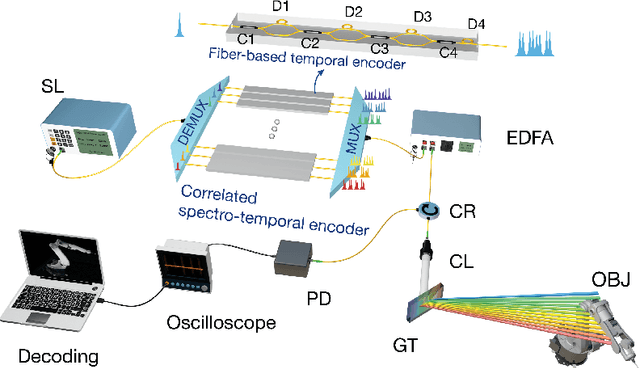

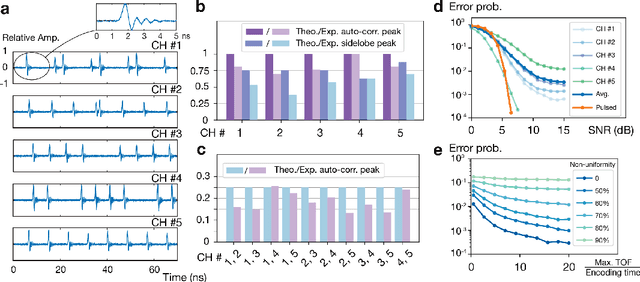

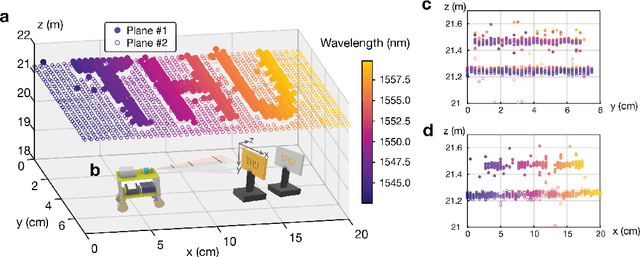

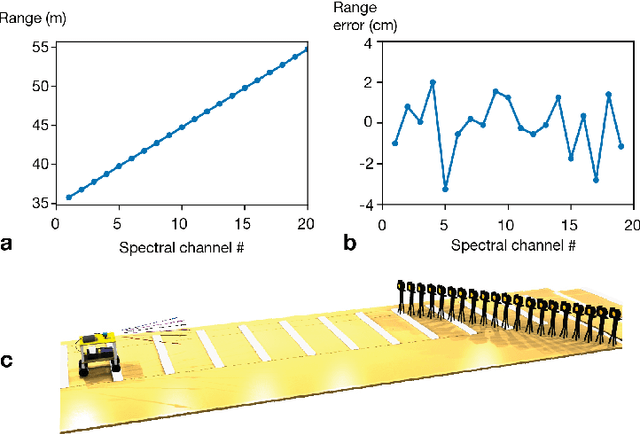

Abstract:Light detection and ranging (LiDAR) has been widely used in autonomous driving and large-scale manufacturing. Although state-of-the-art scanning LiDAR can perform long-range three-dimensional imaging, the frame rate is limited by both round-trip delay and the beam steering speed, hindering the development of high-speed autonomous vehicles. For hundred-meter level ranging applications, a several-time speedup is highly desirable. Here, we uniquely combine fiber-based encoders with wavelength-division multiplexing devices to implement all-optical time-encoding on the illumination light. Using this method, parallel detection and fast inertia-free spectral scanning can be achieved simultaneously with single-pixel detection. As a result, the frame rate of a scanning LiDAR can be multiplied with scalability. We demonstrate a 4.4-fold speedup for a maximum 75-m detection range, compared with a time-of-flight-limited laser ranging system. This approach has the potential to improve the velocity of LiDAR-based autonomous vehicles to the regime of hundred kilometers per hour and open up a new paradigm for ultrafast-frame-rate LiDAR imaging.
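The time-of-flight limit mentioned in the abstract can be made concrete with a short back-of-the-envelope calculation. This sketch assumes propagation at the vacuum speed of light and a baseline that waits for each pulse to return before emitting the next; only the 75 m range and the 4.4-fold factor come from the abstract. The resulting point rates are illustrative arithmetic, not figures reported by the authors.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def round_trip_time(range_m):
    """Time for a pulse to reach a target at `range_m` and return, in seconds."""
    return 2.0 * range_m / C


def tof_limited_rate(range_m):
    """Maximum points per second when only one pulse is in flight at a time."""
    return 1.0 / round_trip_time(range_m)


if __name__ == "__main__":
    max_range = 75.0  # metres, from the abstract
    speedup = 4.4     # fold, from the abstract

    t = round_trip_time(max_range)
    base = tof_limited_rate(max_range)
    print(f"round trip: {t * 1e9:.1f} ns")
    print(f"ToF-limited rate: {base / 1e6:.2f} M points/s")
    print(f"with {speedup}x speedup: {speedup * base / 1e6:.2f} M points/s")
```

At 75 m the round trip takes about 0.5 microseconds, so a time-of-flight-limited system tops out near 2 million points per second under these assumptions.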
