"Information": models, code, and papers

Understanding Transformer Memorization Recall Through Idioms

Oct 11, 2022

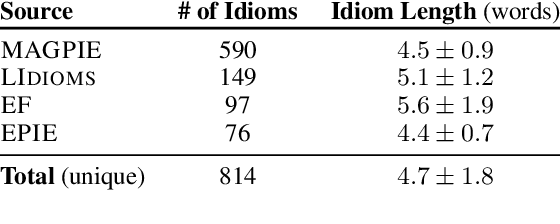

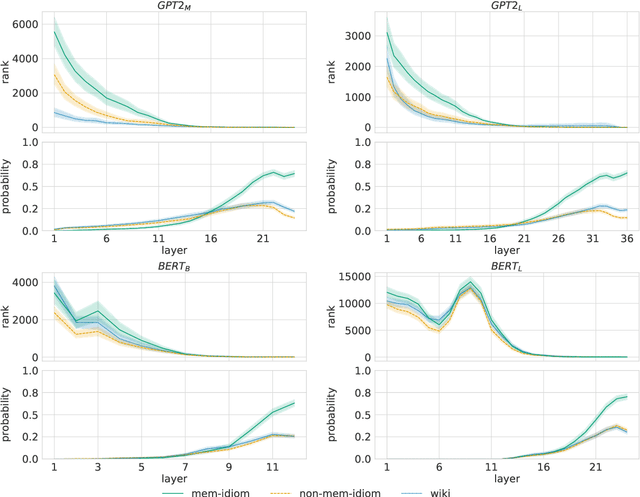

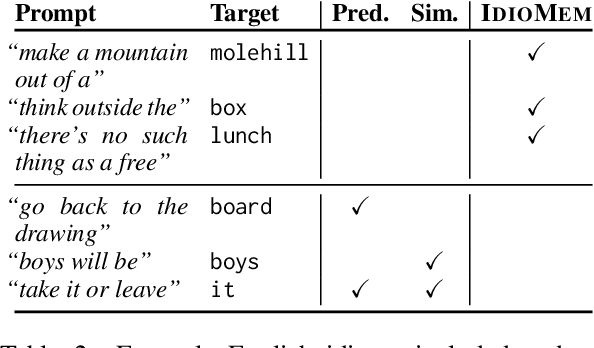

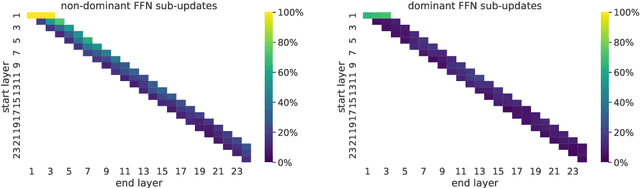

To produce accurate predictions, language models (LMs) must balance between generalization and memorization. Yet, little is known about the mechanism by which transformer LMs employ their memorization capacity. When does a model decide to output a memorized phrase, and how is this phrase then retrieved from memory? In this work, we offer the first methodological framework for probing and characterizing recall of memorized sequences in transformer LMs. First, we lay out criteria for detecting model inputs that trigger memory recall, and propose idioms as inputs that fulfill these criteria. Next, we construct a dataset of English idioms and use it to compare model behavior on memorized vs. non-memorized inputs. Specifically, we analyze the internal prediction construction process by interpreting the model's hidden representations as a gradual refinement of the output probability distribution. We find that across different model sizes and architectures, memorized predictions are a two-step process: early layers promote the predicted token to the top of the output distribution, and upper layers increase model confidence. This suggests that memorized information is stored and retrieved in the early layers of the network. Last, we demonstrate the utility of our methodology beyond idioms in memorized factual statements. Overall, our work makes a first step towards understanding memory recall, and provides a methodological basis for future studies of transformer memorization.
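The layer-by-layer reading described in this abstract can be illustrated with a minimal sketch. It assumes each layer's hidden state can be projected straight through an output embedding matrix to get a token distribution; the names `trace_prediction`, `hidden_states`, and `unembed` are hypothetical, the inputs are random rather than taken from a real model, and any final normalization a real LM applies before the output projection is omitted.

```python
import numpy as np

def softmax(z):
    # Numerically stable softmax over the last axis.
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def trace_prediction(hidden_states, unembed):
    """Track the final predicted token across layers.

    hidden_states: (num_layers, d_model) hidden vector at one position, per layer
    unembed:       (d_model, vocab_size) output projection (assumed shared by all layers)
    """
    probs = softmax(hidden_states @ unembed)        # (num_layers, vocab_size)
    final_token = int(probs[-1].argmax())           # the model's actual prediction
    # Rank 0 means the token is already at the top of that layer's distribution.
    ranks = (probs > probs[:, [final_token]]).sum(axis=1)
    confidences = probs[:, final_token]             # probability assigned per layer
    return final_token, ranks, confidences

# Random stand-ins for a 12-layer model with d_model=16 and a 50-token vocabulary.
rng = np.random.default_rng(0)
hidden = rng.normal(size=(12, 16))
unembed = rng.normal(size=(16, 50))
token, ranks, conf = trace_prediction(hidden, unembed)
```

Under this reading, the two-step pattern reported in the abstract would show up as `ranks` dropping to 0 in early layers while `conf` keeps rising in the upper layers.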

Bayesian Optimization with Informative Covariance

Aug 04, 2022

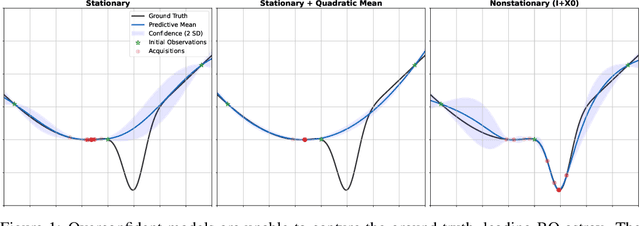

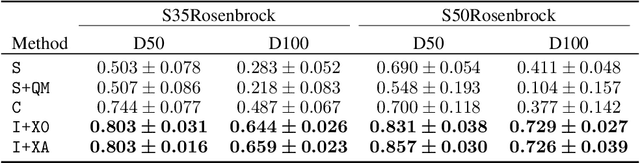

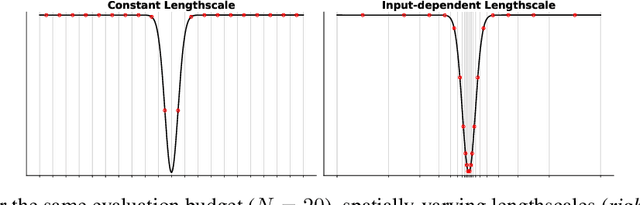

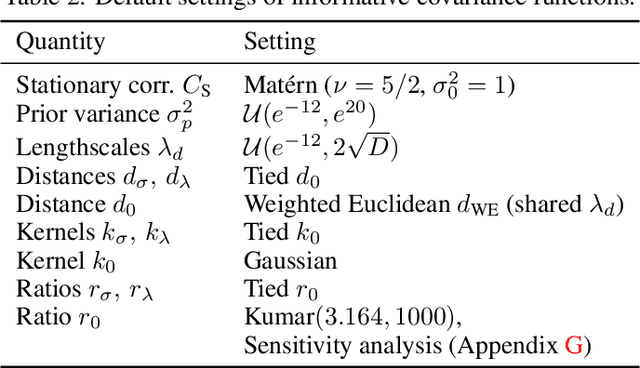

Bayesian Optimization is a methodology for global optimization of unknown and expensive objectives. It combines a surrogate Bayesian regression model with an acquisition function to decide where to evaluate the objective. Typical regression models are Gaussian processes with stationary covariance functions, which, however, are unable to express prior input-dependent information, in particular information about possible locations of the optimum. The ubiquity of stationary models has led to the common practice of exploiting prior information via informative mean functions. In this paper, we highlight that these models can lead to poor performance, especially in high dimensions. We propose novel informative covariance functions that leverage nonstationarity to encode preferences for certain regions of the search space and adaptively promote local exploration during the optimization. We demonstrate that they can increase the sample efficiency of the optimization in high dimensions, even under weak prior information.

MechRetro is a chemical-mechanism-driven graph learning framework for interpretable retrosynthesis prediction and pathway planning

Oct 06, 2022

Leveraging artificial intelligence for automatic retrosynthesis speeds up organic pathway planning in digital laboratories. However, existing deep learning approaches are unexplainable "black boxes" that offer few insights, which notably limits their application in real retrosynthesis scenarios. Here, we propose MechRetro, a chemical-mechanism-driven graph learning framework for interpretable retrosynthetic prediction and pathway planning, which learns several retrosynthetic actions to simulate a reverse reaction via elaborate self-adaptive joint learning. By integrating chemical knowledge as prior information, we design a novel Graph Transformer architecture to adaptively learn discriminative and chemically meaningful molecule representations, highlighting its strong capacity for molecule feature representation learning. We demonstrate that MechRetro outperforms the state-of-the-art approaches for retrosynthetic prediction by a large margin on large-scale benchmark datasets. Extending MechRetro to multi-step retrosynthesis analysis, we identify efficient synthetic routes via an interpretable reasoning mechanism, leading to a better understanding in the realm of knowledgeable synthetic chemists. We also showcase that MechRetro discovers a novel pathway for protokylol, along with energy scores for uncertainty assessment, broadening its applicability to practical scenarios. Overall, we expect MechRetro to provide meaningful insights for high-throughput automated organic synthesis in drug discovery.

COMPS: Conceptual Minimal Pair Sentences for testing Property Knowledge and Inheritance in Pre-trained Language Models

Oct 06, 2022

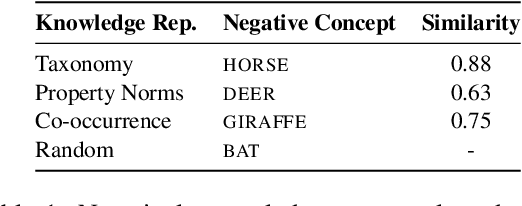

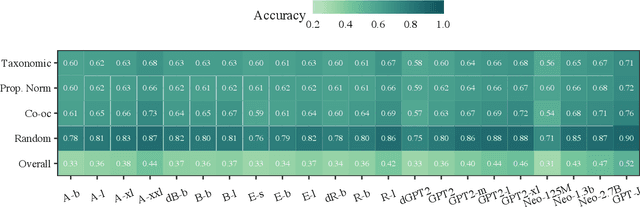

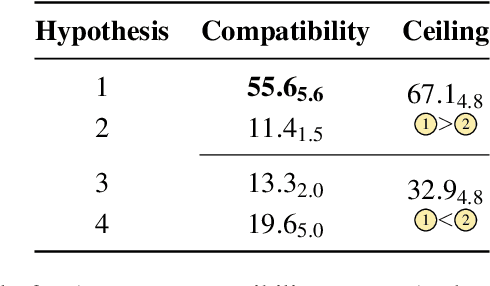

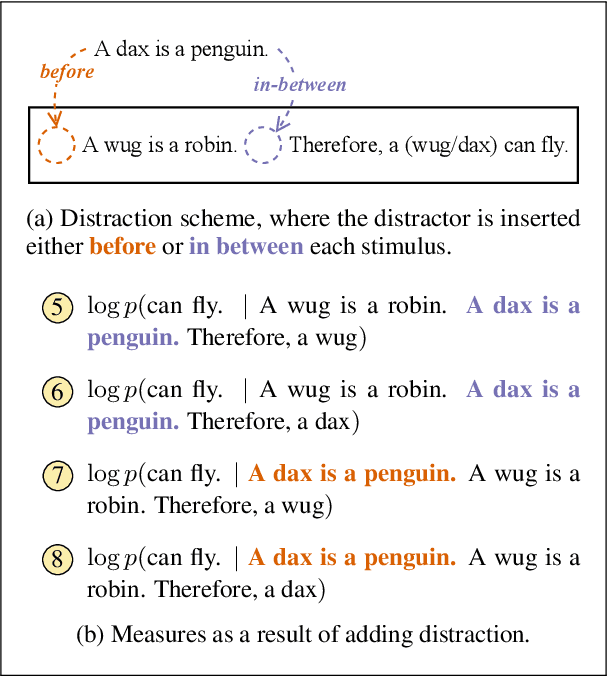

A characteristic feature of human semantic memory is its ability to not only store and retrieve the properties of concepts observed through experience, but to also facilitate the inheritance of properties (can breathe) from superordinate concepts (animal) to their subordinates (dog) -- i.e. demonstrate property inheritance. In this paper, we present COMPS, a collection of minimal pair sentences that jointly tests pre-trained language models (PLMs) on their ability to attribute properties to concepts and their ability to demonstrate property inheritance behavior. Analyses of 22 different PLMs on COMPS reveal that they can easily distinguish between concepts on the basis of a property when they are trivially different, but find it relatively difficult when concepts are related on the basis of nuanced knowledge representations. Furthermore, we find that PLMs can demonstrate behavior consistent with property inheritance to a great extent, but fail in the presence of distracting information, which decreases the performance of many models, sometimes even below chance. This lack of robustness in demonstrating simple reasoning raises important questions about PLMs' capacity to make correct inferences even when they appear to possess the prerequisite knowledge.
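A minimal-pair evaluation of the kind described here is usually scored by checking whether the model prefers the acceptable sentence of each pair. The sketch below assumes a scoring function that returns a sentence log-probability; `minimal_pair_accuracy`, the example sentences, and the toy scores are hypothetical illustrations, not items or results from COMPS.

```python
from typing import Callable, Iterable, Tuple

def minimal_pair_accuracy(
    pairs: Iterable[Tuple[str, str]],
    score: Callable[[str], float],
) -> float:
    """Fraction of (acceptable, unacceptable) pairs where the acceptable sentence scores higher."""
    results = [score(good) > score(bad) for good, bad in pairs]
    return sum(results) / len(results)

# Made-up log-probabilities standing in for a real language model scorer.
toy_scores = {
    "A dog can breathe.": -2.0,
    "A rock can breathe.": -9.0,
    "A robin can fly.": -5.0,
    "A penguin can fly.": -4.0,
}

pairs = [
    ("A dog can breathe.", "A rock can breathe."),  # concepts that differ trivially
    ("A robin can fly.", "A penguin can fly."),     # closely related concepts
]

accuracy = minimal_pair_accuracy(pairs, toy_scores.__getitem__)
```

With two sentences per pair, chance performance under this metric is 0.5, which is the reference point for the "below chance" observation in the abstract.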

Small Character Models Match Large Word Models for Autocomplete Under Memory Constraints

Oct 06, 2022

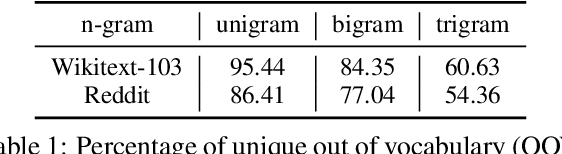

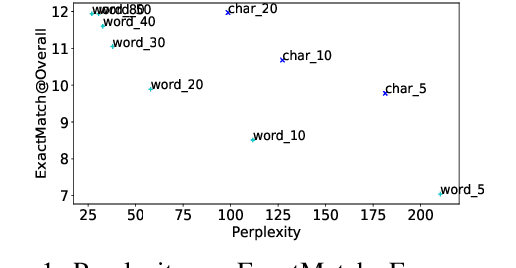

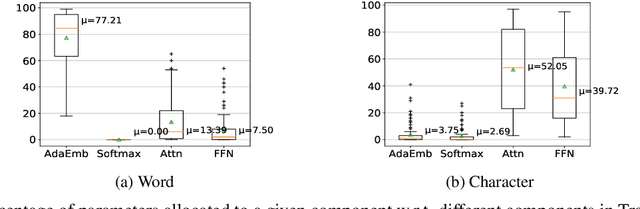

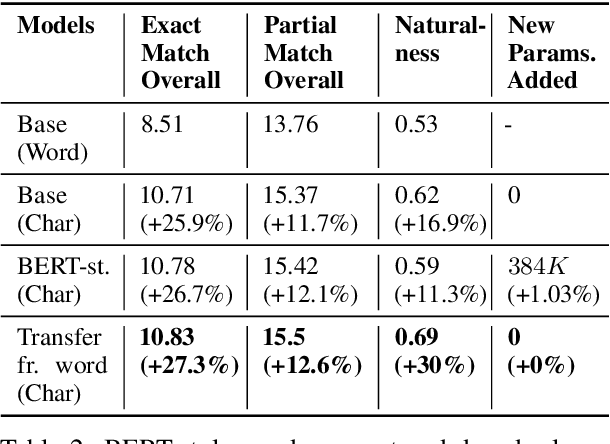

Autocomplete is a task where the user inputs a piece of text, termed the prompt, on which the model conditions to generate a semantically coherent continuation. Existing work on this task has primarily focused on datasets (e.g., email, chat) with high-frequency user prompt patterns (or focused prompts), where word-based language models have been quite effective. In this work, we study the more challenging setting of low-frequency user prompt patterns (or broad prompts, e.g., a prompt about the 93rd Academy Awards) and demonstrate the effectiveness of character-based language models. We study this problem under memory-constrained settings (e.g., edge devices and smartphones), where character-based representation is effective in reducing the overall model size (in terms of parameters). We use the WikiText-103 benchmark to simulate broad prompts and demonstrate that character models rival word models in exact match accuracy for the autocomplete task when controlled for model size. For instance, we show that a 20M parameter character model performs similarly to an 80M parameter word model in the vanilla setting. We further propose novel methods to improve character models by incorporating inductive bias in the form of compositional information and representation transfer from large word models.

Audio-Visual Face Reenactment

Oct 06, 2022

This work proposes a novel method to generate realistic talking head videos using audio and visual streams. We animate a source image by transferring head motion from a driving video using a dense motion field generated from learnable keypoints. We improve the quality of lip sync using audio as an additional input, helping the network to attend to the mouth region. We use additional priors from face segmentation and face mesh to improve the structure of the reconstructed faces. Finally, we improve the visual quality of the generations by incorporating a carefully designed identity-aware generator module. The identity-aware generator takes the source image and the warped motion features as input to generate a high-quality output with fine-grained details. Our method produces state-of-the-art results and generalizes well to unseen faces, languages, and voices. We comprehensively evaluate our approach using multiple metrics and outperform current techniques both qualitatively and quantitatively. Our work opens up several applications, including enabling low bandwidth video calls. We release a demo video and additional information at http://cvit.iiit.ac.in/research/projects/cvit-projects/avfr.

Q-LSTM Language Model -- Decentralized Quantum Multilingual Pre-Trained Language Model for Privacy Protection

Oct 06, 2022

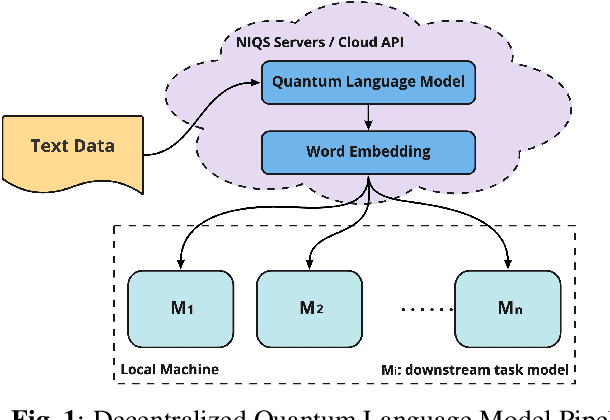

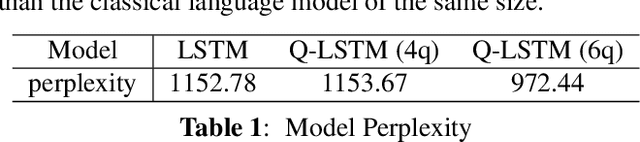

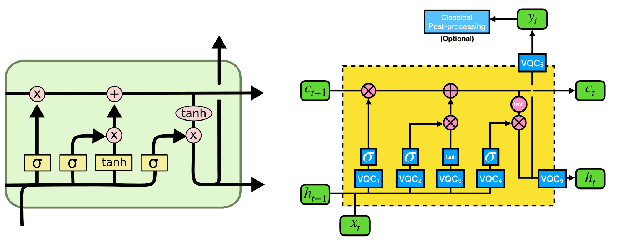

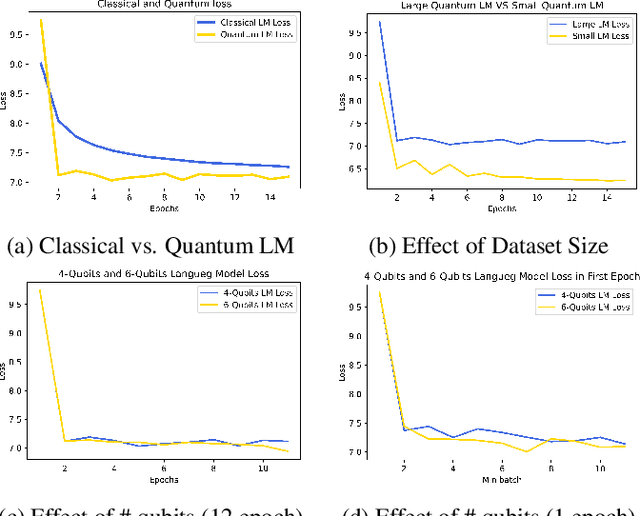

Large-scale language models are trained on a massive amount of natural language data that might encode or reflect our private information. With careful manipulation, malicious agents can reverse engineer the training data even if data sanitation and differential privacy algorithms were involved in the pre-training process. In this work, we propose a decentralized training framework to address privacy concerns in training large-scale language models. The framework consists of a cloud quantum language model built with Variational Quantum Classifiers (VQC) for sentence embedding and a local Long Short-Term Memory (LSTM) model. We use both intrinsic evaluation (loss, perplexity) and extrinsic evaluation (a downstream sentiment analysis task) to evaluate the performance of our quantum language model. Our quantum model was comparable to its classical counterpart on all the above metrics. We also perform ablation studies to look into the effect of the size of the VQC and the size of the training data on the performance of the model. Our approach solves privacy concerns without sacrificing downstream task performance. The intractability of quantum operations on classical hardware ensures the confidentiality of the training data and makes it impossible for any adversary to recover it.

GNSS/MEMS-INS Integration for Drone Navigation using EKF on Lie Groups

Oct 06, 2022

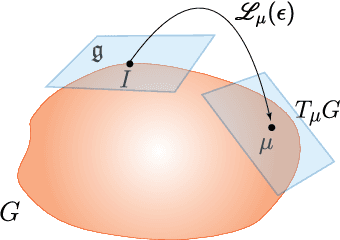

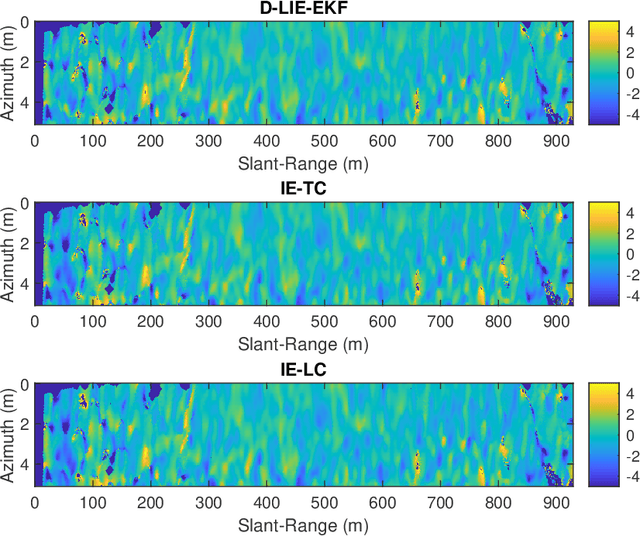

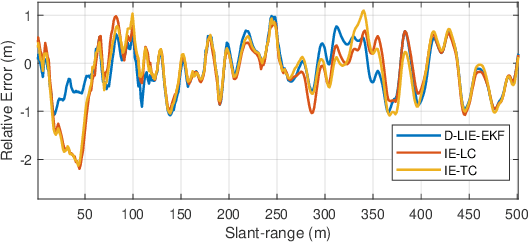

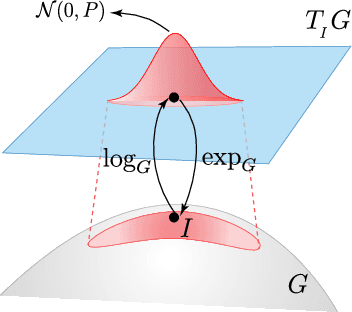

Building upon the theory of Kalman Filtering on Lie Groups, this paper describes an Extended Kalman Filter and Smoother for Loosely Coupled Integration of GNSS/INS tailored for post-processing applications. The approach employs a dynamic model on a matrix Lie Group that aggregates position, velocity, attitude, and the IMU biases as a single element of a Lie group. The development was motivated by a drone-borne Differential Interferometric SAR (DinSAR) application, which requires high-precision navigation information for short-flight missions using low-cost MEMS sensors. The filter and the Rauch-Tung-Striebel (RTS) smoother are both implemented and validated. The paper also presents a novel algorithm to initialize the heading value as an alternative to gyro-compassing or magnetometer-based alignments. The Mahalanobis Distance and the $\chi^2$-test are employed during the filter update step to address the practical issue of outlier rejection for the GNSS measurements. The paper uses synthetic data to compare classic navigation schemes based on multiplicative quaternions and Euler angles. Finally, real data experiments demonstrate that the Kalman Filter based on Lie Groups performs better DinSAR processing than state-of-the-art commercial software.
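The outlier-rejection step mentioned in this abstract can be sketched as a standard innovation gate. The example assumes a plain vector innovation with a linear measurement matrix, not the Lie-group error definition used in the paper; `gate_measurement`, the matrices, and the 0.99 confidence level are hypothetical choices for illustration.

```python
import numpy as np

# 0.99 quantile of the chi-square distribution with 3 degrees of freedom
# (one per GNSS position axis); the confidence level is an assumed choice.
CHI2_99_3DOF = 11.345

def gate_measurement(z, z_pred, H, P, R, threshold=CHI2_99_3DOF):
    """Return (accept, d2), where d2 is the squared Mahalanobis distance of the innovation.

    z, z_pred: measured and predicted measurement vectors, shape (m,)
    H:         measurement Jacobian, shape (m, n)
    P:         prior state covariance, shape (n, n)
    R:         measurement noise covariance, shape (m, m)
    """
    innovation = z - z_pred
    S = H @ P @ H.T + R                                   # innovation covariance
    d2 = float(innovation @ np.linalg.solve(S, innovation))
    return d2 <= threshold, d2

# Toy setup: 3-D position state observed directly, so S = 5 * I.
H = np.eye(3)
P = 4.0 * np.eye(3)
R = 1.0 * np.eye(3)
z_pred = np.zeros(3)

ok_good, d2_good = gate_measurement(np.array([1.0, 1.0, 1.0]), z_pred, H, P, R)
ok_bad, d2_bad = gate_measurement(np.array([10.0, 0.0, 0.0]), z_pred, H, P, R)
```

A measurement that fails the gate would simply be skipped in the update step, so a single bad GNSS fix does not pull the state estimate away.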

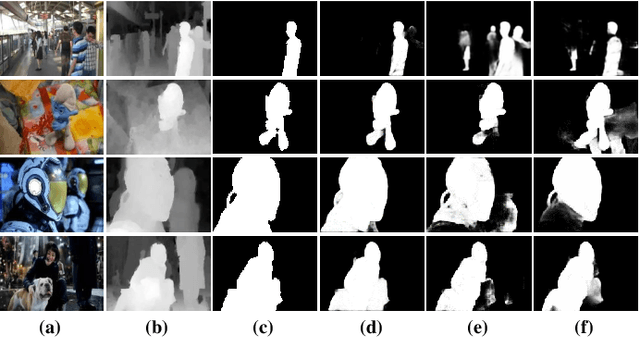

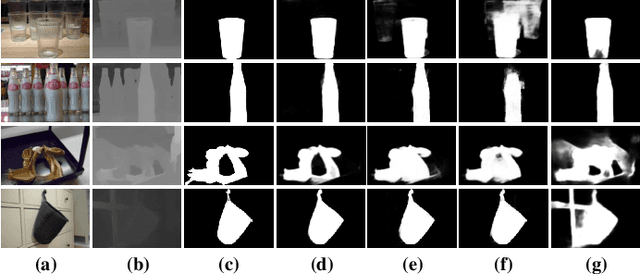

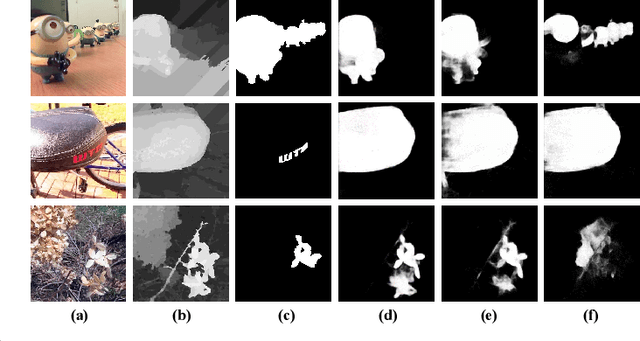

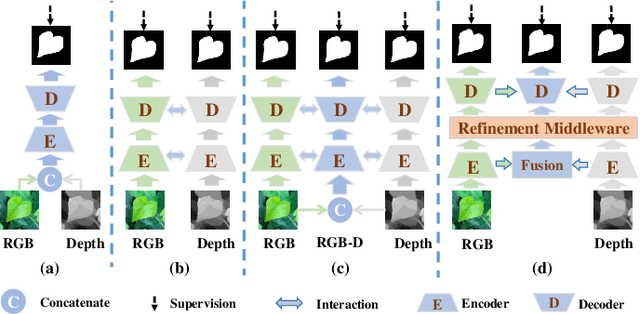

CIR-Net: Cross-modality Interaction and Refinement for RGB-D Salient Object Detection

Oct 06, 2022

Focusing on the issue of how to effectively capture and utilize cross-modality information in the RGB-D salient object detection (SOD) task, we present a convolutional neural network (CNN) model, named CIR-Net, based on novel cross-modality interaction and refinement. For the cross-modality interaction, 1) a progressive attention guided integration unit is proposed to sufficiently integrate RGB-D feature representations in the encoder stage, and 2) a convergence aggregation structure is proposed, which flows the RGB and depth decoding features into the corresponding RGB-D decoding streams via an importance gated fusion unit in the decoder stage. For the cross-modality refinement, we insert a refinement middleware structure between the encoder and the decoder, in which the RGB, depth, and RGB-D encoder features are further refined by successively using a self-modality attention refinement unit and a cross-modality weighting refinement unit. Finally, with the gradually refined features, we predict the saliency map in the decoder stage. Extensive experiments on six popular RGB-D SOD benchmarks demonstrate that our network outperforms the state-of-the-art saliency detectors both qualitatively and quantitatively.

Robust field-level inference with dark matter halos

Sep 14, 2022

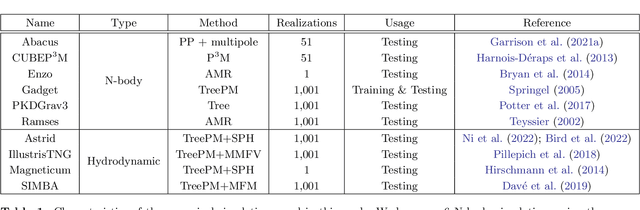

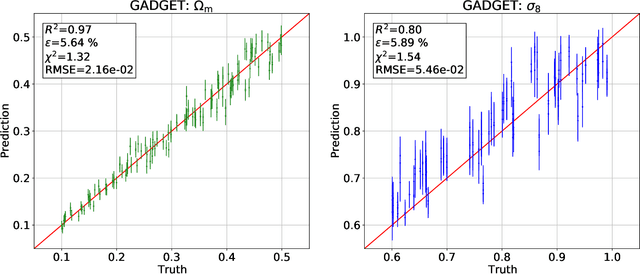

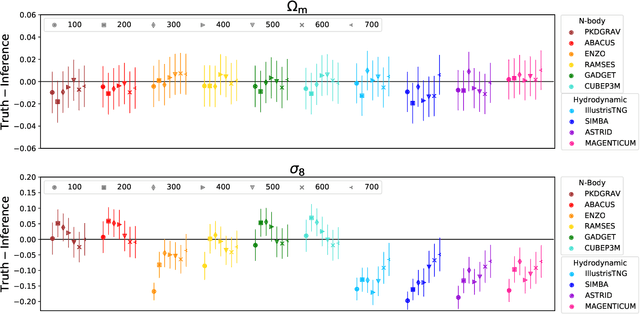

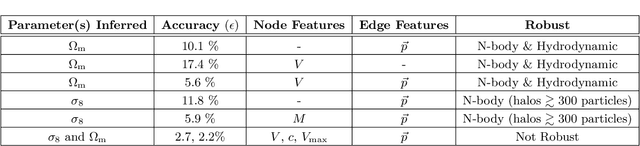

We train graph neural networks on halo catalogues from Gadget N-body simulations to perform field-level likelihood-free inference of cosmological parameters. The catalogues contain $\lesssim$5,000 halos with masses $\gtrsim 10^{10}~h^{-1}M_\odot$ in a periodic volume of $(25~h^{-1}{\rm Mpc})^3$; every halo in the catalogue is characterized by several properties such as position, mass, velocity, concentration, and maximum circular velocity. Our models, built to be permutationally, translationally, and rotationally invariant, do not impose a minimum scale on which to extract information and are able to infer the values of $\Omega_{\rm m}$ and $\sigma_8$ with a mean relative error of $\sim6\%$, when using positions plus velocities and positions plus masses, respectively. More importantly, we find that our models are very robust: they can infer the values of $\Omega_{\rm m}$ and $\sigma_8$ when tested using halo catalogues from thousands of N-body simulations run with five different N-body codes: Abacus, CUBEP$^3$M, Enzo, PKDGrav3, and Ramses. Surprisingly, the model trained to infer $\Omega_{\rm m}$ also works when tested on thousands of state-of-the-art CAMELS hydrodynamic simulations run with four different codes and subgrid physics implementations. Using halo properties such as concentration and maximum circular velocity allows our models to extract more information, at the expense of breaking the robustness of the models. This may happen because the different N-body codes are not converged on the relevant scales corresponding to these parameters.
