Tian Qiu

Theoretical and Empirical Study of Spatial Power Focusing Effect for Sparse Arrays at Terahertz Band

Nov 19, 2025

Abstract: This work investigates the spatial power focusing effect of large-scale sparse arrays in the terahertz (THz) band, combining theoretical analysis with experimental validation. Specifically, based on a Green's function channel model, we analyze the power distribution along the $z$-axis and derive a closed-form expression that characterizes the focusing effect. Furthermore, the factors influencing the focusing effect, including phase noise and positional deviations, are theoretically analyzed and numerically simulated. Finally, a 300 GHz measurement platform based on a vector network analyzer (VNA) is constructed for experimental validation. The measurement results closely agree with the theoretical simulation results, confirming the spatial power focusing effect for sparse arrays.

Mutual Coupling Aware Channel Estimation for RIS-Aided Multi-User mmWave Systems

Nov 11, 2025

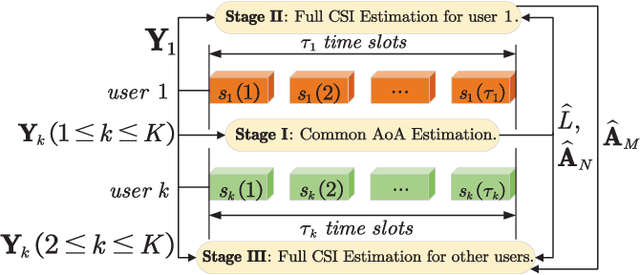

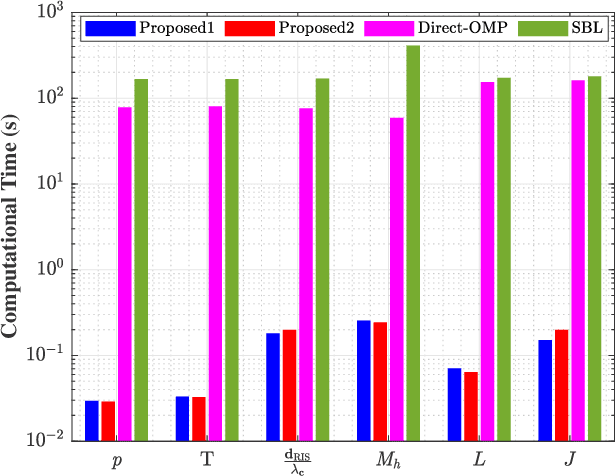

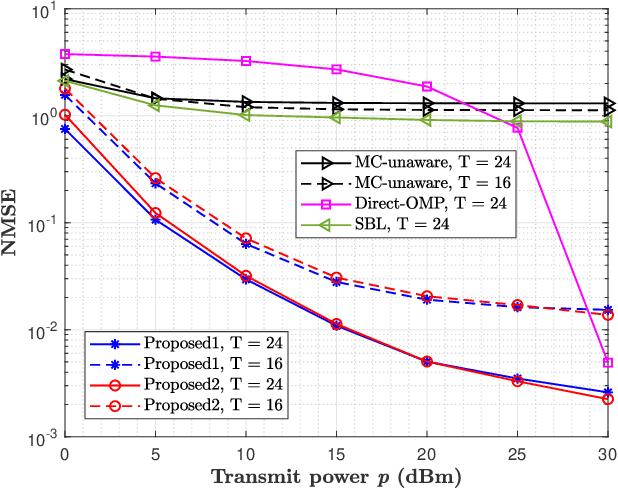

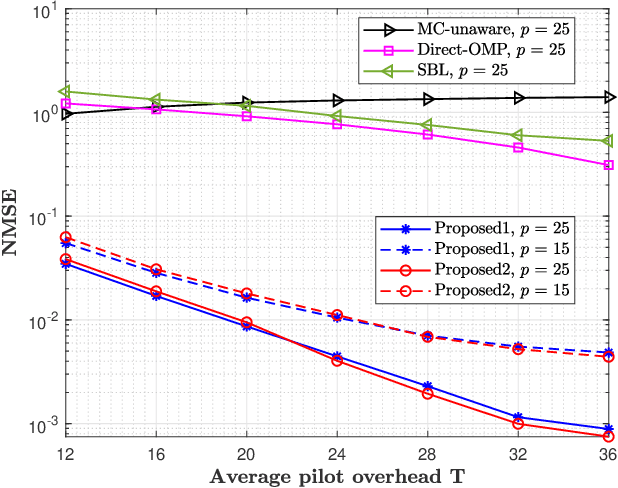

Abstract: This paper proposes a three-stage uplink channel estimation protocol for reconfigurable intelligent surface (RIS)-aided multi-user (MU) millimeter-wave (mmWave) multiple-input single-output (MISO) systems, where both the base station (BS) and the RIS are equipped with uniform planar arrays (UPAs). The proposed approach explicitly accounts for the mutual coupling (MC) effect, modeled via scattering-parameter multiport network theory. In Stage I, a dimension-reduced subspace-based method is proposed to estimate the common angle of arrival (AoA) at the BS using the received signals of all users. In Stage II, MC-aware cascaded channel estimation is performed for a typical user. The equivalent measurement vectors for each cascaded path are extracted, and the reference column is reconstructed using a compressed sensing (CS)-based approach. By leveraging the structure of the cascaded channel, the reference column is rearranged to estimate the AoA at the RIS, thereby reducing the computational complexity of estimating the other columns. Additionally, the common angle of departure (AoD) at the RIS is obtained in this stage, which significantly reduces the pilot overhead for estimating the cascaded channels of the other users in Stage III. The RIS phase shift training matrix is designed to optimize performance in the presence of MC and outperforms a random phase scheme. Simulation results validate that the proposed method outperforms MC-unaware and existing approaches in terms of estimation accuracy and pilot efficiency.
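The abstract does not spell out its dimension-reduced subspace method. The sketch below therefore shows only the generic idea such methods build on: classic MUSIC on a hypothetical half-wavelength uniform linear array, a 1D simplification of the UPA, where snapshots pooled from all users share one common AoA. The array size, snapshot count, noise level, and function names are assumptions for illustration.

```python
import numpy as np

def ula_steering(n_ant, theta_rad):
    """Half-wavelength ULA steering vector: a[n] = exp(j*pi*n*sin(theta))."""
    return np.exp(1j * np.pi * np.arange(n_ant) * np.sin(theta_rad))

def music_single_aoa(Y, grid_deg):
    """Estimate one AoA (degrees) from snapshots Y (n_ant x n_snapshots) with MUSIC."""
    n_ant, n_snap = Y.shape
    R = Y @ Y.conj().T / n_snap                # sample covariance (Hermitian)
    _, eigvecs = np.linalg.eigh(R)             # eigenvalues returned in ascending order
    En = eigvecs[:, : n_ant - 1]               # noise subspace, assuming a single source
    spectrum = np.array([
        1.0 / np.linalg.norm(En.conj().T @ ula_steering(n_ant, np.deg2rad(g))) ** 2
        for g in grid_deg
    ])
    return grid_deg[np.argmax(spectrum)], spectrum

# Illustrative scenario: 32 antennas, 200 pooled snapshots, true AoA of 20 degrees.
rng = np.random.default_rng(0)
n_ant, n_snap, true_deg = 32, 200, 20.0
a = ula_steering(n_ant, np.deg2rad(true_deg))
s = (rng.standard_normal(n_snap) + 1j * rng.standard_normal(n_snap)) / np.sqrt(2)
noise = 0.1 * (rng.standard_normal((n_ant, n_snap)) + 1j * rng.standard_normal((n_ant, n_snap)))
Y = np.outer(a, s) + noise
grid = np.linspace(-90.0, 90.0, 3601)
est_deg, _ = music_single_aoa(Y, grid)
```

Pooling snapshots from every user sharpens the covariance estimate for the shared AoA. That is the intuition behind using all users' received signals in Stage I, although the paper's dimension reduction for the UPA is not reproduced here.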

PreResQ-R1: Towards Fine-Grained Rank-and-Score Reinforcement Learning for Visual Quality Assessment via Preference-Response Disentangled Policy Optimization

Nov 07, 2025

Abstract: Visual Quality Assessment (QA) seeks to predict human perceptual judgments of visual fidelity. While recent multimodal large language models (MLLMs) show promise in reasoning about image and video quality, existing approaches mainly rely on supervised fine-tuning or rank-only objectives, resulting in shallow reasoning, poor score calibration, and limited cross-domain generalization. We propose PreResQ-R1, a Preference-Response Disentangled Reinforcement Learning framework that unifies absolute score regression and relative ranking consistency within a single reasoning-driven optimization scheme. Unlike prior QA methods, PreResQ-R1 introduces a dual-branch reward formulation that separately models intra-sample response coherence and inter-sample preference alignment, optimized via Group Relative Policy Optimization (GRPO). This design encourages fine-grained, stable, and interpretable chain-of-thought reasoning about perceptual quality. To extend beyond static imagery, we further design a global-temporal and local-spatial data flow strategy for Video Quality Assessment (VQA). Remarkably, with reinforcement fine-tuning on only 6K images and 28K videos, PreResQ-R1 achieves state-of-the-art results across 10 IQA and 5 VQA benchmarks under both SRCC and PLCC metrics, surpassing prior methods on the IQA task by margins of 5.30% (SRCC) and 2.15% (PLCC). Beyond quantitative gains, it produces human-aligned reasoning traces that reveal the perceptual cues underlying quality judgments. Code and model are available.
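The abstract does not define PreResQ-R1's dual-branch rewards. The sketch below therefore covers only the GRPO step it names: each sampled response's reward is standardized against the other responses drawn for the same prompt. The reward values are made up for illustration, and `eps` is an assumed numerical stabilizer.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: standardize each reward within its own group.

    rewards: array of shape (num_prompts, group_size), one row per prompt and
    one column per sampled response for that prompt.
    """
    r = np.asarray(rewards, dtype=float)
    mean = r.mean(axis=1, keepdims=True)
    std = r.std(axis=1, keepdims=True)
    return (r - mean) / (std + eps)

# Illustrative rewards for two prompts, four sampled responses each.
rewards = np.array([[1.0, 0.0, 0.5, 0.5],
                    [0.2, 0.2, 0.2, 0.2]])
adv = group_relative_advantages(rewards)
# adv[0] is approximately [1.414, -1.414, 0.0, 0.0]; adv[1] is all zeros.
```

Responses that beat their group average receive positive advantages. A group whose responses all earn the same reward contributes no learning signal, so the reward must separate better from worse responses within each group.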

DATR: Diffusion-based 3D Apple Tree Reconstruction Framework with Sparse-View

Aug 27, 2025

Abstract: Digital twin applications offer transformative potential by enabling real-time monitoring and robotic simulation through accurate virtual replicas of physical assets. The key to these systems is 3D reconstruction with high geometric fidelity. However, existing methods struggle under field conditions, especially with sparse and occluded views. This study developed a two-stage framework (DATR) for reconstructing apple trees from sparse views. The first stage leverages onboard sensors and foundation models to semi-automatically generate tree masks from complex field images. The tree masks are used to filter out background information in multi-modal data for single-image-to-3D reconstruction in the second stage. This stage consists of a diffusion model and a large reconstruction model (LRM) for multi-view generation and implicit neural field generation, respectively. The diffusion model and LRM were trained on realistic synthetic apple trees generated by a Real2Sim data generator. The framework was evaluated on both field and synthetic datasets. The field dataset includes six apple trees with field-measured ground truth, while the synthetic dataset features structurally diverse trees. Evaluation results showed that DATR outperformed existing 3D reconstruction methods on both datasets and achieved domain-trait estimation comparable to industrial-grade stationary laser scanners while improving throughput by $\sim$360 times, demonstrating strong potential for scalable agricultural digital twin systems.

CosmoFlow: Scale-Aware Representation Learning for Cosmology with Flow Matching

Jul 16, 2025

Abstract: Generative machine learning models have been shown to learn low-dimensional representations of data that preserve the information required for downstream tasks. In this work, we demonstrate that flow-matching-based generative models can learn compact, semantically rich latent representations of field-level cold dark matter (CDM) simulation data without supervision. Our model, CosmoFlow, learns representations 32x smaller than the raw field data, usable for field-level reconstruction, synthetic data generation, and parameter inference. Our model also learns interpretable representations, in which different latent channels correspond to features at different cosmological scales.
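The abstract does not state which probability path CosmoFlow uses. The sketch below assumes the common linear (rectified-flow style) path between Gaussian noise $x_0$ and a data sample $x_1$, $x_t = (1-t)x_0 + t x_1$, whose regression target is the constant velocity $x_1 - x_0$. The field shape and function names are hypothetical, and the network that learns CosmoFlow's compact representations is not modeled.

```python
import numpy as np

def cfm_training_pair(x1, rng):
    """Build one conditional flow matching batch on a linear path.

    x1: data batch of shape (B, ...), e.g. simulated 2D density fields.
    Returns (x_t, t, u, x0): interpolated inputs, times, target velocities, noise.
    """
    x0 = rng.standard_normal(x1.shape)                             # source samples from N(0, I)
    t = rng.uniform(size=(x1.shape[0],) + (1,) * (x1.ndim - 1))    # one t per sample, broadcastable
    x_t = (1.0 - t) * x0 + t * x1                                  # point on the straight path x0 -> x1
    u = x1 - x0                                                    # velocity of that path (constant in t)
    return x_t, t, u, x0

def cfm_loss(v_pred, u):
    """Mean squared error between predicted and target velocities."""
    return np.mean((v_pred - u) ** 2)

def euler_sample(velocity_fn, x0, n_steps=50):
    """Integrate dx/dt = velocity_fn(x, t) from t = 0 to t = 1 with forward Euler."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        x = x + dt * velocity_fn(x, k * dt)
    return x

# Illustrative batch of four 8x8 "fields" (random stand-ins, not CDM data).
rng = np.random.default_rng(0)
x1 = rng.standard_normal((4, 8, 8))
x_t, t, u, x0 = cfm_training_pair(x1, rng)
# With the exact (oracle) velocity, Euler integration carries the noise onto the data.
x_end = euler_sample(lambda x, tt: u, x0, n_steps=10)
```

In training, a neural network would replace the oracle velocity and be fit by minimizing `cfm_loss` over many such batches. The straight path keeps the target simple, which is one reason it is a popular default.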

Efficient Long CoT Reasoning in Small Language Models

May 24, 2025

Abstract: Recent large reasoning models such as DeepSeek-R1 exhibit strong complex problem-solving abilities by generating long chain-of-thought (CoT) reasoning steps. It is challenging to train small language models (SLMs) directly so that long CoT reasoning emerges. Thus, distillation becomes a practical way to equip SLMs with such reasoning ability. However, long CoT often contains substantial redundant content (e.g., overthinking steps), which may be hard for SLMs to learn given their relatively limited capacity and generalization. To address this issue, we propose a simple yet effective method to prune unnecessary steps in long CoT, and then employ an on-policy method in which the SLM itself curates valid and useful long CoT training data. In this way, SLMs can effectively learn efficient long CoT reasoning while preserving competitive performance. Experimental results across a series of mathematical reasoning benchmarks demonstrate the effectiveness of the proposed method in distilling long CoT reasoning ability into SLMs, maintaining competitive performance while significantly reducing redundant reasoning steps.

Gone With the Bits: Revealing Racial Bias in Low-Rate Neural Compression for Facial Images

May 05, 2025

Abstract: Neural compression methods are gaining popularity due to their superior rate-distortion performance over traditional methods, even at extremely low bitrates below 0.1 bpp. As deep learning models, they are prone to bias during training, potentially leading to unfair outcomes for individuals in different groups. In this paper, we present a general, structured, scalable framework for evaluating bias in neural image compression models. Using this framework, we investigate racial bias in neural compression algorithms by analyzing nine popular models and their variants. Through this investigation, we first demonstrate that traditional distortion metrics are ineffective in capturing bias in neural compression models. Next, we show that racial bias is present in all neural compression models and can be captured by examining facial phenotype degradation in image reconstructions. We then examine the relationship between bias and realism in the decoded images and demonstrate a trade-off across models. Finally, we show that using a racially balanced training set can reduce bias but is not a sufficient bias mitigation strategy. We additionally show that the bias can be attributed to both compression model bias and classification model bias. We believe that this work is a first step towards evaluating and eliminating bias in neural image compression models.

BookWorld: From Novels to Interactive Agent Societies for Creative Story Generation

Apr 20, 2025

Abstract: Recent advances in large language models (LLMs) have enabled social simulation through multi-agent systems. Prior efforts focus on agent societies created from scratch, assigning agents newly defined personas. However, simulating established fictional worlds and characters remains largely underexplored, despite its significant practical value. In this paper, we introduce BookWorld, a comprehensive system for constructing and simulating book-based multi-agent societies. BookWorld's design covers a wide range of real-world intricacies, including diverse and dynamic characters, fictional worldviews, and geographical constraints and changes. BookWorld enables diverse applications, including story generation, interactive games, and social simulation, offering novel ways to extend and explore beloved fictional works. Through extensive experiments, we demonstrate that BookWorld generates creative, high-quality stories while maintaining fidelity to the source books, surpassing previous methods with a win rate of 75.36%. The code for this paper can be found at the project page: https://bookworld2025.github.io/.

Streamlining Biomedical Research with Specialized LLMs

Apr 15, 2025

Abstract: In this paper, we propose a novel system that integrates state-of-the-art, domain-specific large language models with advanced information retrieval techniques to deliver comprehensive, context-aware responses. Our approach facilitates seamless interaction among diverse components, enabling cross-validation of outputs to produce accurate, high-quality responses enriched with relevant data, images, tables, and other modalities. We demonstrate the system's capability to enhance response precision by leveraging a robust question-answering model, significantly improving the quality of dialogue generation. The system provides an accessible platform for real-time, high-fidelity interactions, allowing users to benefit from efficient human-computer interaction, precise retrieval, and simultaneous access to a wide range of literature and data. This dramatically improves the research efficiency of professionals in the biomedical and pharmaceutical domains and facilitates faster, more informed decision-making throughout the R&D process. Furthermore, the system proposed in this paper is available at https://synapse-chat.patsnap.com.

Joint 3D Point Cloud Segmentation using Real-Sim Loop: From Panels to Trees and Branches

Mar 07, 2025

Abstract: Modern orchards are planted in structured rows with distinct panel divisions to improve management. Accurate and efficient joint segmentation of point clouds from Panel to Tree and Branch (P2TB) is essential for robotic operations. However, most current segmentation methods focus on single-instance segmentation and depend on a sequence of deep networks to perform joint tasks. This strategy hinders the use of hierarchical information embedded in the data, leading to both error accumulation and increased annotation and computation costs, which limits its scalability for real-world applications. In this study, we proposed a novel approach that incorporated a Real2Sim L-TreeGen for training data generation and a joint model (J-P2TB) designed for the P2TB task. The J-P2TB model, trained on the generated simulation dataset, was used for joint segmentation of real-world panel point clouds via zero-shot learning. Compared with representative methods, our model achieved better results on most segmentation metrics while using 40% fewer learnable parameters. This Sim2Real result highlighted the efficacy of L-TreeGen for model training and the performance of J-P2TB for joint segmentation, demonstrating its strong accuracy, efficiency, and generalizability for real-world applications. These improvements would not only greatly benefit the development of robots for automated orchard operations but also advance digital twin technology.
