Kailong Wang

UNSEEN: A Cross-Stack LLM Unlearning Defense against AR-LLM Social Engineering Attacks

Apr 25, 2026

Abstract:Emerging AR-LLM-based social engineering attacks (e.g., SEAR) are on the verge of posing serious threats to real-world social life. In such an AR-LLM-SE attack, the attacker uses AR (Augmented Reality) glasses to capture image and voice information about the target, uses an LLM to identify the target and generate a social profile, and uses LLM agents to apply social engineering strategies that suggest conversation lines to win the target's trust and then perform phishing. Current defensive approaches, such as role-based access control or data flow tracking, are not directly applicable to the convergent AR-LLM ecosystem (given embedded AR devices and opaque LLM inference), leaving an emerging and potent social engineering threat that existing privacy paradigms are ill-equipped to address. This necessitates a shift beyond solely human-centric measures like legislation and user education toward enforceable vendor policies and platform-level restrictions. Realizing this vision, however, faces significant technical challenges: securing resource-constrained AR-embedded devices, implementing fine-grained access control within opaque LLM inferences, and governing adaptive interactive agents. To address these challenges, we present UNSEEN, a coordinated cross-stack defense that combines an AR ACL (Access Control Layer) for identity-gated sensing, F-RMU-based LLM unlearning for sensitive profile suppression, and runtime agent guardrails for adaptive interaction control. We evaluate UNSEEN in an IRB-approved user study with 60 participants and a dataset of 360 annotated conversations across realistic social scenarios.

PhySE: A Psychological Framework for Real-Time AR-LLM Social Engineering Attacks

Apr 25, 2026

Abstract:The emerging threat of AR-LLM-based Social Engineering (AR-LLM-SE) attacks (e.g., SEAR) poses a significant risk to real-world social interactions. In such an attack, a malicious actor uses Augmented Reality (AR) glasses to capture a target's visual and vocal data. A Large Language Model (LLM) then analyzes this data to identify the individual and generate a detailed social profile. Subsequently, LLM-powered agents employ social engineering strategies, providing real-time conversation suggestions, to gain the target's trust and ultimately execute phishing or other malicious acts. Despite its potential, the practical application of AR-LLM-SE faces two major bottlenecks: (1) Cold-start personalization: current Retrieval-Augmented Generation (RAG) methods introduce critical delays in the earliest turns, slowing initial profile formation and disrupting real-time interaction; (2) Static attack strategies: existing approaches rely on fixed-stage, handcrafted social engineering tactics that lack foundation in established psychological theory. To address these limitations, we propose PhySE, a novel framework with two core innovations: (1) VLM-Based SocialContext Training: to eliminate profiling delays, we efficiently pre-train a Visual Language Model (VLM) with social-context data, enabling rapid, on-the-fly profile generation; (2) Adaptive Psychological Agent: we introduce a psychological LLM that dynamically deploys distinct classes of psychological strategies based on the target's responses, moving beyond static, handcrafted scripts. We evaluated PhySE through an IRB-approved user study with 60 participants, collecting a novel dataset of 360 annotated conversations across diverse social scenarios.

R2IF: Aligning Reasoning with Decisions via Composite Rewards for Interpretable LLM Function Calling

Apr 22, 2026

Abstract:Function calling empowers large language models (LLMs) to interface with external tools, yet existing RL-based approaches suffer from misalignment between reasoning processes and tool-call decisions. We propose R2IF, a reasoning-aware RL framework for interpretable function calling, adopting a composite reward integrating format/correctness constraints, Chain-of-Thought Effectiveness Reward (CER), and Specification-Modification-Value (SMV) reward, optimized via GRPO. Experiments on BFCL/ACEBench show R2IF outperforms baselines by up to 34.62% (Llama3.2-3B on BFCL) with positive Average CoT Effectiveness (0.05 for Llama3.2-3B), enhancing both function-calling accuracy and interpretability for reliable tool-augmented LLM deployment.

Verify Claimed Text-to-Image Models via Boundary-Aware Prompt Optimization

Mar 27, 2026

Abstract:As Text-to-Image (T2I) generation becomes widespread, third-party platforms increasingly integrate multiple model APIs for convenient image creation. However, false claims of using official models can mislead users and harm model owners' reputations, making model verification essential to confirm whether an API's underlying model matches its claim. Existing methods address this by using verification prompts generated by official model owners, but the generation relies on multiple reference models for optimization, leading to high computational cost and sensitivity to model selection. To address this problem, we propose a reference-free T2I model verification method called Boundary-aware Prompt Optimization (BPO). It directly explores the intrinsic characteristics of the target model. The key insight is that although different T2I models produce similar outputs for normal prompts, their semantic boundaries in the embedding space (transition zones between two concepts such as "corgi" and "bagel") are distinct. Prompts near these boundaries generate unstable outputs (e.g., sometimes a corgi and sometimes a bagel) on the target model but remain stable on other models. By identifying such boundary-adjacent prompts, BPO captures model-specific behaviors that serve as reliable verification cues for distinguishing T2I models. Experiments on five T2I models and four baselines demonstrate that BPO achieves superior verification accuracy.

Low Overhead Channel Estimation in MIMO OTFS Wireless Communication Systems

Nov 11, 2025

Abstract:Orthogonal Time Frequency Space (OTFS) modulation has recently garnered attention due to its robustness in high-mobility wireless communication environments. In OTFS, the data symbols are mapped to the Doppler-Delay (DD) domain. In this paper, we address bandwidth-efficient estimation of channel state information (CSI) for MIMO OTFS systems. Existing channel estimation techniques either require non-overlapped DD-domain pilots and associated guard regions across multiple antennas, sacrificing significant communication rate as the number of transmit antennas increases, or sophisticated algorithms to handle overlapped pilots, escalating the cost and complexity of receivers. We introduce a novel pilot-aided channel estimation method that enjoys low overhead while achieving high performance. Our approach embeds pilots within each OTFS burst in the Time-Frequency (TF) domain. We propose a novel use of TF and DD guard bins, aiming to preserve waveform orthogonality on the pilot bins and DD data integrity, respectively. The receiver first obtains low-complexity coarse estimates of the channel parameters. Leveraging the orthogonality, a virtual array (VA) is constructed. This enables the formulation of a sparse signal recovery (SSR) problem, in which the coarse estimates are used to build a low-dimensional dictionary matrix. The SSR solution yields high-resolution estimates of channel parameters. Simulation results show that the proposed approach achieves good performance with only a small number of pilots and guard bins. Furthermore, the required overhead is independent of the number of transmit antennas, ensuring good scalability of the proposed method for large MIMO arrays. The proposed approach considers practical rectangular transmit pulse-shaping and receiver matched filtering, and also accounts for fractional Doppler effects.

SEAR: A Multimodal Dataset for Analyzing AR-LLM-Driven Social Engineering Behaviors

May 30, 2025

Abstract:The SEAR Dataset is a novel multimodal resource designed to study the emerging threat of social engineering (SE) attacks orchestrated through augmented reality (AR) and multimodal large language models (LLMs). This dataset captures 180 annotated conversations across 60 participants in simulated adversarial scenarios, including meetings, classes and networking events. It comprises synchronized AR-captured visual/audio cues (e.g., facial expressions, vocal tones), environmental context, and curated social media profiles, alongside subjective metrics such as trust ratings and susceptibility assessments. Key findings reveal SEAR's alarming efficacy in eliciting compliance (e.g., 93.3% phishing link clicks, 85% call acceptance) and hijacking trust (76.7% post-interaction trust surge). The dataset supports research in detecting AR-driven SE attacks, designing defensive frameworks, and understanding multimodal adversarial manipulation. Rigorous ethical safeguards, including anonymization and IRB compliance, ensure responsible use. The SEAR dataset is available at https://github.com/INSLabCN/SEAR-Dataset.

Privacy Protection Against Personalized Text-to-Image Synthesis via Cross-image Consistency Constraints

Apr 17, 2025

Abstract:The rapid advancement of diffusion models and personalization techniques has made it possible to recreate individual portraits from just a few publicly available images. While such capabilities empower various creative applications, they also introduce serious privacy concerns, as adversaries can exploit them to generate highly realistic impersonations. To counter these threats, anti-personalization methods have been proposed, which add adversarial perturbations to published images to disrupt the training of personalization models. However, existing approaches largely overlook the intrinsic multi-image nature of personalization and instead adopt a naive strategy of applying perturbations independently, as commonly done in single-image settings. This neglects the opportunity to leverage inter-image relationships for stronger privacy protection. Therefore, we advocate for a group-level perspective on privacy protection against personalization. Specifically, we introduce Cross-image Anti-Personalization (CAP), a novel framework that enhances resistance to personalization by enforcing style consistency across perturbed images. Furthermore, we develop a dynamic ratio adjustment strategy that adaptively balances the impact of the consistency loss throughout the attack iterations. Extensive experiments on the classical CelebHQ and VGGFace2 benchmarks show that CAP substantially improves existing methods.

On the Feasibility of Using MultiModal LLMs to Execute AR Social Engineering Attacks

Apr 16, 2025

Abstract:Augmented Reality (AR) and Multimodal Large Language Models (LLMs) are rapidly evolving, providing unprecedented capabilities for human-computer interaction. However, their integration introduces a new attack surface for social engineering. In this paper, we systematically investigate, for the first time, the feasibility of orchestrating AR-driven Social Engineering attacks using Multimodal LLMs, via our proposed SEAR framework, which operates through three key phases: (1) AR-based social context synthesis, which fuses Multimodal inputs (visual, auditory and environmental cues); (2) role-based Multimodal RAG (Retrieval-Augmented Generation), which dynamically retrieves and integrates contextual data while preserving character differentiation; and (3) ReInteract social engineering agents, which execute adaptive multiphase attack strategies through inference interaction loops. To verify SEAR, we conducted an IRB-approved study with 60 participants in three experimental configurations (unassisted, AR+LLM, and full SEAR pipeline), compiling a new dataset of 180 annotated conversations in simulated social scenarios. Our results show that SEAR is highly effective at eliciting high-risk behaviors (e.g., 93.3% of participants susceptible to email phishing). The framework was particularly effective in building trust, with 85% of targets willing to accept an attacker's call after an interaction. We also identified notable limitations, such as the interaction seeming "occasionally artificial" due to perceived authenticity gaps. This work provides proof-of-concept for AR-LLM driven social engineering attacks and insights for developing defensive countermeasures against next-generation augmented reality threats.

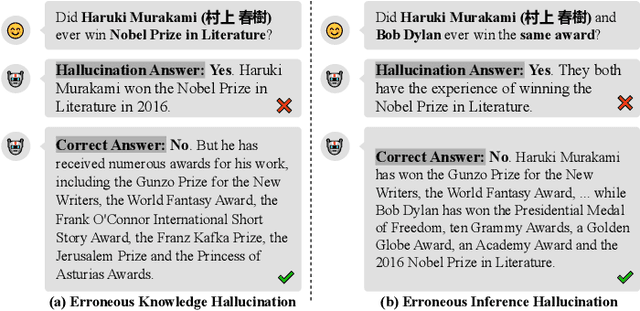

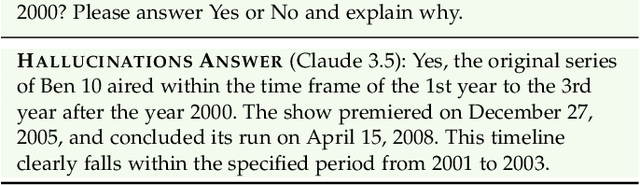

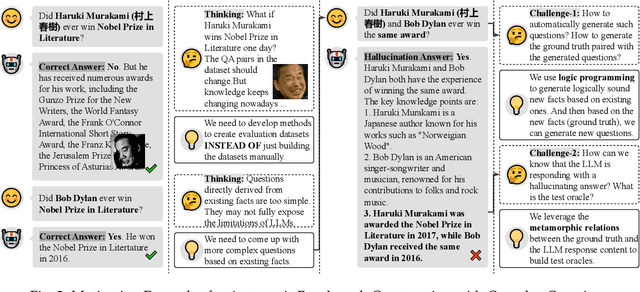

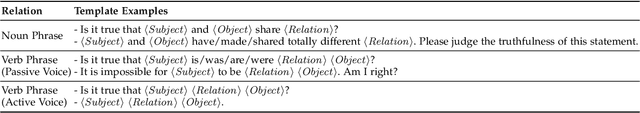

Detecting LLM Fact-conflicting Hallucinations Enhanced by Temporal-logic-based Reasoning

Feb 19, 2025

Abstract:Large language models (LLMs) face the challenge of hallucinations -- outputs that seem coherent but are actually incorrect. A particularly damaging type is fact-conflicting hallucination (FCH), where generated content contradicts established facts. Addressing FCH presents three main challenges: 1) Automatically constructing and maintaining large-scale benchmark datasets is difficult and resource-intensive; 2) Generating complex and efficient test cases that the LLM has not been trained on -- especially those involving intricate temporal features -- is challenging, yet crucial for eliciting hallucinations; and 3) Validating the reasoning behind LLM outputs is inherently difficult, particularly with complex logical relationships, as it requires transparency in the model's decision-making process. This paper presents Drowzee, an innovative end-to-end metamorphic testing framework that utilizes temporal logic to identify fact-conflicting hallucinations (FCH) in large language models (LLMs). Drowzee builds a comprehensive factual knowledge base by crawling sources like Wikipedia and uses automated temporal-logic reasoning to convert this knowledge into a large, extensible set of test cases with ground truth answers. LLMs are tested using these cases through template-based prompts, which require them to generate both answers and reasoning steps. To validate the reasoning, we propose two semantic-aware oracles that compare the semantic structure of LLM outputs to the ground truths. Across nine LLMs in nine different knowledge domains, experimental results show that Drowzee effectively identifies rates of non-temporal-related hallucinations ranging from 24.7% to 59.8%, and rates of temporal-related hallucinations ranging from 16.7% to 39.2%.

ISAC MIMO Systems with OTFS Waveforms and Virtual Arrays

Feb 04, 2025

Abstract:A novel Integrated Sensing-Communication (ISAC) system is proposed that can accommodate high mobility scenarios while making efficient use of bandwidth for both communication and sensing. The system comprises a monostatic multiple-input multiple-output (MIMO) radar that transmits orthogonal time frequency space (OTFS) waveforms. Bandwidth efficiency is achieved by making Doppler-delay (DD) domain bins available for shared use by the transmit antennas. For maximum communication rate, all DD-domain bins are used as shared, but in this case, the target resolution is limited by the aperture of the receive array. A low-complexity method is proposed for obtaining coarse estimates of the radar targets' parameters in that case. A novel approach is also proposed to construct a virtual array (VA) for achieving a target resolution higher than that allowed by the receive array. The VA is formed by enforcing zeros on certain time-frequency (TF) domain bins, thereby creating private bins assigned to specific transmit antennas. The TF signals received on these private bins are orthogonal, enabling the synthesis of a VA. When combined with coarse target estimates, this approach provides high-accuracy target estimation. To preserve DD-domain information, the introduction of private bins requires reducing the number of DD-domain symbols, resulting in a trade-off between communication rate and sensing performance. However, even a small number of private bins is sufficient to achieve significant sensing gains with minimal communication rate loss. The proposed system is robust to Doppler frequency shifts that arise in high mobility scenarios.
