Juan Nieto

ETH Zürich

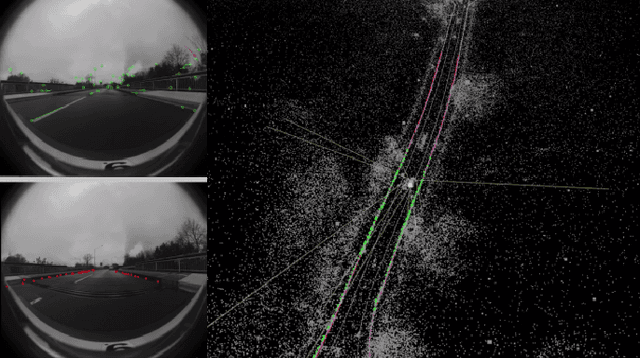

IDOL: A Framework for IMU-DVS Odometry using Lines

Aug 13, 2020

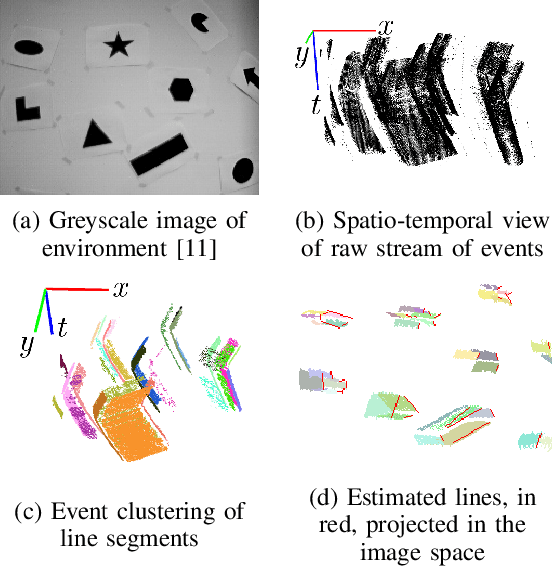

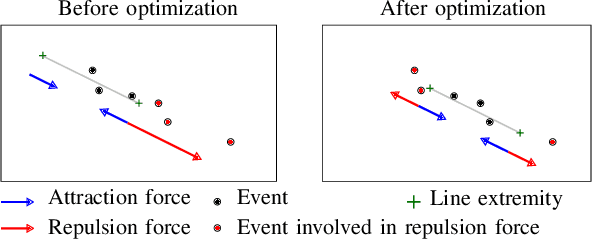

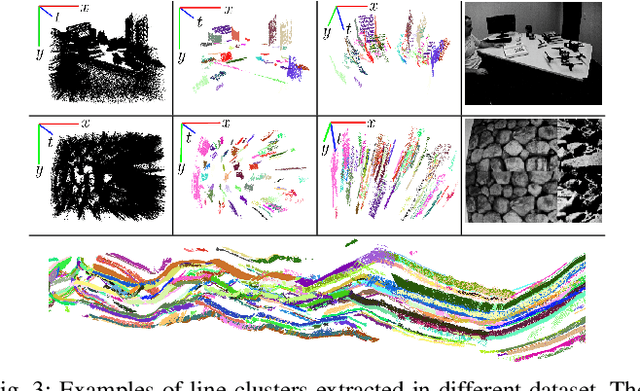

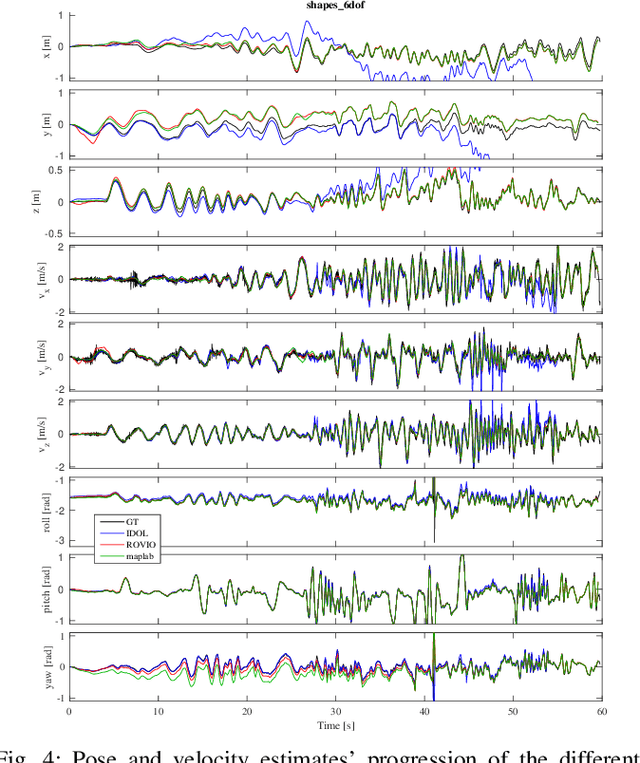

Abstract: In this paper, we introduce IDOL, an optimization-based framework for IMU-DVS Odometry using Lines. Event cameras, also called Dynamic Vision Sensors (DVSs), generate highly asynchronous streams of events triggered upon illumination changes for each individual pixel. This novel paradigm presents advantages in low illumination conditions and high-speed motions. Nonetheless, this unconventional sensing modality brings new challenges for scene reconstruction or motion estimation. The proposed method leverages a continuous-time representation of the inertial readings to associate each event with temporally accurate inertial data. The method's front-end extracts event clusters that belong to line segments in the environment, whereas the back-end estimates the system's trajectory alongside the lines' 3D position by minimizing point-to-line distances between individual events and the lines' projection in the image space. A novel attraction/repulsion mechanism is presented to accurately estimate the lines' extremities, avoiding their explicit detection in the event data. The proposed method is benchmarked against a state-of-the-art frame-based visual-inertial odometry framework using public datasets. The results show that IDOL achieves accuracy of the same order of magnitude as the baseline on most datasets and even shows better orientation estimates. These findings can have a great impact on new algorithms for DVS.
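
The point-to-line residual mentioned in the abstract can be illustrated with a minimal sketch. It assumes the 3D line has already been projected into the image as two pixel endpoints; the function names and the plain sum-of-squares cost are illustrative assumptions, not the authors' implementation.

```python
import math


def point_to_line_distance(px, py, ax, ay, bx, by):
    """Perpendicular distance from pixel (px, py) to the infinite 2D line through A and B."""
    dx, dy = bx - ax, by - ay
    length = math.hypot(dx, dy)
    if length == 0.0:
        raise ValueError("line endpoints must be distinct")
    # |cross(AB, AP)| / |AB| is the perpendicular distance.
    return abs(dx * (py - ay) - dy * (px - ax)) / length


def sum_squared_residuals(events, a, b):
    """Hypothetical cost: sum of squared point-to-line distances over event pixels."""
    return sum(point_to_line_distance(x, y, a[0], a[1], b[0], b[1]) ** 2
               for x, y in events)


if __name__ == "__main__":
    events = [(0.0, 1.0), (1.0, -2.0)]  # made-up event pixel coordinates
    print(sum_squared_residuals(events, (0.0, 0.0), (2.0, 0.0)))
```

Because the distance is measured to the infinite line, a cost of this form alone does not constrain where the segment ends, which is consistent with the abstract introducing a separate mechanism for the lines' extremities.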

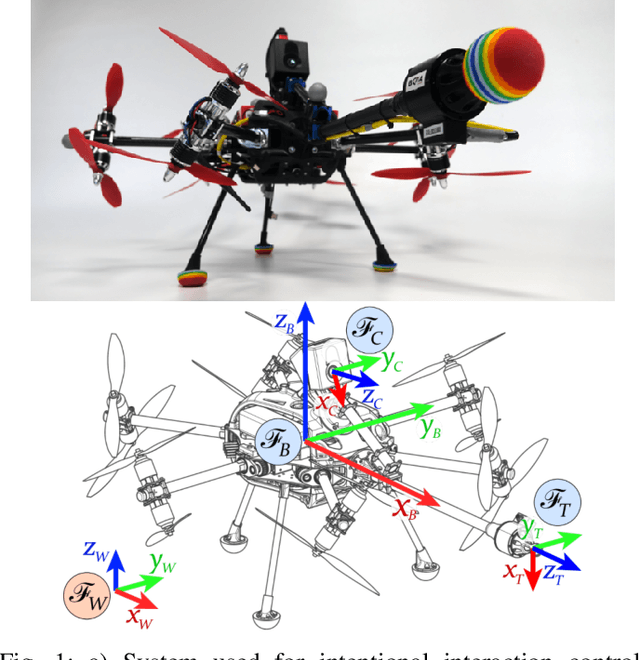

Learning dynamics for improving control of overactuated flying systems

Jun 23, 2020

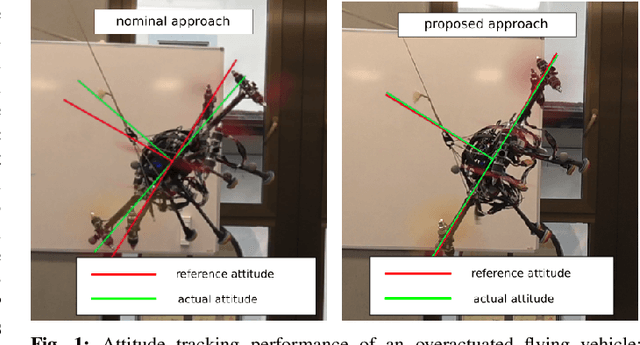

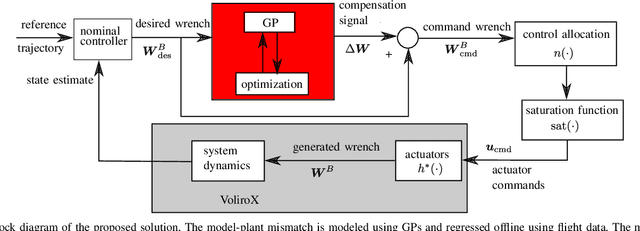

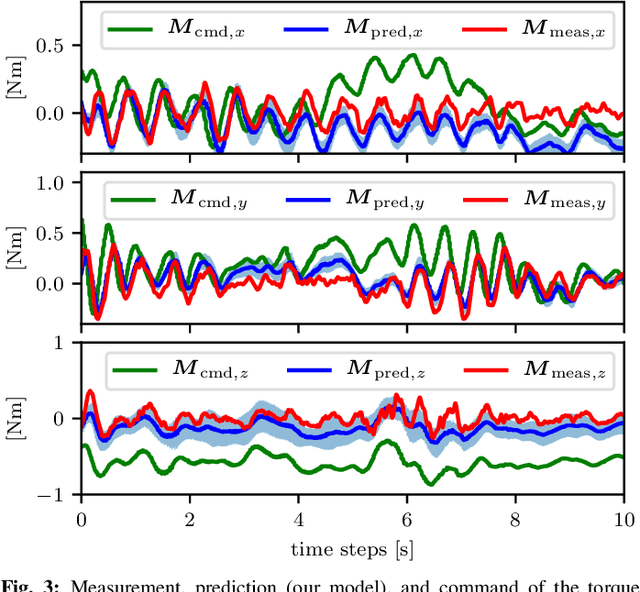

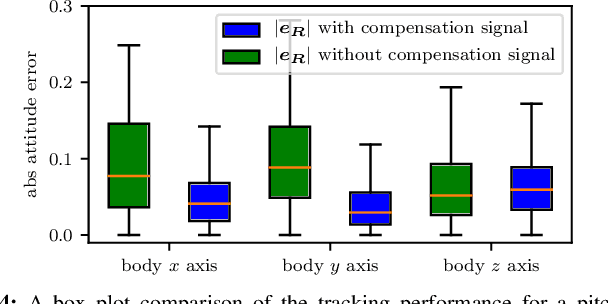

Abstract: Overactuated omnidirectional flying vehicles are capable of generating force and torque in any direction, which is important for applications such as contact-based industrial inspection. This comes at the price of an increase in model complexity. These vehicles usually have non-negligible, repetitive dynamics that are hard to model, such as the aerodynamic interference between the propellers. This makes high-performance trajectory tracking with a model-based controller difficult. This paper presents an approach that combines a data-driven and a first-principle model for the system actuation and uses it to improve the controller. In a first step, the first-principle model errors are learned offline using a Gaussian Process (GP) regressor. At runtime, the first-principle model and the GP regressor are used jointly to obtain control commands. This is formulated as an optimization problem, which avoids the ambiguous solutions present in a standard inverse model in overactuated systems by using only forward models. The approach is validated using a tilt-arm overactuated omnidirectional flying vehicle performing attitude trajectory tracking. The results show that with our proposed method, the attitude trajectory error is reduced by 32% on average compared to a nominal PID controller.
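
The idea of obtaining a command by optimizing over forward models, rather than inverting them, can be sketched in one dimension. Everything here is a simplifying assumption: the quadratic nominal model, the hand-written residual standing in for a trained GP posterior mean, and the golden-section search are illustrative only, and the scalar case does not capture the redundancy of an overactuated system.

```python
import math


def nominal_model(u):
    """Hypothetical first-principle model: output grows with the squared command."""
    return 2.0 * u * u


def learned_residual(u):
    """Stand-in for a learned model error (a GP posterior mean in the paper's setting)."""
    return 0.1 * u


def forward_model(u):
    """Combined forward model: first-principle prediction plus learned correction."""
    return nominal_model(u) + learned_residual(u)


def solve_command(target, lo=0.0, hi=5.0, tol=1e-8):
    """Find u in [lo, hi] minimizing (forward_model(u) - target)^2 by golden-section search."""
    def cost(u):
        return (forward_model(u) - target) ** 2

    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if cost(c) < cost(d):
            b = d  # minimum lies in [a, d]
        else:
            a = c  # minimum lies in [c, b]
    return 0.5 * (a + b)


if __name__ == "__main__":
    u = solve_command(8.2)
    print(u, forward_model(u))
```

Only `forward_model` is ever evaluated, so no inverse of the combined model is needed; the search relies on the cost being unimodal on the chosen interval, which holds for this made-up model.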

Voxgraph: Globally Consistent, Volumetric Mapping using Signed Distance Function Submaps

Apr 27, 2020

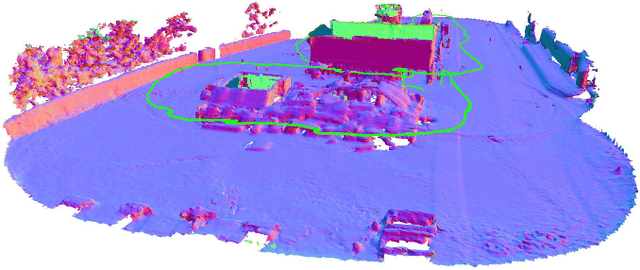

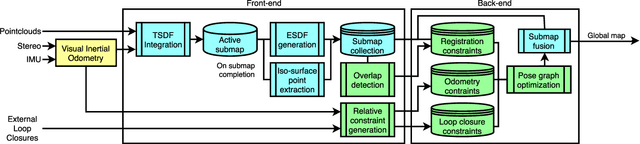

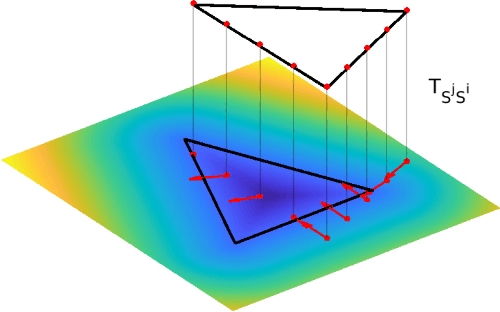

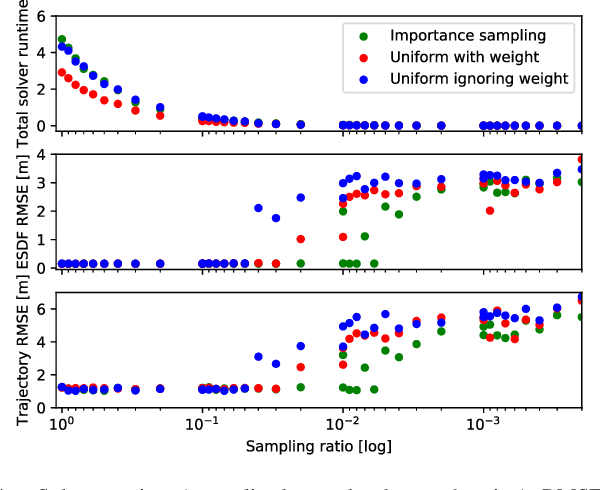

Abstract: Globally consistent dense maps are a key requirement for long-term robot navigation in complex environments. While previous works have addressed the challenges of dense mapping and global consistency, most require more computational resources than may be available on board small robots. We propose a framework that creates globally consistent volumetric maps on a CPU and is lightweight enough to run on computationally constrained platforms. Our approach represents the environment as a collection of overlapping Signed Distance Function (SDF) submaps, and maintains global consistency by computing an optimal alignment of the submap collection. By exploiting the underlying SDF representation, we generate correspondence-free constraints between submap pairs that are computationally efficient enough to optimize the global problem each time a new submap is added. We deploy the proposed system on a hexacopter Micro Aerial Vehicle (MAV) with an Intel i7-8650U CPU in two realistic scenarios: mapping a large-scale area using a 3D LiDAR, and mapping an industrial space using an RGB-D camera. In the large-scale outdoor experiments, the system optimizes a 120x80m map in less than 4s and produces absolute trajectory RMSEs of less than 1m over 400m trajectories. Our complete system, called voxgraph, is available as open source.
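
For readers unfamiliar with the metric quoted above, the following is a minimal sketch of an absolute trajectory RMSE. It assumes the estimated and ground-truth positions are already time-associated and expressed in the same frame; the function name is hypothetical and the alignment step used in practice is omitted.

```python
import math


def absolute_trajectory_rmse(estimated, ground_truth):
    """RMSE of position errors between two equally long, pre-aligned trajectories."""
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(p_est, p_gt))
        for p_est, p_gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


if __name__ == "__main__":
    est = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]  # made-up positions in metres
    gt = [(0.0, 0.0, 0.0), (1.0, 2.0, 0.0)]
    print(absolute_trajectory_rmse(est, gt))
```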

* 8 pages, 9 figures

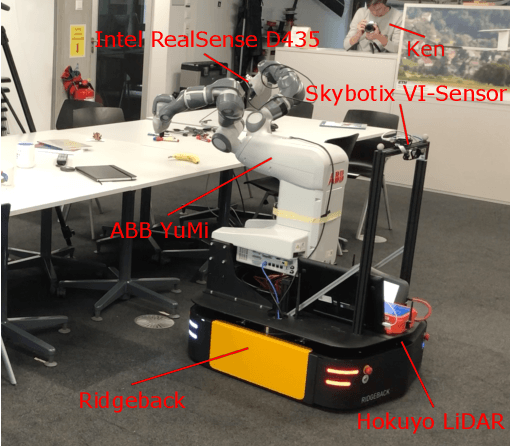

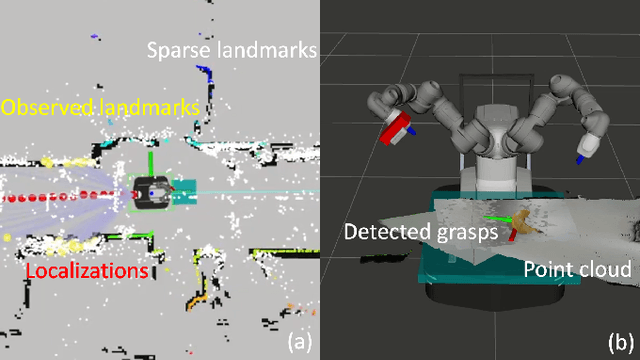

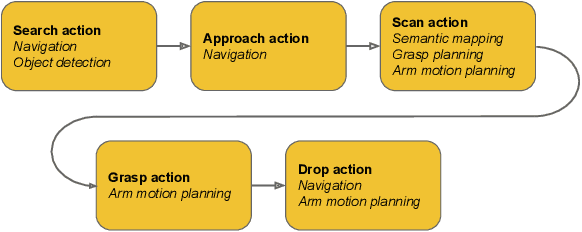

Go Fetch: Mobile Manipulation in Unstructured Environments

Apr 02, 2020

Abstract: With humankind facing new and increasingly large-scale challenges in the medical and domestic spheres, automation of the service sector carries tremendous potential for improved efficiency, quality, and safety of operations. Mobile robotics can offer solutions with a high degree of mobility and dexterity; however, these complex systems require a multitude of heterogeneous components to be carefully integrated into one consistent framework. This work presents a mobile manipulation system that combines perception, localization, navigation, motion planning and grasping skills into one common workflow for fetch and carry applications in unstructured indoor environments. The tight integration across the various modules is experimentally demonstrated on the task of finding a commonly available object in an office environment, grasping it, and delivering it to a desired drop-off location. The accompanying video is available at https://youtu.be/e89_Xg1sLnY.

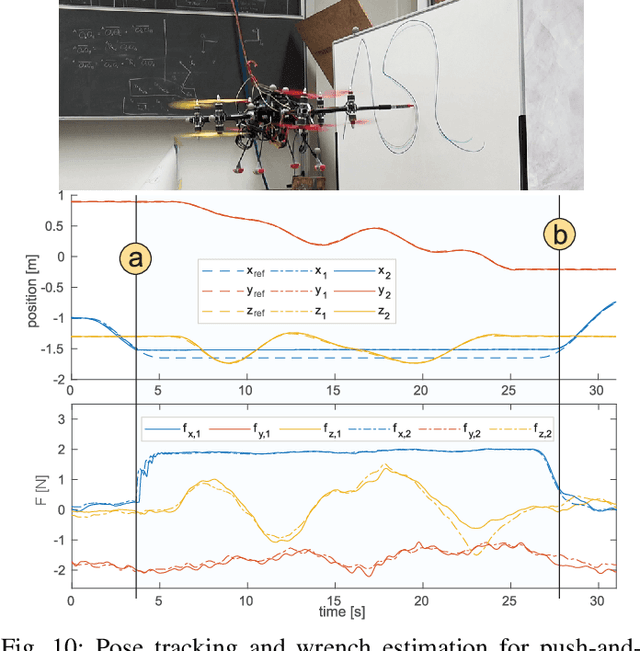

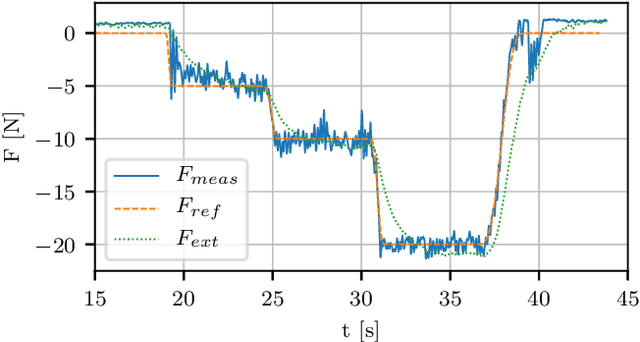

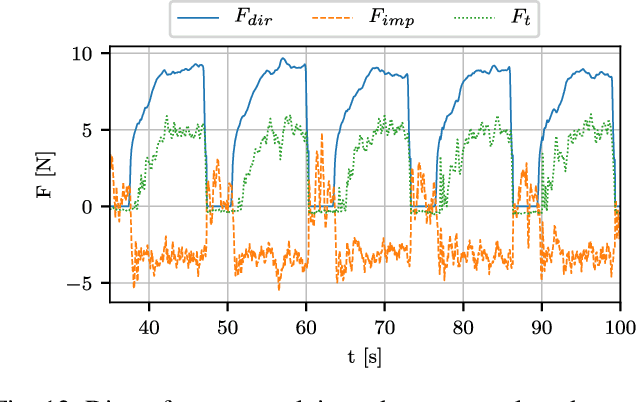

Active Interaction Force Control for Omnidirectional Aerial Contact-Based Inspection

Apr 01, 2020

Abstract: This paper presents and validates two approaches for active interaction force control and planning for omnidirectional aerial manipulation platforms, with the goal of aerial contact inspection in unstructured environments. We extend an axis-selective impedance controller to present a variable axis-selective impedance control that integrates direct force control for intentional interaction, using feedback from an on-board force sensor. The control approaches aim to reject disturbances in free flight, while handling unintentional interaction, and actively controlling desired interaction forces. A fully actuated and omnidirectional tilt-rotor aerial system is used to show the capabilities of the control and planning methods. Experiments demonstrate disturbance rejection, push-and-slide interaction, and force controlled interaction in different flight orientations. The system is validated as a tool for non-destructive testing of concrete infrastructure, and statistical results of interaction control performance are presented and discussed.

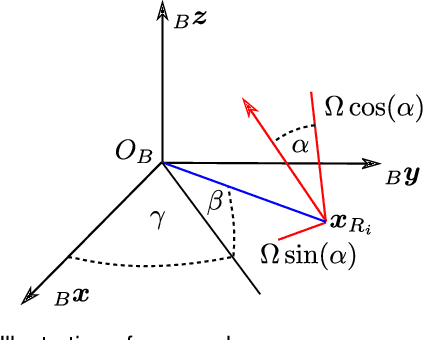

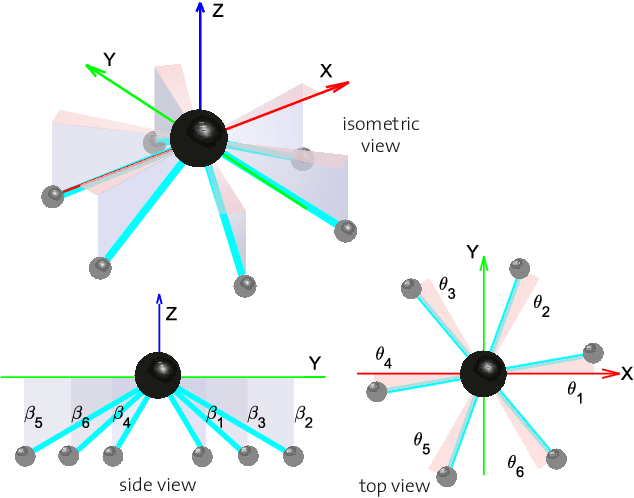

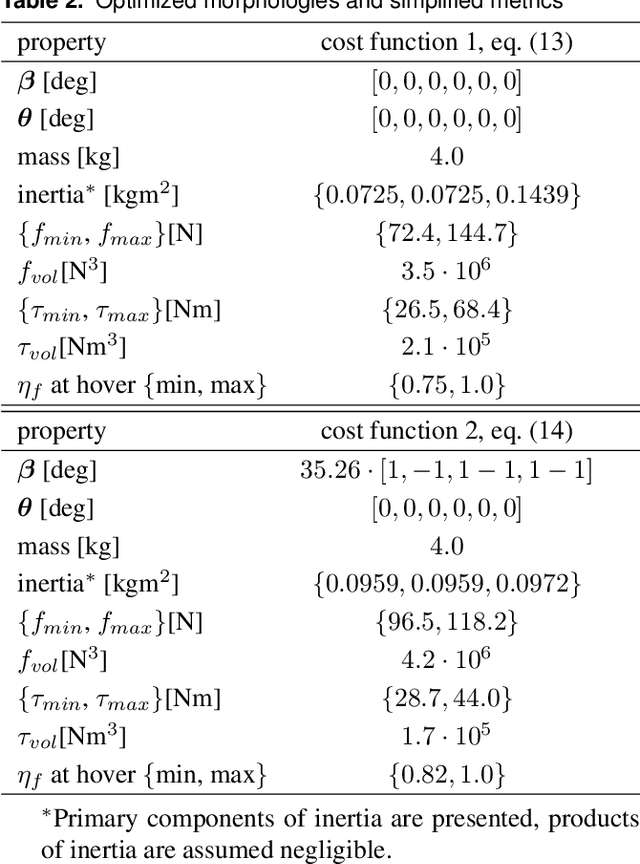

Design and optimal control of a tiltrotor micro aerial vehicle for efficient omnidirectional flight

Mar 20, 2020

Abstract: Omnidirectional micro aerial vehicles are a growing field of research, with demonstrated advantages for aerial interaction and uninhibited observation. While systems with complete pose omnidirectionality and high hover efficiency have been developed independently, a robust system that combines the two has not been demonstrated to date. This paper presents the design and optimal control of a novel omnidirectional vehicle that can exert a wrench in any orientation while maintaining efficient flight configurations. The system design is motivated by the result of a morphology design optimization. A six degrees of freedom optimal controller is derived, with an actuator allocation approach that implements task prioritization, and is robust to singularities. Flight experiments demonstrate and verify the system's capabilities.

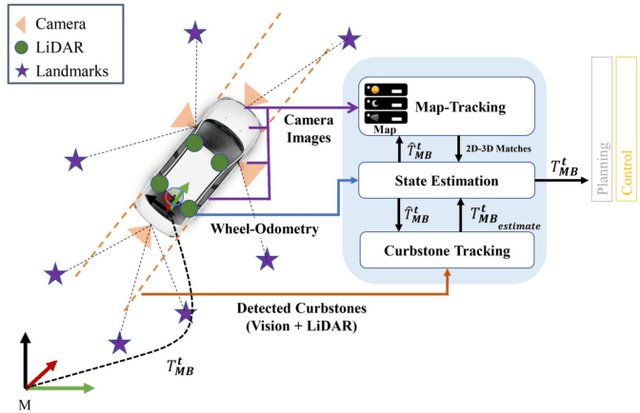

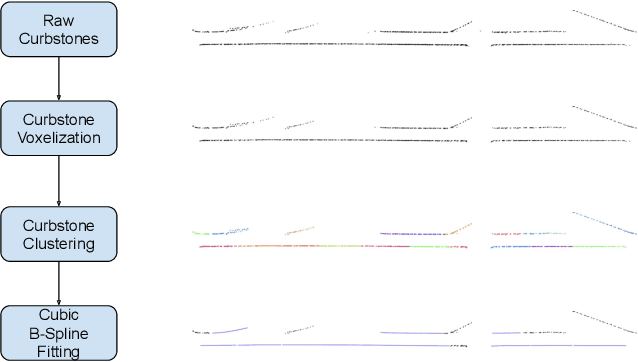

MOZARD: Multi-Modal Localization for Autonomous Vehicles in Urban Outdoor Environments

Mar 03, 2020

Abstract: Visually poor scenarios are one of the main sources of failure in visual localization systems in outdoor environments. To address this challenge, we present MOZARD, a multi-modal localization system for urban outdoor environments using vision and LiDAR. By extending our preexisting key-point based visual multi-session local localization approach with the use of semantic data, an improved localization recall can be achieved across vastly different appearance conditions. In particular, we focus on the use of curbstone information because of its broad distribution and reliability within urban environments. We present thorough experimental evaluations on several driving kilometers in challenging urban outdoor environments, analyze the recall and accuracy of our localization system and demonstrate in a case study possible failure cases of each subsystem. We demonstrate that MOZARD is able to bridge scenarios where our previous work VIZARD fails, hence yielding an increased recall performance, while a similar localization accuracy of 0.2m is achieved.

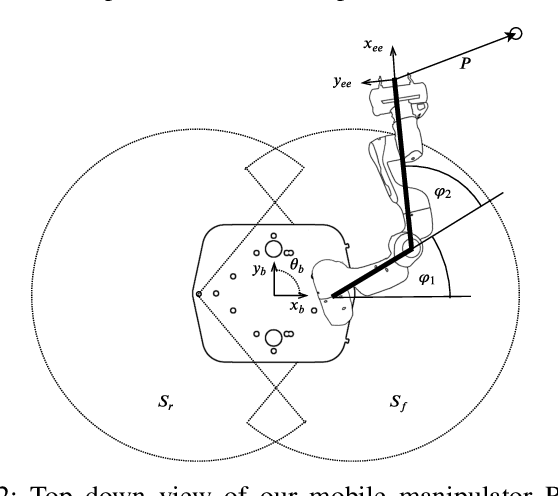

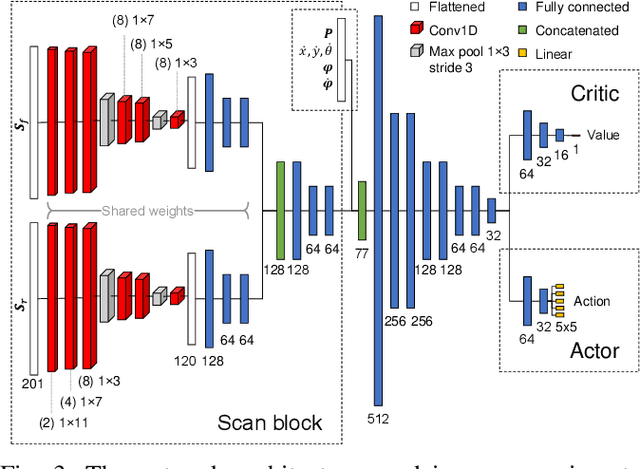

Whole-Body Control of a Mobile Manipulator using End-to-End Reinforcement Learning

Feb 25, 2020

Abstract: Mobile manipulation is usually achieved by sequentially executing base and manipulator movements. This simplification, however, leads to a loss in efficiency and in some cases a reduction of workspace size. Even though different methods have been proposed to solve Whole-Body Control (WBC) online, they are either limited by a kinematic model or do not allow for reactive, online obstacle avoidance. In order to overcome these drawbacks, in this work, we propose an end-to-end Reinforcement Learning (RL) approach to WBC. We compared our learned controller against a state-of-the-art sampling-based method in simulation and achieved faster overall mission times. In addition, we validated the learned policy on our mobile manipulator RoyalPanda in challenging narrow corridor environments.

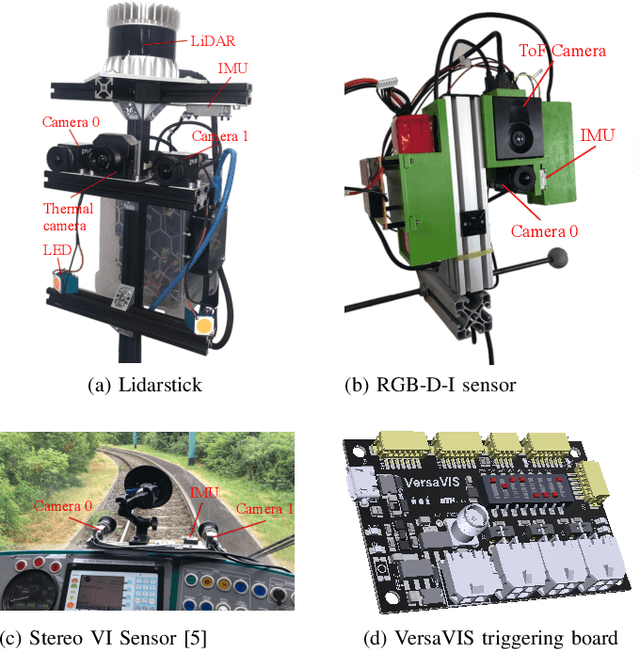

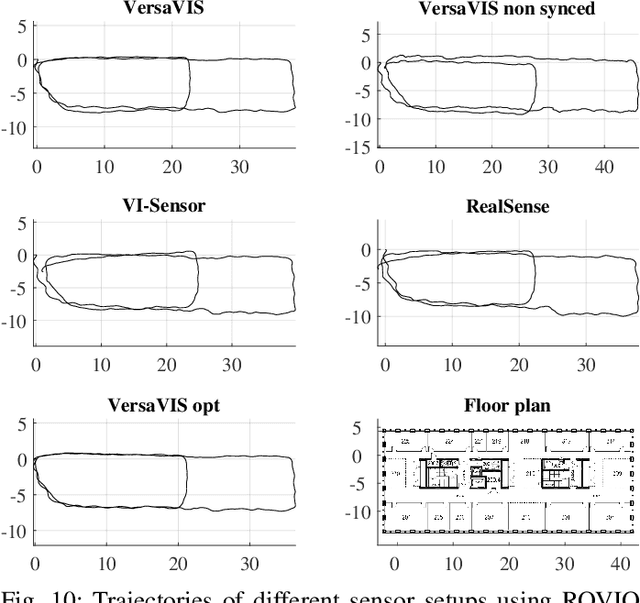

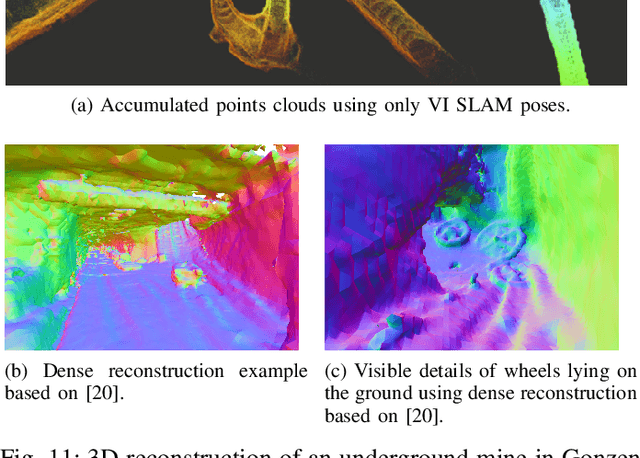

VersaVIS: An Open Versatile Multi-Camera Visual-Inertial Sensor Suite

Dec 05, 2019

Abstract: Robust and accurate pose estimation is crucial for many applications in mobile robotics. Extending visual Simultaneous Localization and Mapping (SLAM) with other modalities such as an inertial measurement unit (IMU) can boost robustness and accuracy. However, for a tight sensor fusion, accurate time synchronization of the sensors is often crucial. Changing exposure times, internal sensor filtering, multiple clock sources and unpredictable delays from operating system scheduling and data transfer can make sensor synchronization challenging. In this paper, we present VersaVIS, an Open Versatile Multi-Camera Visual-Inertial Sensor Suite intended as an efficient research platform for easy deployment, integration and extension for many mobile robotic applications. VersaVIS provides a complete, open-source hardware, firmware and software bundle to perform time synchronization of multiple cameras with an IMU featuring exposure compensation, host clock translation and independent and stereo camera triggering. The sensor suite supports a wide range of cameras and IMUs to match the requirements of the application. The synchronization accuracy of the framework is evaluated in multiple experiments, achieving timing accuracy of less than 1 ms. Furthermore, the applicability and versatility of the sensor suite is demonstrated in multiple applications including visual-inertial SLAM, multi-camera applications, multimodal mapping, reconstruction and object-based mapping.
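
The two timing corrections named in the abstract can be pictured with a small sketch. It assumes that exposure compensation means stamping an image at the middle of its exposure window and that host clock translation can be approximated by a constant offset; both are simplifying assumptions for illustration, and the function names are hypothetical rather than taken from the VersaVIS code.

```python
def mid_exposure_timestamp(trigger_time_s, exposure_time_s):
    """Assumed exposure compensation: stamp the image at the centre of its exposure."""
    if exposure_time_s < 0.0:
        raise ValueError("exposure time must be non-negative")
    return trigger_time_s + 0.5 * exposure_time_s


def to_host_time(sensor_time_s, clock_offset_s):
    """Assumed host clock translation: constant offset between sensor and host clocks."""
    return sensor_time_s + clock_offset_s


if __name__ == "__main__":
    # Made-up values: trigger at 10.000 s, 4 ms exposure, host clock 100 s ahead.
    stamp = mid_exposure_timestamp(10.0, 0.004)
    print(to_host_time(stamp, 100.0))
```

With a changing exposure time, a fixed trigger-time stamp would drift relative to the moment the image content was actually captured, which is why a per-frame correction of this kind matters for tight visual-inertial fusion.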

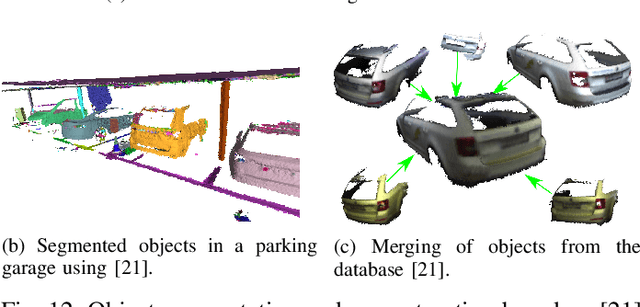

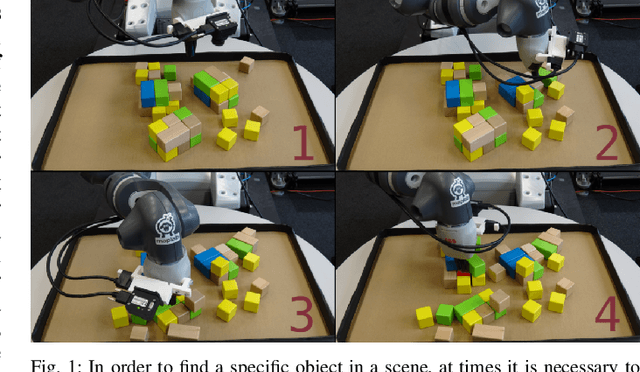

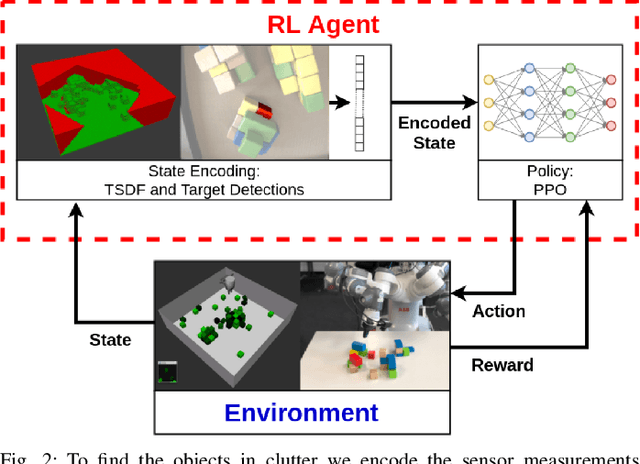

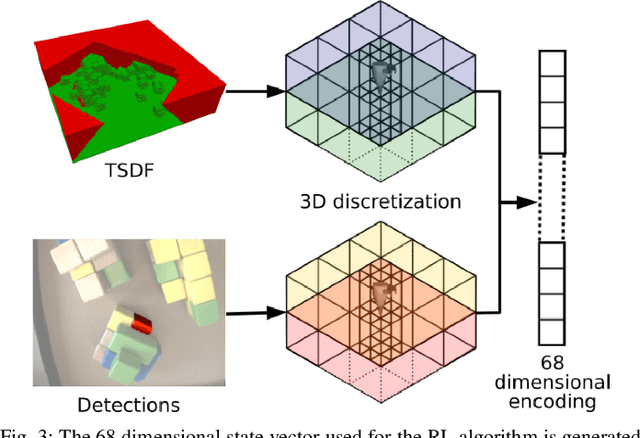

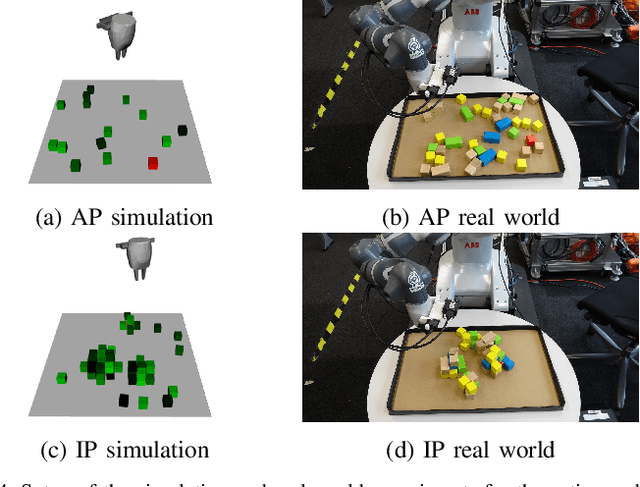

Object Finding in Cluttered Scenes Using Interactive Perception

Nov 18, 2019

Abstract: Object finding in clutter is a skill that requires both perception of the environment and in many cases physical interaction. In robotics, interactive perception defines a set of algorithms that leverage actions to improve the perception of the environment and, vice versa, use perception to guide the next action. Scene interactions are difficult to model; therefore, most of the current systems use predefined heuristics. This limits their ability to efficiently search for the target object in a complex environment. In order to remove heuristics and the need for explicit models of the interactions, in this work we propose a reinforcement learning based active and interactive perception system for scene exploration and object search. We evaluate our work in both simulated and real-world experiments using a robotic manipulator equipped with an RGB and a depth camera, and compare our system to two baselines. The results indicate that our approach, trained in simulation only, transfers smoothly to reality and can solve the object finding task efficiently and with a success rate of more than 90%.
