Jie An

Learning to Evaluate the Artness of AI-generated Images

May 08, 2023

Abstract:Assessing the artness of AI-generated images continues to be a challenge within the realm of image generation. Most existing metrics cannot be used to perform instance-level and reference-free artness evaluation. This paper presents ArtScore, a metric designed to evaluate the degree to which an image resembles authentic artworks by artists (or conversely photographs), thereby offering a novel approach to artness assessment. We first blend pre-trained models for photo and artwork generation, resulting in a series of mixed models. Subsequently, we utilize these mixed models to generate images exhibiting varying degrees of artness with pseudo-annotations. Each photorealistic image has a corresponding artistic counterpart and a series of interpolated images that range from realistic to artistic. This dataset is then employed to train a neural network that learns to estimate quantized artness levels of arbitrary images. Extensive experiments reveal that the artness levels predicted by ArtScore align more closely with human artistic evaluation than existing evaluation metrics, such as Gram loss and ArtFID.

Latent-Shift: Latent Diffusion with Temporal Shift for Efficient Text-to-Video Generation

Apr 18, 2023

Abstract:We propose Latent-Shift -- an efficient text-to-video generation method based on a pretrained text-to-image generation model that consists of an autoencoder and a U-Net diffusion model. Learning a video diffusion model in the latent space is much more efficient than in the pixel space. The latter is often limited to first generating a low-resolution video followed by a sequence of frame interpolation and super-resolution models, which makes the entire pipeline very complex and computationally expensive. To extend a U-Net from image generation to video generation, prior work proposes to add additional modules like 1D temporal convolution and/or temporal attention layers. In contrast, we propose a parameter-free temporal shift module that can leverage the spatial U-Net as is for video generation. We achieve this by shifting two portions of the feature map channels forward and backward along the temporal dimension. The shifted features of the current frame thus receive the features from the previous and the subsequent frames, enabling motion learning without additional parameters. We show that Latent-Shift achieves comparable or better results while being significantly more efficient. Moreover, Latent-Shift can generate images despite being finetuned for T2V generation.

QuantArt: Quantizing Image Style Transfer Towards High Visual Fidelity

Dec 20, 2022Abstract:The mechanism of existing style transfer algorithms is by minimizing a hybrid loss function to push the generated image toward high similarities in both content and style. However, this type of approach cannot guarantee visual fidelity, i.e., the generated artworks should be indistinguishable from real ones. In this paper, we devise a new style transfer framework called QuantArt for high visual-fidelity stylization. QuantArt pushes the latent representation of the generated artwork toward the centroids of the real artwork distribution with vector quantization. By fusing the quantized and continuous latent representations, QuantArt allows flexible control over the generated artworks in terms of content preservation, style similarity, and visual fidelity. Experiments on various style transfer settings show that our QuantArt framework achieves significantly higher visual fidelity compared with the existing style transfer methods.

Improving Visual-textual Sentiment Analysis by Fusing Expert Features

Nov 23, 2022Abstract:Visual-textual sentiment analysis aims to predict sentiment with the input of a pair of image and text. The main challenge of visual-textual sentiment analysis is how to learn effective visual features for sentiment prediction since input images are often very diverse. To address this challenge, we propose a new method that improves visual-textual sentiment analysis by introducing powerful expert visual features. The proposed method consists of four parts: (1) a visual-textual branch to learn features directly from data for sentiment analysis, (2) a visual expert branch with a set of pre-trained "expert" encoders to extract effective visual features, (3) a CLIP branch to implicitly model visual-textual correspondence, and (4) a multimodal feature fusion network based on either BERT or MLP to fuse multimodal features and make sentiment prediction. Extensive experiments on three datasets show that our method produces better visual-textual sentiment analysis performance than existing methods.

Make-A-Video: Text-to-Video Generation without Text-Video Data

Sep 29, 2022

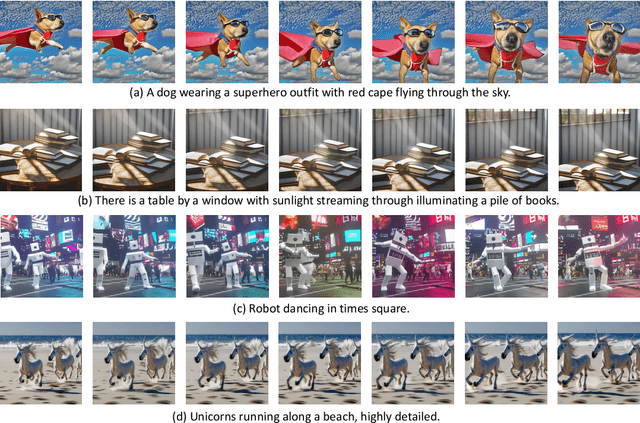

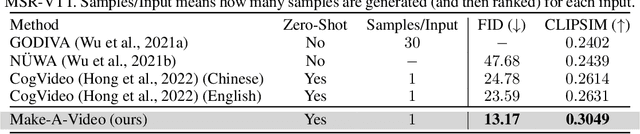

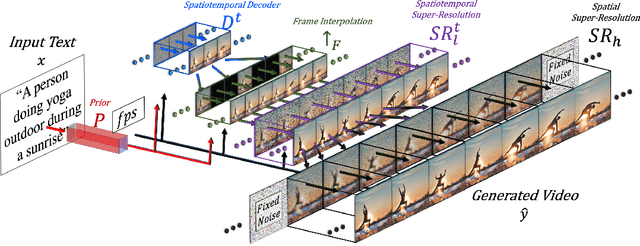

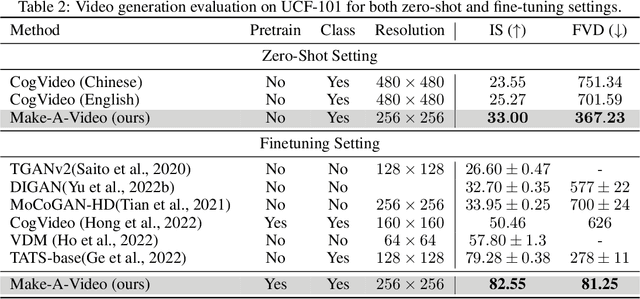

Abstract:We propose Make-A-Video -- an approach for directly translating the tremendous recent progress in Text-to-Image (T2I) generation to Text-to-Video (T2V). Our intuition is simple: learn what the world looks like and how it is described from paired text-image data, and learn how the world moves from unsupervised video footage. Make-A-Video has three advantages: (1) it accelerates training of the T2V model (it does not need to learn visual and multimodal representations from scratch), (2) it does not require paired text-video data, and (3) the generated videos inherit the vastness (diversity in aesthetic, fantastical depictions, etc.) of today's image generation models. We design a simple yet effective way to build on T2I models with novel and effective spatial-temporal modules. First, we decompose the full temporal U-Net and attention tensors and approximate them in space and time. Second, we design a spatial temporal pipeline to generate high resolution and frame rate videos with a video decoder, interpolation model and two super resolution models that can enable various applications besides T2V. In all aspects, spatial and temporal resolution, faithfulness to text, and quality, Make-A-Video sets the new state-of-the-art in text-to-video generation, as determined by both qualitative and quantitative measures.

Time-Frequency Mask Aware Bi-directional LSTM: A Deep Learning Approach for Underwater Acoustic Signal Separation

Feb 09, 2022

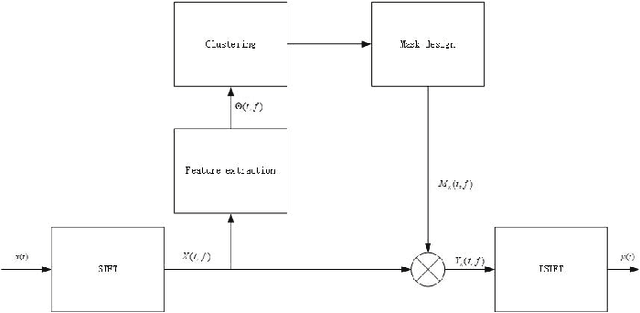

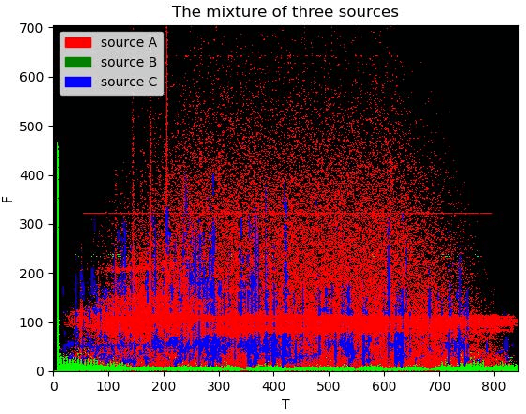

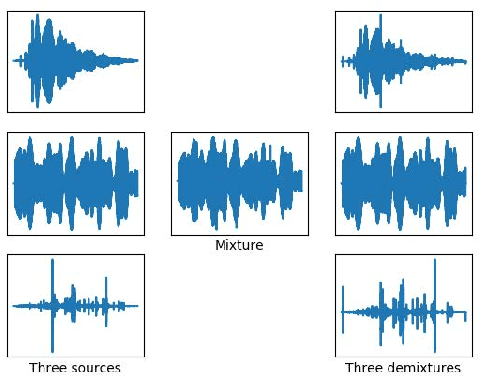

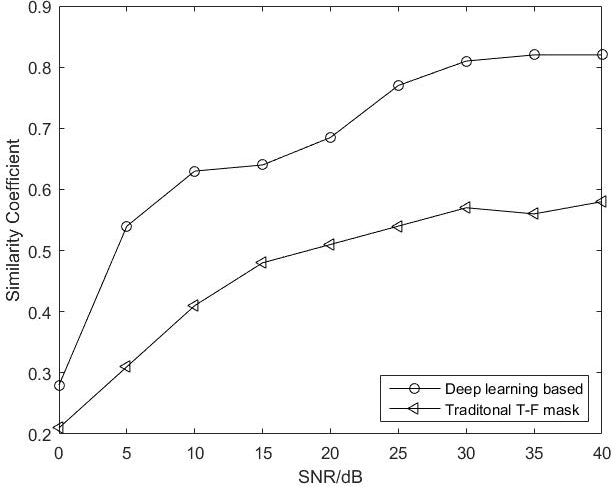

Abstract:The underwater acoustic signals separation is a key technique for the underwater communications. The existing methods are mostly model-based, and could not accurately characterise the practical underwater acoustic communication environment. They are only suitable for binary signal separation, but cannot handle multivariate signal separation. On the other hand, the recurrent neural network (RNN) shows powerful capability in extracting the features of the temporal sequences. Inspired by this, in this paper, we present a data-driven approach for underwater acoustic signals separation using deep learning technology. We use the Bi-directional Long Short-Term Memory (Bi-LSTM) to explore the features of Time-Frequency (T-F) mask, and propose a T-F mask aware Bi-LSTM for signal separation. Taking advantage of the sparseness of the T-F image, the designed Bi-LSTM network is able to extract the discriminative features for separation, which further improves the separation performance. In particular, this method breaks through the limitations of the existing methods, not only achieves good results in multivariate separation, but also effectively separates signals when mixed with 40dB Gaussian noise signals. The experimental results show that this method can achieve a $97\%$ guarantee ratio (PSR), and the average similarity coefficient of the multivariate signal separation is stable above 0.8 under high noise conditions.

ArtFlow: Unbiased Image Style Transfer via Reversible Neural Flows

Apr 09, 2021

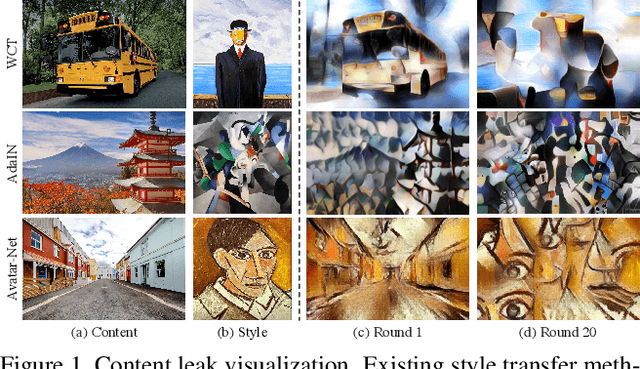

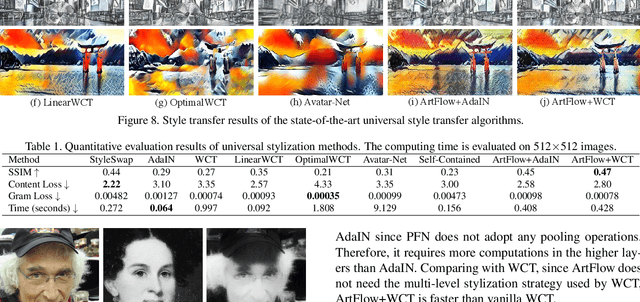

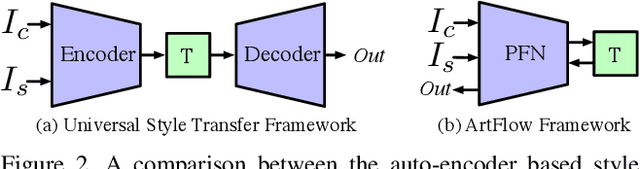

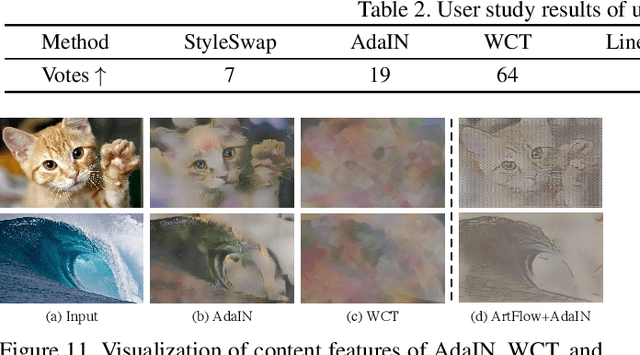

Abstract:Universal style transfer retains styles from reference images in content images. While existing methods have achieved state-of-the-art style transfer performance, they are not aware of the content leak phenomenon that the image content may corrupt after several rounds of stylization process. In this paper, we propose ArtFlow to prevent content leak during universal style transfer. ArtFlow consists of reversible neural flows and an unbiased feature transfer module. It supports both forward and backward inferences and operates in a projection-transfer-reversion scheme. The forward inference projects input images into deep features, while the backward inference remaps deep features back to input images in a lossless and unbiased way. Extensive experiments demonstrate that ArtFlow achieves comparable performance to state-of-the-art style transfer methods while avoiding content leak.

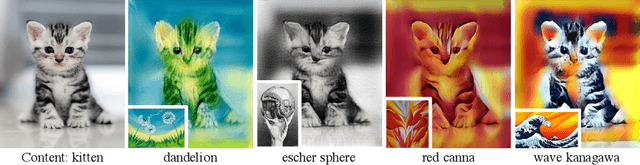

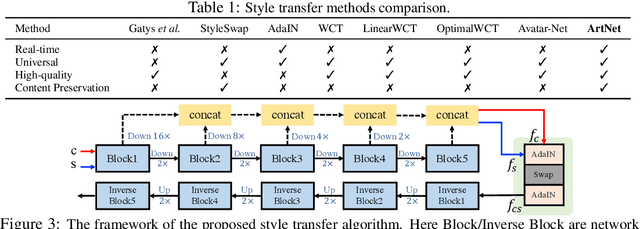

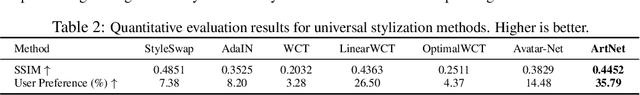

Real-time Universal Style Transfer on High-resolution Images via Zero-channel Pruning

Jun 23, 2020

Abstract:Extracting effective deep features to represent content and style information is the key to universal style transfer. Most existing algorithms use VGG19 as the feature extractor, which incurs a high computational cost and impedes real-time style transfer on high-resolution images. In this work, we propose a lightweight alternative architecture - ArtNet, which is based on GoogLeNet, and later pruned by a novel channel pruning method named Zero-channel Pruning specially designed for style transfer approaches. Besides, we propose a theoretically sound sandwich swap transform (S2) module to transfer deep features, which can create a pleasing holistic appearance and good local textures with an improved content preservation ability. By using ArtNet and S2, our method is 2.3 to 107.4 times faster than state-of-the-art approaches. The comprehensive experiments demonstrate that ArtNet can achieve universal, real-time, and high-quality style transfer on high-resolution images simultaneously, (68.03 FPS on 512 times 512 images).

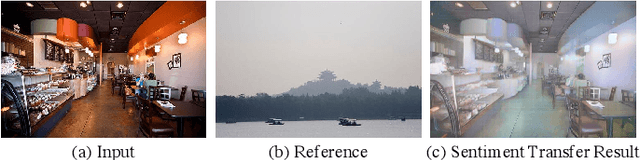

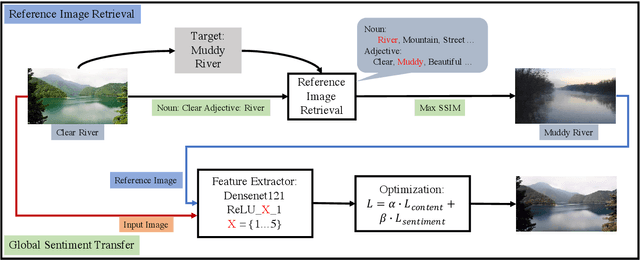

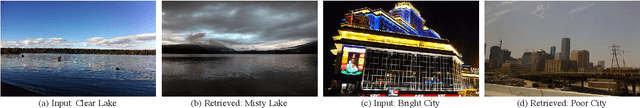

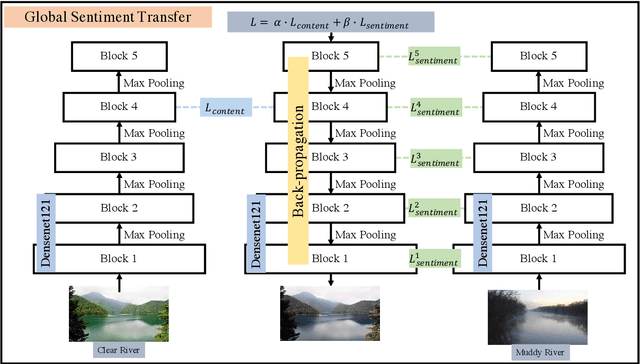

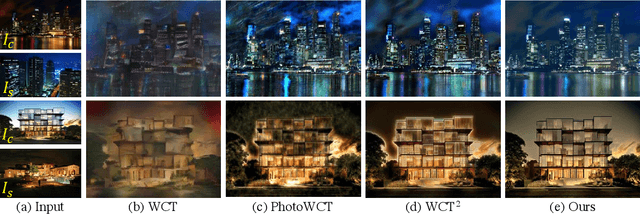

Global Image Sentiment Transfer

Jun 22, 2020

Abstract:Transferring the sentiment of an image is an unexplored research topic in the area of computer vision. This work proposes a novel framework consisting of a reference image retrieval step and a global sentiment transfer step to transfer sentiments of images according to a given sentiment tag. The proposed image retrieval algorithm is based on the SSIM index. The retrieved reference images by the proposed algorithm are more content-related against the algorithm based on the perceptual loss. Therefore can lead to a better image sentiment transfer result. In addition, we propose a global sentiment transfer step, which employs an optimization algorithm to iteratively transfer sentiment of images based on feature maps produced by the Densenet121 architecture. The proposed sentiment transfer algorithm can transfer the sentiment of images while ensuring the content structure of the input image intact. The qualitative and quantitative experiments demonstrate that the proposed sentiment transfer framework outperforms existing artistic and photorealistic style transfer algorithms in making reliable sentiment transfer results with rich and exact details.

Ultrafast Photorealistic Style Transfer via Neural Architecture Search

Dec 05, 2019

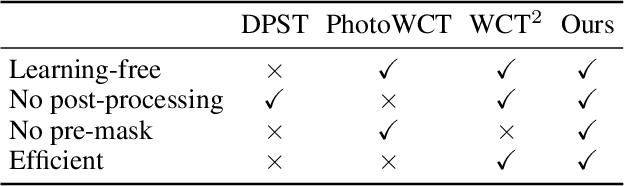

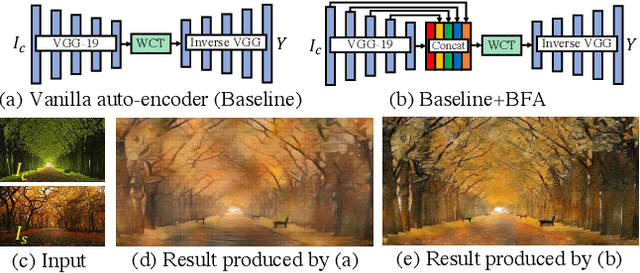

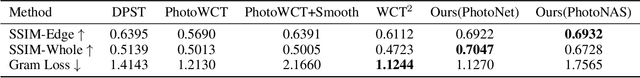

Abstract:The key challenge in photorealistic style transfer is that an algorithm should faithfully transfer the style of a reference photo to a content photo while the generated image should look like one captured by a camera. Although several photorealistic style transfer algorithms have been proposed, they need to rely on post- and/or pre-processing to make the generated images look photorealistic. If we disable the additional processing, these algorithms would fail to produce plausible photorealistic stylization in terms of detail preservation and photorealism. In this work, we propose an effective solution to these issues. Our method consists of a construction step (C-step) to build a photorealistic stylization network and a pruning step (P-step) for acceleration. In the C-step, we propose a dense auto-encoder named PhotoNet based on a carefully designed pre-analysis. PhotoNet integrates a feature aggregation module (BFA) and instance normalized skip links (INSL). To generate faithful stylization, we introduce multiple style transfer modules in the decoder and INSLs. PhotoNet significantly outperforms existing algorithms in terms of both efficiency and effectiveness. In the P-step, we adopt a neural architecture search method to accelerate PhotoNet. We propose an automatic network pruning framework in the manner of teacher-student learning for photorealistic stylization. The network architecture named PhotoNAS resulted from the search achieves significant acceleration over PhotoNet while keeping the stylization effects almost intact. We conduct extensive experiments on both image and video transfer. The results show that our method can produce favorable results while achieving 20-30 times acceleration in comparison with the existing state-of-the-art approaches. It is worth noting that the proposed algorithm accomplishes better performance without any pre- or post-processing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge