David D. Fan

Autonomous Off-road Navigation over Extreme Terrains with Perceptually-challenging Conditions

Jan 26, 2021

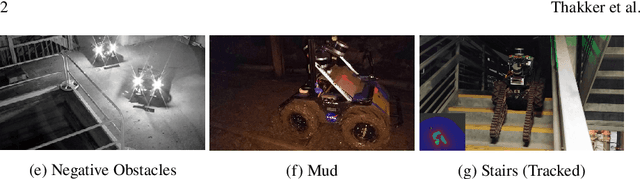

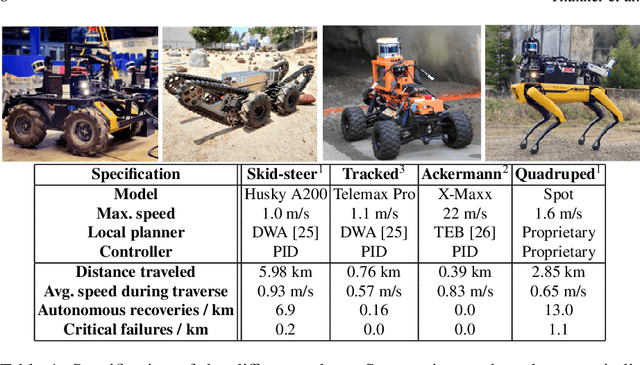

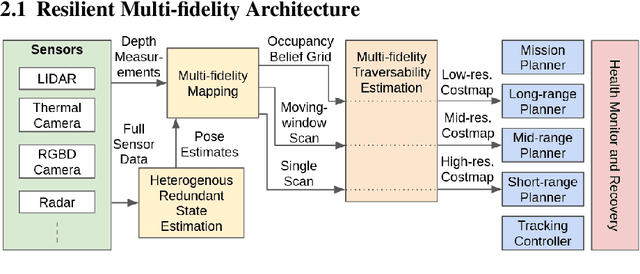

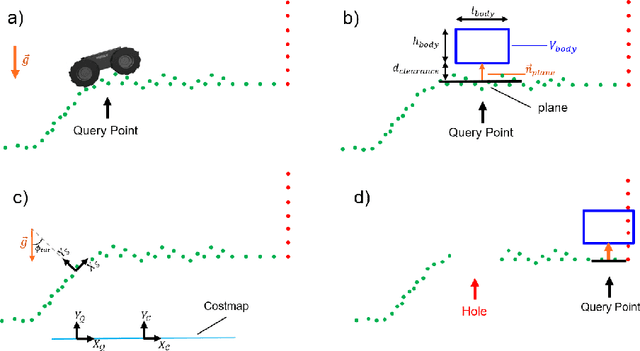

Abstract: We propose a framework for resilient autonomous navigation in perceptually challenging unknown environments with mobility-stressing elements such as uneven surfaces with rocks and boulders, steep slopes, negative obstacles like cliffs and holes, and narrow passages. Environments are GPS-denied and perceptually degraded, with variable lighting from dark to lit and obscurants (dust, fog, smoke). Lack of prior maps and degraded communication eliminate the possibility of prior or off-board computation or operator intervention. This necessitates real-time on-board computation using noisy sensor data. To address these challenges, we propose a resilient architecture that exploits redundancy and heterogeneity in sensing modalities. Further resilience is achieved by triggering recovery behaviors upon failure. We propose a fast settling algorithm to generate robust multi-fidelity traversability estimates in real time. The proposed approach was deployed on multiple physical systems, including skid-steer and tracked robots, a high-speed RC car, and legged robots, as part of Team CoSTAR's effort in the DARPA Subterranean Challenge, where the team won 2nd and 1st place in the Tunnel and Urban Circuits, respectively.

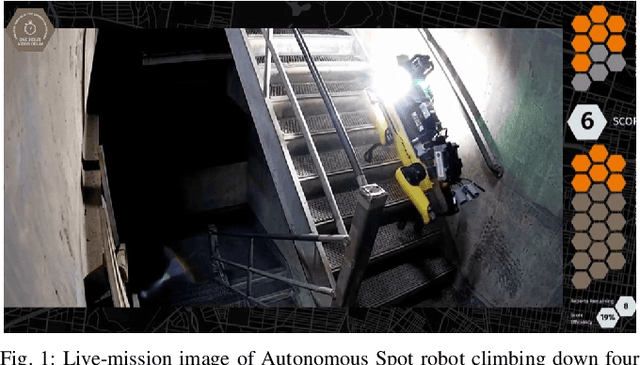

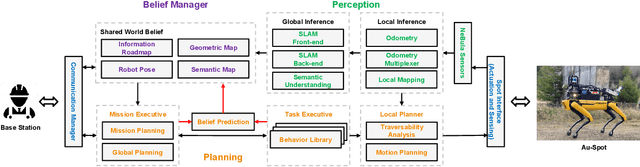

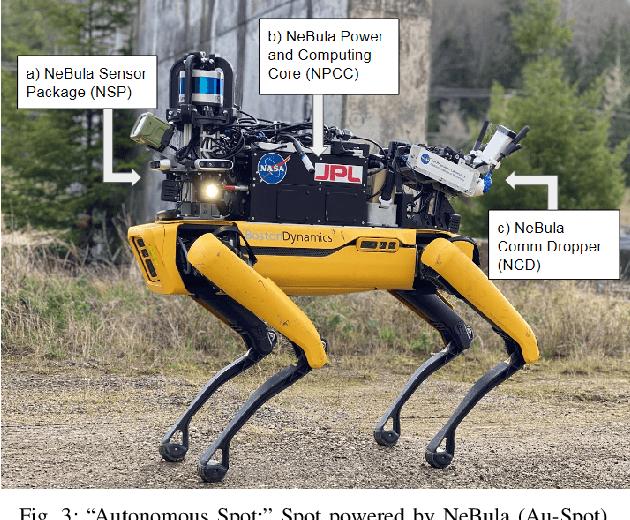

Autonomous Spot: Long-Range Autonomous Exploration of Extreme Environments with Legged Locomotion

Nov 01, 2020

Abstract: This paper serves as one of the first efforts to enable large-scale and long-duration autonomy using the Boston Dynamics Spot robot. Motivated by exploring extreme environments, particularly those involved in the DARPA Subterranean Challenge, this paper pushes the boundaries of the state-of-practice in enabling legged robotic systems to accomplish real-world complex missions in relevant scenarios. In particular, we discuss the behaviors and capabilities which emerge from the integration of the autonomy architecture NeBula (Networked Belief-aware Perceptual Autonomy) with next-generation mobility systems. We discuss the hardware and software challenges and solutions in mobility, perception, autonomy, and, very briefly, wireless networking, as well as lessons learned and future directions. We demonstrate the performance of the proposed solutions on physical systems in real-world scenarios.

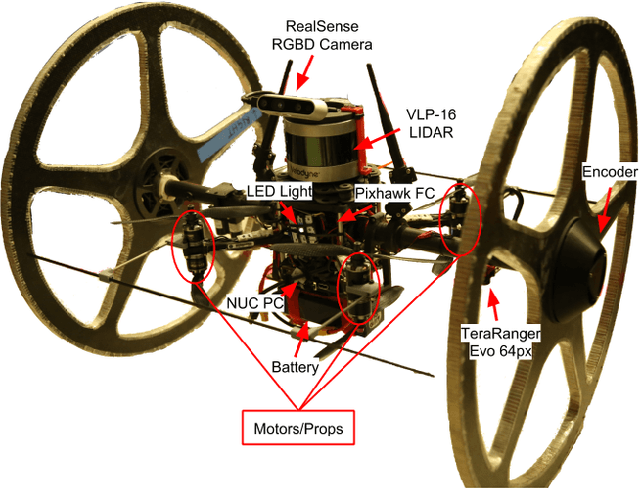

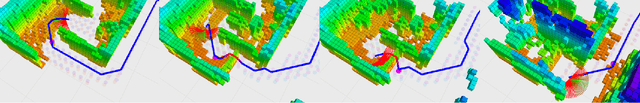

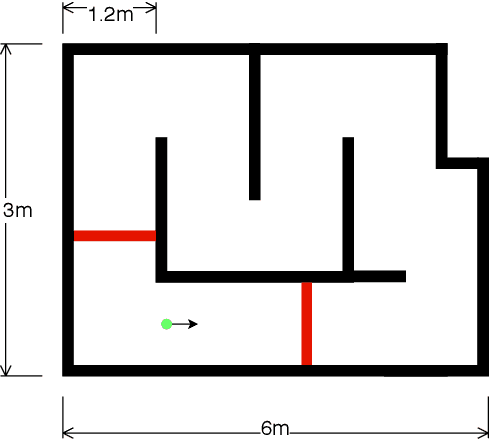

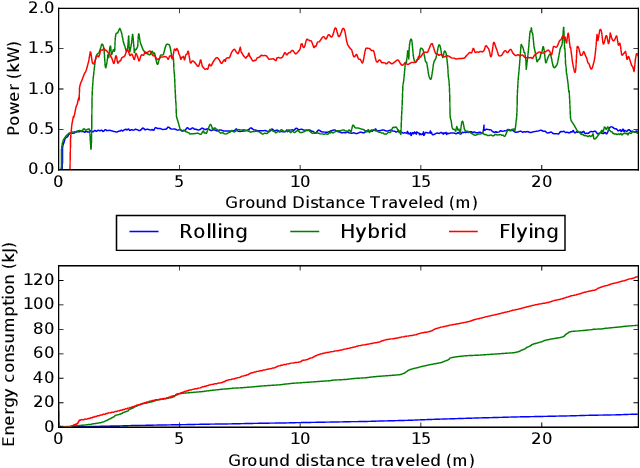

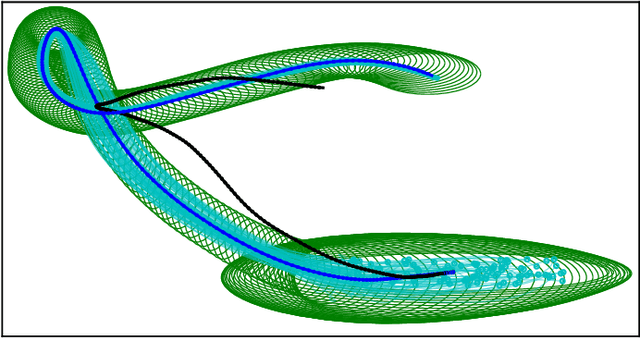

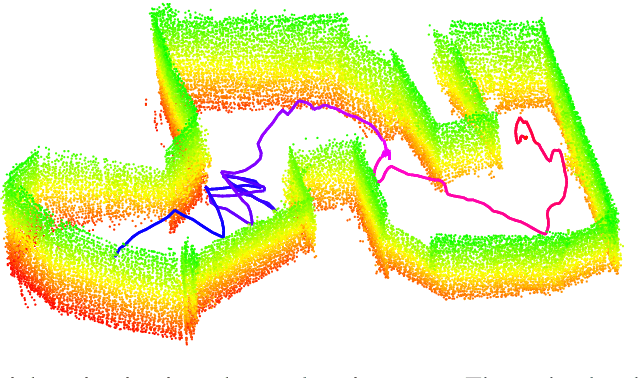

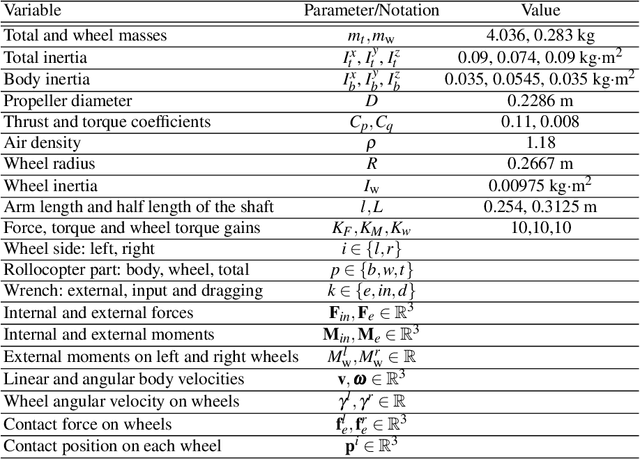

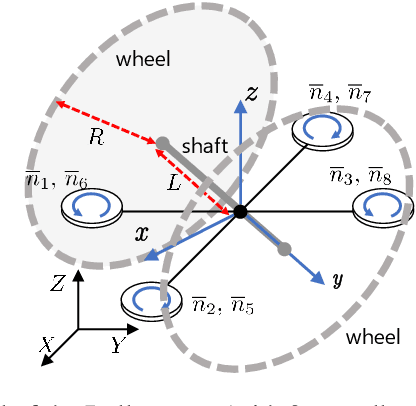

Autonomous Hybrid Ground/Aerial Mobility in Unknown Environments

Sep 11, 2020

Abstract: Hybrid ground and aerial vehicles can possess distinct advantages over ground-only or flight-only designs in terms of energy savings and increased mobility. In this work we outline our unified framework for controls, planning, and autonomy of hybrid ground/air vehicles. Our contribution is three-fold: 1) We develop a control scheme for passive two-wheeled hybrid ground/aerial vehicles. 2) We present a unified planner for both rolling and flying by leveraging differential flatness mappings. 3) We conduct experiments leveraging mapping and global planning for hybrid mobility in unknown environments, showing that hybrid mobility uses up to five times less energy than flying only.

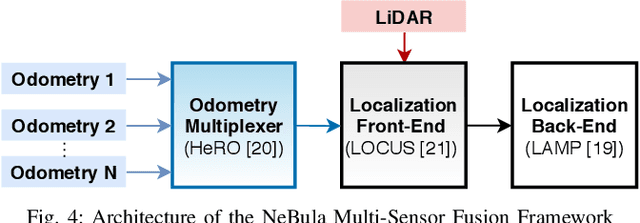

Towards Resilient Autonomous Navigation of Drones

Aug 21, 2020

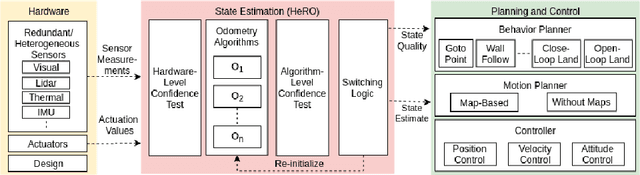

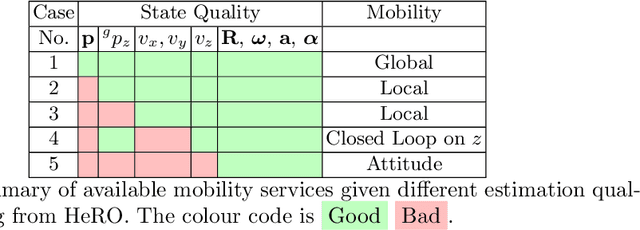

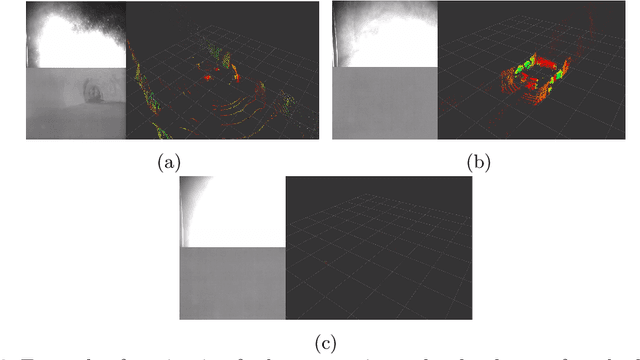

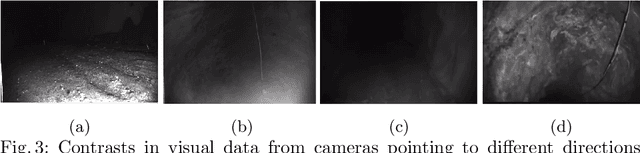

Abstract: Robots, and particularly drones, are especially useful in exploring extreme environments that pose hazards to humans. To ensure safe operations in these situations, which are usually perceptually degraded and without good GNSS, it is critical to have a reliable and robust state estimation solution. The main body of literature in robot state estimation focuses on developing complex algorithms favoring accuracy. Typically, these approaches rely on a strong underlying assumption: the main estimation engine will not fail during operation. In contrast, we propose an architecture that pursues robustness in state estimation by considering redundancy and heterogeneity in both sensing and estimation algorithms. The architecture is designed to expect and detect failures and adapt the behavior of the system to ensure safety. To this end, we present HeRO (Heterogeneous Redundant Odometry): a stack of estimation algorithms running in parallel supervised by a resiliency logic. This logic carries out three main functions: a) perform confidence tests on both data quality and algorithm health; b) re-initialize those algorithms that might be malfunctioning; c) generate a smooth state estimate by multiplexing the inputs based on their quality. The state and quality estimates are used by the guidance and control modules to adapt the mobility behaviors of the system. The validation and utility of the approach are shown with real experiments on a flying robot for the use case of autonomous exploration of subterranean environments, with particular results from the STIX event of the DARPA Subterranean Challenge.
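
The three functions of the resiliency logic can be illustrated with a minimal sketch. This is not HeRO's implementation: the `OdomSource` fields, the `min_confidence` threshold, and both function names are hypothetical, and the smoothing of the multiplexed output mentioned in the abstract is left out.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class OdomSource:
    name: str          # hypothetical label, e.g. "vio" or "lidar"
    confidence: float  # assumed data-quality score in [0, 1]
    healthy: bool      # result of an assumed algorithm-health check


def passes_checks(src: OdomSource, min_confidence: float) -> bool:
    """Confidence test on both algorithm health and data quality."""
    return src.healthy and src.confidence >= min_confidence


def select_source(sources: Sequence[OdomSource],
                  min_confidence: float = 0.5) -> Optional[OdomSource]:
    """Multiplex: pick the passing source with the highest confidence."""
    candidates = [s for s in sources if passes_checks(s, min_confidence)]
    if not candidates:
        return None  # no trustworthy estimate; caller should fall back to a safe behavior
    return max(candidates, key=lambda s: s.confidence)


def needs_reinit(sources: Sequence[OdomSource],
                 min_confidence: float = 0.5) -> List[str]:
    """Names of sources that failed a check and should be re-initialized."""
    return [s.name for s in sources if not passes_checks(s, min_confidence)]
```

A caller would run `select_source` at every estimation cycle and hand the names returned by `needs_reinit` to whatever restart mechanism the estimators expose.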

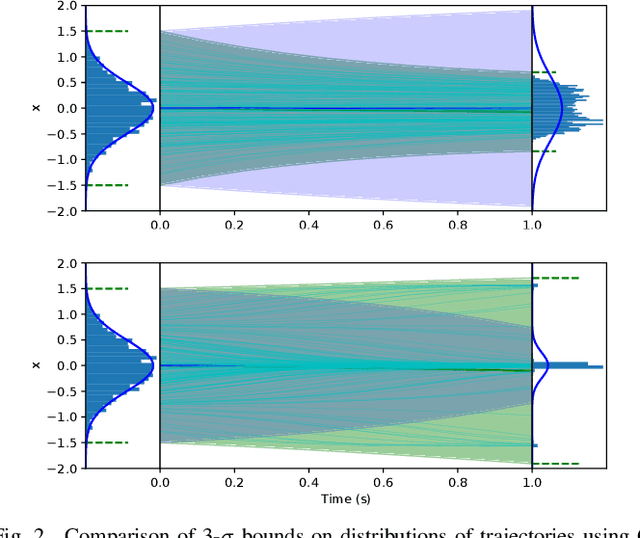

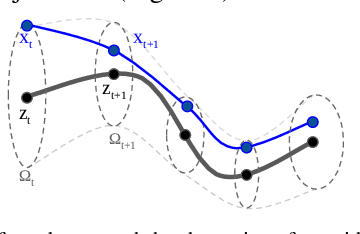

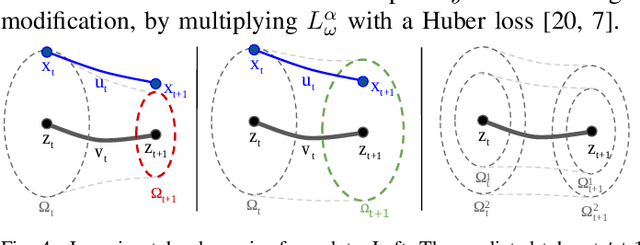

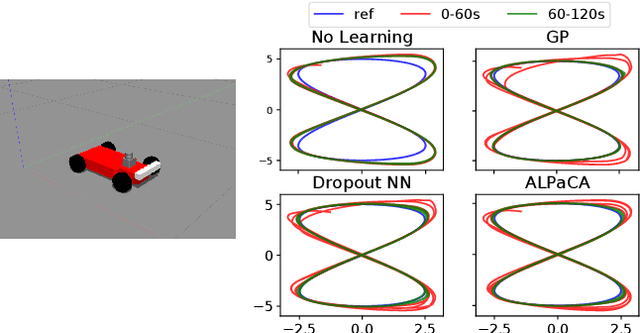

Deep Learning Tubes for Tube MPC

Feb 05, 2020

Abstract: Learning-based control aims to construct models of a system to use for planning or trajectory optimization, e.g. in model-based reinforcement learning. In order to obtain guarantees of safety in this context, uncertainty must be accurately quantified. This uncertainty may come from errors in learning (due to a lack of data, for example), or may be inherent to the system. Propagating uncertainty in learned dynamics models is a difficult problem. Common approaches rely on restrictive assumptions of how distributions are parameterized or propagated in time. In contrast, in this work we propose using deep learning to obtain expressive and flexible models of how these distributions behave, which we then use for nonlinear Model Predictive Control (MPC). First, we introduce a deep quantile regression framework for control which enforces probabilistic quantile bounds and quantifies epistemic uncertainty. Next, using our method we explore three different approaches for learning tubes which contain the possible trajectories of the system, and demonstrate how to use each of them in a Tube MPC scheme. Furthermore, we prove these schemes are recursively feasible and satisfy constraints with a desired margin of probability. Finally, we present experiments in simulation on a nonlinear quadrotor system, demonstrating the practical efficacy of these ideas.
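
Quantile regression is commonly trained with the pinball (quantile) loss. The abstract does not state the exact loss used in the paper, so the following is only a generic sketch of that standard loss; the function names are illustrative and no network or tube construction is shown.

```python
from typing import Sequence


def pinball_loss(y_true: float, y_pred: float, tau: float) -> float:
    """Pinball loss for a single sample at quantile level tau in (0, 1)."""
    diff = y_true - y_pred
    # Under-prediction (diff > 0) costs tau per unit of error,
    # over-prediction (diff < 0) costs (1 - tau) per unit of error.
    return max(tau * diff, (tau - 1.0) * diff)


def mean_pinball_loss(ys: Sequence[float], preds: Sequence[float], tau: float) -> float:
    """Average pinball loss over paired targets and predictions."""
    return sum(pinball_loss(y, p, tau) for y, p in zip(ys, preds)) / len(ys)
```

With `tau` close to 1, under-predictions are penalized far more than over-predictions, which pushes a model trained on this loss toward an upper bound on the target, the kind of bound a tube around a nominal trajectory requires.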

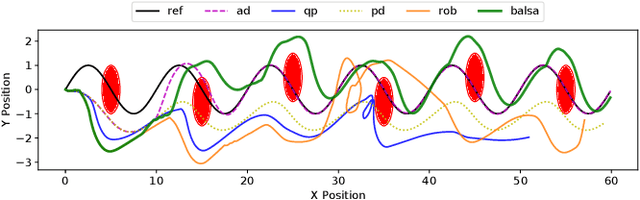

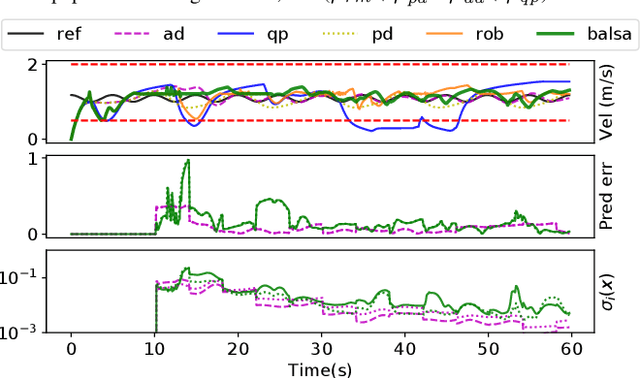

Bayesian Learning-Based Adaptive Control for Safety Critical Systems

Oct 05, 2019

Abstract: Deep learning has enjoyed much recent success, and applying state-of-the-art model learning methods to controls is an exciting prospect. However, there is a strong reluctance to use these methods on safety-critical systems, which have constraints on safety, stability, and real-time performance. We propose a framework which satisfies these constraints while allowing the use of deep neural networks for learning model uncertainties. Central to our method is the use of Bayesian model learning, which provides an avenue for maintaining appropriate degrees of caution in the face of the unknown. In the proposed approach, we develop an adaptive control framework leveraging the theory of stochastic CLFs (Control Lyapunov Functions) and stochastic CBFs (Control Barrier Functions) along with tractable Bayesian model learning via Gaussian Processes or Bayesian neural networks. Under reasonable assumptions, we guarantee stability and safety while adapting to unknown dynamics with probability 1. We demonstrate this architecture for high-speed terrestrial mobility targeting potential applications in safety-critical high-speed Mars rover missions.
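
To give a feel for how a Control Barrier Function constrains a controller, here is a deliberately simplified sketch. It is deterministic and one-dimensional, unlike the stochastic, learning-based formulation in the paper. It assumes the toy system x' = u, the barrier h(x) = x_max - x, and a linear class-K gain `alpha`; all names are hypothetical.

```python
def cbf_filter(u_des: float, x: float, x_max: float, alpha: float = 1.0) -> float:
    """Minimally modify u_des so that h(x) = x_max - x stays non-negative.

    For x' = u, the CBF condition dh/dt >= -alpha * h(x) reads
    -u >= -alpha * (x_max - x), i.e. u <= alpha * (x_max - x).
    The closest admissible input to u_des is therefore a simple clamp.
    """
    u_bound = alpha * (x_max - x)
    return min(u_des, u_bound)


def simulate(x0: float, u_des: float, x_max: float,
             alpha: float = 1.0, dt: float = 0.01, steps: int = 1000) -> float:
    """Forward-Euler rollout of x' = u under the filtered input."""
    x = x0
    for _ in range(steps):
        x += dt * cbf_filter(u_des, x, x_max, alpha)
    return x
```

In this toy case the filter lets the desired input through when the state is far from the boundary and throttles it as the boundary approaches, so the state approaches `x_max` without crossing it (provided `alpha * dt < 1` in the Euler rollout).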

Contact Inertial Odometry: Collisions are your Friend

Aug 30, 2019

Abstract: Autonomous exploration of unknown environments with aerial vehicles remains a challenging problem, especially in perceptually degraded conditions. Dust, smoke, fog, and a lack of visual or LiDAR-based features result in severe difficulties for state estimation and planning. The absence of measurement updates from visual or LiDAR odometry can cause large drifts in velocity estimates while propagating measurements from an IMU. Furthermore, it is not possible to construct a map for collision checking in the absence of pose updates. In this work, we show that it is indeed possible to navigate without any exteroceptive sensing by exploiting collisions instead of treating them as constraints. To this end, we first perform modeling and system identification for a hybrid ground and aerial vehicle which can withstand collisions. Next, we develop a novel external wrench estimation algorithm for this class of vehicles. We then present a novel contact-based inertial odometry (CIO) algorithm: it uses estimated external forces to detect collisions and to generate pseudo-measurements of the robot velocity, fused in an Extended Kalman Filter. Finally, we implement a reactive planner and control law which encourage exploration by bouncing off obstacles. We validate our framework in hardware experiments and show that a quadrotor can traverse a cluttered environment using an IMU only. This work can be used on drones to recover from visual inertial odometry failure or on micro-drones that do not have the payload capacity to carry cameras, LiDARs or powerful computers.
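
The fusion step can be pictured with a scalar Kalman measurement update, shown below as a rough sketch rather than the paper's filter. It assumes that a detected collision yields a velocity pseudo-measurement `z_vel` with variance `r_var`; how that value is derived from the wrench estimate is not covered here, and all names are hypothetical.

```python
from typing import Tuple


def velocity_pseudo_update(v_est: float, p_var: float,
                           z_vel: float, r_var: float) -> Tuple[float, float]:
    """Scalar Kalman update of a velocity estimate with a pseudo-measurement.

    v_est, p_var: prior velocity estimate and its variance (e.g. from IMU propagation)
    z_vel, r_var: pseudo-measurement of velocity and its assumed variance
    """
    gain = p_var / (p_var + r_var)          # Kalman gain for a direct measurement
    v_new = v_est + gain * (z_vel - v_est)  # pull the estimate toward the measurement
    p_new = (1.0 - gain) * p_var            # variance shrinks after the update
    return v_new, p_new
```

Between collisions the variance would keep growing under IMU-only propagation; each update of this kind shrinks it again, which is what keeps the drift in the velocity estimate bounded.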

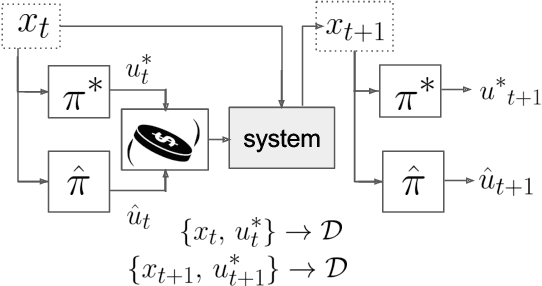

MPC-Inspired Neural Network Policies for Sequential Decision Making

Mar 14, 2018

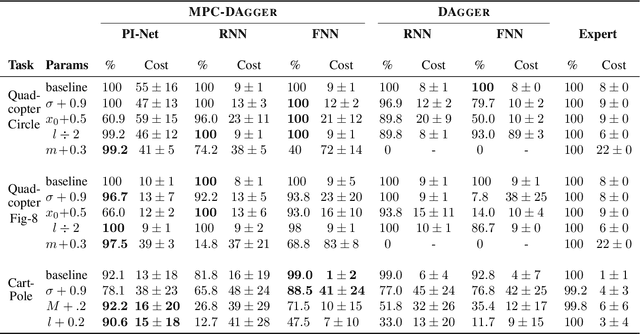

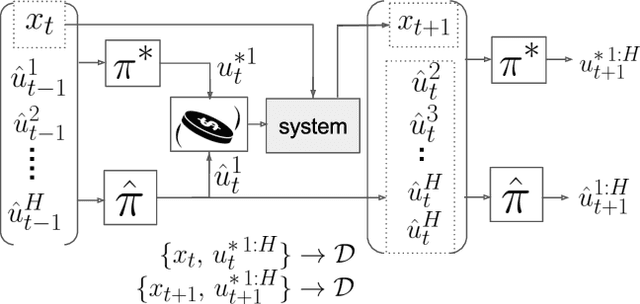

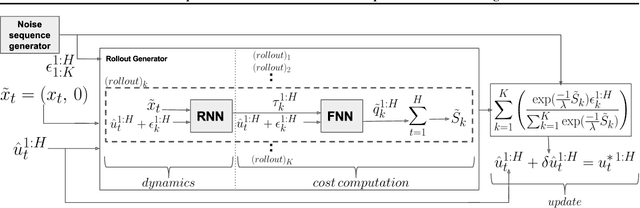

Abstract: In this paper we investigate the use of MPC-inspired neural network policies for sequential decision making. We introduce an extension to the DAgger algorithm for training such policies and show how they have improved training performance and generalization capabilities. We take advantage of this extension to show scalable and efficient training of complex planning policy architectures in continuous state and action spaces. We provide an extensive comparison of neural network policies by considering feed forward policies, recurrent policies, and recurrent policies with planning structure inspired by the Path Integral control framework. Our results suggest that MPC-type recurrent policies have better robustness to disturbances and modeling error.
