David D. Fan

An Addendum to NeBula: Towards Extending TEAM CoSTAR's Solution to Larger Scale Environments

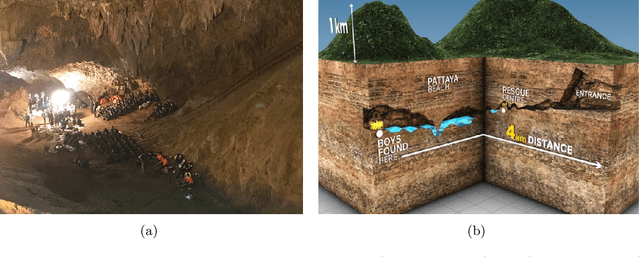

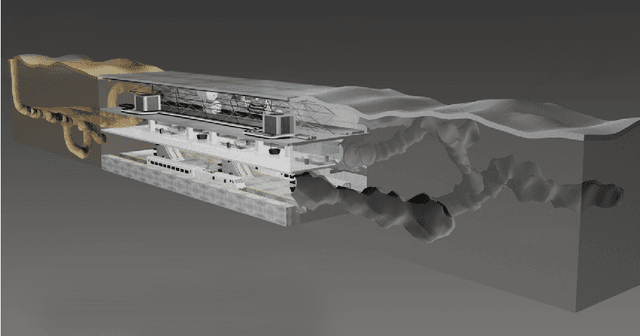

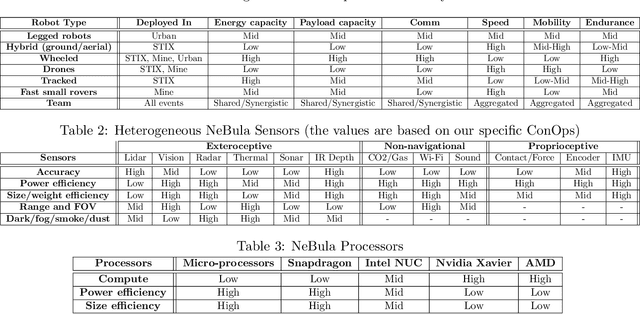

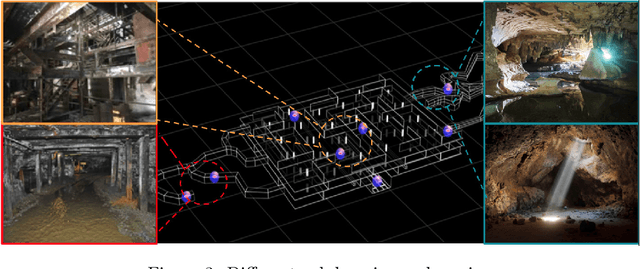

Apr 18, 2025Abstract:This paper presents an appendix to the original NeBula autonomy solution developed by the TEAM CoSTAR (Collaborative SubTerranean Autonomous Robots), participating in the DARPA Subterranean Challenge. Specifically, this paper presents extensions to NeBula's hardware, software, and algorithmic components that focus on increasing the range and scale of the exploration environment. From the algorithmic perspective, we discuss the following extensions to the original NeBula framework: (i) large-scale geometric and semantic environment mapping; (ii) an adaptive positioning system; (iii) probabilistic traversability analysis and local planning; (iv) large-scale POMDP-based global motion planning and exploration behavior; (v) large-scale networking and decentralized reasoning; (vi) communication-aware mission planning; and (vii) multi-modal ground-aerial exploration solutions. We demonstrate the application and deployment of the presented systems and solutions in various large-scale underground environments, including limestone mine exploration scenarios as well as deployment in the DARPA Subterranean challenge.

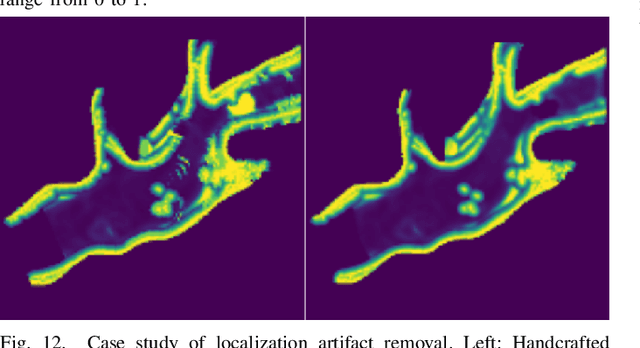

UNRealNet: Learning Uncertainty-Aware Navigation Features from High-Fidelity Scans of Real Environments

Jul 11, 2024

Abstract:Traversability estimation in rugged, unstructured environments remains a challenging problem in field robotics. Often, the need for precise, accurate traversability estimation is in direct opposition to the limited sensing and compute capability present on affordable, small-scale mobile robots. To address this issue, we present a novel method to learn [u]ncertainty-aware [n]avigation features from high-fidelity scans of [real]-world environments (UNRealNet). This network can be deployed on-robot to predict these high-fidelity features using input from lower-quality sensors. UNRealNet predicts dense, metric-space features directly from single-frame lidar scans, thus reducing the effects of occlusion and odometry error. Our approach is label-free, and is able to produce traversability estimates that are robot-agnostic. Additionally, we can leverage UNRealNet's predictive uncertainty to both produce risk-aware traversability estimates, and refine our feature predictions over time. We find that our method outperforms traditional local mapping and inpainting baselines by up to 40%, and demonstrate its efficacy on multiple legged platforms.

SEEK: Semantic Reasoning for Object Goal Navigation in Real World Inspection Tasks

May 16, 2024

Abstract:This paper addresses the problem of object-goal navigation in autonomous inspections in real-world environments. Object-goal navigation is crucial to enable effective inspections in various settings, often requiring the robot to identify the target object within a large search space. Current object inspection methods fall short of human efficiency because they typically cannot bootstrap prior and common sense knowledge as humans do. In this paper, we introduce a framework that enables robots to use semantic knowledge from prior spatial configurations of the environment and semantic common sense knowledge. We propose SEEK (Semantic Reasoning for Object Inspection Tasks) that combines semantic prior knowledge with the robot's observations to search for and navigate toward target objects more efficiently. SEEK maintains two representations: a Dynamic Scene Graph (DSG) and a Relational Semantic Network (RSN). The RSN is a compact and practical model that estimates the probability of finding the target object across spatial elements in the DSG. We propose a novel probabilistic planning framework to search for the object using relational semantic knowledge. Our simulation analyses demonstrate that SEEK outperforms the classical planning and Large Language Models (LLMs)-based methods that are examined in this study in terms of efficiency for object-goal inspection tasks. We validated our approach on a physical legged robot in urban environments, showcasing its practicality and effectiveness in real-world inspection scenarios.

Low Frequency Sampling in Model Predictive Path Integral Control

Apr 03, 2024Abstract:Sampling-based model-predictive controllers have become a powerful optimization tool for planning and control problems in various challenging environments. In this paper, we show how the default choice of uncorrelated Gaussian distributions can be improved upon with the use of a colored noise distribution. Our choice of distribution allows for the emphasis on low frequency control signals, which can result in smoother and more exploratory samples. We use this frequency-based sampling distribution with Model Predictive Path Integral (MPPI) in both hardware and simulation experiments to show better or equal performance on systems with various speeds of input response.

Semantic Belief Behavior Graph: Enabling Autonomous Robot Inspection in Unknown Environments

Jan 30, 2024

Abstract:This paper addresses the problem of autonomous robotic inspection in complex and unknown environments. This capability is crucial for efficient and precise inspections in various real-world scenarios, even when faced with perceptual uncertainty and lack of prior knowledge of the environment. Existing methods for real-world autonomous inspections typically rely on predefined targets and waypoints and often fail to adapt to dynamic or unknown settings. In this work, we introduce the Semantic Belief Behavior Graph (SB2G) framework as a novel approach to semantic-aware autonomous robot inspection. SB2G generates a control policy for the robot, featuring behavior nodes that encapsulate various semantic-based policies designed for inspecting different classes of objects. We design an active semantic search behavior to guide the robot in locating objects for inspection while reducing semantic information uncertainty. The edges in the SB2G encode transitions between these behaviors. We validate our approach through simulation and real-world urban inspections using a legged robotic platform. Our results show that SB2G enables a more efficient inspection policy, exhibiting performance comparable to human-operated inspections.

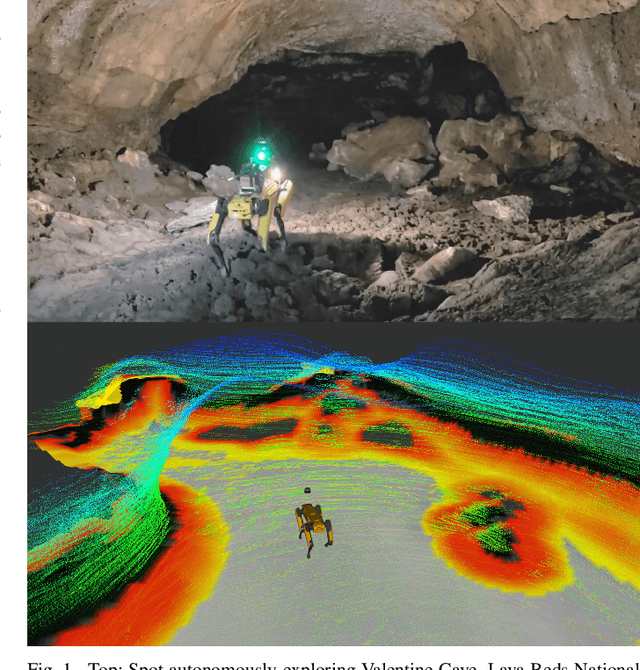

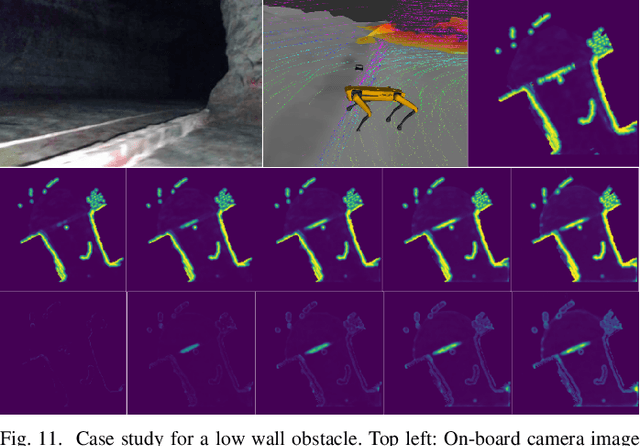

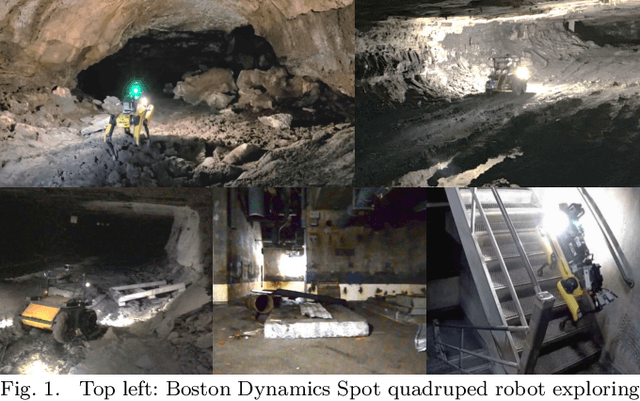

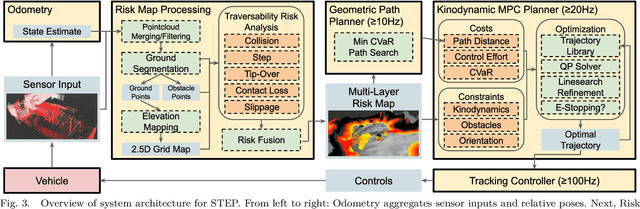

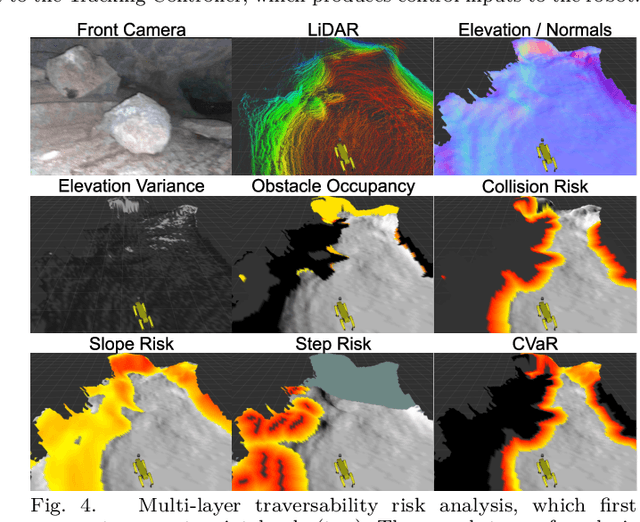

STEP: Stochastic Traversability Evaluation and Planning for Risk-Aware Off-road Navigation; Results from the DARPA Subterranean Challenge

Mar 02, 2023

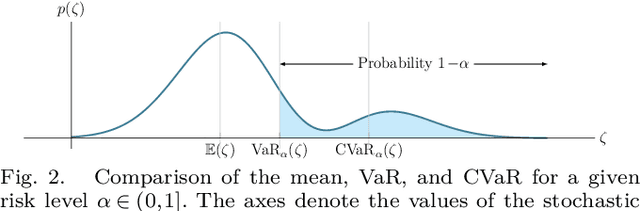

Abstract:Although autonomy has gained widespread usage in structured and controlled environments, robotic autonomy in unknown and off-road terrain remains a difficult problem. Extreme, off-road, and unstructured environments such as undeveloped wilderness, caves, rubble, and other post-disaster sites pose unique and challenging problems for autonomous navigation. Based on our participation in the DARPA Subterranean Challenge, we propose an approach to improve autonomous traversal of robots in subterranean environments that are perceptually degraded and completely unknown through a traversability and planning framework called STEP (Stochastic Traversability Evaluation and Planning). We present 1) rapid uncertainty-aware mapping and traversability evaluation, 2) tail risk assessment using the Conditional Value-at-Risk (CVaR), 3) efficient risk and constraint-aware kinodynamic motion planning using sequential quadratic programming-based (SQP) model predictive control (MPC), 4) fast recovery behaviors to account for unexpected scenarios that may cause failure, and 5) risk-based gait adaptation for quadrupedal robots. We illustrate and validate extensive results from our experiments on wheeled and legged robotic platforms in field studies at the Valentine Cave, CA (cave environment), Kentucky Underground, KY (mine environment), and Louisville Mega Cavern, KY (final competition site for the DARPA Subterranean Challenge with tunnel, urban, and cave environments).

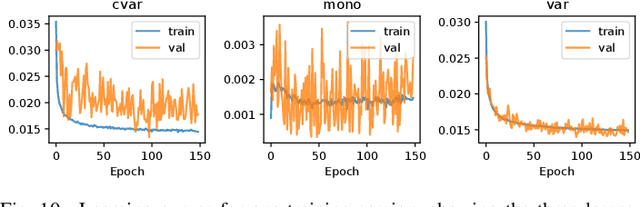

Learning Risk-aware Costmaps for Traversability in Challenging Environments

Jul 25, 2021

Abstract:One of the main challenges in autonomous robotic exploration and navigation in unknown and unstructured environments is determining where the robot can or cannot safely move. A significant source of difficulty in this determination arises from stochasticity and uncertainty, coming from localization error, sensor sparsity and noise, difficult-to-model robot-ground interactions, and disturbances to the motion of the vehicle. Classical approaches to this problem rely on geometric analysis of the surrounding terrain, which can be prone to modeling errors and can be computationally expensive. Moreover, modeling the distribution of uncertain traversability costs is a difficult task, compounded by the various error sources mentioned above. In this work, we take a principled learning approach to this problem. We introduce a neural network architecture for robustly learning the distribution of traversability costs. Because we are motivated by preserving the life of the robot, we tackle this learning problem from the perspective of learning tail-risks, i.e. the Conditional Value-at-Risk (CVaR). We show that this approach reliably learns the expected tail risk given a desired probability risk threshold between 0 and 1, producing a traversability costmap which is more robust to outliers, more accurately captures tail risks, and is more computationally efficient, when compared against baselines. We validate our method on data collected a legged robot navigating challenging, unstructured environments including an abandoned subway, limestone caves, and lava tube caves.

NeBula: Quest for Robotic Autonomy in Challenging Environments; TEAM CoSTAR at the DARPA Subterranean Challenge

Mar 28, 2021

Abstract:This paper presents and discusses algorithms, hardware, and software architecture developed by the TEAM CoSTAR (Collaborative SubTerranean Autonomous Robots), competing in the DARPA Subterranean Challenge. Specifically, it presents the techniques utilized within the Tunnel (2019) and Urban (2020) competitions, where CoSTAR achieved 2nd and 1st place, respectively. We also discuss CoSTAR's demonstrations in Martian-analog surface and subsurface (lava tubes) exploration. The paper introduces our autonomy solution, referred to as NeBula (Networked Belief-aware Perceptual Autonomy). NeBula is an uncertainty-aware framework that aims at enabling resilient and modular autonomy solutions by performing reasoning and decision making in the belief space (space of probability distributions over the robot and world states). We discuss various components of the NeBula framework, including: (i) geometric and semantic environment mapping; (ii) a multi-modal positioning system; (iii) traversability analysis and local planning; (iv) global motion planning and exploration behavior; (i) risk-aware mission planning; (vi) networking and decentralized reasoning; and (vii) learning-enabled adaptation. We discuss the performance of NeBula on several robot types (e.g. wheeled, legged, flying), in various environments. We discuss the specific results and lessons learned from fielding this solution in the challenging courses of the DARPA Subterranean Challenge competition.

STEP: Stochastic Traversability Evaluation and Planning for Safe Off-road Navigation

Mar 04, 2021

Abstract:Although ground robotic autonomy has gained widespread usage in structured and controlled environments, autonomy in unknown and off-road terrain remains a difficult problem. Extreme, off-road, and unstructured environments such as undeveloped wilderness, caves, and rubble pose unique and challenging problems for autonomous navigation. To tackle these problems we propose an approach for assessing traversability and planning a safe, feasible, and fast trajectory in real-time. Our approach, which we name STEP (Stochastic Traversability Evaluation and Planning), relies on: 1) rapid uncertainty-aware mapping and traversability evaluation, 2) tail risk assessment using the Conditional Value-at-Risk (CVaR), and 3) efficient risk and constraint-aware kinodynamic motion planning using sequential quadratic programming-based (SQP) model predictive control (MPC). We analyze our method in simulation and validate its efficacy on wheeled and legged robotic platforms exploring extreme terrains including an underground lava tube.

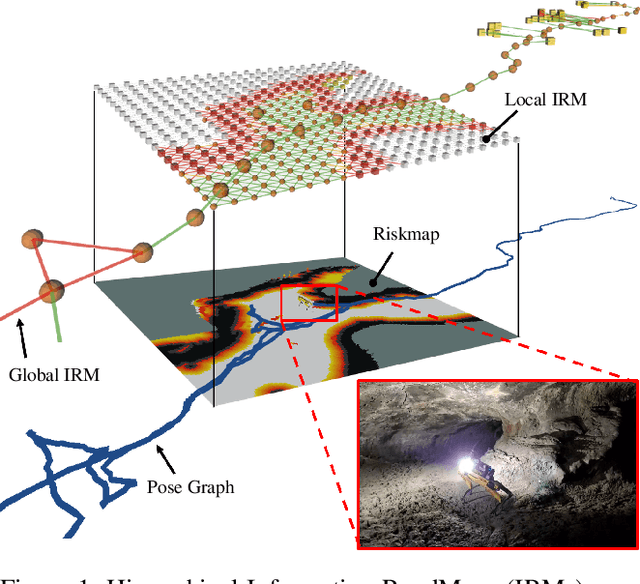

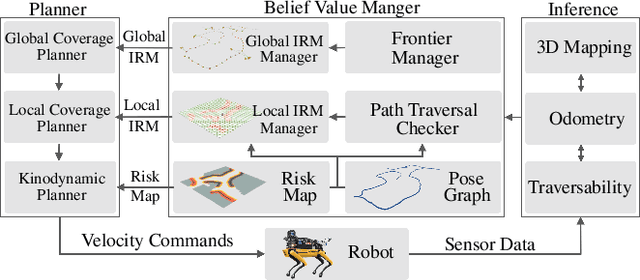

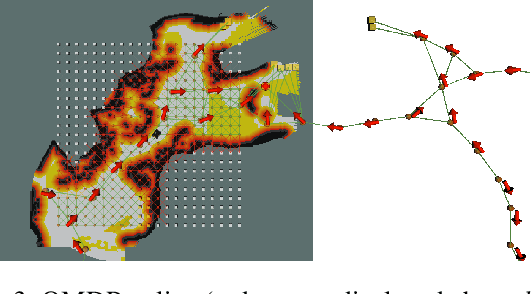

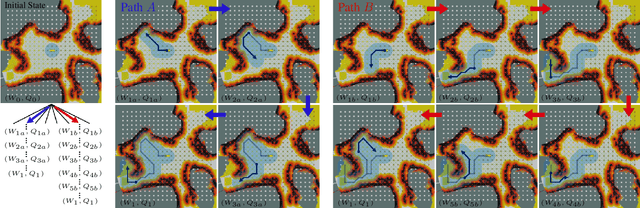

PLGRIM: Hierarchical Value Learning for Large-scale Exploration in Unknown Environments

Feb 10, 2021

Abstract:In order for a robot to explore an unknown environment autonomously, it must account for uncertainty in sensor measurements, hazard assessment, localization, and motion execution. Making decisions for maximal reward in a stochastic setting requires learning values and constructing policies over a belief space, i.e., probability distribution of the robot-world state. Value learning over belief spaces suffer from computational challenges in high-dimensional spaces, such as large spatial environments and long temporal horizons for exploration. At the same time, it should be adaptive and resilient to disturbances at run time in order to ensure the robot's safety, as required in many real-world applications. This work proposes a scalable value learning framework, PLGRIM (Probabilistic Local and Global Reasoning on Information roadMaps), that bridges the gap between (i) local, risk-aware resiliency and (ii) global, reward-seeking mission objectives. By leveraging hierarchical belief space planners with information-rich graph structures, PLGRIM can address large-scale exploration problems while providing locally near-optimal coverage plans. PLGRIM is a step toward enabling belief space planners on physical robots operating in unknown and complex environments. We validate our proposed framework with a high-fidelity dynamic simulation in diverse environments and with physical hardware, Boston Dynamics' Spot robot, in a lava tube.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge