"Time": models, code, and papers

ETA Prediction with Graph Neural Networks in Google Maps

Aug 25, 2021

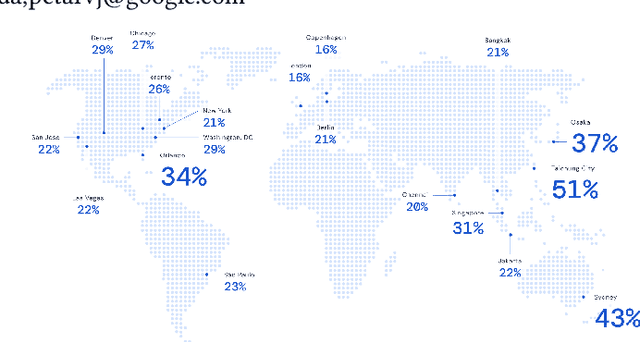

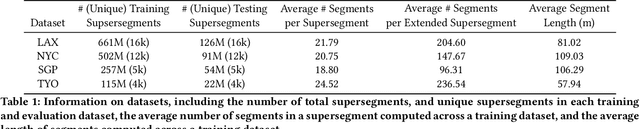

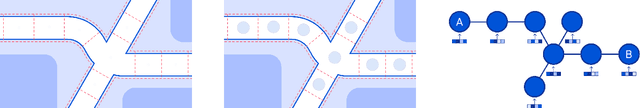

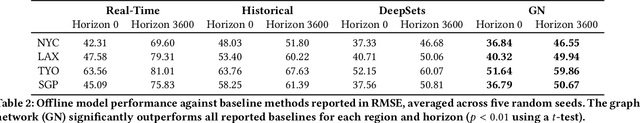

Travel-time prediction constitutes a task of high importance in transportation networks, with web mapping services like Google Maps regularly serving vast quantities of travel time queries from users and enterprises alike. Further, such a task requires accounting for complex spatiotemporal interactions (modelling both the topological properties of the road network and anticipating events -- such as rush hours -- that may occur in the future). Hence, it is an ideal target for graph representation learning at scale. Here we present a graph neural network estimator for estimated time of arrival (ETA) which we have deployed in production at Google Maps. While our main architecture consists of standard GNN building blocks, we further detail the usage of training schedule methods such as MetaGradients in order to make our model robust and production-ready. We also provide prescriptive studies: ablating on various architectural decisions and training regimes, and qualitative analyses on real-world situations where our model provides a competitive edge. Our GNN proved powerful when deployed, significantly reducing negative ETA outcomes in several regions compared to the previous production baseline (40+% in cities like Sydney).

Synthetic ECG Signal Generation Using Generative Neural Networks

Dec 05, 2021

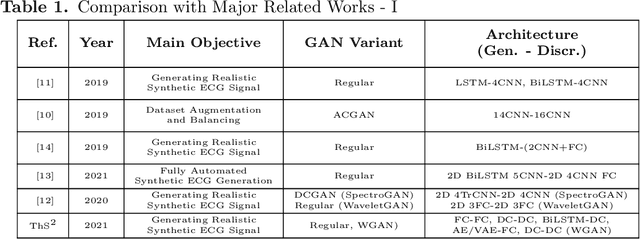

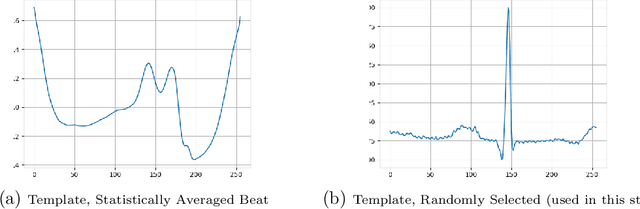

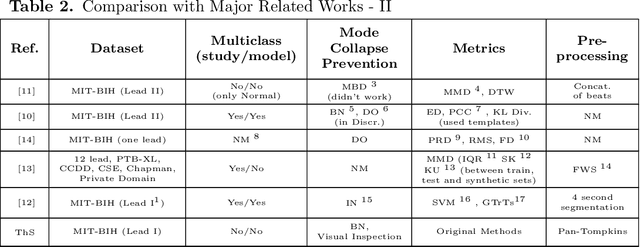

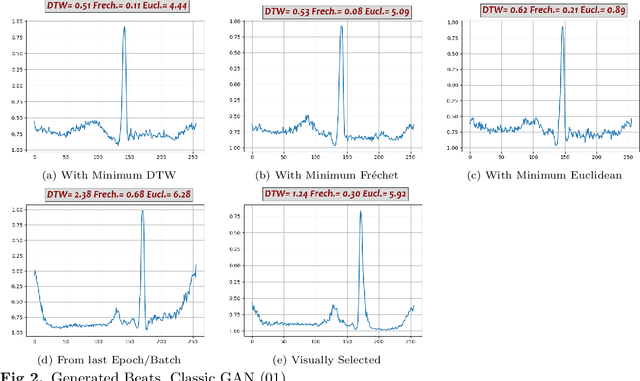

Electrocardiogram (ECG) datasets tend to be highly imbalanced due to the scarcity of abnormal cases. Additionally, the use of real patients' ECG is highly regulated due to privacy issues. Therefore, there is always a need for more ECG data, especially for the training of automatic diagnosis machine learning models, which perform better when trained on a balanced dataset. We studied the synthetic ECG generation capability of 5 different models from the generative adversarial network (GAN) family and compared their performances, the focus being only on Normal cardiac cycles. Dynamic Time Warping (DTW), Fréchet, and Euclidean distance functions were employed to quantitatively measure performance. Five different methods for evaluating generated beats were proposed and applied. We also proposed 3 new concepts (threshold, accepted beat and productivity rate) and employed them along with the aforementioned methods as a systematic way for comparison between models. The results show that all the tested models can to an extent successfully mass-generate acceptable heartbeats with high similarity in morphological features, and potentially all of them can be used to augment imbalanced datasets. However, visual inspections of generated beats favor BiLSTM-DC GAN and WGAN, as they produce statistically more acceptable beats. Also, with regard to productivity rate, the Classic GAN is superior with a 72% productivity rate.

A General Optimization Framework for Dynamic Time Warping

May 31, 2019

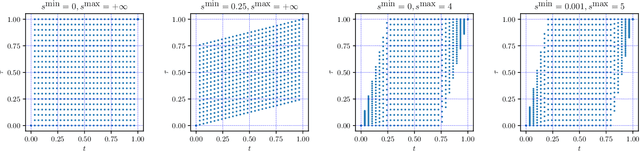

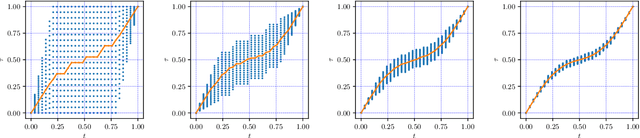

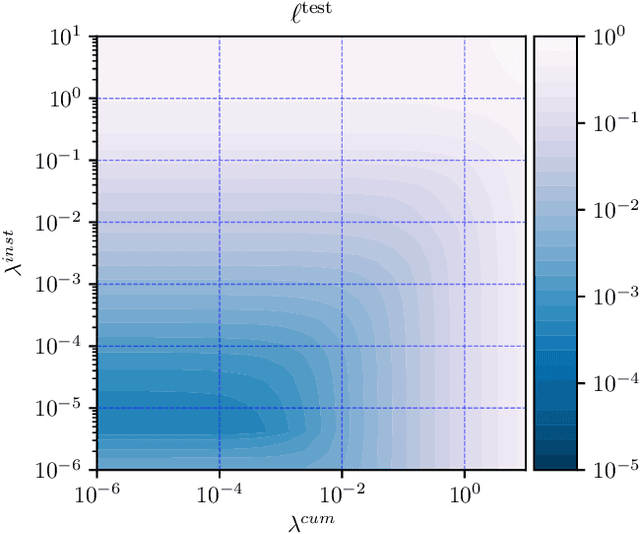

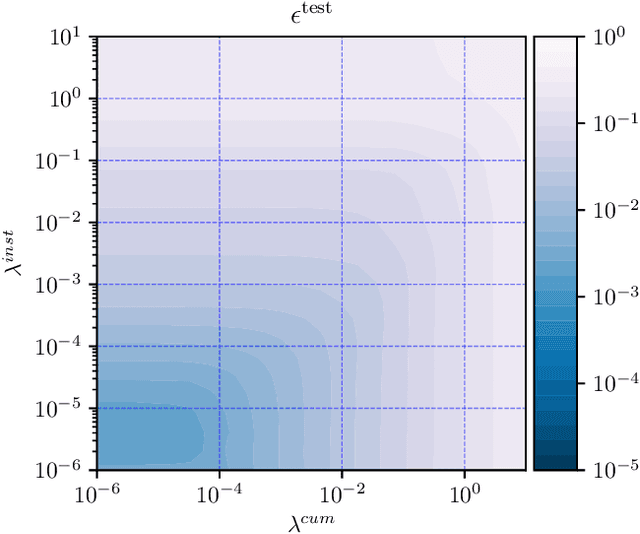

The goal of dynamic time warping is to transform or warp time in order to approximately align two signals together. We pose the choice of warping function as an optimization problem with several terms in the objective. The first term measures the misalignment of the time-warped signals. Two additional regularization terms penalize the cumulative warping and the instantaneous rate of time warping; constraints on the warping can be imposed by assigning the value +inf to the regularization terms. Different choices of the three objective terms yield different time warping functions that trade off signal fit or alignment and properties of the warping function. The optimization problem we formulate is a classical optimal control problem, with initial and terminal constraints, and a state dimension of one. We describe an effective general method that minimizes the objective by discretizing the values of the original and warped time, and using standard dynamic programming to compute the (globally) optimal warping function with the discretized values. Iterated refinement of this scheme yields a high accuracy warping function in just a few iterations. Our method is implemented as an open source Python package GDTW.
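As a point of reference for the dynamic programming step mentioned above, here is a minimal sketch of the classic discrete DTW recursion. It is the textbook variant with an absolute-difference local cost and no regularization terms; it is not the GDTW package's API, and the function name `dtw_cost` is hypothetical.

```python
import math


def dtw_cost(x, y):
    """Classic discrete DTW alignment cost between two 1-D sequences.

    Textbook recursion only: absolute-difference local cost, no penalties
    on cumulative or instantaneous warping (an assumption of this sketch).
    """
    n, m = len(x), len(y)
    # cost[i][j] = best cost of aligning x[:i] with y[:j]
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(x[i - 1] - y[j - 1])
            # Extend the cheapest of the three admissible predecessor alignments.
            cost[i][j] = local + min(
                cost[i - 1][j],      # advance in x only
                cost[i][j - 1],      # advance in y only
                cost[i - 1][j - 1],  # advance in both
            )
    return cost[n][m]
```

For example, `dtw_cost([1, 2, 3], [1, 2, 2, 3])` is zero because the repeated sample can be absorbed by warping, whereas a pointwise comparison of the two sequences is not even defined for unequal lengths.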

ES-dRNN: A Hybrid Exponential Smoothing and Dilated Recurrent Neural Network Model for Short-Term Load Forecasting

Dec 05, 2021

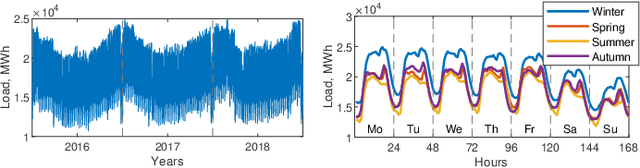

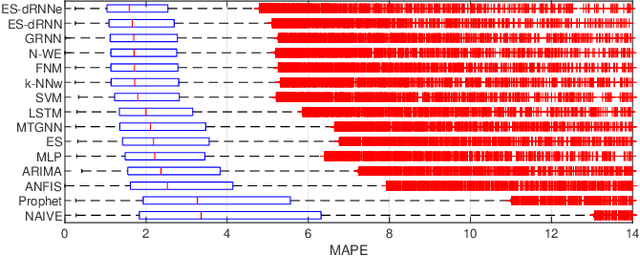

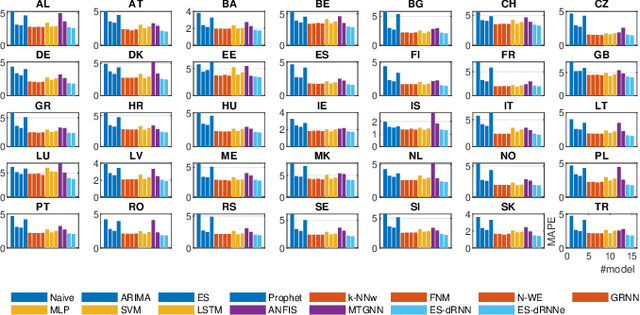

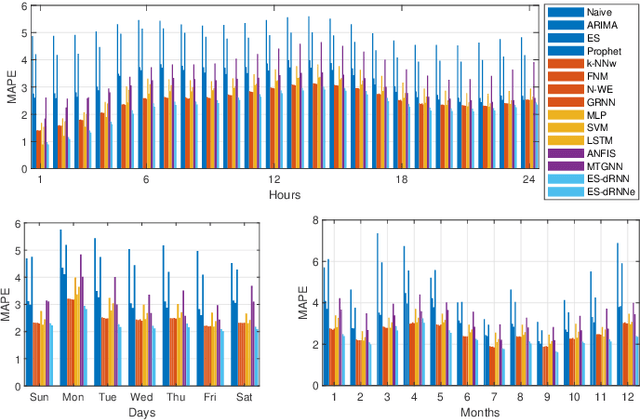

Short-term load forecasting (STLF) is challenging due to complex time series (TS) which express three seasonal patterns and a nonlinear trend. This paper proposes a novel hybrid hierarchical deep learning model that deals with multiple seasonality and produces both point forecasts and predictive intervals (PIs). It combines exponential smoothing (ES) and a recurrent neural network (RNN). ES extracts dynamically the main components of each individual TS and enables on-the-fly deseasonalization, which is particularly useful when operating on a relatively small data set. A multi-layer RNN is equipped with a new type of dilated recurrent cell designed to efficiently model both short and long-term dependencies in TS. To improve the internal TS representation and thus the model's performance, RNN learns simultaneously both the ES parameters and the main mapping function transforming inputs into forecasts. We compare our approach against several baseline methods, including classical statistical methods and machine learning (ML) approaches, on STLF problems for 35 European countries. The empirical study clearly shows that the proposed model has high expressive power to solve nonlinear stochastic forecasting problems with TS including multiple seasonality and significant random fluctuations. In fact, it outperforms both statistical and state-of-the-art ML models in terms of accuracy.
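To illustrate the exponential smoothing component in its simplest form, the sketch below tracks only a level term. This is an assumption made for brevity: the abstract describes a model with multiple seasonal components whose ES parameters are learned jointly with the RNN, none of which is reproduced here. The name `simple_exponential_smoothing` is hypothetical.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the smoothed level after each observation.

    Level update: level_t = alpha * y_t + (1 - alpha) * level_{t-1},
    initialised with the first observation (a common convention,
    assumed here).
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = float(series[0])
    levels = [level]
    for y in series[1:]:
        level = alpha * y + (1.0 - alpha) * level
        levels.append(level)
    return levels
```

A larger `alpha` makes the level follow recent observations more closely; a smaller one averages over a longer history.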

A Priori Calibration of Transient Kinetics Data via Machine Learning

Sep 27, 2021

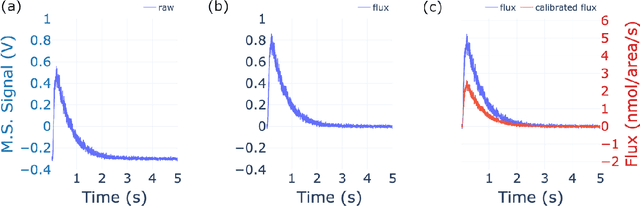

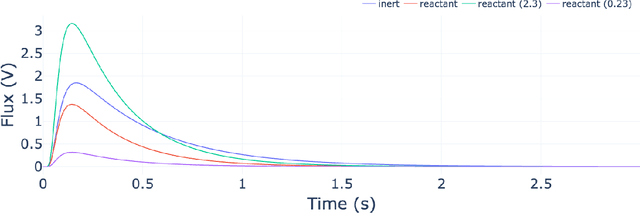

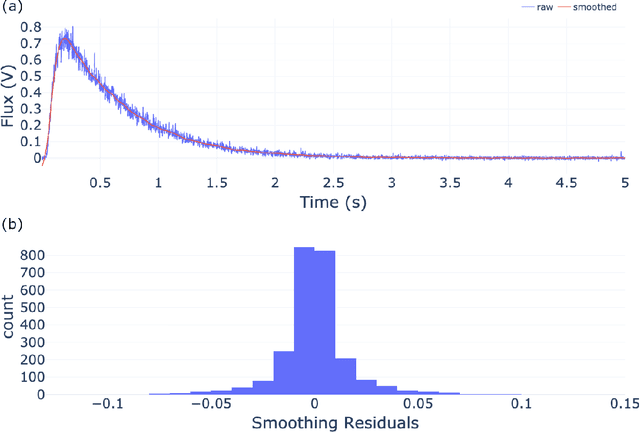

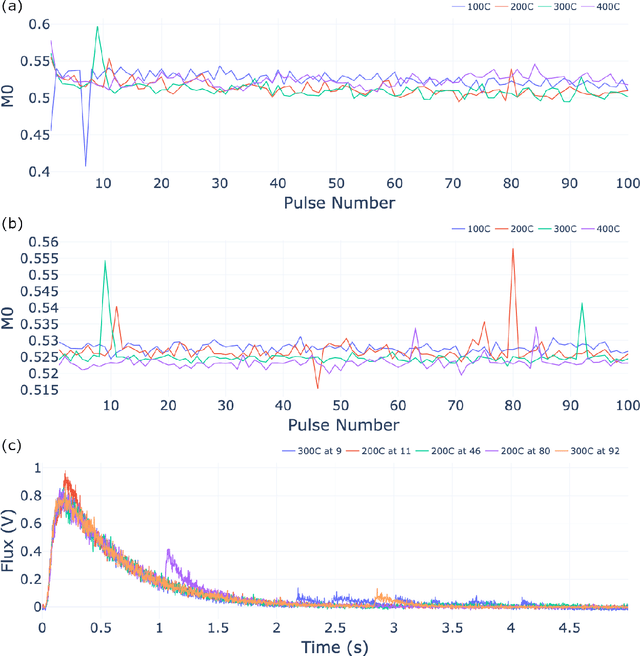

The temporal analysis of products reactor provides a vast amount of transient kinetic information that may be used to describe a variety of chemical features including the residence time distribution, kinetic coefficients, number of active sites, and the reaction mechanism. However, as with any measurement device, the TAP reactor signal is convoluted with noise. To reduce the uncertainty of the kinetic measurement and any derived parameters or mechanisms, proper preprocessing must be performed prior to any advanced analysis. This preprocessing consists of baseline correction, i.e., a shift in the voltage response, and calibration, i.e., a scaling of the flux response based on prior experiments. The current methodology of preprocessing requires significant user discretion and reliance on previous experiments that may drift over time. Herein we use machine learning techniques combined with physical constraints to convert the raw instrument signal to chemical information. As such, the proposed methodology demonstrates clear benefits over the traditional preprocessing in the calibration of the inert and feed mixture products without need of prior calibration experiments or heuristic input from the user.

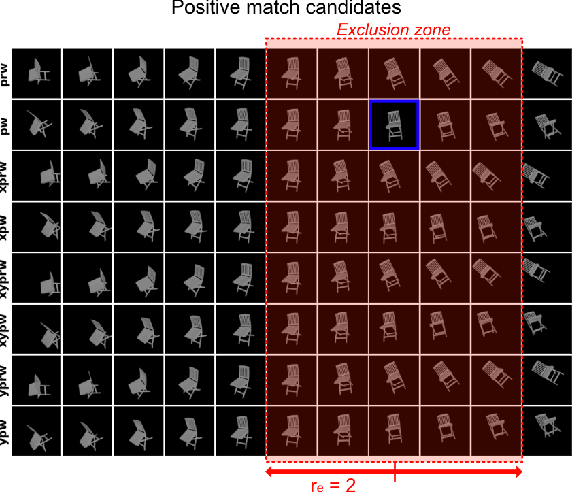

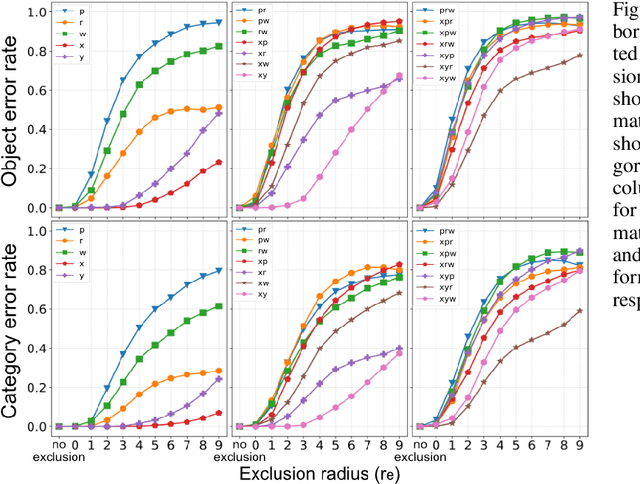

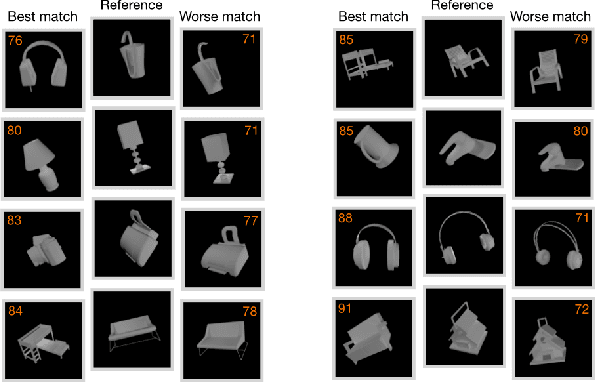

ShapeY: Measuring Shape Recognition Capacity Using Nearest Neighbor Matching

Nov 16, 2021

Object recognition in humans depends primarily on shape cues. We have developed a new approach to measuring the shape recognition performance of a vision system based on nearest neighbor view matching within the system's embedding space. Our performance benchmark, ShapeY, allows for precise control of task difficulty, by enforcing that view matching span a specified degree of 3D viewpoint change and/or appearance change. As a first test case we measured the performance of ResNet50 pre-trained on ImageNet. Matching error rates were high. For example, a 27 degree change in object pitch led ResNet50 to match the incorrect object 45% of the time. Appearance changes were also highly disruptive. Examination of false matches indicates that ResNet50's embedding space is severely "tangled". These findings suggest ShapeY can be a useful tool for charting the progress of artificial vision systems towards human-level shape recognition capabilities.
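The core idea of nearest neighbor view matching can be sketched as follows. This is a simplified illustration, not the ShapeY benchmark itself: cosine similarity is an assumed choice of metric, the function names are hypothetical, and the benchmark's control over viewpoint and appearance change (restricting which views may be matched) is not modelled.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nn_match_error(embeddings, labels):
    """Fraction of views whose nearest *other* view shows a different object."""
    errors = 0
    for i, query in enumerate(embeddings):
        # Search all views except the query itself.
        candidates = (j for j in range(len(embeddings)) if j != i)
        best = max(candidates, key=lambda j: cosine_similarity(query, embeddings[j]))
        if labels[best] != labels[i]:
            errors += 1
    return errors / len(embeddings)
```

In an embedding space where views of the same object cluster together, this error rate approaches zero; a "tangled" space, where views of different objects lie closer than views of the same object, drives it up.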

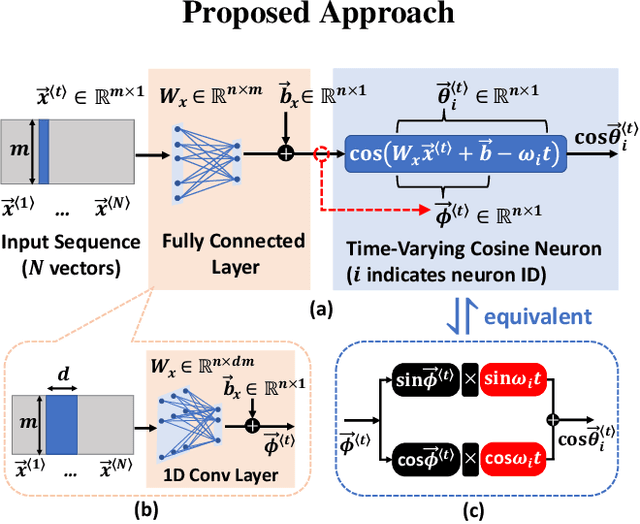

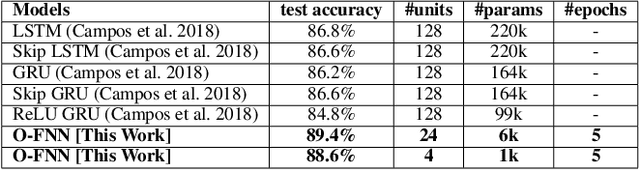

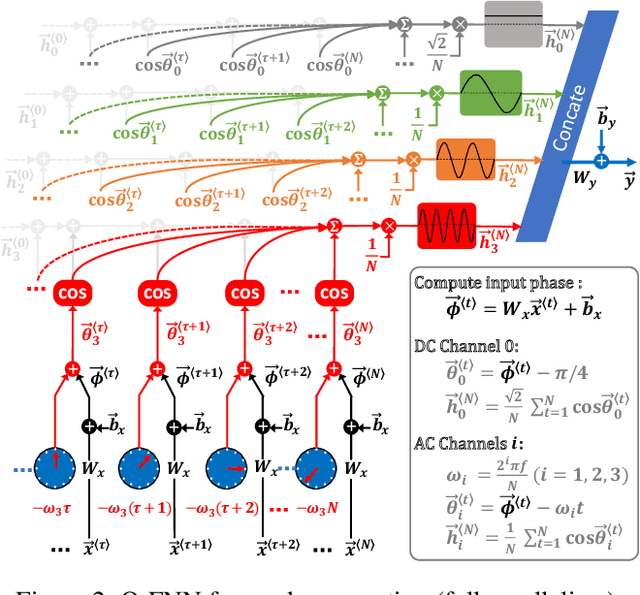

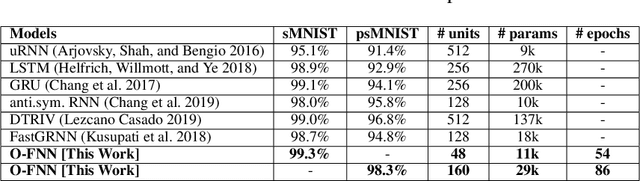

Oscillatory Fourier Neural Network: A Compact and Efficient Architecture for Sequential Processing

Sep 14, 2021

Tremendous progress has been made in sequential processing with the recent advances in recurrent neural networks. However, recurrent architectures face the challenge of exploding/vanishing gradients during training, and require significant computational resources to execute back-propagation through time. Moreover, large models are typically needed for executing complex sequential tasks. To address these challenges, we propose a novel neuron model that has cosine activation with a time-varying component for sequential processing. The proposed neuron provides an efficient building block for projecting sequential inputs into the spectral domain, which helps to retain long-term dependencies with minimal extra model parameters and computation. A new type of recurrent network architecture, named Oscillatory Fourier Neural Network, based on the proposed neuron is presented and applied to various types of sequential tasks. We demonstrate that a recurrent neural network with the proposed neuron model is mathematically equivalent to a simplified form of the discrete Fourier transform applied to a periodic activation. In particular, the computationally intensive back-propagation through time in training is eliminated, leading to faster training while achieving state-of-the-art inference accuracy in a diverse group of sequential tasks. For instance, applying the proposed model to sentiment analysis on the IMDB review dataset reaches 89.4% test accuracy within 5 epochs, accompanied by an over 35x reduction in model size compared to LSTM. The proposed novel RNN architecture is well poised for intelligent sequential processing in resource-constrained hardware.

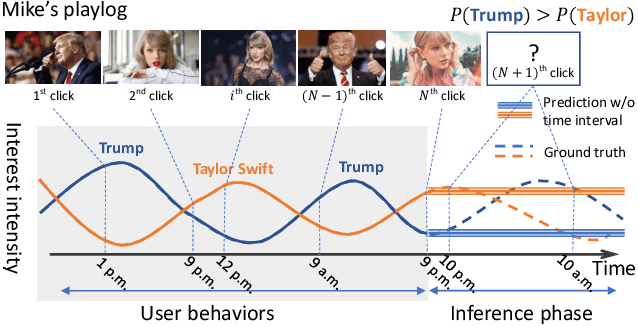

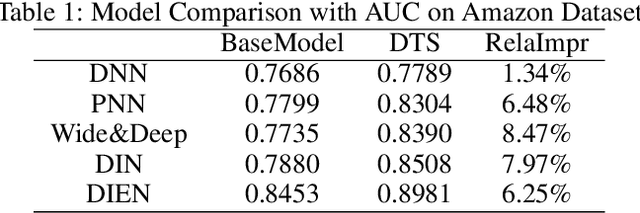

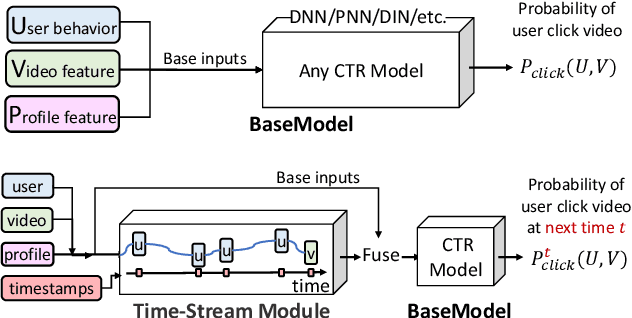

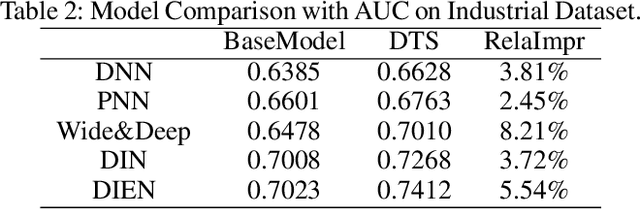

Deep Time-Stream Framework for Click-Through Rate Prediction by Tracking Interest Evolution

Jan 08, 2020

Click-through rate (CTR) prediction is an essential task in industrial applications such as video recommendation. Recently, deep learning models have been proposed to learn the representation of users' overall interests, while ignoring the fact that interests may dynamically change over time. We argue that it is necessary to consider continuous-time information in CTR models to track user interest trends from rich historical behaviors. In this paper, we propose a novel Deep Time-Stream framework (DTS) which introduces the time information through an ordinary differential equation (ODE). DTS continuously models the evolution of interests using a neural network, and thus is able to tackle the challenge of dynamically representing users' interests based on their historical behaviors. In addition, our framework can be seamlessly applied to any existing deep CTR model by leveraging the additional Time-Stream Module, while no changes are made to the original CTR models. Experiments on a public dataset as well as a real industry dataset with billions of samples demonstrate the effectiveness of the proposed approach, which achieves superior performance compared with existing methods.

* 8 pages. arXiv admin note: text overlap with arXiv:1809.03672 by other authors

Exploratory Factor Analysis of Data on a Sphere

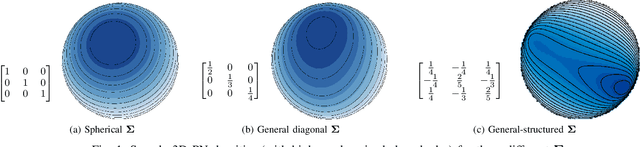

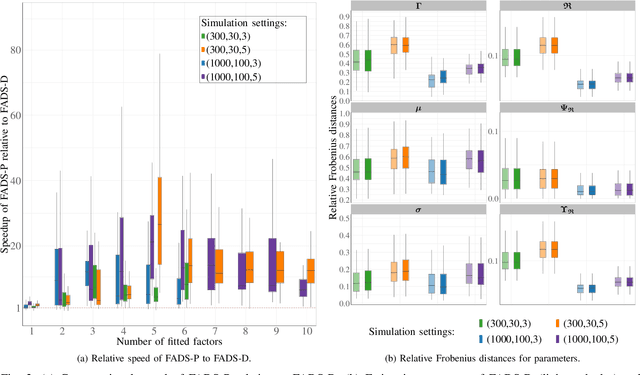

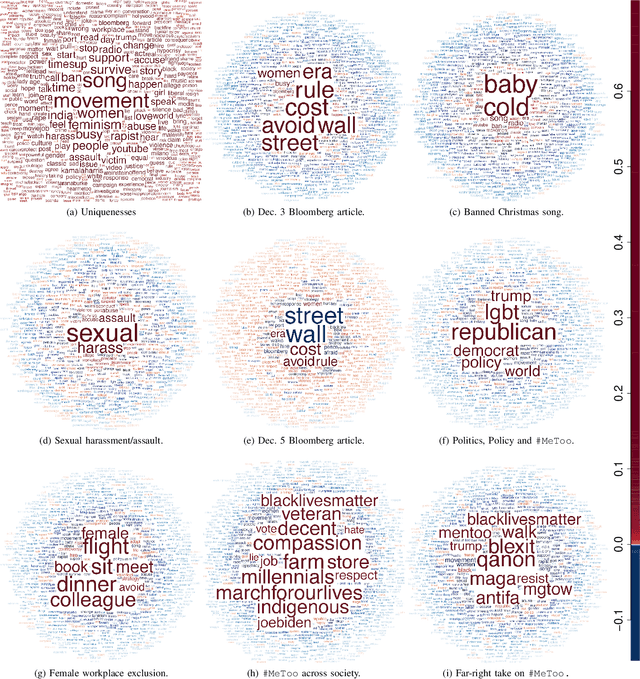

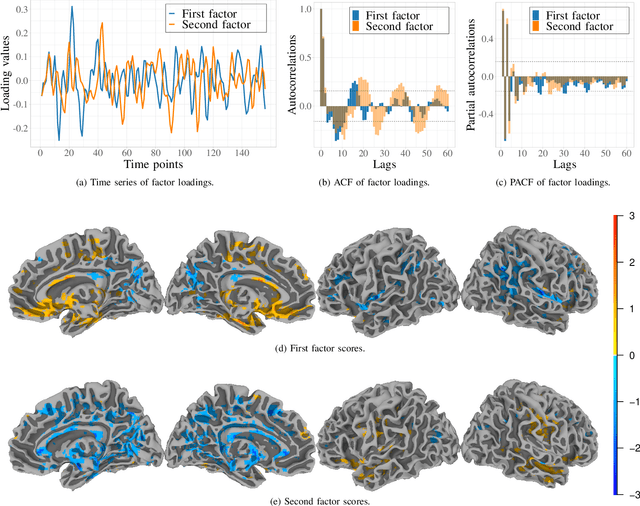

Nov 09, 2021

Data on high-dimensional spheres arise frequently in many disciplines either naturally or as a consequence of preliminary processing and can have intricate dependence structure that needs to be understood. We develop exploratory factor analysis of the projected normal distribution to explain the variability in such data using a few easily interpreted latent factors. Our methodology provides maximum likelihood estimates through a novel fast alternating expectation profile conditional maximization algorithm. Results on simulation experiments on a wide range of settings are uniformly excellent. Our methodology provides interpretable and insightful results when applied to tweets with the #MeToo hashtag in early December 2018, to time-course functional Magnetic Resonance Images of the average pre-teen brain at rest, to characterize handwritten digits, and to gene expression data from cancerous cells in the Cancer Genome Atlas.

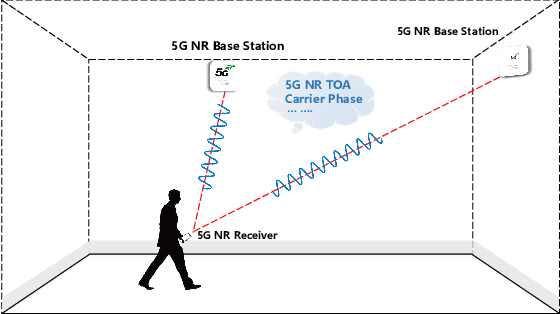

Carrier Phase Ranging for Indoor Positioning with 5G NR Signals

Dec 22, 2021

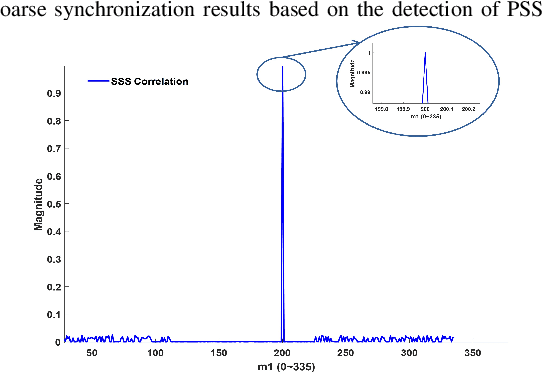

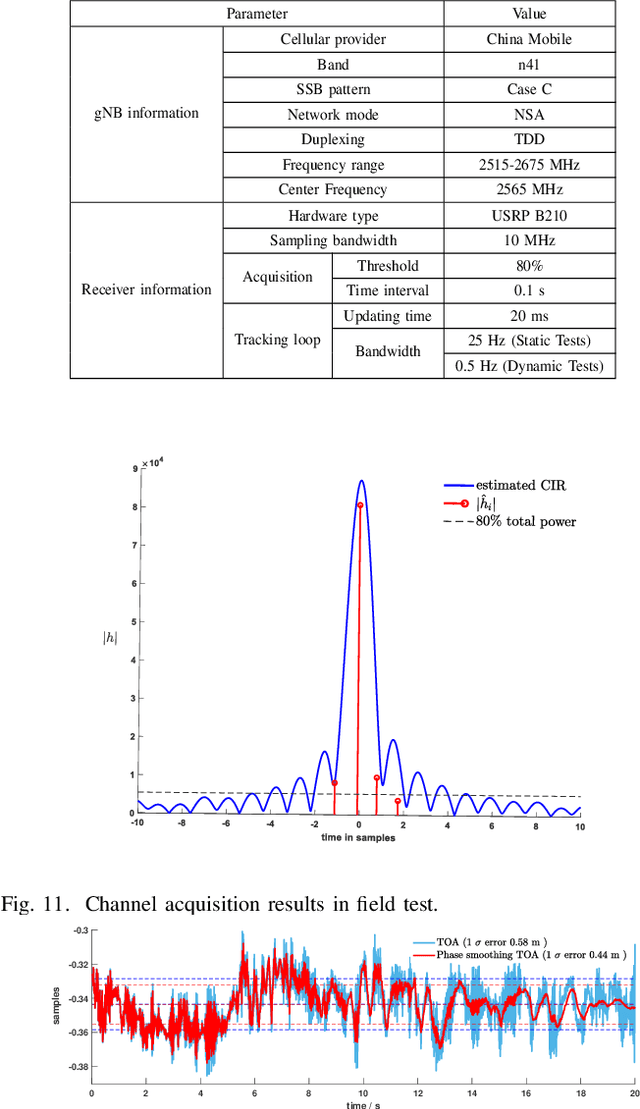

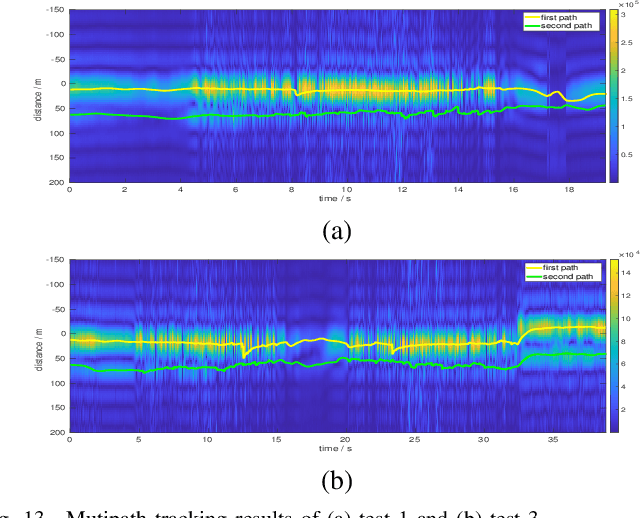

Indoor positioning is one of the core technologies of the Internet of Things (IoT) and artificial intelligence (AI), and is expected to play a significant role in the upcoming era of AI. However, owing to the complexity of indoor environments, it is still highly challenging to achieve continuous and reliable indoor positioning. Currently, 5G cellular networks are being deployed worldwide, and their new technologies have brought new approaches for improving the performance of wireless indoor positioning. In this paper, we investigate indoor positioning under 5G new radio (NR), which has been standardized and is being commercially operated in mass markets. Specifically, a solution is proposed and a software defined receiver (SDR) is developed for indoor positioning. With our SDR indoor positioning system, the 5G NR signals are first sampled by a universal software radio peripheral (USRP), and then coarse synchronization is achieved by detecting the start of the synchronization signal block (SSB). Then, with the assistance of the pilots transmitted on the physical broadcast channel (PBCH), multipath acquisition and delay tracking are sequentially carried out to estimate the time of arrival (ToA) of received signals. Furthermore, to improve the ToA ranging accuracy, the carrier phase of the first arrived path is estimated. Finally, to quantify the accuracy of our ToA estimation method, indoor field tests are carried out in an office environment, where a 5G NR base station (known as gNB) is installed for commercial use. Our test results show that, in the static test scenarios, the ToA accuracy measured by the 1-σ error interval is about 0.5 m, while in the pedestrian mobile environment, the probability of range accuracy within 0.8 m is 95%.

* 13 pages
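To put the reported range accuracy in timing terms, the sketch below converts between time of arrival and distance. It assumes one-way propagation at the vacuum speed of light with perfectly synchronized transmitter and receiver clocks, which is a simplification and not a description of the paper's receiver; the function names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by definition of the metre


def toa_to_range_m(toa_s):
    """Distance travelled by a signal with the given one-way time of arrival."""
    return SPEED_OF_LIGHT_M_PER_S * toa_s


def range_error_to_timing_error_s(range_error_m):
    """Timing error that corresponds to a given range error."""
    return range_error_m / SPEED_OF_LIGHT_M_PER_S
```

Under these assumptions, a 0.5 m range error corresponds to a timing error of roughly 1.7 ns, which indicates how fine the delay estimate has to be.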
