Xinyi Li

Geometric Operator Learning with Optimal Transport

Jul 26, 2025Abstract:We propose integrating optimal transport (OT) into operator learning for partial differential equations (PDEs) on complex geometries. Classical geometric learning methods typically represent domains as meshes, graphs, or point clouds. Our approach generalizes discretized meshes to mesh density functions, formulating geometry embedding as an OT problem that maps these functions to a uniform density in a reference space. Compared to previous methods relying on interpolation or shared deformation, our OT-based method employs instance-dependent deformation, offering enhanced flexibility and effectiveness. For 3D simulations focused on surfaces, our OT-based neural operator embeds the surface geometry into a 2D parameterized latent space. By performing computations directly on this 2D representation of the surface manifold, it achieves significant computational efficiency gains compared to volumetric simulation. Experiments with Reynolds-averaged Navier-Stokes equations (RANS) on the ShapeNet-Car and DrivAerNet-Car datasets show that our method achieves better accuracy and also reduces computational expenses in terms of both time and memory usage compared to existing machine learning models. Additionally, our model demonstrates significantly improved accuracy on the FlowBench dataset, underscoring the benefits of employing instance-dependent deformation for datasets with highly variable geometries.

DSAGL: Dual-Stream Attention-Guided Learning for Weakly Supervised Whole Slide Image Classification

May 29, 2025Abstract:Whole-slide images (WSIs) are critical for cancer diagnosis due to their ultra-high resolution and rich semantic content. However, their massive size and the limited availability of fine-grained annotations pose substantial challenges for conventional supervised learning. We propose DSAGL (Dual-Stream Attention-Guided Learning), a novel weakly supervised classification framework that combines a teacher-student architecture with a dual-stream design. DSAGL explicitly addresses instance-level ambiguity and bag-level semantic consistency by generating multi-scale attention-based pseudo labels and guiding instance-level learning. A shared lightweight encoder (VSSMamba) enables efficient long-range dependency modeling, while a fusion-attentive module (FASA) enhances focus on sparse but diagnostically relevant regions. We further introduce a hybrid loss to enforce mutual consistency between the two streams. Experiments on CIFAR-10, NCT-CRC, and TCGA-Lung datasets demonstrate that DSAGL consistently outperforms state-of-the-art MIL baselines, achieving superior discriminative performance and robustness under weak supervision.

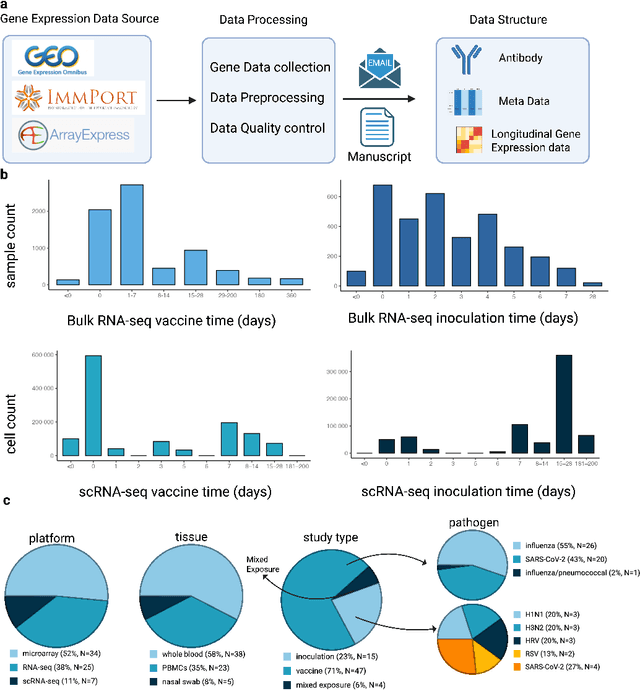

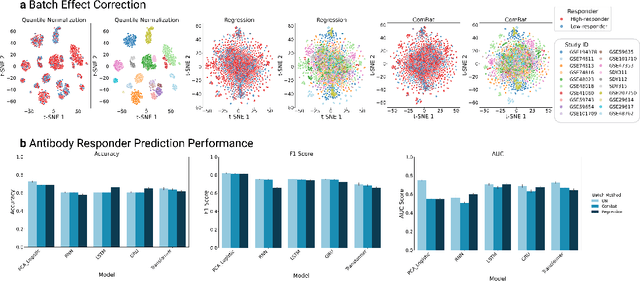

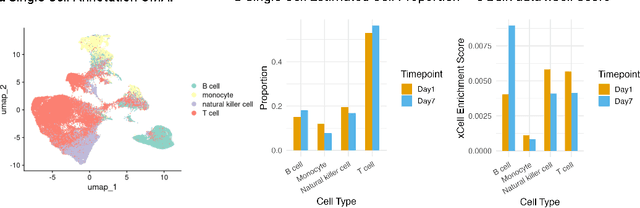

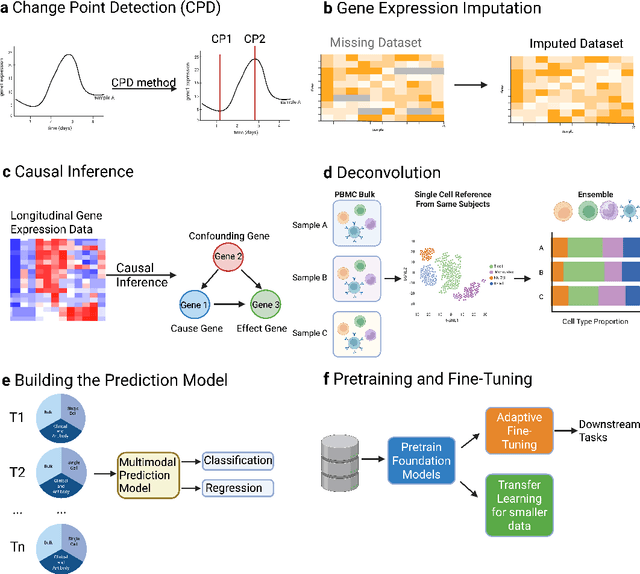

HR-VILAGE-3K3M: A Human Respiratory Viral Immunization Longitudinal Gene Expression Dataset for Systems Immunity

May 19, 2025

Abstract:Respiratory viral infections pose a global health burden, yet the cellular immune responses driving protection or pathology remain unclear. Natural infection cohorts often lack pre-exposure baseline data and structured temporal sampling. In contrast, inoculation and vaccination trials generate insightful longitudinal transcriptomic data. However, the scattering of these datasets across platforms, along with inconsistent metadata and preprocessing procedure, hinders AI-driven discovery. To address these challenges, we developed the Human Respiratory Viral Immunization LongitudinAl Gene Expression (HR-VILAGE-3K3M) repository: an AI-ready, rigorously curated dataset that integrates 14,136 RNA-seq profiles from 3,178 subjects across 66 studies encompassing over 2.56 million cells. Spanning vaccination, inoculation, and mixed exposures, the dataset includes microarray, bulk RNA-seq, and single-cell RNA-seq from whole blood, PBMCs, and nasal swabs, sourced from GEO, ImmPort, and ArrayExpress. We harmonized subject-level metadata, standardized outcome measures, applied unified preprocessing pipelines with rigorous quality control, and aligned all data to official gene symbols. To demonstrate the utility of HR-VILAGE-3K3M, we performed predictive modeling of vaccine responders and evaluated batch-effect correction methods. Beyond these initial demonstrations, it supports diverse systems immunology applications and benchmarking of feature selection and transfer learning algorithms. Its scale and heterogeneity also make it ideal for pretraining foundation models of the human immune response and for advancing multimodal learning frameworks. As the largest longitudinal transcriptomic resource for human respiratory viral immunization, it provides an accessible platform for reproducible AI-driven research, accelerating systems immunology and vaccine development against emerging viral threats.

Wireless Large AI Model: Shaping the AI-Native Future of 6G and Beyond

Apr 20, 2025Abstract:The emergence of sixth-generation and beyond communication systems is expected to fundamentally transform digital experiences through introducing unparalleled levels of intelligence, efficiency, and connectivity. A promising technology poised to enable this revolutionary vision is the wireless large AI model (WLAM), characterized by its exceptional capabilities in data processing, inference, and decision-making. In light of these remarkable capabilities, this paper provides a comprehensive survey of WLAM, elucidating its fundamental principles, diverse applications, critical challenges, and future research opportunities. We begin by introducing the background of WLAM and analyzing the key synergies with wireless networks, emphasizing the mutual benefits. Subsequently, we explore the foundational characteristics of WLAM, delving into their unique relevance in wireless environments. Then, the role of WLAM in optimizing wireless communication systems across various use cases and the reciprocal benefits are systematically investigated. Furthermore, we discuss the integration of WLAM with emerging technologies, highlighting their potential to enable transformative capabilities and breakthroughs in wireless communication. Finally, we thoroughly examine the high-level challenges hindering the practical implementation of WLAM and discuss pivotal future research directions.

LL-Localizer: A Life-Long Localization System based on Dynamic i-Octree

Apr 02, 2025

Abstract:This paper proposes an incremental voxel-based life-long localization method, LL-Localizer, which enables robots to localize robustly and accurately in multi-session mode using prior maps. Meanwhile, considering that it is difficult to be aware of changes in the environment in the prior map and robots may traverse between mapped and unmapped areas during actual operation, we will update the map when needed according to the established strategies through incremental voxel map. Besides, to ensure high performance in real-time and facilitate our map management, we utilize Dynamic i-Octree, an efficient organization of 3D points based on Dynamic Octree to load local map and update the map during the robot's operation. The experiments show that our system can perform stable and accurate localization comparable to state-of-the-art LIO systems. And even if the environment in the prior map changes or the robots traverse between mapped and unmapped areas, our system can still maintain robust and accurate localization without any distinction. Our demo can be found on Blibili (https://www.bilibili.com/video/BV1faZHYCEkZ) and youtube (https://youtu.be/UWn7RCb9kA8) and the program will be available at https://github.com/M-Evanovic/LL-Localizer.

BEV-LIO(LC): BEV Image Assisted LiDAR-Inertial Odometry with Loop Closure

Feb 26, 2025Abstract:This work introduces BEV-LIO(LC), a novel LiDAR-Inertial Odometry (LIO) framework that combines Bird's Eye View (BEV) image representations of LiDAR data with geometry-based point cloud registration and incorporates loop closure (LC) through BEV image features. By normalizing point density, we project LiDAR point clouds into BEV images, thereby enabling efficient feature extraction and matching. A lightweight convolutional neural network (CNN) based feature extractor is employed to extract distinctive local and global descriptors from the BEV images. Local descriptors are used to match BEV images with FAST keypoints for reprojection error construction, while global descriptors facilitate loop closure detection. Reprojection error minimization is then integrated with point-to-plane registration within an iterated Extended Kalman Filter (iEKF). In the back-end, global descriptors are used to create a KD-tree-indexed keyframe database for accurate loop closure detection. When a loop closure is detected, Random Sample Consensus (RANSAC) computes a coarse transform from BEV image matching, which serves as the initial estimate for Iterative Closest Point (ICP). The refined transform is subsequently incorporated into a factor graph along with odometry factors, improving the global consistency of localization. Extensive experiments conducted in various scenarios with different LiDAR types demonstrate that BEV-LIO(LC) outperforms state-of-the-art methods, achieving competitive localization accuracy. Our code, video and supplementary materials can be found at https://github.com/HxCa1/BEV-LIO-LC.

TV-Dialogue: Crafting Theme-Aware Video Dialogues with Immersive Interaction

Jan 31, 2025

Abstract:Recent advancements in LLMs have accelerated the development of dialogue generation across text and images, yet video-based dialogue generation remains underexplored and presents unique challenges. In this paper, we introduce Theme-aware Video Dialogue Crafting (TVDC), a novel task aimed at generating new dialogues that align with video content and adhere to user-specified themes. We propose TV-Dialogue, a novel multi-modal agent framework that ensures both theme alignment (i.e., the dialogue revolves around the theme) and visual consistency (i.e., the dialogue matches the emotions and behaviors of characters in the video) by enabling real-time immersive interactions among video characters, thereby accurately understanding the video content and generating new dialogue that aligns with the given themes. To assess the generated dialogues, we present a multi-granularity evaluation benchmark with high accuracy, interpretability and reliability, demonstrating the effectiveness of TV-Dialogue on self-collected dataset over directly using existing LLMs. Extensive experiments reveal that TV-Dialogue can generate dialogues for videos of any length and any theme in a zero-shot manner without training. Our findings underscore the potential of TV-Dialogue for various applications, such as video re-creation, film dubbing and its use in downstream multimodal tasks.

Enabling Cardiac Monitoring using In-ear Ballistocardiogram on COTS Wireless Earbuds

Jan 12, 2025Abstract:The human ear offers a unique opportunity for cardiac monitoring due to its physiological and practical advantages. However, existing earable solutions require additional hardware and complex processing, posing challenges for commercial True Wireless Stereo (TWS) earbuds which are limited by their form factor and resources. In this paper, we propose TWSCardio, a novel system that repurposes the IMU sensors in TWS earbuds for cardiac monitoring. Our key finding is that these sensors can capture in-ear ballistocardiogram (BCG) signals. TWSCardio reuses the unstable Bluetooth channel to stream the IMU data to a smartphone for BCG processing. It incorporates a signal enhancement framework to address issues related to missing data and low sampling rate, while mitigating motion artifacts by fusing multi-axis information. Furthermore, it employs a region-focused signal reconstruction method to translate the multi-axis in-ear BCG signals into fine-grained seismocardiogram (SCG) signals. We have implemented TWSCardio as an efficient real-time app. Our experiments on 100 subjects verify that TWSCardio can accurately reconstruct cardiac signals while showing resilience to motion artifacts, missing data, and low sampling rates. Our case studies further demonstrate that TWSCardio can support diverse cardiac monitoring applications.

Large Language Model-driven Multi-Agent Simulation for News Diffusion Under Different Network Structures

Oct 16, 2024Abstract:The proliferation of fake news in the digital age has raised critical concerns, particularly regarding its impact on societal trust and democratic processes. Diverging from conventional agent-based simulation approaches, this work introduces an innovative approach by employing a large language model (LLM)-driven multi-agent simulation to replicate complex interactions within information ecosystems. We investigate key factors that facilitate news propagation, such as agent personalities and network structures, while also evaluating strategies to combat misinformation. Through simulations across varying network structures, we demonstrate the potential of LLM-based agents in modeling the dynamics of misinformation spread, validating the influence of agent traits on the diffusion process. Our findings emphasize the advantages of LLM-based simulations over traditional techniques, as they uncover underlying causes of information spread -- such as agents promoting discussions -- beyond the predefined rules typically employed in existing agent-based models. Additionally, we evaluate three countermeasure strategies, discovering that brute-force blocking influential agents in the network or announcing news accuracy can effectively mitigate misinformation. However, their effectiveness is influenced by the network structure, highlighting the importance of considering network structure in the development of future misinformation countermeasures.

Light-Weight Fault Tolerant Attention for Large Language Model Training

Oct 15, 2024

Abstract:Large Language Models (LLMs) have demonstrated remarkable performance in various natural language processing tasks. However, the training of these models is computationally intensive and susceptible to faults, particularly in the attention mechanism, which is a critical component of transformer-based LLMs. In this paper, we investigate the impact of faults on LLM training, focusing on INF, NaN, and near-INF values in the computation results with systematic fault injection experiments. We observe the propagation patterns of these errors, which can trigger non-trainable states in the model and disrupt training, forcing the procedure to load from checkpoints.To mitigate the impact of these faults, we propose ATTNChecker, the first Algorithm-Based Fault Tolerance (ABFT) technique tailored for the attention mechanism in LLMs. ATTNChecker is designed based on fault propagation patterns of LLM and incorporates performance optimization to adapt to both system reliability and model vulnerability while providing lightweight protection for fast LLM training. Evaluations on four LLMs show that ATTNChecker on average incurs on average 7% overhead on training while detecting and correcting all extreme errors. Compared with the state-of-the-art checkpoint/restore approach, ATTNChecker reduces recovery overhead by up to 49x.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge