Xiao Li

Control Invariant Sets for Neural Network Dynamical Systems and Recursive Feasibility in Model Predictive Control

May 15, 2025

Abstract:Neural networks are powerful tools for data-driven modeling of complex dynamical systems, enhancing predictive capability for control applications. However, their inherent nonlinearity and black-box nature challenge control designs that prioritize rigorous safety and recursive feasibility guarantees. This paper presents algorithmic methods for synthesizing control invariant sets specifically tailored to neural network based dynamical models. These algorithms employ set recursion, ensuring termination after a finite number of iterations and generating subsets in which closed-loop dynamics are forward invariant, thus guaranteeing perpetual operational safety. Additionally, we propose model predictive control designs that integrate these control invariant sets into mixed-integer optimization, with guaranteed adherence to safety constraints and recursive feasibility at the computational level. We also present a comprehensive theoretical analysis examining the properties and guarantees of the proposed methods. Numerical simulations in an autonomous driving scenario demonstrate the methods' effectiveness in synthesizing control-invariant sets offline and implementing model predictive control online, ensuring safety and recursive feasibility.

Seed1.5-VL Technical Report

May 11, 2025

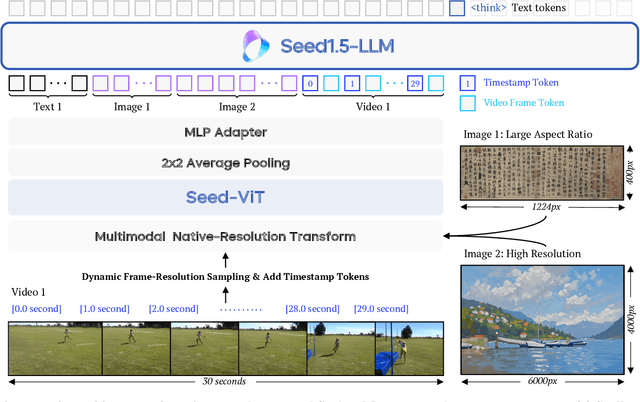

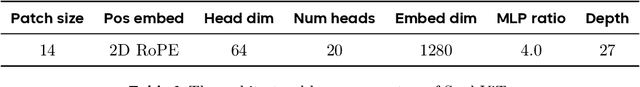

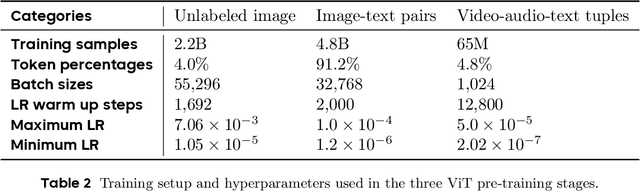

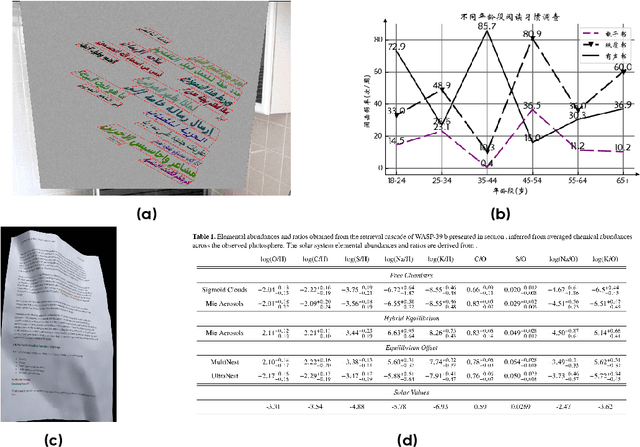

Abstract:We present Seed1.5-VL, a vision-language foundation model designed to advance general-purpose multimodal understanding and reasoning. Seed1.5-VL is composed with a 532M-parameter vision encoder and a Mixture-of-Experts (MoE) LLM of 20B active parameters. Despite its relatively compact architecture, it delivers strong performance across a wide spectrum of public VLM benchmarks and internal evaluation suites, achieving the state-of-the-art performance on 38 out of 60 public benchmarks. Moreover, in agent-centric tasks such as GUI control and gameplay, Seed1.5-VL outperforms leading multimodal systems, including OpenAI CUA and Claude 3.7. Beyond visual and video understanding, it also demonstrates strong reasoning abilities, making it particularly effective for multimodal reasoning challenges such as visual puzzles. We believe these capabilities will empower broader applications across diverse tasks. In this report, we mainly provide a comprehensive review of our experiences in building Seed1.5-VL across model design, data construction, and training at various stages, hoping that this report can inspire further research. Seed1.5-VL is now accessible at https://www.volcengine.com/ (Volcano Engine Model ID: doubao-1-5-thinking-vision-pro-250428)

Learned Intelligent Recognizer with Adaptively Customized RIS Phases in Communication Systems

May 05, 2025Abstract:This study presents an advanced wireless system that embeds target recognition within reconfigurable intelligent surface (RIS)-aided communication systems, powered by cuttingedge deep learning innovations. Such a system faces the challenge of fine-tuning both the RIS phase shifts and neural network (NN) parameters, since they intricately interdepend on each other to accomplish the recognition task. To address these challenges, we propose an intelligent recognizer that strategically harnesses every piece of prior action responses, thereby ingeniously multiplexing downlink signals to facilitate environment sensing. Specifically, we design a novel NN based on the long short-term memory (LSTM) architecture and the physical channel model. The NN iteratively captures and fuses information from previous measurements and adaptively customizes RIS configurations to acquire the most relevant information for the recognition task in subsequent moments. Tailored dynamically, these configurations adapt to the scene, task, and target specifics. Simulation results reveal that our proposed method significantly outperforms the state-of-the-art method, while resulting in minimal impacts on communication performance, even as sensing is performed simultaneously.

Cooperative ISAC Network for Off-Grid Imaging-based Low-Altitude Surveillance

May 05, 2025

Abstract:The low-altitude economy has emerged as a critical focus for future economic development, emphasizing the urgent need for flight activity surveillance utilizing the existing sensing capabilities of mobile cellular networks. Traditional monostatic or localization-based sensing methods, however, encounter challenges in fusing sensing results and matching channel parameters. To address these challenges, we propose an innovative approach that directly draws the radio images of the low-altitude space, leveraging its inherent sparsity with compressed sensing (CS)-based algorithms and the cooperation of multiple base stations. Furthermore, recognizing that unmanned aerial vehicles (UAVs) are randomly distributed in space, we introduce a physics-embedded learning method to overcome off-grid issues inherent in CS-based models. Additionally, an online hard example mining method is incorporated into the design of the loss function, enabling the network to adaptively concentrate on the samples bearing significant discrepancy with the ground truth, thereby enhancing its ability to detect the rare UAVs within the expansive low-altitude space. Simulation results demonstrate the effectiveness of the imaging-based low-altitude surveillance approach, with the proposed physics-embedded learning algorithm significantly outperforming traditional CS-based methods under off-grid conditions.

Analysis of the MICCAI Brain Tumor Segmentation -- Metastases (BraTS-METS) 2025 Lighthouse Challenge: Brain Metastasis Segmentation on Pre- and Post-treatment MRI

Apr 16, 2025Abstract:Despite continuous advancements in cancer treatment, brain metastatic disease remains a significant complication of primary cancer and is associated with an unfavorable prognosis. One approach for improving diagnosis, management, and outcomes is to implement algorithms based on artificial intelligence for the automated segmentation of both pre- and post-treatment MRI brain images. Such algorithms rely on volumetric criteria for lesion identification and treatment response assessment, which are still not available in clinical practice. Therefore, it is critical to establish tools for rapid volumetric segmentations methods that can be translated to clinical practice and that are trained on high quality annotated data. The BraTS-METS 2025 Lighthouse Challenge aims to address this critical need by establishing inter-rater and intra-rater variability in dataset annotation by generating high quality annotated datasets from four individual instances of segmentation by neuroradiologists while being recorded on video (two instances doing "from scratch" and two instances after AI pre-segmentation). This high-quality annotated dataset will be used for testing phase in 2025 Lighthouse challenge and will be publicly released at the completion of the challenge. The 2025 Lighthouse challenge will also release the 2023 and 2024 segmented datasets that were annotated using an established pipeline of pre-segmentation, student annotation, two neuroradiologists checking, and one neuroradiologist finalizing the process. It builds upon its previous edition by including post-treatment cases in the dataset. Using these high-quality annotated datasets, the 2025 Lighthouse challenge plans to test benchmark algorithms for automated segmentation of pre-and post-treatment brain metastases (BM), trained on diverse and multi-institutional datasets of MRI images obtained from patients with brain metastases.

Toward building next-generation Geocoding systems: a systematic review

Mar 24, 2025Abstract:Geocoding systems are widely used in both scientific research for spatial analysis and everyday life through location-based services. The quality of geocoded data significantly impacts subsequent processes and applications, underscoring the need for next-generation systems. In response to this demand, this review first examines the evolving requirements for geocoding inputs and outputs across various scenarios these systems must address. It then provides a detailed analysis of how to construct such systems by breaking them down into key functional components and reviewing a broad spectrum of existing approaches, from traditional rule-based methods to advanced techniques in information retrieval, natural language processing, and large language models. Finally, we identify opportunities to improve next-generation geocoding systems in light of recent technological advances.

StreamGS: Online Generalizable Gaussian Splatting Reconstruction for Unposed Image Streams

Mar 08, 2025

Abstract:The advent of 3D Gaussian Splatting (3DGS) has advanced 3D scene reconstruction and novel view synthesis. With the growing interest of interactive applications that need immediate feedback, online 3DGS reconstruction in real-time is in high demand. However, none of existing methods yet meet the demand due to three main challenges: the absence of predetermined camera parameters, the need for generalizable 3DGS optimization, and the necessity of reducing redundancy. We propose StreamGS, an online generalizable 3DGS reconstruction method for unposed image streams, which progressively transform image streams to 3D Gaussian streams by predicting and aggregating per-frame Gaussians. Our method overcomes the limitation of the initial point reconstruction \cite{dust3r} in tackling out-of-domain (OOD) issues by introducing a content adaptive refinement. The refinement enhances cross-frame consistency by establishing reliable pixel correspondences between adjacent frames. Such correspondences further aid in merging redundant Gaussians through cross-frame feature aggregation. The density of Gaussians is thereby reduced, empowering online reconstruction by significantly lowering computational and memory costs. Extensive experiments on diverse datasets have demonstrated that StreamGS achieves quality on par with optimization-based approaches but does so 150 times faster, and exhibits superior generalizability in handling OOD scenes.

Unveiling Downstream Performance Scaling of LLMs: A Clustering-Based Perspective

Feb 24, 2025Abstract:The rapid advancements in computing dramatically increase the scale and cost of training Large Language Models (LLMs). Accurately predicting downstream task performance prior to model training is crucial for efficient resource allocation, yet remains challenging due to two primary constraints: (1) the "emergence phenomenon", wherein downstream performance metrics become meaningful only after extensive training, which limits the ability to use smaller models for prediction; (2) Uneven task difficulty distributions and the absence of consistent scaling laws, resulting in substantial metric variability. Existing performance prediction methods suffer from limited accuracy and reliability, thereby impeding the assessment of potential LLM capabilities. To address these challenges, we propose a Clustering-On-Difficulty (COD) downstream performance prediction framework. COD first constructs a predictable support subset by clustering tasks based on difficulty features, strategically excluding non-emergent and non-scalable clusters. The scores on the selected subset serve as effective intermediate predictors of downstream performance on the full evaluation set. With theoretical support, we derive a mapping function that transforms performance metrics from the predictable subset to the full evaluation set, thereby ensuring accurate extrapolation of LLM downstream performance. The proposed method has been applied to predict performance scaling for a 70B LLM, providing actionable insights for training resource allocation and assisting in monitoring the training process. Notably, COD achieves remarkable predictive accuracy on the 70B LLM by leveraging an ensemble of small models, demonstrating an absolute mean deviation of 1.36% across eight important LLM evaluation benchmarks.

Guaranteed Conditional Diffusion: 3D Block-based Models for Scientific Data Compression

Feb 18, 2025Abstract:This paper proposes a new compression paradigm -- Guaranteed Conditional Diffusion with Tensor Correction (GCDTC) -- for lossy scientific data compression. The framework is based on recent conditional diffusion (CD) generative models, and it consists of a conditional diffusion model, tensor correction, and error guarantee. Our diffusion model is a mixture of 3D conditioning and 2D denoising U-Net. The approach leverages a 3D block-based compressing module to address spatiotemporal correlations in structured scientific data. Then, the reverse diffusion process for 2D spatial data is conditioned on the ``slices'' of content latent variables produced by the compressing module. After training, the denoising decoder reconstructs the data with zero noise and content latent variables, and thus it is entirely deterministic. The reconstructed outputs of the CD model are further post-processed by our tensor correction and error guarantee steps to control and ensure a maximum error distortion, which is an inevitable requirement in lossy scientific data compression. Our experiments involving two datasets generated by climate and chemical combustion simulations show that our framework outperforms standard convolutional autoencoders and yields competitive compression quality with an existing scientific data compression algorithm.

DPO-Shift: Shifting the Distribution of Direct Preference Optimization

Feb 11, 2025

Abstract:Direct Preference Optimization (DPO) and its variants have become increasingly popular for aligning language models with human preferences. These methods aim to teach models to better distinguish between chosen (or preferred) and rejected (or dispreferred) responses. However, prior research has identified that the probability of chosen responses often decreases during training, and this phenomenon is known as likelihood displacement. To tackle this challenge, in this work we introduce \method to controllably shift the distribution of the chosen probability. Then, we show that \method exhibits a fundamental trade-off between improving the chosen probability and sacrificing the reward margin, as supported by both theoretical analysis and experimental validation. Furthermore, we demonstrate the superiority of \method over DPO on downstream tasks such as MT-Bench and a designed win rate experiment. We believe this study shows that the likelihood displacement issue of DPO can be effectively mitigated with a simple, theoretically grounded solution. Our code is available at https://github.com/Meaquadddd/DPO-Shift.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge