Shangfei Wang

AU Codes, Language, and Synthesis: Translating Anatomy to Text for Facial Behavior Synthesis

Mar 19, 2026

Abstract: Facial behavior synthesis remains a critical yet underexplored challenge. While text-to-face models have made progress, they often rely on coarse emotion categories, which lack the nuance needed to capture the full spectrum of human nonverbal communication. Action Units (AUs) provide a more precise and anatomically grounded alternative. However, current AU-based approaches typically encode AUs as one-hot vectors, modeling compound expressions as simple linear combinations of individual AUs. This linearity becomes problematic when handling conflicting AUs, defined as those which activate the same facial muscle with opposing actions. Such cases lead to anatomically implausible artifacts and unnatural motion superpositions. To address this, we propose a novel method that represents facial behavior through natural language descriptions of AUs. This approach preserves the expressiveness of the AU framework while enabling explicit modeling of complex and conflicting AUs. It also unlocks the potential of modern text-to-image models for high-fidelity facial synthesis. Supporting this direction, we introduce BP4D-AUText, the first large-scale text-image paired dataset for complex facial behavior. It is synthesized by applying a rule-based Dynamic AU Text Processor to the BP4D and BP4D+ datasets. We further propose VQ-AUFace, a generative model that leverages facial structural priors to synthesize realistic and diverse facial behaviors from text. Extensive quantitative experiments and user studies demonstrate that our approach significantly outperforms existing methods. It excels in generating facial expressions that are anatomically plausible, behaviorally rich, and perceptually convincing, particularly under challenging conditions involving conflicting AUs.

MIRRORTALK: Forging Personalized Avatars Via Disentangled Style and Hierarchical Motion Control

Jan 30, 2026

Abstract: Synthesizing personalized talking faces that uphold and highlight a speaker's unique style while maintaining lip-sync accuracy remains a significant challenge. A primary limitation of existing approaches is the intrinsic confounding of speaker-specific talking style and semantic content within facial motions, which prevents the faithful transfer of a speaker's unique persona to arbitrary speech. In this paper, we propose MirrorTalk, a generative framework based on a conditional diffusion model, combined with a Semantically-Disentangled Style Encoder (SDSE) that can distill pure style representations from a brief reference video. To effectively utilize this representation, we further introduce a hierarchical modulation strategy within the diffusion process. This mechanism guides the synthesis by dynamically balancing the contributions of audio and style features across distinct facial regions, ensuring both precise lip-sync accuracy and expressive full-face dynamics. Extensive experiments demonstrate that MirrorTalk achieves significant improvements over state-of-the-art methods in terms of lip-sync accuracy and personalization preservation.

ConsistTalk: Intensity Controllable Temporally Consistent Talking Head Generation with Diffusion Noise Search

Nov 10, 2025

Abstract: Recent advancements in video diffusion models have significantly enhanced audio-driven portrait animation. However, current methods still suffer from flickering, identity drift, and poor audio-visual synchronization. These issues primarily stem from entangled appearance-motion representations and unstable inference strategies. In this paper, we introduce ConsistTalk, a novel intensity-controllable and temporally consistent talking head generation framework with diffusion noise search inference. First, we propose an optical flow-guided temporal module (OFT) that decouples motion features from static appearance by leveraging facial optical flow, thereby reducing visual flicker and improving temporal consistency. Second, we present an Audio-to-Intensity (A2I) model obtained through multimodal teacher-student knowledge distillation. By transforming audio and facial velocity features into a frame-wise intensity sequence, the A2I model enables joint modeling of audio and visual motion, resulting in more natural dynamics. This further enables fine-grained, frame-wise control of motion dynamics while maintaining tight audio-visual synchronization. Third, we introduce a diffusion noise initialization strategy (IC-Init). By enforcing explicit constraints on background coherence and motion continuity during inference-time noise search, we achieve better identity preservation and refine motion dynamics compared to the current autoregressive strategy. Extensive experiments demonstrate that ConsistTalk significantly outperforms prior methods in reducing flicker, preserving identity, and delivering temporally stable, high-fidelity talking head videos.

FreqMark: Invisible Image Watermarking via Frequency Based Optimization in Latent Space

Oct 28, 2024

Abstract: Invisible watermarking is essential for safeguarding digital content, enabling copyright protection and content authentication. However, existing watermarking methods fall short in robustness against regeneration attacks. In this paper, we propose a novel method called FreqMark that involves unconstrained optimization of the image latent frequency space obtained after VAE encoding. Specifically, FreqMark embeds the watermark by optimizing the latent frequency space of the images and then extracts the watermark through a pre-trained image encoder. This optimization allows a flexible trade-off between image quality and watermark robustness and effectively resists regeneration attacks. Experimental results demonstrate that FreqMark offers significant advantages in image quality and robustness, permits flexible selection of the encoding bit number, and achieves a bit accuracy exceeding 90% when encoding a 48-bit hidden message under various attack scenarios.
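
The bit accuracy figure quoted in this abstract is commonly understood as the fraction of message bits recovered correctly. The following is a minimal sketch under that assumption; the function name `bit_accuracy` and the list-of-bits representation are illustrative and are not taken from the FreqMark code.

```python
from typing import Sequence


def bit_accuracy(embedded: Sequence[int], extracted: Sequence[int]) -> float:
    """Fraction of positions where the extracted bit equals the embedded bit.

    Hypothetical helper for illustration; both messages are sequences of 0/1.
    """
    if len(embedded) != len(extracted):
        raise ValueError("messages must have the same length")
    if len(embedded) == 0:
        raise ValueError("messages must not be empty")
    matches = sum(1 for a, b in zip(embedded, extracted) if a == b)
    return matches / len(embedded)
```

Under this definition, a 48-bit message recovered with 4 flipped bits has a bit accuracy of 44/48, which is above the 90% level mentioned in the abstract.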

Zero-shot Compound Expression Recognition with Visual Language Model at the 6th ABAW Challenge

Mar 18, 2024

Abstract: Conventional approaches to facial expression recognition primarily focus on the classification of six basic facial expressions. Nevertheless, real-world situations present a wider range of complex compound expressions that combine these basic ones, and comprehensive training datasets for them are scarce. The 6th Workshop and Competition on Affective Behavior Analysis in-the-wild (ABAW) offered unlabeled datasets containing compound expressions. In this study, we propose a zero-shot approach for recognizing compound expressions by leveraging a pretrained visual language model integrated with traditional CNN networks.

1DFormer: Learning 1D Landmark Representations via Transformer for Facial Landmark Tracking

Nov 01, 2023

Abstract: Recently, heatmap regression methods based on 1D landmark representations have shown prominent performance in locating facial landmarks. However, previous methods have not deeply explored the potential of 1D landmark representations for sequential and structural modeling of multiple landmarks in facial landmark tracking. To address this limitation, we propose a Transformer architecture, namely 1DFormer, which learns informative 1D landmark representations by capturing the dynamic and the geometric patterns of landmarks via token communications in both temporal and spatial dimensions for facial landmark tracking. For temporal modeling, we propose a recurrent token mixing mechanism, an axis-landmark-positional embedding mechanism, as well as a confidence-enhanced multi-head attention mechanism to adaptively and robustly embed long-term landmark dynamics into their 1D representations; for structure modeling, we design intra-group and inter-group structure modeling mechanisms to encode the component-level as well as global-level facial structure patterns as a refinement for the 1D representations of landmarks through token communications in the spatial dimension via 1D convolutional layers. Experimental results on the 300VW and the TF databases show that 1DFormer successfully models the long-range sequential patterns as well as the inherent facial structures to learn informative 1D representations of landmark sequences, and achieves state-of-the-art performance on facial landmark tracking.

MEDIC: A Multimodal Empathy Dataset in Counseling

May 04, 2023

Abstract: Although empathic interaction between counselor and client is fundamental to success in the psychotherapeutic process, there are currently few datasets to aid a computational approach to empathy understanding. In this paper, we construct a multimodal empathy dataset collected from face-to-face psychological counseling sessions. The dataset consists of 771 video clips. We also propose three labels (i.e., expression of experience, emotional reaction, and cognitive reaction) to describe the degree of empathy between counselors and their clients. Expression of experience describes whether the client has expressed experiences that can trigger empathy, and emotional and cognitive reactions indicate the counselor's empathic reactions. As an elementary assessment of the usability of the constructed multimodal empathy dataset, an interrater reliability analysis of annotators' subjective evaluations for video clips is conducted using the intraclass correlation coefficient and Fleiss' Kappa. Results prove that our data annotation is reliable. Furthermore, we conduct empathy prediction using three typical methods, including the tensor fusion network, the sentimental words aware fusion network, and a simple concatenation model. The experimental results show that empathy can be well predicted on our dataset. Our dataset is available for research purposes.
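
For readers unfamiliar with Fleiss' Kappa, the agreement statistic named in this abstract, the sketch below computes it from a table of rating counts using the standard textbook formula. It assumes every clip is rated by the same number of annotators; the function name and input layout are illustrative and do not come from the MEDIC paper.

```python
from typing import Sequence


def fleiss_kappa(counts: Sequence[Sequence[int]]) -> float:
    """Fleiss' kappa for a rectangular table of rating counts.

    counts[i][j] is the number of raters who assigned item i to category j.
    Hypothetical helper for illustration only.
    """
    n_items = len(counts)
    if n_items == 0:
        raise ValueError("need at least one item")
    n_raters = sum(counts[0])
    if n_raters < 2:
        raise ValueError("need at least two raters per item")
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every item must have the same number of raters")
    n_categories = len(counts[0])

    # Observed agreement: mean over items of the share of agreeing rater pairs.
    per_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_observed = sum(per_item) / n_items

    # Chance agreement from the overall proportion of ratings per category.
    proportions = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_categories)
    ]
    p_expected = sum(p * p for p in proportions)

    if p_expected == 1.0:
        raise ValueError("kappa is undefined when all ratings share one category")
    return (p_observed - p_expected) / (1.0 - p_expected)
```

A value of 1 indicates perfect agreement, while values at or below 0 indicate agreement no better than chance.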

Emotional Reaction Intensity Estimation Based on Multimodal Data

Mar 16, 2023

Abstract: This paper introduces our method for the Emotional Reaction Intensity (ERI) Estimation Challenge at the CVPR 2023 5th Workshop and Competition on Affective Behavior Analysis in-the-wild (ABAW). Based on the multimodal data provided by the organizers, we extract acoustic and visual features with different pretrained models. The multimodal features are fused by Transformer encoders with a cross-modal attention mechanism. Our contributions are as follows: (1) we extract better features with state-of-the-art pretrained models; (2) we substantially improve the Pearson correlation coefficient over the baseline; (3) we apply several data-processing techniques to further improve the performance of our model.
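
The Pearson correlation coefficient mentioned in this abstract measures the linear agreement between predicted and reference values. A minimal sketch of the standard formula follows; the function and argument names are illustrative, and how the challenge aggregates the score across outputs is not specified here.

```python
import math
from typing import Sequence


def pearson_correlation(predicted: Sequence[float], target: Sequence[float]) -> float:
    """Pearson correlation between two equal-length sequences.

    Hypothetical helper for illustration only.
    """
    n = len(predicted)
    if n != len(target):
        raise ValueError("sequences must have the same length")
    if n < 2:
        raise ValueError("need at least two samples")
    mean_p = sum(predicted) / n
    mean_t = sum(target) / n
    # Sums of centered products; the 1/n factors cancel in the ratio.
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(predicted, target))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_t = sum((t - mean_t) ** 2 for t in target)
    if var_p == 0 or var_t == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_p * var_t)
```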

Facial Affective Behavior Analysis Method for 5th ABAW Competition

Mar 16, 2023

Abstract: Facial affective behavior analysis is important for human-computer interaction. The 5th ABAW competition includes three challenges based on the Aff-Wild2 database. They cover three common facial affective analysis tasks, i.e., valence-arousal estimation, expression classification, and action unit recognition. For the three challenges, we construct three different models that address the corresponding problems, such as data imbalance and data noise, to improve the results. For the experiments on the three challenges, we train the models on the provided training data and validate them on the validation data.

Multi-Task Learning for Emotion Descriptors Estimation at the fourth ABAW Challenge

Jul 20, 2022

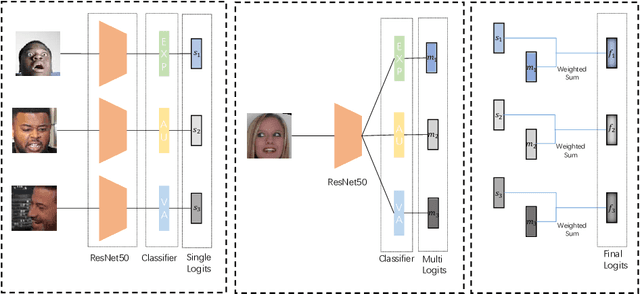

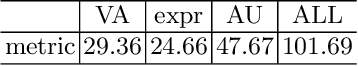

Abstract: Facial valence/arousal estimation, expression recognition, and action unit detection are related tasks in facial affective analysis. However, performance on these tasks in the wild is limited by the varied conditions under which the data are collected. The 4th competition on affective behavior analysis in the wild (ABAW) provided images with valence/arousal, expression, and action unit labels. In this paper, we introduce a multi-task learning framework to enhance the performance of the three related tasks in the wild. Feature sharing and label fusion are used to exploit their relations. We conduct experiments on the provided training and validation data.
