Cho-Jui Hsieh

UCLA

CAT: Customized Adversarial Training for Improved Robustness

Feb 17, 2020

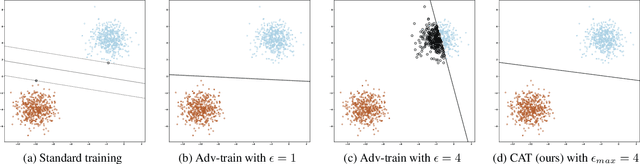

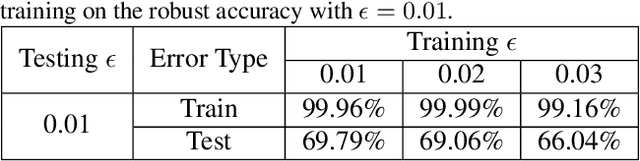

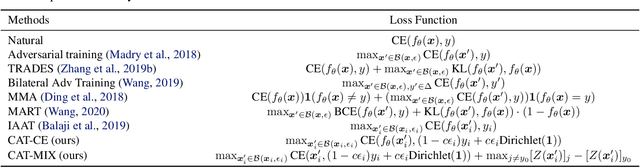

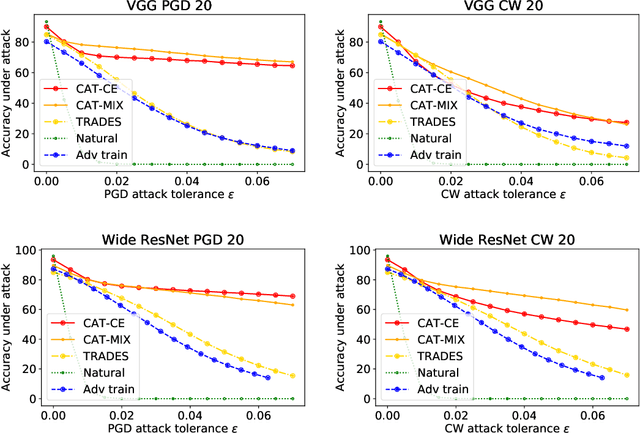

Abstract:Adversarial training has become one of the most effective methods for improving robustness of neural networks. However, it often suffers from poor generalization on both clean and perturbed data. In this paper, we propose a new algorithm, named Customized Adversarial Training (CAT), which adaptively customizes the perturbation level and the corresponding label for each training sample in adversarial training. We show that the proposed algorithm achieves better clean and robust accuracy than previous adversarial training methods through extensive experiments.

Robustness Verification for Transformers

Feb 16, 2020

Abstract:Robustness verification that aims to formally certify the prediction behavior of neural networks has become an important tool for understanding model behavior and obtaining safety guarantees. However, previous methods can usually only handle neural networks with relatively simple architectures. In this paper, we consider the robustness verification problem for Transformers. Transformers have complex self-attention layers that pose many challenges for verification, including cross-nonlinearity and cross-position dependency, which have not been discussed in previous works. We resolve these challenges and develop the first robustness verification algorithm for Transformers. The certified robustness bounds computed by our method are significantly tighter than those by naive Interval Bound Propagation. These bounds also shed light on interpreting Transformers as they consistently reflect the importance of different words in sentiment analysis.

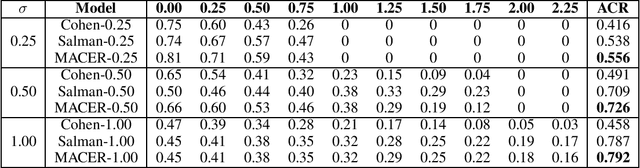

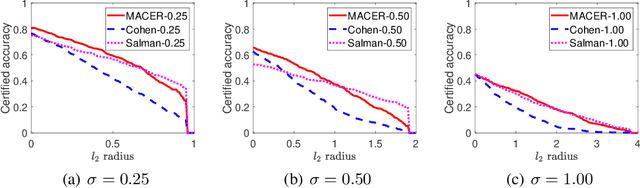

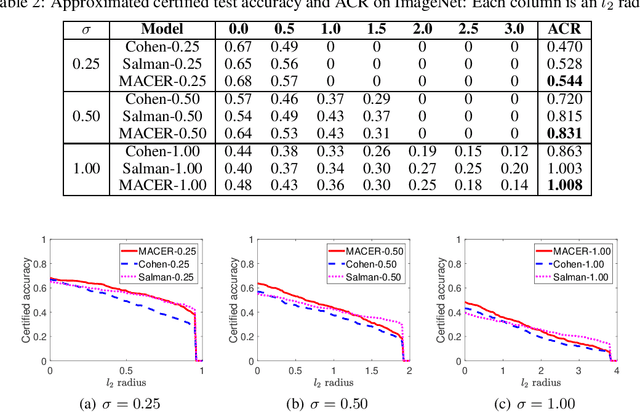

MACER: Attack-free and Scalable Robust Training via Maximizing Certified Radius

Feb 15, 2020

Abstract:Adversarial training is one of the most popular ways to learn robust models but is usually attack-dependent and time costly. In this paper, we propose the MACER algorithm, which learns robust models without using adversarial training but performs better than all existing provable l2-defenses. Recent work shows that randomized smoothing can be used to provide a certified l2 radius to smoothed classifiers, and our algorithm trains provably robust smoothed classifiers via MAximizing the CErtified Radius (MACER). The attack-free characteristic makes MACER faster to train and easier to optimize. In our experiments, we show that our method can be applied to modern deep neural networks on a wide range of datasets, including Cifar-10, ImageNet, MNIST, and SVHN. For all tasks, MACER spends less training time than state-of-the-art adversarial training algorithms, and the learned models achieve larger average certified radius.

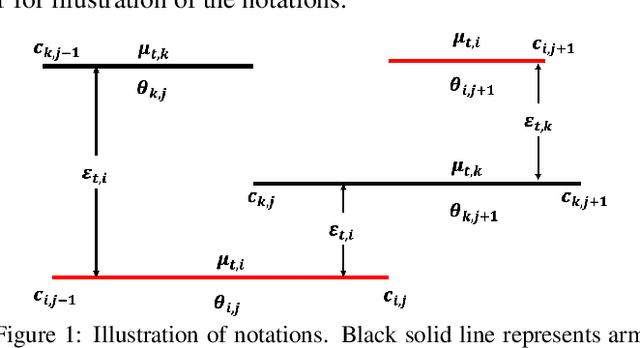

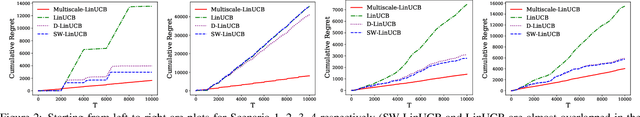

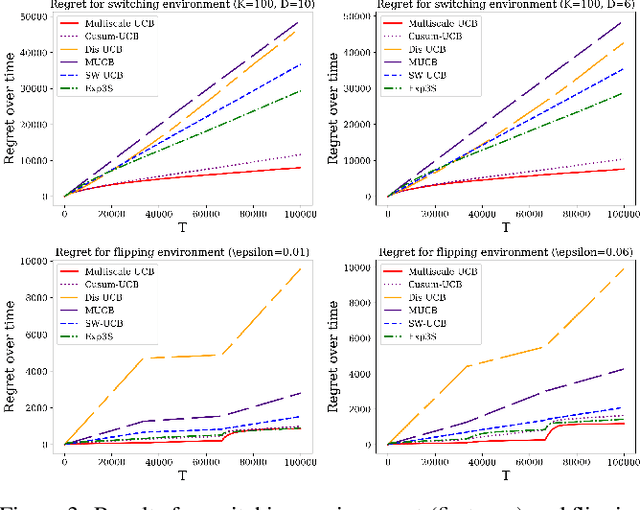

Multiscale Non-stationary Stochastic Bandits

Feb 13, 2020

Abstract:Classic contextual bandit algorithms for linear models, such as LinUCB, assume that the reward distribution for an arm is modeled by a stationary linear regression. When the linear regression model is non-stationary over time, the regret of LinUCB can scale linearly with time. In this paper, we propose a novel multiscale changepoint detection method for the non-stationary linear bandit problems, called Multiscale-LinUCB, which actively adapts to the changing environment. We also provide theoretical analysis of regret bound for Multiscale-LinUCB algorithm. Experimental results show that our proposed Multiscale-LinUCB algorithm outperforms other state-of-the-art algorithms in non-stationary contextual environments.

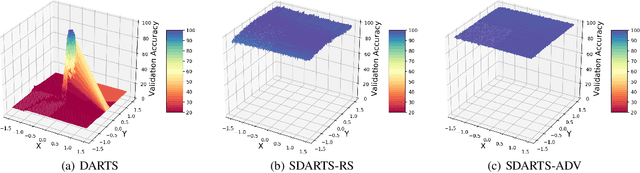

Stabilizing Differentiable Architecture Search via Perturbation-based Regularization

Feb 12, 2020

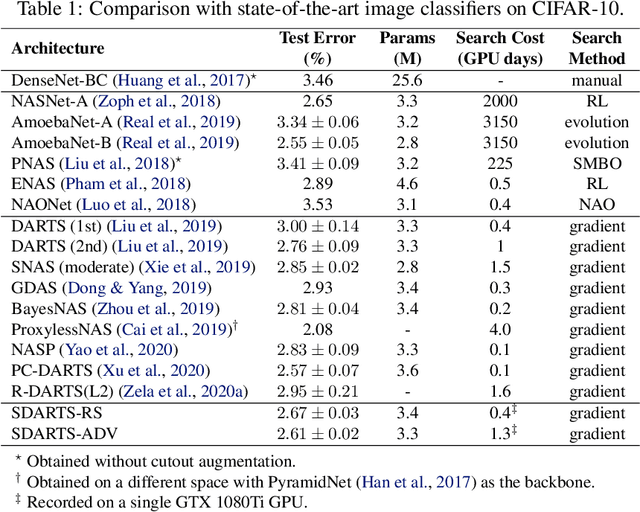

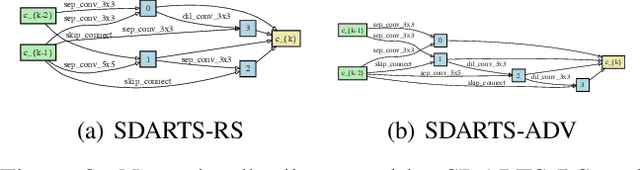

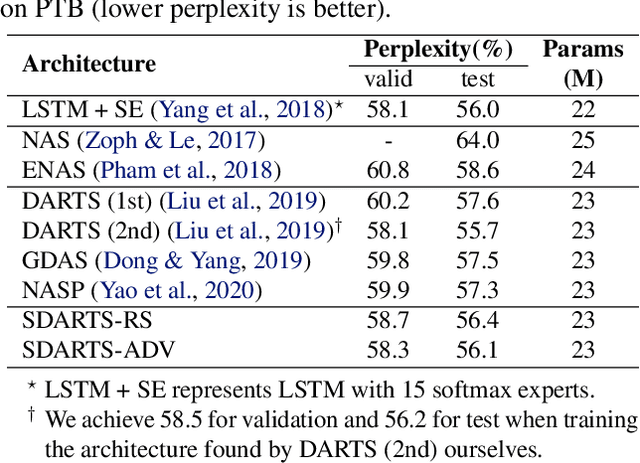

Abstract:Differentiable architecture search (DARTS) is a prevailing NAS solution to identify architectures. Based on the continuous relaxation of the architecture space, DARTS learns a differentiable architecture weight and largely reduces the search cost. However, its stability and generalizability have been challenged for yielding deteriorating architectures as the search proceeds. We find that the precipitous validation loss landscape, which leads to a dramatic performance drop when distilling the final architecture, is an essential factor that causes instability. Based on this observation, we propose a perturbation-based regularization, named SmoothDARTS (SDARTS), to smooth the loss landscape and improve the generalizability of DARTS. In particular, our new formulations stabilize DARTS by either random smoothing or adversarial attack. The search trajectory on NAS-Bench-1Shot1 demonstrates the effectiveness of our approach and due to the improved stability, we achieve performance gain across various search spaces on 4 datasets. Furthermore, we mathematically show that SDARTS implicitly regularizes the Hessian norm of the validation loss, which accounts for a smoother loss landscape and improved performance. The code is available at https://github.com/xiangning-chen/SmoothDARTS.

GraphDefense: Towards Robust Graph Convolutional Networks

Nov 11, 2019

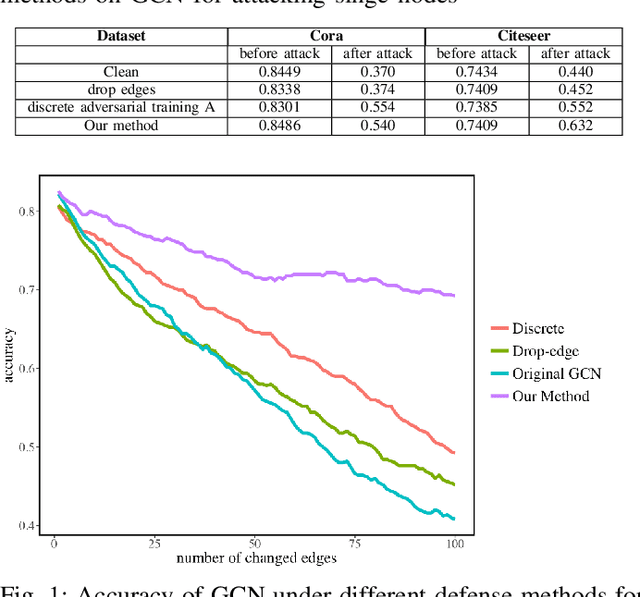

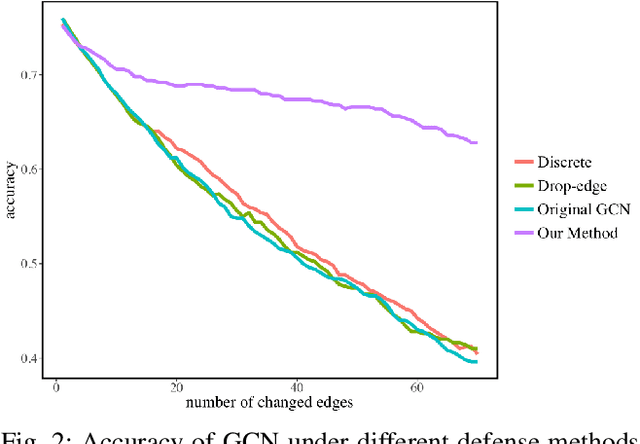

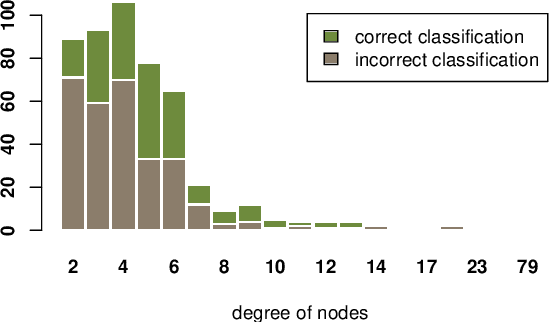

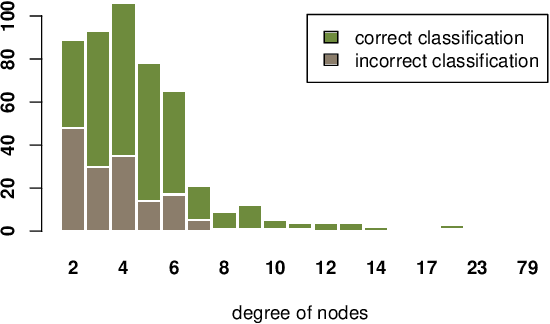

Abstract:In this paper, we study the robustness of graph convolutional networks (GCNs). Despite the good performance of GCNs on graph semi-supervised learning tasks, previous works have shown that the original GCNs are very unstable to adversarial perturbations. In particular, we can observe a severe performance degradation by slightly changing the graph adjacency matrix or the features of a few nodes, making it unsuitable for security-critical applications. Inspired by the previous works on adversarial defense for deep neural networks, and especially adversarial training algorithm, we propose a method called GraphDefense to defend against the adversarial perturbations. In addition, for our defense method, we could still maintain semi-supervised learning settings, without a large label rate. We also show that adversarial training in features is equivalent to adversarial training for edges with a small perturbation. Our experiments show that the proposed defense methods successfully increase the robustness of Graph Convolutional Networks. Furthermore, we show that with careful design, our proposed algorithm can scale to large graphs, such as Reddit dataset.

Enhancing Certifiable Robustness via a Deep Model Ensemble

Oct 31, 2019

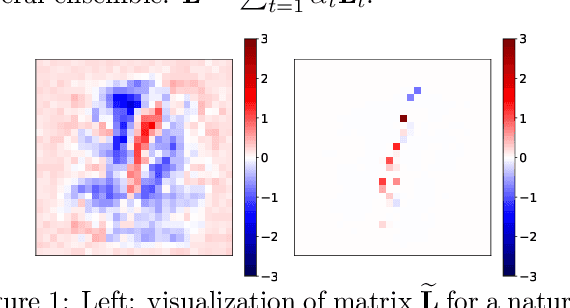

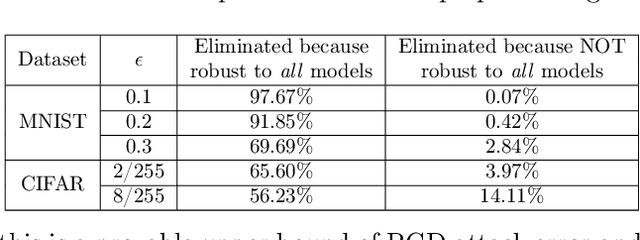

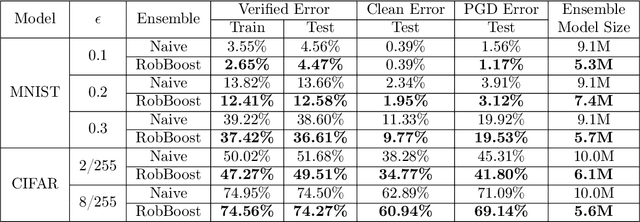

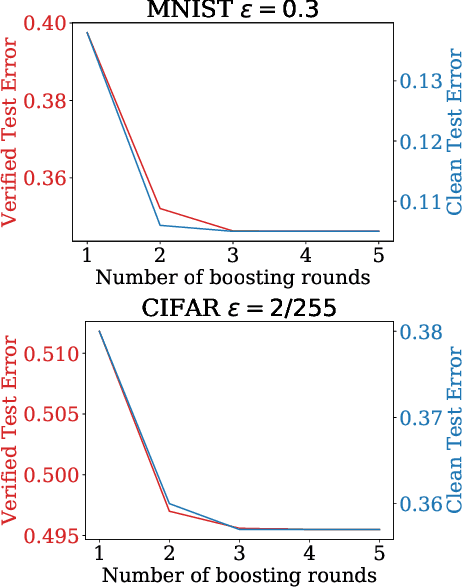

Abstract:We propose an algorithm to enhance certified robustness of a deep model ensemble by optimally weighting each base model. Unlike previous works on using ensembles to empirically improve robustness, our algorithm is based on optimizing a guaranteed robustness certificate of neural networks. Our proposed ensemble framework with certified robustness, RobBoost, formulates the optimal model selection and weighting task as an optimization problem on a lower bound of classification margin, which can be efficiently solved using coordinate descent. Experiments show that our algorithm can form a more robust ensemble than naively averaging all available models using robustly trained MNIST or CIFAR base models. Additionally, our ensemble typically has better accuracy on clean (unperturbed) data. RobBoost allows us to further improve certified robustness and clean accuracy by creating an ensemble of already certified models.

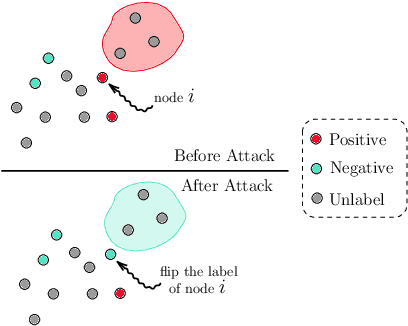

A Unified Framework for Data Poisoning Attack to Graph-based Semi-supervised Learning

Oct 30, 2019

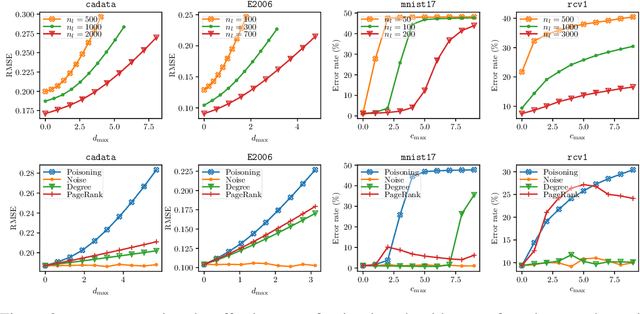

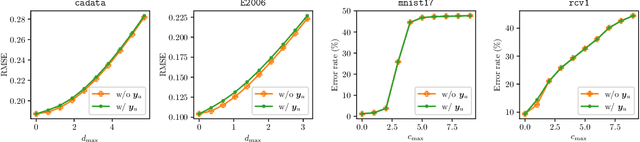

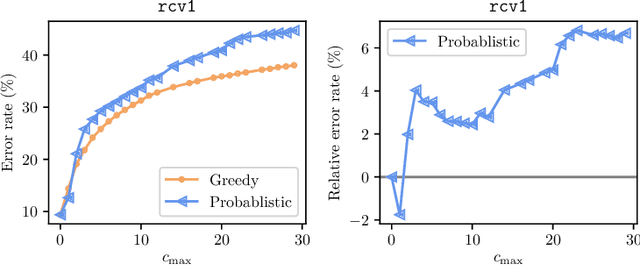

Abstract:In this paper, we proposed a general framework for data poisoning attacks to graph-based semi-supervised learning (G-SSL). In this framework, we first unify different tasks, goals, and constraints into a single formula for data poisoning attack in G-SSL, then we propose two specialized algorithms to efficiently solve two important cases --- poisoning regression tasks under $\ell_2$-norm constraint and classification tasks under $\ell_0$-norm constraint. In the former case, we transform it into a non-convex trust region problem and show that our gradient-based algorithm with delicate initialization and update scheme finds the (globally) optimal perturbation. For the latter case, although it is an NP-hard integer programming problem, we propose a probabilistic solver that works much better than the classical greedy method. Lastly, we test our framework on real datasets and evaluate the robustness of G-SSL algorithms. For instance, on the MNIST binary classification problem (50000 training data with 50 labeled), flipping two labeled data is enough to make the model perform like random guess (around 50\% error).

Learning to Learn by Zeroth-Order Oracle

Oct 21, 2019

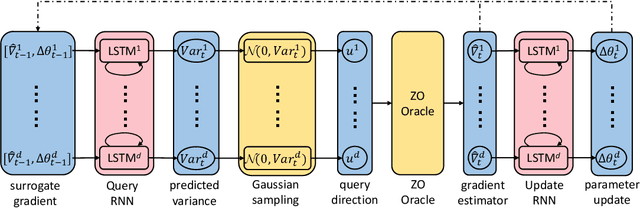

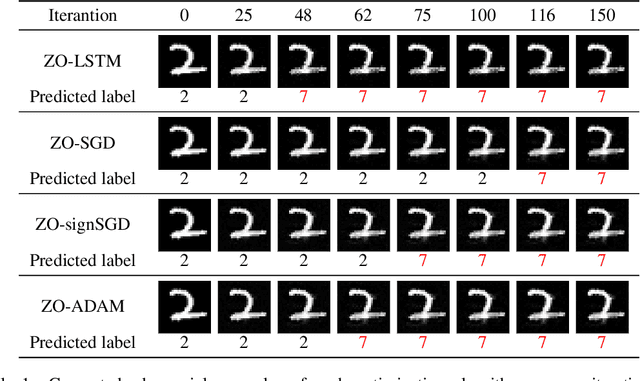

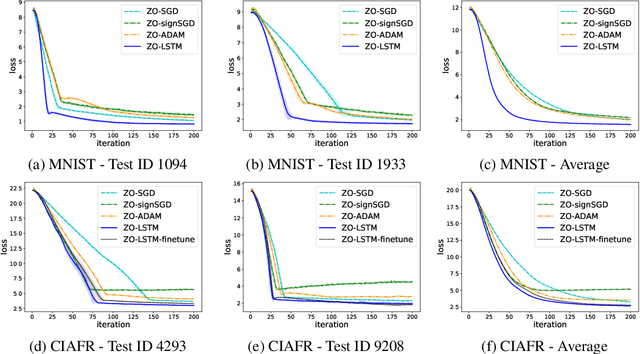

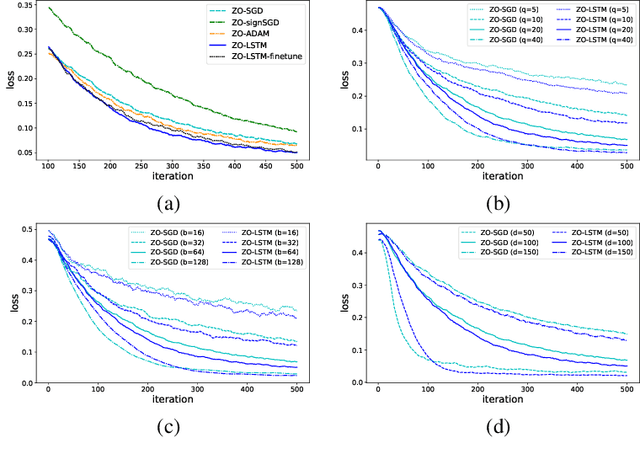

Abstract:In the learning to learn (L2L) framework, we cast the design of optimization algorithms as a machine learning problem and use deep neural networks to learn the update rules. In this paper, we extend the L2L framework to zeroth-order (ZO) optimization setting, where no explicit gradient information is available. Our learned optimizer, modeled as recurrent neural network (RNN), first approximates gradient by ZO gradient estimator and then produces parameter update utilizing the knowledge of previous iterations. To reduce high variance effect due to ZO gradient estimator, we further introduce another RNN to learn the Gaussian sampling rule and dynamically guide the query direction sampling. Our learned optimizer outperforms hand-designed algorithms in terms of convergence rate and final solution on both synthetic and practical ZO optimization tasks (in particular, the black-box adversarial attack task, which is one of the most widely used tasks of ZO optimization). We finally conduct extensive analytical experiments to demonstrate the effectiveness of our proposed optimizer.

BOSH: An Efficient Meta Algorithm for Decision-based Attacks

Oct 14, 2019

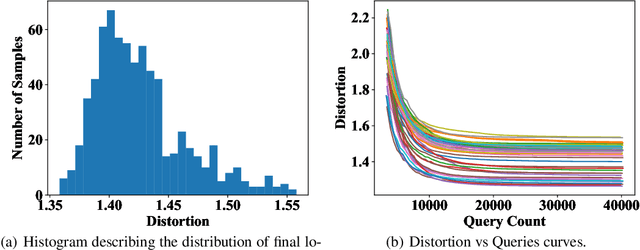

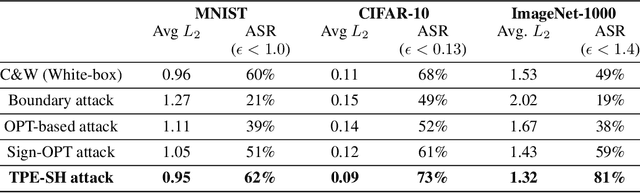

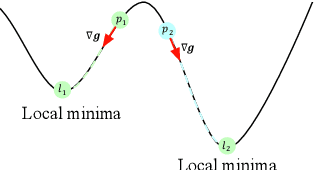

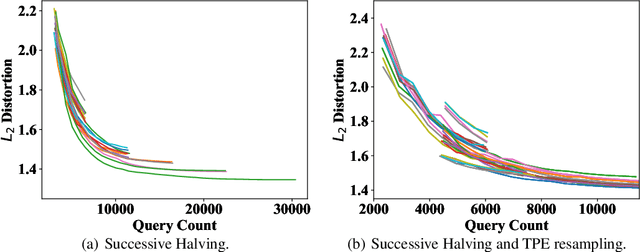

Abstract:Adversarial example generation becomes a viable method for evaluating the robustness of a machine learning model. In this paper, we consider hard-label black-box attacks (a.k.a. decision-based attacks), which is a challenging setting that generates adversarial examples based on only a series of black-box hard-label queries. This type of attacks can be used to attack discrete and complex models, such as Gradient Boosting Decision Tree (GBDT) and detection-based defense models. Existing decision-based attacks based on iterative local updates often get stuck in a local minimum and fail to generate the optimal adversarial example with the smallest distortion. To remedy this issue, we propose an efficient meta algorithm called BOSH-attack, which tremendously improves existing algorithms through Bayesian Optimization (BO) and Successive Halving (SH). In particular, instead of traversing a single solution path when searching an adversarial example, we maintain a pool of solution paths to explore important regions. We show empirically that the proposed algorithm converges to a better solution than existing approaches, while the query count is smaller than applying multiple random initializations by a factor of 10.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge