Chenghao Wang

TASU2: Controllable CTC Simulation for Alignment and Low-Resource Adaptation of Speech LLMs

Apr 09, 2026

Abstract:Speech LLM post-training increasingly relies on efficient cross-modal alignment and robust low-resource adaptation, yet collecting large-scale audio-text pairs remains costly. Text-only alignment methods such as TASU reduce this burden by simulating CTC posteriors from transcripts, but they provide limited control over uncertainty and error rate, making curriculum design largely heuristic. We propose TASU2, a controllable CTC simulation framework that simulates CTC posterior distributions under a specified WER range, producing text-derived supervision that better matches the acoustic decoding interface. This enables principled post-training curricula that smoothly vary supervision difficulty without TTS. Across multiple source-to-target adaptation settings, TASU2 improves in-domain and out-of-domain recognition over TASU, and consistently outperforms strong baselines including text-only fine-tuning and TTS-based augmentation, while mitigating source-domain performance degradation.

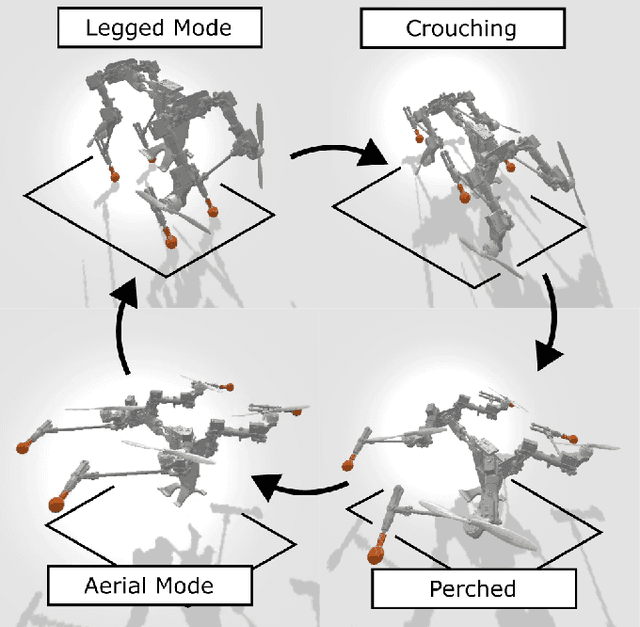

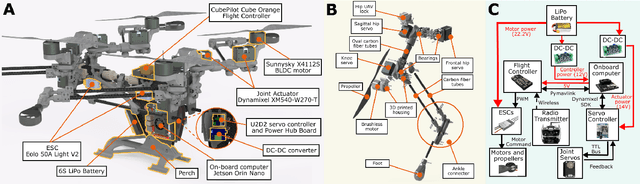

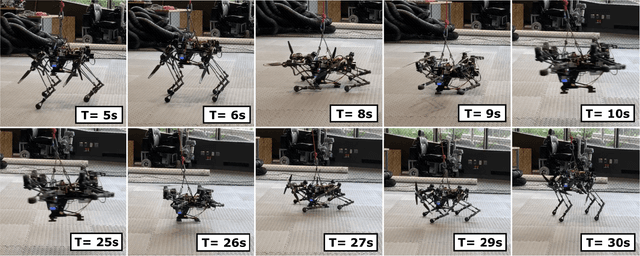

Dynamic Quadrupedal Legged and Aerial Locomotion via Structure Repurposing

Oct 10, 2025

Abstract:Multi-modal ground-aerial robots have been extensively studied, with a significant challenge lying in the integration of conflicting requirements across different modes of operation. The Husky robot family, developed at Northeastern University, and specifically the Husky v.2 discussed in this study, addresses this challenge by incorporating posture manipulation and thrust vectoring into multi-modal locomotion through structure repurposing. This quadrupedal robot features leg structures that can be repurposed for dynamic legged locomotion and flight. In this paper, we present the hardware design of the robot and report primary results on dynamic quadrupedal legged locomotion and hovering.

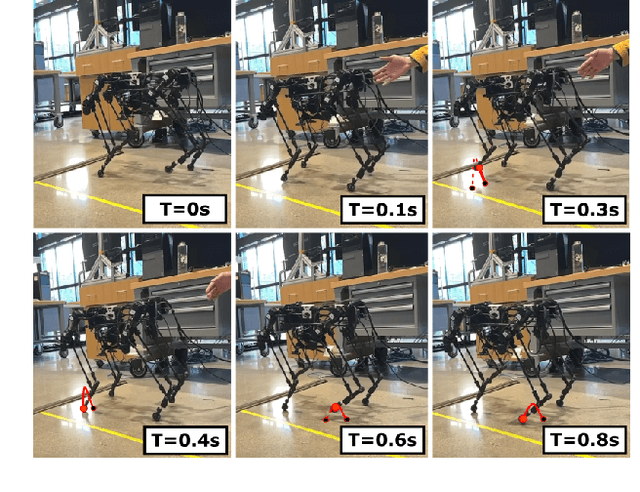

Guiding Energy-Efficient Locomotion through Impact Mitigation Rewards

Oct 10, 2025

Abstract:Animals achieve energy-efficient locomotion through their implicit passive dynamics, a marvel that has captivated roboticists for decades. Recently, methods combining Adversarial Motion Prior (AMP) and reinforcement learning (RL) have shown promising progress in replicating animals' naturalistic motion. However, such imitation learning approaches predominantly capture explicit kinematic patterns, so-called gaits, while overlooking the implicit passive dynamics. This work bridges this gap by incorporating a reward term guided by the Impact Mitigation Factor (IMF), a physics-informed metric that quantifies a robot's ability to passively mitigate impacts. By integrating IMF with AMP, our approach enables RL policies to learn both explicit motion trajectories from animal reference motion and the implicit passive dynamics. We demonstrate energy efficiency improvements of up to 32%, as measured by the Cost of Transport (CoT), across both AMP and handcrafted reward structures.
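The abstract reports its efficiency gain in terms of the Cost of Transport. As a minimal sketch, assuming the common dimensionless definition CoT = E / (m g d), the metric and a relative improvement can be computed as below. The function names and the sample numbers are hypothetical and are not taken from the paper, which may define or measure CoT differently.

```python
import math

G = 9.81  # standard gravity in m/s^2


def cost_of_transport(energy_j, mass_kg, distance_m, g=G):
    """Dimensionless Cost of Transport: energy used per unit weight per unit distance.

    Assumes the common definition CoT = E / (m * g * d); this is an
    illustrative assumption, not necessarily the paper's exact formula.
    """
    if mass_kg <= 0 or distance_m <= 0:
        raise ValueError("mass and distance must be positive")
    return energy_j / (mass_kg * g * distance_m)


def relative_improvement(cot_baseline, cot_new):
    """Fractional reduction in CoT relative to a baseline (0.2 means 20% lower)."""
    if cot_baseline <= 0:
        raise ValueError("baseline CoT must be positive")
    return (cot_baseline - cot_new) / cot_baseline


# Hypothetical numbers, for illustration only.
cot = cost_of_transport(energy_j=981.0, mass_kg=10.0, distance_m=10.0)
gain = relative_improvement(cot_baseline=0.5, cot_new=0.4)
print(f"CoT = {cot:.3f}, improvement = {gain:.1%}")
```

A lower CoT means less energy is spent moving the same weight over the same distance, so a positive relative improvement corresponds to a more efficient gait.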

Elevating Legal LLM Responses: Harnessing Trainable Logical Structures and Semantic Knowledge with Legal Reasoning

Feb 11, 2025

Abstract:Large Language Models (LLMs) have achieved impressive results across numerous domains, yet they experience notable deficiencies in legal question-answering tasks. LLMs often generate generalized responses that lack the logical specificity required for expert legal advice and are prone to hallucination, providing answers that appear correct but are unreliable. Retrieval-Augmented Generation (RAG) techniques offer partial solutions to address this challenge, but existing approaches typically focus only on semantic similarity, neglecting the logical structure essential to legal reasoning. In this paper, we propose the Logical-Semantic Integration Model (LSIM), a novel supervised framework that bridges semantic and logical coherence. LSIM comprises three components: reinforcement learning predicts a structured fact-rule chain for each question, a trainable Deep Structured Semantic Model (DSSM) retrieves the most relevant candidate questions by integrating semantic and logical features, and in-context learning generates the final answer using the retrieved content. Our experiments on a real-world legal QA dataset, validated through both automated metrics and human evaluation, demonstrate that LSIM significantly enhances accuracy and reliability compared to existing methods.

Enhanced Capture Point Control Using Thruster Dynamics and QP-Based Optimization for Harpy

Nov 21, 2024

Abstract:Our work aims to make significant strides in understanding unexplored locomotion control paradigms based on the integration of posture manipulation and thrust vectoring. These techniques are commonly seen in nature, such as Chukar birds using their wings to run on a nearly vertical wall. In this work, we developed a capture-point-based controller integrated with a quadratic programming (QP) solver, which is used to create a thruster-assisted dynamic bipedal walking controller for our state-of-the-art Harpy platform. Harpy is a bipedal robot capable of legged-aerial locomotion using its legs and thrusters attached to its main frame. While capture point control based on centroidal models for bipedal systems has been extensively studied, the use of these thrusters in determining the capture point for a bipedal robot has not been extensively explored. The addition of these external thrust forces can lead to interesting interpretations of locomotion, such as virtual buoyancy studied in aquatic-legged locomotion. In this work, we derive a thruster-assisted bipedal walking controller based on the capture point and implement it in simulation to study its performance.
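For readers unfamiliar with the capture point, the sketch below uses the textbook linear inverted pendulum form, where the capture point is x + ẋ/ω with ω = sqrt(g / z). The `vertical_thrust` term is a hypothetical illustration of the "virtual buoyancy" idea, modeled here as a reduction in effective gravity; it is an assumption made for this example and not the derivation used in the paper.

```python
import math


def capture_point(x, x_dot, com_height, mass=1.0, vertical_thrust=0.0, g=9.81):
    """Capture point of a linear inverted pendulum (1D).

    x, x_dot        : center-of-mass position (m) and velocity (m/s)
    com_height      : constant center-of-mass height z (m)
    vertical_thrust : hypothetical upward thrust (N), modeled as reducing
                      effective gravity (an assumption, not the paper's model)
    """
    if com_height <= 0 or mass <= 0:
        raise ValueError("com_height and mass must be positive")
    g_eff = g - vertical_thrust / mass
    if g_eff <= 0:
        raise ValueError("thrust must be smaller than the robot's weight")
    omega = math.sqrt(g_eff / com_height)  # natural frequency of the pendulum
    return x + x_dot / omega


# Without thrust: omega = 1 rad/s, so the capture point is 1 m ahead.
print(capture_point(x=0.0, x_dot=1.0, com_height=9.81))
# With 75% of the weight carried by thrust, the pendulum falls more slowly
# and the capture point moves farther ahead.
print(capture_point(x=0.0, x_dot=1.0, com_height=9.81,
                    mass=1.0, vertical_thrust=0.75 * 9.81))
```

Under this simplified model, upward thrust slows the pendulum dynamics, so the point where the robot must step to come to rest lies farther from the center of mass for the same velocity.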

Conjugate momentum based thruster force estimate in dynamic multimodal robot

Nov 21, 2024

Abstract:In a multi-modal system that combines thruster and legged locomotion to perform dynamic locomotion, such as our state-of-the-art Harpy platform, it is very important to have a proper estimate of the thruster force. Harpy is a bipedal robot capable of legged-aerial locomotion using its legs and thrusters attached to its main frame. We can characterize thruster force using a thrust stand, but this generally does not account for working conditions such as battery voltage. In this study, we present a momentum-based thruster force estimator. One key piece of information required for estimation is terrain information. We show estimation results with and without terrain knowledge. In this work, we derive a conjugate momentum thruster force estimator and implement it on a numerical simulator that uses thruster force to perform thruster-assisted walking.

Quadratic Programming Optimization for Bio-Inspired Thruster-Assisted Bipedal Locomotion on Inclined Slopes

Nov 20, 2024

Abstract:Our work aims to make significant strides in understanding unexplored locomotion control paradigms based on the integration of posture manipulation and thrust vectoring. These techniques are commonly seen in nature, such as Chukar birds using their wings to run on a nearly vertical wall. In this work, we present a quadratic programming formulation with contact constraints whose solution is given to the whole-body controller and mapped onto robot states, producing a thruster-assisted slope walking controller for our state-of-the-art Harpy platform. Harpy is a bipedal robot capable of legged-aerial locomotion using its legs and thrusters attached to its main frame. Optimization-based walking controllers have been used for dynamic locomotion such as slope walking, but the addition of thrusters to perform inclined slope walking has not been extensively explored. In this work, we derive a thruster-assisted bipedal walking controller based on quadratic programming (QP) and implement it in simulation to study its performance.

Enabling steep slope walking on Husky using reduced order modeling and quadratic programming

Nov 18, 2024

Abstract:Wing-assisted inclined running (WAIR), observed in some young birds, is an attractive maneuver that can be extended to legged aerial systems. This study proposes a control method using a modified Variable Length Inverted Pendulum (VLIP) by assuming a fixed zero moment point and thruster forces collocated at the center of mass of the pendulum. A QP MPC is used to find the optimal ground reaction forces and thruster forces to track a reference position and velocity trajectory. In simulation, this VLIP model is maintained on a slope of 40 degrees, and the results show thruster forces that can be obtained through posture manipulation. The simulation also provides insight into how the combined efforts of the thrusters and the tractive forces from the legs make WAIR possible in thruster-assisted legged systems.

Optimization free control and ground force estimation with momentum observer for a multimodal legged aerial robot

Nov 18, 2024

Abstract:Legged-aerial multimodal robots can make the most of both legged and aerial systems. In this paper, we propose a control framework that bypasses heavy onboard computers by using an optimization-free Explicit Reference Governor (ERG) that incorporates external thruster forces from an attitude controller. Ground reaction forces are typically maintained within friction cone constraints using costly optimization solvers; the ERG framework instead filters the applied velocity references to ensure no slippage at the foot end. We also propose using a conjugate momentum observer, which is widely used in disturbance observation, to estimate ground reaction forces, and we compare its efficacy against a constrained model in estimating ground reaction forces in a reduced-order simulation of Husky.
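The no-slip condition mentioned in this abstract is usually expressed as a friction cone constraint: the tangential ground reaction force must not exceed the friction coefficient times the normal force. The check below is a minimal sketch of that standard Coulomb friction condition, assuming flat ground with the normal along z. The function name and values are hypothetical and do not reproduce the ERG filter described in the paper.

```python
import math


def within_friction_cone(fx, fy, fz, mu):
    """Return True if a ground reaction force satisfies the Coulomb friction cone.

    fx, fy : tangential force components (N)
    fz     : normal force component (N); must be positive for contact
    mu     : friction coefficient (assumed known; hypothetical values below)
    """
    if fz <= 0:
        return False  # no contact, or the ground would have to pull on the foot
    return math.hypot(fx, fy) <= mu * fz


# Tangential magnitude is 5 N; the limit is mu * fz.
print(within_friction_cone(3.0, 4.0, 10.0, mu=0.6))  # limit 6 N
print(within_friction_cone(3.0, 4.0, 10.0, mu=0.4))  # limit 4 N
```

An optimization-based controller would impose this inequality as a constraint on the solver, whereas a reference governor keeps the commanded motion in a region where the inequality continues to hold.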

OrbitGrasp: $SE(3)$-Equivariant Grasp Learning

Jul 03, 2024

Abstract:While grasp detection is an important part of any robotic manipulation pipeline, reliable and accurate grasp detection in $SE(3)$ remains a research challenge. Many robotics applications in unstructured environments such as the home or warehouse would benefit substantially from better grasp performance. This paper proposes a novel framework for detecting $SE(3)$ grasp poses based on point cloud input. Our main contribution is an $SE(3)$-equivariant model that maps each point in the cloud to a continuous grasp quality function over the 2-sphere $S^2$ using a spherical harmonic basis. Compared with reasoning about a finite set of samples, this formulation improves the accuracy and efficiency of our model when a large number of samples would otherwise be needed. To accomplish this, we propose a novel variation on EquiFormerV2 that leverages a UNet-style backbone to enlarge the number of points the model can handle. Our resulting method, which we name OrbitGrasp, significantly outperforms baselines in both simulation and physical experiments.
