Zhichao Liu

Vision-assisted Avocado Harvesting with Aerial Bimanual Manipulation

Aug 17, 2024

Abstract:Robotic fruit harvesting holds potential in precision agriculture to improve harvesting efficiency. While ground mobile robots are mostly employed in fruit harvesting, certain crops, like avocado trees, cannot be harvested efficiently from the ground alone. This is because of unstructured ground and planting arrangement and hard-to-reach fruits. In such cases, aerial robots integrated with manipulation capabilities can pave new ways in robotic harvesting. This paper outlines the design and implementation of a bimanual UAV that employs visual perception and learning to autonomously detect avocados, reach, and harvest them. The dual-arm system comprises a gripper and a fixer arm, to address a key challenge when harvesting avocados: once grasped, a rotational motion is the most efficient way to detach the avocado from the peduncle; however, the peduncle may store elastic energy preventing the avocado from being harvested. The fixer arm aims to stabilize the peduncle, allowing the gripper arm to harvest. The integrated visual perception process enables the detection of avocados and the determination of their pose; the latter is then used to determine target points for a bimanual manipulation planner. Several experiments are conducted to assess the efficacy of each component, and integrated experiments assess the effectiveness of the system.

ChipExpert: The Open-Source Integrated-Circuit-Design-Specific Large Language Model

Jul 26, 2024

Abstract:The field of integrated circuit (IC) design is highly specialized, presenting significant barriers to entry and research and development challenges. Although large language models (LLMs) have achieved remarkable success in various domains, existing LLMs often fail to meet the specific needs of students, engineers, and researchers. Consequently, the potential of LLMs in the IC design domain remains largely unexplored. To address these issues, we introduce ChipExpert, the first open-source, instructional LLM specifically tailored for the IC design field. ChipExpert is trained on one of the current best open-source base models (Llama-3 8B). The entire training process encompasses several key stages, including data preparation, continued pre-training, instruction-guided supervised fine-tuning, preference alignment, and evaluation. In the data preparation stage, we construct multiple high-quality custom datasets through manual selection and data synthesis techniques. In the subsequent two stages, ChipExpert acquires a vast amount of IC design knowledge and learns how to respond to user queries professionally. ChipExpert also undergoes an alignment phase, using Direct Preference Optimization, to achieve a high standard of ethical performance. Finally, to mitigate the hallucinations of ChipExpert, we have developed a Retrieval-Augmented Generation (RAG) system, based on the IC design knowledge base. We also released the first IC design benchmark ChipICD-Bench, to evaluate the capabilities of LLMs across multiple IC design sub-domains. Through comprehensive experiments conducted on this benchmark, ChipExpert demonstrated a high level of expertise in IC design knowledge Question-and-Answer tasks.
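For readers unfamiliar with the alignment step named above, the standard Direct Preference Optimization objective can be written in a few lines. This is a minimal NumPy sketch of the generic DPO loss, not ChipExpert's training code; the function name `dpo_loss`, the argument names, and the default `beta` are assumptions for illustration. Inputs are summed log-probabilities of the preferred ("chosen") and dispreferred ("rejected") responses under the policy being trained and under a frozen reference model.

```python
import numpy as np

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Mean DPO loss over a batch of preference pairs (illustrative sketch)."""
    pc = np.asarray(policy_chosen_logp, dtype=float)
    pr = np.asarray(policy_rejected_logp, dtype=float)
    rc = np.asarray(ref_chosen_logp, dtype=float)
    rr = np.asarray(ref_rejected_logp, dtype=float)
    # How much more the policy prefers the chosen response than the reference does.
    margin = (pc - rc) - (pr - rr)
    # -log(sigmoid(beta * margin)) computed stably as log(1 + exp(-beta * margin)).
    return float(np.mean(np.logaddexp(0.0, -beta * margin)))
```

When the policy equals the reference the margin is zero and the loss is log 2; it decreases as the policy shifts probability toward the chosen responses.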

An unsupervised approach towards promptable defect segmentation in laser-based additive manufacturing by Segment Anything

Dec 07, 2023

Abstract:Foundation models are currently driving a paradigm shift in computer vision tasks for various fields including biology, astronomy, and robotics among others, leveraging user-generated prompts to enhance their performance. In the manufacturing domain, accurate image-based defect segmentation is imperative to ensure product quality and facilitate real-time process control. However, such tasks are often characterized by multiple challenges including the absence of labels and the requirement for low latency inference among others. To address these issues, we construct a framework for image segmentation using a state-of-the-art Vision Transformer (ViT) based Foundation model (Segment Anything Model) with a novel multi-point prompt generation scheme using unsupervised clustering. We apply our framework to perform real-time porosity segmentation in a case study of laser-based powder bed fusion (L-PBF) and obtain high Dice Similarity Coefficients (DSC) without the necessity for any supervised fine-tuning in the model. Using such lightweight foundation model inference in conjunction with unsupervised prompt generation, we envision the construction of a real-time anomaly detection pipeline that has the potential to revolutionize the current laser-based additive manufacturing processes, thereby facilitating the shift towards Industry 4.0 and promoting defect-free production along with operational efficiency.
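The Dice Similarity Coefficient reported above compares a predicted binary mask A with a reference mask B as 2|A ∩ B| / (|A| + |B|). The following is a minimal NumPy sketch of that metric only; the function name `dice_coefficient` and the convention of returning 1.0 when both masks are empty are assumptions for illustration, not details taken from the paper.

```python
import numpy as np

def dice_coefficient(pred, target):
    """Dice Similarity Coefficient between two binary masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    total = pred.sum() + target.sum()
    if total == 0:
        # Assumed convention: two empty masks count as a perfect match.
        return 1.0
    intersection = np.logical_and(pred, target).sum()
    return 2.0 * intersection / total
```

A value of 1.0 means the masks coincide exactly and 0.0 means they do not overlap at all.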

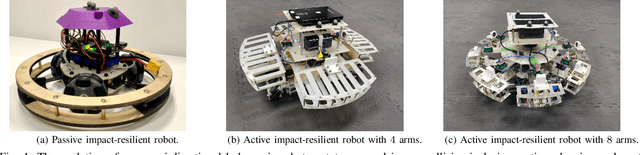

Dynamic Modeling and Analysis of Impact-resilient MAVs Undergoing High-speed and Large-angle Collisions with the Environment

Jul 21, 2023

Abstract:Micro Aerial Vehicles (MAVs) often face a high risk of collision during autonomous flight, particularly in cluttered and unstructured environments. To mitigate the collision impact on sensitive onboard devices, resilient MAVs with mechanical protective cages and reinforced frames are commonly used. However, compliant and impact-resilient MAVs offer a promising alternative by reducing the potential damage caused by impacts. In this study, we present novel findings on the impact-resilient capabilities of MAVs equipped with passive springs in their compliant arms. We analyze the effect of compliance through dynamic modeling and demonstrate that the inclusion of passive springs enhances impact resilience. The impact resilience is extensively tested to stabilize the MAV following wall collisions under high-speed and large-angle conditions. Additionally, we provide comprehensive comparisons with rigid MAVs to better determine the tradeoffs in flight by embedding compliance onto the robot's frame.

Contact-Prioritized Planning of Impact-Resilient Aerial Robots with an Integrated Compliant Arm

May 24, 2023

Abstract:The article develops an impact-resilient aerial robot (s-ARQ) equipped with a compliant arm to sense contacts and reduce collision impact and featuring a real-time contact force estimator and a non-linear motion controller to handle collisions while performing aggressive maneuvers and stabilize from high-speed wall collisions. Further, a new collision-inclusive planning method that aims to prioritize contacts to facilitate aerial robot navigation in cluttered environments is proposed. A range of simulated and physical experiments demonstrate key benefits of the robot and the contact-prioritized (CP) planner. Experimental results show that the compliant robot has only a $4\%$ weight increase but around $40\%$ impact reduction in drop tests and wall collision tests. s-ARQ can handle collisions while performing aggressive maneuvers and stabilize from high-speed wall collisions at $3.0$ m/s with a success rate of $100\%$. Our proposed compliant robot and contact-prioritized planning method can accelerate computation time while having shorter trajectory time and larger clearances compared to A$^\ast$ and RRT$^\ast$ planners with velocity constraints. Online planning tests in partially-known environments further demonstrate the preliminary feasibility of our method to apply in practical use cases.

Temporal Segment Transformer for Action Segmentation

Feb 25, 2023

Abstract:Recognizing human actions from untrimmed videos is an important task in activity understanding, and poses unique challenges in modeling long-range temporal relations. Recent works adopt a predict-and-refine strategy which converts an initial prediction to action segments for global context modeling. However, the generated segment representations are often noisy and exhibit inaccurate segment boundaries, over-segmentation and other problems. To deal with these issues, we propose an attention based approach which we call temporal segment transformer, for joint segment relation modeling and denoising. The main idea is to denoise segment representations using attention between segment and frame representations, and also use inter-segment attention to capture temporal correlations between segments. The refined segment representations are used to predict action labels and adjust segment boundaries, and a final action segmentation is produced based on voting from segment masks. We show that this novel architecture achieves state-of-the-art accuracy on the popular 50Salads, GTEA and Breakfast benchmarks. We also conduct extensive ablations to demonstrate the effectiveness of different components of our design.

Time-Series Pattern Recognition in Smart Manufacturing Systems: A Literature Review and Ontology

Jan 29, 2023

Abstract:Since the inception of Industry 4.0 in 2012, emerging technologies have enabled the acquisition of vast amounts of data from diverse sources such as machine tools, robust and affordable sensor systems with advanced information models, and other sources within Smart Manufacturing Systems (SMS). As a result, the amount of data that is available in manufacturing settings has exploded, allowing data-hungry tools such as Artificial Intelligence (AI) and Machine Learning (ML) to be leveraged. Time-series analytics has been successfully applied in a variety of industries, and that success is now being migrated to pattern recognition applications in manufacturing to support higher quality products, zero defect manufacturing, and improved customer satisfaction. However, the diverse landscape of manufacturing presents a challenge for successfully solving problems in industry using time-series pattern recognition. The resulting research gap of understanding and applying the subject matter of time-series pattern recognition in manufacturing is a major limiting factor for adoption in industry. The purpose of this paper is to provide a structured perspective of the current state of time-series pattern recognition in manufacturing with a problem-solving focus. By using an ontology to classify and define concepts, how they are structured, their properties, the relationships between them, and considerations when applying them, this paper aims to provide practical and actionable guidelines for application and recommendations for advancing time-series analytics.

Koopman Operators for Modeling and Control of Soft Robotics

Jan 23, 2023

Abstract:Purpose of review: We review recent advances in algorithmic development and validation for modeling and control of soft robots leveraging the Koopman operator theory. Recent findings: We identify the following trends in recent research efforts in this area. (1) The design of lifting functions used in the data-driven approximation of the Koopman operator is critical for soft robots. (2) Robustness considerations are emphasized. Works are proposed to reduce the effect of uncertainty and noise during the process of modeling and control. (3) The Koopman operator has been embedded into different model-based control structures to drive the soft robots. Summary: Because of their compliance and nonlinearities, modeling and control of soft robots face key challenges. To resolve these challenges, Koopman operator-based approaches have been proposed, in an effort to express the nonlinear system in a linear manner. The Koopman operator enables global linearization to reduce nonlinearities and/or serves as model constraints in model-based control algorithms for soft robots. Various implementations in soft robotic systems are illustrated and summarized in the review.
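A common way to obtain the data-driven Koopman approximation mentioned in trend (1) is extended dynamic mode decomposition (EDMD): lift each state snapshot with a chosen set of functions, then fit a linear map between consecutive lifted snapshots by least squares. The sketch below illustrates this on a toy system and is not drawn from any of the reviewed works; the dynamics in `step`, the monomial lifting in `lift`, and all parameter values are hypothetical and were picked so that the lifted dynamics are exactly linear.

```python
import numpy as np

def step(x, a=0.9, b=0.5, c=0.3):
    """Toy nonlinear discrete-time system (hypothetical, for illustration)."""
    x1, x2 = x
    return np.array([a * x1, b * x2 + c * x1**2])

def lift(x):
    """Hypothetical lifting functions: [x1, x2] -> [x1, x2, x1^2]."""
    x1, x2 = x
    return np.array([x1, x2, x1**2])

def fit_koopman(X, Y):
    """Least-squares fit of K such that lift(y) ~= K @ lift(x) for each pair."""
    PX = np.array([lift(x) for x in X])  # lifted current states, shape (n, 3)
    PY = np.array([lift(y) for y in Y])  # lifted next states, shape (n, 3)
    Kt, *_ = np.linalg.lstsq(PX, PY, rcond=None)  # solves PX @ K.T ~= PY
    return Kt.T

rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 2))  # sampled states
Y = np.array([step(x) for x in X])         # their one-step successors
K = fit_koopman(X, Y)
```

For this toy system the lifted state evolves linearly, so the fitted `K` recovers the matrix with rows `[a, 0, 0]`, `[0, b, c]`, and `[0, 0, a**2]` up to numerical precision. For a real soft robot no finite lifting is generally exact, which is why the choice of lifting functions matters so much.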

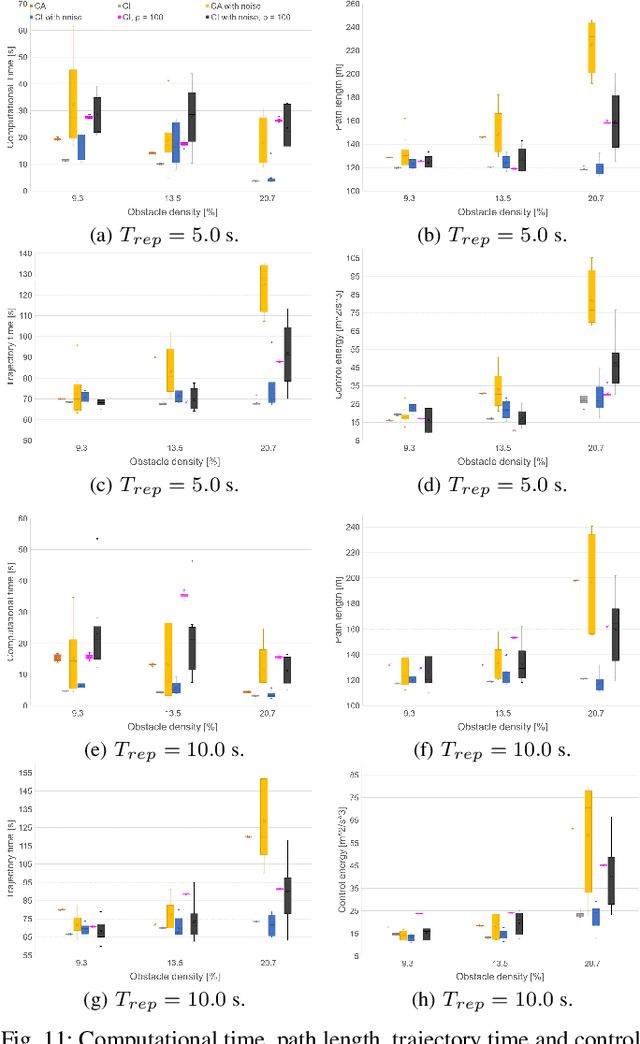

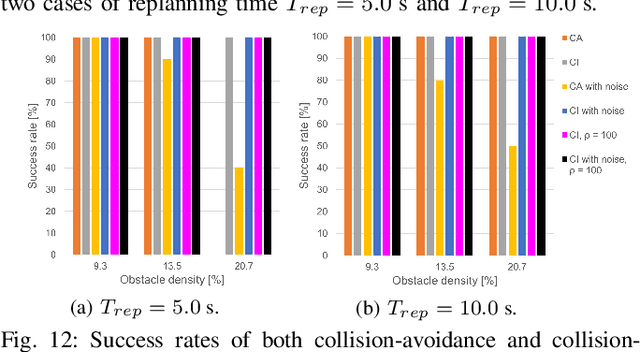

Online Search-based Collision-inclusive Motion Planning and Control for Impact-resilient Mobile Robots

Oct 05, 2022

Abstract:This paper focuses on the emerging paradigm shift of collision-inclusive motion planning and control for impact-resilient mobile robots, and develops a unified hierarchical framework for navigation in unknown and partially-observable cluttered spaces. At the lower-level, we develop a deformation recovery control and trajectory replanning strategy that handles collisions that may occur at run-time, locally. The low-level system actively detects collisions (via embedded Hall effect sensors on a mobile robot built in-house), enables the robot to recover from them, and locally adjusts the post-impact trajectory. Then, at the higher-level, we propose a search-based planning algorithm to determine how to best utilize potential collisions to improve certain metrics, such as control energy and computational time. Our method builds upon A* with jump points. We generate a novel heuristic function, and a collision checking and adjustment technique, thus making the A* algorithm converge faster to reach the goal by exploiting and utilizing possible collisions. The overall hierarchical framework generated by combining the global A* algorithm and the local deformation recovery and replanning strategy, as well as individual components of this framework, are tested extensively both in simulation and experimentally. An ablation study draws links to related state-of-the-art search-based collision-avoidance planners (for the overall framework), as well as search-based collision-avoidance and sampling-based collision-inclusive global planners (for the higher level). Results demonstrate our method's efficacy for collision-inclusive motion planning and control in unknown environments with isolated obstacles for a class of impact-resilient robots operating in 2D.

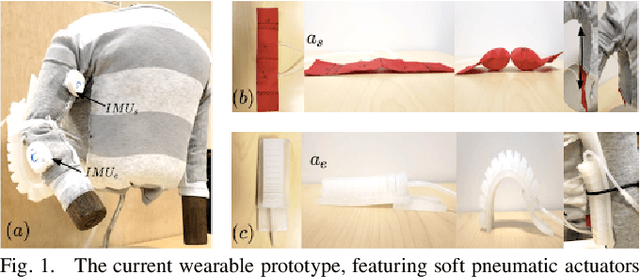

Closed-loop Position Control of a Pediatric Soft Robotic Wearable Device for Upper Extremity Assistance

Jun 16, 2022

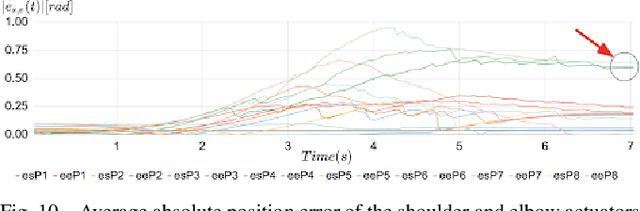

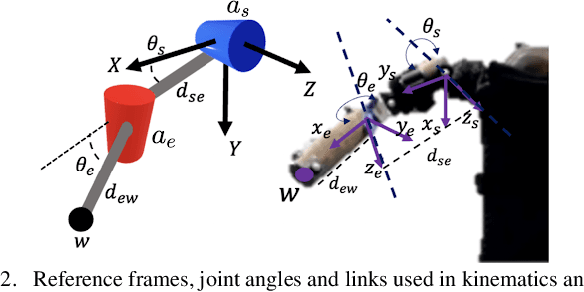

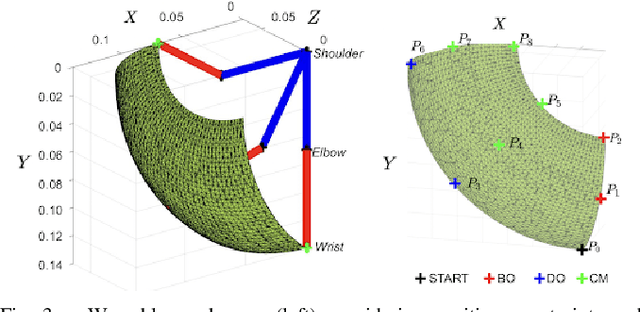

Abstract:This work focuses on closed-loop control based on proprioceptive feedback for a pneumatically-actuated soft wearable device aimed at future support of infant reaching tasks. The device comprises two soft pneumatic actuators (one textile-based and one silicone-casted) actively controlling two degrees-of-freedom per arm (shoulder adduction/abduction and elbow flexion/extension, respectively). Inertial measurement units (IMUs) attached to the wearable device provide real-time joint angle feedback. Device kinematics analysis is informed by anthropometric data from infants (arm lengths) reported in the literature. Range of motion and muscle co-activation patterns in infant reaching are considered to derive desired trajectories for the device's end-effector. Then, a proportional-derivative controller is developed to regulate the pressure inside the actuators and in turn move the arm along desired setpoints within the reachable workspace. Experimental results on tracking desired arm trajectories using an engineered mannequin are presented, demonstrating that the proposed controller can help guide the mannequin's wrist to the desired setpoints.
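The proportional-derivative control loop described above can be sketched as a discrete-time controller that maps joint-angle error to a bounded actuator command. This is a minimal illustration under stated assumptions rather than the authors' implementation: the class name `PDController`, the gains, the sample time, and the normalized command limits are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PDController:
    kp: float            # proportional gain (hypothetical value chosen by the user)
    kd: float            # derivative gain (hypothetical value chosen by the user)
    dt: float            # sample time in seconds
    u_min: float = 0.0   # assumed lower bound on the normalized pressure command
    u_max: float = 1.0   # assumed upper bound on the normalized pressure command
    _prev_error: Optional[float] = None

    def update(self, setpoint: float, measurement: float) -> float:
        """Return a saturated command from the desired and measured joint angle."""
        error = setpoint - measurement
        # No derivative term on the first sample, since no previous error exists.
        if self._prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self._prev_error) / self.dt
        self._prev_error = error
        u = self.kp * error + self.kd * derivative
        return min(max(u, self.u_min), self.u_max)
```

In use, `update` would be called once per sample with the desired joint angle and the IMU-derived joint angle, and the returned value would be scaled to the actuator's pressure range.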

* 6 pages
