Xinyi Wang

University of Maryland, College Park & Beijing Normal University

ASMA-Tune: Unlocking LLMs' Assembly Code Comprehension via Structural-Semantic Instruction Tuning

Mar 14, 2025

Abstract:Analysis and comprehension of assembly code are crucial in various applications, such as reverse engineering. However, the low information density and lack of explicit syntactic structures in assembly code pose significant challenges. Pioneering approaches based on masked language modeling (MLM) have been limited in facilitating natural language interaction. While recent methods based on decoder-focused large language models (LLMs) have significantly enhanced semantic representation, they still struggle to capture the nuanced and sparse semantics in assembly code. In this paper, we propose Assembly Augmented Tuning (ASMA-Tune), an end-to-end structural-semantic instruction-tuning framework. Our approach synergizes encoder architectures with decoder-based LLMs through projector modules to enable comprehensive code understanding. Experiments show that ASMA-Tune outperforms existing benchmarks, significantly enhancing assembly code comprehension and instruction-following abilities. Our model and dataset are public at https://github.com/wxy3596/ASMA-Tune.

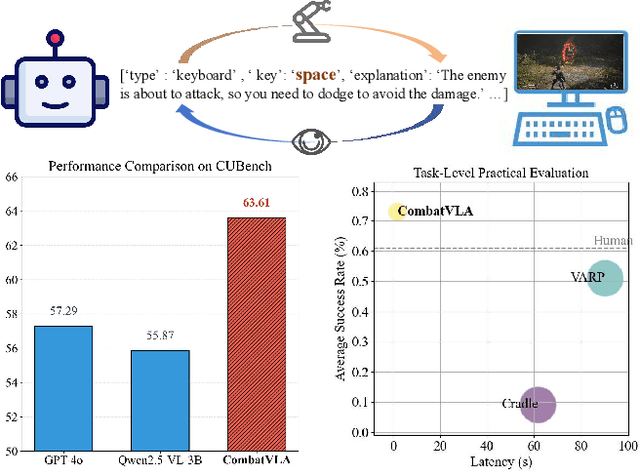

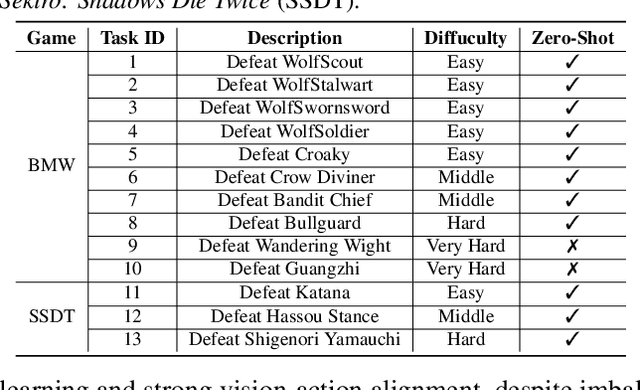

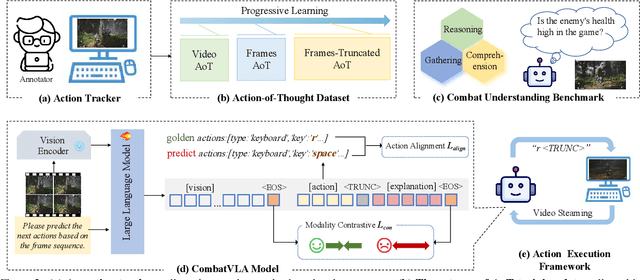

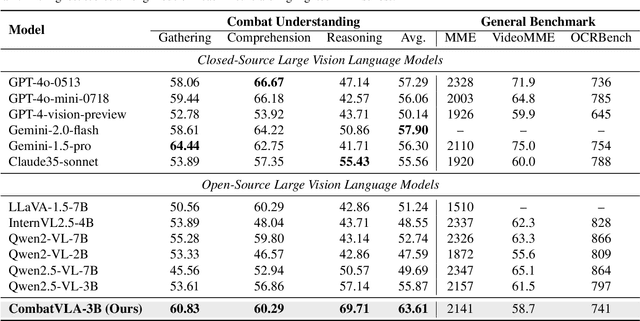

CombatVLA: An Efficient Vision-Language-Action Model for Combat Tasks in 3D Action Role-Playing Games

Mar 12, 2025

Abstract:Recent advances in Vision-Language-Action models (VLAs) have expanded the capabilities of embodied intelligence. However, significant challenges remain in real-time decision-making in complex 3D environments, which demand second-level responses, high-resolution perception, and tactical reasoning under dynamic conditions. To advance the field, we introduce CombatVLA, an efficient VLA model optimized for combat tasks in 3D action role-playing games (ARPGs). Specifically, CombatVLA is a 3B model trained on video-action pairs collected by an action tracker, where the data is formatted as action-of-thought (AoT) sequences. Thereafter, CombatVLA seamlessly integrates into an action execution framework, allowing efficient inference through our truncated AoT strategy. Experimental results demonstrate that CombatVLA not only outperforms all existing models on the combat understanding benchmark but also achieves a 50-fold acceleration in game combat. Moreover, it has a higher task success rate than human players. We will open-source all resources, including the action tracker, dataset, benchmark, model weights, training code, and the implementation of the framework at https://combatvla.github.io/.

GM-MoE: Low-Light Enhancement with Gated-Mechanism Mixture-of-Experts

Mar 10, 2025

Abstract:Low-light enhancement has wide applications in autonomous driving, 3D reconstruction, remote sensing, surveillance, and so on, where it can significantly improve information utilization. However, most existing methods lack generalization and are limited to specific tasks such as image recovery. To address these issues, we propose Gated-Mechanism Mixture-of-Experts (GM-MoE), the first framework to introduce a mixture-of-experts network for low-light image enhancement. GM-MoE comprises a dynamic gated weight conditioning network and three sub-expert networks, each specializing in a distinct enhancement task. A self-designed gated mechanism dynamically adjusts the weights of the sub-expert networks for different data domains. Additionally, we integrate local and global feature fusion within the sub-expert networks to enhance image quality by capturing multi-scale features. Experimental results demonstrate that GM-MoE achieves superior generalization compared with 25 other approaches, reaching state-of-the-art performance in PSNR on 5 benchmarks and in SSIM on 4 benchmarks.
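The gating idea in this abstract can be illustrated with a minimal sketch. It is not the GM-MoE implementation: the three toy experts (`brighten`, `smooth`, `identity`), the softmax gate, and the hand-picked `gate_scores` are all hypothetical stand-ins, and in the actual framework the weights would come from a learned conditioning network rather than from fixed numbers.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def gated_mixture(pixels, experts, gate_scores):
    """Blend the outputs of several experts using softmax gate weights."""
    weights = softmax(gate_scores)
    outputs = [expert(pixels) for expert in experts]
    blended = [
        sum(w * out[i] for w, out in zip(weights, outputs))
        for i in range(len(pixels))
    ]
    return blended, weights

# Hypothetical toy experts operating on a flat list of intensities in [0, 1].
def brighten(pixels):
    return [min(1.0, 2.0 * v) for v in pixels]

def smooth(pixels):
    mean = sum(pixels) / len(pixels)
    return [0.5 * v + 0.5 * mean for v in pixels]

def identity(pixels):
    return list(pixels)

pixels = [0.1, 0.2, 0.4]
# Assumed gate scores; a real gate would compute these from the input.
blended, weights = gated_mixture(pixels, [brighten, smooth, identity], [2.0, 0.5, 0.1])
```

Because the weights sum to one, each blended value stays between the smallest and largest expert output for that pixel, which is the property that lets a gate shift emphasis between experts per data domain.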

AugRefer: Advancing 3D Visual Grounding via Cross-Modal Augmentation and Spatial Relation-based Referring

Jan 16, 2025

Abstract:3D visual grounding (3DVG), which aims to correlate a natural language description with the target object within a 3D scene, is a significant yet challenging task. Despite recent advancements in this domain, existing approaches commonly suffer from the limited amount and diversity of text-3D pairs available for training. Moreover, they fall short in effectively leveraging different contextual clues (e.g., rich spatial relations within the 3D visual space) for grounding. To address these limitations, we propose AugRefer, a novel approach for advancing 3D visual grounding. AugRefer introduces cross-modal augmentation designed to extensively generate diverse text-3D pairs by placing objects into 3D scenes and creating accurate and semantically rich descriptions using foundation models. Notably, the resulting pairs can be utilized by any existing 3DVG method to enrich its training data. Additionally, AugRefer presents a language-spatial adaptive decoder that effectively adapts the potential referring objects based on the language description and various 3D spatial relations. Extensive experiments on three benchmark datasets clearly validate the effectiveness of AugRefer.

MixedGaussianAvatar: Realistically and Geometrically Accurate Head Avatar via Mixed 2D-3D Gaussian Splatting

Dec 06, 2024

Abstract:Reconstructing high-fidelity 3D head avatars is crucial in various applications such as virtual reality. The pioneering methods reconstruct realistic head avatars with Neural Radiance Fields (NeRF), which have been limited by training and rendering speed. Recent methods based on 3D Gaussian Splatting (3DGS) significantly improve the efficiency of training and rendering. However, the surface inconsistency of 3DGS results in subpar geometric accuracy; later, 2DGS uses 2D surfels to enhance geometric accuracy at the expense of rendering fidelity. To leverage the benefits of both 2DGS and 3DGS, we propose a novel method named MixedGaussianAvatar for realistically and geometrically accurate head avatar reconstruction. Our main idea is to utilize 2D Gaussians to reconstruct the surface of the 3D head, ensuring geometric accuracy. We attach the 2D Gaussians to the triangular mesh of the FLAME model and connect additional 3D Gaussians to those 2D Gaussians where the rendering quality of 2DGS is inadequate, creating a mixed 2D-3D Gaussian representation. These 2D-3D Gaussians can then be animated using FLAME parameters. We further introduce a progressive training strategy that first trains the 2D Gaussians and then fine-tunes the mixed 2D-3D Gaussians. We demonstrate the superiority of MixedGaussianAvatar through comprehensive experiments. The code will be released at: https://github.com/ChenVoid/MGA/.

Deep Learning-Enabled ISAC-OTFS Pre-equalization Design for Aerial-Terrestrial Networks

Dec 06, 2024

Abstract:Orthogonal time frequency space (OTFS) modulation has been viewed as a promising technique for integrated sensing and communication (ISAC) systems and aerial-terrestrial networks, due to its delay-Doppler domain transmission property and strong Doppler-resistance capability. However, it also suffers from high processing complexity at the receiver. In this work, we propose a novel pre-equalization based ISAC-OTFS transmission framework, where the terrestrial base station (BS) executes pre-equalization based on its estimated channel state information (CSI). In particular, the mean square error of OTFS symbol demodulation and the Cramer-Rao lower bound of sensing parameter estimation are derived, and their weighted sum is utilized as the metric for optimizing the pre-equalization matrix. To address the formulated problem while taking the time-varying CSI into consideration, a deep learning enabled channel prediction-based pre-equalization framework is proposed, where a parameter-level channel prediction module is utilized to decouple OTFS channel parameters, and a low-dimensional prediction network is leveraged to correct outdated CSI. A CSI processing module is then used to initialize the input of the pre-equalization module. Finally, a residual-structured deep neural network is cascaded to execute pre-equalization. Simulation results show that under the proposed framework, the demodulation complexity at the receiver and the pilot overhead for channel estimation are significantly reduced, while the symbol detection performance approaches those of conventional minimum mean square error equalization and perfect CSI.

IMNet: Interference-Aware Channel Knowledge Map Construction and Localization

Dec 02, 2024

Abstract:This paper presents a novel two-stage method for constructing channel knowledge maps (CKMs) specifically for A2G (Aerial-to-Ground) channels in the presence of non-cooperative interfering nodes (INs). We first estimate the interfering signal strength (ISS) at sampling locations based on total received signal strength measurements and the desired communication signal strength (DSS) map constructed with environmental topology. Next, an ISS map construction network (IMNet) is proposed, where a negative value correction module is included to enable precise reconstruction. Subsequently, we further execute signal-to-interference-plus-noise ratio map construction and IN localization. Simulation results demonstrate lower construction error of the proposed IMNet compared to baselines in the presence of interference.

Disentangling Memory and Reasoning Ability in Large Language Models

Nov 21, 2024

Abstract:Large Language Models (LLMs) have demonstrated strong performance in handling complex tasks requiring both extensive knowledge and reasoning abilities. However, the existing LLM inference pipeline operates as an opaque process without explicit separation between knowledge retrieval and reasoning steps, making the model's decision-making process unclear and disorganized. This ambiguity can lead to issues such as hallucinations and knowledge forgetting, which significantly impact the reliability of LLMs in high-stakes domains. In this paper, we propose a new inference paradigm that decomposes the complex inference process into two distinct and clear actions: (1) memory recall, which retrieves relevant knowledge, and (2) reasoning, which performs logical steps based on the recalled knowledge. To facilitate this decomposition, we introduce two special tokens memory and reason, guiding the model to distinguish between steps that require knowledge retrieval and those that involve reasoning. Our experimental results show that this decomposition not only improves model performance but also enhances the interpretability of the inference process, enabling users to identify sources of error and refine model responses effectively. The code is available at https://github.com/MingyuJ666/Disentangling-Memory-and-Reasoning.

Mutual Information-oriented ISAC Beamforming Design under Statistical CSI

Nov 20, 2024

Abstract:Existing integrated sensing and communication (ISAC) beamforming designs have mostly been developed under perfect instantaneous channel state information (CSI), limiting their use in practical dynamic environments. In this paper, we study the beamforming design for multiple-input multiple-output (MIMO) ISAC systems based on statistical CSI, with the weighted mutual information (MI) comprising sensing and communication perspectives adopted as the performance metric. In particular, operator-valued free probability theory is utilized to derive the closed-form expression for the weighted MI under statistical CSI. Subsequently, an efficient projected gradient ascent (PGA) algorithm is proposed to optimize the transmit beamforming matrix with the aim of maximizing the weighted MI. Numerical results validate that the derived closed-form expression matches well with the Monte Carlo simulation results and that the proposed optimization algorithm is able to improve the weighted MI significantly. We also illustrate the trade-off between sensing and communication MI.
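As a rough illustration of projected gradient ascent in general, the sketch below alternates a gradient step with a projection back onto a feasible set. Everything specific here is an assumption made for illustration: the toy concave objective stands in for the weighted MI (whose closed form is not given in the abstract), the variable is a real vector rather than a beamforming matrix, and the feasible set is taken to be a total power ball, which the abstract does not state.

```python
import math

def project_to_power_ball(x, power):
    """Project x onto {x : sum(x_i^2) <= power} by rescaling if needed."""
    norm_sq = sum(v * v for v in x)
    if norm_sq <= power:
        return list(x)
    scale = math.sqrt(power / norm_sq)
    return [v * scale for v in x]

def toy_gradient(x, gains):
    """Gradient of the toy concave objective f(x) = g.x - 0.5 * ||x||^2."""
    return [g - v for g, v in zip(gains, x)]

def projected_gradient_ascent(gains, power, step=0.1, iterations=200):
    """Repeat: ascend along the gradient, then project onto the power ball."""
    x = [0.0] * len(gains)
    for _ in range(iterations):
        grad = toy_gradient(x, gains)
        x = [v + step * d for v, d in zip(x, grad)]
        x = project_to_power_ball(x, power)
    return x

# Hypothetical problem data: the unconstrained maximizer [3, 4] violates the
# power budget, so the iterates end up on the boundary of the ball.
x_opt = projected_gradient_ascent(gains=[3.0, 4.0], power=4.0)
```

For this toy objective the constrained maximizer is the unconstrained one scaled back to the boundary, so the result can be checked by hand; a matrix-valued problem would replace the vector norm with a Frobenius norm in the projection.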

Underwater Image Enhancement with Cascaded Contrastive Learning

Nov 16, 2024

Abstract:Underwater image enhancement (UIE) is a highly challenging task due to the complexity of the underwater environment and the diversity of underwater image degradation. Thanks to the application of deep learning, current UIE methods have made significant progress. However, most existing deep learning-based UIE methods follow a single-stage network, which cannot effectively address the diverse degradations simultaneously. In this paper, we propose to address this issue by designing a two-stage deep learning framework and taking advantage of cascaded contrastive learning to guide the network training of each stage. The proposed method is called CCL-Net for short. Specifically, the proposed CCL-Net involves two cascaded stages, i.e., a color correction stage tailored to the color deviation issue and a haze removal stage tailored to improve the visibility and contrast of underwater images. To guarantee that the underwater image can be progressively enhanced, we also apply contrastive loss as an additional constraint to guide the training of each stage. In the first stage, the raw underwater images are used as negative samples for building the first contrastive loss, ensuring the enhanced results of the first color correction stage are better than the original inputs. In the second stage, the enhanced results of the first color correction stage, rather than the raw underwater images, are used as the negative samples for building the second contrastive loss, thus ensuring the final enhanced results of the second haze removal stage are better than the intermediate color-corrected results. Extensive experiments on multiple benchmark datasets demonstrate that our CCL-Net can achieve superior performance compared to many state-of-the-art methods. The source code of CCL-Net will be released at https://github.com/lewis081/CCL-Net.
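The cascaded choice of negative samples described in this abstract can be sketched as follows. This is only an illustration under stated assumptions: the ratio-of-L1-distances form of the loss, the use of a reference image as the positive sample, and the toy pixel values are all hypothetical, since the abstract specifies only which images serve as negatives at each stage.

```python
def l1_distance(a, b):
    """Mean absolute difference between two equally sized pixel lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def contrastive_loss(output, positive, negative, eps=1e-7):
    """Small when output is close to the positive and far from the negative."""
    return l1_distance(output, positive) / (l1_distance(output, negative) + eps)

# Hypothetical toy data: flat lists of pixel intensities.
raw = [0.2, 0.5, 0.3]            # degraded input
reference = [0.6, 0.6, 0.6]      # assumed positive sample
stage1_out = [0.5, 0.58, 0.5]    # output of the color correction stage
stage2_out = [0.58, 0.6, 0.59]   # output of the haze removal stage

# Stage 1: the raw image is the negative sample.
loss_stage1 = contrastive_loss(stage1_out, reference, raw)
# Stage 2: the stage-1 result, not the raw image, is the negative sample.
loss_stage2 = contrastive_loss(stage2_out, reference, stage1_out)
```

Using the stage-1 output as the stage-2 negative means the second loss only becomes small if the final result moves away from the intermediate result and toward the positive, which matches the progressive-enhancement goal stated above.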
