Shaoshan Liu

The Matter of Time -- A General and Efficient System for Precise Sensor Synchronization in Robotic Computing

Mar 30, 2021

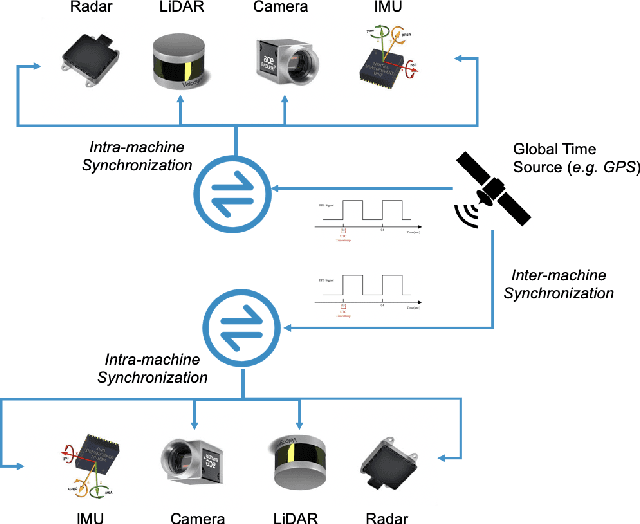

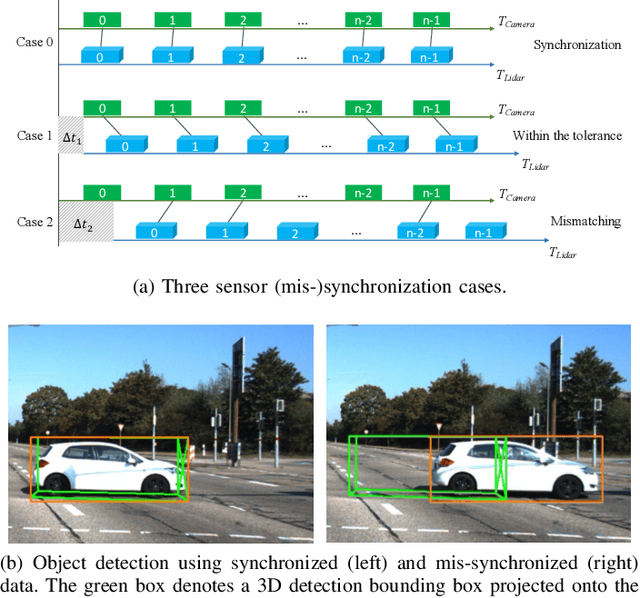

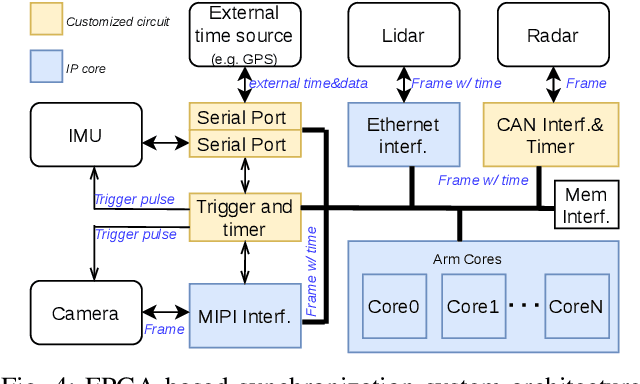

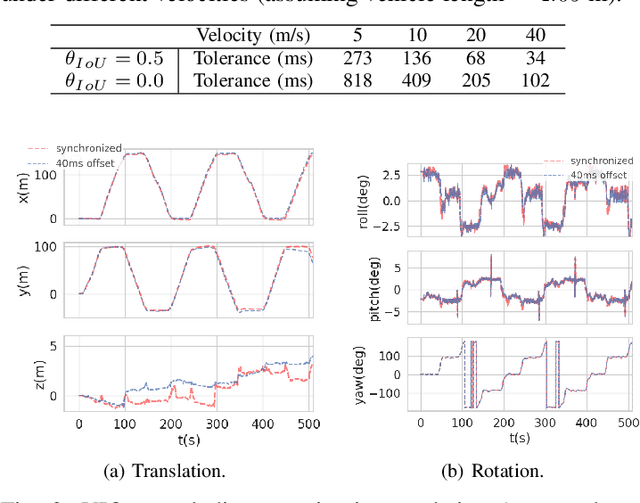

Abstract: Time synchronization is a critical task in robotic computing such as autonomous driving. In the past few years, as we developed advanced robotic applications, our synchronization system has evolved as well. In this paper, we first introduce the time synchronization problem and explain the challenges of time synchronization, especially in robotic workloads. Summarizing these challenges, we then present a general hardware synchronization system for robotic computing, which delivers high synchronization accuracy while maintaining low energy and resource consumption. The proposed hardware synchronization system is a key building block in our future robotic products.

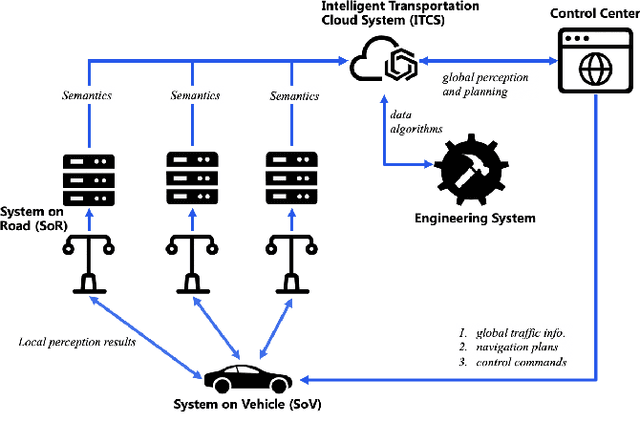

Towards Fully Intelligent Transportation through Infrastructure-Vehicle Cooperative Autonomous Driving: Challenges and Opportunities

Mar 03, 2021

Abstract: The infrastructure-vehicle cooperative autonomous driving approach depends on the cooperation between intelligent roads and intelligent vehicles. This approach is not only safer but also more economical compared to the traditional on-vehicle-only autonomous driving approach. In this paper, we introduce our real-world deployment experiences of cooperative autonomous driving, and delve into the details of new challenges and opportunities. Specifically, based on our progress towards commercial deployment, we follow a three-stage development roadmap of the cooperative autonomous driving approach: infrastructure-augmented autonomous driving (IAAD), infrastructure-guided autonomous driving (IGAD), and infrastructure-planned autonomous driving (IPAD).

Engineering Education in the Age of Autonomous Machines

Feb 16, 2021

Abstract: In the past few years, we have observed a huge supply-demand gap for autonomous driving engineers. The core problem is that autonomous driving is not one single technology but rather a complex system integrating many technologies, and no single academic department can provide comprehensive education in this field. We advocate creating a cross-disciplinary program that exposes students to technical background in computer science, computer engineering, electrical engineering, and mechanical engineering. On top of this cross-disciplinary technical foundation, a capstone project that gives students hands-on experience working with a real autonomous vehicle is required to consolidate the technical foundation.

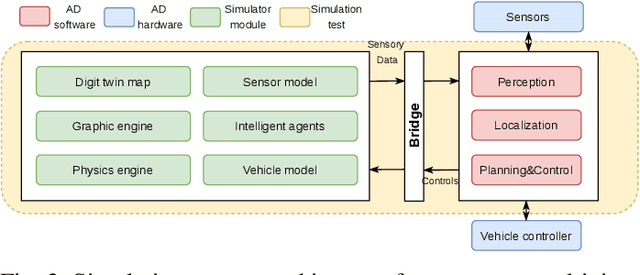

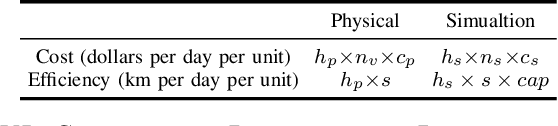

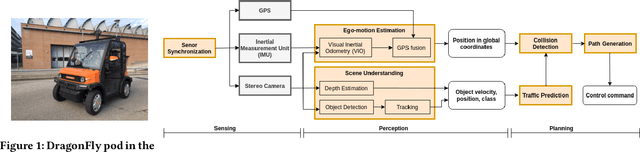

On Designing Computing Systems for Autonomous Vehicles: a PerceptIn Case Study

Nov 12, 2020

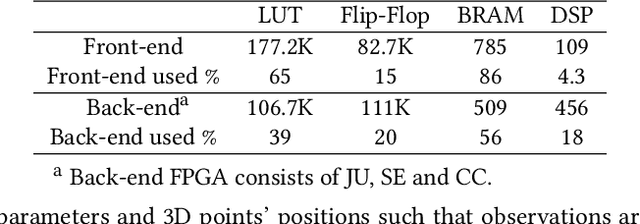

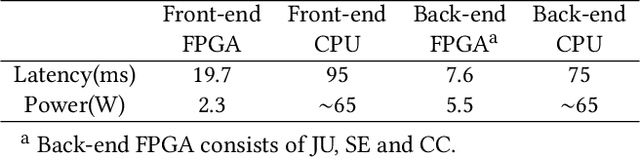

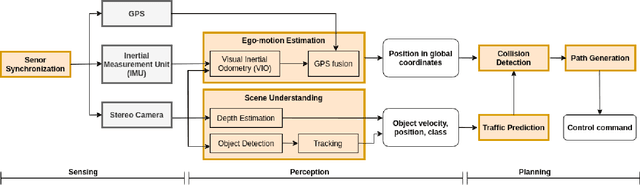

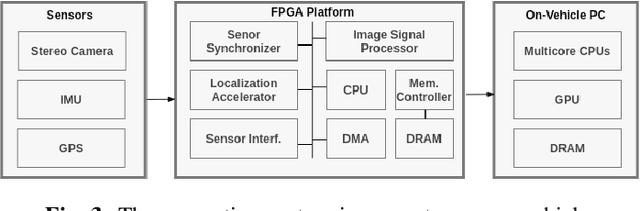

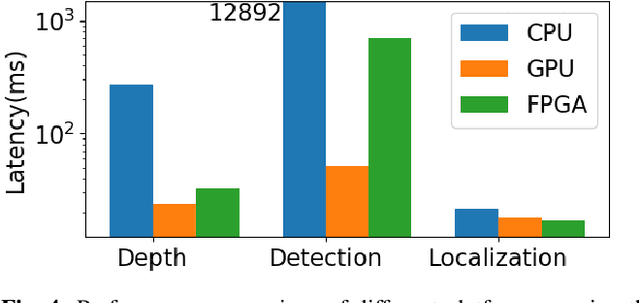

Abstract: PerceptIn develops and commercializes autonomous vehicles for micromobility around the globe. This paper provides a holistic summary of PerceptIn's development and operating experiences. It tells the business story behind our product and presents the development of the computing system for our vehicles. We illustrate the design decisions made for the computing system and show the advantage of offloading localization workloads onto an FPGA platform.

An Energy-Efficient High Definition Map Data Distribution Mechanism for Autonomous Driving

Oct 11, 2020

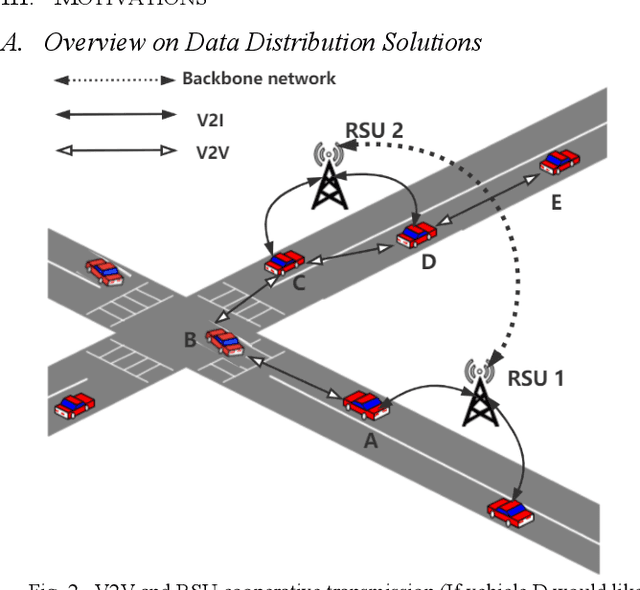

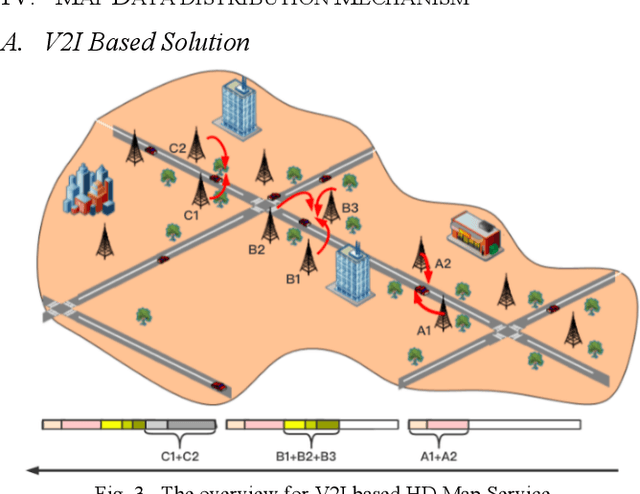

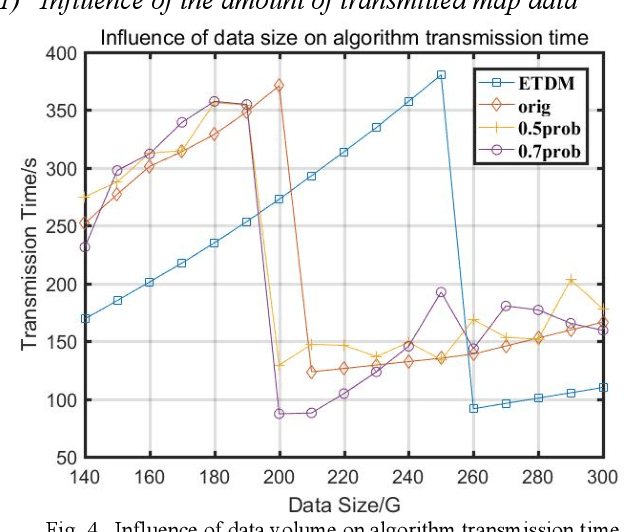

Abstract: Autonomous driving is now the promising future of transportation. As one basis for autonomous driving, the High Definition Map (HD map) provides high-precision descriptions of the environment; it therefore enables more accurate perception and localization while improving the efficiency of path planning. However, an extremely large amount of map data needs to be transmitted during driving, which poses a great challenge to the real-time and safety requirements of autonomous driving. To this end, we first demonstrate how the existing data distribution mechanism can support HD map services. Furthermore, considering the constraints of vehicle power, vehicle speed, base station bandwidth, etc., we propose an HD map data distribution mechanism on top of Vehicle-to-Infrastructure (V2I) data transmission. Under this mechanism, the map provision task is allocated to selected roadside unit (RSU) nodes, which transmit proportionate shares of the HD map data cooperatively. Their work on map data loading aims to provide timely HD map data service with optimized in-vehicle energy consumption. Finally, we model the selection of RSU nodes as a partial knapsack problem and propose a greedy strategy-based data transmission algorithm. Experimental results confirm that, within limited energy consumption, the proposed mechanism can ensure HD map data service by coordinating multiple RSUs with the shortest data transmission time.
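
The abstract above names a partial (fractional) knapsack formulation solved greedily. The following is only a minimal sketch of that general technique, not the paper's algorithm: the `RSU` fields, the data-per-joule ranking, and the function name `greedy_allocate` are all assumptions made for illustration, and the paper's actual objective (shortest transmission time under an energy limit) may be modeled differently.

```python
from dataclasses import dataclass


@dataclass
class RSU:
    """Hypothetical roadside unit; fields are illustrative assumptions."""
    name: str
    data_mb: float   # map data this RSU could deliver to the vehicle
    energy_j: float  # in-vehicle energy needed to receive all of it (> 0)


def greedy_allocate(rsus, energy_budget_j):
    """Greedy fractional knapsack over RSUs.

    RSUs are taken in descending order of data delivered per joule.
    Each is used fully while the budget allows; the last one chosen
    may be used only partially. Returns (name, fraction, data_mb) tuples.
    """
    plan = []
    remaining = energy_budget_j
    ranked = sorted(rsus, key=lambda r: r.data_mb / r.energy_j, reverse=True)
    for rsu in ranked:
        if remaining <= 0:
            break
        # Fraction of this RSU's data the remaining energy can pay for.
        fraction = min(1.0, remaining / rsu.energy_j)
        plan.append((rsu.name, fraction, rsu.data_mb * fraction))
        remaining -= rsu.energy_j * fraction
    return plan


if __name__ == "__main__":
    candidates = [
        RSU("A", data_mb=60.0, energy_j=10.0),
        RSU("B", data_mb=100.0, energy_j=20.0),
        RSU("C", data_mb=120.0, energy_j=30.0),
    ]
    for name, fraction, data in greedy_allocate(candidates, energy_budget_j=50.0):
        print(f"{name}: fraction={fraction:.2f}, data={data:.1f} MB")
```

Because items may be split, ranking by value density is optimal for the fractional knapsack, which is what makes a greedy strategy a natural fit for this formulation.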

A Survey of FPGA-Based Robotic Computing

Sep 13, 2020

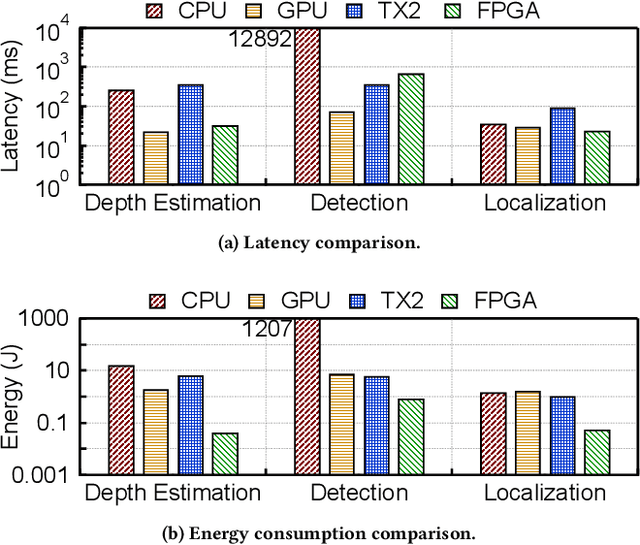

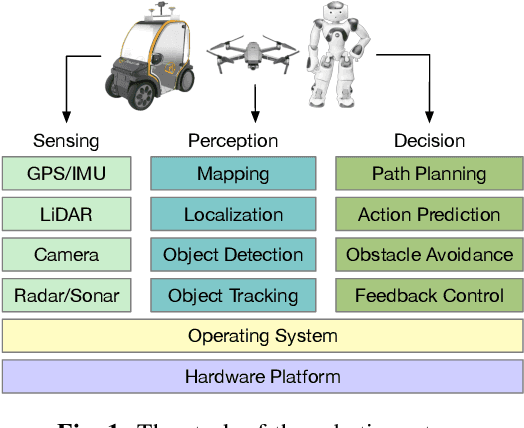

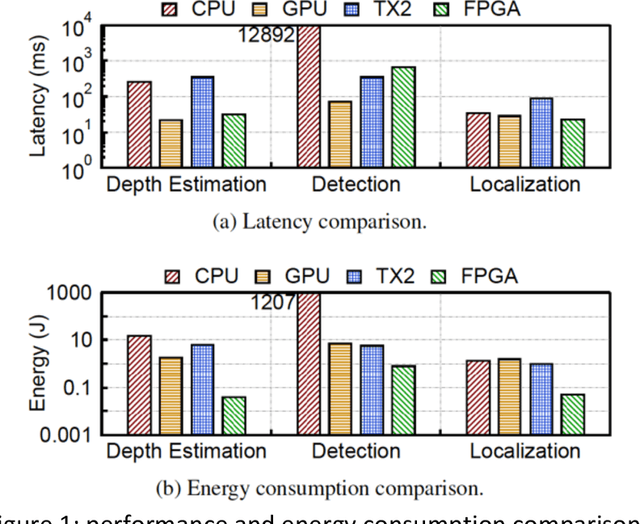

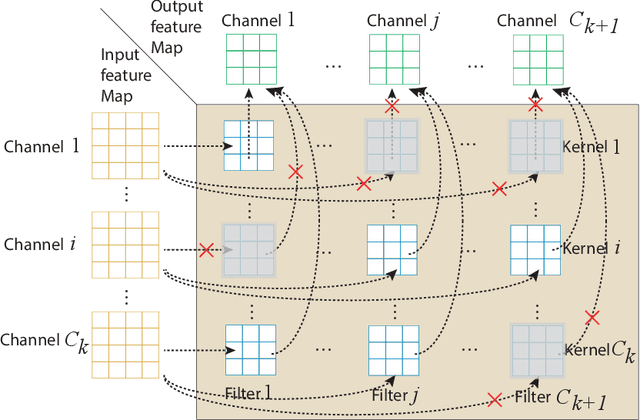

Abstract: Recent research on robotics has shown significant improvement, spanning algorithms, mechanics, and hardware architectures. Robots, including manipulators, legged robots, drones, and autonomous vehicles, are now widely applied in diverse scenarios. However, the high computation and data complexity of robotic algorithms poses great challenges to their applications. On the one hand, CPU platforms are flexible enough to handle multiple robotic tasks, and GPU platforms offer higher computational capacity and easy-to-use development frameworks, so both have been widely adopted in several applications. On the other hand, FPGA-based robotic accelerators are becoming increasingly competitive alternatives, especially in latency-critical and power-limited scenarios. With specially designed hardware logic and algorithm kernels, FPGA-based accelerators can surpass CPUs and GPUs in performance and energy efficiency. In this paper, we give an overview of previous work on FPGA-based robotic accelerators covering different stages of the robotic system pipeline. An analysis of software and hardware optimization techniques and the main technical issues is presented, along with some commercial and space applications, to serve as a guide for future work.

Critical Business Decision Making for Technology Startups -- A PerceptIn Case Study

Sep 07, 2020

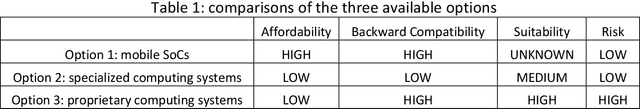

Abstract: Most business decisions are made with analysis, but some are judgment calls not susceptible to analysis due to time or information constraints. In this article, we present a real-life case study of critical business decision making at PerceptIn, an autonomous driving technology startup. In its early years, PerceptIn had to make a decision on the design of computing systems for its autonomous vehicle products. By providing details on PerceptIn's decision process and the results of the decision, we hope to provide some insights that can be beneficial to entrepreneurs and engineering managers in technology startups.

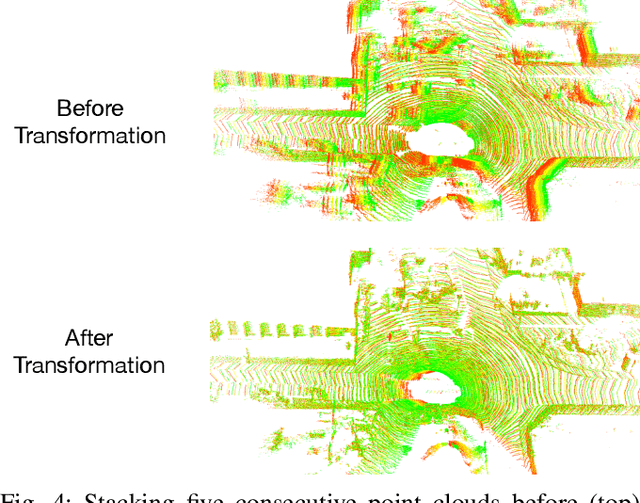

Real-Time Spatio-Temporal LiDAR Point Cloud Compression

Aug 16, 2020

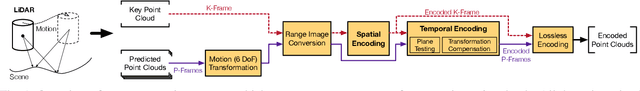

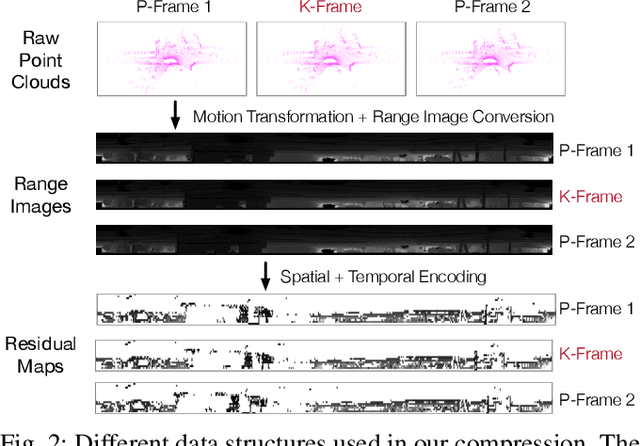

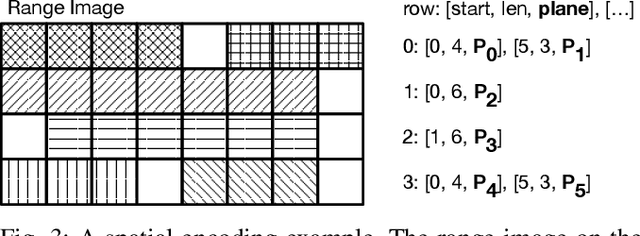

Abstract: Compressing massive LiDAR point clouds in real-time is critical to autonomous machines such as drones and self-driving cars. While most of the recent prior work has focused on compressing individual point cloud frames, this paper proposes a novel system that effectively compresses a sequence of point clouds. The idea is to exploit both the spatial and temporal redundancies in a sequence of point cloud frames. We first identify a key frame in a point cloud sequence and spatially encode the key frame by iterative plane fitting. We then exploit the fact that consecutive point clouds have large overlaps in the physical space, and thus spatially encoded data can be (re-)used to encode the temporal stream. Temporal encoding by reusing spatial encoding data not only improves the compression rate, but also avoids redundant computations, which significantly improves the compression speed. Experiments show that our compression system achieves 40x to 90x compression rate, significantly higher than MPEG's LiDAR point cloud compression standard, while retaining high end-to-end application accuracies. Meanwhile, our compression system has a compression speed that matches the point cloud generation rate of today's LiDARs and outperforms existing compression systems, enabling real-time point cloud transmission.

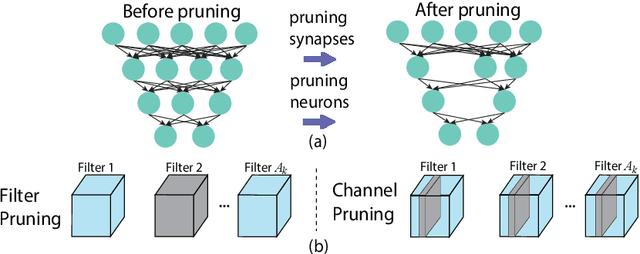

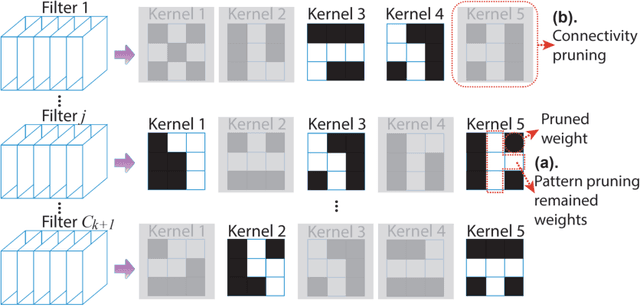

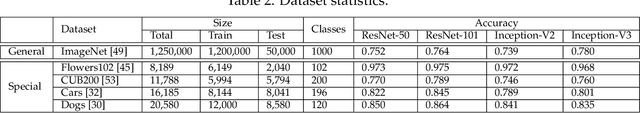

CoCoPIE: Making Mobile AI Sweet As PIE --Compression-Compilation Co-Design Goes a Long Way

Mar 25, 2020

Abstract: Assuming hardware is the major constraint for enabling real-time mobile intelligence, the industry has mainly dedicated its efforts to developing specialized hardware accelerators for machine learning and inference. This article challenges the assumption. By drawing on a recent real-time AI optimization framework CoCoPIE, it maintains that with effective compression-compiler co-design, it is possible to enable real-time artificial intelligence on mainstream end devices without special hardware. CoCoPIE is a software framework that holds numerous records on mobile AI: the first framework that supports all main kinds of DNNs, from CNNs to RNNs, transformer, language models, and so on; the fastest DNN pruning and acceleration framework, up to 180X faster compared with current DNN pruning on other frameworks such as TensorFlow-Lite; making many representative AI applications able to run in real-time on off-the-shelf mobile devices, which was previously regarded as possible only with special hardware support; making off-the-shelf mobile devices outperform a number of representative ASIC and FPGA solutions in terms of energy efficiency and/or performance.

Autonomous Last-mile Delivery Vehicles in Complex Traffic Environments

Jan 22, 2020

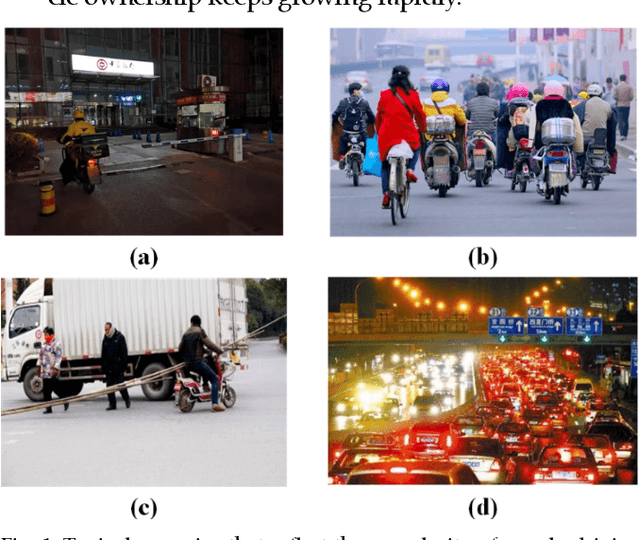

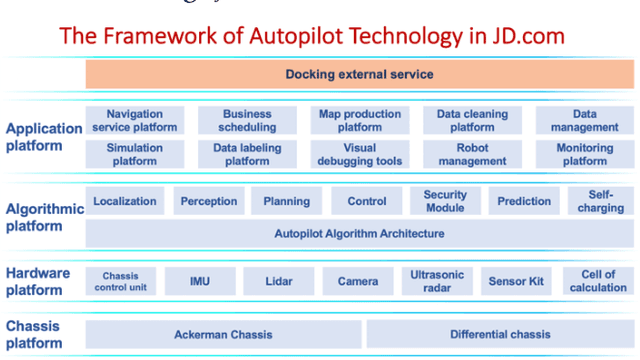

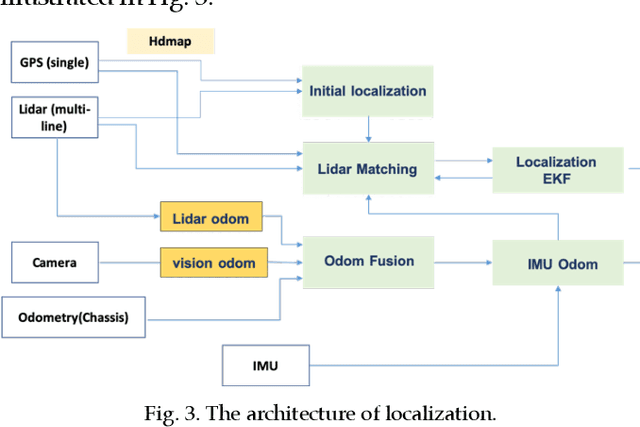

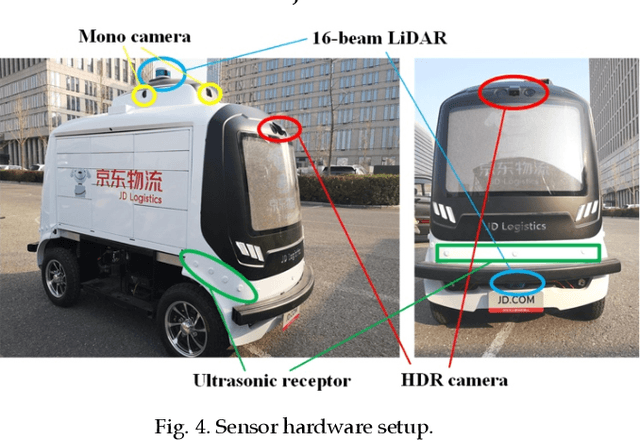

Abstract: E-commerce has evolved with the digital technology revolution over the years. Last-mile logistics service contributes a significant part of the e-commerce experience. In contrast to traditional last-mile logistics services, smart logistics service with autonomous driving technologies provides a promising solution to reduce the delivery cost and to improve efficiency. However, the traffic conditions in complex traffic environments, such as those in China, are more challenging compared to those in well-developed countries. Many types of moving objects (such as pedestrians, bicycles, electric bicycles, and motorcycles) share the road with autonomous vehicles, and their behaviors are not easy to track and predict. This paper introduces a technical solution from JD.com, a leading e-commerce company in China, to autonomous last-mile delivery in complex traffic environments. Concretely, the methodologies in each module of our autonomous vehicles are presented, together with safety guarantee strategies. Up to this point, JD.com has deployed more than 300 self-driving vehicles for trial operations in tens of provinces of China, with an accumulated 715,819 miles and up to millions of on-road testing hours.
