Jianwu Dang

Locate and Beamform: Two-dimensional Locating All-neural Beamformer for Multi-channel Speech Separation

May 18, 2023Abstract:Recently, stunning improvements on multi-channel speech separation have been achieved by neural beamformers when direction information is available. However, most of them neglect to utilize speaker's 2-dimensional (2D) location cues contained in mixture signal, which limits the performance when two sources come from close directions. In this paper, we propose an end-to-end beamforming network for 2D location guided speech separation merely given mixture signal. It first estimates discriminable direction and 2D location cues, which imply directions the sources come from in multi views of microphones and their 2D coordinates. These cues are then integrated into location-aware neural beamformer, thus allowing accurate reconstruction of two sources' speech signals. Experiments show that our proposed model not only achieves a comprehensive decent improvement compared to baseline systems, but avoids inferior performance on spatial overlapping cases.

Time-domain Speech Enhancement Assisted by Multi-resolution Frequency Encoder and Decoder

Mar 26, 2023

Abstract:Time-domain speech enhancement (SE) has recently been intensively investigated. Among recent works, DEMUCS introduces multi-resolution STFT loss to enhance performance. However, some resolutions used for STFT contain non-stationary signals, and it is challenging to learn multi-resolution frequency losses simultaneously with only one output. For better use of multi-resolution frequency information, we supplement multiple spectrograms in different frame lengths into the time-domain encoders. They extract stationary frequency information in both narrowband and wideband. We also adopt multiple decoder outputs, each of which computes its corresponding resolution frequency loss. Experimental results show that (1) it is more effective to fuse stationary frequency features than non-stationary features in the encoder, and (2) the multiple outputs consistent with the frequency loss improve performance. Experiments on the Voice-Bank dataset show that the proposed method obtained a 0.14 PESQ improvement.

Cross-modal Audio-visual Co-learning for Text-independent Speaker Verification

Feb 22, 2023

Abstract:Visual speech (i.e., lip motion) is highly related to auditory speech due to the co-occurrence and synchronization in speech production. This paper investigates this correlation and proposes a cross-modal speech co-learning paradigm. The primary motivation of our cross-modal co-learning method is modeling one modality aided by exploiting knowledge from another modality. Specifically, two cross-modal boosters are introduced based on an audio-visual pseudo-siamese structure to learn the modality-transformed correlation. Inside each booster, a max-feature-map embedded Transformer variant is proposed for modality alignment and enhanced feature generation. The network is co-learned both from scratch and with pretrained models. Experimental results on the LRSLip3, GridLip, LomGridLip, and VoxLip datasets demonstrate that our proposed method achieves 60% and 20% average relative performance improvement over independently trained audio-only/visual-only and baseline fusion systems, respectively.

MIMO-DBnet: Multi-channel Input and Multiple Outputs DOA-aware Beamforming Network for Speech Separation

Dec 07, 2022Abstract:Recently, many deep learning based beamformers have been proposed for multi-channel speech separation. Nevertheless, most of them rely on extra cues known in advance, such as speaker feature, face image or directional information. In this paper, we propose an end-to-end beamforming network for direction guided speech separation given merely the mixture signal, namely MIMO-DBnet. Specifically, we design a multi-channel input and multiple outputs architecture to predict the direction-of-arrival based embeddings and beamforming weights for each source. The precisely estimated directional embedding provides quite effective spatial discrimination guidance for the neural beamformer to offset the effect of phase wrapping, thus allowing more accurate reconstruction of two sources' speech signals. Experiments show that our proposed MIMO-DBnet not only achieves a comprehensive decent improvement compared to baseline systems, but also maintain the performance on high frequency bands when phase wrapping occurs.

Monolingual Recognizers Fusion for Code-switching Speech Recognition

Nov 02, 2022

Abstract:The bi-encoder structure has been intensively investigated in code-switching (CS) automatic speech recognition (ASR). However, most existing methods require the structures of two monolingual ASR models (MAMs) should be the same and only use the encoder of MAMs. This leads to the problem that pre-trained MAMs cannot be timely and fully used for CS ASR. In this paper, we propose a monolingual recognizers fusion method for CS ASR. It has two stages: the speech awareness (SA) stage and the language fusion (LF) stage. In the SA stage, acoustic features are mapped to two language-specific predictions by two independent MAMs. To keep the MAMs focused on their own language, we further extend the language-aware training strategy for the MAMs. In the LF stage, the BELM fuses two language-specific predictions to get the final prediction. Moreover, we propose a text simulation strategy to simplify the training process of the BELM and reduce reliance on CS data. Experiments on a Mandarin-English corpus show the efficiency of the proposed method. The mix error rate is significantly reduced on the test set after using open-source pre-trained MAMs.

Deep Spectro-temporal Artifacts for Detecting Synthesized Speech

Oct 11, 2022

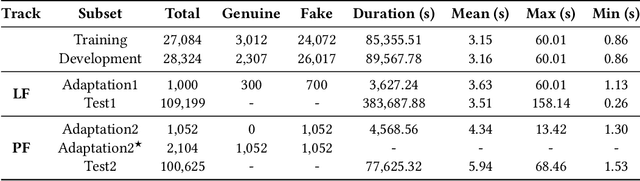

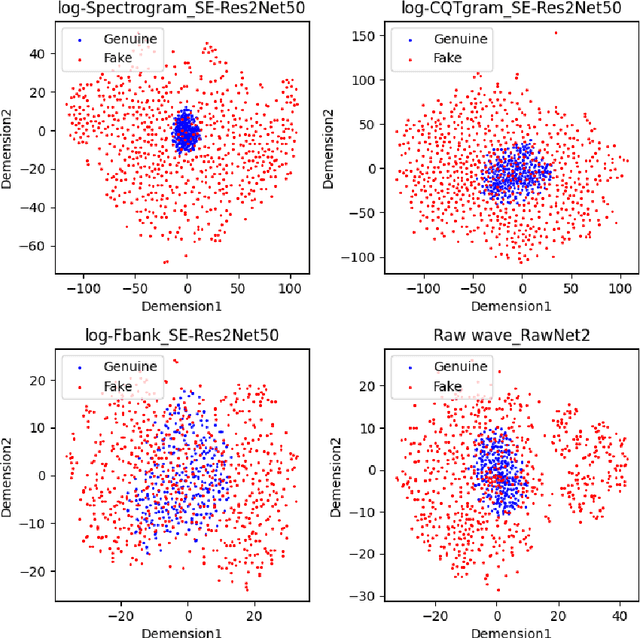

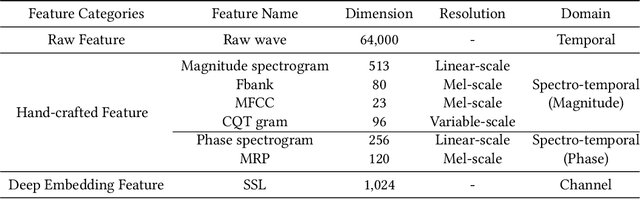

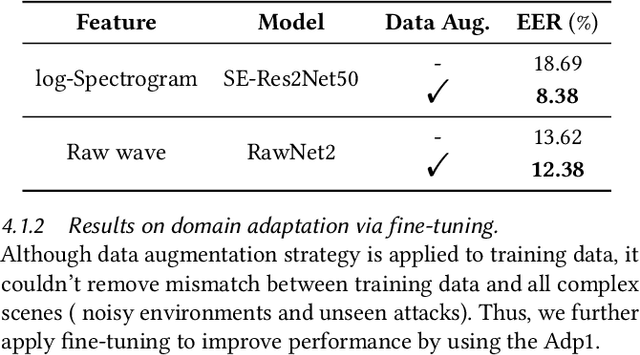

Abstract:The Audio Deep Synthesis Detection (ADD) Challenge has been held to detect generated human-like speech. With our submitted system, this paper provides an overall assessment of track 1 (Low-quality Fake Audio Detection) and track 2 (Partially Fake Audio Detection). In this paper, spectro-temporal artifacts were detected using raw temporal signals, spectral features, as well as deep embedding features. To address track 1, low-quality data augmentation, domain adaptation via finetuning, and various complementary feature information fusion were aggregated in our system. Furthermore, we analyzed the clustering characteristics of subsystems with different features by visualization method and explained the effectiveness of our proposed greedy fusion strategy. As for track 2, frame transition and smoothing were detected using self-supervised learning structure to capture the manipulation of PF attacks in the time domain. We ranked 4th and 5th in track 1 and track 2, respectively.

VCSE: Time-Domain Visual-Contextual Speaker Extraction Network

Oct 09, 2022

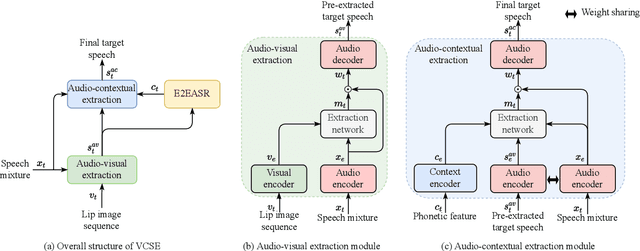

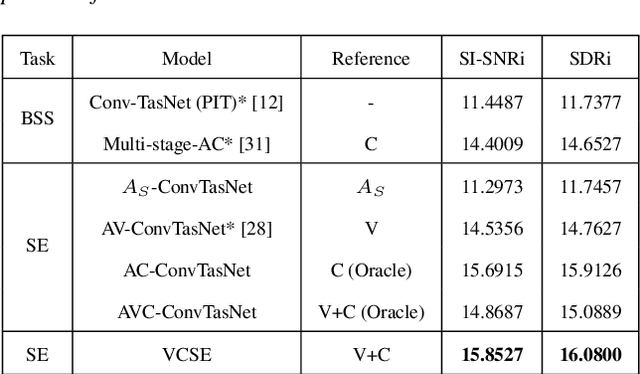

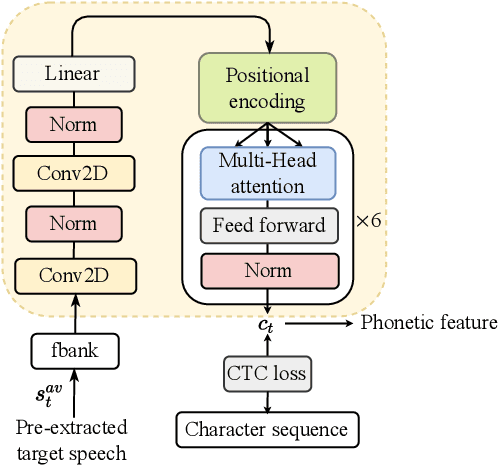

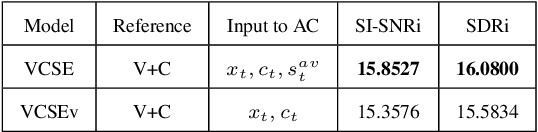

Abstract:Speaker extraction seeks to extract the target speech in a multi-talker scenario given an auxiliary reference. Such reference can be auditory, i.e., a pre-recorded speech, visual, i.e., lip movements, or contextual, i.e., phonetic sequence. References in different modalities provide distinct and complementary information that could be fused to form top-down attention on the target speaker. Previous studies have introduced visual and contextual modalities in a single model. In this paper, we propose a two-stage time-domain visual-contextual speaker extraction network named VCSE, which incorporates visual and self-enrolled contextual cues stage by stage to take full advantage of every modality. In the first stage, we pre-extract a target speech with visual cues and estimate the underlying phonetic sequence. In the second stage, we refine the pre-extracted target speech with the self-enrolled contextual cues. Experimental results on the real-world Lip Reading Sentences 3 (LRS3) database demonstrate that our proposed VCSE network consistently outperforms other state-of-the-art baselines.

MIMO-DoAnet: Multi-channel Input and Multiple Outputs DoA Network with Unknown Number of Sound Sources

Jul 15, 2022

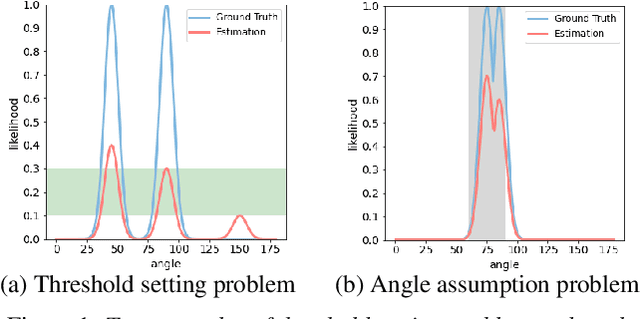

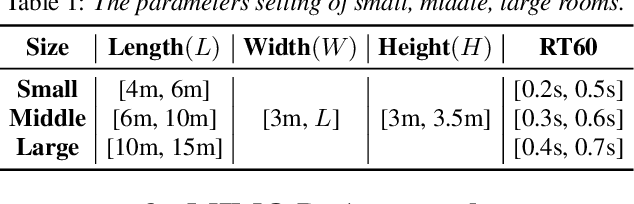

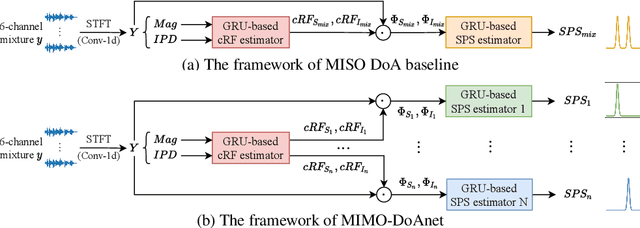

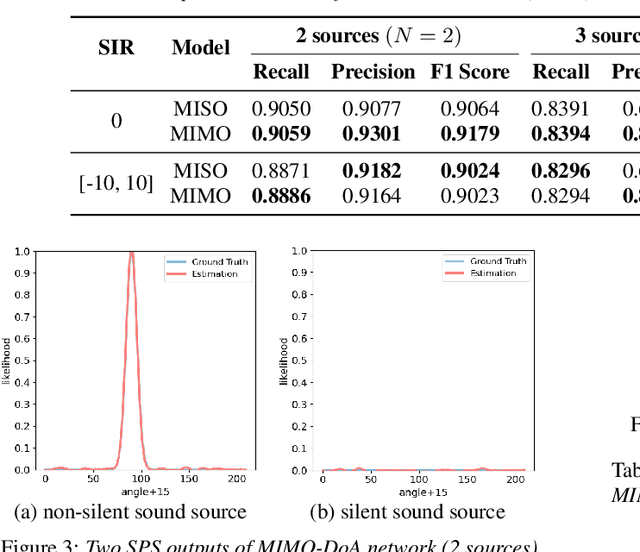

Abstract:Recent neural network based Direction of Arrival (DoA) estimation algorithms have performed well on unknown number of sound sources scenarios. These algorithms are usually achieved by mapping the multi-channel audio input to the single output (i.e. overall spatial pseudo-spectrum (SPS) of all sources), that is called MISO. However, such MISO algorithms strongly depend on empirical threshold setting and the angle assumption that the angles between the sound sources are greater than a fixed angle. To address these limitations, we propose a novel multi-channel input and multiple outputs DoA network called MIMO-DoAnet. Unlike the general MISO algorithms, MIMO-DoAnet predicts the SPS coding of each sound source with the help of the informative spatial covariance matrix. By doing so, the threshold task of detecting the number of sound sources becomes an easier task of detecting whether there is a sound source in each output, and the serious interaction between sound sources disappears during inference stage. Experimental results show that MIMO-DoAnet achieves relative 18.6% and absolute 13.3%, relative 34.4% and absolute 20.2% F1 score improvement compared with the MISO baseline system in 3, 4 sources scenes. The results also demonstrate MIMO-DoAnet alleviates the threshold setting problem and solves the angle assumption problem effectively.

Language-specific Characteristic Assistance for Code-switching Speech Recognition

Jul 05, 2022

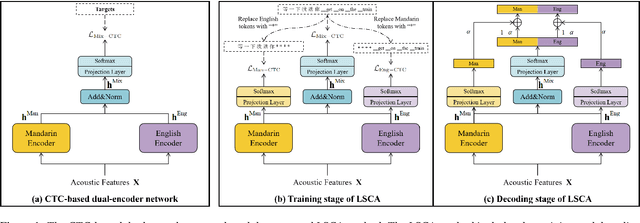

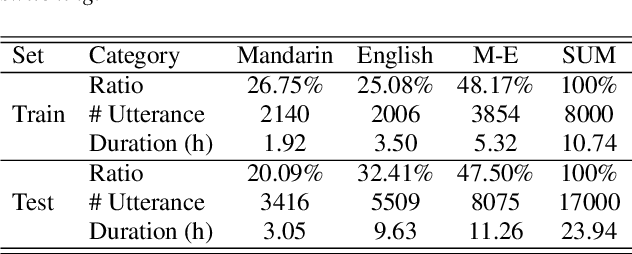

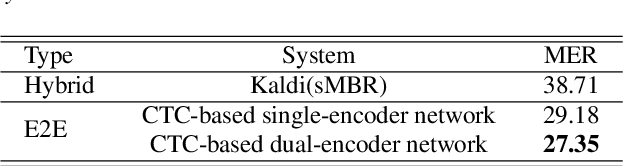

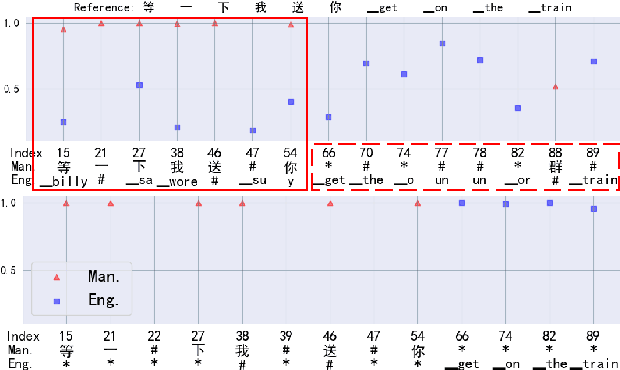

Abstract:Dual-encoder structure successfully utilizes two language-specific encoders (LSEs) for code-switching speech recognition. Because LSEs are initialized by two pre-trained language-specific models (LSMs), the dual-encoder structure can exploit sufficient monolingual data and capture the individual language attributes. However, existing methods have no language constraints on LSEs and underutilize language-specific knowledge of LSMs. In this paper, we propose a language-specific characteristic assistance (LSCA) method to mitigate the above problems. Specifically, during training, we introduce two language-specific losses as language constraints and generate corresponding language-specific targets for them. During decoding, we take the decoding abilities of LSMs into account by combining the output probabilities of two LSMs and the mixture model to obtain the final predictions. Experiments show that either the training or decoding method of LSCA can improve the model's performance. Furthermore, the best result can obtain up to 15.4% relative error reduction on the code-switching test set by combining the training and decoding methods of LSCA. Moreover, the system can process code-switching speech recognition tasks well without extra shared parameters or even retraining based on two pre-trained LSMs by using our method.

Iterative Sound Source Localization for Unknown Number of Sources

Jun 24, 2022

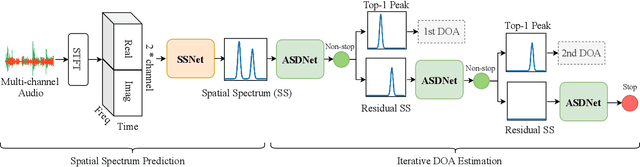

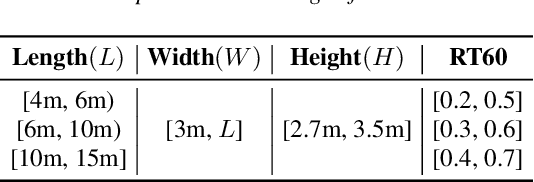

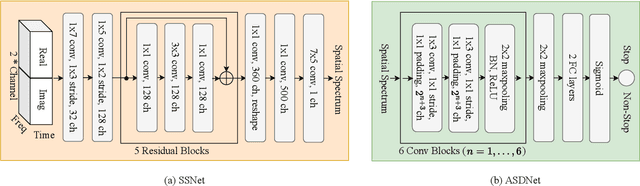

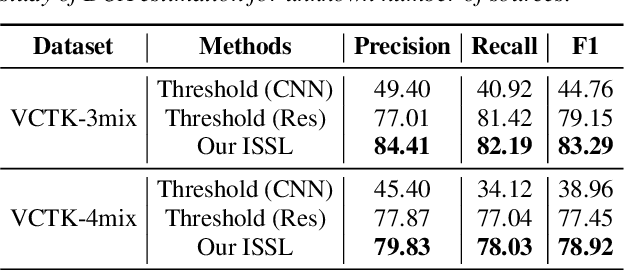

Abstract:Sound source localization aims to seek the direction of arrival (DOA) of all sound sources from the observed multi-channel audio. For the practical problem of unknown number of sources, existing localization algorithms attempt to predict a likelihood-based coding (i.e., spatial spectrum) and employ a pre-determined threshold to detect the source number and corresponding DOA value. However, these threshold-based algorithms are not stable since they are limited by the careful choice of threshold. To address this problem, we propose an iterative sound source localization approach called ISSL, which can iteratively extract each source's DOA without threshold until the termination criterion is met. Unlike threshold-based algorithms, ISSL designs an active source detector network based on binary classifier to accept residual spatial spectrum and decide whether to stop the iteration. By doing so, our ISSL can deal with an arbitrary number of sources, even more than the number of sources seen during the training stage. The experimental results show that our ISSL achieves significant performance improvements in both DOA estimation and source number detection compared with the existing threshold-based algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge