Jiachen Xu

NeuroLoRA: Context-Aware Neuromodulation for Parameter-Efficient Multi-Task Adaptation

Mar 12, 2026Abstract:Parameter-Efficient Fine-Tuning (PEFT) techniques, particularly Low-Rank Adaptation (LoRA), have become essential for adapting Large Language Models (LLMs) to downstream tasks. While the recent FlyLoRA framework successfully leverages bio-inspired sparse random projections to mitigate parameter interference, it relies on a static, magnitude-based routing mechanism that is agnostic to input context. In this paper, we propose NeuroLoRA, a novel Mixture-of-Experts (MoE) based LoRA framework inspired by biological neuromodulation -- the dynamic regulation of neuronal excitability based on context. NeuroLoRA retains the computational efficiency of frozen random projections while introducing a lightweight, learnable neuromodulation gate that contextually rescales the projection space prior to expert selection. We further propose a Contrastive Orthogonality Loss to explicitly enforce separation between expert subspaces, enhancing both task decoupling and continual learning capacity. Extensive experiments on MMLU, GSM8K, and ScienceQA demonstrate that NeuroLoRA consistently outperforms FlyLoRA and other strong baselines across single-task adaptation, multi-task model merging, and sequential continual learning scenarios, while maintaining comparable parameter efficiency.

Leave-One-Out Prediction for General Hypothesis Classes

Mar 02, 2026Abstract:Leave-one-out (LOO) prediction provides a principled, data-dependent measure of generalization, yet guarantees in fully transductive settings remain poorly understood beyond specialized models. We introduce Median of Level-Set Aggregation (MLSA), a general aggregation procedure based on empirical-risk level sets around the ERM. For arbitrary fixed datasets and losses satisfying a mild monotonicity condition, we establish a multiplicative oracle inequality for the LOO error of the form \[ LOO_S(\hat{h}) \;\le\; C \cdot \frac{1}{n} \min_{h\in H} L_S(h) \;+\; \frac{Comp(S,H,\ell)}{n}, \qquad C>1. \] The analysis is based on a local level-set growth condition controlling how the set of near-optimal empirical-risk minimizers expands as the tolerance increases. We verify this condition in several canonical settings. For classification with VC classes under the 0-1 loss, the resulting complexity scales as $O(d \log n)$, where $d$ is the VC dimension. For finite hypothesis and density classes under bounded or log loss, it scales as $O(\log |H|)$ and $O(\log |P|)$, respectively. For logistic regression with bounded covariates and parameters, a volumetric argument based on the empirical covariance matrix yields complexity scaling as $O(d \log n)$ up to problem-dependent factors.

HY3D-Bench: Generation of 3D Assets

Feb 03, 2026Abstract:While recent advances in neural representations and generative models have revolutionized 3D content creation, the field remains constrained by significant data processing bottlenecks. To address this, we introduce HY3D-Bench, an open-source ecosystem designed to establish a unified, high-quality foundation for 3D generation. Our contributions are threefold: (1) We curate a library of 250k high-fidelity 3D objects distilled from large-scale repositories, employing a rigorous pipeline to deliver training-ready artifacts, including watertight meshes and multi-view renderings; (2) We introduce structured part-level decomposition, providing the granularity essential for fine-grained perception and controllable editing; and (3) We bridge real-world distribution gaps via a scalable AIGC synthesis pipeline, contributing 125k synthetic assets to enhance diversity in long-tail categories. Validated empirically through the training of Hunyuan3D-2.1-Small, HY3D-Bench democratizes access to robust data resources, aiming to catalyze innovation across 3D perception, robotics, and digital content creation.

A Comparative Evaluation of Large Vision-Language Models for 2D Object Detection under SOTIF Conditions

Jan 30, 2026Abstract:Reliable environmental perception remains one of the main obstacles for safe operation of automated vehicles. Safety of the Intended Functionality (SOTIF) concerns safety risks from perception insufficiencies, particularly under adverse conditions where conventional detectors often falter. While Large Vision-Language Models (LVLMs) demonstrate promising semantic reasoning, their quantitative effectiveness for safety-critical 2D object detection is underexplored. This paper presents a systematic evaluation of ten representative LVLMs using the PeSOTIF dataset, a benchmark specifically curated for long-tail traffic scenarios and environmental degradations. Performance is quantitatively compared against the classical perception approach, a YOLO-based detector. Experimental results reveal a critical trade-off: top-performing LVLMs (e.g., Gemini 3, Doubao) surpass the YOLO baseline in recall by over 25% in complex natural scenarios, exhibiting superior robustness to visual degradation. Conversely, the baseline retains an advantage in geometric precision for synthetic perturbations. These findings highlight the complementary strengths of semantic reasoning versus geometric regression, supporting the use of LVLMs as high-level safety validators in SOTIF-oriented automated driving systems.

FinMR: A Knowledge-Intensive Multimodal Benchmark for Advanced Financial Reasoning

Oct 09, 2025Abstract:Multimodal Large Language Models (MLLMs) have made substantial progress in recent years. However, their rigorous evaluation within specialized domains like finance is hindered by the absence of datasets characterized by professional-level knowledge intensity, detailed annotations, and advanced reasoning complexity. To address this critical gap, we introduce FinMR, a high-quality, knowledge-intensive multimodal dataset explicitly designed to evaluate expert-level financial reasoning capabilities at a professional analyst's standard. FinMR comprises over 3,200 meticulously curated and expertly annotated question-answer pairs across 15 diverse financial topics, ensuring broad domain diversity and integrating sophisticated mathematical reasoning, advanced financial knowledge, and nuanced visual interpretation tasks across multiple image types. Through comprehensive benchmarking with leading closed-source and open-source MLLMs, we highlight significant performance disparities between these models and professional financial analysts, uncovering key areas for model advancement, such as precise image analysis, accurate application of complex financial formulas, and deeper contextual financial understanding. By providing richly varied visual content and thorough explanatory annotations, FinMR establishes itself as an essential benchmark tool for assessing and advancing multimodal financial reasoning toward professional analyst-level competence.

Hunyuan3D Studio: End-to-End AI Pipeline for Game-Ready 3D Asset Generation

Sep 16, 2025

Abstract:The creation of high-quality 3D assets, a cornerstone of modern game development, has long been characterized by labor-intensive and specialized workflows. This paper presents Hunyuan3D Studio, an end-to-end AI-powered content creation platform designed to revolutionize the game production pipeline by automating and streamlining the generation of game-ready 3D assets. At its core, Hunyuan3D Studio integrates a suite of advanced neural modules (such as Part-level 3D Generation, Polygon Generation, Semantic UV, etc.) into a cohesive and user-friendly system. This unified framework allows for the rapid transformation of a single concept image or textual description into a fully-realized, production-quality 3D model complete with optimized geometry and high-fidelity PBR textures. We demonstrate that assets generated by Hunyuan3D Studio are not only visually compelling but also adhere to the stringent technical requirements of contemporary game engines, significantly reducing iteration time and lowering the barrier to entry for 3D content creation. By providing a seamless bridge from creative intent to technical asset, Hunyuan3D Studio represents a significant leap forward for AI-assisted workflows in game development and interactive media.

X-Part: high fidelity and structure coherent shape decomposition

Sep 10, 2025

Abstract:Generating 3D shapes at part level is pivotal for downstream applications such as mesh retopology, UV mapping, and 3D printing. However, existing part-based generation methods often lack sufficient controllability and suffer from poor semantically meaningful decomposition. To this end, we introduce X-Part, a controllable generative model designed to decompose a holistic 3D object into semantically meaningful and structurally coherent parts with high geometric fidelity. X-Part exploits the bounding box as prompts for the part generation and injects point-wise semantic features for meaningful decomposition. Furthermore, we design an editable pipeline for interactive part generation. Extensive experimental results show that X-Part achieves state-of-the-art performance in part-level shape generation. This work establishes a new paradigm for creating production-ready, editable, and structurally sound 3D assets. Codes will be released for public research.

Digital Twin-Empowered Voltage Control for Power Systems

Dec 09, 2024

Abstract:Emerging digital twin technology has the potential to revolutionize voltage control in power systems. However, the state-of-the-art digital twin method suffers from low computational and sampling efficiency, which hinders its applications. To address this issue, we propose a Gumbel-Consistency Digital Twin (GC-DT) method that enhances voltage control with improved computational and sampling efficiency. First, the proposed method incorporates a Gumbel-based strategy improvement that leverages the Gumbel-top trick to enhance non-repetitive sampling actions and reduce the reliance on Monte Carlo Tree Search simulations, thereby improving computational efficiency. Second, a consistency loss function aligns predicted hidden states with actual hidden states in the latent space, which increases both prediction accuracy and sampling efficiency. Experiments on IEEE 123-bus, 34-bus, and 13-bus systems demonstrate that the proposed GC-DT outperforms the state-of-the-art DT method in both computational and sampling efficiency.

A Conversational Brain-Artificial Intelligence Interface

Feb 22, 2024

Abstract:We introduce Brain-Artificial Intelligence Interfaces (BAIs) as a new class of Brain-Computer Interfaces (BCIs). Unlike conventional BCIs, which rely on intact cognitive capabilities, BAIs leverage the power of artificial intelligence to replace parts of the neuro-cognitive processing pipeline. BAIs allow users to accomplish complex tasks by providing high-level intentions, while a pre-trained AI agent determines low-level details. This approach enlarges the target audience of BCIs to individuals with cognitive impairments, a population often excluded from the benefits of conventional BCIs. We present the general concept of BAIs and illustrate the potential of this new approach with a Conversational BAI based on EEG. In particular, we show in an experiment with simulated phone conversations that the Conversational BAI enables complex communication without the need to generate language. Our work thus demonstrates, for the first time, the ability of a speech neuroprosthesis to enable fluent communication in realistic scenarios with non-invasive technologies.

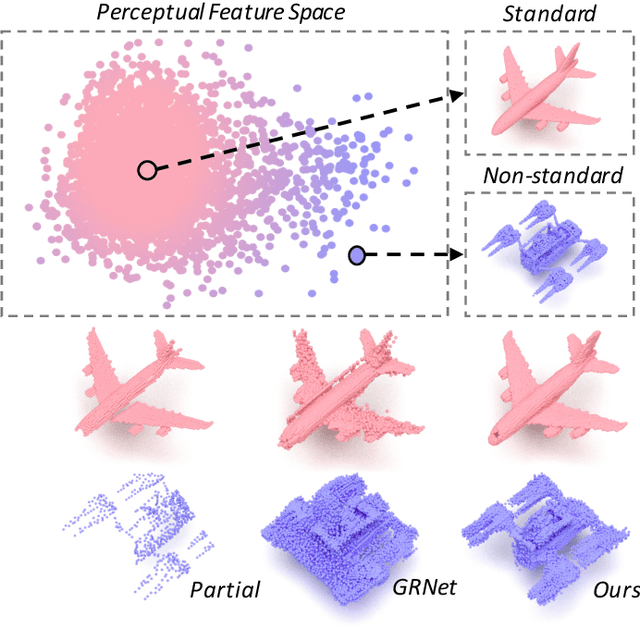

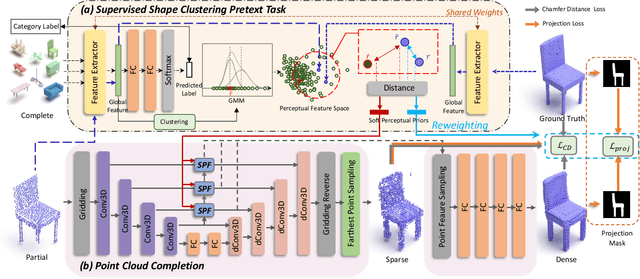

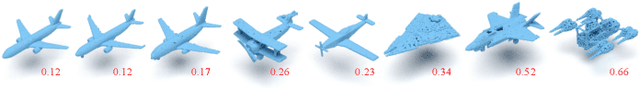

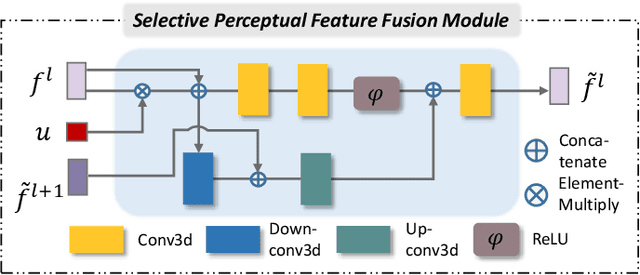

Prototype-Aware Heterogeneous Task for Point Cloud Completion

Sep 05, 2022

Abstract:Point cloud completion, which aims at recovering original shape information from partial point clouds, has attracted attention on 3D vision community. Existing methods usually succeed in completion for standard shape, while failing to generate local details of point clouds for some non-standard shapes. To achieve desirable local details, guidance from global shape information is of critical importance. In this work, we design an effective way to distinguish standard/non-standard shapes with the help of intra-class shape prototypical representation, which can be calculated by the proposed supervised shape clustering pretext task, resulting in a heterogeneous component w.r.t completion network. The representative prototype, defined as feature centroid of shape categories, can provide global shape guidance, which is referred to as soft-perceptual prior, to inject into downstream completion network by the desired selective perceptual feature fusion module in a multi-scale manner. Moreover, for effective training, we consider difficulty-based sampling strategy to encourage the network to pay more attention to some partial point clouds with fewer geometric information. Experimental results show that our method outperforms other state-of-the-art methods and has strong ability on completing complex geometric shapes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge