"Time": models, code, and papers

Evaluating the Effectiveness of Pre-trained Language Models in Predicting the Helpfulness of Online Product Reviews

Feb 19, 2023

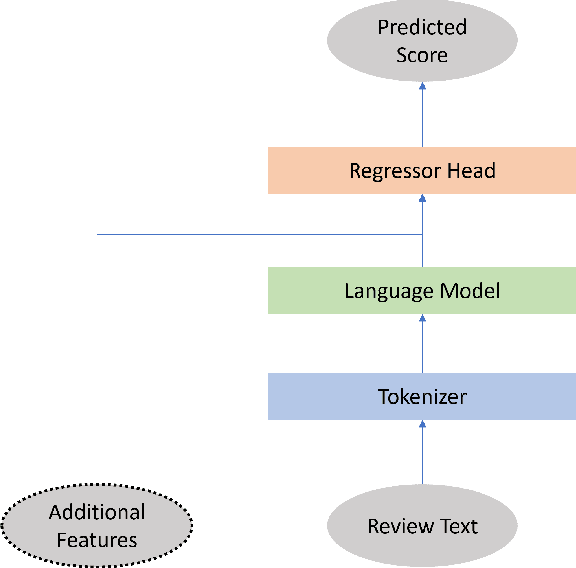

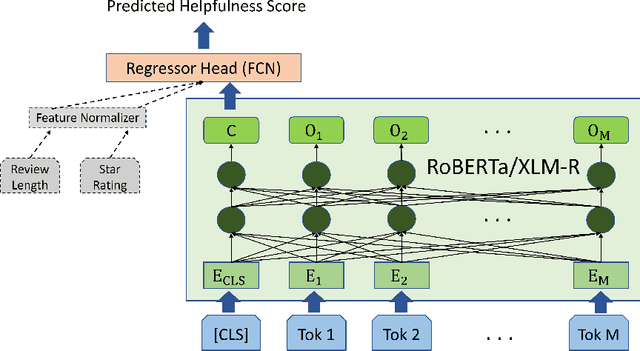

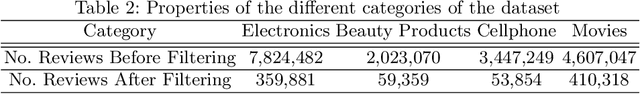

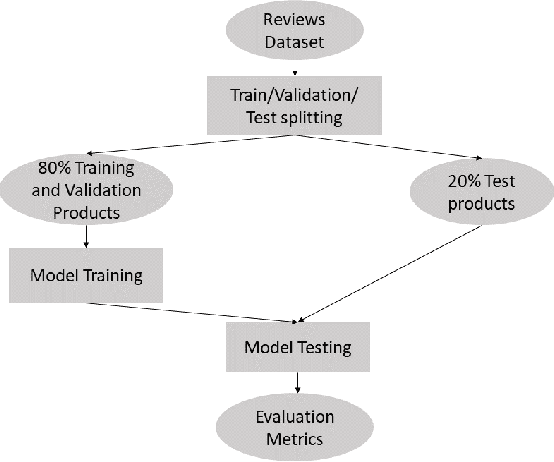

Businesses and customers can gain valuable information from product reviews. The sheer number of reviews often necessitates ranking them based on their potential helpfulness. However, only a few reviews ever receive any helpfulness votes on online marketplaces. Sorting all reviews based on the few existing votes can cause helpful reviews to go unnoticed because of the limited attention span of readers. The problem of review helpfulness prediction is even more important for higher review volumes and for newly written reviews or newly launched products. In this work, we compare the use of RoBERTa and XLM-R language models to predict the helpfulness of online product reviews. The contributions of our work relative to the literature include extensively investigating the efficacy of state-of-the-art language models (both monolingual and multilingual) against a robust baseline, taking ranking metrics into account when assessing these approaches, and assessing multilingual models for the first time. We employ the Amazon review dataset for our experiments. According to our study on several product categories, multilingual and monolingual pre-trained language models outperform the baseline, which uses a random forest with handcrafted features, by as much as 23% in RMSE. Pre-trained language models reduce the need for complex text feature engineering. However, our results suggest that pre-trained multilingual models may not be suitable for fine-tuning on only one language. We assess the performance of language models with and without additional features. Our results show that including additional features, such as the product rating given by the reviewer, can further help the predictive methods.
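The abstract evaluates models with RMSE and with ranking metrics, without naming the ranking metric. The sketch below shows how the two kinds of score could be computed for a list of reviews. It assumes NDCG@k as the ranking metric; the function names and the toy scores in the usage note are illustrative and do not come from the paper.

```python
import math

def rmse(y_true, y_pred):
    """Root-mean-square error between true and predicted helpfulness scores."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def dcg(relevances):
    """Discounted cumulative gain of relevances listed in ranked order."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances))

def ndcg_at_k(y_true, y_pred, k):
    """NDCG@k: rank reviews by predicted score, grade the ranking by true score."""
    order = sorted(range(len(y_pred)), key=lambda i: y_pred[i], reverse=True)
    ranked = [y_true[i] for i in order[:k]]
    ideal = sorted(y_true, reverse=True)[:k]
    ideal_dcg = dcg(ideal)
    return dcg(ranked) / ideal_dcg if ideal_dcg > 0 else 0.0
```

For example, `ndcg_at_k([0.9, 0.1, 0.5], [0.8, 0.2, 0.6], k=3)` scores a prediction that orders the reviews correctly, even though its RMSE is nonzero. This is why a ranking metric can complement a regression error.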

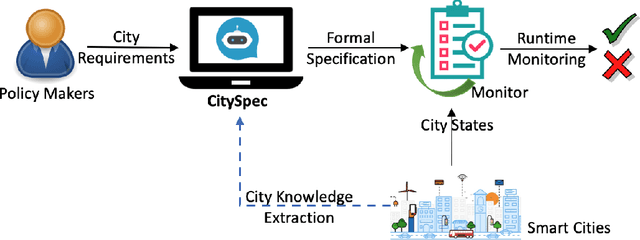

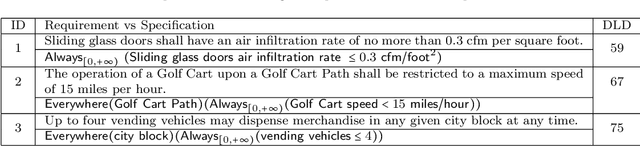

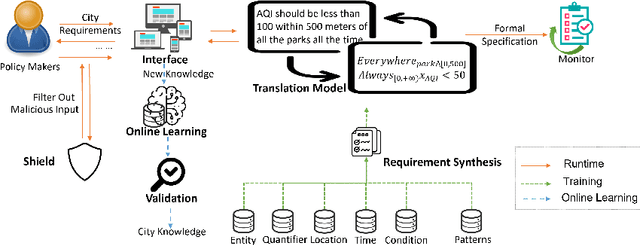

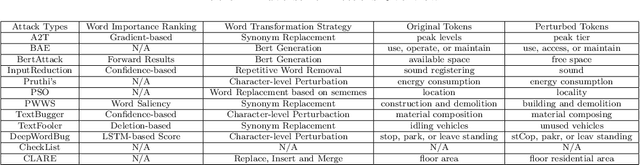

CitySpec with Shield: A Secure Intelligent Assistant for Requirement Formalization

Feb 19, 2023

An increasing number of monitoring systems have been developed in smart cities to ensure that the real-time operations of a city satisfy safety and performance requirements. However, many existing city requirements are written in English with missing, inaccurate, or ambiguous information. There is a high demand for assisting city policymakers in converting human-specified requirements to machine-understandable formal specifications for monitoring systems. To tackle this limitation, we build CitySpec, the first intelligent assistant system for requirement specification in smart cities. To create CitySpec, we first collect over 1,500 real-world city requirements across different domains (e.g., transportation and energy) from over 100 cities and extract city-specific knowledge to generate a dataset of city vocabulary with 3,061 words. We also build a translation model, enhance it through requirement synthesis, and develop a novel online learning framework with shielded validation. The evaluation results on real-world city requirements show that CitySpec increases the sentence-level accuracy of requirement specification from 59.02% to 86.64%, and has strong adaptability to a new city and a new domain (e.g., the F1 score for requirements in Seattle increases from 77.6% to 93.75% with online learning). With the shield function, CitySpec is immune to most known textual adversarial inputs (e.g., the attack success rate of DeepWordBug is reduced from 82.73% to 0% after the shield function is applied). We test CitySpec with 18 participants from different domains. CitySpec shows strong usability and adaptability to different domains, as well as robustness to malicious inputs.

Connecting the Universe: Challenges, Mitigation, Advances, and Link Engineering

Feb 19, 2023

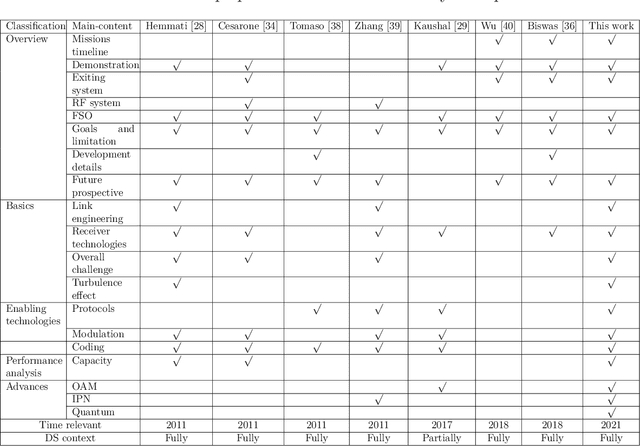

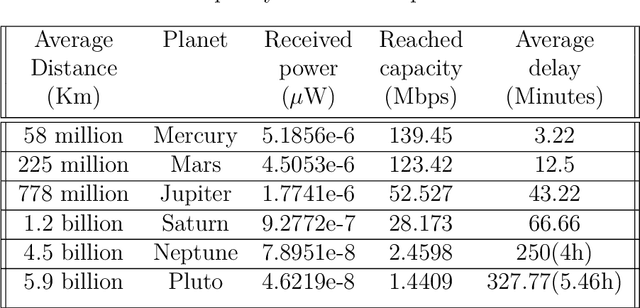

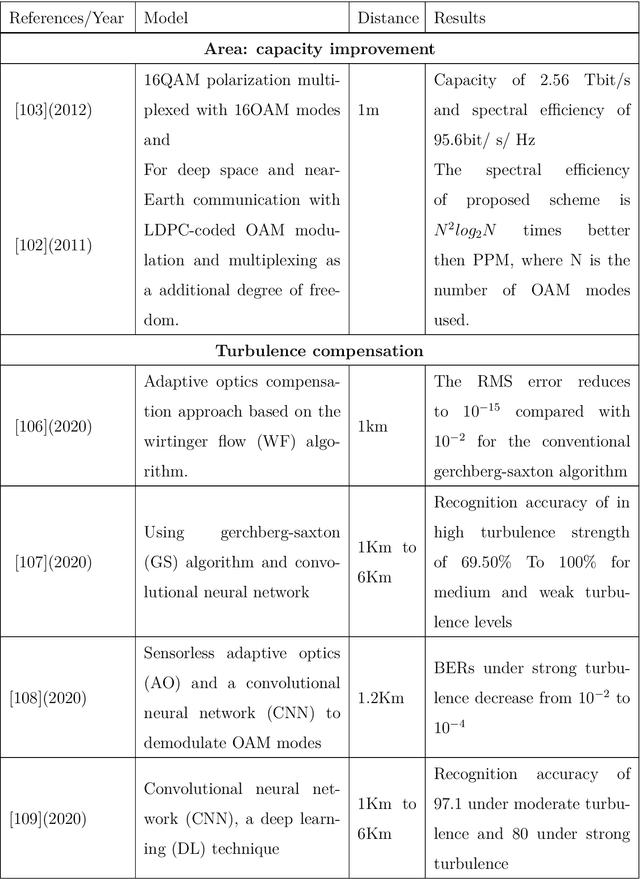

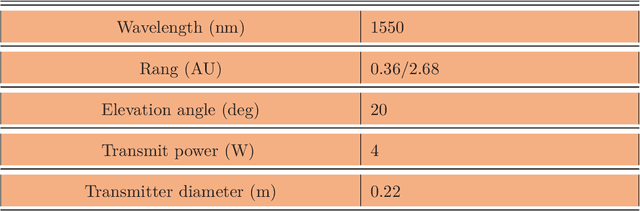

With the large number of deep space (DS) missions anticipated by the end of this decade, reliable, high-capacity DS communication systems are needed more than ever. Nevertheless, existing DS communication technologies are far from meeting such a goal. Improving current systems demands not only systems engineering leadership but, more crucially, a thorough investigation of the potential of emerging technologies to overcome the challenges of the unique, ultra-long DS communication channel. This project starts with a survey that highlights current technologies, trends, and advancements, investigates potentials, identifies challenges, and, in essence, provides perspectives and proposes solutions. It focuses on free-space optical (FSO) communication as a potential technology that can overcome the shortcomings of current radio frequency (RF)-based communication systems. In addition, to the best of our knowledge, it provides for the first time a thoughtful discussion about implementing orbital angular momentum (OAM) for DS, identifies major related challenges, and proposes some novel solutions. Furthermore, we discuss DS modulation and coding schemes, as well as emerging receiver technologies and communication protocols. We also elaborate on how all of these technologies guarantee reliability, improve efficiency, offer capacity boosts, and enhance security in the unique DS environment. In addition, an extended study on the design and performance analysis of deep space optical communication (DSOC) is included. The most frequently suggested modulation for such a link is pulse position modulation (PPM), and the study focuses on communication between Earth and Mars, an important destination for space exploration.
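To make the modulation named above concrete, here is a minimal sketch of M-ary pulse position modulation: each group of log2(M) bits selects which one of M time slots in a frame carries the pulse. It assumes an ideal, noiseless channel with no guard slots or synchronization; the function names are illustrative and are not taken from the surveyed work.

```python
def ppm_encode(bits, m):
    """Map groups of log2(m) bits to m-slot frames holding a single pulse each."""
    k = m.bit_length() - 1  # bits carried per PPM symbol
    if m < 2 or 1 << k != m:
        raise ValueError("m must be a power of two, at least 2")
    if len(bits) % k != 0:
        raise ValueError("bit count must be a multiple of log2(m)")
    frames = []
    for i in range(0, len(bits), k):
        # Interpret the k-bit group as the index of the pulsed slot.
        slot = int("".join(str(b) for b in bits[i:i + k]), 2)
        frame = [0] * m
        frame[slot] = 1
        frames.append(frame)
    return frames

def ppm_decode(frames):
    """Recover bits by picking the slot with the largest value in each frame."""
    m = len(frames[0])
    k = m.bit_length() - 1
    bits = []
    for frame in frames:
        slot = max(range(m), key=lambda j: frame[j])
        bits.extend(int(c) for c in format(slot, f"0{k}b"))
    return bits
```

With `m=4`, the bits `[1, 0, 0, 1]` become two frames, with the pulse in slot 2 and then slot 1. Because the decoder only looks for the largest slot value, the same function also works on soft photon counts rather than 0/1 values.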

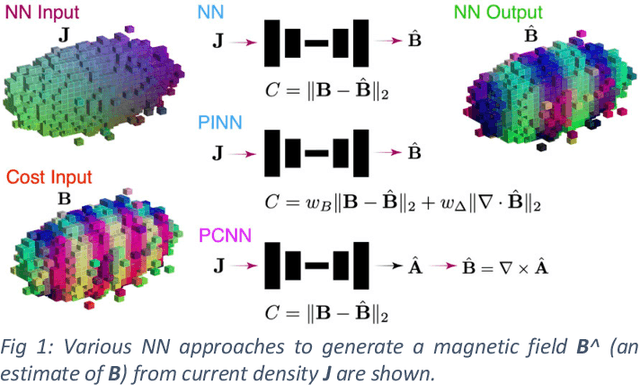

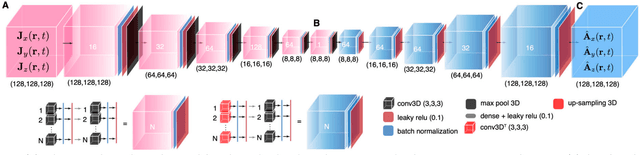

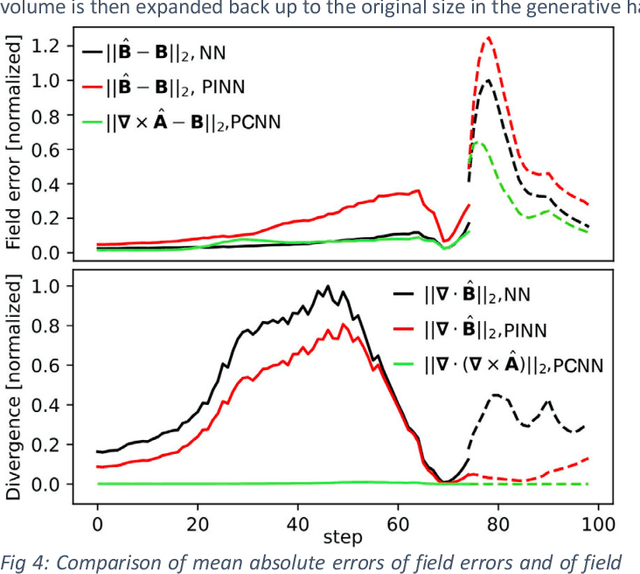

Physics-constrained 3D Convolutional Neural Networks for Electrodynamics

Jan 31, 2023

We present a physics-constrained neural network (PCNN) approach to solving Maxwell's equations for the electromagnetic fields of intense relativistic charged particle beams. We create a 3D convolutional PCNN to map time-varying current and charge densities J(r,t) and ρ(r,t) to vector and scalar potentials A(r,t) and V(r,t), from which we generate electromagnetic fields according to Maxwell's equations: B=curl(A), E=-grad(V)-dA/dt. Our PCNNs satisfy hard constraints, such as div(B)=0, by construction. Soft constraints push A and V towards satisfying the Lorenz gauge.
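For reference, the relations in this abstract can be written out in standard vector-calculus notation. The electric field uses the gradient of the scalar potential, and the divergence-free property of B follows from a vector identity, which is why it holds by construction. The SI-unit form of the Lorenz gauge below is an assumption about the convention used; the abstract does not state its unit system.

```latex
\begin{aligned}
\mathbf{B} &= \nabla \times \mathbf{A}, \qquad
\mathbf{E} = -\nabla V - \frac{\partial \mathbf{A}}{\partial t}, \\
\nabla \cdot \mathbf{B} &= \nabla \cdot (\nabla \times \mathbf{A}) = 0
  \quad \text{(hard constraint, satisfied identically)}, \\
\nabla \cdot \mathbf{A} &+ \frac{1}{c^{2}} \frac{\partial V}{\partial t} = 0
  \quad \text{(Lorenz gauge, imposed as a soft constraint)}.
\end{aligned}
```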

Learning to Solve Multiple-TSP with Time Window and Rejections via Deep Reinforcement Learning

Sep 13, 2022

We propose a manager-worker framework based on deep reinforcement learning to tackle a hard yet nontrivial variant of the Travelling Salesman Problem (TSP), i.e., multiple-vehicle TSP with time window and rejections (mTSPTWR), where customers who cannot be served before the deadline are subject to rejections. Particularly, in the proposed framework, a manager agent learns to divide mTSPTWR into sub-routing tasks by assigning customers to each vehicle via a Graph Isomorphism Network (GIN) based policy network. A worker agent learns to solve sub-routing tasks by minimizing the cost in terms of both tour length and rejection rate for each vehicle, the maximum of which is then fed back to the manager agent to learn better assignments. Experimental results demonstrate that the proposed framework outperforms strong baselines in terms of higher solution quality and shorter computation time. More importantly, the trained agents also achieve competitive performance for solving unseen larger instances.
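The cost structure described above (a per-vehicle cost combining tour length and rejection rate, with the maximum over vehicles fed back to the manager) can be sketched as follows. This is only an illustration under stated assumptions: the weight `beta`, the unit travel speed, skipping rejected customers without travelling to them, and the function names are all hypothetical choices, not details given in the abstract.

```python
import math

def route_cost(depot, customers, beta=10.0, speed=1.0):
    """Cost of one vehicle's route: tour length + beta * rejection rate.

    customers: list of (x, y, ready_time, deadline) in visiting order.
    A customer whose arrival time exceeds the deadline is rejected and skipped.
    """
    pos, t, length, rejected = depot, 0.0, 0.0, 0
    for x, y, ready, deadline in customers:
        d = math.dist(pos, (x, y))
        arrival = t + d / speed
        if arrival > deadline:
            rejected += 1  # assumed: the vehicle does not travel to rejected customers
            continue
        length += d
        t = max(arrival, ready)  # wait if the vehicle arrives before the window opens
        pos = (x, y)
    length += math.dist(pos, depot)  # return to the depot
    rate = rejected / len(customers) if customers else 0.0
    return length + beta * rate

def manager_feedback(depot, assignment, beta=10.0):
    """Maximum per-vehicle cost over an assignment (one route per vehicle)."""
    return max(route_cost(depot, route, beta) for route in assignment)
```

Taking the maximum rather than the sum penalizes assignments that overload a single vehicle, which matches the abstract's description of the signal returned to the manager.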

Robust Human Motion Forecasting using Transformer-based Model

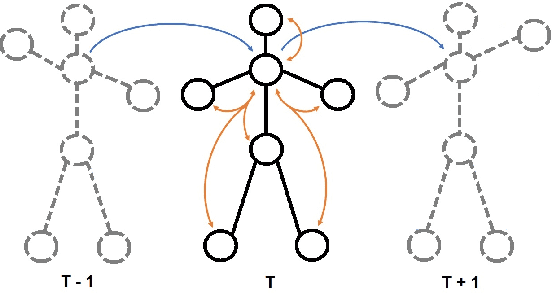

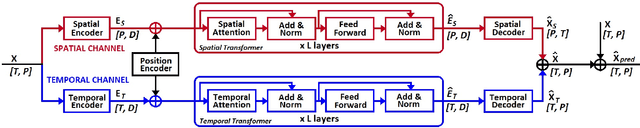

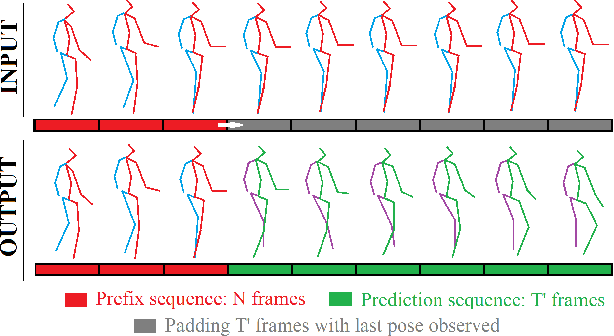

Feb 16, 2023

Comprehending human motion is a fundamental challenge for developing Human-Robot Collaborative applications. Computer vision researchers have addressed this field by focusing only on reducing prediction error, without taking into account the requirements for implementing these models in robots. In this paper, we propose a new model based on the Transformer that simultaneously handles real-time 3D human motion forecasting in the short and long term. Our 2-Channel Transformer (2CH-TR) efficiently exploits the spatio-temporal information of a briefly observed sequence (400ms) and achieves accuracy competitive with the current state-of-the-art. 2CH-TR stands out for its efficient use of the Transformer, being lighter and faster than its competitors. In addition, our model is tested in conditions where the human motion is severely occluded, demonstrating its robustness in reconstructing and predicting 3D human motion in a highly noisy environment. Our experimental results show that the proposed 2CH-TR outperforms the ST-Transformer, another state-of-the-art Transformer-based model, in terms of reconstruction and prediction under the same input prefix conditions. Our model reduces the mean squared error of the ST-Transformer by 8.89% in short-term prediction and by 2.57% in long-term prediction on the Human3.6M dataset with a 400ms input prefix.

* This paper has already been accepted to the 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2022)

EspalomaCharge: Machine learning-enabled ultra-fast partial charge assignment

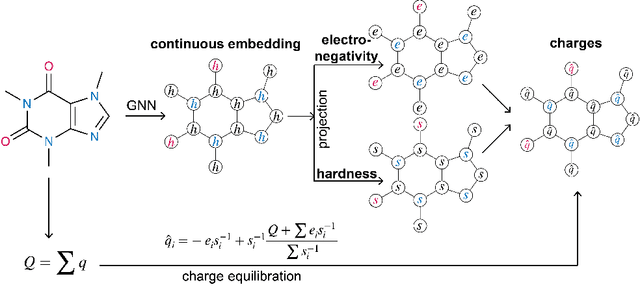

Feb 16, 2023

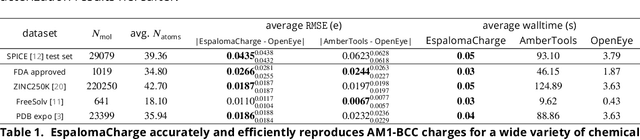

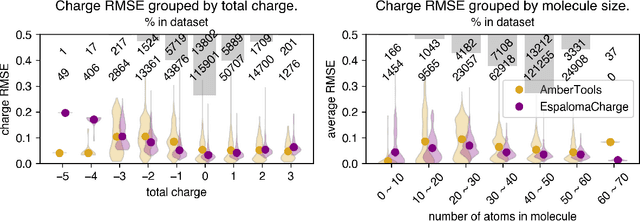

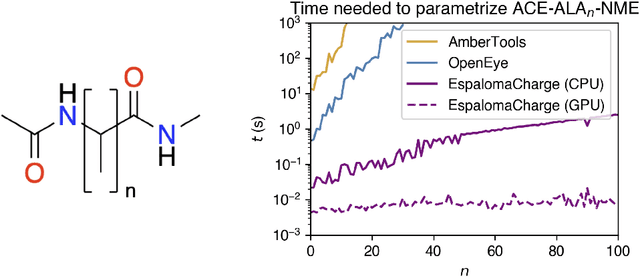

Atomic partial charges are crucial parameters in molecular dynamics (MD) simulation, dictating the electrostatic contributions to intermolecular energies, and thereby the potential energy landscape. Traditionally, the assignment of partial charges has relied on surrogates of ab initio semiempirical quantum chemical methods such as AM1-BCC, and is expensive for large systems or large numbers of molecules. We propose a hybrid physical / graph neural network-based approximation to the widely popular AM1-BCC charge model that is orders of magnitude faster while maintaining accuracy comparable to differences in AM1-BCC implementations. Our hybrid approach couples a graph neural network to a streamlined charge equilibration approach in order to predict molecule-specific atomic electronegativity and hardness parameters, followed by analytical determination of optimal charge-equilibrated parameters that preserve total molecular charge. This hybrid approach scales linearly with the number of atoms, enabling, for the first time, the use of fully consistent charge models for small molecules and biopolymers for the construction of next-generation self-consistent biomolecular force fields. Implemented in the free and open source package espaloma_charge, this approach provides drop-in replacements for both AmberTools antechamber and the Open Force Field Toolkit charging workflows, in addition to stand-alone charge generation interfaces. Source code is available at https://github.com/choderalab/espaloma_charge.
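The analytical step mentioned above can be illustrated with a simplified charge-equilibration model. This is a sketch, not the espaloma_charge API. It assumes the energy is a second-order expansion, the sum over atoms of e_i q_i + (1/2) s_i q_i^2, minimized subject to the charges summing to the total molecular charge Q. A Lagrange multiplier then gives a closed-form solution. In the paper the electronegativities e_i and hardnesses s_i are predicted by a graph neural network; here they are hand-set numbers, and the function name is hypothetical.

```python
def equilibrate_charges(e, s, total_charge=0.0):
    """Closed-form minimizer of sum(e_i*q_i + 0.5*s_i*q_i**2) with sum(q_i) = Q.

    e: per-atom electronegativities, s: per-atom hardnesses (all positive).
    Stationarity gives e_i + s_i*q_i = lam for every atom, so
    q_i = (lam - e_i) / s_i, and lam is fixed by the total-charge constraint.
    """
    inv_s_sum = sum(1.0 / si for si in s)
    lam = (total_charge + sum(ei / si for ei, si in zip(e, s))) / inv_s_sum
    return [(lam - ei) / si for ei, si in zip(e, s)]
```

For two atoms with `e = [1.0, 0.0]` and `s = [1.0, 1.0]` in a neutral molecule, the more electronegative atom receives charge -0.5 and the other +0.5. The cost is a single pass over the atoms, consistent with the linear scaling claimed in the abstract.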

Equilibrium and Learning in Fixed-Price Data Markets with Externality

Feb 16, 2023

We propose modeling real-world data markets, where sellers post fixed prices and buyers are free to purchase from any set of sellers they please, as a simultaneous-move game between the buyers. A key component of this model is the negative externality buyers induce on one another due to purchasing similar data, a phenomenon exacerbated by its easy replicability. In the complete-information setting, where all buyers know their valuations, we characterize both the existence and the quality (with respect to optimal social welfare) of the pure-strategy Nash equilibrium under various models of buyer externality. While this picture is bleak without any market intervention, reinforcing the inadequacy of modern data markets, we prove that for a broad class of externality functions, market intervention in the form of a revenue-neutral transaction cost can lead to a pure-strategy equilibrium with strong welfare guarantees. We further show that this intervention is amenable to the more realistic setting where buyers start with unknown valuations and learn them over time through repeated market interactions. For such a setting, we provide an online learning algorithm for each buyer that achieves low regret guarantees with respect to both the individual buyer's strategy and the social-welfare optimum. Our work paves the way for considering simple intervention strategies for existing fixed-price data markets to address their shortcomings and the unique challenges put forth by data products.

TcGAN: Semantic-Aware and Structure-Preserved GANs with Individual Vision Transformer for Fast Arbitrary One-Shot Image Generation

Feb 16, 2023

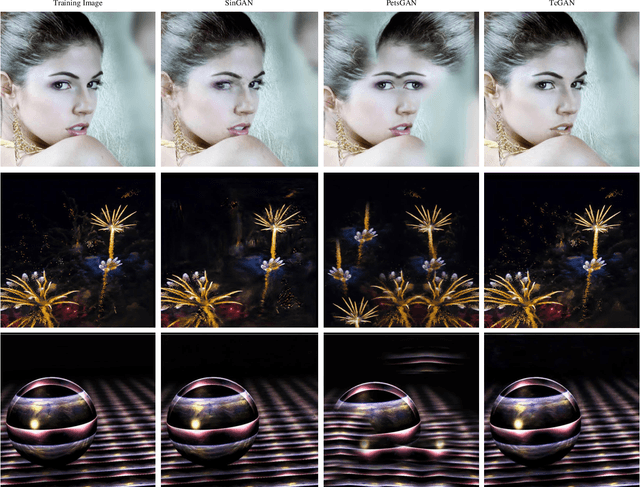

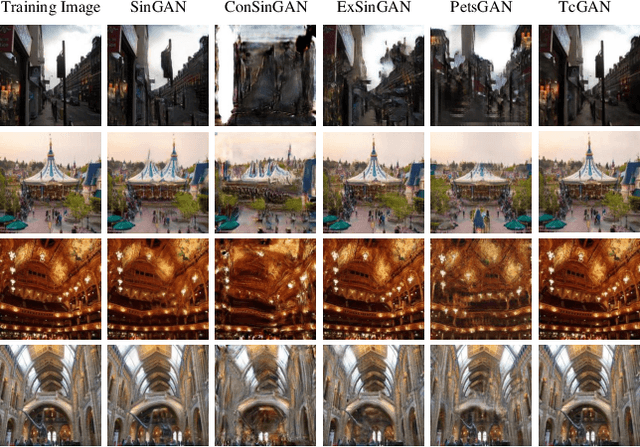

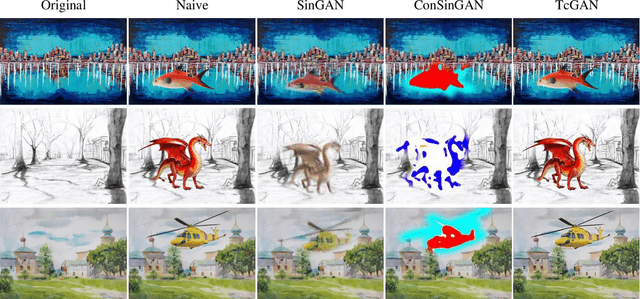

One-shot image generation (OSG) with generative adversarial networks that learn from the internal patches of a given image has attracted worldwide attention. In recent studies, scholars have primarily focused on extracting features of images from probabilistically distributed inputs with pure convolutional neural networks (CNNs). However, it is quite difficult for CNNs, with their limited receptive fields, to extract and maintain global structural information. Therefore, in this paper, we propose a novel structure-preserved method, TcGAN, with an individual vision transformer to overcome the shortcomings of existing one-shot image generation methods. Specifically, TcGAN preserves the global structure of an image during training to be compatible with local details while maintaining the integrity of semantic-aware information by exploiting the transformer's powerful capability for modeling long-range dependencies. We also propose a new scaling formula that is scale-invariant during the calculation period, which effectively improves the generated image quality of the OSG model on image super-resolution tasks. We present the design of the TcGAN converter framework, together with comprehensive experiments and ablation studies demonstrating the ability of TcGAN to achieve arbitrary image generation with the fastest running time. Lastly, TcGAN achieves the best performance when applied to other image processing tasks, e.g., super-resolution and image harmonization; these results further demonstrate its superiority.

A method for incremental discovery of financial event types based on anomaly detection

Feb 16, 2023

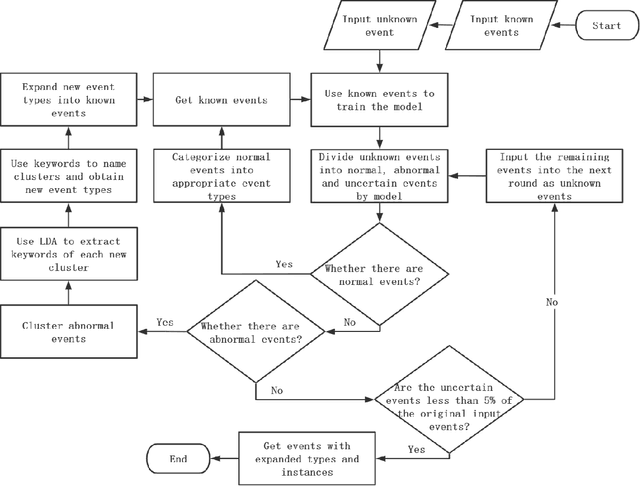

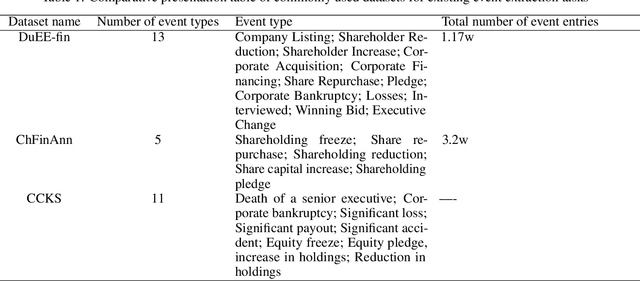

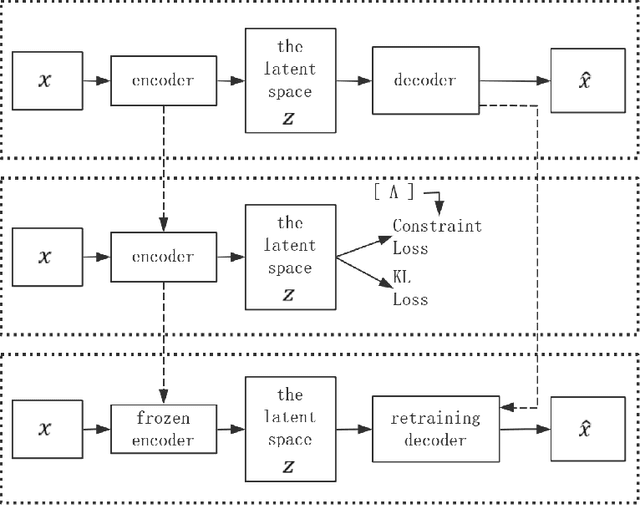

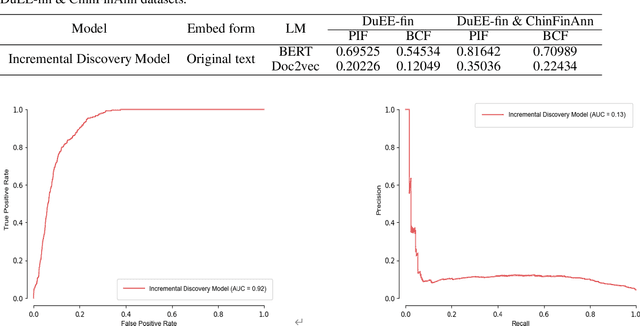

Event datasets in the financial domain are often constructed based on actual application scenarios, and their event types are weakly reusable due to scenario constraints; at the same time, the massive and diverse new financial big data cannot be limited to the event types defined for specific scenarios. This limitation of a small number of event types does not meet our research needs for more complex tasks such as the prediction of major financial events and the analysis of the ripple effects of financial events. In this paper, a three-stage approach is proposed to accomplish incremental discovery of event types. Given an existing annotated financial event dataset and a set of financial event data with a mixture of original and unknown event types, a semi-supervised deep clustering model with anomaly detection is first applied to classify the data into normal and abnormal events, where abnormal events are those that do not belong to known types. Then, normal events are tagged with appropriate event types and abnormal events are clustered. Finally, a cluster keyword extraction method is used to recommend type names for the new event clusters, thus incrementally discovering new event types. The proposed method is effective in the incremental discovery of new event types on real data sets.
