Unsupervised Anomaly Detection

Unsupervised anomaly detection is the process of identifying unusual patterns or outliers in data without using labeled examples.

Papers and Code

Moon: A Modality Conversion-based Efficient Multivariate Time Series Anomaly Detection

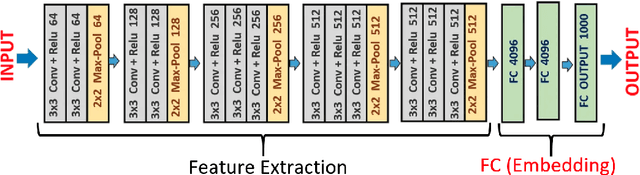

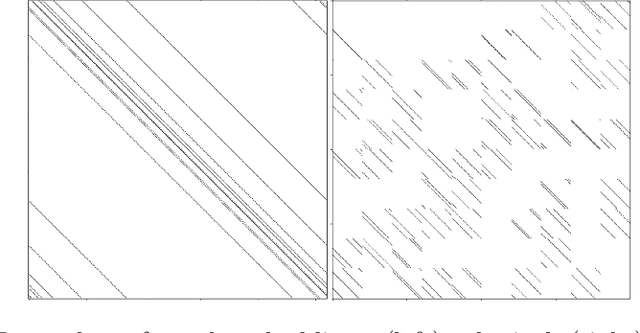

Oct 02, 2025Multivariate time series (MTS) anomaly detection identifies abnormal patterns where each timestamp contains multiple variables. Existing MTS anomaly detection methods fall into three categories: reconstruction-based, prediction-based, and classifier-based methods. However, these methods face two key challenges: (1) Unsupervised learning methods, such as reconstruction-based and prediction-based methods, rely on error thresholds, which can lead to inaccuracies; (2) Semi-supervised methods mainly model normal data and often underuse anomaly labels, limiting detection of subtle anomalies;(3) Supervised learning methods, such as classifier-based approaches, often fail to capture local relationships, incur high computational costs, and are constrained by the scarcity of labeled data. To address these limitations, we propose Moon, a supervised modality conversion-based multivariate time series anomaly detection framework. Moon enhances the efficiency and accuracy of anomaly detection while providing detailed anomaly analysis reports. First, Moon introduces a novel multivariate Markov Transition Field (MV-MTF) technique to convert numeric time series data into image representations, capturing relationships across variables and timestamps. Since numeric data retains unique patterns that cannot be fully captured by image conversion alone, Moon employs a Multimodal-CNN to integrate numeric and image data through a feature fusion model with parameter sharing, enhancing training efficiency. Finally, a SHAP-based anomaly explainer identifies key variables contributing to anomalies, improving interpretability. Extensive experiments on six real-world MTS datasets demonstrate that Moon outperforms six state-of-the-art methods by up to 93% in efficiency, 4% in accuracy and, 10.8% in interpretation performance.

MicroRCA-Agent: Microservice Root Cause Analysis Method Based on Large Language Model Agents

Sep 19, 2025This paper presents MicroRCA-Agent, an innovative solution for microservice root cause analysis based on large language model agents, which constructs an intelligent fault root cause localization system with multimodal data fusion. The technical innovations are embodied in three key aspects: First, we combine the pre-trained Drain log parsing algorithm with multi-level data filtering mechanism to efficiently compress massive logs into high-quality fault features. Second, we employ a dual anomaly detection approach that integrates Isolation Forest unsupervised learning algorithms with status code validation to achieve comprehensive trace anomaly identification. Third, we design a statistical symmetry ratio filtering mechanism coupled with a two-stage LLM analysis strategy to enable full-stack phenomenon summarization across node-service-pod hierarchies. The multimodal root cause analysis module leverages carefully designed cross-modal prompts to deeply integrate multimodal anomaly information, fully exploiting the cross-modal understanding and logical reasoning capabilities of large language models to generate structured analysis results encompassing fault components, root cause descriptions, and reasoning trace. Comprehensive ablation studies validate the complementary value of each modal data and the effectiveness of the system architecture. The proposed solution demonstrates superior performance in complex microservice fault scenarios, achieving a final score of 50.71. The code has been released at: https://github.com/tangpan360/MicroRCA-Agent.

How and Why: Taming Flow Matching for Unsupervised Anomaly Detection and Localization

Aug 07, 2025

We propose a new paradigm for unsupervised anomaly detection and localization using Flow Matching (FM), which fundamentally addresses the model expressivity limitations of conventional flow-based methods. To this end, we formalize the concept of time-reversed Flow Matching (rFM) as a vector field regression along a predefined probability path to transform unknown data distributions into standard Gaussian. We bring two core observations that reshape our understanding of FM. First, we rigorously prove that FM with linear interpolation probability paths is inherently non-invertible. Second, our analysis reveals that employing reversed Gaussian probability paths in high-dimensional spaces can lead to trivial vector fields. This issue arises due to the manifold-related constraints. Building on the second observation, we propose Worst Transport (WT) displacement interpolation to reconstruct a non-probabilistic evolution path. The proposed WT-Flow enhances dynamical control over sample trajectories, constructing ''degenerate potential wells'' for anomaly-free samples while allowing anomalous samples to escape. This novel unsupervised paradigm offers a theoretically grounded separation mechanism for anomalous samples. Notably, FM provides a computationally tractable framework that scales to complex data. We present the first successful application of FM for the unsupervised anomaly detection task, achieving state-of-the-art performance at a single scale on the MVTec dataset. The reproducible code for training will be released upon camera-ready submission.

Unsupervised anomaly detection using Bayesian flow networks: application to brain FDG PET in the context of Alzheimer's disease

Jul 23, 2025Unsupervised anomaly detection (UAD) plays a crucial role in neuroimaging for identifying deviations from healthy subject data and thus facilitating the diagnosis of neurological disorders. In this work, we focus on Bayesian flow networks (BFNs), a novel class of generative models, which have not yet been applied to medical imaging or anomaly detection. BFNs combine the strength of diffusion frameworks and Bayesian inference. We introduce AnoBFN, an extension of BFNs for UAD, designed to: i) perform conditional image generation under high levels of spatially correlated noise, and ii) preserve subject specificity by incorporating a recursive feedback from the input image throughout the generative process. We evaluate AnoBFN on the challenging task of Alzheimer's disease-related anomaly detection in FDG PET images. Our approach outperforms other state-of-the-art methods based on VAEs (beta-VAE), GANs (f-AnoGAN), and diffusion models (AnoDDPM), demonstrating its effectiveness at detecting anomalies while reducing false positive rates.

Multi-Stage Knowledge-Distilled VGAE and GAT for Robust Controller-Area-Network Intrusion Detection

Aug 06, 2025The Controller Area Network (CAN) protocol is a standard for in-vehicle communication but remains susceptible to cyber-attacks due to its lack of built-in security. This paper presents a multi-stage intrusion detection framework leveraging unsupervised anomaly detection and supervised graph learning tailored for automotive CAN traffic. Our architecture combines a Variational Graph Autoencoder (VGAE) for structural anomaly detection with a Knowledge-Distilled Graph Attention Network (KD-GAT) for robust attack classification. CAN bus activity is encoded as graph sequences to model temporal and relational dependencies. The pipeline applies VGAE-based selective undersampling to address class imbalance, followed by GAT classification with optional score-level fusion. The compact student GAT achieves 96% parameter reduction compared to the teacher model while maintaining strong predictive performance. Experiments on six public CAN intrusion datasets--Car-Hacking, Car-Survival, and can-train-and-test--demonstrate competitive accuracy and efficiency, with average improvements of 16.2% in F1-score over existing methods, particularly excelling on highly imbalanced datasets with up to 55% F1-score improvements.

$K^4$: Online Log Anomaly Detection Via Unsupervised Typicality Learning

Jul 26, 2025Existing Log Anomaly Detection (LogAD) methods are often slow, dependent on error-prone parsing, and use unrealistic evaluation protocols. We introduce $K^4$, an unsupervised and parser-independent framework for high-performance online detection. $K^4$ transforms arbitrary log embeddings into compact four-dimensional descriptors (Precision, Recall, Density, Coverage) using efficient k-nearest neighbor (k-NN) statistics. These descriptors enable lightweight detectors to accurately score anomalies without retraining. Using a more realistic online evaluation protocol, $K^4$ sets a new state-of-the-art (AUROC: 0.995-0.999), outperforming baselines by large margins while being orders of magnitude faster, with training under 4 seconds and inference as low as 4 $\mu$s.

Levarging Learning Bias for Noisy Anomaly Detection

Aug 10, 2025This paper addresses the challenge of fully unsupervised image anomaly detection (FUIAD), where training data may contain unlabeled anomalies. Conventional methods assume anomaly-free training data, but real-world contamination leads models to absorb anomalies as normal, degrading detection performance. To mitigate this, we propose a two-stage framework that systematically exploits inherent learning bias in models. The learning bias stems from: (1) the statistical dominance of normal samples, driving models to prioritize learning stable normal patterns over sparse anomalies, and (2) feature-space divergence, where normal data exhibit high intra-class consistency while anomalies display high diversity, leading to unstable model responses. Leveraging the learning bias, stage 1 partitions the training set into subsets, trains sub-models, and aggregates cross-model anomaly scores to filter a purified dataset. Stage 2 trains the final detector on this dataset. Experiments on the Real-IAD benchmark demonstrate superior anomaly detection and localization performance under different noise conditions. Ablation studies further validate the framework's contamination resilience, emphasizing the critical role of learning bias exploitation. The model-agnostic design ensures compatibility with diverse unsupervised backbones, offering a practical solution for real-world scenarios with imperfect training data. Code is available at https://github.com/hustzhangyuxin/LLBNAD.

TriP-LLM: A Tri-Branch Patch-wise Large Language Model Framework for Time-Series Anomaly Detection

Jul 31, 2025Time-series anomaly detection plays a central role across a wide range of application domains. With the increasing proliferation of the Internet of Things (IoT) and smart manufacturing, time-series data has dramatically increased in both scale and dimensionality. This growth has exposed the limitations of traditional statistical methods in handling the high heterogeneity and complexity of such data. Inspired by the recent success of large language models (LLMs) in multimodal tasks across language and vision domains, we propose a novel unsupervised anomaly detection framework: A Tri-Branch Patch-wise Large Language Model Framework for Time-Series Anomaly Detection (TriP-LLM). TriP-LLM integrates local and global temporal features through a tri-branch design-Patching, Selection, and Global-to encode the input time series into patch-wise tokens, which are then processed by a frozen, pretrained LLM. A lightweight patch-wise decoder reconstructs the input, from which anomaly scores are derived. We evaluate TriP-LLM on several public benchmark datasets using PATE, a recently proposed threshold-free evaluation metric, and conduct all comparisons within a unified open-source framework to ensure fairness. Experimental results show that TriP-LLM consistently outperforms recent state-of-the-art methods across all datasets, demonstrating strong detection capabilities. Furthermore, through extensive ablation studies, we verify the substantial contribution of the LLM to the overall architecture. Compared to LLM-based approaches using Channel Independence (CI) patch processing, TriP-LLM achieves significantly lower memory consumption, making it more suitable for GPU memory-constrained environments. All code and model checkpoints are publicly available on https://github.com/YYZStart/TriP-LLM.git

Adaptive Anomaly Detection in Evolving Network Environments

Aug 20, 2025Distribution shift, a change in the statistical properties of data over time, poses a critical challenge for deep learning anomaly detection systems. Existing anomaly detection systems often struggle to adapt to these shifts. Specifically, systems based on supervised learning require costly manual labeling, while those based on unsupervised learning rely on clean data, which is difficult to obtain, for shift adaptation. Both of these requirements are challenging to meet in practice. In this paper, we introduce NetSight, a framework for supervised anomaly detection in network data that continually detects and adapts to distribution shifts in an online manner. NetSight eliminates manual intervention through a novel pseudo-labeling technique and uses a knowledge distillation-based adaptation strategy to prevent catastrophic forgetting. Evaluated on three long-term network datasets, NetSight demonstrates superior adaptation performance compared to state-of-the-art methods that rely on manual labeling, achieving F1-score improvements of up to 11.72%. This proves its robustness and effectiveness in dynamic networks that experience distribution shifts over time.

Synthetic Image Detection via Spectral Gaps of QC-RBIM Nishimori Bethe-Hessian Operators

Aug 27, 2025

The rapid advance of deep generative models such as GANs and diffusion networks now produces images that are virtually indistinguishable from genuine photographs, undermining media forensics and biometric security. Supervised detectors quickly lose effectiveness on unseen generators or after adversarial post-processing, while existing unsupervised methods that rely on low-level statistical cues remain fragile. We introduce a physics-inspired, model-agnostic detector that treats synthetic-image identification as a community-detection problem on a sparse weighted graph. Image features are first extracted with pretrained CNNs and reduced to 32 dimensions, each feature vector becomes a node of a Multi-Edge Type QC-LDPC graph. Pairwise similarities are transformed into edge couplings calibrated at the Nishimori temperature, producing a Random Bond Ising Model (RBIM) whose Bethe-Hessian spectrum exhibits a characteristic gap when genuine community structure (real images) is present. Synthetic images violate the Nishimori symmetry and therefore lack such gaps. We validate the approach on binary tasks cat versus dog and male versus female using real photos from Flickr-Faces-HQ and CelebA and synthetic counterparts generated by GANs and diffusion models. Without any labeled synthetic data or retraining of the feature extractor, the detector achieves over 94% accuracy. Spectral analysis shows multiple well separated gaps for real image sets and a collapsed spectrum for generated ones. Our contributions are threefold: a novel LDPC graph construction that embeds deep image features, an analytical link between Nishimori temperature RBIM and the Bethe-Hessian spectrum providing a Bayes optimal detection criterion; and a practical, unsupervised synthetic image detector robust to new generative architectures. Future work will extend the framework to video streams and multi-class anomaly detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge