Zhi Sun

KARMA: Knowledge-Action Regularized Multimodal Alignment for Personalized Search at Taobao

Mar 24, 2026

Abstract:Large Language Models (LLMs) are equipped with profound semantic knowledge, making them a natural choice for injecting semantic generalization into personalized search systems. However, in practice we find that directly fine-tuning LLMs on industrial personalized tasks (e.g. next item prediction) often yields suboptimal results. We attribute this bottleneck to a critical Knowledge-Action Gap: the inherent conflict between preserving pre-trained semantic knowledge and aligning with specific personalized actions through discriminative objectives. Empirically, action-only training objectives induce Semantic Collapse, which manifests as symptoms such as attention "sinks". This degradation severely cripples the LLM's generalization, so the model fails to bring improvements to personalized search systems. We propose KARMA (Knowledge-Action Regularized Multimodal Alignment), a unified framework that treats semantic reconstruction as a train-only regularizer. KARMA optimizes a next-interest embedding for retrieval (Action) while enforcing semantic decodability (Knowledge) through two complementary objectives: (i) history-conditioned semantic generation, which anchors optimization to the LLM's native next-token distribution, and (ii) embedding-conditioned semantic reconstruction, which constrains the interest embedding to remain semantically recoverable. On the Taobao search system, KARMA mitigates semantic collapse (as shown by attention-sink analysis) and improves both action metrics and semantic fidelity. In ablations, semantic decodability yields up to +22.5 HR@200. With KARMA, we achieve +0.25 CTR AUC in ranking, +1.86 HR in pre-ranking and +2.51 HR in recall. Deployed online with low inference overhead at the ranking stage, KARMA drives a +0.5% increase in Item Click.
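The abstract above reports retrieval gains as HR@K (hit rate at K). As a minimal sketch of how this metric is commonly computed, and not the evaluation code used for KARMA, the function below counts the fraction of queries whose ground-truth item appears among the top K retrieved items. The function name `hit_rate_at_k` and the toy data are illustrative assumptions.

```python
def hit_rate_at_k(ranked_lists, targets, k):
    """Fraction of queries whose target item is among the top-k ranked items.

    ranked_lists: one list of item ids per query, best match first (hypothetical data layout).
    targets: the ground-truth next item for each query.
    """
    if len(ranked_lists) != len(targets):
        raise ValueError("ranked_lists and targets must have the same length")
    if not targets:
        return 0.0
    hits = sum(
        1 for ranked, target in zip(ranked_lists, targets) if target in ranked[:k]
    )
    return hits / len(targets)


# Toy example: three queries, each with three retrieved items.
ranked = [["a", "b", "c"], ["d", "e", "f"], ["g", "h", "i"]]
targets = ["b", "f", "z"]

hr_at_2 = hit_rate_at_k(ranked, targets, 2)  # only the first query hits
hr_at_3 = hit_rate_at_k(ranked, targets, 3)  # the first two queries hit
```

A larger K can only keep or raise the hit rate, which is why retrieval stages are typically evaluated at generous cutoffs such as K = 200.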

Generalizable Learning for Massive MIMO CSI Feedback in Unseen Environments

Dec 28, 2025

Abstract:Deep learning promises to enhance the accuracy and reduce the overhead of channel state information (CSI) feedback, which can boost the capacity of frequency division duplex (FDD) massive multiple-input multiple-output (MIMO) systems. Nevertheless, the generalizability of current deep learning-based CSI feedback algorithms cannot be guaranteed in unseen environments, which induces a high deployment cost. In this paper, the generalizability of deep learning-based CSI feedback is promoted with physics interpretation. Firstly, the distribution shift of the cluster-based channel is modeled, which comprises the multi-cluster structure and single-cluster response. Secondly, the physics-based distribution alignment is proposed to effectively address the distribution shift of the cluster-based channel, which comprises multi-cluster decoupling and fine-grained alignment. Thirdly, the efficiency and robustness of physics-based distribution alignment are enhanced. Explicitly, an efficient multi-cluster decoupling algorithm is proposed based on the Eckart-Young-Mirsky (EYM) theorem to support real-time CSI feedback. Meanwhile, a hybrid criterion to estimate the number of decoupled clusters is designed, which enhances the robustness against channel estimation error. Fourthly, an environment-generalizable neural network for CSI feedback (EG-CsiNet) is proposed as a novel learning framework with physics-based distribution alignment. Based on extensive simulations and sim-to-real experiments in various conditions, the proposed EG-CsiNet can robustly reduce the generalization error by more than 3 dB compared to the state of the art.
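The Eckart-Young-Mirsky theorem cited above states that truncating the singular value decomposition gives the best low-rank approximation of a matrix, with a Frobenius-norm error equal to the root sum of squares of the discarded singular values. The sketch below illustrates only that theorem for rank 1, using plain power iteration on a small real matrix. It is not the paper's multi-cluster decoupling algorithm, and the names `best_rank1` and `frobenius_error` are hypothetical. It assumes a nonzero matrix with a gap between its two largest singular values and a start vector that is not orthogonal to the leading singular vector.

```python
import math


def best_rank1(a, iters=100):
    """Best rank-1 approximation of a real matrix `a` (list of rows) via power iteration.

    Returns (sigma, approx), where sigma estimates the largest singular value.
    Assumes `a` is nonzero and its top singular value is strictly dominant.
    """
    rows, cols = len(a), len(a[0])
    v = [1.0 / math.sqrt(cols)] * cols  # start vector (assumed not orthogonal to the answer)
    for _ in range(iters):
        u = [sum(a[i][j] * v[j] for j in range(cols)) for i in range(rows)]  # u = A v
        w = [sum(a[i][j] * u[i] for i in range(rows)) for j in range(cols)]  # w = A^T u
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    u = [sum(a[i][j] * v[j] for j in range(cols)) for i in range(rows)]
    sigma = math.sqrt(sum(x * x for x in u))
    u = [x / sigma for x in u]
    approx = [[sigma * u[i] * v[j] for j in range(cols)] for i in range(rows)]
    return sigma, approx


def frobenius_error(a, b):
    """Frobenius norm of the difference between two equally sized matrices."""
    return math.sqrt(
        sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb))
    )


# Toy matrix with singular values 3 and 1.
matrix = [[3.0, 0.0], [0.0, 1.0]]
sigma, approx = best_rank1(matrix)
error = frobenius_error(matrix, approx)  # equals the discarded singular value
```

For this toy matrix the residual error matches the second singular value, which is the quantity the theorem predicts for a rank-1 truncation.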

Enhancing Environment Generalizability for Deep Learning-Based CSI Feedback

Jul 09, 2025

Abstract:Accurate and low-overhead channel state information (CSI) feedback is essential to boost the capacity of frequency division duplex (FDD) massive multiple-input multiple-output (MIMO) systems. Deep learning-based CSI feedback significantly outperforms conventional approaches. Nevertheless, current deep learning-based CSI feedback algorithms exhibit limited generalizability to unseen environments, which increases the deployment cost. In this paper, we first model the distribution shift of CSI across different environments, which is composed of the distribution shift of the multipath structure and that of a single path. Then, EG-CsiNet is proposed as a novel CSI feedback learning framework to enhance environment generalizability. Explicitly, EG-CsiNet comprises the modules of multipath decoupling and fine-grained alignment, which address the distribution shift of the multipath structure and of a single path, respectively. Based on extensive simulations, the proposed EG-CsiNet can robustly enhance the generalizability in unseen environments compared to the state of the art, especially in challenging conditions with a single source environment.

Generalizable Learning for Frequency-Domain Channel Extrapolation under Distribution Shift

May 20, 2025

Abstract:Frequency-domain channel extrapolation is effective in reducing pilot overhead for massive multiple-input multiple-output (MIMO) systems. Recently, deep learning (DL)-based channel extrapolators have become a promising candidate for modeling complex frequency-domain dependency. Nevertheless, current DL extrapolators fail to operate in unseen environments under distribution shift, which poses challenges for large-scale deployment. In this paper, environment-generalizable learning for channel extrapolation is achieved by realizing distribution alignment from a physics perspective. Firstly, the distribution shift of wireless channels is rigorously analyzed, which comprises the distribution shift of multipath structure and single-path response. Secondly, a physics-based progressive distribution alignment strategy is proposed to address the distribution shift, which includes successive path-oriented design and path alignment. The path-oriented DL extrapolator decomposes multipath channel extrapolation into parallel extrapolations of the extracted paths, which can mitigate the distribution shift of multipath structure. Path alignment is proposed to address the distribution shift of single-path response in path-oriented DL extrapolators, which eventually enables generalizable learning for channel extrapolation. In the simulation, distinct wireless environments are generated using a precise ray-tracing tool. Based on extensive evaluations, the proposed path-oriented DL extrapolator with path alignment can reduce extrapolation error by more than 6 dB in unseen environments compared to the state of the art.

Path Evolution Model for Endogenous Channel Digital Twin towards 6G Wireless Networks

Jan 25, 2025

Abstract:Massive Multiple Input Multiple Output (MIMO) is critical for boosting 6G wireless network capacity. Nevertheless, high-dimensional Channel State Information (CSI) acquisition becomes the bottleneck of 6G massive MIMO systems. Recently, the Channel Digital Twin (CDT), which replicates physical entities in wireless channels, has been proposed to provide site-specific prior knowledge for CSI acquisition. However, external devices (e.g., cameras and GPS devices) cannot always be integrated into existing communication systems, nor are they universally available across all scenarios. Moreover, a trained CDT model cannot be directly applied in new environments, that is, it lacks environmental generalizability. To this end, the Path Evolution Model (PEM) is proposed as an alternative CDT to reflect physical path evolutions from consecutive channel measurements. Compared to existing CDTs, PEM demonstrates the virtues of full endogeneity, self-sustainability and environmental generalizability. Firstly, PEM only requires existing channel measurements, so it is free of other hardware devices and can be readily deployed. Secondly, self-sustaining maintenance of PEM can be achieved in dynamic channels by progressive updates. Thirdly, environmental generalizability can greatly reduce deployment costs in dynamic environments. To facilitate the implementation of PEM, an intelligent and lightweight operation framework is first designed. Then, the environmental generalizability of PEM is rigorously analyzed. Next, efficient learning approaches are proposed to reduce the amount of training data needed in practice. Extensive simulation results reveal that PEM can simultaneously achieve high-precision and low-overhead CSI acquisition, and can thus serve as a fundamental CDT for 6G wireless networks.

Analysis and Optimization of Multiple-STAR-RIS Assisted MIMO-NOMA with GSVD Precoding: An Operator-Valued Free Probability Approach

Nov 14, 2024

Abstract:Among the key enabling 6G techniques, multiple-input multiple-output (MIMO) and non-orthogonal multiple-access (NOMA) play an important role in enhancing the spectral efficiency of wireless communication systems. To further extend the coverage and the capacity, the simultaneously transmitting and reflecting reconfigurable intelligent surface (STAR-RIS) has recently emerged as a cost-effective technology. To exploit the benefit of STAR-RIS in MIMO-NOMA systems, in this paper, we investigate the analysis and optimization of downlink dual-user MIMO-NOMA systems assisted by multiple STAR-RISs under the generalized singular value decomposition (GSVD) precoding scheme, in which the channel is assumed to undergo Rician fading with the Weichselberger correlation structure. To analyze the asymptotic information rate of the users, we apply operator-valued free probability theory to obtain the Cauchy transform of the generalized singular values (GSVs) of the MIMO-NOMA channel matrices, which can be used to obtain the information rate via a Riemann integral. Then, considering the special case when the channels between the BS and the STAR-RISs are deterministic, we obtain the closed-form expression for the asymptotic information rates of the users. Furthermore, a projected gradient ascent method (PGAM) is proposed with the derived closed-form expression to design the STAR-RISs so as to maximize the sum rate based on the statistical channel state information. The numerical results show the accuracy of the asymptotic expression compared to the Monte Carlo simulations and the superiority of the proposed PGAM algorithm.
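To make the projected gradient ascent idea mentioned above concrete, here is a minimal generic sketch. It does not use the paper's closed-form sum-rate expression or its STAR-RIS constraints. As a stand-in, it maximizes the toy objective |aᴴx|² over vectors x whose entries have unit modulus, an assumed constraint chosen only for illustration. The names `objective`, `project_unit_modulus`, and `projected_gradient_ascent`, the step size, and the vector `a` are all hypothetical.

```python
def objective(a, x):
    """Toy objective |a^H x|^2 (a stand-in, not the paper's sum rate)."""
    c = sum(ai.conjugate() * xi for ai, xi in zip(a, x))
    return abs(c) ** 2


def project_unit_modulus(z):
    """Project each entry onto the unit circle; zeros map to 1 by convention."""
    return [zi / abs(zi) if abs(zi) > 0 else 1 + 0j for zi in z]


def projected_gradient_ascent(a, x0, step=1.0, iters=200):
    """Alternate a gradient step on |a^H x|^2 with projection onto |x_i| = 1."""
    x = project_unit_modulus(x0)
    for _ in range(iters):
        c = sum(ai.conjugate() * xi for ai, xi in zip(a, x))  # c = a^H x
        grad = [ai * c for ai in a]  # gradient with respect to conj(x)
        x = project_unit_modulus([xi + step * gi for xi, gi in zip(x, grad)])
    return x


# Toy problem: the optimum aligns each x_i with the phase of a_i,
# giving the value (sum of |a_i|)^2.
a = [1 + 1j, -0.5 + 2j, 0.3 - 0.7j, -1 - 0.2j]
x_opt = projected_gradient_ascent(a, [1 + 0j] * len(a))
best_possible = sum(abs(ai) for ai in a) ** 2
achieved = objective(a, x_opt)
```

The projection step is what keeps every iterate feasible. In a real design problem the objective and the feasible set would be replaced by the ones derived in the paper.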

Augmenting Channel Simulator and Semi-Supervised Learning for Efficient Indoor Positioning

Aug 01, 2024

Abstract:This work aims to tackle the labor-intensive and resource-consuming task of indoor positioning by proposing an efficient approach. The proposed approach involves the introduction of a semi-supervised learning (SSL) with a biased teacher (SSLB) algorithm, which effectively utilizes both labeled and unlabeled channel data. To reduce measurement expenses, unlabeled data is generated using an updated channel simulator (UCHS), and then weighted by adaptive confidence values to simplify the tuning of hyperparameters. Simulation results demonstrate that the proposed strategy achieves superior performance while minimizing measurement overhead and training expense compared to existing benchmarks, offering a valuable and practical solution for indoor positioning.

GaitSADA: Self-Aligned Domain Adaptation for mmWave Gait Recognition

Feb 01, 2023

Abstract:mmWave radar-based gait recognition is a novel user identification method that captures human gait biometrics from mmWave radar return signals. This technology offers privacy protection and is resilient to weather and lighting conditions. However, its generalization performance is still unknown, which limits its practical deployment. To address this problem, in this paper, a non-synthetic dataset is collected and analyzed to reveal the presence of spatial and temporal domain shifts in mmWave gait biometric data, which significantly impact identification accuracy. To address this issue, a novel self-aligned domain adaptation method called GaitSADA is proposed. GaitSADA improves system generalization performance by using a two-stage semi-supervised model training approach. The first stage uses semi-supervised contrastive learning and the second stage uses semi-supervised consistency training with centroid alignment. Extensive experiments show that GaitSADA outperforms representative domain adaptation methods by an average of 15.41% in low data regimes.

Hierarchical Codebook-based Beam Training for Extremely Large-Scale Massive MIMO

Oct 07, 2022

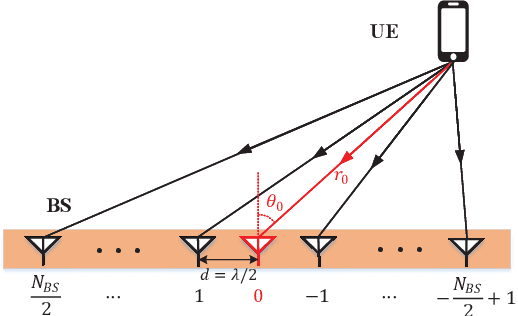

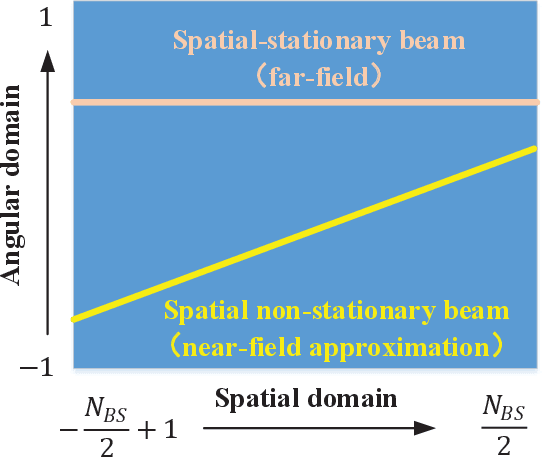

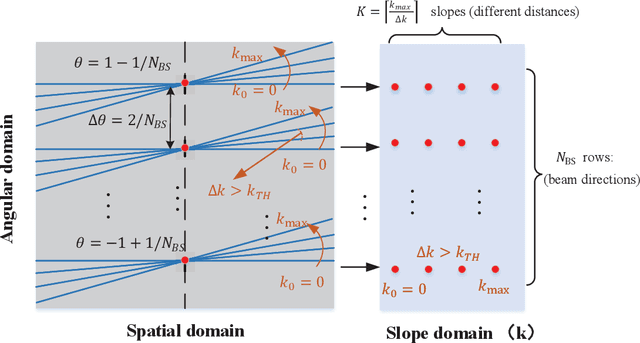

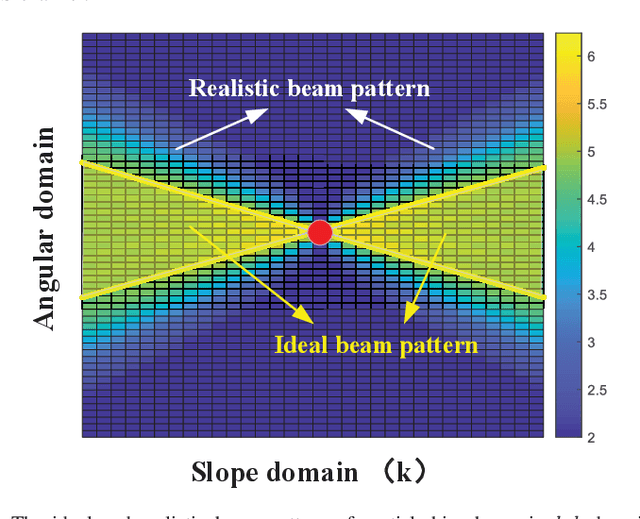

Abstract:Extremely large-scale multiple-input multiple-output (XL-MIMO) promises to provide ultrahigh data rates in the millimeter-wave (mmWave) and Terahertz (THz) spectrum. However, the spherical-wavefront wireless transmission caused by the large-aperture array presents huge challenges for channel state information (CSI) acquisition and beamforming. Two independent parameters (physical angles and transmission distance) should be simultaneously considered in XL-MIMO beamforming, which brings severe overhead consumption and beamforming degradation. To address this problem, we exploit the near-field channel characteristic and propose two low-overhead hierarchical beam training schemes for near-field XL-MIMO systems. Firstly, we project the near-field channel into the spatial-angular domain and the slope-intercept domain to capture detailed representations. Then we point out three critical criteria for XL-MIMO hierarchical beam training. Secondly, a novel spatial-chirp beam-aided codebook and corresponding hierarchical update policy are proposed. Thirdly, given the imperfect coverage and overlapping of spatial-chirp beams, we further design an enhanced hierarchical training codebook via manifold optimization and alternative minimization. Theoretical analyses and numerical simulations are also presented to verify the superior performance in beamforming and training overhead.
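The abstract notes that near-field beamforming depends on both angle and distance because the wavefront is spherical. The sketch below illustrates that point with a textbook uniform linear array model. It is not the paper's codebook design, and the geometry, parameter values, and function names are assumptions made for illustration. The near-field response uses the exact distance from the source to each element, while the far-field response keeps only the planar (angle-dependent) term.

```python
import cmath
import math


def element_positions(n, spacing):
    """Positions (in metres) of n array elements centred on the origin."""
    return [(i - (n - 1) / 2) * spacing for i in range(n)]


def near_field_vector(n, spacing, wavelength, angle, distance):
    """Spherical-wave array response: phase follows the exact element-to-source distance."""
    out = []
    for y in element_positions(n, spacing):
        r_n = math.sqrt(distance**2 + y**2 - 2 * distance * y * math.sin(angle))
        out.append(cmath.exp(-2j * math.pi * (r_n - distance) / wavelength))
    return out


def far_field_vector(n, spacing, wavelength, angle):
    """Planar-wave array response: phase depends on the angle only."""
    return [
        cmath.exp(2j * math.pi * y * math.sin(angle) / wavelength)
        for y in element_positions(n, spacing)
    ]


def max_difference(u, v):
    """Largest element-wise magnitude difference between two responses."""
    return max(abs(a - b) for a, b in zip(u, v))


# Assumed toy setup: 64 elements, 1 cm wavelength, half-wavelength spacing.
n, wavelength, angle = 64, 0.01, 0.3
spacing = wavelength / 2
far = far_field_vector(n, spacing, wavelength, angle)
gap_close = max_difference(near_field_vector(n, spacing, wavelength, angle, 1.0), far)
gap_distant = max_difference(near_field_vector(n, spacing, wavelength, angle, 1e6), far)
```

At a source distance of 1 m the two responses differ substantially, whereas at a very large distance they coincide, which is why a far-field codebook indexed by angle alone is not sufficient in the near field.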

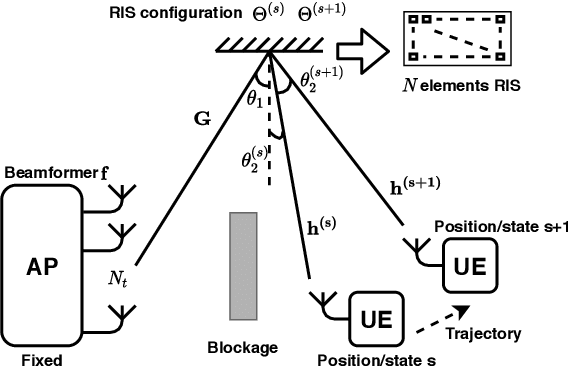

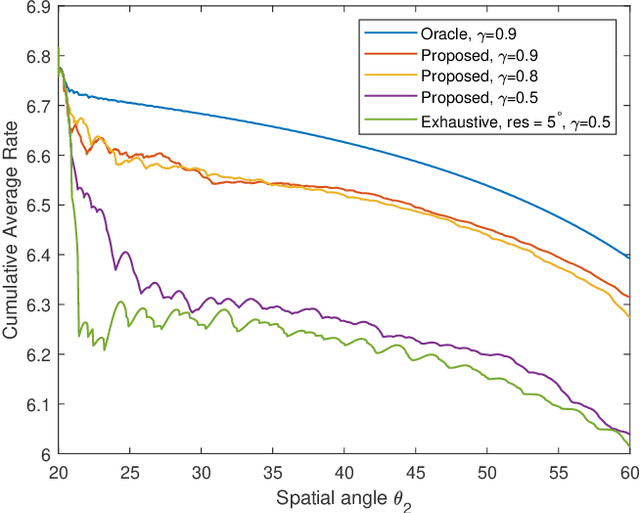

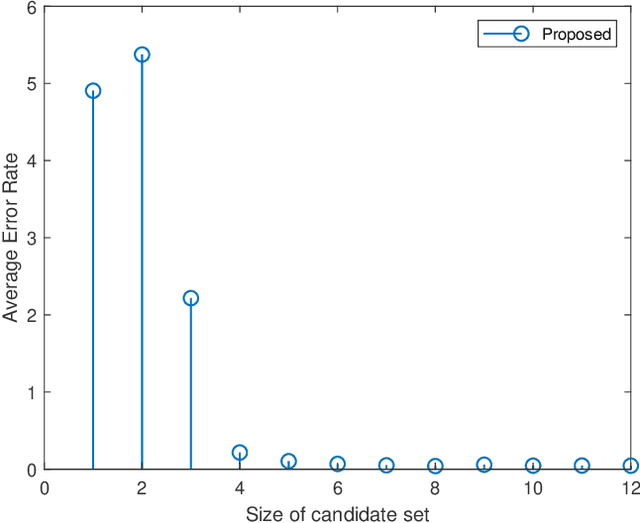

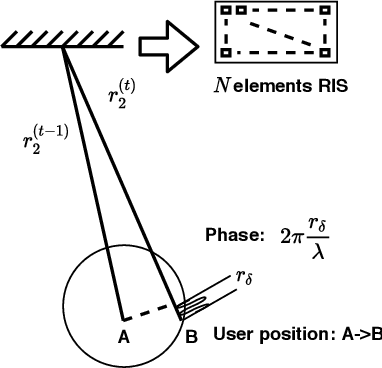

Fast Beam Tracking for Reconfigurable Intelligent Surface Assisted Mobile mmWave Networks

Feb 22, 2021

Abstract:Millimeter wave (mmWave) communications are vulnerable to blockages and node mobility due to the highly directional signal beams. The emerging Reconfigurable Intelligent Surface (RIS) technique can effectively mitigate the blockage problem by exploiting the non-line-of-sight (NLOS) path, where beam switching is realized by digitally configuring the phases of the RIS elements. To date, most efforts have focused on the stationary scenario. However, when considering node mobility, beam tracking algorithms designed specifically for RIS are needed in order to maintain the NLOS link. In this paper, a fast RIS-based beam tracking algorithm is developed for a mmWave system with mobile users by shifting part of the large signaling time into computation at the base station. Specifically, the differential form of the optimal RIS configuration is exploited as the updating beam tracking parameter to avoid a complex channel estimation procedure. The RIS-based beam tracking problem is then transformed into an optimization problem whose solution is found by a calculation-based search. Finally, by training on a small candidate set, RIS-based beam tracking is realized. The effectiveness and efficiency of the proposed RIS-based beam tracking algorithm are evaluated by simulations. The results show that the proposed algorithm achieves near-optimal performance with dramatic savings in signaling time.
