Yue He

A Knowledge-Informed Pretrained Model for Causal Discovery

Mar 21, 2026Abstract:Causal discovery has been widely studied, yet many existing methods rely on strong assumptions or fall into two extremes: either depending on costly interventional signals or partial ground truth as strong priors, or adopting purely data driven paradigms with limited guidance, which hinders practical deployment. Motivated by real-world scenarios where only coarse domain knowledge is available, we propose a knowledge-informed pretrained model for causal discovery that integrates weak prior knowledge as a principled middle ground. Our model adopts a dual source encoder-decoder architecture to process observational data in a knowledge-informed way. We design a diverse pretraining dataset and a curriculum learning strategy that smoothly adapts the model to varying prior strengths across mechanisms, graph densities, and variable scales. Extensive experiments on in-distribution, out-of distribution, and real-world datasets demonstrate consistent improvements over existing baselines, with strong robustness and practical applicability.

Reg-TTR, Test-Time Refinement for Fast, Robust and Accurate Image Registration

Jan 27, 2026Abstract:Traditional image registration methods are robust but slow due to their iterative nature. While deep learning has accelerated inference, it often struggles with domain shifts. Emerging registration foundation models offer a balance of speed and robustness, yet typically cannot match the peak accuracy of specialized models trained on specific datasets. To mitigate this limitation, we propose Reg-TTR, a test-time refinement framework that synergizes the complementary strengths of both deep learning and conventional registration techniques. By refining the predictions of pre-trained models at inference, our method delivers significantly improved registration accuracy at a modest computational cost, requiring only 21% additional inference time (0.56s). We evaluate Reg-TTR on two distinct tasks and show that it achieves state-of-the-art (SOTA) performance while maintaining inference speeds close to previous deep learning methods. As foundation models continue to emerge, our framework offers an efficient strategy to narrow the performance gap between registration foundation models and SOTA methods trained on specialized datasets. The source code will be publicly available following the acceptance of this work.

FMIR, a foundation model-based Image Registration Framework for Robust Image Registration

Jan 24, 2026Abstract:Deep learning has revolutionized medical image registration by achieving unprecedented speeds, yet its clinical application is hindered by a limited ability to generalize beyond the training domain, a critical weakness given the typically small scale of medical datasets. In this paper, we introduce FMIR, a foundation model-based registration framework that overcomes this limitation.Combining a foundation model-based feature encoder for extracting anatomical structures with a general registration head, and trained with a channel regularization strategy on just a single dataset, FMIR achieves state-of-the-art(SOTA) in-domain performance while maintaining robust registration on out-of-domain images.Our approach demonstrates a viable path toward building generalizable medical imaging foundation models with limited resources. The code is available at https://github.com/Monday0328/FMIR.git.

Generating Risky Samples with Conformity Constraints via Diffusion Models

Dec 21, 2025Abstract:Although neural networks achieve promising performance in many tasks, they may still fail when encountering some examples and bring about risks to applications. To discover risky samples, previous literature attempts to search for patterns of risky samples within existing datasets or inject perturbation into them. Yet in this way the diversity of risky samples is limited by the coverage of existing datasets. To overcome this limitation, recent works adopt diffusion models to produce new risky samples beyond the coverage of existing datasets. However, these methods struggle in the conformity between generated samples and expected categories, which could introduce label noise and severely limit their effectiveness in applications. To address this issue, we propose RiskyDiff that incorporates the embeddings of both texts and images as implicit constraints of category conformity. We also design a conformity score to further explicitly strengthen the category conformity, as well as introduce the mechanisms of embedding screening and risky gradient guidance to boost the risk of generated samples. Extensive experiments reveal that RiskyDiff greatly outperforms existing methods in terms of the degree of risk, generation quality, and conformity with conditioned categories. We also empirically show the generalization ability of the models can be enhanced by augmenting training data with generated samples of high conformity.

ODP-Bench: Benchmarking Out-of-Distribution Performance Prediction

Oct 31, 2025Abstract:Recently, there has been gradually more attention paid to Out-of-Distribution (OOD) performance prediction, whose goal is to predict the performance of trained models on unlabeled OOD test datasets, so that we could better leverage and deploy off-the-shelf trained models in risk-sensitive scenarios. Although progress has been made in this area, evaluation protocols in previous literature are inconsistent, and most works cover only a limited number of real-world OOD datasets and types of distribution shifts. To provide convenient and fair comparisons for various algorithms, we propose Out-of-Distribution Performance Prediction Benchmark (ODP-Bench), a comprehensive benchmark that includes most commonly used OOD datasets and existing practical performance prediction algorithms. We provide our trained models as a testbench for future researchers, thus guaranteeing the consistency of comparison and avoiding the burden of repeating the model training process. Furthermore, we also conduct in-depth experimental analyses to better understand their capability boundary.

LimiX: Unleashing Structured-Data Modeling Capability for Generalist Intelligence

Sep 03, 2025

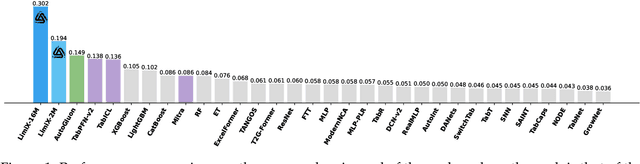

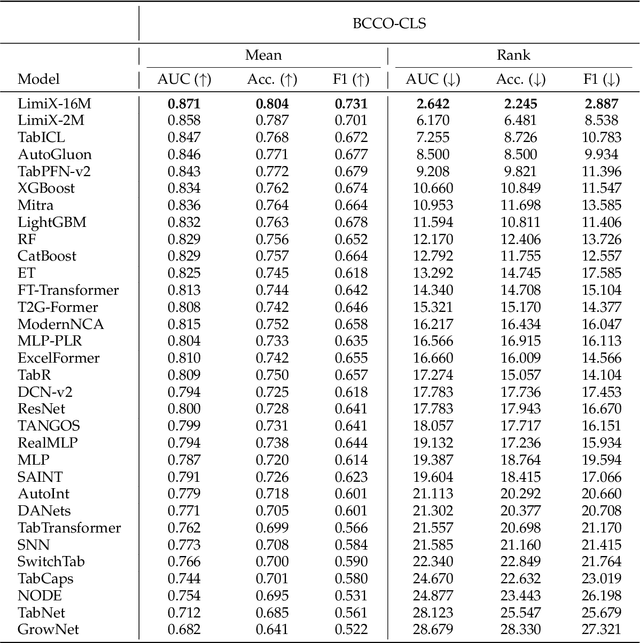

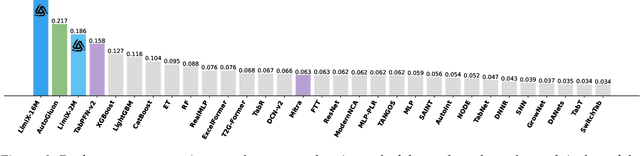

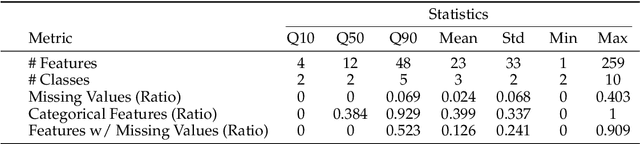

Abstract:We argue that progress toward general intelligence requires complementary foundation models grounded in language, the physical world, and structured data. This report presents LimiX, the first installment of our large structured-data models (LDMs). LimiX treats structured data as a joint distribution over variables and missingness, thus capable of addressing a wide range of tabular tasks through query-based conditional prediction via a single model. LimiX is pretrained using masked joint-distribution modeling with an episodic, context-conditional objective, where the model predicts for query subsets conditioned on dataset-specific contexts, supporting rapid, training-free adaptation at inference. We evaluate LimiX across 10 large structured-data benchmarks with broad regimes of sample size, feature dimensionality, class number, categorical-to-numerical feature ratio, missingness, and sample-to-feature ratios. With a single model and a unified interface, LimiX consistently surpasses strong baselines including gradient-boosting trees, deep tabular networks, recent tabular foundation models, and automated ensembles, as shown in Figure 1 and Figure 2. The superiority holds across a wide range of tasks, such as classification, regression, missing value imputation, and data generation, often by substantial margins, while avoiding task-specific architectures or bespoke training per task. All LimiX models are publicly accessible under Apache 2.0.

COUNTS: Benchmarking Object Detectors and Multimodal Large Language Models under Distribution Shifts

Apr 14, 2025Abstract:Current object detectors often suffer significant perfor-mance degradation in real-world applications when encountering distributional shifts. Consequently, the out-of-distribution (OOD) generalization capability of object detectors has garnered increasing attention from researchers. Despite this growing interest, there remains a lack of a large-scale, comprehensive dataset and evaluation benchmark with fine-grained annotations tailored to assess the OOD generalization on more intricate tasks like object detection and grounding. To address this gap, we introduce COUNTS, a large-scale OOD dataset with object-level annotations. COUNTS encompasses 14 natural distributional shifts, over 222K samples, and more than 1,196K labeled bounding boxes. Leveraging COUNTS, we introduce two novel benchmarks: O(OD)2 and OODG. O(OD)2 is designed to comprehensively evaluate the OOD generalization capabilities of object detectors by utilizing controlled distribution shifts between training and testing data. OODG, on the other hand, aims to assess the OOD generalization of grounding abilities in multimodal large language models (MLLMs). Our findings reveal that, while large models and extensive pre-training data substantially en hance performance in in-distribution (IID) scenarios, significant limitations and opportunities for improvement persist in OOD contexts for both object detectors and MLLMs. In visual grounding tasks, even the advanced GPT-4o and Gemini-1.5 only achieve 56.7% and 28.0% accuracy, respectively. We hope COUNTS facilitates advancements in the development and assessment of robust object detectors and MLLMs capable of maintaining high performance under distributional shifts.

Sample Weight Averaging for Stable Prediction

Feb 11, 2025Abstract:The challenge of Out-of-Distribution (OOD) generalization poses a foundational concern for the application of machine learning algorithms to risk-sensitive areas. Inspired by traditional importance weighting and propensity weighting methods, prior approaches employ an independence-based sample reweighting procedure. They aim at decorrelating covariates to counteract the bias introduced by spurious correlations between unstable variables and the outcome, thus enhancing generalization and fulfilling stable prediction under covariate shift. Nonetheless, these methods are prone to experiencing an inflation of variance, primarily attributable to the reduced efficacy in utilizing training samples during the reweighting process. Existing remedies necessitate either environmental labels or substantially higher time costs along with additional assumptions and supervised information. To mitigate this issue, we propose SAmple Weight Averaging (SAWA), a simple yet efficacious strategy that can be universally integrated into various sample reweighting algorithms to decrease the variance and coefficient estimation error, thus boosting the covariate-shift generalization and achieving stable prediction across different environments. We prove its rationality and benefits theoretically. Experiments across synthetic datasets and real-world datasets consistently underscore its superiority against covariate shift.

Error Slice Discovery via Manifold Compactness

Jan 31, 2025

Abstract:Despite the great performance of deep learning models in many areas, they still make mistakes and underperform on certain subsets of data, i.e. error slices. Given a trained model, it is important to identify its semantically coherent error slices that are easy to interpret, which is referred to as the error slice discovery problem. However, there is no proper metric of slice coherence without relying on extra information like predefined slice labels. Current evaluation of slice coherence requires access to predefined slices formulated by metadata like attributes or subclasses. Its validity heavily relies on the quality and abundance of metadata, where some possible patterns could be ignored. Besides, current algorithms cannot directly incorporate the constraint of coherence into their optimization objective due to the absence of an explicit coherence metric, which could potentially hinder their effectiveness. In this paper, we propose manifold compactness, a coherence metric without reliance on extra information by incorporating the data geometry property into its design, and experiments on typical datasets empirically validate the rationality of the metric. Then we develop Manifold Compactness based error Slice Discovery (MCSD), a novel algorithm that directly treats risk and coherence as the optimization objective, and is flexible to be applied to models of various tasks. Extensive experiments on the benchmark and case studies on other typical datasets demonstrate the superiority of MCSD.

Bilateral Unsymmetrical Graph Contrastive Learning for Recommendation

Mar 22, 2024

Abstract:Recent methods utilize graph contrastive Learning within graph-structured user-item interaction data for collaborative filtering and have demonstrated their efficacy in recommendation tasks. However, they ignore that the difference relation density of nodes between the user- and item-side causes the adaptability of graphs on bilateral nodes to be different after multi-hop graph interaction calculation, which limits existing models to achieve ideal results. To solve this issue, we propose a novel framework for recommendation tasks called Bilateral Unsymmetrical Graph Contrastive Learning (BusGCL) that consider the bilateral unsymmetry on user-item node relation density for sliced user and item graph reasoning better with bilateral slicing contrastive training. Especially, taking into account the aggregation ability of hypergraph-based graph convolutional network (GCN) in digging implicit similarities is more suitable for user nodes, embeddings generated from three different modules: hypergraph-based GCN, GCN and perturbed GCN, are sliced into two subviews by the user- and item-side respectively, and selectively combined into subview pairs bilaterally based on the characteristics of inter-node relation structure. Furthermore, to align the distribution of user and item embeddings after aggregation, a dispersing loss is leveraged to adjust the mutual distance between all embeddings for maintaining learning ability. Comprehensive experiments on two public datasets have proved the superiority of BusGCL in comparison to various recommendation methods. Other models can simply utilize our bilateral slicing contrastive learning to enhance recommending performance without incurring extra expenses.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge