Xujiang Zhao

Open-ended Commonsense Reasoning with Unrestricted Answer Scope

Oct 27, 2023

Abstract: Open-ended commonsense reasoning is defined as solving a commonsense question without providing 1) a short list of answer candidates and 2) a pre-defined answer scope. Conventional approaches, which formulate the commonsense question in a question-answering form or use external knowledge to learn retrieval-based methods, are less applicable in the open-ended setting due to an inherent challenge: without a pre-defined answer scope or a few candidates, open-ended commonsense reasoning entails predicting answers by searching over an extremely large search space. Moreover, most questions require implicit multi-hop reasoning, which makes the problem even more challenging. In this work, we leverage pre-trained language models to iteratively retrieve reasoning paths on the external knowledge base, which does not require task-specific supervision. The reasoning paths can help to identify the most precise answer to the commonsense question. We conduct experiments on two commonsense benchmark datasets. Compared to other approaches, our proposed method achieves better performance both quantitatively and qualitatively.

Large Language Models Can Be Good Privacy Protection Learners

Oct 03, 2023

Abstract: The proliferation of Large Language Models (LLMs) has driven considerable interest in fine-tuning them with domain-specific data to create specialized language models. Nevertheless, such domain-specific fine-tuning data often contains sensitive personally identifiable information (PII). Directly fine-tuning LLMs on this data without privacy protection poses a risk of leakage. To address this challenge, we introduce Privacy Protection Language Models (PPLM), a novel paradigm for fine-tuning LLMs that effectively injects domain-specific knowledge while safeguarding data privacy. Our work offers a theoretical analysis for model design and delves into various techniques such as corpus curation, penalty-based unlikelihood in the training loss, and instruction-based tuning. Extensive experiments across diverse datasets and scenarios demonstrate the effectiveness of our approaches. In particular, instruction tuning with both positive and negative examples stands out as a promising method, effectively protecting private data while enhancing the model's knowledge. Our work underscores the potential for Large Language Models as robust privacy protection learners.

Pursuing Counterfactual Fairness via Sequential Autoencoder Across Domains

Sep 22, 2023

Abstract: Recognizing the prevalence of domain shift as a common challenge in machine learning, various domain generalization (DG) techniques have been developed to enhance the performance of machine learning systems when dealing with out-of-distribution (OOD) data. Furthermore, in real-world scenarios, data distributions can gradually change across a sequence of domains. While current methodologies primarily focus on improving model effectiveness within these new domains, they often overlook fairness issues throughout the learning process. In response, we introduce an innovative framework called Counterfactual Fairness-Aware Domain Generalization with Sequential Autoencoder (CDSAE). This approach effectively separates environmental information and sensitive attributes from the embedded representation of classification features. This concurrent separation not only greatly improves model generalization across diverse and unfamiliar domains but also effectively addresses challenges related to unfair classification. Our strategy is rooted in the principles of causal inference to tackle these dual issues. To examine the intricate relationship between semantic information, sensitive attributes, and environmental cues, we systematically categorize exogenous uncertainty factors into four latent variables: 1) semantic information influenced by sensitive attributes, 2) semantic information unaffected by sensitive attributes, 3) environmental cues influenced by sensitive attributes, and 4) environmental cues unaffected by sensitive attributes. By incorporating fairness regularization, we exclusively employ semantic information for classification purposes. Empirical validation on synthetic and real-world datasets substantiates the effectiveness of our approach, demonstrating improved accuracy while preserving fairness in the evolving landscape of continuous domains.

Improving Open Information Extraction with Large Language Models: A Study on Demonstration Uncertainty

Sep 07, 2023

Abstract: The Open Information Extraction (OIE) task aims at extracting structured facts from unstructured text, typically in the form of (subject, relation, object) triples. Despite the potential of large language models (LLMs) like ChatGPT as general task solvers, they lag behind state-of-the-art (supervised) methods in OIE tasks due to two key issues. First, LLMs struggle to distinguish irrelevant context from relevant relations and to generate structured output due to the restrictions on fine-tuning the model. Second, LLMs generate responses autoregressively based on probability, which makes the predicted relations lack confidence. In this paper, we assess the capabilities of LLMs in improving the OIE task. In particular, we propose various in-context learning strategies to enhance LLMs' instruction-following ability and a demonstration uncertainty quantification module to enhance the confidence of the generated relations. Our experiments on three OIE benchmark datasets show that our approach holds its own against established supervised methods, both quantitatively and qualitatively.

Adaptation Speed Analysis for Fairness-aware Causal Models

Aug 31, 2023

Abstract: For example, in machine translation tasks, to achieve bidirectional translation between two languages, the source corpus is often used as the target corpus, which involves the training of two models with opposite directions. The question of which one can adapt most quickly to a domain shift is of significant importance in many fields. Specifically, consider an original distribution p that changes due to an unknown intervention, resulting in a modified distribution p*. In aligning p with p*, several factors can affect the adaptation rate, including the causal dependencies between variables in p. In real-life scenarios, however, we have to consider the fairness of the training process, and it is particularly crucial to involve a sensitive variable (bias) present between a cause and an effect variable. To explore this scenario, we examine a simple structural causal model (SCM) with a cause-bias-effect structure, where variable A acts as a sensitive variable between cause (X) and effect (Y). The two models, respectively, exhibit consistent and contrary cause-effect directions in the cause-bias-effect SCM. After conducting unknown interventions on variables within the SCM, we can simulate some kinds of domain shifts for analysis. We then compare the adaptation speeds of two models across four shift scenarios. Additionally, we prove the connection between the adaptation speeds of the two models across all interventions.

Beyond One-Model-Fits-All: A Survey of Domain Specialization for Large Language Models

May 31, 2023

Abstract: Large language models (LLMs) have significantly advanced the field of natural language processing (NLP), providing a highly useful, task-agnostic foundation for a wide range of applications. The great promise of LLMs as general task solvers has motivated people to extend their functionality well beyond that of a "chatbot", and to use them as assistants to, or even replacements for, domain experts and tools in specific domains such as healthcare, finance, and education. However, directly applying LLMs to solve sophisticated problems in specific domains meets many hurdles, caused by the heterogeneity of domain data, the sophistication of domain knowledge, the uniqueness of domain objectives, and the diversity of the constraints (e.g., various social norms, cultural conformity, religious beliefs, and ethical standards in the domain applications). To fill this gap, a rapidly growing body of research and practice on the domain specialization of LLMs has emerged in very recent years, which calls for a comprehensive and systematic review to better summarize and guide this promising area. In this survey paper, first, we propose a systematic taxonomy that categorizes the LLM domain-specialization techniques based on the accessibility to LLMs and summarizes the framework for all the subcategories as well as their relations and differences to each other. We also present a comprehensive taxonomy of critical application domains that can benefit from specialized LLMs, discussing their practical significance and open challenges. Furthermore, we offer insights into the current research status and future trends in this area.

Multidimensional Uncertainty Quantification for Deep Neural Networks

Apr 20, 2023

Abstract: Deep neural networks (DNNs) have received tremendous attention and achieved great success in various applications, such as image and video analysis, natural language processing, recommendation systems, and drug discovery. However, inherent uncertainties derived from different root causes have been recognized as serious hurdles for DNNs to find robust and trustworthy solutions for real-world problems. A lack of consideration of such uncertainties may lead to unnecessary risk. For example, a self-driving car can fail to detect a human on the road. A deep learning-based medical assistant may misdiagnose cancer as a benign tumor. In this work, we study how to measure different uncertainty causes for DNNs and use them to solve diverse decision-making problems more effectively. In the first part of this thesis, we develop a general learning framework to quantify multiple types of uncertainties caused by different root causes, such as vacuity (i.e., uncertainty due to a lack of evidence) and dissonance (i.e., uncertainty due to conflicting evidence), for graph neural networks. We provide a theoretical analysis of the relationships between different uncertainty types. We further demonstrate that dissonance is most effective for misclassification detection and vacuity is most effective for Out-of-Distribution (OOD) detection. In the second part of the thesis, we study the significant impact of OOD objects on semi-supervised learning (SSL) for DNNs and develop a novel framework to improve the robustness of existing SSL algorithms against OODs. In the last part of the thesis, we create a general learning framework to quantify multiple uncertainty types for multi-label temporal neural networks. We further develop novel uncertainty fusion operators to quantify the fused uncertainty of a subsequence for early event detection.
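
As a rough illustration of the two uncertainty types named in the abstract above, the sketch below computes vacuity and dissonance from per-class evidence counts. The abstract does not give formulas, so this assumes the standard subjective-logic definitions (belief b_k = e_k / S and vacuity u = K / S with S = sum(e) + K, and dissonance built from the relative balance of belief masses); the function names are hypothetical and this is not necessarily the thesis's exact formulation.

```python
from typing import List, Tuple


def balance(b_j: float, b_k: float) -> float:
    """Relative balance of two belief masses: 1 when equal, 0 if either is zero."""
    if b_j == 0.0 or b_k == 0.0:
        return 0.0
    return 1.0 - abs(b_j - b_k) / (b_j + b_k)


def vacuity_and_dissonance(evidence: List[float]) -> Tuple[float, float]:
    """Return (vacuity, dissonance) for non-negative per-class evidence.

    Assumed (standard subjective-logic) definitions, not taken from the abstract.
    """
    num_classes = len(evidence)
    strength = sum(evidence) + num_classes      # S = sum_k (e_k + 1)
    belief = [e / strength for e in evidence]   # b_k = e_k / S
    vacuity = num_classes / strength            # u = K / S

    dissonance = 0.0
    for i, b_i in enumerate(belief):
        others = [b for j, b in enumerate(belief) if j != i]
        total = sum(others)
        if total > 0.0:
            weighted = sum(b * balance(b, b_i) for b in others)
            dissonance += b_i * weighted / total
    return vacuity, dissonance


if __name__ == "__main__":
    print(vacuity_and_dissonance([0.0, 0.0, 0.0]))    # no evidence at all
    print(vacuity_and_dissonance([10.0, 10.0, 0.0]))  # two classes in conflict
    print(vacuity_and_dissonance([20.0, 0.0, 0.0]))   # strong, consistent evidence
```

Under these assumed definitions, a prediction with no evidence has maximal vacuity, while strong but evenly split evidence has low vacuity and high dissonance, which matches the intuition behind using vacuity for OOD detection and dissonance for misclassification detection.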

Dynamic Prompting: A Unified Framework for Prompt Tuning

Mar 06, 2023

Abstract: It has been demonstrated that prompt tuning is highly effective in efficiently eliciting knowledge from language models (LMs). However, prompt tuning still lags behind fine-tuning, especially when the LMs are small. P-tuning v2 (Liu et al., 2021b) makes it comparable with fine-tuning by adding continuous prompts for every layer of the pre-trained model. However, prepending fixed soft prompts for all instances, regardless of their discrepancy, is doubtful. In particular, the inserted prompt position, length, and the representations of prompts for diversified instances through different tasks could all affect the prompt tuning performance. To fill this gap, we propose dynamic prompting (DP): the position, length, and prompt representation can all be dynamically optimized with respect to different tasks and instances. We conduct comprehensive experiments on the SuperGLUE benchmark to validate our hypothesis and demonstrate substantial improvements. We also derive a unified framework for supporting our dynamic prompting strategy. In particular, we use a simple learning network and Gumbel-Softmax for learning instance-dependent guidance. Experimental results show that simple instance-level position-aware soft prompts can improve classification accuracy by up to 6 points on average on five datasets, reducing the gap with fine-tuning. Besides, we also demonstrate its universal usefulness under full-data, few-shot, and multitask regimes. Combining them can further unleash the power of DP, narrowing the gap with fine-tuning.
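
The abstract above mentions Gumbel-Softmax as the mechanism for learning instance-dependent choices such as the prompt position. The following is a minimal, framework-free sketch of a single Gumbel-Softmax sample over candidate positions, written only to illustrate the general technique; the function name, the `position_logits` values, and the temperature are hypothetical and are not taken from the paper.

```python
import math
import random
from typing import List, Optional


def gumbel_softmax_sample(
    logits: List[float],
    temperature: float = 1.0,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Draw one relaxed one-hot sample from a categorical distribution.

    Adds Gumbel(0, 1) noise to each logit, then applies a temperature-scaled
    softmax. Lower temperatures push the result closer to a one-hot vector.
    """
    if temperature <= 0.0:
        raise ValueError("temperature must be positive")
    rng = rng or random.Random()
    eps = 1e-12  # keeps the uniform draw strictly inside (0, 1)

    noisy = []
    for logit in logits:
        u = min(max(rng.random(), eps), 1.0 - eps)
        gumbel = -math.log(-math.log(u))
        noisy.append((logit + gumbel) / temperature)

    # Numerically stable softmax over the perturbed logits.
    peak = max(noisy)
    exps = [math.exp(v - peak) for v in noisy]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    # Hypothetical scores for four candidate prompt insertion positions.
    position_logits = [0.2, 1.5, -0.3, 0.7]
    weights = gumbel_softmax_sample(position_logits, temperature=0.5,
                                    rng=random.Random(0))
    print(weights)
```

In a trainable model the same computation would typically be done with a differentiable tensor library so that gradients flow back to the logits; this plain-Python version only shows the sampling step.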

Knowledge-enhanced Neural Machine Reasoning: A Review

Feb 07, 2023

Abstract: Knowledge-enhanced neural machine reasoning has garnered significant attention as a cutting-edge yet challenging research area with numerous practical applications. Over the past few years, plenty of studies have leveraged various forms of external knowledge to augment the reasoning capabilities of deep models, tackling challenges such as effective knowledge integration, implicit knowledge mining, and problems of tractability and optimization. However, there is a dearth of a comprehensive technical review of the existing knowledge-enhanced reasoning techniques across the diverse range of application domains. This survey provides an in-depth examination of recent advancements in the field, introducing a novel taxonomy that categorizes existing knowledge-enhanced methods into two primary categories and four subcategories. We systematically discuss these methods and highlight their correlations, strengths, and limitations. Finally, we elucidate the current application domains and provide insight into promising prospects for future research.

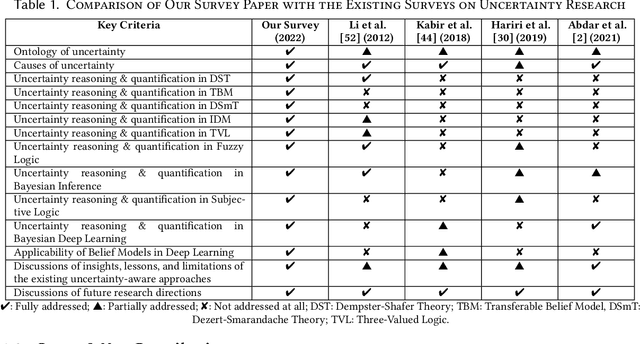

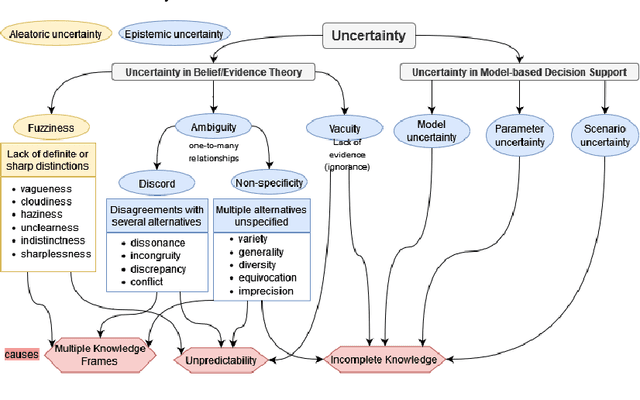

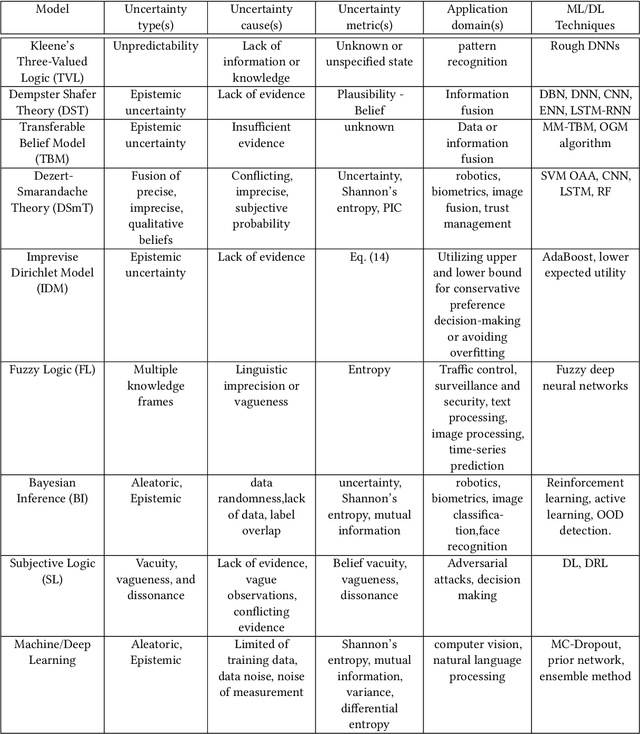

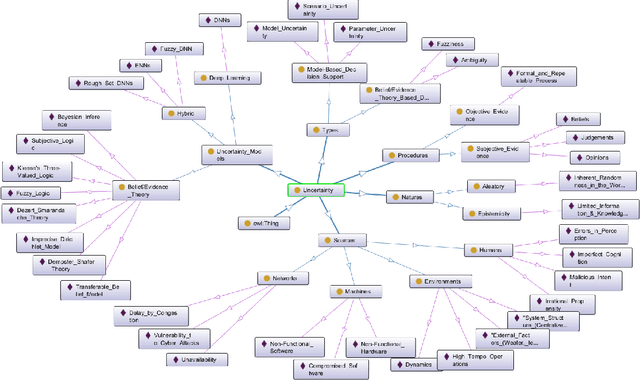

A Survey on Uncertainty Reasoning and Quantification for Decision Making: Belief Theory Meets Deep Learning

Jun 14, 2022

Abstract: An in-depth understanding of uncertainty is the first step to making effective decisions under uncertainty. Machine/deep learning (ML/DL) has been hugely leveraged to solve complex problems involved with processing high-dimensional data. However, reasoning about and quantifying different types of uncertainties to achieve effective decision-making have been much less explored in ML/DL than in other Artificial Intelligence (AI) domains. In particular, belief/evidence theories have been studied in knowledge representation and reasoning (KRR) since the 1960s to reason about and measure uncertainties to enhance decision-making effectiveness. We found that only a few studies have leveraged the mature uncertainty research in belief/evidence theories in ML/DL to tackle complex problems under different types of uncertainty. In this survey paper, we discuss several popular belief theories and their core ideas for dealing with uncertainty causes and types and quantifying them, along with a discussion of their applicability in ML/DL. In addition, we discuss three main approaches that leverage belief theories in Deep Neural Networks (DNNs), including Evidential DNNs, Fuzzy DNNs, and Rough DNNs, in terms of their uncertainty causes, types, and quantification methods along with their applicability in diverse problem domains. Based on our in-depth survey, we discuss insights, lessons learned, limitations of the current state-of-the-art bridging belief theories and ML/DL, and finally, future research directions.
