Mengke Li

Learning Order Forest for Qualitative-Attribute Data Clustering

Mar 03, 2026

Abstract:Clustering is a fundamental approach to understanding data patterns, wherein the intuitive Euclidean distance space is commonly adopted. However, this is not the case for implicit cluster distributions reflected by qualitative attribute values, e.g., the nominal values of attributes like symptoms, marital status, etc. This paper therefore discovers a tree-like distance structure that flexibly represents the local order relationship among intra-attribute qualitative values. That is, treating a value as the vertex of the tree makes it possible to capture rich order relationships between the vertex value and the others. To obtain the trees in a clustering-friendly form, a joint learning mechanism is proposed to iteratively obtain more appropriate tree structures and clusters. It turns out that the latent distance space of the whole dataset can be well represented by a forest consisting of the learned trees. Extensive experiments demonstrate that the joint learning adapts the forest to the clustering task to yield accurate results. Comparisons with 10 counterparts on 12 real benchmark datasets, with significance tests, verify the superiority of the proposed method.

* Accepted to ECAI2024

One-Shot Hierarchical Federated Clustering

Jan 10, 2026

Abstract:Driven by the growth of Web-scale decentralized services, Federated Clustering (FC) aims to extract knowledge from heterogeneous clients in an unsupervised manner while preserving the clients' privacy. This has emerged as a significant challenge due to the lack of label guidance and the Non-Independent and Identically Distributed (non-IID) nature of clients. In real scenarios such as personalized recommendation and cross-device user profiling, the global cluster may be fragmented and distributed among different clients, and the clusters may exist at different granularities or even be nested. Although Hierarchical Clustering (HC) is considered promising for exploring such distributions, its sophisticated recursive clustering process makes it more computationally expensive and vulnerable to privacy exposure, so it remains relatively unexplored under the federated learning scenario. This paper introduces an efficient one-shot hierarchical FC framework that performs client-end distribution exploration and server-end distribution aggregation through one-way prototype-level communication from clients to the server. A fine partition mechanism is developed to generate successive clusterlets that describe the complex landscape of the clients' clusters. Then, a multi-granular learning mechanism on the server is proposed to fuse the clusterlets, even when they have inconsistent granularities generated from different clients. It turns out that the complex cluster distributions across clients can be efficiently explored, and extensive experiments comparing against state-of-the-art methods on ten public datasets demonstrate the superiority of the proposed method.
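
The one-way, prototype-level communication pattern described in this abstract can be illustrated with a minimal sketch. It is not the paper's method: plain k-means stands in for both the fine partition mechanism and the multi-granular fusion, and the names `kmeans`, `one_shot_federated_clustering`, `local_k`, and `global_k` are hypothetical.

```python
import numpy as np

def kmeans(x, k, rng, iters=20):
    """Plain Lloyd's k-means; a stand-in for the paper's unspecified mechanisms."""
    centers = x[rng.choice(len(x), size=k, replace=False)]  # fancy indexing copies
    for _ in range(iters):
        dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        assign = dist.argmin(1)
        for j in range(k):
            if np.any(assign == j):          # keep the old center if a cluster empties
                centers[j] = x[assign == j].mean(0)
    return centers

def one_shot_federated_clustering(client_data, local_k, global_k, rng):
    # Client side: each client summarizes its data as local_k prototypes.
    uploads = [kmeans(x, local_k, rng) for x in client_data]
    # Single upload round: only prototypes reach the server, never raw samples.
    prototypes = np.vstack(uploads)
    # Server side: cluster the pooled prototypes into global_k groups.
    return kmeans(prototypes, global_k, rng)

# Usage: three non-IID clients holding parts of two global clusters.
rng = np.random.default_rng(0)
a = rng.normal(0.0, 0.5, size=(60, 2))
b = rng.normal(10.0, 0.5, size=(60, 2))
clients = [a[:30], b[:30], np.vstack([a[30:], b[30:]])]
global_centers = one_shot_federated_clustering(clients, local_k=4, global_k=2, rng=rng)
```

Using more prototypes per client than the expected number of global clusters (`local_k > global_k` here) loosely mirrors the idea of over-partitioning into clusterlets before fusing them on the server.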

Break the Tie: Learning Cluster-Customized Category Relationships for Categorical Data Clustering

Nov 12, 2025

Abstract:Categorical attributes with qualitative values are ubiquitous in cluster analysis of real datasets. Unlike numerical attributes, which have the Euclidean distance, categorical attributes lack well-defined relationships among their possible values (also called categories), which hampers the exploration of compact categorical data clusters. Although most attempts focus on developing appropriate distance metrics, they typically assume a fixed topological relationship between categories when learning distance metrics, which limits their adaptability to varying cluster structures and often leads to suboptimal clustering performance. This paper, therefore, breaks the intrinsic relationship tie of attribute categories and learns customized distance metrics suitable for flexibly and accurately revealing various cluster distributions. As a result, the fitting ability of the clustering algorithm is significantly enhanced, benefiting from the learnable category relationships. Moreover, the learned category relationships are proved to be Euclidean distance metric-compatible, enabling a seamless extension to mixed datasets that include both numerical and categorical attributes. Comparative experiments on 12 real benchmark datasets with significance tests show the superior clustering accuracy of the proposed method, with an average ranking of 1.25, which is significantly better than the 5.21 ranking of the current best-performing method.

FATE: A Prompt-Tuning-Based Semi-Supervised Learning Framework for Extremely Limited Labeled Data

Apr 14, 2025

Abstract:Semi-supervised learning (SSL) has achieved significant progress by leveraging both labeled data and unlabeled data. Existing SSL methods overlook a common real-world scenario in which labeled data is extremely scarce, potentially as limited as a single labeled sample in the dataset. General SSL approaches struggle to train effectively from scratch under such constraints, while methods utilizing pre-trained models often fail to find an optimal balance between leveraging limited labeled data and abundant unlabeled data. To address this challenge, we propose Firstly Adapt, Then catEgorize (FATE), a novel SSL framework tailored for scenarios with extremely limited labeled data. At its core, FATE is a two-stage prompt tuning paradigm that exploits unlabeled data to compensate for scarce supervision signals and then transfers to downstream tasks. Concretely, FATE first adapts a pre-trained model to the feature distribution of downstream data using large volumes of unlabeled samples in an unsupervised manner. It then applies an SSL method specifically designed for pre-trained models to complete the final classification task. FATE is designed to be compatible with both vision and vision-language pre-trained models. Extensive experiments demonstrate that FATE effectively mitigates challenges arising from the scarcity of labeled samples in SSL, achieving an average performance improvement of 33.74% across seven benchmarks compared to state-of-the-art SSL methods. Code is available at https://anonymous.4open.science/r/Semi-supervised-learning-BA72.

Classifying Long-tailed and Label-noise Data via Disentangling and Unlearning

Mar 14, 2025

Abstract:In real-world datasets, the challenges of long-tailed distributions and noisy labels often coexist, posing obstacles to model training and performance. Existing studies on long-tailed noisy label learning (LTNLL) typically assume that the generation of noisy labels is independent of the long-tailed distribution, which may not be true from a practical perspective. In real-world situations, we observe that tail-class samples are more likely to be mislabeled as head classes, exacerbating the original degree of imbalance. We call this phenomenon "tail-to-head (T2H)" noise. T2H noise severely degrades model performance by polluting the head classes and forcing the model to learn the tail samples as head. To address this challenge, we investigate the dynamic misleading process of the noisy labels and propose a novel method called Disentangling and Unlearning for Long-tailed and Label-noisy data (DULL). It first employs Inner-Feature Disentangling (IFD) to disentangle features internally. Based on this, Inner-Feature Partial Unlearning (IFPU) is then applied to weaken and unlearn incorrect feature regions correlated with wrong classes. This method prevents the model from being misled by noisy labels, enhancing the model's robustness against noise. To provide a controlled experimental environment, we further propose a new noise addition algorithm to simulate T2H noise. Extensive experiments on both simulated and real-world datasets demonstrate the effectiveness of our proposed method.
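
The abstract does not specify the noise addition algorithm, so the following is only an illustrative sketch of what tail-to-head label noise could look like. The flipping rule (each sample is flipped with probability `noise_rate` to a strictly more frequent class, chosen in proportion to class size) and the function name `add_tail_to_head_noise` are assumptions, not the paper's procedure.

```python
import numpy as np

def add_tail_to_head_noise(labels, noise_rate, rng):
    """Flip labels toward more frequent classes (hypothetical T2H simulation)."""
    labels = np.asarray(labels)
    counts = np.bincount(labels)                      # class sizes before any flipping
    noisy = labels.copy()
    for i, y in enumerate(labels):
        heads = np.flatnonzero(counts > counts[y])    # classes more frequent than y
        if heads.size == 0 or rng.random() >= noise_rate:
            continue                                  # largest class, or sample kept clean
        probs = counts[heads] / counts[heads].sum()   # larger classes attract more flips
        noisy[i] = rng.choice(heads, p=probs)
    return noisy

# Usage: a small long-tailed label set with three classes.
rng = np.random.default_rng(0)
clean = np.array([0] * 100 + [1] * 20 + [2] * 5)
noisy = add_tail_to_head_noise(clean, noise_rate=0.5, rng=rng)
```

Because labels only ever move to larger classes under this rule, the largest class can only grow and the smallest can only shrink, which matches the abstract's observation that T2H noise exacerbates the original imbalance.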

Iterative Prompt Relocation for Distribution-Adaptive Visual Prompt Tuning

Mar 10, 2025

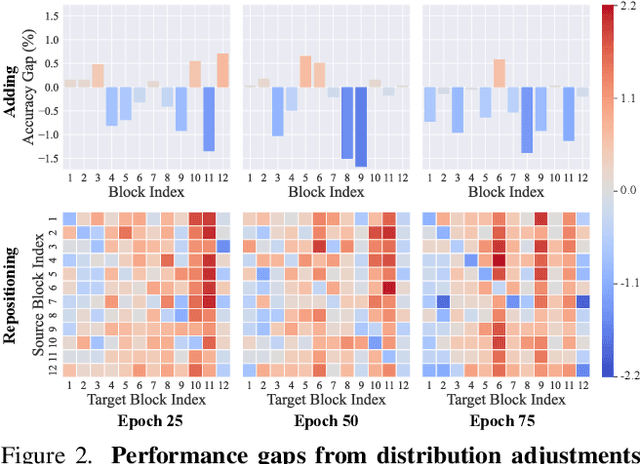

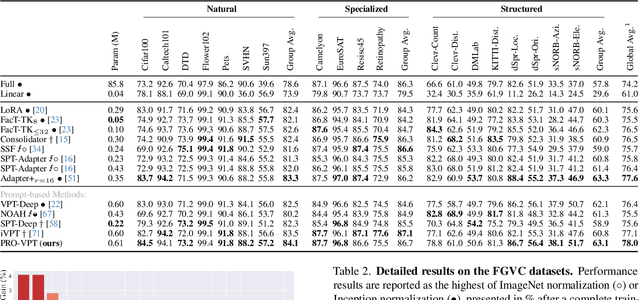

Abstract:Visual prompt tuning (VPT) provides an efficient and effective solution for adapting pre-trained models to various downstream tasks by incorporating learnable prompts. However, most prior art indiscriminately applies a fixed prompt distribution across different tasks, neglecting that the importance of each block varies with the task. In this paper, we investigate adaptive distribution optimization (ADO) by addressing two key questions: (1) How to appropriately and formally define ADO, and (2) How to design an adaptive distribution strategy guided by this definition? Through in-depth analysis, we provide an affirmative answer that properly adjusting the distribution significantly improves VPT performance, and further uncover a key insight that a nested relationship exists between ADO and VPT. Based on these findings, we propose a new VPT framework, termed PRO-VPT (iterative Prompt RelOcation-based VPT), which adaptively adjusts the distribution by building upon a nested optimization formulation. Specifically, we develop a prompt relocation strategy for ADO derived from this formulation, comprising two optimization steps: identifying and pruning idle prompts, followed by determining the optimal blocks for their relocation. By iteratively performing prompt relocation and VPT, our proposal adaptively learns the optimal prompt distribution, thereby unlocking the full potential of VPT. Extensive experiments demonstrate that our proposal significantly outperforms state-of-the-art VPT methods, e.g., PRO-VPT surpasses VPT by 1.6% average accuracy, leading prompt-based methods to state-of-the-art performance on the VTAB-1k benchmark. The code is available at https://github.com/ckshang/PRO-VPT.

Asynchronous Federated Clustering with Unknown Number of Clusters

Dec 29, 2024

Abstract:Federated Clustering (FC) is crucial to mining knowledge from unlabeled non-Independent and Identically Distributed (non-IID) data provided by multiple clients while preserving their privacy. Most existing attempts learn cluster distributions at local clients, and then securely pass the desensitized information to the server for aggregation. However, some tricky but common FC problems are still relatively unexplored, including the heterogeneity in terms of clients' communication capacity and the unknown number of proper clusters $k^*$. To further bridge the gap between FC and real application scenarios, this paper first shows that the clients' communication asynchrony and the unknown $k^*$ are complex coupled problems, and then proposes an Asynchronous Federated Cluster Learning (AFCL) method accordingly. It spreads an excessive number of seed points to the clients as a learning medium and coordinates them across the clients to form a consensus. To alleviate the distribution imbalance accumulated due to the unforeseen asynchronous uploading from the heterogeneous clients, we also design a balancing mechanism for seed updating. As a result, the seeds gradually adapt to each other to reveal a proper number of clusters. Extensive experiments demonstrate the efficacy of AFCL.

Attributed Graph Clustering via Generalized Quaternion Representation Learning

Nov 22, 2024

Abstract:Clustering complex data in the form of attributed graphs has attracted increasing attention, where appropriate graph representation is a critical prerequisite for accurate cluster analysis. However, the Graph Convolutional Network will homogenize the representation of graph nodes due to the well-known over-smoothing effect. This limits the network architecture to a shallow one, losing the ability to capture the critical global distribution information for clustering. Therefore, we propose a generalized graph auto-encoder network, which introduces quaternion operations to the encoders to achieve efficient structured feature representation learning without incurring deeper network and larger-scale parameters. The generalization of our method lies in the following two aspects: 1) connecting the quaternion operation naturally suitable for four feature components with graph data of arbitrary attribute dimensions, and 2) introducing a generalized graph clustering objective as a loss term to obtain clustering-friendly representations without requiring a pre-specified number of clusters $k$. It turns out that the representations of nodes learned by the proposed Graph Clustering based on Generalized Quaternion representation learning (GCGQ) are more discriminative, containing global distribution information, and are more general, suiting downstream clustering under different $k$s. Extensive experiments including significance tests, ablation studies, and qualitative results, illustrate the superiority of GCGQ. The source code is temporarily opened at https://anonymous.4open.science/r/ICLR-25-No7181-codes.

Improving Visual Prompt Tuning by Gaussian Neighborhood Minimization for Long-Tailed Visual Recognition

Oct 28, 2024

Abstract:Long-tail learning has garnered widespread attention and achieved significant progress in recent times. However, even with pre-trained prior knowledge, models still exhibit weaker generalization performance on tail classes. The promising Sharpness-Aware Minimization (SAM) can effectively improve the generalization capability of models by seeking out flat minima in the loss landscape. However, this comes at the cost of doubling the computational time, since the update rule of SAM necessitates two consecutive (non-parallelizable) forward and backward passes at each step. To address this issue, we propose a novel method called Random SAM prompt tuning (RSAM-PT) to improve the model generalization, requiring only one-step gradient computation at each step. Specifically, we search for the gradient descent direction within a random neighborhood of the parameters during each gradient update. To amplify the impact of tail-class samples and avoid overfitting, we employ the deferred re-weight scheme to increase the significance of tail-class samples. The classification accuracy of long-tailed data can be significantly improved by the proposed RSAM-PT, particularly for tail classes. RSAM-PT achieves the state-of-the-art performance of 90.3%, 76.5%, and 50.1% on benchmark datasets CIFAR100-LT (IF 100), iNaturalist 2018, and Places-LT, respectively. The source code is temporarily available at https://github.com/Keke921/GNM-PT.
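
The cost difference described in this abstract can be illustrated on a toy problem. The sketch below contrasts a standard SAM step (two sequential gradient evaluations) with a step that evaluates a single gradient at a randomly perturbed point. It is not the RSAM-PT implementation: the quadratic loss, the Gaussian perturbation with scale `sigma`, and all function names are assumptions made for illustration.

```python
import numpy as np

def loss_and_grad(w):
    """Toy quadratic loss 0.5 * ||w||^2 and its gradient (stand-in for a real model)."""
    return 0.5 * float(w @ w), w.copy()

def sam_step(w, lr=0.1, rho=0.05):
    """Standard SAM: two sequential gradient evaluations per step."""
    _, g = loss_and_grad(w)                       # 1st gradient: find the ascent direction
    eps = rho * g / (np.linalg.norm(g) + 1e-12)   # approximate worst-case perturbation
    _, g_adv = loss_and_grad(w + eps)             # 2nd gradient: at the perturbed point
    return w - lr * g_adv

def random_neighborhood_step(w, rng, lr=0.1, sigma=0.05):
    """One gradient evaluation per step, taken at a Gaussian-perturbed point."""
    eps = rng.normal(0.0, sigma, size=w.shape)    # random neighbor, no extra gradient
    _, g = loss_and_grad(w + eps)
    return w - lr * g

# Usage: run both update rules from the same starting point.
rng = np.random.default_rng(0)
w_sam = np.array([1.0, -2.0])
w_rnd = w_sam.copy()
for _ in range(200):
    w_sam = sam_step(w_sam)
    w_rnd = random_neighborhood_step(w_rnd, rng)
```

In `sam_step` the second gradient depends on the result of the first, which is why the two passes cannot run in parallel; the random variant removes that dependency by drawing the perturbation without any gradient information.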

Personalized Federated Learning on Heterogeneous and Long-Tailed Data via Expert Collaborative Learning

Aug 04, 2024

Abstract:Personalized Federated Learning (PFL) aims to acquire customized models for each client without disclosing raw data by leveraging the collective knowledge of distributed clients. However, the data collected in real-world scenarios is likely to follow a long-tailed distribution. For example, in the medical domain, general health notes are usually far more numerous than notes specifically related to certain diseases. The presence of long-tailed data can significantly degrade the performance of PFL models. Additionally, due to the diverse environments in which each client operates, data heterogeneity is also a classic challenge in federated learning. In this paper, we explore the joint problem of global long-tailed distribution and data heterogeneity in PFL and propose a method called Expert Collaborative Learning (ECL) to tackle this problem. Specifically, each client has multiple experts, and each expert has a different training subset, which ensures that each class, especially the minority classes, receives sufficient training. Multiple experts collaborate synergistically to produce the final prediction output. Without special bells and whistles, the vanilla ECL outperforms other state-of-the-art PFL methods on several benchmark datasets under different degrees of data heterogeneity and long-tailed distribution.
