Lu Sun

A^3: Towards Advertising Aesthetic Assessment

Mar 25, 2026Abstract:Advertising images significantly impact commercial conversion rates and brand equity, yet current evaluation methods rely on subjective judgments, lacking scalability, standardized criteria, and interpretability. To address these challenges, we present A^3 (Advertising Aesthetic Assessment), a comprehensive framework encompassing four components: a paradigm (A^3-Law), a dataset (A^3-Dataset), a multimodal large language model (A^3-Align), and a benchmark (A^3-Bench). Central to A^3 is a theory-driven paradigm, A^3-Law, comprising three hierarchical stages: (1) Perceptual Attention, evaluating perceptual image signals for their ability to attract attention; (2) Formal Interest, assessing formal composition of image color and spatial layout in evoking interest; and (3) Desire Impact, measuring desire evocation from images and their persuasive impact. Building on A^3-Law, we construct A^3-Dataset with 120K instruction-response pairs from 30K advertising images, each richly annotated with multi-dimensional labels and Chain-of-Thought (CoT) rationales. We further develop A^3-Align, trained under A^3-Law with CoT-guided learning on A^3-Dataset. Extensive experiments on A^3-Bench demonstrate that A^3-Align achieves superior alignment with A^3-Law compared to existing models, and this alignment generalizes well to quality advertisement selection and prescriptive advertisement critique, indicating its potential for broader deployment. Dataset, code, and models can be found at: https://github.com/euleryuan/A3-Align.

SafeSci: Safety Evaluation of Large Language Models in Science Domains and Beyond

Mar 02, 2026Abstract:The success of large language models (LLMs) in scientific domains has heightened safety concerns, prompting numerous benchmarks to evaluate their scientific safety. Existing benchmarks often suffer from limited risk coverage and a reliance on subjective evaluation. To address these problems, we introduce SafeSci, a comprehensive framework for safety evaluation and enhancement in scientific contexts. SafeSci comprises SafeSciBench, a multi-disciplinary benchmark with 0.25M samples, and SafeSciTrain, a large-scale dataset containing 1.5M samples for safety enhancement. SafeSciBench distinguishes between safety knowledge and risk to cover extensive scopes and employs objective metrics such as deterministically answerable questions to mitigate evaluation bias. We evaluate 24 advanced LLMs, revealing critical vulnerabilities in current models. We also observe that LLMs exhibit varying degrees of excessive refusal behaviors on safety-related issues. For safety enhancement, we demonstrate that fine-tuning on SafeSciTrain significantly enhances the safety alignment of models. Finally, we argue that knowledge is a double-edged sword, and determining the safety of a scientific question should depend on specific context, rather than universally categorizing it as safe or unsafe. Our work provides both a diagnostic tool and a practical resource for building safer scientific AI systems.

GhostCite: A Large-Scale Analysis of Citation Validity in the Age of Large Language Models

Feb 06, 2026Abstract:Citations provide the basis for trusting scientific claims; when they are invalid or fabricated, this trust collapses. With the advent of Large Language Models (LLMs), this risk has intensified: LLMs are increasingly used for academic writing, yet their tendency to fabricate citations (``ghost citations'') poses a systemic threat to citation validity. To quantify this threat and inform mitigation, we develop CiteVerifier, an open-source framework for large-scale citation verification, and conduct the first comprehensive study of citation validity in the LLM era through three experiments built on it. We benchmark 13 state-of-the-art LLMs on citation generation across 40 research domains, finding that all models hallucinate citations at rates from 14.23\% to 94.93\%, with significant variation across research domains. Moreover, we analyze 2.2 million citations from 56,381 papers published at top-tier AI/ML and Security venues (2020--2025), confirming that 1.07\% of papers contain invalid or fabricated citations (604 papers), with an 80.9\% increase in 2025 alone. Furthermore, we survey 97 researchers and analyze 94 valid responses after removing 3 conflicting samples, revealing a critical ``verification gap'': 41.5\% of researchers copy-paste BibTeX without checking and 44.4\% choose no-action responses when encountering suspicious references; meanwhile, 76.7\% of reviewers do not thoroughly check references and 80.0\% never suspect fake citations. Our findings reveal an accelerating crisis where unreliable AI tools, combined with inadequate human verification by researchers and insufficient peer review scrutiny, enable fabricated citations to contaminate the scientific record. We propose interventions for researchers, venues, and tool developers to protect citation integrity.

Wavelet-based Decoupling Framework for low-light Stereo Image Enhancement

Jul 16, 2025

Abstract:Low-light images suffer from complex degradation, and existing enhancement methods often encode all degradation factors within a single latent space. This leads to highly entangled features and strong black-box characteristics, making the model prone to shortcut learning. To mitigate the above issues, this paper proposes a wavelet-based low-light stereo image enhancement method with feature space decoupling. Our insight comes from the following findings: (1) Wavelet transform enables the independent processing of low-frequency and high-frequency information. (2) Illumination adjustment can be achieved by adjusting the low-frequency component of a low-light image, extracted through multi-level wavelet decomposition. Thus, by using wavelet transform the feature space is decomposed into a low-frequency branch for illumination adjustment and multiple high-frequency branches for texture enhancement. Additionally, stereo low-light image enhancement can extract useful cues from another view to improve enhancement. To this end, we propose a novel high-frequency guided cross-view interaction module (HF-CIM) that operates within high-frequency branches rather than across the entire feature space, effectively extracting valuable image details from the other view. Furthermore, to enhance the high-frequency information, a detail and texture enhancement module (DTEM) is proposed based on cross-attention mechanism. The model is trained on a dataset consisting of images with uniform illumination and images with non-uniform illumination. Experimental results on both real and synthetic images indicate that our algorithm offers significant advantages in light adjustment while effectively recovering high-frequency information. The code and dataset are publicly available at: https://github.com/Cherisherr/WDCI-Net.git.

Accelerating Non-Maximum Suppression: A Graph Theory Perspective

Sep 30, 2024

Abstract:Non-maximum suppression (NMS) is an indispensable post-processing step in object detection. With the continuous optimization of network models, NMS has become the ``last mile'' to enhance the efficiency of object detection. This paper systematically analyzes NMS from a graph theory perspective for the first time, revealing its intrinsic structure. Consequently, we propose two optimization methods, namely QSI-NMS and BOE-NMS. The former is a fast recursive divide-and-conquer algorithm with negligible mAP loss, and its extended version (eQSI-NMS) achieves optimal complexity of $\mathcal{O}(n\log n)$. The latter, concentrating on the locality of NMS, achieves an optimization at a constant level without an mAP loss penalty. Moreover, to facilitate rapid evaluation of NMS methods for researchers, we introduce NMS-Bench, the first benchmark designed to comprehensively assess various NMS methods. Taking the YOLOv8-N model on MS COCO 2017 as the benchmark setup, our method QSI-NMS provides $6.2\times$ speed of original NMS on the benchmark, with a $0.1\%$ decrease in mAP. The optimal eQSI-NMS, with only a $0.3\%$ mAP decrease, achieves $10.7\times$ speed. Meanwhile, BOE-NMS exhibits $5.1\times$ speed with no compromise in mAP.

Generalized Deepfakes Detection with Reconstructed-Blended Images and Multi-scale Feature Reconstruction Network

Dec 13, 2023

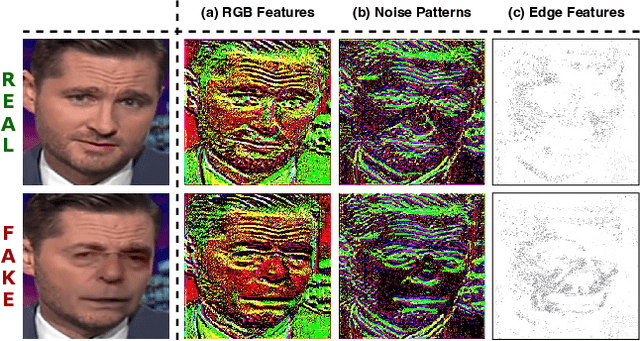

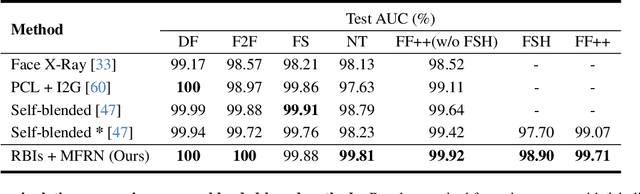

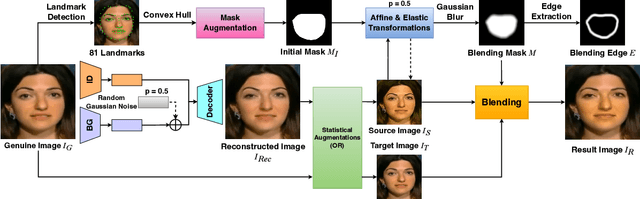

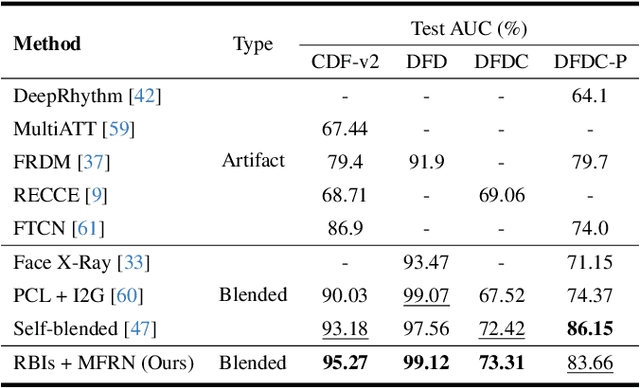

Abstract:The growing diversity of digital face manipulation techniques has led to an urgent need for a universal and robust detection technology to mitigate the risks posed by malicious forgeries. We present a blended-based detection approach that has robust applicability to unseen datasets. It combines a method for generating synthetic training samples, i.e., reconstructed blended images, that incorporate potential deepfake generator artifacts and a detection model, a multi-scale feature reconstruction network, for capturing the generic boundary artifacts and noise distribution anomalies brought about by digital face manipulations. Experiments demonstrated that this approach results in better performance in both cross-manipulation detection and cross-dataset detection on unseen data.

Face Forgery Detection Based on Facial Region Displacement Trajectory Series

Dec 07, 2022

Abstract:Deep-learning-based technologies such as deepfakes ones have been attracting widespread attention in both society and academia, particularly ones used to synthesize forged face images. These automatic and professional-skill-free face manipulation technologies can be used to replace the face in an original image or video with any target object while maintaining the expression and demeanor. Since human faces are closely related to identity characteristics, maliciously disseminated identity manipulated videos could trigger a crisis of public trust in the media and could even have serious political, social, and legal implications. To effectively detect manipulated videos, we focus on the position offset in the face blending process, resulting from the forced affine transformation of the normalized forged face. We introduce a method for detecting manipulated videos that is based on the trajectory of the facial region displacement. Specifically, we develop a virtual-anchor-based method for extracting the facial trajectory, which can robustly represent displacement information. This information was used to construct a network for exposing multidimensional artifacts in the trajectory sequences of manipulated videos that is based on dual-stream spatial-temporal graph attention and a gated recurrent unit backbone. Testing of our method on various manipulation datasets demonstrated that its accuracy and generalization ability is competitive with that of the leading detection methods.

CEMENT: Incomplete Multi-View Weak-Label Learning with Long-Tailed Labels

Jan 12, 2022

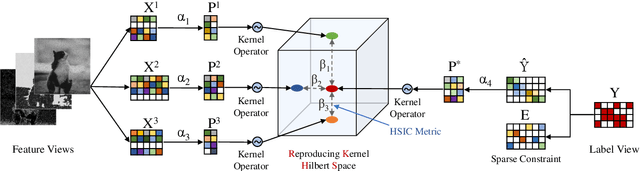

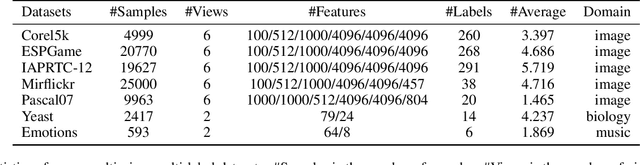

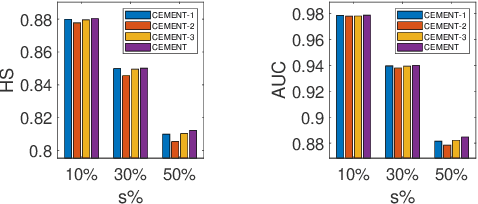

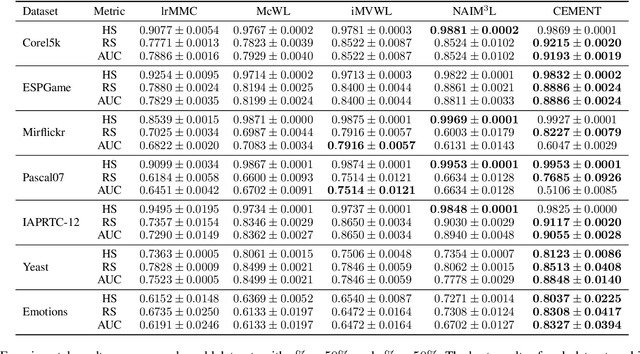

Abstract:A variety of modern applications exhibit multi-view multi-label learning, where each sample has multi-view features, and multiple labels are correlated via common views. In recent years, several methods have been proposed to cope with it and achieve much success, but still suffer from two key problems: 1) lack the ability to deal with the incomplete multi-view weak-label data, in which only a subset of features and labels are provided for each sample; 2) ignore the presence of noisy views and tail labels usually occurring in real-world problems. In this paper, we propose a novel method, named CEMENT, to overcome the limitations. For 1), CEMENT jointly embeds incomplete views and weak labels into distinct low-dimensional subspaces, and then correlates them via Hilbert-Schmidt Independence Criterion (HSIC). For 2), CEMEMT adaptively learns the weights of embeddings to capture noisy views, and explores an additional sparse component to model tail labels, making the low-rankness available in the multi-label setting. We develop an alternating algorithm to solve the proposed optimization problem. Experimental results on seven real-world datasets demonstrate the effectiveness of the proposed method.

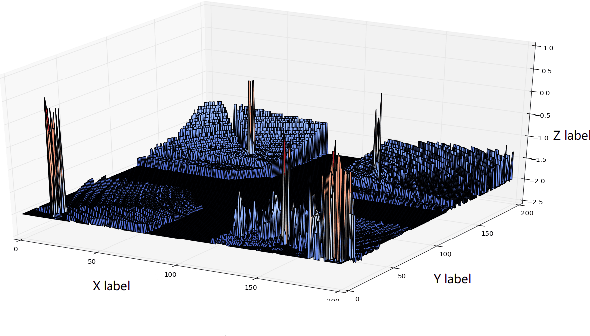

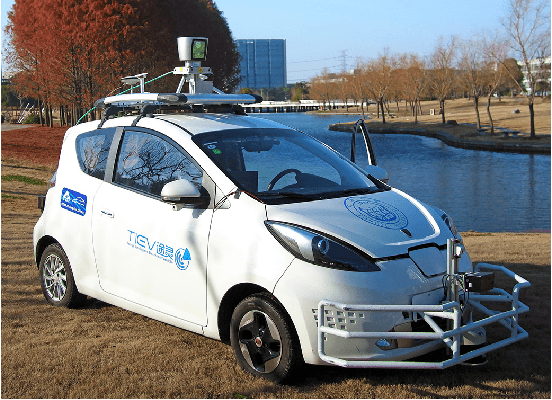

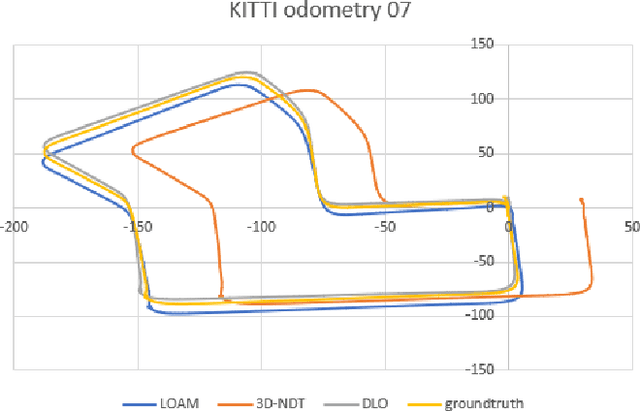

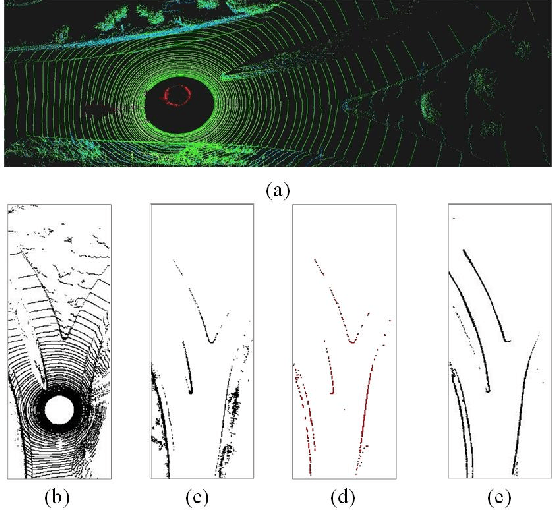

DLO: Direct LiDAR Odometry for 2.5D Outdoor Environment

Sep 15, 2018

Abstract:For autonomous vehicles, high-precision real-time localization is the guarantee of stable driving. Compared with the visual odometry (VO), the LiDAR odometry (LO) has the advantages of higher accuracy and better stability. However, 2D LO is only suitable for the indoor environment, and 3D LO has less efficiency in general. Both are not suitable for the online localization of an autonomous vehicle in an outdoor driving environment. In this paper, a direct LO method based on the 2.5D grid map is proposed. The fast semi-dense direct method proposed for VO is employed to register two 2.5D maps. Experiments show that this method is superior to both the 3D-NDT and LOAM in the outdoor environment.

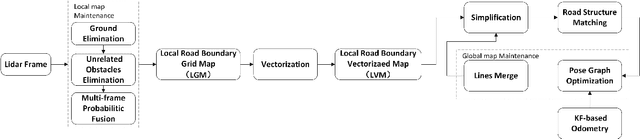

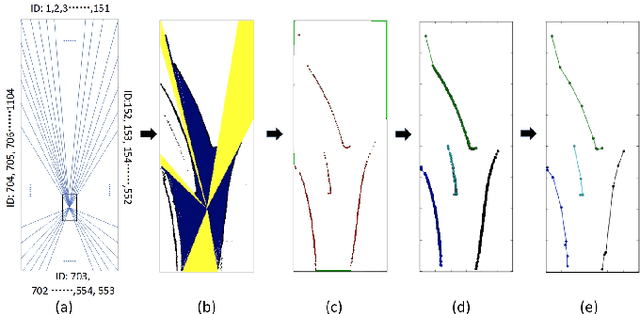

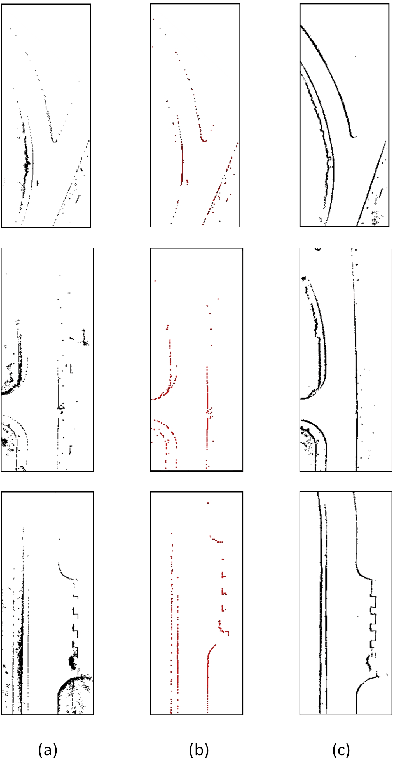

Automatic Vector-based Road Structure Mapping Using Multi-beam LiDAR

Jun 06, 2018

Abstract:In this paper, we studied a SLAM method for vector-based road structure mapping using multi-beam LiDAR. We propose to use the polyline as the primary mapping element instead of grid cell or point cloud, because the vector-based representation is precise and lightweight, and it can directly generate vector-based High-Definition (HD) driving map as demanded by autonomous driving systems. We explored: 1) the extraction and vectorization of road structures based on local probabilistic fusion. 2) the efficient vector-based matching between frames of road structures. 3) the loop closure and optimization based on the pose-graph. In this study, we took a specific road structure, the road boundary, as an example. We applied the proposed matching method in three different scenes and achieved the average absolute matching error of 0.07. We further applied the mapping system to the urban road with the length of 860 meters and achieved an average global accuracy of 0.466 m without the help of high precision GPS.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge