Li Yan

NTIRE 2026 The 3rd Restore Any Image Model (RAIM) Challenge: Professional Image Quality Assessment (Track 1)

Apr 14, 2026

Abstract:In this paper, we present an overview of the NTIRE 2026 challenge on the 3rd Restore Any Image Model in the Wild, specifically focusing on Track 1: Professional Image Quality Assessment. Conventional Image Quality Assessment (IQA) typically relies on scalar scores. By compressing complex visual characteristics into a single number, these methods fundamentally struggle to distinguish subtle differences among uniformly high-quality images. Furthermore, they fail to articulate why one image is superior, lacking the reasoning capabilities required to provide guidance for vision tasks. To bridge this gap, recent advancements in Multimodal Large Language Models (MLLMs) offer a promising paradigm. Inspired by this potential, our challenge establishes a novel benchmark exploring the ability of MLLMs to mimic human expert cognition in evaluating high-quality image pairs. Participants were tasked with overcoming critical bottlenecks in professional scenarios, centering on two primary objectives: (1) Comparative Quality Selection: reliably identifying the visually superior image within a high-quality pair; and (2) Interpretative Reasoning: generating grounded, expert-level explanations that detail the rationale behind the selection. In total, the challenge attracted nearly 200 registrations and over 2,500 submissions. The top-performing methods significantly advanced the state of the art in professional IQA. The challenge dataset is available at https://github.com/narthchin/RAIM-PIQA, and the official homepage is accessible at https://www.codabench.org/competitions/12789/.

Redefining Quality Criteria and Distance-Aware Score Modeling for Image Editing Assessment

Apr 14, 2026

Abstract:Recent advances in image editing have heightened the need for reliable Image Editing Quality Assessment (IEQA). Unlike traditional methods, IEQA requires complex reasoning over multimodal inputs and multi-dimensional assessments. Existing MLLM-based approaches often rely on human heuristic prompting, leading to two key limitations: rigid metric prompting and distance-agnostic score modeling. These issues hinder alignment with implicit human criteria and fail to capture the continuous structure of score spaces. To address this, we propose Define-and-Score Image Editing Quality Assessment (DS-IEQA), a unified framework that jointly learns evaluation criteria and score representations. Specifically, we introduce Feedback-Driven Metric Prompt Optimization (FDMPO) to automatically refine metric definitions via probabilistic feedback. Furthermore, we propose Token-Decoupled Distance Regression Loss (TDRL), which decouples numerical tokens from language modeling to explicitly model score continuity through expected distance minimization. Extensive experiments show our method's superior performance; it ranks 4th in the 2026 NTIRE X-AIGC Quality Assessment Track 2 without any additional training data.
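The idea of modeling score continuity through expected distance minimization can be illustrated with a minimal sketch. This is not the paper's TDRL implementation: the function names, the 1-to-5 score scale, and the use of absolute distance are all assumptions made for illustration. The sketch only shows why a distance-aware loss penalizes a prediction near the target less than one far from it, which a plain cross-entropy over score tokens would not do.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_distance_loss(logits, scores, target):
    """Expected absolute distance between the predicted score and the target.

    logits: one logit per candidate score token (hypothetical layout)
    scores: the numeric value each token stands for, e.g. [1, 2, 3, 4, 5]
    target: the ground-truth score
    """
    probs = softmax(logits)
    return sum(p * abs(s - target) for p, s in zip(probs, scores))


scores = [1, 2, 3, 4, 5]  # assumed score scale
# Probability mass concentrated on 4 versus on 1, with the true score at 5.
near = expected_distance_loss([0.0, 0.0, 0.0, 5.0, 0.0], scores, target=5)
far = expected_distance_loss([5.0, 0.0, 0.0, 0.0, 0.0], scores, target=5)
print(near < far)
```

Both predictions above are equally wrong under a one-hot classification view, but the expected-distance loss ranks the prediction concentrated on 4 as closer to the target 5 than the one concentrated on 1.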

Foundation Model-Driven Semantic Change Detection in Remote Sensing Imagery

Feb 14, 2026

Abstract:Remote sensing (RS) change detection methods can extract critical information on surface dynamics and are an essential means for humans to understand changes in the earth's surface and environment. Among these methods, semantic change detection (SCD) can more effectively interpret the multi-class information contained in bi-temporal RS imagery, providing semantic-level predictions that support dynamic change monitoring. However, due to the limited semantic understanding capability of existing models and the inherent complexity of SCD tasks, existing SCD methods face significant challenges in both performance and paradigm complexity. In this paper, we propose PerASCD, an SCD method driven by the RS foundation model PerA, designed to enhance multi-scale semantic understanding and overall performance. We introduce a modular Cascaded Gated Decoder (CG-Decoder) that simplifies complex SCD decoding pipelines while promoting effective multi-level feature interaction and fusion. In addition, we propose a Soft Semantic Consistency Loss (SSCLoss) to mitigate the numerical instability commonly encountered during SCD training. We further explore the applicability of multiple existing RS foundation models on the SCD task when equipped with the proposed decoder. Experimental results demonstrate that our decoder not only effectively simplifies the paradigm of SCD, but also achieves seamless adaptation across various vision encoders. Our method achieves state-of-the-art (SOTA) performance on two public benchmark datasets, validating its effectiveness. The code is available at https://github.com/SathShen/PerASCD.git.

Clustering-based Transfer Learning for Dynamic Multimodal MultiObjective Evolutionary Algorithm

Dec 22, 2025

Abstract:Dynamic multimodal multiobjective optimization presents the dual challenge of simultaneously tracking multiple equivalent Pareto optimal sets and maintaining population diversity in time-varying environments. However, existing dynamic multiobjective evolutionary algorithms often neglect solution modality, whereas static multimodal multiobjective evolutionary algorithms lack adaptability to dynamic changes. To address these challenges, this paper makes two primary contributions. First, we introduce a new benchmark suite of dynamic multimodal multiobjective test functions constructed by fusing the properties of both dynamic and multimodal optimization to establish a rigorous evaluation platform. Second, we propose a novel algorithm centered on a Clustering-based Autoencoder prediction dynamic response mechanism, which utilizes an autoencoder model to process matched clusters and generate a highly diverse initial population. Furthermore, to balance the algorithm's convergence and diversity, we integrate an adaptive niching strategy into the static optimizer. Empirical analysis on 12 instances of dynamic multimodal multiobjective test functions reveals that, compared with several state-of-the-art dynamic multiobjective evolutionary algorithms and multimodal multiobjective evolutionary algorithms, our algorithm not only preserves population diversity more effectively in the decision space but also achieves superior convergence in the objective space.

SenseCrypt: Sensitivity-guided Selective Homomorphic Encryption for Joint Federated Learning in Cross-Device Scenarios

Aug 06, 2025

Abstract:Homomorphic Encryption (HE) prevails in securing Federated Learning (FL), but suffers from high overhead and adaptation cost. Selective HE methods, which partially encrypt model parameters by a global mask, are expected to protect privacy with reduced overhead and easy adaptation. However, in cross-device scenarios with heterogeneous data and system capabilities, traditional Selective HE methods exacerbate client straggling and achieve a smaller reduction in HE overhead. Accordingly, we propose SenseCrypt, a Sensitivity-guided selective Homomorphic EnCryption framework, to adaptively balance security and HE overhead per cross-device FL client. Given the observation that model parameter sensitivity is effective for measuring clients' data distribution similarity, we first design a privacy-preserving method to cluster clients with similar data distributions. Then, we develop a scoring mechanism to deduce the straggler-free ratio of model parameters that can be encrypted by each client per cluster. Finally, for each client, we formulate and solve a multi-objective model parameter selection optimization problem, which minimizes HE overhead while maximizing model security without causing straggling. Experiments demonstrate that SenseCrypt ensures security against state-of-the-art inversion attacks, while achieving model accuracy comparable to that on IID data and reducing training time by 58.4%-88.7% compared to traditional HE methods.

Align-DA: Align Score-based Atmospheric Data Assimilation with Multiple Preferences

May 28, 2025

Abstract:Data assimilation (DA) aims to estimate the full state of a dynamical system by combining partial and noisy observations with a prior model forecast, commonly referred to as the background. In atmospheric applications, this problem is fundamentally ill-posed due to the sparsity of observations relative to the high-dimensional state space. Traditional methods address this challenge by simplifying background priors to regularize the solution, but these simplifications are empirical and require continual tuning in practice. Inspired by alignment techniques in text-to-image diffusion models, we propose Align-DA, which formulates DA as a generative process and uses reward signals to guide background priors, replacing manual tuning with data-driven alignment. Specifically, we train a score-based model in the latent space to approximate the background-conditioned prior, and align it using three complementary reward signals for DA: (1) assimilation accuracy, (2) forecast skill initialized from the assimilated state, and (3) physical adherence of the analysis fields. Experiments with multiple reward signals demonstrate consistent improvements in analysis quality across different evaluation metrics and observation-guidance strategies. These results show that preference alignment, implemented as a soft constraint, can automatically adapt complex background priors tailored to DA, offering a promising new direction for advancing the field.

LDPM: Towards undersampled MRI reconstruction with MR-VAE and Latent Diffusion Prior

Nov 05, 2024

Abstract:Diffusion models, a powerful class of generative models, have found a wide range of applications, including MRI reconstruction. However, most existing diffusion model-based MRI reconstruction methods operate directly in pixel space, which makes their optimization and inference computationally expensive. Latent diffusion models were introduced to address this problem in natural image processing, but directly applying them to MRI reconstruction still faces many challenges, including the lack of control over the generated results, the adaptability of the Variational AutoEncoder (VAE) to MRI, and the exploration of applicable data consistency in latent space. To address these challenges, a Latent Diffusion Prior based undersampled MRI reconstruction (LDPM) method is proposed. A sketcher module is utilized to provide appropriate control and balance the quality and fidelity of the reconstructed MR images. A VAE adapted for MRI tasks (MR-VAE) is explored, which can serve as the backbone for future MR-related tasks. Furthermore, a variation of the DDIM sampler, called the Dual-Stage Sampler, is proposed to achieve high-fidelity reconstruction in the latent space. The proposed method achieves competitive results on fastMRI datasets, and the effectiveness of each module is demonstrated in ablation experiments.

Micro-Structures Graph-Based Point Cloud Registration for Balancing Efficiency and Accuracy

Oct 29, 2024

Abstract:Point Cloud Registration (PCR) is a fundamental and significant issue in photogrammetry and remote sensing, aiming to seek the optimal rigid transformation between sets of points. Achieving efficient and precise PCR poses a considerable challenge. We propose a novel micro-structures graph-based global point cloud registration method. The overall method comprises two stages. 1) Coarse registration (CR): We develop a graph incorporating micro-structures, employing an efficient graph-based hierarchical strategy to remove outliers and obtain the maximal consensus set. We propose a robust GNC-Welsch estimator for optimization derived from a robust estimator to the outlier process in the Lie algebra space, achieving fast and robust alignment. 2) Fine registration (FR): To refine local alignment further, we use the octree approach to adaptively search for plane features in the micro-structures. By minimizing the point-to-plane distance, we obtain a more precise local alignment, and this process is solved efficiently by treating it as a planar adjustment algorithm combined with Anderson accelerated optimization (PA-AA). In extensive experiments on real data, our proposed method performs well on the 3DMatch and ETH datasets compared to the most advanced methods, achieving higher accuracy metrics and reducing the time cost by at least one-third.
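The point-to-plane distance minimized in the fine registration stage can be illustrated with a minimal sketch. It shows only the standard geometric residual, not the paper's PA-AA solver or octree search; the function names and the sample values are assumptions made for illustration.

```python
import math


def point_to_plane_distance(point, plane_point, normal):
    """Unsigned distance from a 3D point to the plane through plane_point.

    The normal does not need to be unit length; it is normalized here.
    """
    norm = math.sqrt(sum(n * n for n in normal))
    if norm == 0.0:
        raise ValueError("plane normal must be non-zero")
    dot = sum((p - q) * n for p, q, n in zip(point, plane_point, normal))
    return abs(dot) / norm


def mean_squared_residual(points, plane_point, normal):
    """Mean squared point-to-plane distance, a typical registration cost."""
    dists = [point_to_plane_distance(p, plane_point, normal) for p in points]
    return sum(d * d for d in dists) / len(dists)


# Hypothetical sample: two points measured against the plane z = 0.
pts = [(0.0, 0.0, 2.0), (1.0, 1.0, -1.0)]
print(mean_squared_residual(pts, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)))
```

A registration routine would evaluate this cost after applying a candidate rigid transformation to the source points and adjust the transformation to reduce it.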

Autonomous Exploration Method for Fast Unknown Environment Mapping by Using UAV Equipped with Limited FOV Sensor

Feb 05, 2023

Abstract:Autonomous exploration is an important component of fast autonomous mapping and target search. However, most existing methods suffer from low efficiency caused by low-quality trajectories or back-and-forth maneuvers. To improve the exploration efficiency in unknown environments, a fast autonomous exploration planner (FAEP) is proposed in this paper. Different from existing methods, we first design a novel frontier exploration sequence generation method to obtain a more reasonable exploration path, which considers not only flight-level but also frontier-level factors in the asymmetric traveling salesman problem (ATSP). Then, according to the exploration sequence and the distribution of frontiers, an adaptive yaw planning method is proposed to cover more frontiers by yaw change during an exploration journey. In addition, to increase the speed and fluency of flight, a dynamic replanning strategy is also adopted. We conduct comparison and evaluation experiments in simulation environments. Experimental results show that the proposed exploration planner performs better in terms of flight time and flight distance than typical and state-of-the-art methods. Moreover, the effectiveness of the proposed method is further evaluated in real-world environments.

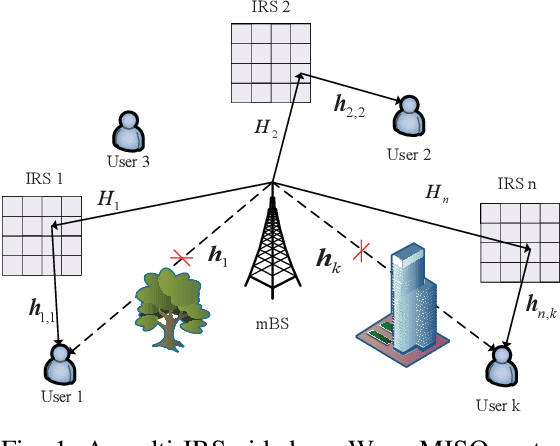

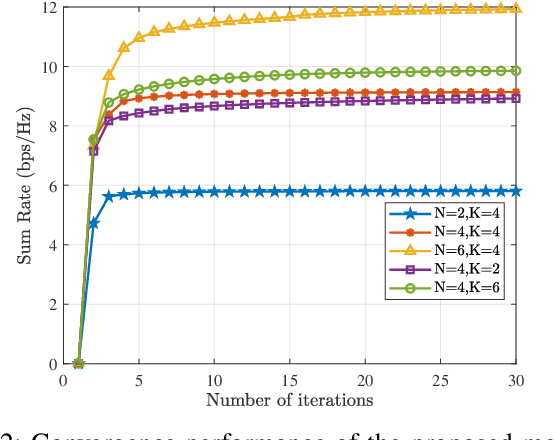

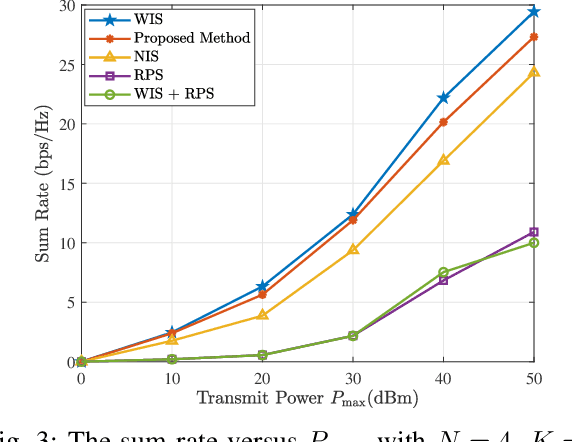

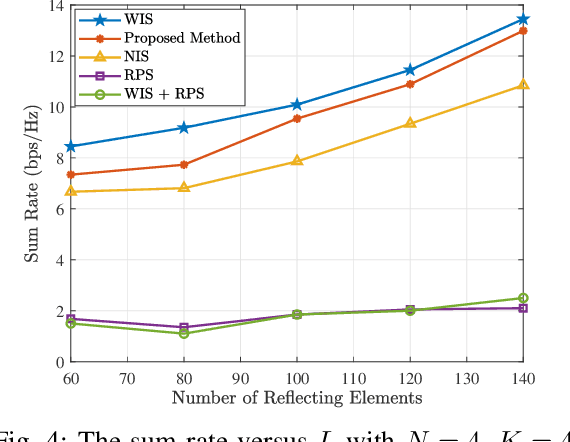

Joint Optimization of Active and Passive Beamforming in Multi-IRS Aided mmWave Communications

Oct 04, 2022

Abstract:Intelligent reflecting surface (IRS) has been considered a promising technology to alleviate the blockage effect and enhance coverage in millimeter wave (mmWave) communication. To explore the impact of IRS on the performance of mmWave communication, we investigate a multi-IRS assisted mmWave communication network and formulate a sum rate maximization problem by jointly optimizing the active and passive beamforming and the set of IRSs for assistance. The optimization problem is intractable due to the lack of convexity of the objective function and the binary nature of the IRS selection variables. To tackle the complex non-convex problem, an alternating iterative approach is proposed. In particular, the active and passive beamforming are optimized using the fractional programming method, and the IRS selection is solved by enumeration. Simulation results demonstrate the performance gain of our proposed approach.
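Solving the binary IRS selection by enumeration can be illustrated with a minimal sketch. It covers only the exhaustive search over selection variables; the beamforming step via fractional programming is not modeled. The objective `toy_sum_rate`, its gains, and its per-IRS cost are hypothetical placeholders, not values from the paper.

```python
from itertools import product


def best_irs_selection(num_irs, sum_rate):
    """Exhaustively search all 2**num_irs binary selection vectors.

    sum_rate: callable mapping a tuple of 0/1 flags to an objective value.
    Returns the best selection vector and its objective value.
    """
    best_mask, best_value = None, float("-inf")
    for mask in product((0, 1), repeat=num_irs):
        value = sum_rate(mask)
        if value > best_value:
            best_mask, best_value = mask, value
    return best_mask, best_value


def toy_sum_rate(mask):
    """Hypothetical objective: each selected IRS adds a gain minus a cost."""
    gains = (1.0, 0.4, 0.7)  # assumed per-IRS rate gains
    cost = 0.5               # assumed per-IRS overhead
    return sum(g - cost for g, m in zip(gains, mask) if m)


mask, value = best_irs_selection(3, toy_sum_rate)
print(mask, round(value, 3))
```

Enumeration is exact but scales as 2^N in the number of IRSs, so it suits only a small candidate set. In an alternating scheme, the objective would be re-evaluated with the beamformers from the most recent beamforming step.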
