Feifei Gao

Sherman

Joint Channel Estimation and Data Detection for Hybrid RIS aided Millimeter Wave OTFS Systems

Aug 14, 2022

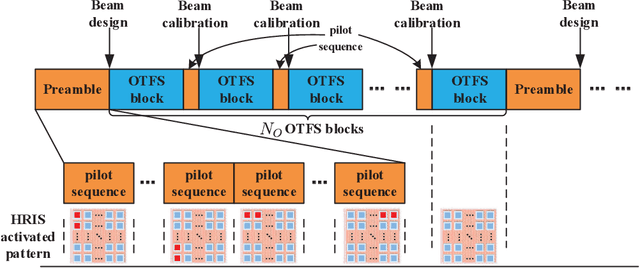

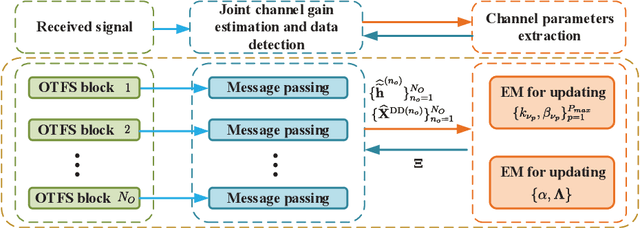

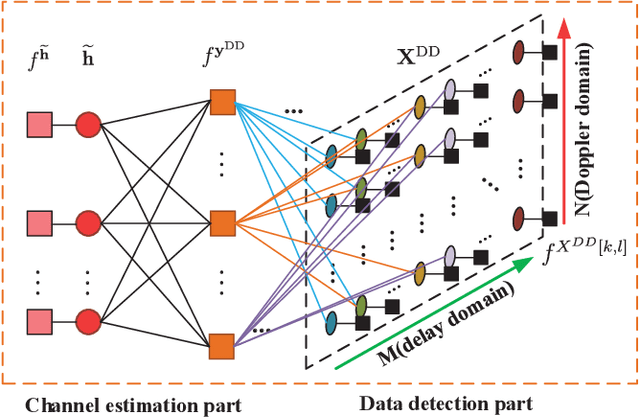

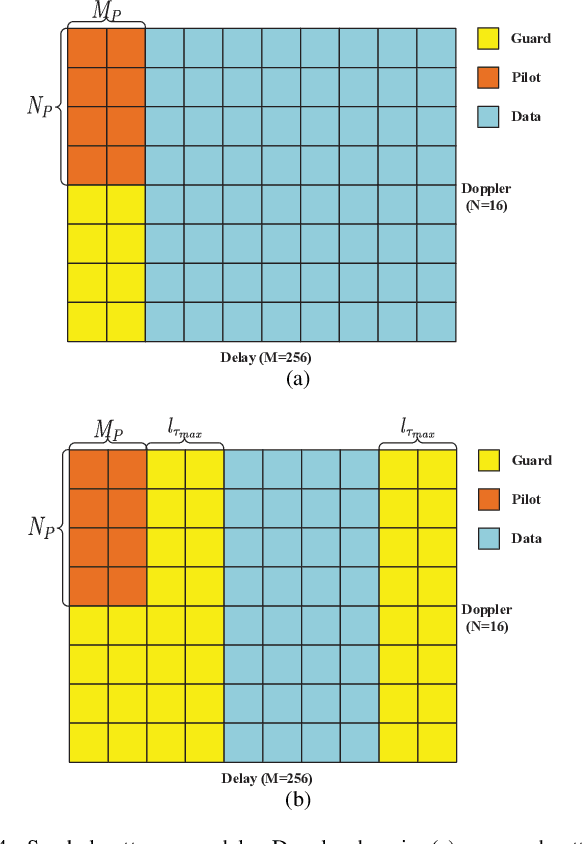

Abstract: For high-mobility communication scenarios, the recently emerged orthogonal time frequency space (OTFS) modulation introduces a new delay-Doppler domain signal space and can provide better communication performance than traditional orthogonal frequency division multiplexing systems. This article focuses on joint channel estimation and data detection (JCEDD) for hybrid reconfigurable intelligent surface (HRIS) aided millimeter wave (mmWave) OTFS systems. Firstly, a new transmission structure is designed. Within the pilot durations of the designed structure, partial HRIS elements are alternately activated. The time domain channel model is then presented. Secondly, the received signal models for both the HRIS over the time domain and the base station over the delay-Doppler domain are studied. Thirdly, by utilizing channel parameters acquired at the HRIS, an HRIS beamforming design strategy is proposed. For the OTFS transmission, we propose a JCEDD scheme over the delay-Doppler domain. In this scheme, a message passing (MP) algorithm is designed to simultaneously obtain the equivalent channel gain and the data symbols. Meanwhile, the channel parameters, i.e., the Doppler shift, the channel sparsity, and the channel variance, are updated through an expectation-maximization (EM) algorithm. By iteratively executing the MP and EM algorithms, both the channel and the unknown data symbols can be accurately acquired. Finally, simulation results are provided to validate the effectiveness of our proposed JCEDD scheme.
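As a rough illustration of the delay-Doppler signal space mentioned in this abstract, the following Python sketch maps a grid of delay-Doppler symbols to the time-frequency domain with the inverse symplectic finite Fourier transform (ISFFT) commonly used in the OTFS literature, and then back again. It is not the JCEDD scheme of the paper. The function names `isfft` and `sfft`, the grid size, and the axis and sign conventions (Doppler along axis 0, delay along axis 1) are assumptions chosen for this example, and conventions differ between references.

```python
import numpy as np

def isfft(x_dd):
    """Map a delay-Doppler grid (Doppler x delay) to a time-frequency grid."""
    # Assumed convention: inverse DFT along the Doppler axis (axis 0),
    # forward DFT along the delay axis (axis 1), both unitary.
    return np.fft.fft(np.fft.ifft(x_dd, axis=0, norm="ortho"), axis=1, norm="ortho")

def sfft(x_tf):
    """Inverse of isfft: map a time-frequency grid back to delay-Doppler."""
    return np.fft.ifft(np.fft.fft(x_tf, axis=0, norm="ortho"), axis=1, norm="ortho")

# Hypothetical grid: 8 Doppler bins, 16 delay bins, random QPSK symbols.
rng = np.random.default_rng(0)
n_doppler, n_delay = 8, 16
bits_i = rng.choice([-1.0, 1.0], size=(n_doppler, n_delay))
bits_q = rng.choice([-1.0, 1.0], size=(n_doppler, n_delay))
x_dd = (bits_i + 1j * bits_q) / np.sqrt(2)

x_tf = isfft(x_dd)        # time-frequency samples fed to the multicarrier modulator
x_dd_back = sfft(x_tf)    # receiver-side transform back to delay-Doppler
```

Because both transforms are unitary, the round trip recovers the symbols and preserves their total energy. A single delay-Doppler symbol is spread over the whole time-frequency grid.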

Towards Semantic Communications: Deep Learning-Based Image Semantic Coding

Aug 08, 2022

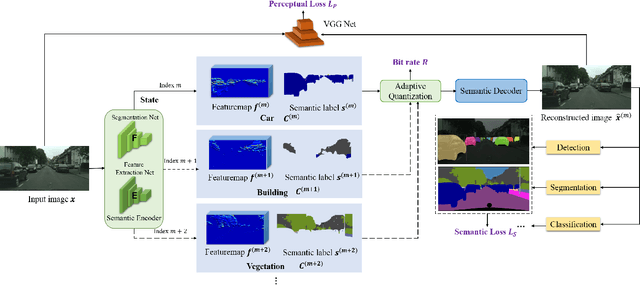

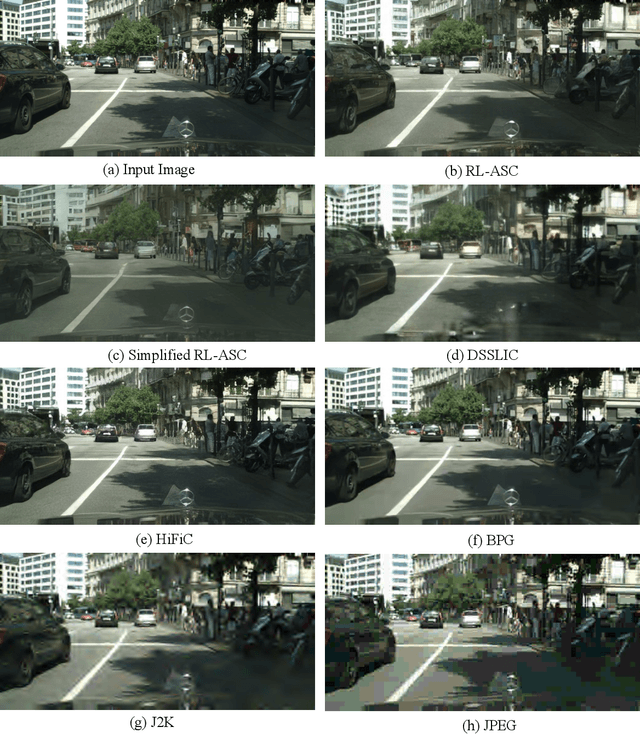

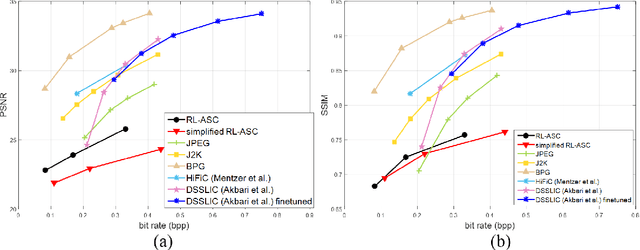

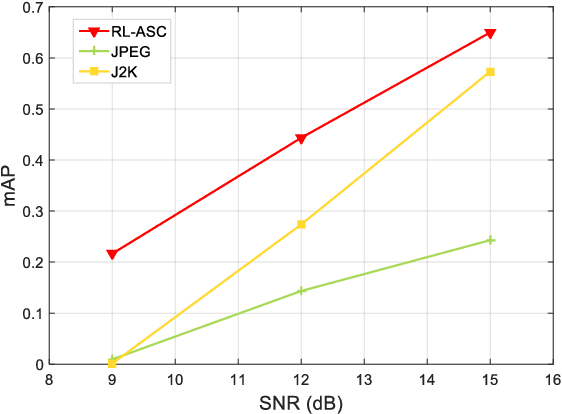

Abstract: Semantic communication has received growing interest since it can remarkably reduce the amount of data to be transmitted without losing critical information. Most existing works explore semantic encoding and transmission for text and apply techniques from Natural Language Processing (NLP) to interpret the meaning of the text. In this paper, we conceive semantic communications for image data, which is much richer in semantics and bandwidth sensitive. We propose a reinforcement learning based adaptive semantic coding (RL-ASC) approach that encodes images beyond the pixel level. Firstly, we define the semantic concept of image data, which includes the category, spatial arrangement, and visual feature, as the representation unit, and propose a convolutional semantic encoder to extract semantic concepts. Secondly, we propose an image reconstruction criterion that evolves from the traditional pixel similarity to semantic similarity and perceptual performance. Thirdly, we design a novel RL-based semantic bit allocation model, whose reward is the increase in rate-semantic-perceptual performance after encoding a certain semantic concept with an adaptive quantization level. Thus, the task-related information is preserved and reconstructed properly while less important data is discarded. Finally, we propose a Generative Adversarial Nets (GANs) based semantic decoder that fuses both local and global features via an attention module. Experimental results demonstrate that the proposed RL-ASC is noise robust, can reconstruct visually pleasant and semantically consistent images, and reduces the bit cost severalfold compared to standard codecs and other deep learning-based image codecs.

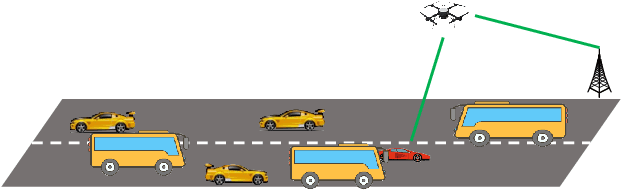

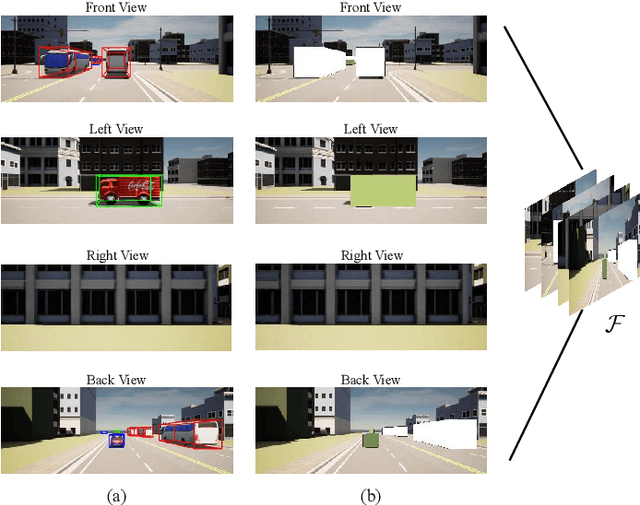

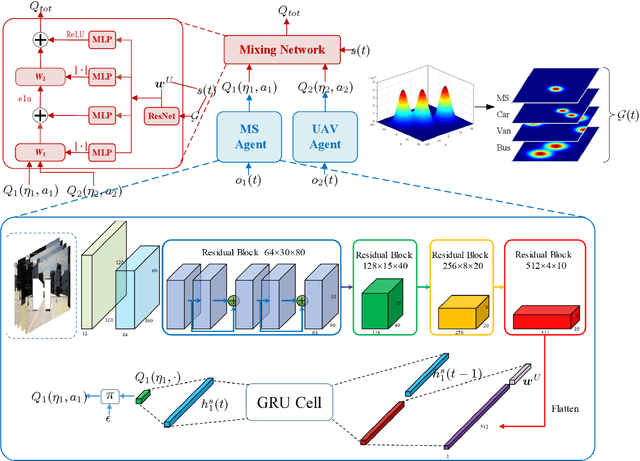

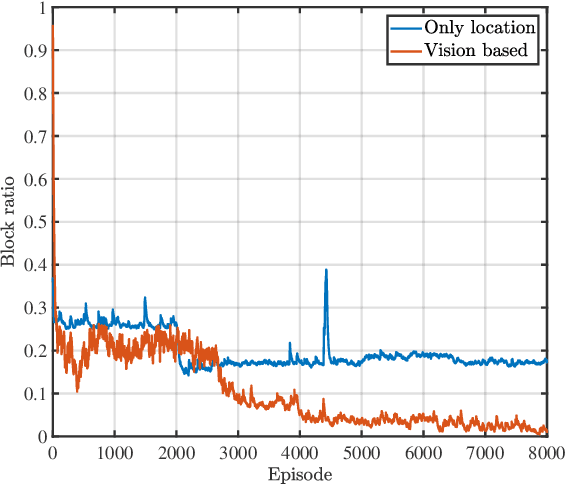

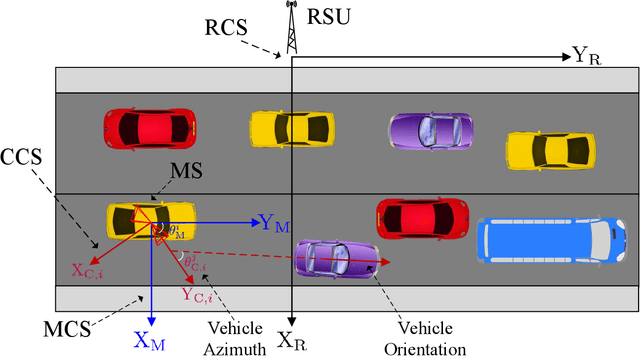

Vision-Aided Blockage Avoidance in UAV-assisted V2X Communications

Jul 26, 2022

Abstract: Blockage is a key challenge for millimeter wave communication systems, since these systems mainly rely on line-of-sight (LOS) links and blockage can degrade system performance significantly. It has recently been found that visual information, easily obtained by cameras, can be used to extract the location and size of environmental objects, which helps to infer communication parameters such as blockage status. In this paper, we propose a novel vision-aided handover framework for UAV-assisted V2X systems, which leverages the images taken by cameras at the mobile station (MS) to choose between the direct link and the UAV-assisted link and thereby avoid blockage caused by vehicles on the road. We propose a deep reinforcement learning algorithm to optimize the handover and UAV trajectory policy in order to improve the long-term throughput. Simulation results demonstrate the effectiveness of using visual information to deal with blockage.

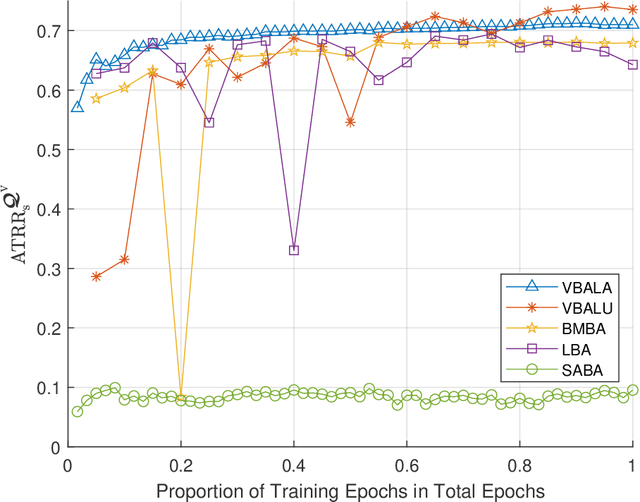

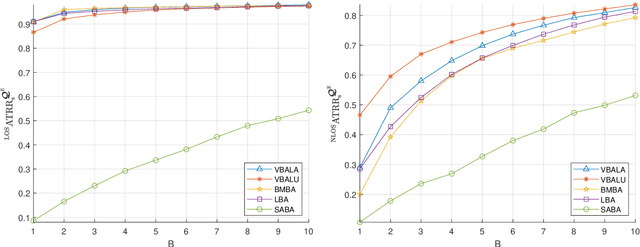

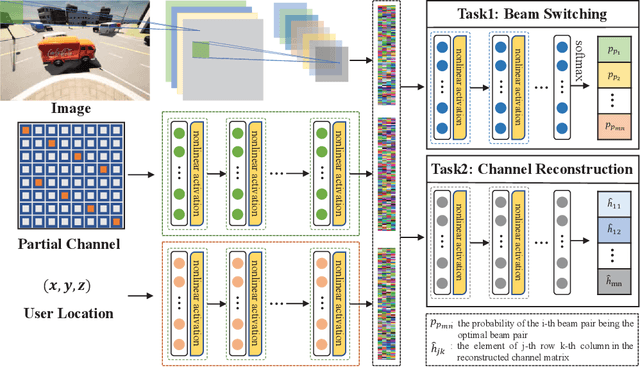

Computer Vision Aided mmWave Beam Alignment in V2X Communications

Jul 23, 2022

Abstract: Visual information, captured for example by cameras, can effectively reflect the sizes and locations of environmental scattering objects, and can thereby be used to infer communication parameters such as propagation directions, received powers, and blockage status. In this paper, we propose a novel beam alignment framework that leverages images taken by cameras installed at the mobile user. Specifically, we utilize 3D object detection techniques to extract the size and location information of the dynamic vehicles around the mobile user, and design a deep neural network (DNN) to infer the optimal beam pair for transceivers without any pilot signal overhead. Moreover, to avoid performing beam alignment too frequently or too slowly, a beam coherence time (BCT) prediction method is developed based on the vision information. This can effectively improve the transmission rate compared with beam alignment using a fixed BCT. Simulation results show that the proposed vision based beam alignment methods outperform the existing LIDAR and vision based solutions, and require much lower hardware cost and communication overhead.

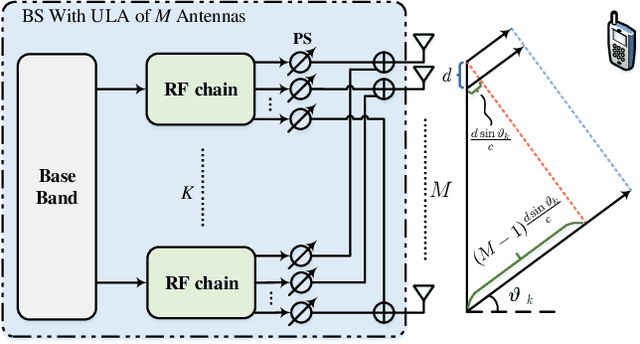

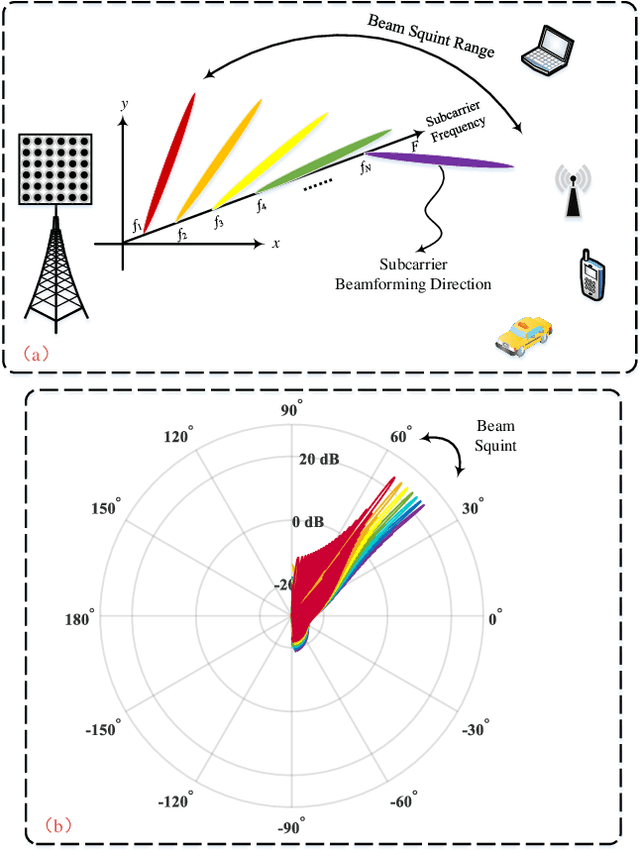

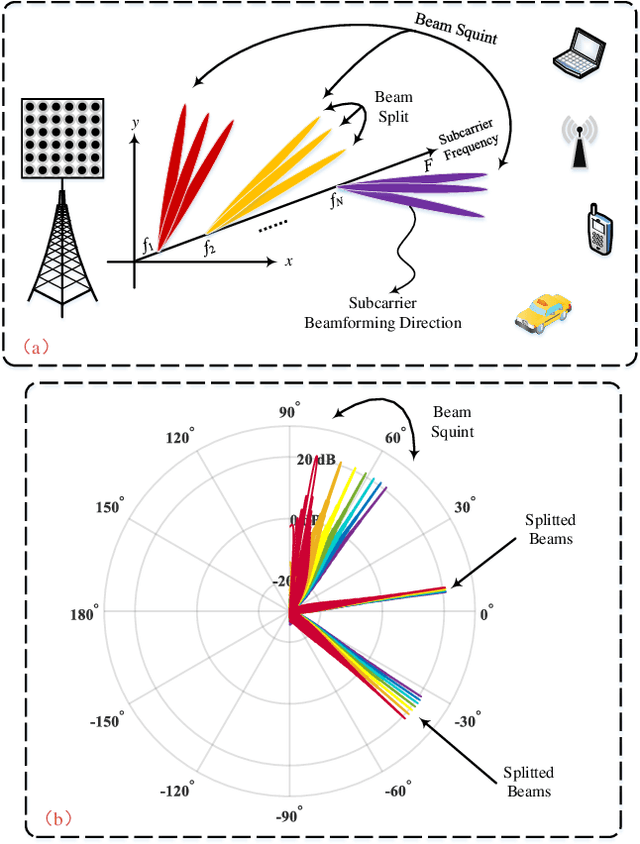

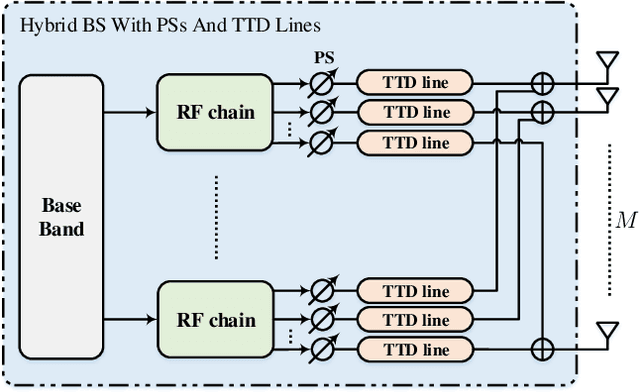

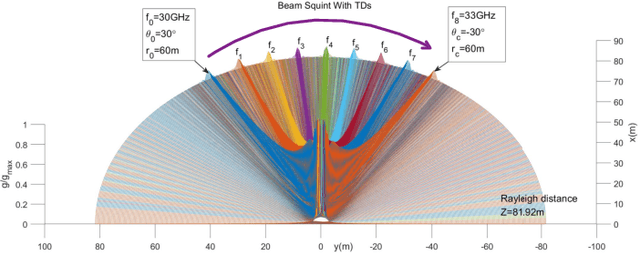

Integrated Sensing and Communications with Joint Beam Squint and Beam Split for Massive MIMO

Jul 19, 2022

Abstract: Integrated sensing and communications (ISAC) has attracted tremendous attention for future 6G wireless communication systems. To improve the transmission rates and sensing accuracy, the massive multi-input multi-output (MIMO) technique is leveraged with large transmission bandwidth. However, the growing size of the transmission bandwidth and antenna array results in the beam squint effect, which hampers communications. Moreover, the time overhead of traditional sensing algorithms is prohibitive for practical systems. In this paper, instead of alleviating the wideband beam squint effect, we take advantage of the joint beam squint and beam split effect and propose a novel user direction sensing method integrated with massive MIMO orthogonal frequency division multiplexing (OFDM) systems. Specifically, with the beam squint effect, the BS utilizes true-time-delay (TTD) lines to steer the beams of different OFDM subcarriers towards different directions simultaneously. The users feed back the subcarrier frequency with the maximum array gain to the BS. Then, the BS calculates the direction based on the subcarrier frequency feedback. Furthermore, the beam split effect introduced by enlarging the inter-antenna spacing is exploited to expand the sensing range. The proposed sensing method operates in the frequency domain, and the intended sensing range is covered by all the subcarriers simultaneously, which significantly reduces the time overhead compared with conventional sensing. Simulation results demonstrate the effectiveness as well as the superior performance of the proposed ISAC scheme.
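The feedback-based direction calculation described in this abstract can be sketched in Python under a deliberately simplified model. The sketch assumes a uniform linear array with half-wavelength spacing at the carrier frequency and frequency-flat phase-shifter weights aimed at `theta_0`, so the beam on subcarrier `f` points to the direction satisfying sin(theta) = (f_c / f) sin(theta_0). The TTD lines and the beam split effect that the paper uses to control and expand the squint range are not modeled. All names and numerical values (`array_gain`, `direction_from_feedback`, 28 GHz carrier, 128 antennas, 2048 subcarriers) are assumptions for illustration.

```python
import numpy as np

def array_gain(freqs, theta_user, theta_0, f_c, n_antennas):
    """Normalized array gain seen by a user at theta_user on each subcarrier."""
    n = np.arange(n_antennas)
    # Per-antenna phase mismatch between the steering vector at frequency f
    # and the fixed weights designed for theta_0 at the carrier f_c.
    psi = np.pi * ((freqs / f_c) * np.sin(theta_user) - np.sin(theta_0))
    return np.abs(np.exp(1j * np.outer(psi, n)).sum(axis=1)) / n_antennas

def direction_from_feedback(f_best, theta_0, f_c):
    """Invert sin(theta) = (f_c / f) * sin(theta_0) for the fed-back subcarrier."""
    return np.arcsin(f_c * np.sin(theta_0) / f_best)

f_c = 28e9                                  # assumed carrier frequency in Hz
freqs = np.linspace(27e9, 29e9, 2048)       # assumed OFDM subcarrier frequencies
theta_0 = np.deg2rad(60.0)                  # direction the weights point to at f_c
theta_user = np.deg2rad(60.5)               # true user direction, unknown to the BS

# User side: find the subcarrier with the maximum array gain and feed it back.
gains = array_gain(freqs, theta_user, theta_0, f_c, n_antennas=128)
f_best = freqs[np.argmax(gains)]

# BS side: compute the user direction from the fed-back subcarrier frequency.
theta_hat = direction_from_feedback(f_best, theta_0, f_c)
print(np.rad2deg(theta_hat))
```

In this simplified model the sensing range is limited to the few degrees swept by the squint across the band, which is the limitation the abstract addresses with TTD lines and enlarged antenna spacing.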

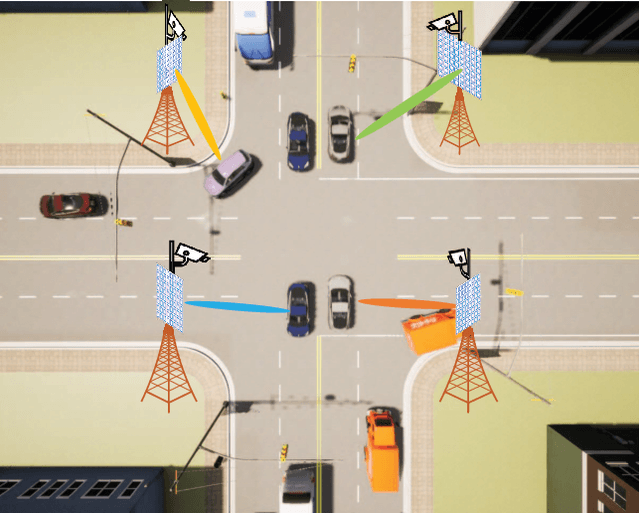

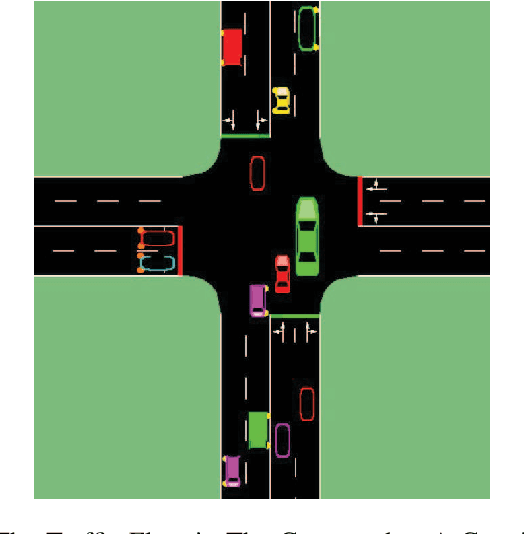

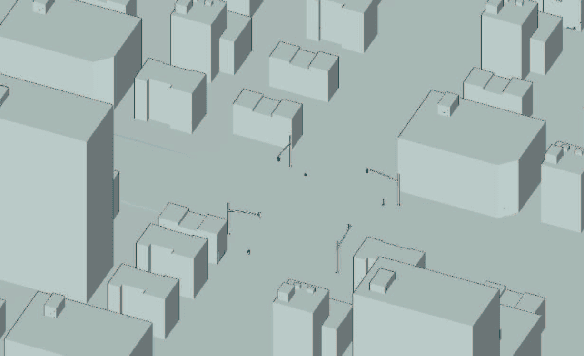

Multi-Camera View Based Proactive BS Selection and Beam Switching for V2X

Jul 12, 2022

Abstract: Due to the short wavelength and large attenuation of millimeter-wave (mmWave) signals, mmWave BSs are densely distributed and require beamforming with high directivity. When the user moves out of the coverage of the current BS or is severely blocked, the mmWave BS must be switched to ensure the communication quality. In this paper, we propose a multi-camera view based proactive BS selection and beam switching scheme that can predict the optimal BS of the user in the future frame and switch the corresponding beam pair. Specifically, we extract the features of multi-camera view images and a small part of the channel state information (CSI) in historical frames, and dynamically adjust the weight of each modality feature. Then we design a multi-task learning module to guide the network to better understand the main task, thereby enhancing the accuracy and robustness of BS selection and beam switching. Using the outputs of all tasks, a prior knowledge based fine-tuning network is designed to further increase the BS switching accuracy. After the optimal BS is obtained, a beam pair switching network is proposed to directly predict the optimal beam pair of the corresponding BS. Simulation results in an outdoor intersection environment show the superior performance of our proposed solution under several metrics, such as prediction accuracy, achievable rate, and the harmonic mean of precision and recall.

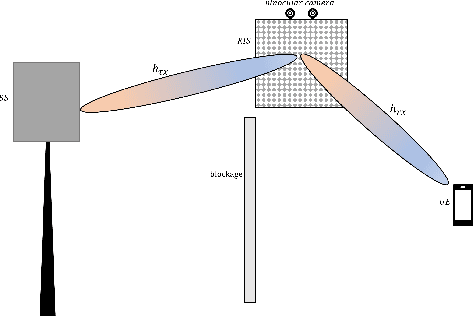

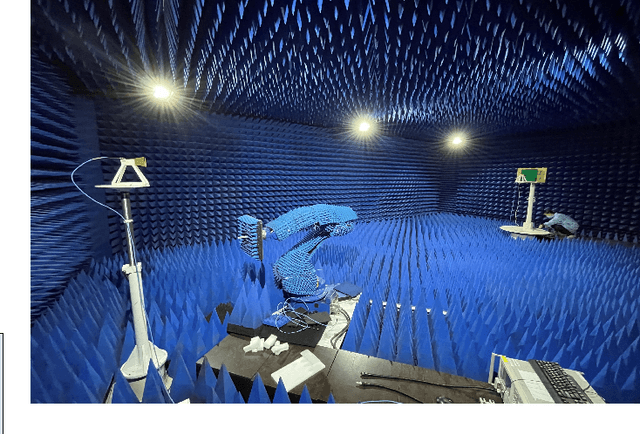

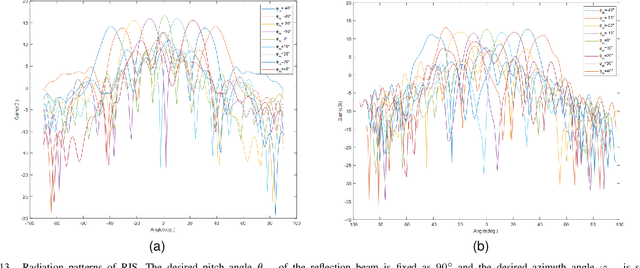

Computer Vision-Aided Reconfigurable Intelligent Surface-Based Beam Tracking: Prototyping and Experimental Results

Jul 11, 2022

Abstract: In this paper, we propose a novel computer vision-based approach to aid a Reconfigurable Intelligent Surface (RIS) in dynamic beam tracking and then implement the corresponding prototype verification system. A camera is attached to the RIS to obtain visual information about the surrounding environment, with which the RIS identifies the desired reflected beam direction and then adjusts the reflection coefficients according to a pre-designed codebook. Compared to conventional approaches that utilize channel estimation or beam sweeping to obtain the reflection coefficients, the proposed one not only saves beam training overhead but also eliminates the requirement for extra feedback links. We build a 20-by-20 RIS running at 5.4 GHz and develop a high-speed control board to ensure the real-time refresh of the reflection coefficients. Meanwhile, we implement an independent peer-to-peer communication system to emulate the communication between the base station and the user equipment. The vision-aided RIS prototype system is tested in two mobile scenarios: one in which the RIS works in near-field conditions as a passive array antenna of the base station, and one in which the RIS works in far-field conditions to assist the communication between the base station and the user equipment. The experimental results show that the RIS can quickly adjust the reflection coefficients for dynamic beam tracking with the help of visual information.

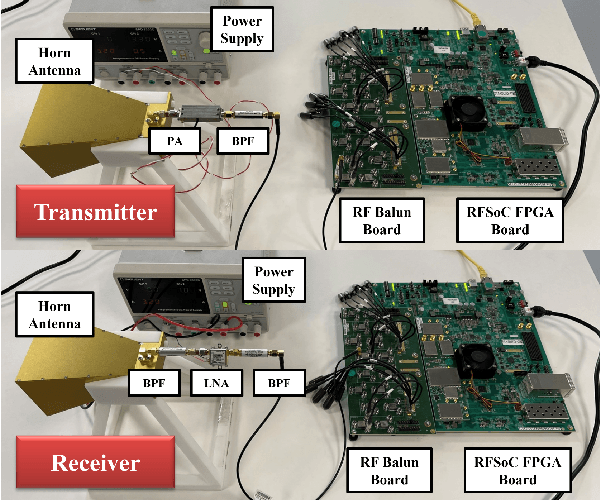

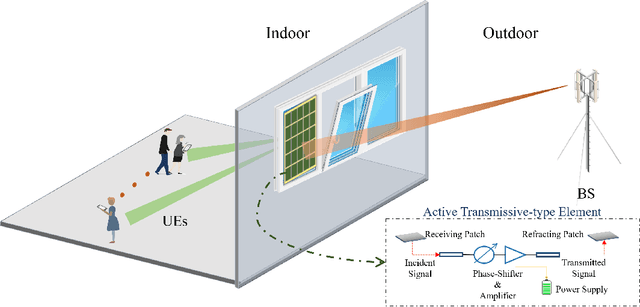

Joint Precoding for Active Intelligent Transmitting Surface Empowered Outdoor-to-Indoor Communication in mmWave Cellular Networks

Jun 28, 2022

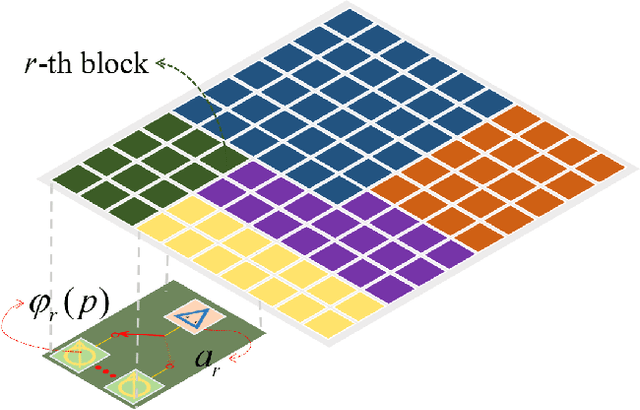

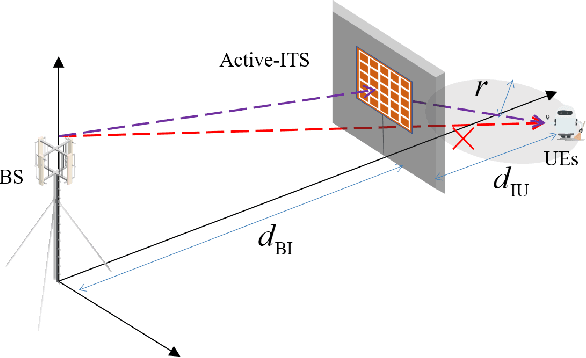

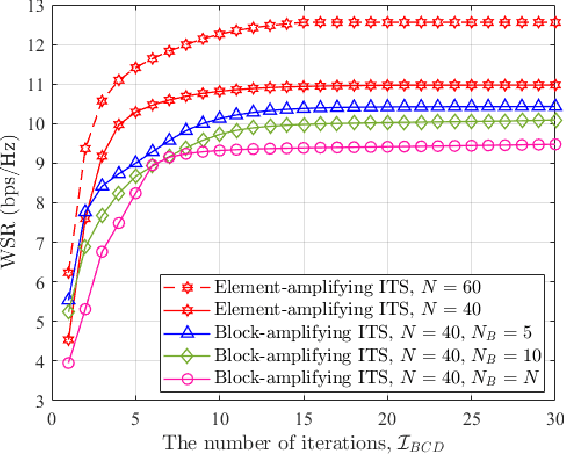

Abstract: Outdoor-to-indoor communication in millimeter-wave (mmWave) cellular networks has been a challenging research problem due to the severe attenuation and the high penetration loss caused by the propagation characteristics of mmWave signals. We propose a viable solution to implement the outdoor-to-indoor mmWave communication system with the aid of an active intelligent transmitting surface (active-ITS), where the active-ITS allows the incoming signal from an outdoor base station (BS) to pass through the surface and be received by the indoor user equipments (UEs) after shifting its phase and magnifying its amplitude. Then, the problem of joint precoding of the BS and active-ITS is investigated to maximize the weighted sum-rate (WSR) of the communication system. An efficient block coordinate descent (BCD) based algorithm is developed to solve it, with suboptimal solutions in nearly closed form. In addition, to reduce the size and hardware cost of an active-ITS, we provide a block-amplifying architecture that partially removes the circuit components for power amplification, where multiple transmissive-type elements (TEs) in each block share the same power amplifier. Simulations indicate that the active-ITS has the potential to achieve a given performance with far fewer TEs than the passive-ITS under the same total system power consumption, which makes it suitable for size-limited and aesthetics-sensitive scenarios, and that the inevitable performance degradation caused by the block-amplifying architecture is acceptable.
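A minimal Python sketch of the block-amplifying idea in this abstract: every transmissive element keeps its own phase shift, while all elements in a block share a single amplifier gain. The function name `block_amplified_coefficients`, the equal block sizes, and the example values are assumptions for illustration. The paper's joint precoding optimization is not reproduced here.

```python
import numpy as np

def block_amplified_coefficients(block_gains, phases):
    """Per-element transmission coefficients with one shared gain per block.

    block_gains: amplifier gain of each block (length B)
    phases:      phase shift of each element in radians (length N, multiple of B)
    """
    block_gains = np.asarray(block_gains, dtype=float)
    phases = np.asarray(phases, dtype=float)
    if phases.size % block_gains.size != 0:
        raise ValueError("number of elements must be a multiple of the number of blocks")
    elements_per_block = phases.size // block_gains.size
    # Each block's gain is copied to all of its elements; phases stay per element.
    amplitudes = np.repeat(block_gains, elements_per_block)
    return amplitudes * np.exp(1j * phases)

# Hypothetical surface: 4 elements in 2 blocks, so only 2 amplifiers are needed.
coeffs = block_amplified_coefficients([2.0, 3.0], [0.0, np.pi / 2, np.pi / 4, 0.0])
print(np.abs(coeffs))
```

Compared with one amplifier per element, this parameterization has fewer free amplitudes, which is the source of the performance degradation that the abstract reports as acceptable.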

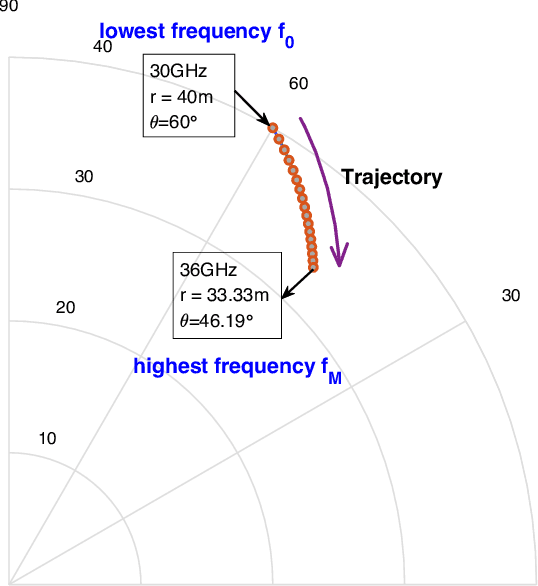

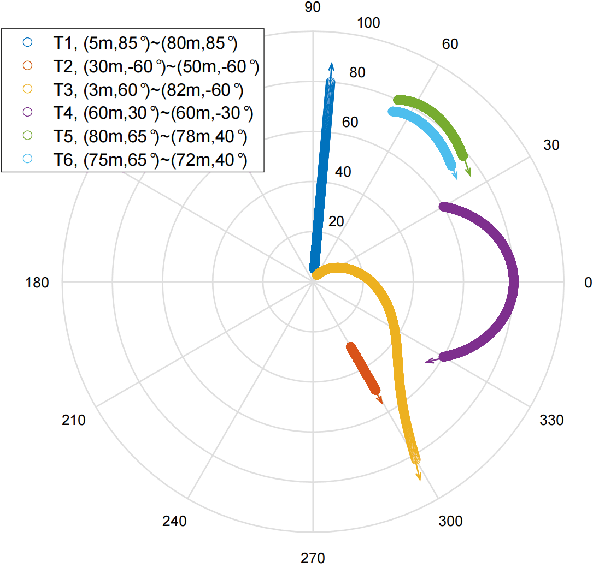

Beam Squint Assisted User Localization in Near-Field Communications Systems

May 23, 2022

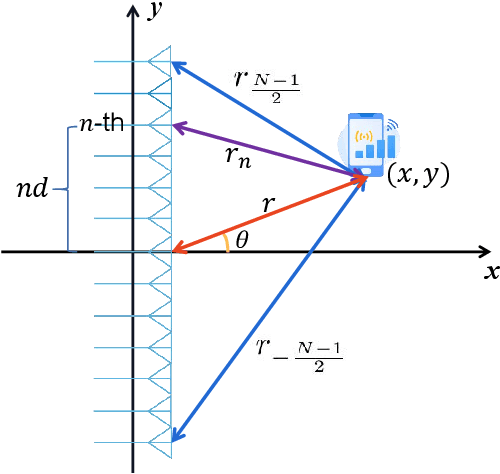

Abstract: The beam squint phenomenon in massive multi-input and multi-output wideband communications, which generally deteriorates the beamforming performance, has attracted wide attention recently. In this paper, we find that with the aid of time-delay lines (TDs), the range and trajectory of the beam squint of a near-field communications system can be freely controlled, and hence it is possible to turn the beam squint to advantage for user localization. We derive the trajectory equation for near-field beam squint points and design a way to control the trajectory of these beam squint points. With the proposed design, beamforming on different subcarriers purposely points to different angles and different distances, such that users at different positions receive the maximum power at different subcarriers. Hence, one can simply determine the positions of different users from the beam squint effect. Simulation results demonstrate the effectiveness of the proposed scheme.

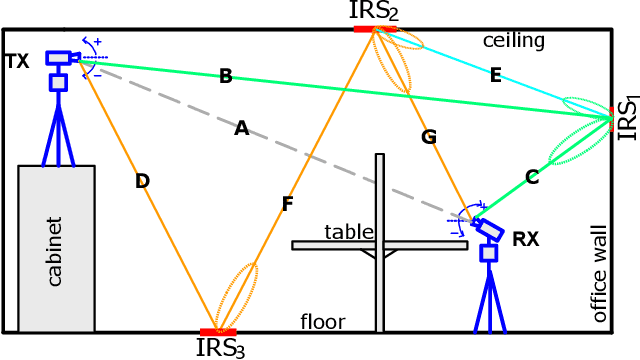

Intelligent Reflecting Surface Networks with Multi-Order-Reflection Effect: System Modelling and Critical Bounds

May 03, 2022

Abstract: In this paper, we model, analyze and optimize multi-user and multi-order-reflection (MUMOR) intelligent reflecting surface (IRS) networks. We first derive a complete MUMOR IRS network model applicable to an arbitrary number of reflections and to arbitrary sizes and numbers of IRSs/reflectors. We then derive, in a closed-form function, the optimal condition for achieving the sum-rate upper bound with one IRS, as well as the analytical condition for achieving interference-free transmission. Leveraging this optimal condition, we obtain the MUMOR sum-rate upper bound of the IRS network with different network topologies, where the linear graph (LG), complete graph (CG) and null graph (NG) topologies are considered. Simulation results verify our theories and derivations and demonstrate that the sum-rate upper bounds of different network topologies remain below a K-fold improvement given K IRSs.
