Fabio Ramos

NVIDIA, University of Sydney

Guided Uncertainty-Aware Policy Optimization: Combining Learning and Model-Based Strategies for Sample-Efficient Policy Learning

May 26, 2020

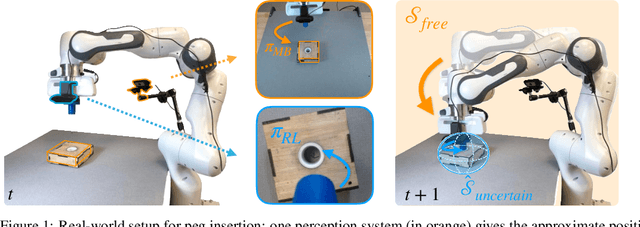

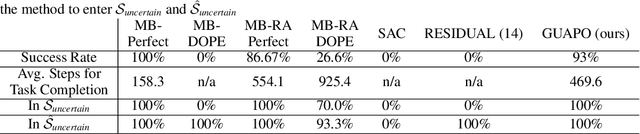

Abstract:Traditional robotic approaches rely on an accurate model of the environment, a detailed description of how to perform the task, and a robust perception system to keep track of the current state. On the other hand, reinforcement learning approaches can operate directly from raw sensory inputs with only a reward signal to describe the task, but are extremely sample-inefficient and brittle. In this work, we combine the strengths of model-based methods with the flexibility of learning-based methods to obtain a general method that is able to overcome inaccuracies in the robotics perception/actuation pipeline, while requiring minimal interactions with the environment. This is achieved by leveraging uncertainty estimates to divide the space in regions where the given model-based policy is reliable, and regions where it may have flaws or not be well defined. In these uncertain regions, we show that a locally learned-policy can be used directly with raw sensory inputs. We test our algorithm, Guided Uncertainty-Aware Policy Optimization (GUAPO), on a real-world robot performing peg insertion. Videos are available at https://sites.google.com/view/guapo-rl

Estimating Motion Uncertainty with Bayesian ICP

Apr 16, 2020

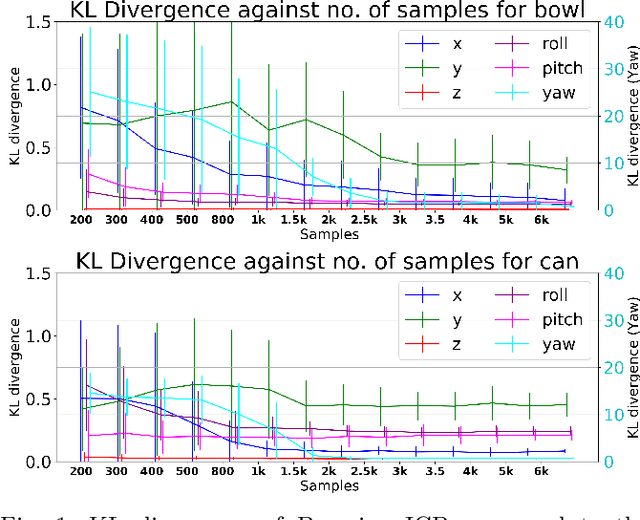

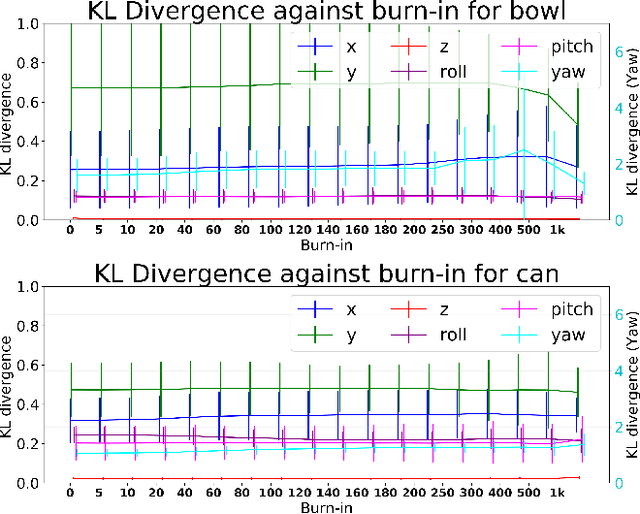

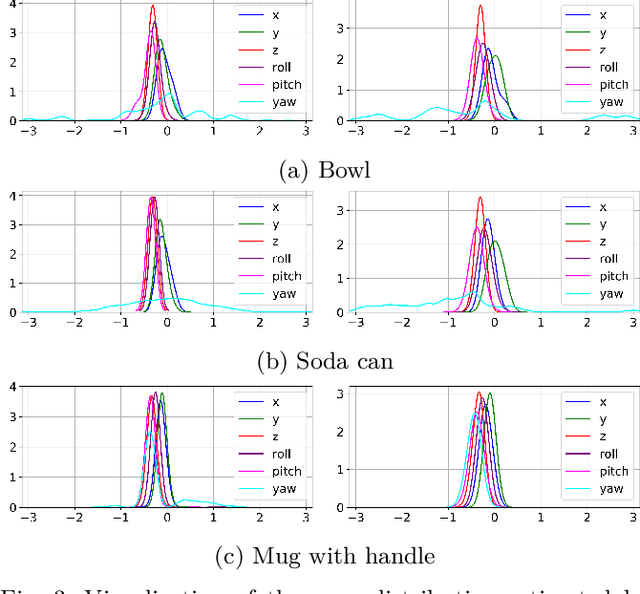

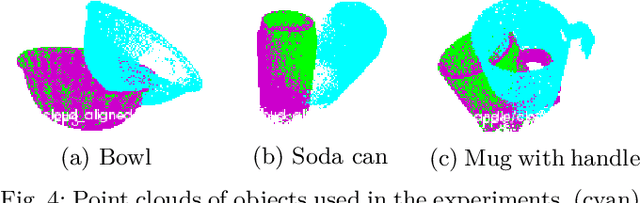

Abstract:Accurate uncertainty estimation associated with the pose transformation between two 3D point clouds is critical for autonomous navigation, grasping, and data fusion. Iterative closest point (ICP) is widely used to estimate the transformation between point cloud pairs by iteratively performing data association and motion estimation. Despite its success and popularity, ICP is effectively a deterministic algorithm, and attempts to reformulate it in a probabilistic manner generally do not capture all sources of uncertainty, such as data association errors and sensor noise. This leads to overconfident transformation estimates, potentially compromising the robustness of systems relying on them. In this paper we propose a novel method to estimate pose uncertainty in ICP with a Markov Chain Monte Carlo (MCMC) algorithm. Our method combines recent developments in optimization for scalable Bayesian sampling such as stochastic gradient Langevin dynamics (SGLD) to infer a full posterior distribution of the pose transformation between two point clouds. We evaluate our method, called Bayesian ICP, in experiments using 3D Kinect data demonstrating that our method is capable of both quickly and accuractely estimating pose uncertainty, taking into account data association uncertainty as reflected by the shape of the objects.

Intrinsic Exploration as Multi-Objective RL

Apr 06, 2020

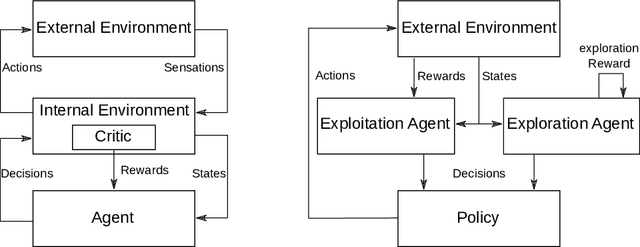

Abstract:Intrinsic motivation enables reinforcement learning (RL) agents to explore when rewards are very sparse, where traditional exploration heuristics such as Boltzmann or e-greedy would typically fail. However, intrinsic exploration is generally handled in an ad-hoc manner, where exploration is not treated as a core objective of the learning process; this weak formulation leads to sub-optimal exploration performance. To overcome this problem, we propose a framework based on multi-objective RL where both exploration and exploitation are being optimized as separate objectives. This formulation brings the balance between exploration and exploitation at a policy level, resulting in advantages over traditional methods. This also allows for controlling exploration while learning, at no extra cost. Such strategies achieve a degree of control over agent exploration that was previously unattainable with classic or intrinsic rewards. We demonstrate scalability to continuous state-action spaces by presenting a method (EMU-Q) based on our framework, guiding exploration towards regions of higher value-function uncertainty. EMU-Q is experimentally shown to outperform classic exploration techniques and other intrinsic RL methods on a continuous control benchmark and on a robotic manipulator.

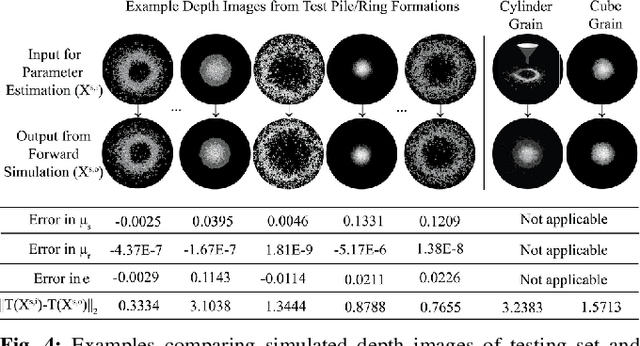

Inferring the Material Properties of Granular Media for Robotic Tasks

Mar 19, 2020

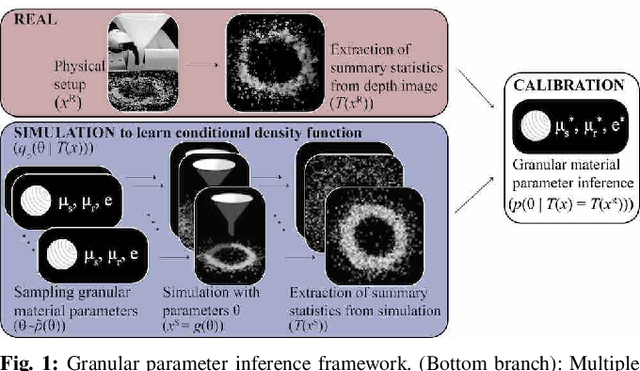

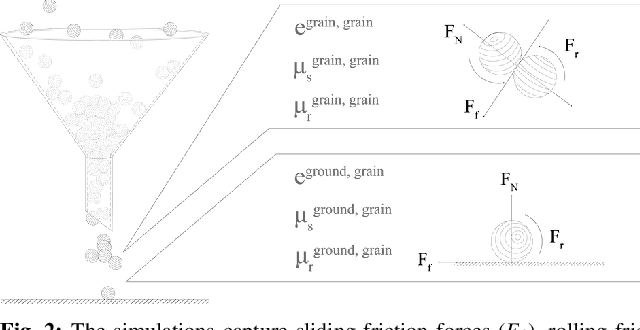

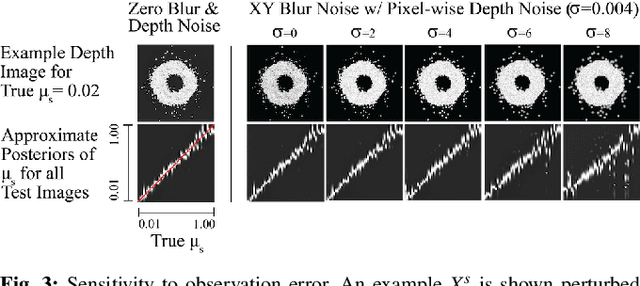

Abstract:Granular media (e.g., cereal grains, plastic resin pellets, and pills) are ubiquitous in robotics-integrated industries, such as agriculture, manufacturing, and pharmaceutical development. This prevalence mandates the accurate and efficient simulation of these materials. This work presents a software and hardware framework that automatically calibrates a fast physics simulator to accurately simulate granular materials by inferring material properties from real-world depth images of granular formations (i.e., piles and rings). Specifically, coefficients of sliding friction, rolling friction, and restitution of grains are estimated from summary statistics of grain formations using likelihood-free Bayesian inference. The calibrated simulator accurately predicts unseen granular formations in both simulation and experiment; furthermore, simulator predictions are shown to generalize to more complex tasks, including using a robot to pour grains into a bowl, as well as to create a desired pattern of piles and rings. Visualizations of the framework and experiments can be viewed at https://www.youtube.com/watch?v=X-5Sk2TUET4.

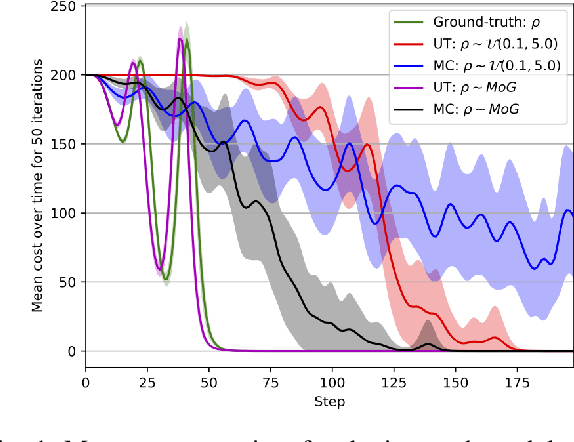

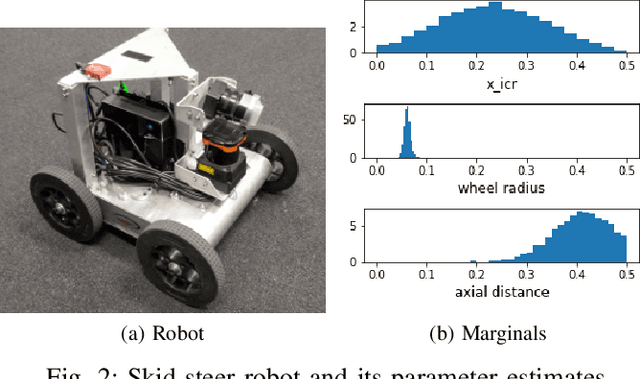

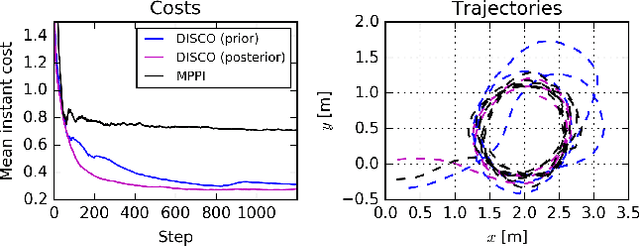

DISCO: Double Likelihood-free Inference Stochastic Control

Feb 25, 2020

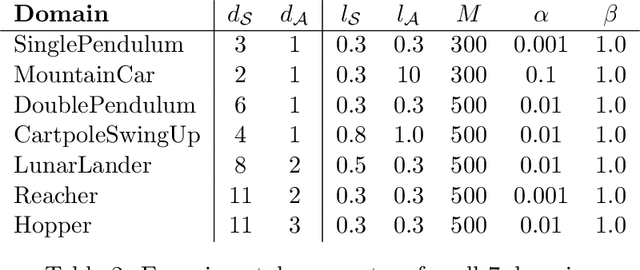

Abstract:Accurate simulation of complex physical systems enables the development, testing, and certification of control strategies before they are deployed into the real systems. As simulators become more advanced, the analytical tractability of the differential equations and associated numerical solvers incorporated in the simulations diminishes, making them difficult to analyse. A potential solution is the use of probabilistic inference to assess the uncertainty of the simulation parameters given real observations of the system. Unfortunately the likelihood function required for inference is generally expensive to compute or totally intractable. In this paper we propose to leverage the power of modern simulators and recent techniques in Bayesian statistics for likelihood-free inference to design a control framework that is efficient and robust with respect to the uncertainty over simulation parameters. The posterior distribution over simulation parameters is propagated through a potentially non-analytical model of the system with the unscented transform, and a variant of the information theoretical model predictive control. This approach provides a more efficient way to evaluate trajectory roll outs than Monte Carlo sampling, reducing the online computation burden. Experiments show that the controller proposed attained superior performance and robustness on classical control and robotics tasks when compared to models not accounting for the uncertainty over model parameters.

Reinforcement Learning with Probabilistically Complete Exploration

Jan 20, 2020

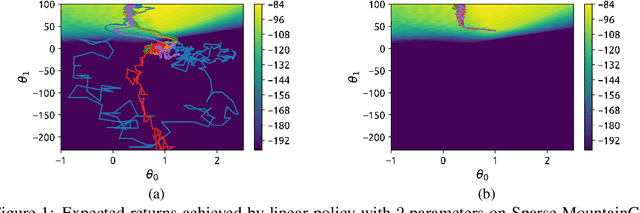

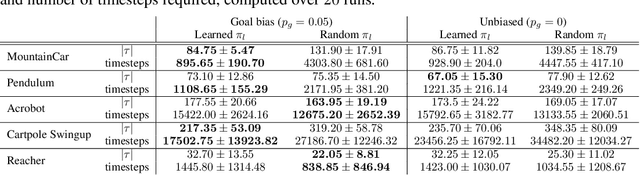

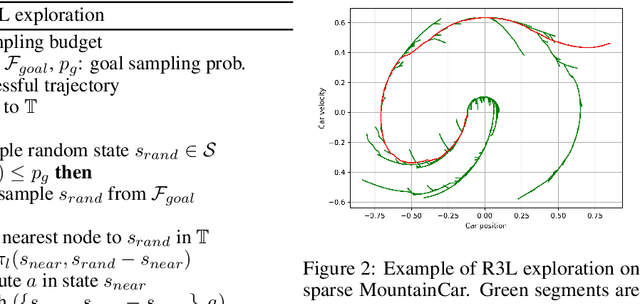

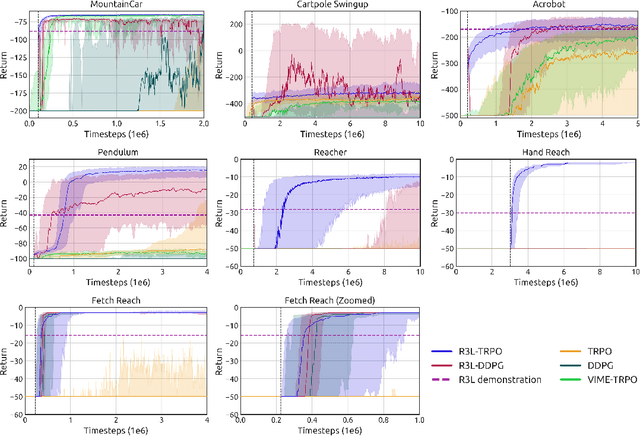

Abstract:Balancing exploration and exploitation remains a key challenge in reinforcement learning (RL). State-of-the-art RL algorithms suffer from high sample complexity, particularly in the sparse reward case, where they can do no better than to explore in all directions until the first positive rewards are found. To mitigate this, we propose Rapidly Randomly-exploring Reinforcement Learning (R3L). We formulate exploration as a search problem and leverage widely-used planning algorithms such as Rapidly-exploring Random Tree (RRT) to find initial solutions. These solutions are used as demonstrations to initialize a policy, then refined by a generic RL algorithm, leading to faster and more stable convergence. We provide theoretical guarantees of R3L exploration finding successful solutions, as well as bounds for its sampling complexity. We experimentally demonstrate the method outperforms classic and intrinsic exploration techniques, requiring only a fraction of exploration samples and achieving better asymptotic performance.

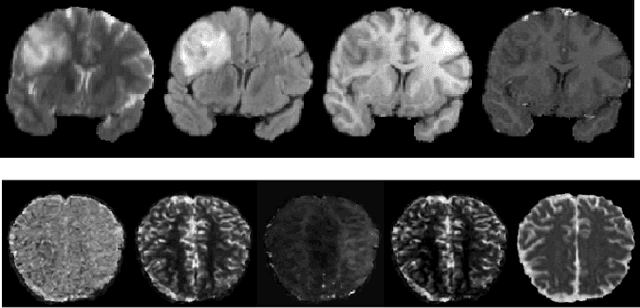

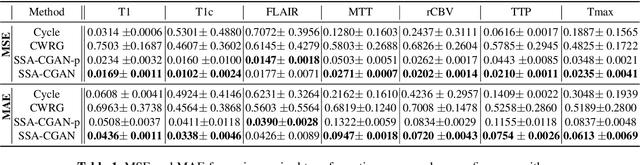

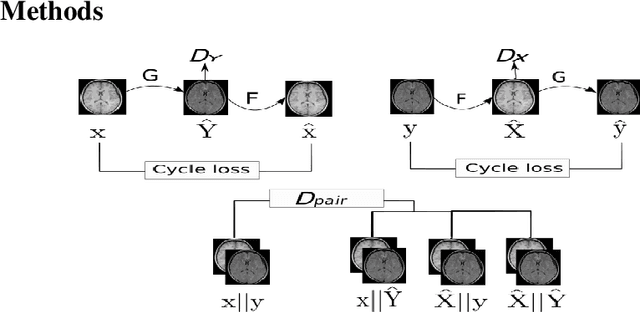

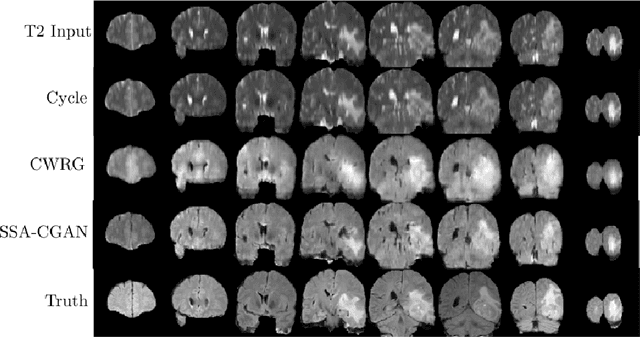

Semi-supervised Learning Approach to Generate Neuroimaging Modalities with Adversarial Training

Dec 09, 2019

Abstract:Magnetic Resonance Imaging (MRI) of the brain can come in the form of different modalities such as T1-weighted and Fluid Attenuated Inversion Recovery (FLAIR) which has been used to investigate a wide range of neurological disorders. Current state-of-the-art models for brain tissue segmentation and disease classification require multiple modalities for training and inference. However, the acquisition of all of these modalities are expensive, time-consuming, inconvenient and the required modalities are often not available. As a result, these datasets contain large amounts of \emph{unpaired} data, where examples in the dataset do not contain all modalities. On the other hand, there is smaller fraction of examples that contain all modalities (\emph{paired} data) and furthermore each modality is high dimensional when compared to number of datapoints. In this work, we develop a method to address these issues with semi-supervised learning in translating between two neuroimaging modalities. Our proposed model, Semi-Supervised Adversarial CycleGAN (SSA-CGAN), uses an adversarial loss to learn from \emph{unpaired} data points, cycle loss to enforce consistent reconstructions of the mappings and another adversarial loss to take advantage of \emph{paired} data points. Our experiments demonstrate that our proposed framework produces an improvement in reconstruction error and reduced variance for the pairwise translation of multiple modalities and is more robust to thermal noise when compared to existing methods.

Dynamic Hilbert Maps: Real-Time Occupancy Predictions in Changing Environment

Dec 04, 2019

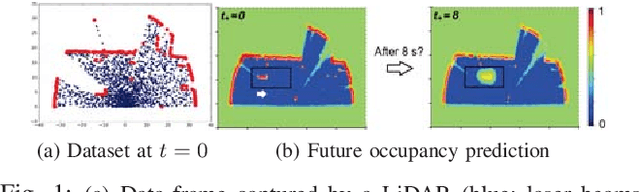

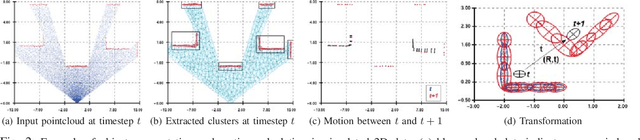

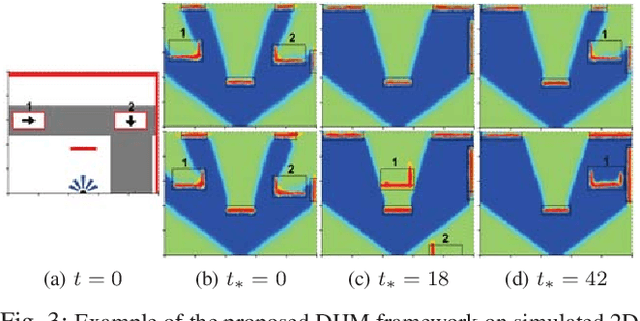

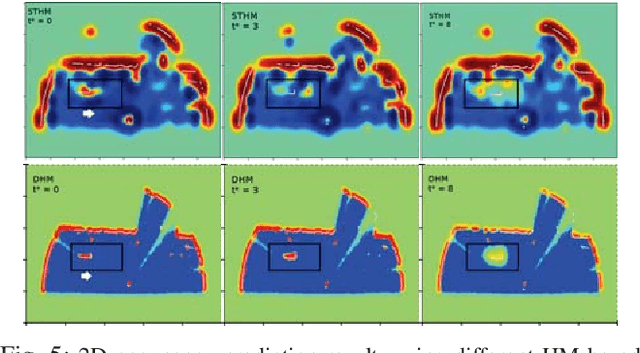

Abstract:This paper addresses the problem of learning instantaneous occupancy levels of dynamic environments and predicting future occupancy levels. Due to the complexity of most real-world environments, such as urban streets or crowded areas, the efficient and robust incorporation of temporal dependencies into otherwise static occupancy models remains a challenge. We propose a method to capture the spatial uncertainty of moving objects and incorporate this uncertainty information into a continuous occupancy map represented in a rich high-dimensional feature space. Experiments performed using LIDAR data verified the real-time performance of the algorithm.

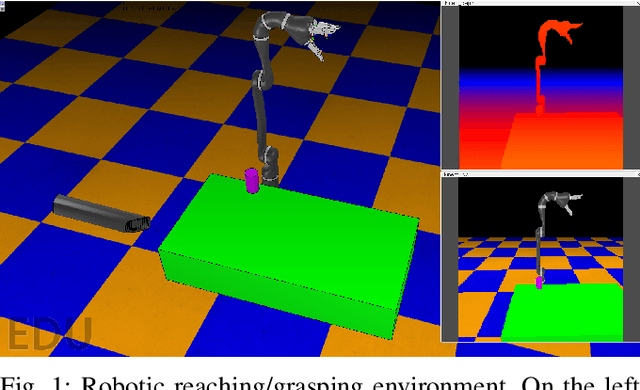

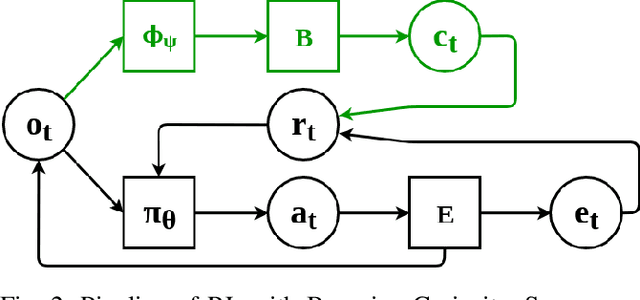

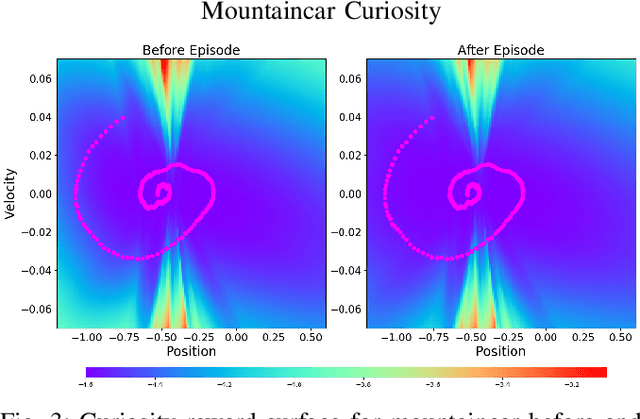

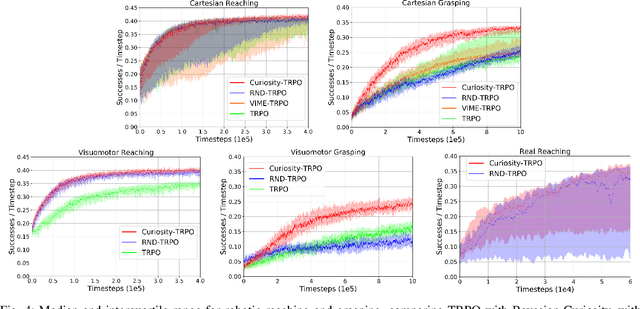

Bayesian Curiosity for Efficient Exploration in Reinforcement Learning

Nov 20, 2019

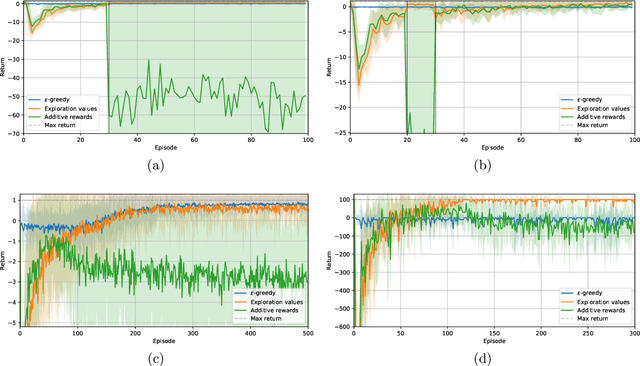

Abstract:Balancing exploration and exploitation is a fundamental part of reinforcement learning, yet most state-of-the-art algorithms use a naive exploration protocol like $\epsilon$-greedy. This contributes to the problem of high sample complexity, as the algorithm wastes effort by repeatedly visiting parts of the state space that have already been explored. We introduce a novel method based on Bayesian linear regression and latent space embedding to generate an intrinsic reward signal that encourages the learning agent to seek out unexplored parts of the state space. This method is computationally efficient, simple to implement, and can extend any state-of-the-art reinforcement learning algorithm. We evaluate the method on a range of algorithms and challenging control tasks, on both simulated and physical robots, demonstrating how the proposed method can significantly improve sample complexity.

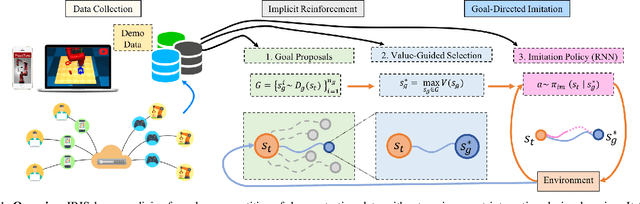

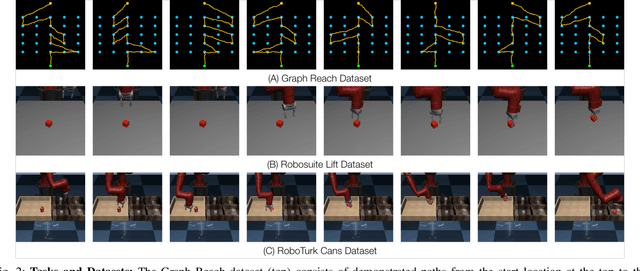

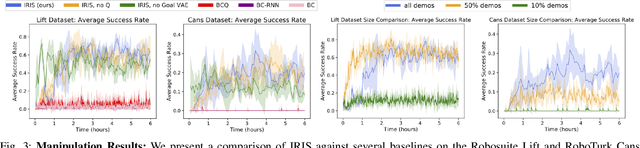

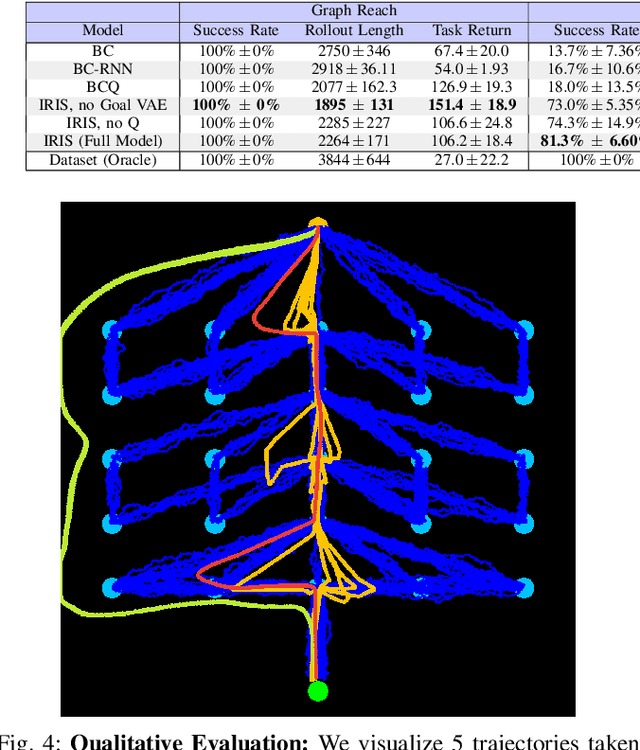

IRIS: Implicit Reinforcement without Interaction at Scale for Learning Control from Offline Robot Manipulation Data

Nov 13, 2019

Abstract:Learning from offline task demonstrations is a problem of great interest in robotics. For simple short-horizon manipulation tasks with modest variation in task instances, offline learning from a small set of demonstrations can produce controllers that successfully solve the task. However, leveraging a fixed batch of data can be problematic for larger datasets and longer-horizon tasks with greater variations. The data can exhibit substantial diversity and consist of suboptimal solution approaches. In this paper, we propose Implicit Reinforcement without Interaction at Scale (IRIS), a novel framework for learning from large-scale demonstration datasets. IRIS factorizes the control problem into a goal-conditioned low-level controller that imitates short demonstration sequences and a high-level goal selection mechanism that sets goals for the low-level and selectively combines parts of suboptimal solutions leading to more successful task completions. We evaluate IRIS across three datasets, including the RoboTurk Cans dataset collected by humans via crowdsourcing, and show that performant policies can be learned from purely offline learning. Additional results and videos at https://stanfordvl.github.io/iris/ .

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge