Cosmin Ancuti

Low Light Image Enhancement Challenge at NTIRE 2026

Apr 19, 2026

Abstract:This paper presents a comprehensive review of the NTIRE 2026 Low Light Image Enhancement Challenge, highlighting the proposed solutions and final results. The objective of this challenge is to identify effective networks capable of producing clearer and visually compelling images in diverse and challenging conditions by learning representative visual cues with the purpose of restoring information loss due to low-contrast and noisy images. A total of 195 participants registered for the first track and 153 for the second track of the competition, and 22 teams ultimately submitted valid entries. This paper thoroughly evaluates the state-of-the-art advances in (joint denoising and) low-light image enhancement, showcasing the significant progress in the field, while leveraging samples of our novel dataset.

Multinex: Lightweight Low-light Image Enhancement via Multi-prior Retinex

Apr 11, 2026

Abstract:Low-light image enhancement (LLIE) aims to restore natural visibility, color fidelity, and structural detail under severe illumination degradation. State-of-the-art (SOTA) LLIE techniques often rely on large models and multi-stage training, limiting practicality for edge deployment. Moreover, their dependence on a single color space introduces instability and visible exposure or color artifacts. To address these, we propose Multinex, an ultra-lightweight structured framework that integrates multiple fine-grained representations within a principled Retinex residual formulation. It decomposes an image into illumination and color prior stacks derived from distinct analytic representations, and learns to fuse these representations into luminance and reflectance adjustments required to correct exposure. By prioritizing enhancement over reconstruction and exploiting lightweight neural operations, Multinex significantly reduces computational cost, exemplified by its lightweight (45K parameters) and nano (0.7K parameters) versions. Extensive benchmarks show that all lightweight variants significantly outperform their corresponding lightweight SOTA models, and reach comparable performance to heavy models. Paper page available at https://albrateanu.github.io/multinex.

SFP: Real-World Scene Recovery Using Spatial and Frequency Priors

Dec 09, 2025

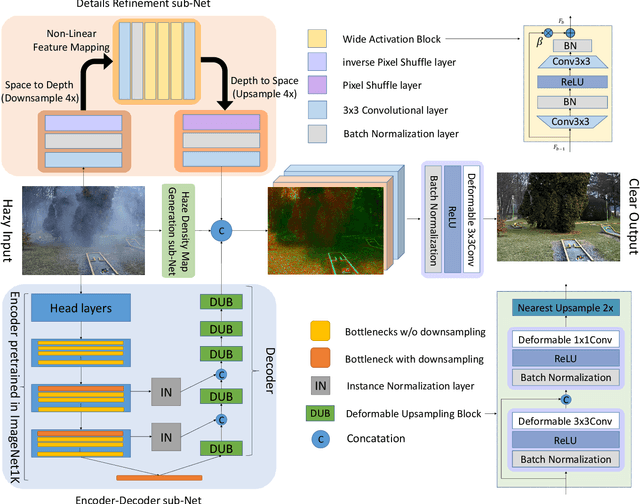

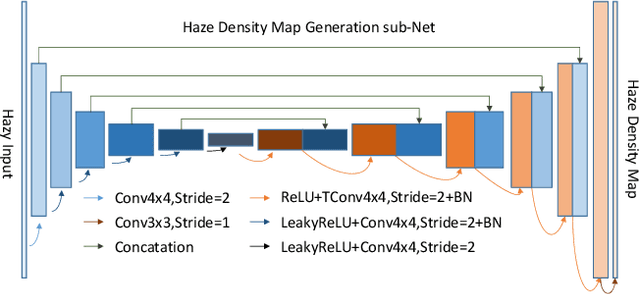

Abstract:Scene recovery serves as a critical task for various computer vision applications. Existing methods typically rely on a single prior, which is inherently insufficient to handle multiple degradations, or employ complex network architectures trained on synthetic data, which suffer from poor generalization for diverse real-world scenarios. In this paper, we propose Spatial and Frequency Priors (SFP) for real-world scene recovery. In the spatial domain, we observe that the inverse of the degraded image exhibits a projection along its spectral direction that resembles the scene transmission. Leveraging this spatial prior, the transmission map is estimated to recover the scene from scattering degradation. In the frequency domain, a mask is constructed for adaptive frequency enhancement, with two parameters estimated using our proposed novel priors. Specifically, one prior assumes that the mean intensity of the degraded image's direct current (DC) components across three channels in the frequency domain closely approximates that of each channel in the clear image. The second prior is based on the observation that, for clear images, the magnitude of low radial frequencies below 0.001 constitutes approximately 1% of the total spectrum. Finally, we design a weighted fusion strategy to integrate spatial-domain restoration, frequency-domain enhancement, and salient features from the input image, yielding the final recovered result. Extensive evaluations demonstrate the effectiveness and superiority of our proposed SFP for scene recovery under various degradation conditions.

ISALux: Illumination and Segmentation Aware Transformer Employing Mixture of Experts for Low Light Image Enhancement

Aug 25, 2025

Abstract:We introduce ISALux, a novel transformer-based approach for Low-Light Image Enhancement (LLIE) that seamlessly integrates illumination and semantic priors. Our architecture includes an original self-attention block, Hybrid Illumination and Semantics-Aware Multi-Headed Self-Attention (HISA-MSA), which integrates illumination and semantic segmentation maps for enhanced feature extraction. ISALux employs two self-attention modules to independently process illumination and semantic features, selectively enriching each other to regulate luminance and highlight structural variations in real-world scenarios. A Mixture of Experts (MoE)-based Feed-Forward Network (FFN) enhances contextual learning, with a gating mechanism conditionally activating the top K experts for specialized processing. To address overfitting in LLIE methods caused by distinct light patterns in benchmarking datasets, we enhance the HISA-MSA module with low-rank matrix adaptations (LoRA). Extensive qualitative and quantitative evaluations across multiple specialized datasets demonstrate that ISALux is competitive with state-of-the-art (SOTA) methods. Additionally, an ablation study highlights the contribution of each component in the proposed model. Code will be released upon publication.
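
The top-K gating mentioned in the abstract can be illustrated with a generic sketch. This is not the ISALux implementation: the abstract does not specify how gate scores are computed or normalized, so the softmax over the selected logits, the scalar "experts", and the names `top_k_gate` and `moe_forward` are all assumptions made for illustration.

```python
import math

def top_k_gate(logits, k):
    """Pick the k largest gate logits and softmax-normalize over those k only.

    Returns a dict {expert_index: weight}. Normalizing over the selected
    experts is one common convention, assumed here.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the outputs of the k selected experts only."""
    weights = top_k_gate(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Hypothetical scalar experts; a real FFN expert would map feature vectors.
experts = [lambda x: x, lambda x: 2 * x, lambda x: -x, lambda x: x + 1]
gate_logits = [0.1, 2.0, -1.0, 2.0]  # in practice produced by a learned gate
print(top_k_gate(gate_logits, 2))
print(moe_forward(3.0, experts, gate_logits, k=2))
```

Only the selected experts are evaluated, which is where the conditional-computation saving of a sparse MoE layer comes from.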

ModalFormer: Multimodal Transformer for Low-Light Image Enhancement

Jul 27, 2025

Abstract:Low-light image enhancement (LLIE) is a fundamental yet challenging task due to the presence of noise, loss of detail, and poor contrast in images captured under insufficient lighting conditions. Recent methods often rely solely on pixel-level transformations of RGB images, neglecting the rich contextual information available from multiple visual modalities. In this paper, we present ModalFormer, the first large-scale multimodal framework for LLIE that fully exploits nine auxiliary modalities to achieve state-of-the-art performance. Our model comprises two main components: a Cross-modal Transformer (CM-T) designed to restore corrupted images while seamlessly integrating multimodal information, and multiple auxiliary subnetworks dedicated to multimodal feature reconstruction. Central to the CM-T is our novel Cross-modal Multi-headed Self-Attention mechanism (CM-MSA), which effectively fuses RGB data with modality-specific features--including deep feature embeddings, segmentation information, geometric cues, and color information--to generate information-rich hybrid attention maps. Extensive experiments on multiple benchmark datasets demonstrate ModalFormer's state-of-the-art performance in LLIE. Pre-trained models and results are made available at https://github.com/albrateanu/ModalFormer.

NTIRE 2025 Image Shadow Removal Challenge Report

Jun 18, 2025

Abstract:This work examines the findings of the NTIRE 2025 Shadow Removal Challenge. A total of 306 participants have registered, with 17 teams successfully submitting their solutions during the final evaluation phase. Following the last two editions, this challenge had two evaluation tracks: one focusing on reconstruction fidelity and the other on visual perception through a user study. Both tracks were evaluated with images from the WSRD+ dataset, simulating interactions between self- and cast-shadows with a large number of diverse objects, textures, and materials.

The Tenth NTIRE 2025 Image Denoising Challenge Report

Apr 16, 2025

Abstract:This paper presents an overview of the NTIRE 2025 Image Denoising Challenge (σ = 50), highlighting the proposed methodologies and corresponding results. The primary objective is to develop a network architecture capable of achieving high-quality denoising performance, quantitatively evaluated using PSNR, without constraints on computational complexity or model size. The task assumes independent additive white Gaussian noise (AWGN) with a fixed noise level of 50. A total of 290 participants registered for the challenge, with 20 teams successfully submitting valid results, providing insights into the current state-of-the-art in image denoising.
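
As a rough illustration of the degradation model and metric named in the abstract, the sketch below adds AWGN with σ = 50 to a flat list of pixel values and computes PSNR. It assumes an 8-bit intensity scale (peak 255) and leaves the noisy values unclipped; the challenge's exact data pipeline is not described here, and `add_awgn` and `psnr` are illustrative names, not challenge code.

```python
import math
import random

def add_awgn(pixels, sigma=50.0, seed=0):
    """Add independent zero-mean Gaussian noise (std `sigma`) to each pixel.

    Assumes intensities on a 0-255 scale; values are not clipped or quantized.
    """
    rng = random.Random(seed)
    return [p + rng.gauss(0.0, sigma) for p in pixels]

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(peak^2 / MSE)."""
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(peak ** 2 / mse)

clean = [128.0] * 10000               # hypothetical flat gray "image"
noisy = add_awgn(clean, sigma=50.0)
print(f"PSNR of noisy input: {psnr(clean, noisy):.2f} dB")
```

For unclipped noise the expected MSE is σ², so the noisy input sits near 20·log10(255/50) ≈ 14.15 dB; a denoiser is scored by how far above this baseline its output lands.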

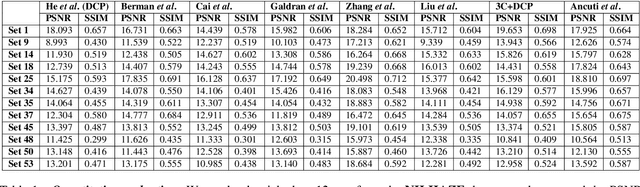

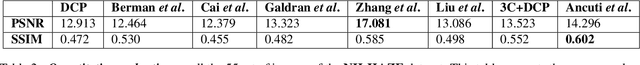

NH-HAZE: An Image Dehazing Benchmark with Non-Homogeneous Hazy and Haze-Free Images

May 07, 2020

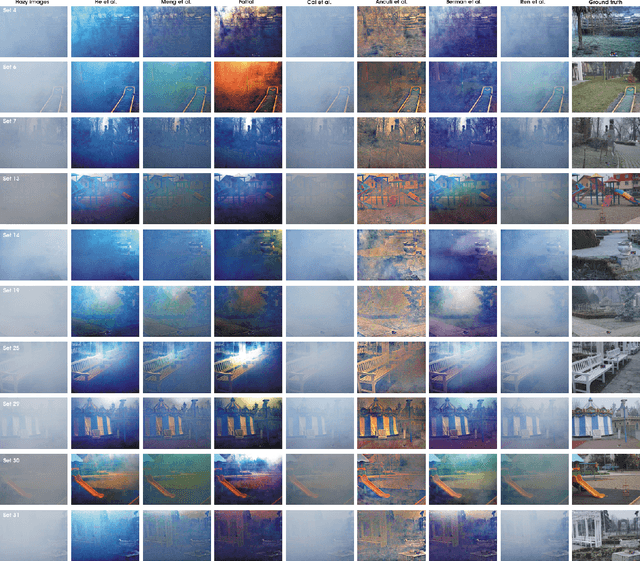

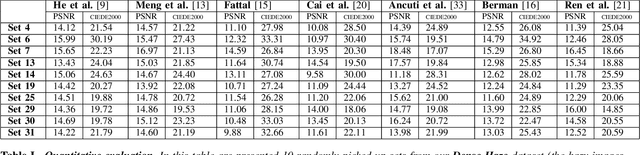

Abstract:Image dehazing is an ill-posed problem that has been extensively studied in recent years. The objective performance evaluation of dehazing methods is one of the major obstacles due to the lack of a reference dataset. While synthetic datasets have shown important limitations, the few realistic datasets introduced recently assume homogeneous haze over the entire scene. Since in many real cases haze is not uniformly distributed, we introduce NH-HAZE, a non-homogeneous realistic dataset with pairs of real hazy and corresponding haze-free images. This is the first non-homogeneous image dehazing dataset and contains 55 outdoor scenes. The non-homogeneous haze has been introduced in the scene using a professional haze generator that imitates the real conditions of hazy scenes. Additionally, this work presents an objective assessment of several state-of-the-art single image dehazing methods that were evaluated using the NH-HAZE dataset.

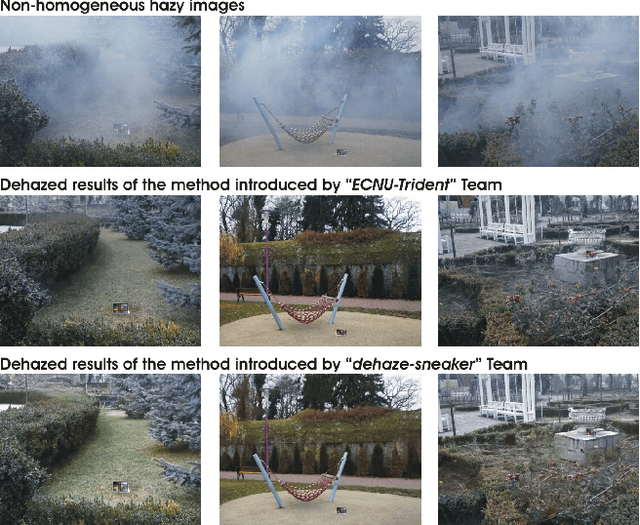

NTIRE 2020 Challenge on NonHomogeneous Dehazing

May 07, 2020

Abstract:This paper reviews the NTIRE 2020 Challenge on NonHomogeneous Dehazing of images (restoration of rich details in hazy images). We focus on the proposed solutions and their results evaluated on NH-Haze, a novel dataset consisting of 55 pairs of real haze-free and nonhomogeneous hazy images recorded outdoors. NH-Haze is the first realistic nonhomogeneous haze dataset that provides ground truth images. The nonhomogeneous haze has been produced using a professional haze generator that imitates the real conditions of hazy scenes. 168 participants registered in the challenge and 27 teams competed in the final testing phase. The proposed solutions gauge the state-of-the-art in image dehazing.

Dense Haze: A benchmark for image dehazing with dense-haze and haze-free images

Apr 05, 2019

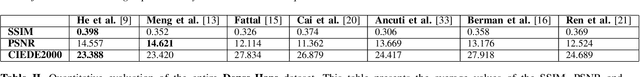

Abstract:Single image dehazing is an ill-posed problem that has recently drawn considerable attention. Despite the significant increase in interest shown for dehazing over the past few years, the validation of dehazing methods remains largely unsatisfactory, due to the lack of pairs of real hazy and corresponding haze-free reference images. To address this limitation, we introduce Dense-Haze, a novel dehazing dataset. Characterized by dense and homogeneous hazy scenes, Dense-Haze contains 33 pairs of real hazy and corresponding haze-free images of various outdoor scenes. The hazy scenes have been recorded by introducing real haze, generated by professional haze machines. The hazy and corresponding haze-free scenes contain the same visual content captured under the same illumination parameters. The Dense-Haze dataset aims to significantly advance the state of the art in single-image dehazing by promoting methods that are robust across real and varied hazy scenes. We also provide a comprehensive qualitative and quantitative evaluation of state-of-the-art single image dehazing techniques based on the Dense-Haze dataset. Not surprisingly, our study reveals that the existing dehazing techniques perform poorly for dense homogeneous hazy scenes and that there is still much room for improvement.
