Bailiang Jian

Evo-MedAgent: Beyond One-Shot Diagnosis with Agents That Remember, Reflect, and Improve

Apr 15, 2026Abstract:Tool-augmented large language model (LLM) agents can orchestrate specialist classifiers, segmentation models, and visual question-answering modules to interpret chest X-rays. However, these agents still solve each case in isolation: they fail to accumulate experience across cases, correct recurrent reasoning mistakes, or adapt their tool-use behavior without expensive reinforcement learning. While a radiologist naturally improves with every case, current agents remain static. In this work, we propose Evo-MedAgent, a self-evolving memory module that equips a medical agent with the capacity for inter-case learning at test time. Our memory comprises three complementary stores: (1)~\emph{Retrospective Clinical Episodes} that retrieve problem-solving experiences from similar past cases, (2)~an \emph{Adaptive Procedural Heuristics} bank curating priority-tagged diagnostic rules that evolves via reflection, much like a physician refining their internal criteria, and (3)~a \emph{Tool Reliability Controller} that tracks per-tool trustworthiness. On ChestAgentBench, Evo-MedAgent raises multiple-choice question (MCQ) accuracy from 0.68 to 0.79 on GPT-5-mini, and from 0.76 to 0.87 on Gemini-3 Flash. With a strong base model, evolving memory improves performance more effectively than orchestrating external tools on qualitative diagnostic tasks. Because Evo-MedAgent requires no training, its per-case overhead is bounded by one additional retrieval pass and a single reflection call, making it deployable on top of any frozen model.

Beyond the LUMIR challenge: The pathway to foundational registration models

May 30, 2025

Abstract:Medical image challenges have played a transformative role in advancing the field, catalyzing algorithmic innovation and establishing new performance standards across diverse clinical applications. Image registration, a foundational task in neuroimaging pipelines, has similarly benefited from the Learn2Reg initiative. Building on this foundation, we introduce the Large-scale Unsupervised Brain MRI Image Registration (LUMIR) challenge, a next-generation benchmark designed to assess and advance unsupervised brain MRI registration. Distinct from prior challenges that leveraged anatomical label maps for supervision, LUMIR removes this dependency by providing over 4,000 preprocessed T1-weighted brain MRIs for training without any label maps, encouraging biologically plausible deformation modeling through self-supervision. In addition to evaluating performance on 590 held-out test subjects, LUMIR introduces a rigorous suite of zero-shot generalization tasks, spanning out-of-domain imaging modalities (e.g., FLAIR, T2-weighted, T2*-weighted), disease populations (e.g., Alzheimer's disease), acquisition protocols (e.g., 9.4T MRI), and species (e.g., macaque brains). A total of 1,158 subjects and over 4,000 image pairs were included for evaluation. Performance was assessed using both segmentation-based metrics (Dice coefficient, 95th percentile Hausdorff distance) and landmark-based registration accuracy (target registration error). Across both in-domain and zero-shot tasks, deep learning-based methods consistently achieved state-of-the-art accuracy while producing anatomically plausible deformation fields. The top-performing deep learning-based models demonstrated diffeomorphic properties and inverse consistency, outperforming several leading optimization-based methods, and showing strong robustness to most domain shifts, the exception being a drop in performance on out-of-domain contrasts.

TimeFlow: Longitudinal Brain Image Registration and Aging Progression Analysis

Jan 15, 2025

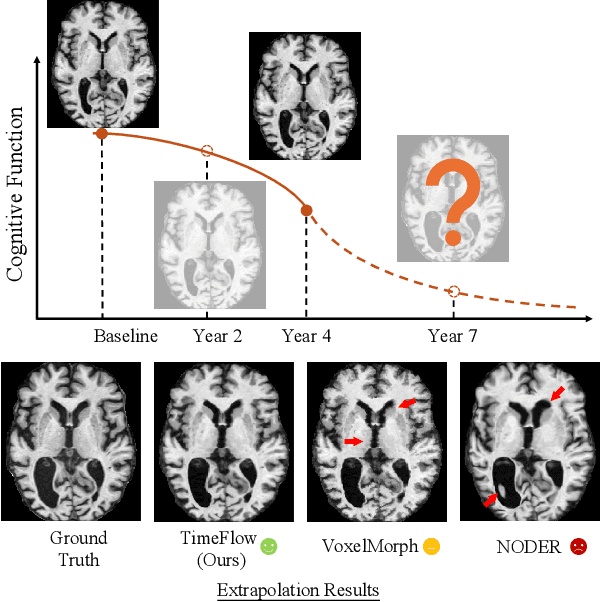

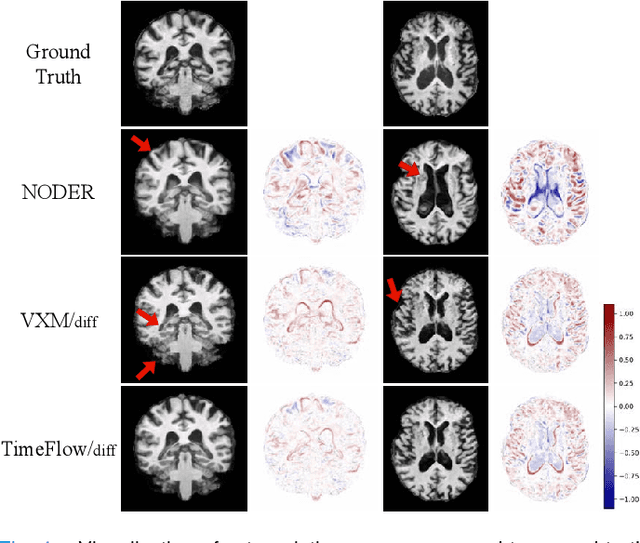

Abstract:Predicting future brain states is crucial for understanding healthy aging and neurodegenerative diseases. Longitudinal brain MRI registration, a cornerstone for such analyses, has long been limited by its inability to forecast future developments, reliance on extensive, dense longitudinal data, and the need to balance registration accuracy with temporal smoothness. In this work, we present \emph{TimeFlow}, a novel framework for longitudinal brain MRI registration that overcomes all these challenges. Leveraging a U-Net architecture with temporal conditioning inspired by diffusion models, TimeFlow enables accurate longitudinal registration and facilitates prospective analyses through future image prediction. Unlike traditional methods that depend on explicit smoothness regularizers and dense sequential data, TimeFlow achieves temporal consistency and continuity without these constraints. Experimental results highlight its superior performance in both future timepoint prediction and registration accuracy compared to state-of-the-art methods. Additionally, TimeFlow supports novel biological brain aging analyses, effectively differentiating neurodegenerative conditions from healthy aging. It eliminates the need for segmentation, thereby avoiding the challenges of non-trivial annotation and inconsistent segmentation errors. TimeFlow paves the way for accurate, data-efficient, and annotation-free prospective analyses of brain aging and chronic diseases.

Mamba? Catch The Hype Or Rethink What Really Helps for Image Registration

Jul 27, 2024

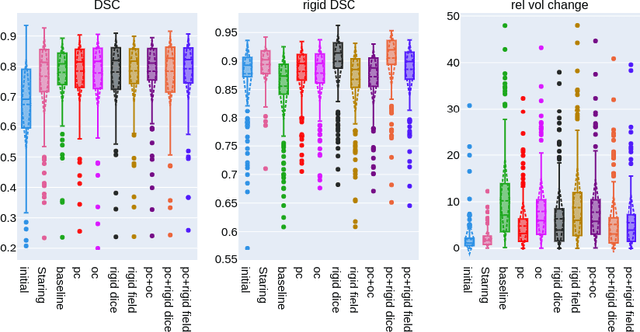

Abstract:Our findings indicate that adopting "advanced" computational elements fails to significantly improve registration accuracy. Instead, well-established registration-specific designs offer fair improvements, enhancing results by a marginal 1.5\% over the baseline. Our findings emphasize the importance of rigorous, unbiased evaluation and contribution disentanglement of all low- and high-level registration components, rather than simply following the computer vision trends with "more advanced" computational blocks. We advocate for simpler yet effective solutions and novel evaluation metrics that go beyond conventional registration accuracy, warranting further research across diverse organs and modalities. The code is available at \url{https://github.com/BailiangJ/rethink-reg}.

Biomedical image analysis competitions: The state of current participation practice

Dec 16, 2022Abstract:The number of international benchmarking competitions is steadily increasing in various fields of machine learning (ML) research and practice. So far, however, little is known about the common practice as well as bottlenecks faced by the community in tackling the research questions posed. To shed light on the status quo of algorithm development in the specific field of biomedical imaging analysis, we designed an international survey that was issued to all participants of challenges conducted in conjunction with the IEEE ISBI 2021 and MICCAI 2021 conferences (80 competitions in total). The survey covered participants' expertise and working environments, their chosen strategies, as well as algorithm characteristics. A median of 72% challenge participants took part in the survey. According to our results, knowledge exchange was the primary incentive (70%) for participation, while the reception of prize money played only a minor role (16%). While a median of 80 working hours was spent on method development, a large portion of participants stated that they did not have enough time for method development (32%). 25% perceived the infrastructure to be a bottleneck. Overall, 94% of all solutions were deep learning-based. Of these, 84% were based on standard architectures. 43% of the respondents reported that the data samples (e.g., images) were too large to be processed at once. This was most commonly addressed by patch-based training (69%), downsampling (37%), and solving 3D analysis tasks as a series of 2D tasks. K-fold cross-validation on the training set was performed by only 37% of the participants and only 50% of the participants performed ensembling based on multiple identical models (61%) or heterogeneous models (39%). 48% of the respondents applied postprocessing steps.

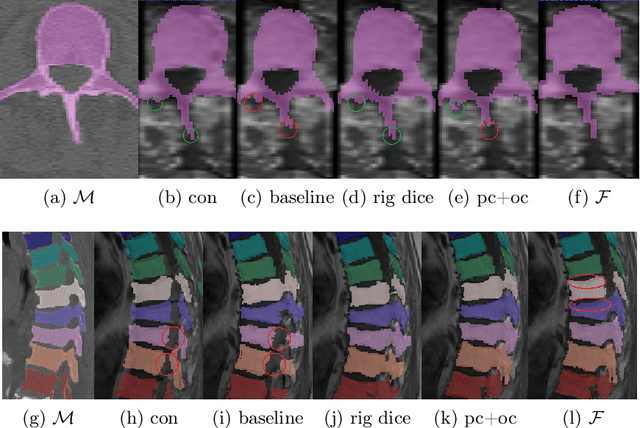

Weakly-supervised Biomechanically-constrained CT/MRI Registration of the Spine

May 16, 2022

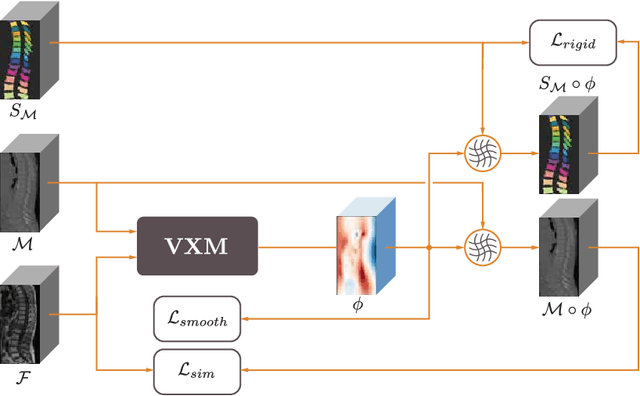

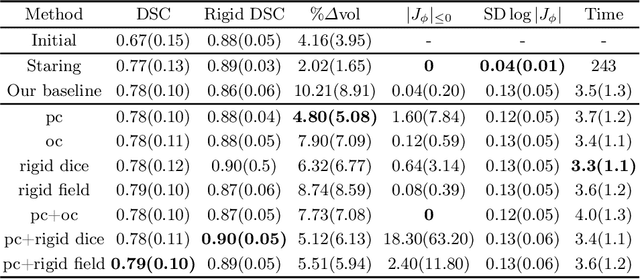

Abstract:CT and MRI are two of the most informative modalities in spinal diagnostics and treatment planning. CT is useful when analysing bony structures, while MRI gives information about the soft tissue. Thus, fusing the information of both modalities can be very beneficial. Registration is the first step for this fusion. While the soft tissues around the vertebra are deformable, each vertebral body is constrained to move rigidly. We propose a weakly-supervised deep learning framework that preserves the rigidity and the volume of each vertebra while maximizing the accuracy of the registration. To achieve this goal, we introduce anatomy-aware losses for training the network. We specifically design these losses to depend only on the CT label maps since automatic vertebra segmentation in CT gives more accurate results contrary to MRI. We evaluate our method on an in-house dataset of 167 patients. Our results show that adding the anatomy-aware losses increases the plausibility of the inferred transformation while keeping the accuracy untouched.

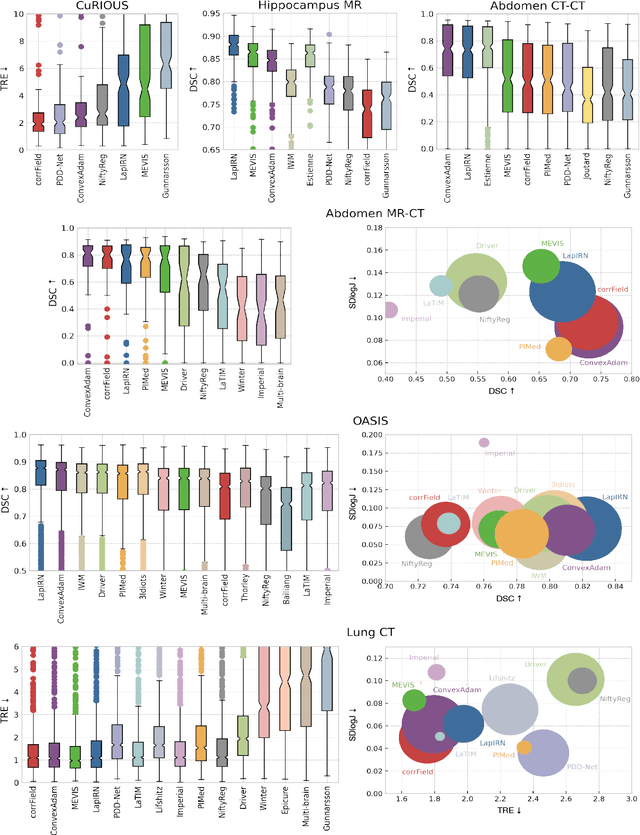

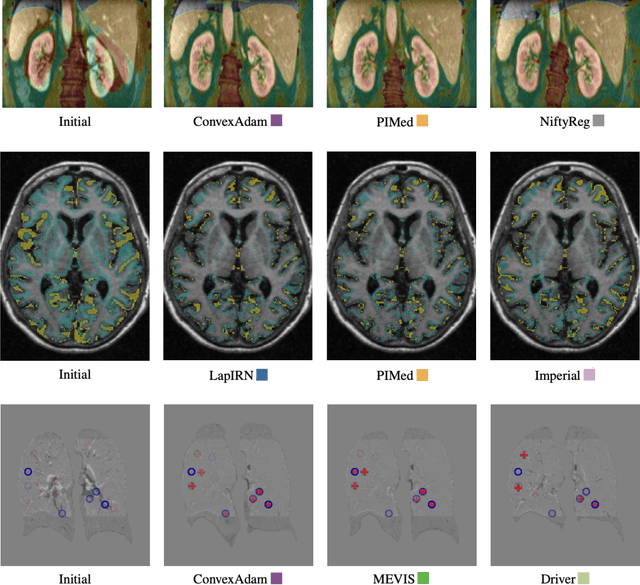

Learn2Reg: comprehensive multi-task medical image registration challenge, dataset and evaluation in the era of deep learning

Dec 23, 2021

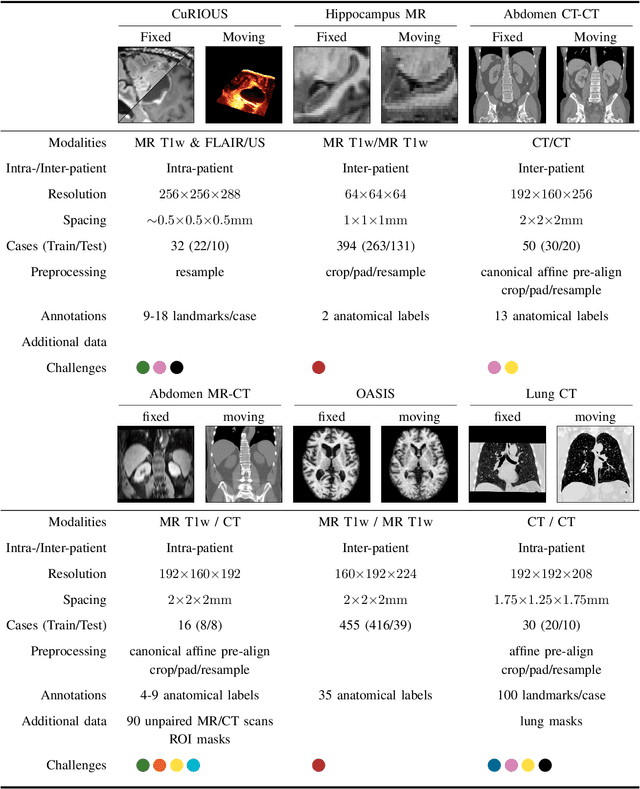

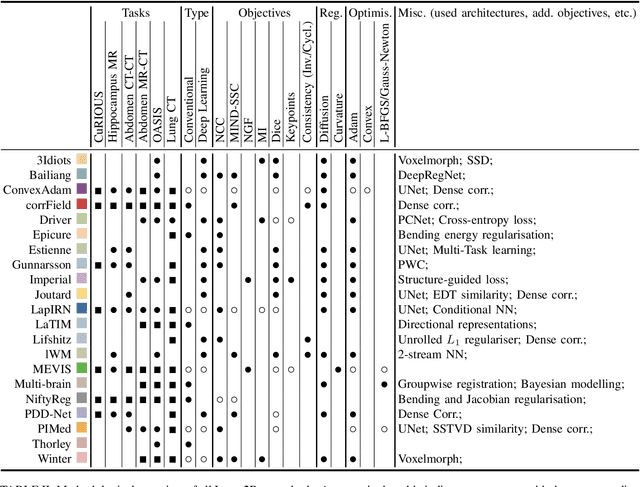

Abstract:Image registration is a fundamental medical image analysis task, and a wide variety of approaches have been proposed. However, only a few studies have comprehensively compared medical image registration approaches on a wide range of clinically relevant tasks, in part because of the lack of availability of such diverse data. This limits the development of registration methods, the adoption of research advances into practice, and a fair benchmark across competing approaches. The Learn2Reg challenge addresses these limitations by providing a multi-task medical image registration benchmark for comprehensive characterisation of deformable registration algorithms. A continuous evaluation will be possible at https://learn2reg.grand-challenge.org. Learn2Reg covers a wide range of anatomies (brain, abdomen, and thorax), modalities (ultrasound, CT, MR), availability of annotations, as well as intra- and inter-patient registration evaluation. We established an easily accessible framework for training and validation of 3D registration methods, which enabled the compilation of results of over 65 individual method submissions from more than 20 unique teams. We used a complementary set of metrics, including robustness, accuracy, plausibility, and runtime, enabling unique insight into the current state-of-the-art of medical image registration. This paper describes datasets, tasks, evaluation methods and results of the challenge, and the results of further analysis of transferability to new datasets, the importance of label supervision, and resulting bias.

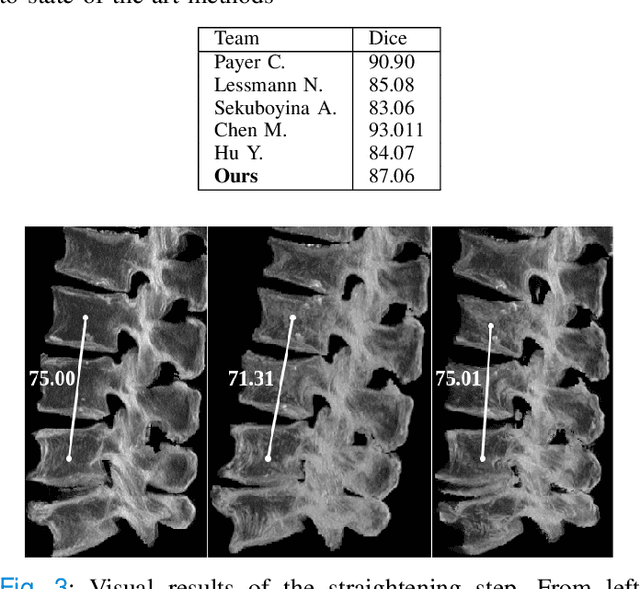

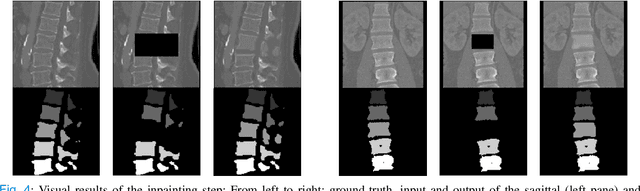

Patient-specific virtual spine straightening and vertebra inpainting: An automatic framework for osteoplasty planning

Mar 23, 2021

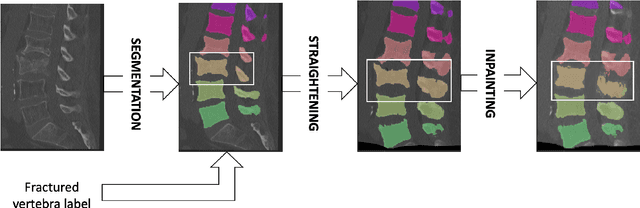

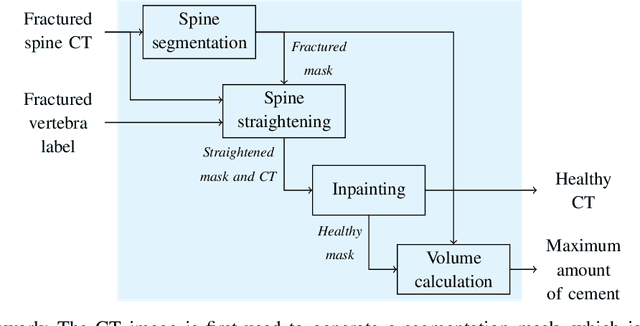

Abstract:Symptomatic spinal vertebral compression fractures (VCFs) often require osteoplasty treatment. A cement-like material is injected into the bone to stabilize the fracture, restore the vertebral body height and alleviate pain. Leakage is a common complication and may occur due to too much cement being injected. In this work, we propose an automated patient-specific framework that can allow physicians to calculate an upper bound of cement for the injection and estimate the optimal outcome of osteoplasty. The framework uses the patient CT scan and the fractured vertebra label to build a virtual healthy spine using a high-level approach. Firstly, the fractured spine is segmented with a three-step Convolution Neural Network (CNN) architecture. Next, a per-vertebra rigid registration to a healthy spine atlas restores its curvature. Finally, a GAN-based inpainting approach replaces the fractured vertebra with an estimation of its original shape. Based on this outcome, we then estimate the maximum amount of bone cement for injection. We evaluate our framework by comparing the virtual vertebrae volumes of ten patients to their healthy equivalent and report an average error of 3.88$\pm$7.63\%. The presented pipeline offers a first approach to a personalized automatic high-level framework for planning osteoplasty procedures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge