"Time": models, code, and papers

How Smart Guessing Strategies Can Yield Massive Scalability Improvements for Sparse Decision Tree Optimization

Dec 01, 2021

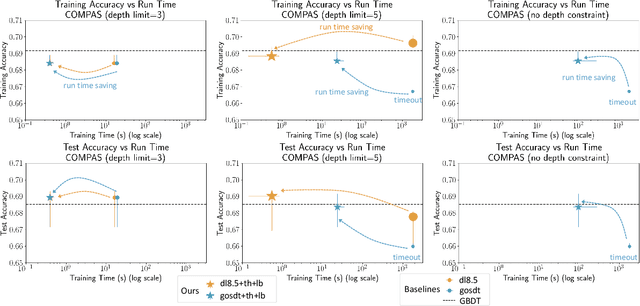

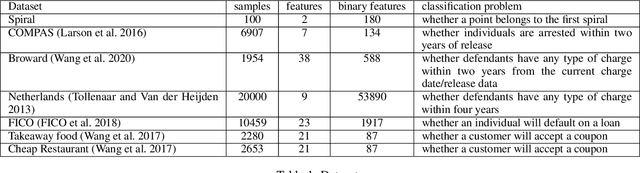

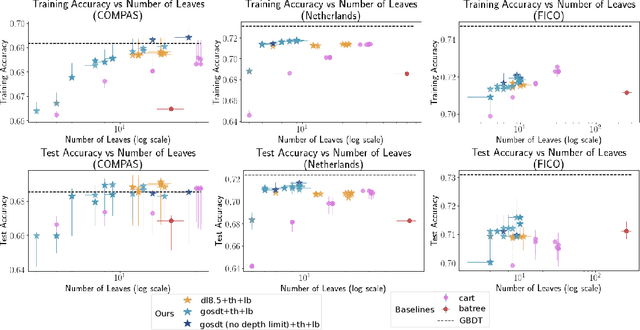

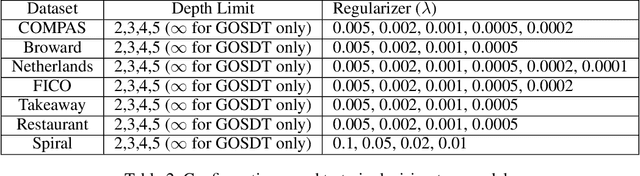

Sparse decision tree optimization has been one of the most fundamental problems in AI since its inception and is a challenge at the core of interpretable machine learning. Sparse decision tree optimization is computationally hard, and despite steady effort since the 1960s, breakthroughs have only been made on the problem within the past few years, primarily on the problem of finding optimal sparse decision trees. However, current state-of-the-art algorithms often require impractical amounts of computation time and memory to find optimal or near-optimal trees for some real-world datasets, particularly those having several continuous-valued features. Given that the search spaces of these decision tree optimization problems are massive, can we practically hope to find a sparse decision tree that competes in accuracy with a black box machine learning model? We address this problem via smart guessing strategies that can be applied to any optimal branch-and-bound-based decision tree algorithm. We show that by using these guesses, we can reduce the run time by multiple orders of magnitude, while providing bounds on how far the resulting trees can deviate from the black box's accuracy and expressive power. Our approach enables guesses about how to bin continuous features, the size of the tree, and lower bounds on the error for the optimal decision tree. Our experiments show that in many cases we can rapidly construct sparse decision trees that match the accuracy of black box models. To summarize: when you are having trouble optimizing, just guess.
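As a rough illustration of why guessing how to bin continuous features shrinks the search space, the sketch below compares the exhaustive set of candidate split thresholds for one feature with a small guessed subset. The `ages` data, the `guessed` thresholds, and both helper functions are hypothetical and are not taken from the paper; in particular, how the guesses are obtained is not shown here.

```python
def all_midpoint_thresholds(values):
    """Every candidate split: midpoints between consecutive distinct values."""
    uniq = sorted(set(values))
    return [(a + b) / 2 for a, b in zip(uniq, uniq[1:])]


def binarize(values, thresholds):
    """Encode each value as one binary feature per threshold (1 if value <= t)."""
    return [[1 if v <= t else 0 for t in thresholds] for v in values]


# Hypothetical continuous feature (illustrative data only).
ages = [22, 25, 31, 38, 45, 52, 60, 67]

# Exhaustive binarization: one binary feature per midpoint.
exhaustive = all_midpoint_thresholds(ages)

# Guessed thresholds (assumed to come from some reference model; hypothetical).
guessed = [34.5, 56.0]
binary_features = binarize(ages, guessed)

print(len(exhaustive), "candidate splits reduced to", len(guessed))
```

A branch-and-bound search over the guessed binary features only has to consider two splits for this feature instead of seven, which is where the run-time savings would come from in this toy setting.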

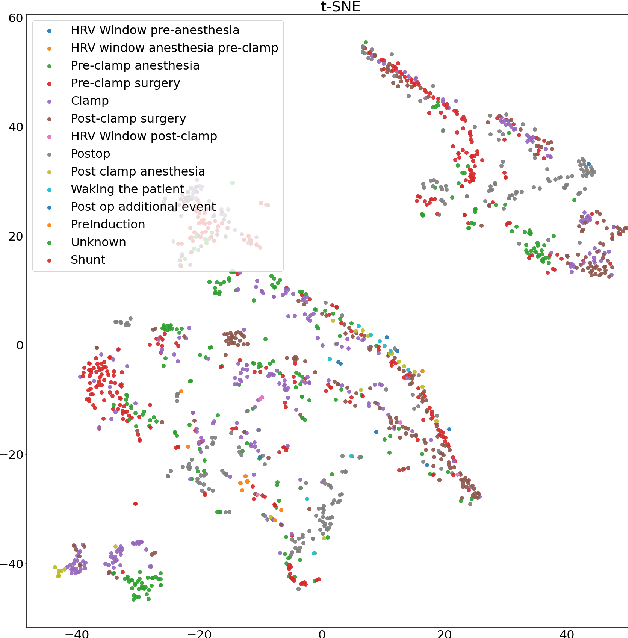

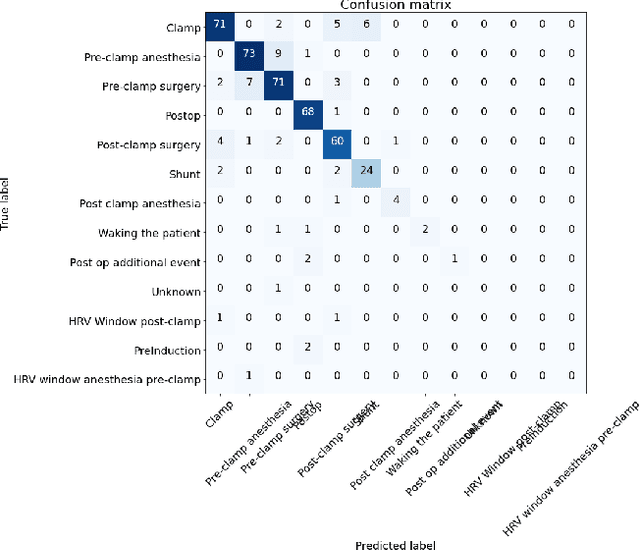

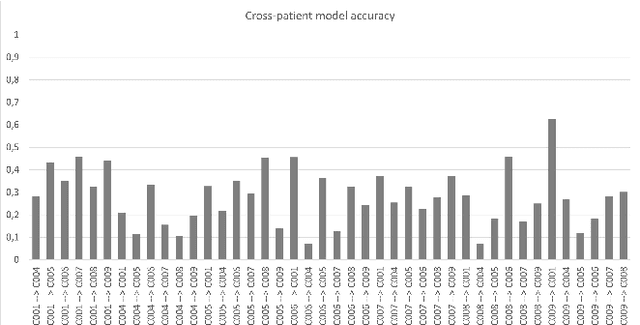

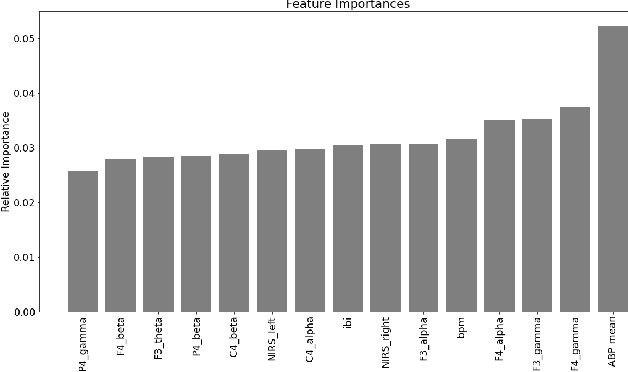

Towards Trustworthy Cross-patient Model Development

Dec 20, 2021

Machine learning is used in medicine to support physicians in examination, diagnosis, and predicting outcomes. One of the most dynamic areas is the use of patient-generated health data from intensive care units. The goal of this paper is to demonstrate how we advance cross-patient ML model development by combining patients' demographic data with their physiological data. We used a population of patients undergoing Carotid Endarterectomy (CEA), where we studied differences in model performance and explainability when trained for all patients and one patient at a time. The results show that patients' demographics have a large impact on performance and explainability, and thus on trustworthiness. We conclude that we can increase trust in ML models in a cross-patient context by careful selection of models and patients based on their demographics and the surgical procedure.

Unadjusted Langevin algorithm for sampling a mixture of weakly smooth potentials

Dec 17, 2021

Discretization of continuous-time diffusion processes is a widely recognized method for sampling. However, requiring the potentials to be smooth (gradient Lipschitz) is a considerable restriction. This paper studies the problem of sampling through Euler discretization, where the potential function is assumed to be a mixture of weakly smooth distributions and to satisfy a weak dissipativity condition. We establish convergence in Kullback-Leibler (KL) divergence, with the number of iterations needed to reach an $\epsilon$-neighborhood of the target distribution depending only polynomially on the dimension. We relax the degenerate convexity at infinity condition of \citet{erdogdu2020convergence} and prove convergence guarantees under a Poincaré inequality or non-strong convexity outside a ball. In addition, we also provide convergence in the $L_{\beta}$-Wasserstein metric for the smoothing potential.
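For readers unfamiliar with the method, here is a minimal one-dimensional sketch of the unadjusted Langevin algorithm, that is, the Euler discretization $x_{k+1} = x_k - \eta \nabla U(x_k) + \sqrt{2\eta}\,\xi_k$ with standard normal $\xi_k$. The potential $U(x) = |x|^{1.5}/1.5$, the step size, and the iteration count are illustrative assumptions, not the setting analyzed in the paper.

```python
import math
import random


def ula_sample(grad_potential, x0, step, n_steps, rng):
    """Unadjusted Langevin algorithm in one dimension.

    Iterates x <- x - step * grad U(x) + sqrt(2 * step) * xi, xi ~ N(0, 1).
    No Metropolis accept/reject correction is applied.
    """
    x = x0
    samples = []
    for _ in range(n_steps):
        noise = rng.gauss(0.0, 1.0)
        x = x - step * grad_potential(x) + math.sqrt(2.0 * step) * noise
        samples.append(x)
    return samples


def grad_u(x):
    # Assumed example potential U(x) = |x|**1.5 / 1.5. Its gradient,
    # sign(x) * sqrt(|x|), is Hoelder continuous but not Lipschitz at 0,
    # so U is weakly smooth rather than smooth.
    return math.copysign(math.sqrt(abs(x)), x)


rng = random.Random(0)
samples = ula_sample(grad_u, x0=3.0, step=0.05, n_steps=40000, rng=rng)

# Discard an initial burn-in portion before summarizing the chain.
burned = samples[2000:]
mean = sum(burned) / len(burned)
print("sample mean after burn-in:", mean)
```

Because the target density is symmetric around zero, the sample mean should settle near zero; the discretization introduces a bias that depends on the step size, which is the kind of error the convergence analysis quantifies.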

Control of Unknown Nonlinear Systems with Linear Time-Varying MPC

Apr 09, 2020

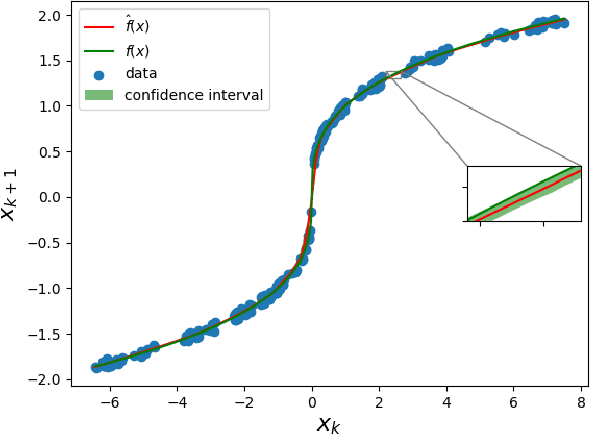

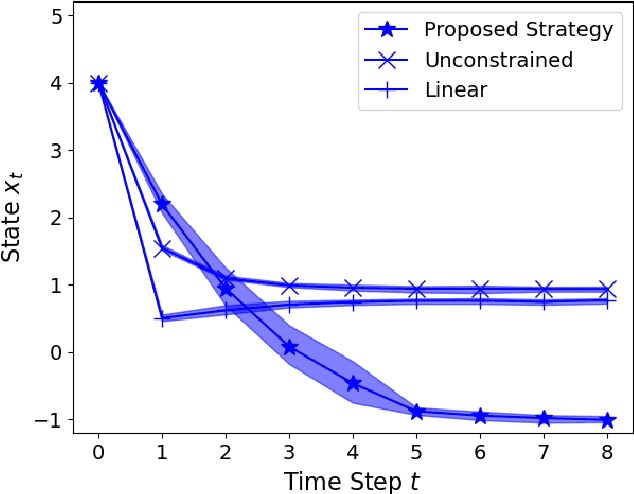

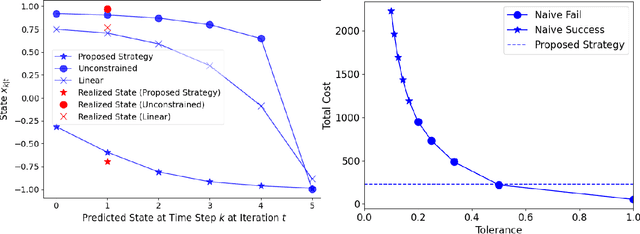

We present a Model Predictive Control (MPC) strategy for unknown input-affine nonlinear dynamical systems. A non-parametric method is used to estimate the nonlinear dynamics from observed data. The estimated nonlinear dynamics are then linearized over time varying regions of the state space to construct an Affine Time Varying (ATV) model. Error bounds arising from the estimation and linearization procedure are computed by using sampling techniques. The ATV model and the uncertainty sets are used to design a robust Model Predictive Control (MPC) problem which guarantees safety for the unknown system with high probability. A simple nonlinear example demonstrates the effectiveness of the approach where commonly used linearization methods fail.
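The linearization step can be sketched as follows: given some estimate `f(x, u)` of the dynamics, a finite-difference Jacobian around an operating point yields an affine model $x^+ \approx A x + B u + c$. This is only a sketch under simplifying assumptions: a known pendulum-like function stands in for the non-parametric estimate, linearization is done at a single point rather than over a region, and no error bounds are computed. All names (`linearize`, `pendulum_step`, `affine_predict`, `DT`) are hypothetical.

```python
import math


def linearize(f, x0, u0, eps=1e-6):
    """Return (A, B, c) with f(x, u) ~= A x + B u + c near (x0, u0).

    A and B are central finite-difference Jacobians of f.
    """
    n, m = len(x0), len(u0)
    f0 = f(x0, u0)
    A = [[0.0] * n for _ in range(n)]
    B = [[0.0] * m for _ in range(n)]
    for j in range(n):
        xp, xm = list(x0), list(x0)
        xp[j] += eps
        xm[j] -= eps
        fp, fm = f(xp, u0), f(xm, u0)
        for i in range(n):
            A[i][j] = (fp[i] - fm[i]) / (2 * eps)
    for j in range(m):
        up, um = list(u0), list(u0)
        up[j] += eps
        um[j] -= eps
        fp, fm = f(x0, up), f(x0, um)
        for i in range(n):
            B[i][j] = (fp[i] - fm[i]) / (2 * eps)
    # Affine offset chosen so the model matches f exactly at (x0, u0).
    c = [
        f0[i]
        - sum(A[i][k] * x0[k] for k in range(n))
        - sum(B[i][k] * u0[k] for k in range(m))
        for i in range(n)
    ]
    return A, B, c


def affine_predict(A, B, c, x, u):
    """Evaluate the affine model A x + B u + c."""
    return [
        sum(A[i][k] * x[k] for k in range(len(x)))
        + sum(B[i][k] * u[k] for k in range(len(u)))
        + c[i]
        for i in range(len(c))
    ]


DT = 0.1  # assumed sampling time


def pendulum_step(x, u):
    # Stand-in for the estimated dynamics; input-affine in u.
    theta, omega = x
    return [theta + DT * omega, omega + DT * (-math.sin(theta) + u[0])]


x0, u0 = [0.5, 0.0], [0.0]
A, B, c = linearize(pendulum_step, x0, u0)
print(A, B, c)
```

Repeating this at each point of a predicted trajectory would give one `(A, B, c)` triple per time step, which is the sense in which such a model is time varying.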

Egocentric Deep Multi-Channel Audio-Visual Active Speaker Localization

Jan 06, 2022

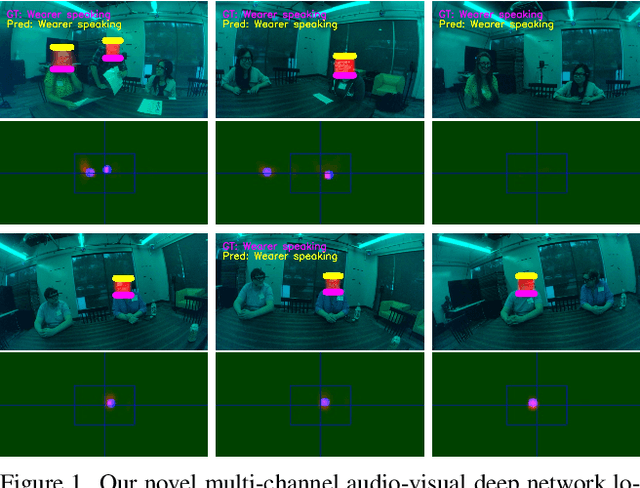

Augmented reality devices have the potential to enhance human perception and enable other assistive functionalities in complex conversational environments. Effectively capturing the audio-visual context necessary for understanding these social interactions first requires detecting and localizing the voice activities of the device wearer and the surrounding people. These tasks are challenging due to their egocentric nature: the wearer's head motion may cause motion blur, surrounding people may appear in difficult viewing angles, and there may be occlusions, visual clutter, audio noise, and bad lighting. Under these conditions, previous state-of-the-art active speaker detection methods do not give satisfactory results. Instead, we tackle the problem from a new setting using both video and multi-channel microphone array audio. We propose a novel end-to-end deep learning approach that is able to give robust voice activity detection and localization results. In contrast to previous methods, our method localizes active speakers from all possible directions on the sphere, even outside the camera's field of view, while simultaneously detecting the device wearer's own voice activity. Our experiments show that the proposed method gives superior results, can run in real time, and is robust against noise and clutter.

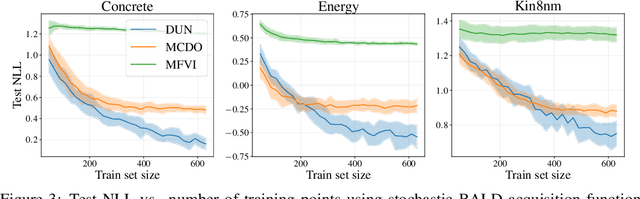

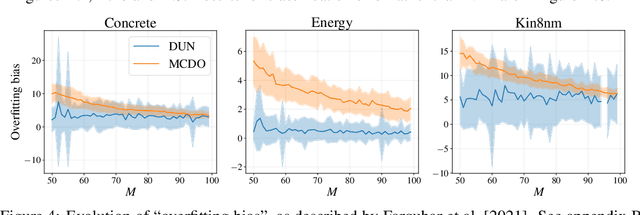

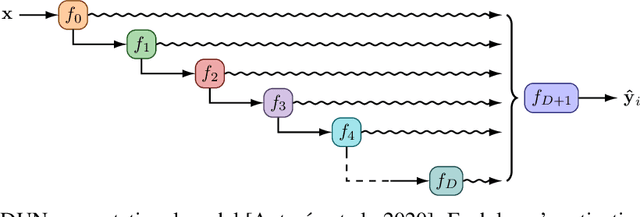

Depth Uncertainty Networks for Active Learning

Dec 13, 2021

In active learning, the size and complexity of the training dataset change over time. Simple models that are well specified by the amount of data available at the start of active learning might suffer from bias as more points are actively sampled. Flexible models that might be well suited to the full dataset can suffer from overfitting towards the start of active learning. We tackle this problem using Depth Uncertainty Networks (DUNs), a BNN variant in which the depth of the network, and thus its complexity, is inferred. We find that DUNs outperform other BNN variants on several active learning tasks. Importantly, we show that on the tasks in which DUNs perform best they present notably less overfitting than baselines.
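One way to picture inference over depth is to treat depth as a discrete variable: each depth gives its own prediction, and the final prediction averages them under a distribution over depths. The sketch below assumes hypothetical per-depth log scores and class probabilities; it does not reproduce how the paper trains the network or chooses points to label.

```python
import math


def depth_posterior(log_scores):
    """Normalize unnormalized log weights over depths (a stable softmax)."""
    m = max(log_scores)
    weights = [math.exp(s - m) for s in log_scores]
    total = sum(weights)
    return [w / total for w in weights]


def marginal_prediction(per_depth_probs, posterior):
    """Average class probabilities over depths, weighted by the posterior."""
    n_classes = len(per_depth_probs[0])
    return [
        sum(q * probs[c] for q, probs in zip(posterior, per_depth_probs))
        for c in range(n_classes)
    ]


def entropy(p):
    """Predictive entropy, a common uncertainty score in active learning."""
    return -sum(v * math.log(v) for v in p if v > 0)


# Hypothetical numbers: log score and class probabilities for 3 depths.
log_scores = [-120.0, -118.5, -119.0]
per_depth_probs = [[0.9, 0.1], [0.6, 0.4], [0.7, 0.3]]

posterior = depth_posterior(log_scores)
marginal = marginal_prediction(per_depth_probs, posterior)
print(posterior, marginal, entropy(marginal))
```

With little data the weights would typically favor shallow depths and shift toward deeper ones as more points are labeled, which is the mechanism the abstract credits for reduced overfitting.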

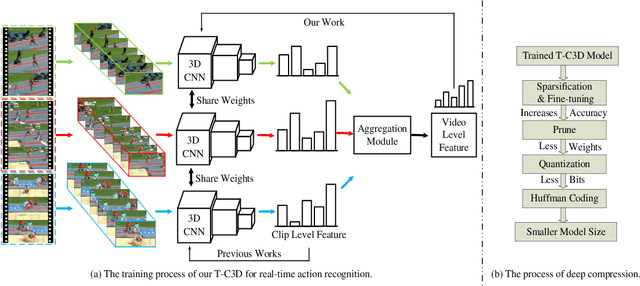

A Real-time Action Representation with Temporal Encoding and Deep Compression

Jun 17, 2020

Deep neural networks have achieved remarkable success for video-based action recognition. However, most existing approaches cannot be deployed in practice due to their high computational cost. To address this challenge, we propose a new real-time convolutional architecture, called Temporal Convolutional 3D Network (T-C3D), for action representation. T-C3D learns video action representations in a hierarchical multi-granularity manner while obtaining a high processing speed. Specifically, we propose a residual 3D Convolutional Neural Network (CNN) to capture complementary information on the appearance of a single frame and the motion between consecutive frames. Based on this CNN, we develop a new temporal encoding method to explore the temporal dynamics of the whole video. Furthermore, we integrate deep compression techniques with T-C3D to further accelerate the deployment of models by reducing the size of the model. By these means, heavy calculations can be avoided during inference, which enables the method to process videos at beyond real-time speed while keeping promising performance. Our method achieves clear improvements on the UCF101 action recognition benchmark against state-of-the-art real-time methods: 5.4% higher accuracy and 2 times faster inference, with a model requiring less than 5MB of storage. We validate our approach by studying its action representation performance on four different benchmarks over three different tasks. Extensive experiments demonstrate comparable recognition performance to the state-of-the-art methods. The source code and the pre-trained models are publicly available at https://github.com/tc3d.

Integrated Sensing and Communication with mmWave Massive MIMO: A Compressed Sampling Perspective

Jan 15, 2022

Integrated sensing and communication (ISAC) has opened up numerous game-changing opportunities for realizing future wireless systems. In this paper, we propose an ISAC processing framework relying on millimeter-wave (mmWave) massive multiple-input multiple-output (MIMO) systems. Specifically, we provide a compressed sampling (CS) perspective to facilitate ISAC processing, which can not only recover the large-scale channel state information and/or radar imaging information, but also significantly reduce pilot overhead. First, an energy-efficient widely spaced array (WSA) architecture is tailored for the radar receiver, which enhances the angular resolution of radar sensing at the cost of angular ambiguity. Then, we propose an ISAC frame structure for time-variant ISAC systems considering different timescales. The pilot waveforms are judiciously designed by taking into account both CS theories and hardware constraints. Next, we design the dedicated dictionary for WSA that serves as a building block for formulating the ISAC processing as sparse signal recovery problems. The orthogonal matching pursuit with support refinement (OMP-SR) algorithm is proposed to effectively solve the problems in the presence of the angular ambiguity. We also provide a framework for estimating and compensating the Doppler frequencies during payload data transmission to guarantee communication performance. Simulation results demonstrate the good performance of both communications and radar sensing under the proposed ISAC framework.
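To make the sparse recovery step concrete, the following is a minimal sketch of plain orthogonal matching pursuit (OMP) on a small real-valued dictionary. It is not the OMP-SR variant proposed in the paper: there is no support refinement, no complex-valued signals, and no WSA dictionary. The toy dictionary and the helper names are assumptions for illustration.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def solve(G, b):
    """Solve G z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(G)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    z = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * z[j] for j in range(i + 1, n))
        z[i] = (M[i][n] - tail) / M[i][i]
    return z


def omp(atoms, y, sparsity):
    """Greedy sparse recovery: returns (support indices, coefficients)."""
    residual = list(y)
    support, coeffs = [], []
    for _ in range(sparsity):
        # Select the unused atom most correlated with the current residual.
        candidates = [j for j in range(len(atoms)) if j not in support]
        best = max(
            candidates,
            key=lambda j: abs(dot(atoms[j], residual))
            / math.sqrt(dot(atoms[j], atoms[j])),
        )
        support.append(best)
        # Least-squares refit on the selected atoms via the normal equations.
        G = [[dot(atoms[i], atoms[j]) for j in support] for i in support]
        rhs = [dot(atoms[i], y) for i in support]
        coeffs = solve(G, rhs)
        residual = [
            y[k] - sum(c * atoms[j][k] for c, j in zip(coeffs, support))
            for k in range(len(y))
        ]
    return support, coeffs


# Toy overcomplete dictionary: 5 unit-norm atoms in R^4.
atoms = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
    [0.5, 0.5, 0.5, 0.5],
]
# Measurement built from two atoms: y = 2 * atoms[1] - 3 * atoms[4].
y = [-1.5, 0.5, -1.5, -1.5]

support, coeffs = omp(atoms, y, sparsity=2)
print(support, coeffs)
```

In this toy case the two generating atoms are recovered along with their coefficients. When several atoms are nearly indistinguishable, as with angular ambiguity, the greedy selection can pick the wrong one, which is the situation a refinement step is meant to address.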

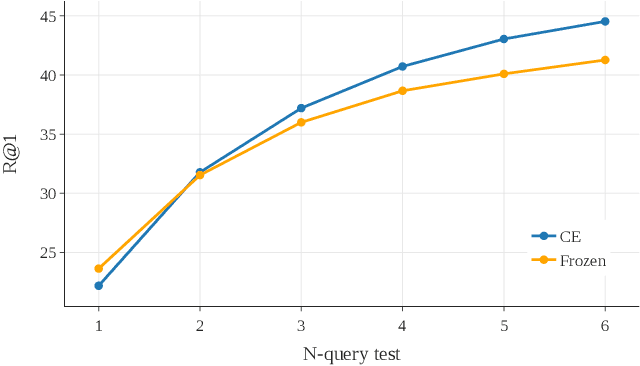

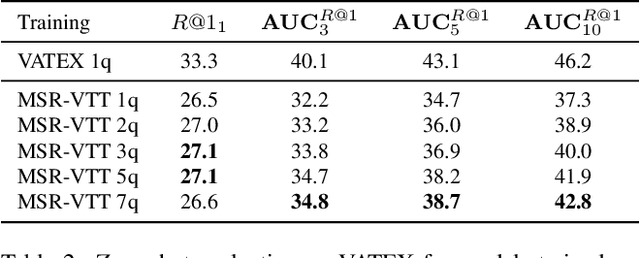

Multi-query Video Retrieval

Jan 10, 2022

Retrieving target videos based on text descriptions is a task of great practical value and has received increasing attention over the past few years. In this paper, we focus on the less-studied setting of multi-query video retrieval, where multiple queries are provided to the model for searching over the video archive. We first show that the multi-query retrieval task is more pragmatic and representative of real-world use cases and better evaluates retrieval capabilities of current models, thereby deserving of further investigation alongside the more prevalent single-query retrieval setup. We then propose several new methods for leveraging multiple queries at training time to improve over simply combining similarity outputs of multiple queries from regular single-query trained models. Our models consistently outperform several competitive baselines over three different datasets. For instance, Recall@1 can be improved by 4.7 points on MSR-VTT, 4.1 points on MSVD and 11.7 points on VATEX over a strong baseline built on the state-of-the-art CLIP4Clip model. We believe further modeling efforts will bring new insights to this direction and spark new systems that perform better in real-world video retrieval applications. Code is available at https://github.com/princetonvisualai/MQVR.
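The simple baseline mentioned in the abstract, combining similarity outputs of several queries, can be sketched as averaging per-query similarity scores before ranking. The similarity values below are made up for illustration, and mean pooling is only one assumed way of combining scores; the training-time methods proposed in the paper are not shown.

```python
def combine_scores(per_query_scores):
    """Average the similarity scores of several queries, video by video."""
    n_queries = len(per_query_scores)
    n_videos = len(per_query_scores[0])
    return [
        sum(scores[v] for scores in per_query_scores) / n_queries
        for v in range(n_videos)
    ]


def recall_at_k(scores, target, k=1):
    """1.0 if the target video is among the k highest-scoring videos."""
    ranked = sorted(range(len(scores)), key=lambda v: scores[v], reverse=True)
    return 1.0 if target in ranked[:k] else 0.0


# Hypothetical similarities of two text queries to three videos.
# Both queries describe video 0, but the first one is ambiguous.
query_a = [0.6, 0.7, 0.1]
query_b = [0.8, 0.3, 0.2]
target = 0

combined = combine_scores([query_a, query_b])
print("single query:", recall_at_k(query_a, target))
print("two queries: ", recall_at_k(combined, target))
```

Here the ambiguous query alone ranks the wrong video first, while the averaged scores rank the target first, which illustrates why multiple queries can make retrieval more robust.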

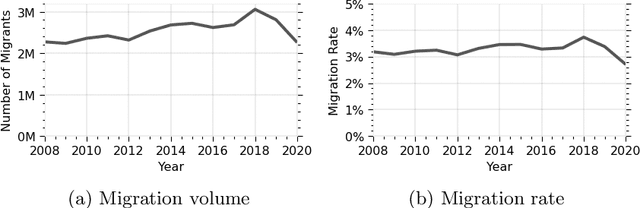

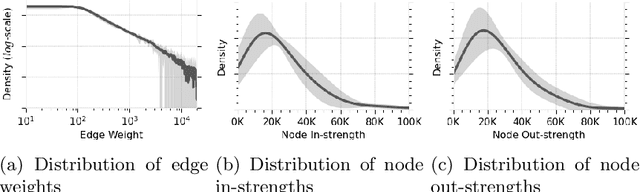

Investigating internal migration with network analysis and latent space representations: An application to Turkey

Jan 10, 2022

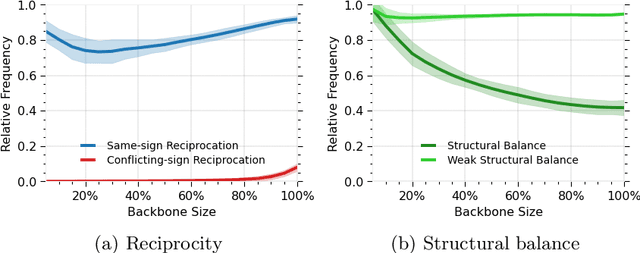

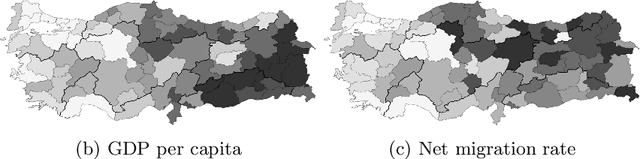

Human migration patterns influence the redistribution of population characteristics over the geography and since such distributions are closely related to social and economic outcomes, investigating the structure and dynamics of internal migration plays a crucial role in understanding and designing policies for such systems. We provide an in-depth investigation into the structure and dynamics of the internal migration in Turkey from 2008 to 2020. We identify a set of classical migration laws and examine them via various methods for signed network analysis, ego network analysis, representation learning, temporal stability analysis, community detection, and network visualization. The findings show that, in line with the classical migration laws, most migration links are geographically bounded with several exceptions involving cities with large economic activity, major migration flows are countered with migration flows in the opposite direction, there are well-defined migration routes, and the migration system is generally stable over the investigated period. Apart from these general results, we also provide unique and specific insights into Turkey. Overall, the novel toolset we employ for the first time in the literature allows the investigation of selected migration laws from a complex networks perspective and sheds light on future migration research on different geographies.
