"Time": models, code, and papers

EfficientSRFace: An Efficient Network with Super-Resolution Enhancement for Accurate Face Detection

Jun 04, 2023

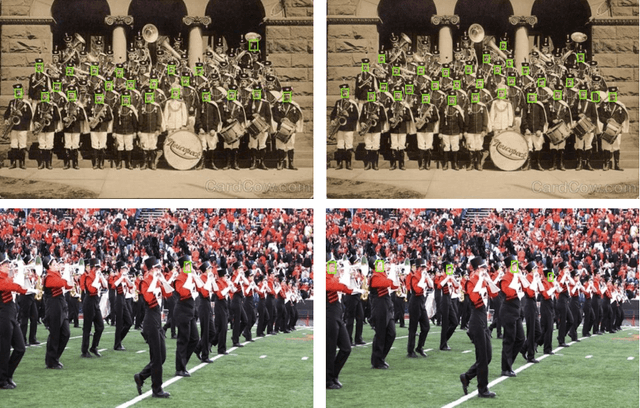

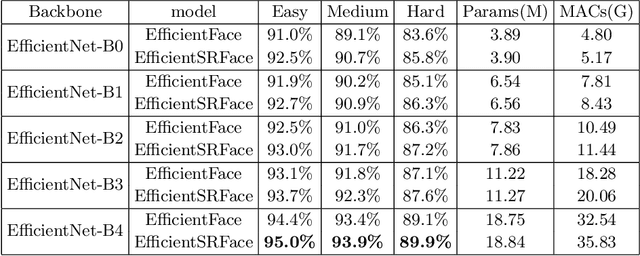

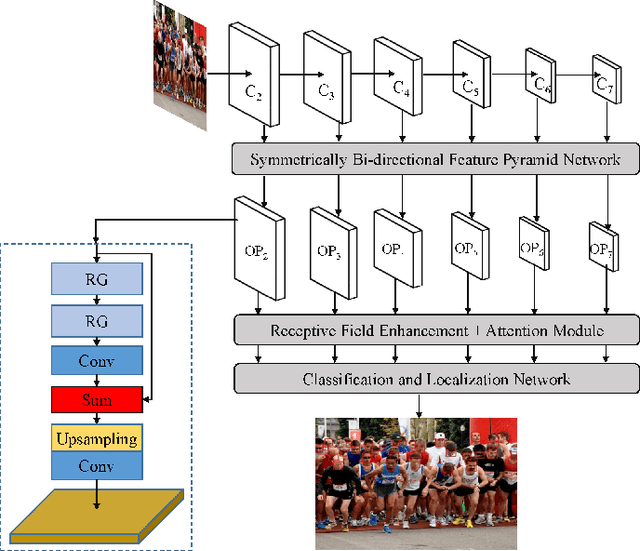

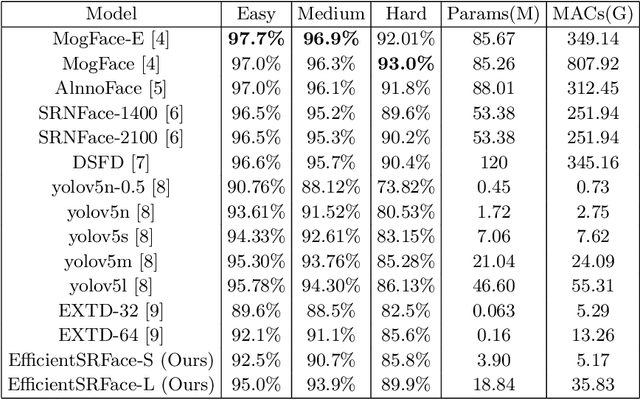

In face detection, low-resolution faces, such as numerous small faces of a human group in a crowded scene, are common in dense face prediction tasks. They usually contain limited visual clues and make small faces less distinguishable from the other small objects, which poses a great challenge to accurate face detection. Although deep convolutional neural networks have significantly promoted the research on face detection recently, current deep face detectors rarely take into account low-resolution faces and are still vulnerable to real-world scenarios where massive numbers of low-resolution faces exist. Consequently, they usually achieve degraded performance for low-resolution face detection. In order to alleviate this problem, we develop an efficient detector termed EfficientSRFace by introducing a feature-level super-resolution reconstruction network for enhancing the feature representation capability of the model. This module plays an auxiliary role in the training process, and can be removed during the inference without increasing the inference time. Extensive experiments on public benchmarking datasets, such as FDDB and WIDER Face, show that the embedded image super-resolution module can significantly improve the detection accuracy at the cost of a small number of additional parameters and a small computational overhead, while helping our model achieve competitive performance compared with the state-of-the-art methods.

The Power Of Simplicity: Why Simple Linear Models Outperform Complex Machine Learning Techniques -- Case Of Breast Cancer Diagnosis

Jun 04, 2023

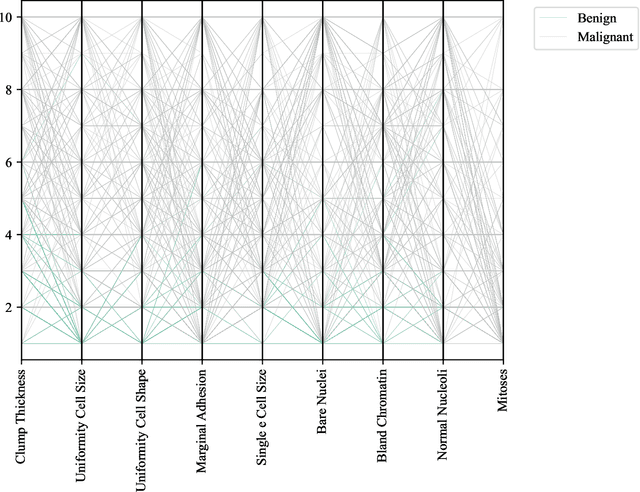

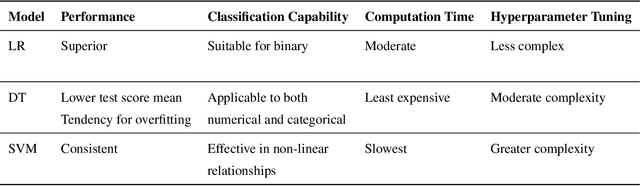

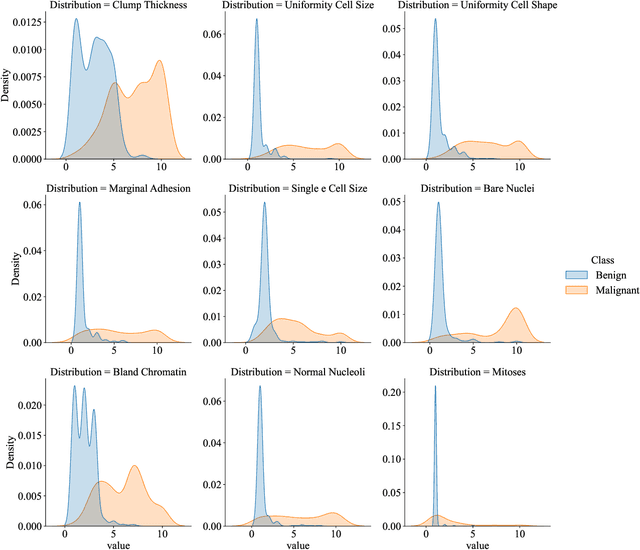

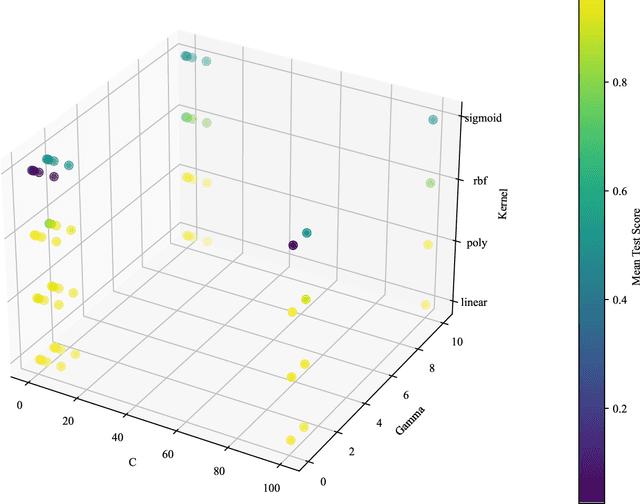

This research paper investigates the effectiveness of simple linear models versus complex machine learning techniques in breast cancer diagnosis, emphasizing the importance of interpretability and computational efficiency in the medical domain. We focus on Logistic Regression (LR), Decision Trees (DT), and Support Vector Machines (SVM) and optimize their performance using the UCI Machine Learning Repository dataset. Our findings demonstrate that the simpler linear model, LR, outperforms the more complex DT and SVM techniques, with a test score mean of 97.28%, a standard deviation of 1.62%, and a computation time of 35.56 ms. In comparison, DT achieved a test score mean of 93.73%, and SVM had a test score mean of 96.44%. The superior performance of LR can be attributed to its simplicity and interpretability, which provide a clear understanding of the relationship between input features and the outcome. This is particularly valuable in the medical domain, where interpretability is crucial for decision-making. Moreover, the computational efficiency of LR offers advantages in terms of scalability and real-world applicability. The results of this study highlight the power of simplicity in the context of breast cancer diagnosis and suggest that simpler linear models like LR can be more effective, interpretable, and computationally efficient than their complex counterparts, making them a more suitable choice for medical applications.

Towards Understanding the Dynamics of Gaussian--Stein Variational Gradient Descent

May 23, 2023

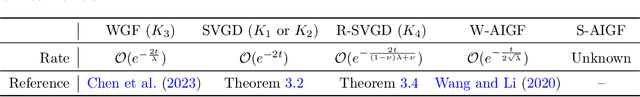

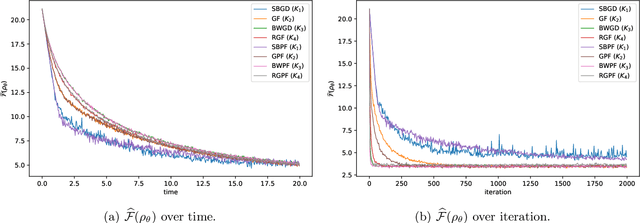

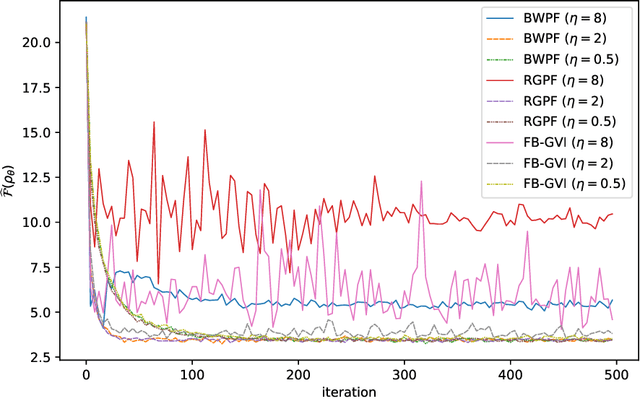

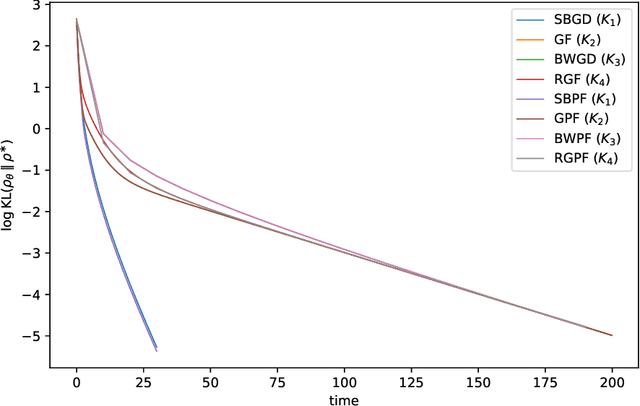

Stein Variational Gradient Descent (SVGD) is a nonparametric particle-based deterministic sampling algorithm. Despite its wide usage, understanding the theoretical properties of SVGD has remained a challenging problem. For sampling from a Gaussian target, the SVGD dynamics with a bilinear kernel will remain Gaussian as long as the initializer is Gaussian. Inspired by this fact, we undertake a detailed theoretical study of the Gaussian-SVGD, i.e., SVGD projected to the family of Gaussian distributions via the bilinear kernel, or equivalently Gaussian variational inference (GVI) with SVGD. We present a complete picture by considering both the mean-field PDE and discrete particle systems. When the target is strongly log-concave, the mean-field Gaussian-SVGD dynamics is proven to converge linearly to the Gaussian distribution closest to the target in KL divergence. In the finite-particle setting, there is both uniform-in-time convergence to the mean-field limit and linear convergence in time to the equilibrium if the target is Gaussian. In the general case, we propose a density-based and a particle-based implementation of the Gaussian-SVGD, and show that several recent algorithms for GVI, proposed from different perspectives, emerge as special cases of our unified framework. Interestingly, one of the new particle-based instances from this framework empirically outperforms existing approaches. Our results make concrete contributions towards obtaining a deeper understanding of both SVGD and GVI.
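The claim that SVGD with a bilinear kernel keeps a Gaussian family closed can be illustrated with a minimal one-dimensional sketch. It assumes the simplest bilinear kernel $k(x, y) = xy + 1$ and a Gaussian target $N(\mu, \sigma^2)$; the kernel choice, step size, initial particles, and function name are illustrative assumptions, not the paper's implementation. Under these assumptions the SVGD direction is affine in $x$, so each step is an affine map of the particle cloud.

```python
def gaussian_svgd_step(particles, mu, sigma2, step):
    """One Euler step of 1-D SVGD with the assumed bilinear kernel k(x, y) = x*y + 1.

    Target is N(mu, sigma2), so score(x) = -(x - mu) / sigma2.
    """
    n = len(particles)
    m1 = sum(particles) / n                 # empirical first moment
    m2 = sum(x * x for x in particles) / n  # empirical second moment
    # phi(x) = (1/n) * sum_j [k(x_j, x) * score(x_j) + d/dx_j k(x_j, x)]
    # simplifies to slope * x + offset, i.e. an affine map of x.
    slope = 1.0 - (m2 - mu * m1) / sigma2
    offset = -(m1 - mu) / sigma2
    return [x + step * (slope * x + offset) for x in particles]


# Illustrative run: evenly spaced particles, target N(1, 1).
particles = [-3.0 + 0.5 * i for i in range(9)]
for _ in range(2000):
    particles = gaussian_svgd_step(particles, mu=1.0, sigma2=1.0, step=0.05)

mean = sum(particles) / len(particles)
var = sum((x - mean) ** 2 for x in particles) / len(particles)
print(round(mean, 4), round(var, 4))
```

In this toy setting the fixed point of the update matches the target's first two moments, which is the one-dimensional analogue of the equilibrium discussed in the abstract.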

Online Learning in Multi-unit Auctions

May 27, 2023

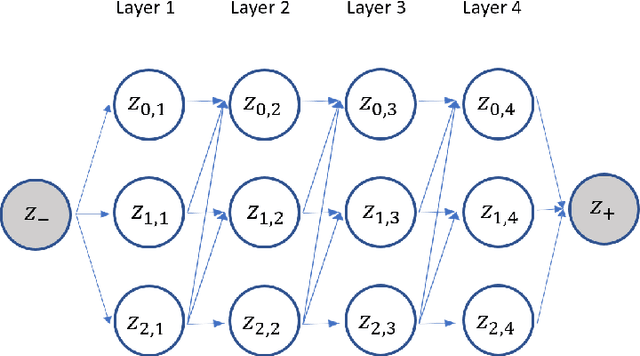

We consider repeated multi-unit auctions with uniform pricing, which are widely used in practice for allocating goods such as carbon licenses. In each round, $K$ identical units of a good are sold to a group of buyers that have valuations with diminishing marginal returns. The buyers submit bids for the units, and then a price $p$ is set per unit so that all the units are sold. We consider two variants of the auction, where the price is set to the $K$-th highest bid and $(K+1)$-st highest bid, respectively. We analyze the properties of this auction in both the offline and online settings. In the offline setting, we consider the problem that one player $i$ is facing: given access to a data set that contains the bids submitted by competitors in past auctions, find a bid vector that maximizes player $i$'s cumulative utility on the data set. We design a polynomial time algorithm for this problem, by showing it is equivalent to finding a maximum-weight path on a carefully constructed directed acyclic graph. In the online setting, the players run learning algorithms to update their bids as they participate in the auction over time. Based on our offline algorithm, we design efficient online learning algorithms for bidding. The algorithms have sublinear regret, under both full information and bandit feedback structures. We complement our online learning algorithms with regret lower bounds. Finally, we analyze the quality of the equilibria in the worst case through the lens of the core solution concept in the game among the bidders. We show that the $(K+1)$-st price format is susceptible to collusion among the bidders; meanwhile, the $K$-th price format does not have this issue.
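The two pricing variants described above can be sketched for a single round as follows. The function names, the dictionary layout of bid vectors, and the fallback price of zero when fewer than $K+1$ bids exist are assumptions made for illustration; ties are not handled specially.

```python
def run_uniform_price_auction(bid_vectors, K, price_rule="K"):
    """Allocate K identical units and set one per-unit price.

    bid_vectors: dict mapping bidder name -> list of bids (one per unit).
    price_rule: "K" uses the K-th highest bid, "K+1" the (K+1)-st highest bid.
    """
    # Pool all bids, highest first; the top K bids each win one unit.
    pooled = sorted(
        ((bid, bidder) for bidder, bids in bid_vectors.items() for bid in bids),
        key=lambda pair: -pair[0],
    )
    units = {bidder: 0 for bidder in bid_vectors}
    for _, bidder in pooled[:K]:
        units[bidder] += 1
    if price_rule == "K":
        price = pooled[K - 1][0]
    else:
        # Assumption: price is 0 if there is no (K+1)-st bid.
        price = pooled[K][0] if len(pooled) > K else 0.0
    return price, units


def utility(marginal_values, units_won, price):
    """Quasi-linear utility: value of the units won minus the payment."""
    return sum(marginal_values[:units_won]) - price * units_won


bids = {"alice": [9, 6, 2], "bob": [8, 5, 1]}
price_k, units_k = run_uniform_price_auction(bids, K=3, price_rule="K")
price_k1, units_k1 = run_uniform_price_auction(bids, K=3, price_rule="K+1")
print(price_k, units_k, price_k1, units_k1)
```

With diminishing marginal values such as `[10, 7, 3]` for alice, `utility` lets one compare the same bid vector under both price formats, which is the quantity the offline problem maximizes over past rounds.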

Embracing Uncertainty: Adaptive Vague Preference Policy Learning for Multi-round Conversational Recommendation

Jun 07, 2023

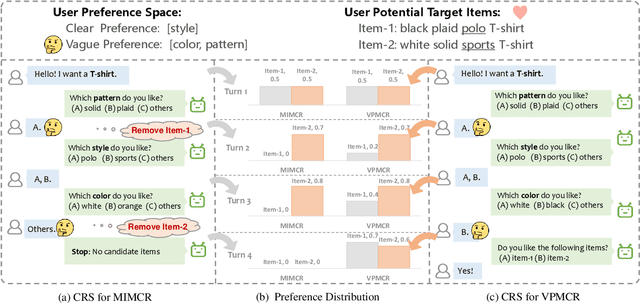

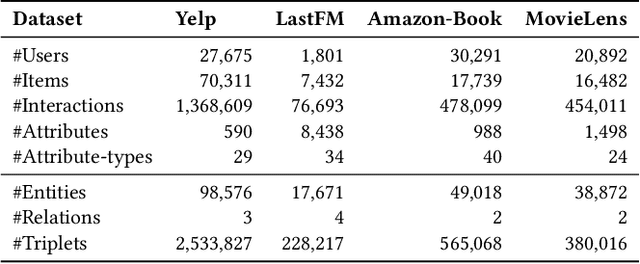

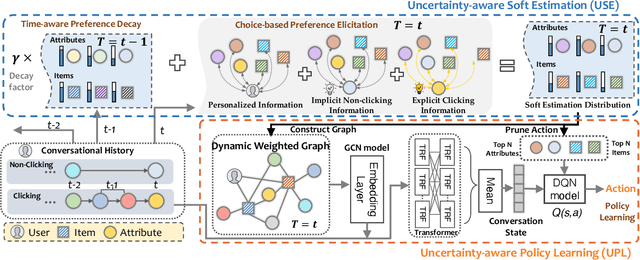

Conversational recommendation systems (CRS) effectively address information asymmetry by dynamically eliciting user preferences through multi-turn interactions. Existing CRS widely assumes that users have clear preferences. Under this assumption, the agent will completely trust the user feedback and treat the accepted or rejected signals as strong indicators to filter items and reduce the candidate space, which may lead to the problem of over-filtering. However, in reality, users' preferences are often vague and volatile, with uncertainty about their desires and changing decisions during interactions. To address this issue, we introduce a novel scenario called Vague Preference Multi-round Conversational Recommendation (VPMCR), which considers users' vague and volatile preferences in CRS. VPMCR employs a soft estimation mechanism to assign a non-zero confidence score for all candidate items to be displayed, naturally avoiding the over-filtering problem. In the VPMCR setting, we introduce a solution called Adaptive Vague Preference Policy Learning (AVPPL), which consists of two main components: Uncertainty-aware Soft Estimation (USE) and Uncertainty-aware Policy Learning (UPL). USE estimates the uncertainty of users' vague feedback and captures their dynamic preferences using a choice-based preferences extraction module and a time-aware decaying strategy. UPL leverages the preference distribution estimated by USE to guide the conversation and adapt to changes in users' preferences to make recommendations or ask for attributes. Our extensive experiments demonstrate the effectiveness of our method in the VPMCR scenario, highlighting its potential for practical applications and improving the overall performance and applicability of CRS in real-world settings, particularly for users with vague or dynamic preferences.
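The idea of soft estimation with time-aware decay can be illustrated with a small sketch. This is not the USE module from the paper: the exponential decay factor, the +1/-1 feedback encoding, the softmax normalization, and the function name are all assumptions chosen to show how every candidate can keep a non-zero confidence while recent feedback counts more than old feedback.

```python
import math


def soft_confidence(feedback_by_turn, items, decay=0.8):
    """Turn per-turn accept (+1) / reject (-1) signals into a confidence distribution.

    feedback_by_turn: list of dicts (oldest turn first) mapping item -> signal.
    decay: hypothetical per-turn decay factor; older turns are down-weighted.
    """
    last_turn = len(feedback_by_turn) - 1
    scores = {item: 0.0 for item in items}
    for turn, feedback in enumerate(feedback_by_turn):
        weight = decay ** (last_turn - turn)  # most recent turn has weight 1
        for item, signal in feedback.items():
            scores[item] += weight * signal
    # Softmax keeps every confidence strictly positive, so no item is filtered out.
    normalizer = sum(math.exp(score) for score in scores.values())
    return {item: math.exp(score) / normalizer for item, score in scores.items()}


history = [{"a": -1, "b": +1}, {"a": +1, "b": -1}]
confidence = soft_confidence(history, items=["a", "b", "c"])
print(confidence)
```

Here item `a` was rejected first and accepted later, so it ends up ranked above item `b`, whose feedback changed in the opposite direction, while the never-mentioned item `c` stays in the candidate set.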

A novel deeponet model for learning moving-solution operators with applications to earthquake hypocenter localization

Jun 07, 2023

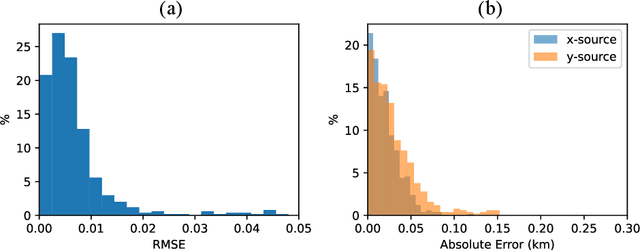

Seismicity induced by human activities poses a significant threat to public safety, emphasizing the need for accurate and timely earthquake hypocenter localization. In this study, we introduce X-DeepONet, a novel variant of deep operator networks (DeepONets), for learning moving-solution operators of parametric partial differential equations (PDEs), with application to real-time earthquake localization. Leveraging the power of neural operators, X-DeepONet learns to estimate traveltime fields associated with earthquake sources by incorporating information from seismic arrival times and velocity models. Similar to the DeepONet, X-DeepONet includes a trunk net and a branch net. Additionally, we introduce a root network that takes as input not only the standard DeepONet's multiplication operator but also addition and subtraction operators. We show that for problems with moving fields, the standard multiplication operation of DeepONet is insufficient to capture field relocation, while addition and subtraction operators along with the eXtended root significantly improve its accuracy both under data-driven (supervised) and physics-informed (unsupervised) training. We demonstrate the effectiveness of X-DeepONet through various experiments, including scenarios with variable velocity models and arrival times. The results show remarkable accuracy in earthquake localization, even for heterogeneous and complex velocity models. The proposed framework also exhibits excellent generalization capabilities and robustness against noisy arrival times. The method provides a computationally efficient approach for quantifying uncertainty in hypocenter locations resulting from traveltime pick errors and velocity model variations. Our results underscore X-DeepONet's potential to improve seismic monitoring systems, aiding the development of early warning systems for seismic hazard mitigation.

Cooperative Sense and Avoid for UAVs using Secondary Radar

Jun 05, 2023

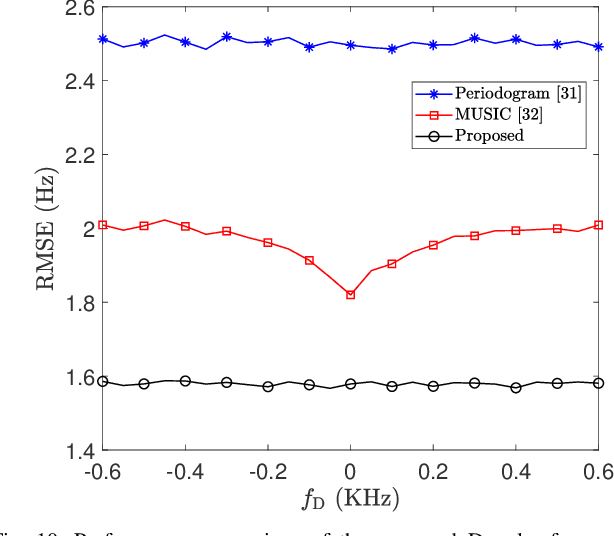

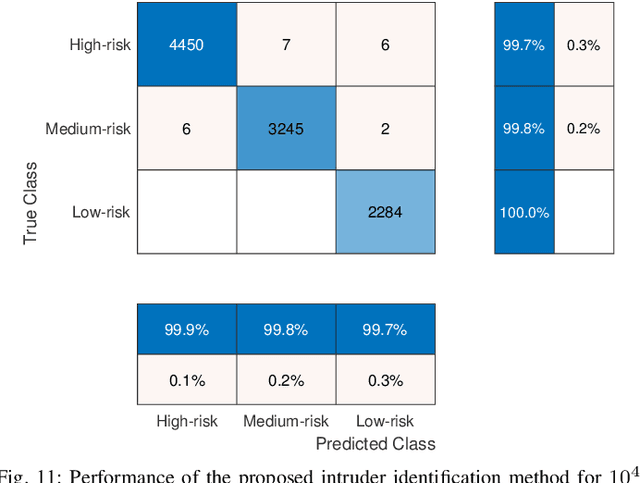

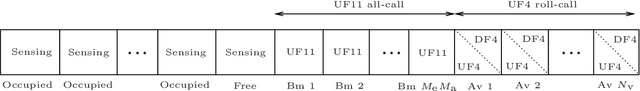

A new Sense and Avoid (SAA) method for safe navigation of small-sized UAVs within an airspace is proposed in this paper. The proposed method relies upon cooperation between the UAV and the surrounding transponder-equipped aviation obstacles. To do so, the aviation obstacles share their altitude and their identification code with the UAV by using a miniaturized Mode S operation secondary surveillance radar (SSR) after interrogation. The proposed SAA algorithm removes the need for a primary radar and clock synchronization since it relies on the estimate of the aviation obstacle's elevation angle for ranging. This results in more accurate ranging compared to the round-trip time-based ranging. We also propose a new radial velocity estimator for the Mode S operation of the SSR which is employed in the proposed SAA system. The root-mean-square errors (RMSEs) of the proposed estimators are analytically derived. Moreover, by considering the pulse-position modulation (PPM) of the transponder reply as a waveform of pulse radar with staggered multiple pulse repetition frequencies, the maximum unambiguous radial velocity is obtained. Given these estimated parameters, our proposed SAA method classifies the aviation obstacles into high-, medium-, and low-risk intruders. The output of the classifier enables the UAV to plan its path or maneuver for safe navigation accordingly. The effectiveness of the proposed estimators and the SAA method is confirmed through simulation experiments.
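A minimal geometric sketch of elevation-angle ranging follows, assuming the slant range is recovered from the altitude difference (the obstacle's altitude is shared via the transponder reply) and the estimated elevation angle as $R = \Delta h / \sin\theta$. This relation, the function name, and the units are assumptions for illustration; the abstract does not spell out the paper's estimator.

```python
import math


def slant_range_m(uav_altitude_m, obstacle_altitude_m, elevation_deg):
    """Slant range from altitude difference and elevation angle (assumed geometry).

    elevation_deg is the obstacle's elevation angle seen from the UAV,
    positive when the obstacle is above the UAV.
    """
    altitude_difference = obstacle_altitude_m - uav_altitude_m
    sine = math.sin(math.radians(elevation_deg))
    if abs(sine) < 1e-9:
        # At (near) zero elevation the altitude difference carries no range information.
        raise ValueError("elevation angle too close to zero for ranging")
    return altitude_difference / sine


print(slant_range_m(500.0, 1000.0, 30.0))
```

The sketch also makes the limitation of this geometry visible: as the elevation angle approaches zero, small angle errors translate into large range errors.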

Explore to Generalize in Zero-Shot RL

Jun 05, 2023

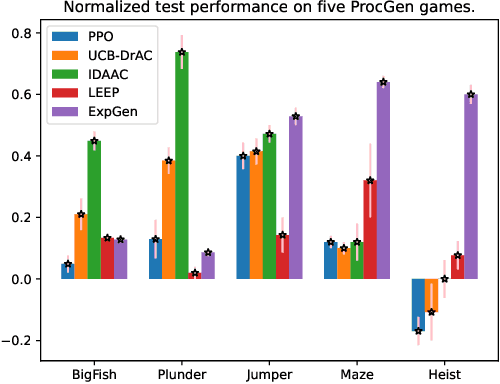

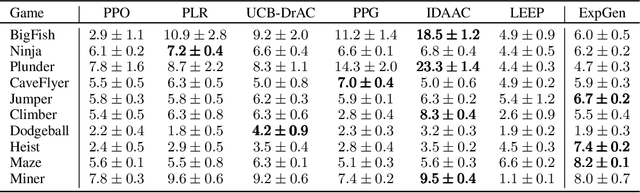

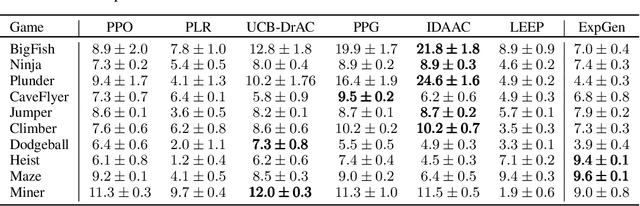

We study zero-shot generalization in reinforcement learning - optimizing a policy on a set of training tasks such that it will perform well on a similar but unseen test task. To mitigate overfitting, previous work explored different notions of invariance to the task. However, on problems such as the ProcGen Maze, an adequate solution that is invariant to the task visualization does not exist, and therefore invariance-based approaches fail. Our insight is that learning a policy that $\textit{explores}$ the domain effectively is harder to memorize than a policy that maximizes reward for a specific task, and therefore we expect such learned behavior to generalize well; we indeed demonstrate this empirically on several domains that are difficult for invariance-based approaches. Our $\textit{Explore to Generalize}$ algorithm (ExpGen) builds on this insight: We train an additional ensemble of agents that optimize reward. At test time, either the ensemble agrees on an action, and we generalize well, or we take exploratory actions, which are guaranteed to generalize and drive us to a novel part of the state space, where the ensemble may potentially agree again. We show that our approach is the state-of-the-art on several tasks in the ProcGen challenge that have so far eluded effective generalization. For example, we demonstrate a success rate of $82\%$ on the Maze task and $74\%$ on Heist with $200$ training levels.
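The test-time rule described above (act on ensemble agreement, otherwise explore) can be sketched as follows. The agreement threshold `min_agree` and the function name are hypothetical, and the exploratory action is passed in as a plain value; the paper's actual agreement criterion and exploration policy may differ.

```python
from collections import Counter


def expgen_action(ensemble_actions, exploratory_action, min_agree):
    """Pick the ensemble's action if enough members agree, else explore.

    ensemble_actions: actions proposed by the reward-maximizing agents.
    exploratory_action: action proposed by the exploration policy.
    min_agree: hypothetical number of votes required to count as agreement.
    """
    action, votes = Counter(ensemble_actions).most_common(1)[0]
    return action if votes >= min_agree else exploratory_action


print(expgen_action(["left", "left", "left"], "up", min_agree=3))
print(expgen_action(["left", "right", "left"], "up", min_agree=3))
```

In the first call all three agents agree, so their action is taken; in the second they disagree, so the exploratory action is used until the agent reaches a state where the ensemble may agree again.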

Graph Based Long-Term And Short-Term Interest Model for Click-Through Rate Prediction

Jun 05, 2023

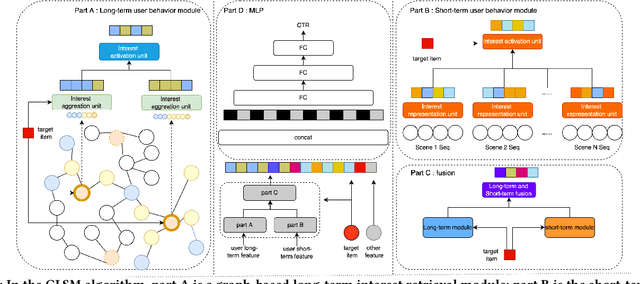

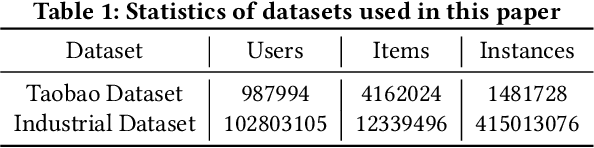

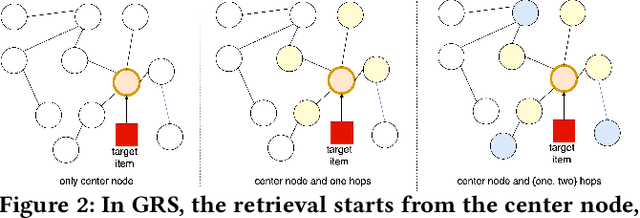

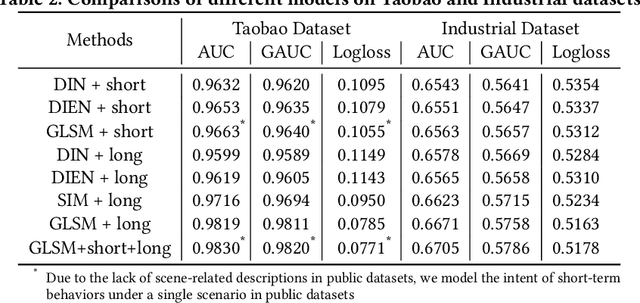

Click-through rate (CTR) prediction aims to predict the probability that the user will click an item, which has been one of the key tasks in online recommender and advertising systems. In such systems, rich user behavior (viz. long- and short-term) has been proved to be of great value in capturing user interests. Both industry and academia have paid much attention to this topic and proposed different approaches to modeling with long-term and short-term user behavior data. But there are still some unresolved issues. More specifically, (1) rule- and truncation-based methods to extract information from long-term behavior easily cause information loss, and (2) using a single feedback behavior, regardless of scenario, to extract information from short-term behavior leads to information confusion and noise. To fill this gap, we propose a Graph based Long-term and Short-term interest Model, termed GLSM. It consists of a multi-interest graph structure for capturing long-term user behavior, a multi-scenario heterogeneous sequence model for modeling short-term information, and an adaptive fusion mechanism to fuse information from long-term and short-term behaviors. In comprehensive experiments on real-world datasets, GLSM achieved SOTA scores on offline metrics. At the same time, the GLSM algorithm has been deployed in our industrial application, bringing 4.9% CTR and 4.3% GMV lift, which is significant to the business.

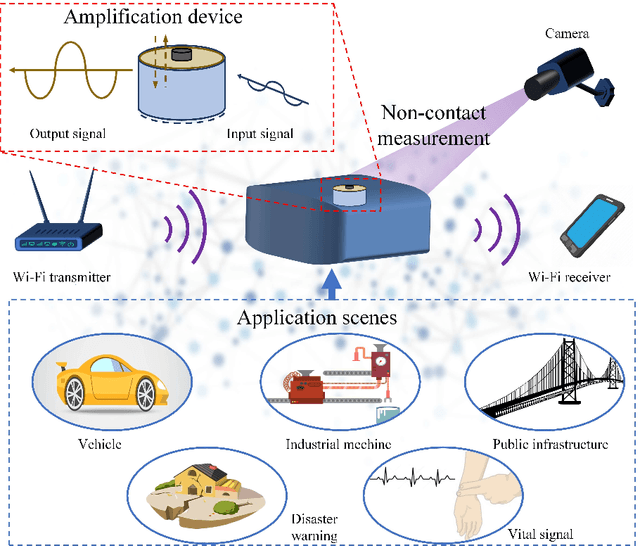

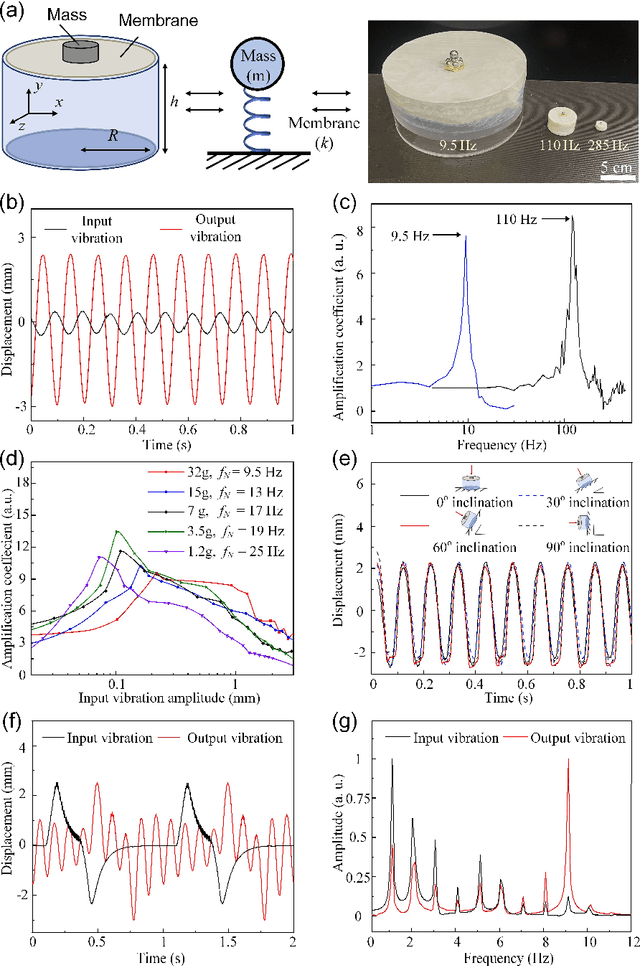

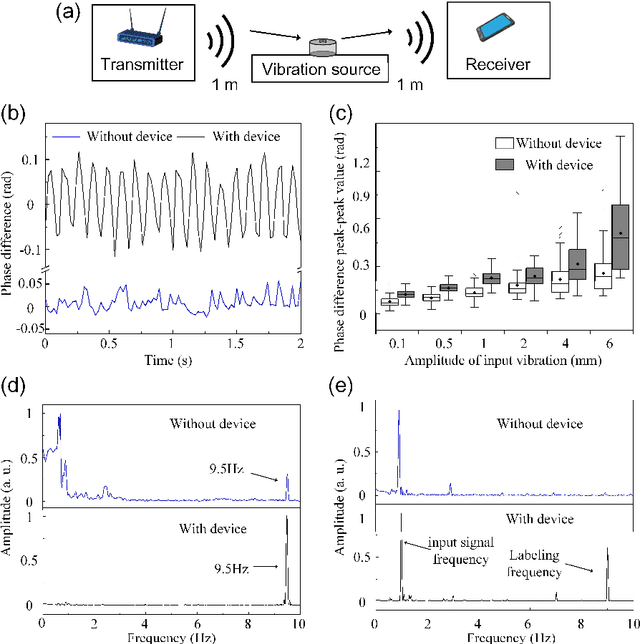

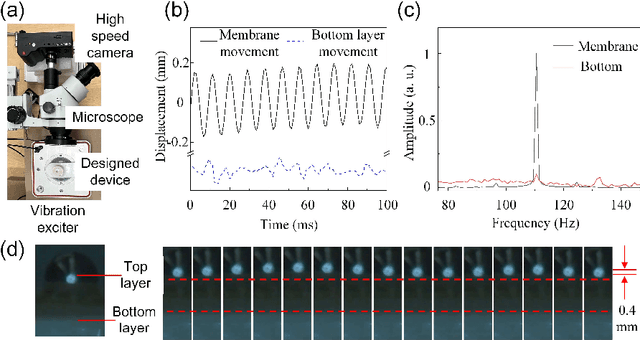

Passive Mechanical Vibration Processor for Wireless Vibration Sensing

May 18, 2023

Real-time, low-cost, and wireless mechanical vibration monitoring is necessary for industrial applications to track the operation status of equipment, environmental applications to proactively predict natural disasters, as well as day-to-day applications such as vital sign monitoring. Despite this urgent need, existing solutions, such as laser vibrometers, commercial Wi-Fi devices, and cameras, lack wide practical deployment due to their limited sensitivity and functionality. In this work, we propose and verify that a fully passive, resonance-based vibration processing device attached to the vibrating surface can improve the sensitivity of wireless vibration measurement methods by more than 10 times at designated frequencies. Additionally, the device realizes an analog real-time vibration filtering/labeling effect and provides a platform for surface editing, which adds more functionalities to current non-contact sensing systems. Finally, the working frequency of the device is widely adjustable over orders of magnitude, broadening its applicability to different applications.
