"Information": models, code, and papers

Top-K Ranking Deep Contextual Bandits for Information Selection Systems

Jan 28, 2022

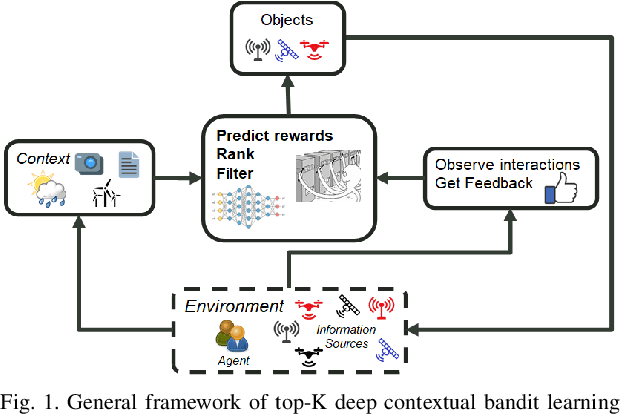

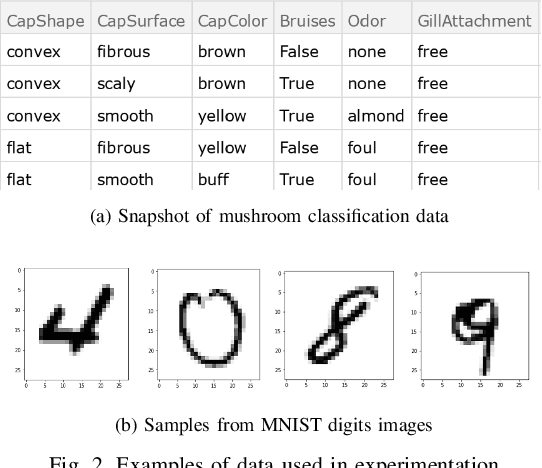

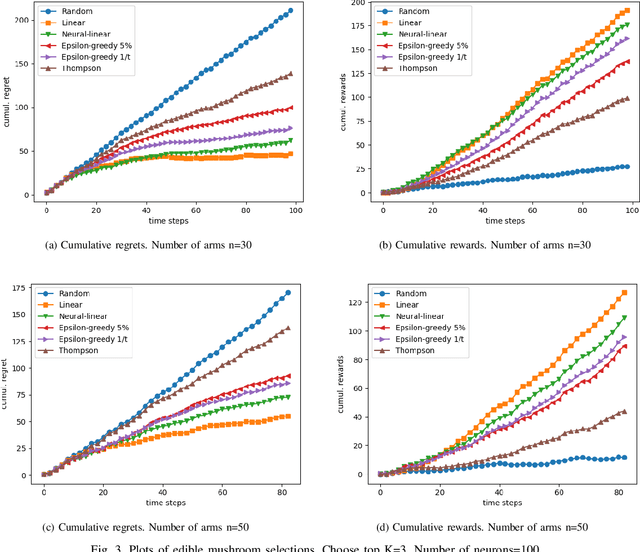

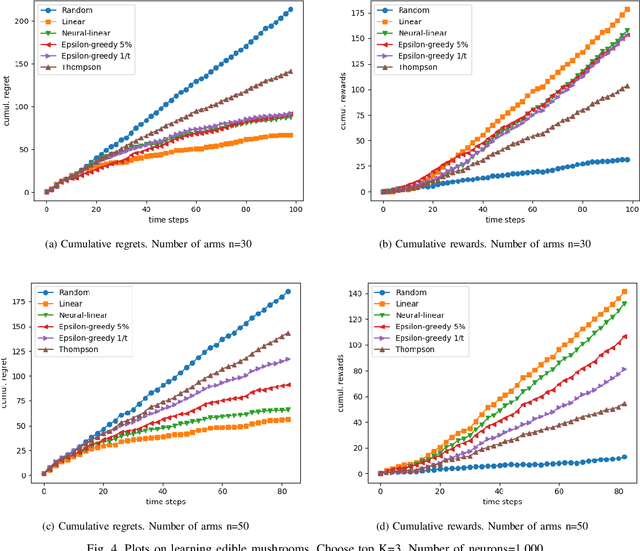

In today's technology environment, information is abundant, dynamic, and heterogeneous in nature. Automated filtering and prioritization of information is based on the distinction between whether the information adds substantial value toward one's goal or not. Contextual multi-armed bandits have been widely used for learning to filter contents and prioritize them according to user interest or relevance. Learning-to-rank techniques optimize the relevance ranking of items, allowing the contents to be selected accordingly. We propose a novel approach to top-K rankings under the contextual multi-armed bandit framework. We model the stochastic reward function with a neural network to allow non-linear approximation to learn the relationship between rewards and contexts. We demonstrate the approach and evaluate the performance of learning from the experiments using real world data sets in simulated scenarios. Empirical results show that this approach performs well under the complexity of a reward structure and high dimensional contextual features.
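As a rough illustration of top-K selection in a bandit setting, the sketch below ranks items by a predicted reward and fills each of the K slots with an epsilon-greedy choice. The abstract does not state the exploration strategy, so epsilon-greedy is an assumption here, and `predicted_rewards` stands in for the output of the neural reward model; the name `select_top_k` is hypothetical.

```python
import random


def select_top_k(predicted_rewards, k, epsilon=0.1, rng=None):
    """Pick k distinct item indices, slot by slot.

    predicted_rewards: one score per item (stand-in for a neural reward model).
    epsilon: probability of exploring with a random item (assumed strategy).
    """
    if rng is None:
        rng = random.Random()
    remaining = list(range(len(predicted_rewards)))
    chosen = []
    for _ in range(min(k, len(remaining))):
        if rng.random() < epsilon:
            # Explore: take any item that has not been ranked yet.
            pick = rng.choice(remaining)
        else:
            # Exploit: take the unranked item with the highest predicted reward.
            pick = max(remaining, key=lambda i: predicted_rewards[i])
        chosen.append(pick)
        remaining.remove(pick)
    return chosen


ranking = select_top_k([0.1, 0.9, 0.5, 0.7], k=2, epsilon=0.0)
```

With `epsilon=0.0` the result is simply the K highest-scoring items in order; a full system would also update the reward model from the observed feedback on the selected items.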

Learning from the Dark: Boosting Graph Convolutional Neural Networks with Diverse Negative Samples

Oct 03, 2022

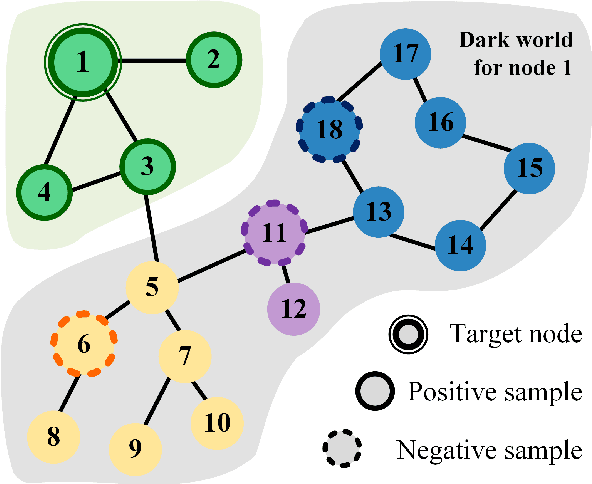

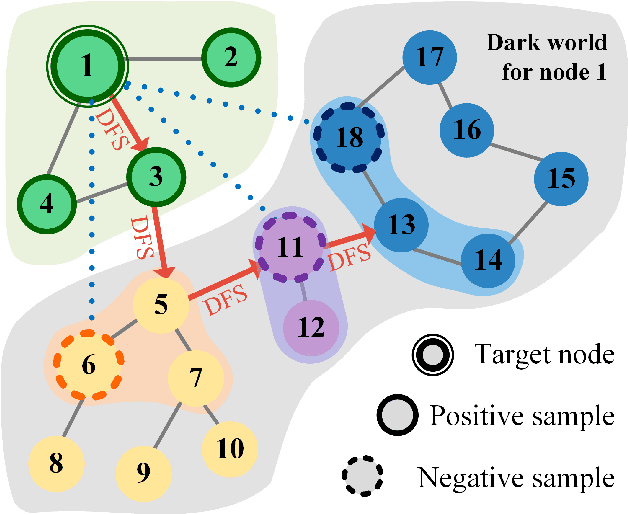

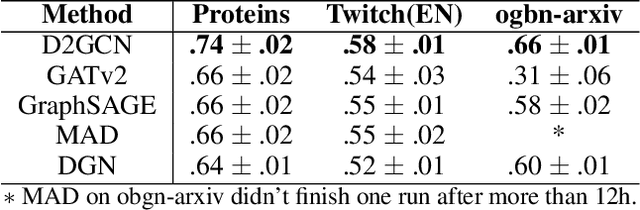

Graph Convolutional Neural Networks (GCNs) have been generally accepted to be an effective tool for node representation learning. An interesting way to understand GCNs is to think of them as a message passing mechanism where each node updates its representation by accepting information from its neighbours (also known as positive samples). However, beyond these neighbouring nodes, graphs have a large, dark, all-but-forgotten world in which we find the non-neighbouring nodes (negative samples). In this paper, we show that this great dark world holds a substantial amount of information that might be useful for representation learning. Most specifically, it can provide negative information about the node representations. Our overall idea is to select appropriate negative samples for each node and incorporate the negative information contained in these samples into the representation updates. Moreover, we show that the process of selecting the negative samples is not trivial. Our theme therefore begins by describing the criteria for a good negative sample, followed by a determinantal point process algorithm for efficiently obtaining such samples. A GCN, boosted by diverse negative samples, then jointly considers the positive and negative information when passing messages. Experimental evaluations show that this idea not only improves the overall performance of standard representation learning but also significantly alleviates over-smoothing problems.
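The idea of combining positive and negative information in one update can be sketched as follows. This is a simplified illustration, not the paper's layer: it assumes plain mean aggregation, a fixed scalar `omega` for the negative term, no learned weights or non-linearity, and negative samples that are supplied by hand rather than drawn with a determinantal point process. All function and parameter names are hypothetical.

```python
def mean_vector(vectors, dim):
    """Element-wise mean of a list of vectors (zero vector if the list is empty)."""
    if not vectors:
        return [0.0] * dim
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


def update_node(features, neighbours, negatives, node, omega=0.5):
    """One message-passing step for a single node.

    features:   dict node -> feature vector
    neighbours: dict node -> list of neighbouring nodes (positive samples)
    negatives:  dict node -> list of selected non-neighbours (negative samples)
    omega:      assumed weight of the negative term
    """
    dim = len(features[node])
    # Positive message: average over the node itself and its neighbours.
    positive = mean_vector(
        [features[node]] + [features[j] for j in neighbours[node]], dim
    )
    # Negative message: average over the selected negative samples.
    negative = mean_vector([features[j] for j in negatives[node]], dim)
    # Pull toward neighbours, push away from negative samples.
    return [p - omega * n for p, n in zip(positive, negative)]


features = {0: [1.0, 0.0], 1: [3.0, 0.0], 2: [0.0, 4.0]}
updated = update_node(features, neighbours={0: [1]}, negatives={0: [2]}, node=0)
```

Subtracting the negative message keeps a node's representation distinct from nodes it is not connected to, which is one intuition for why such a term could counteract over-smoothing.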

On the Role of Visual Context in Enriching Music Representations

Oct 28, 2022

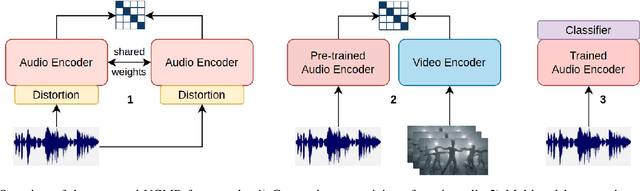

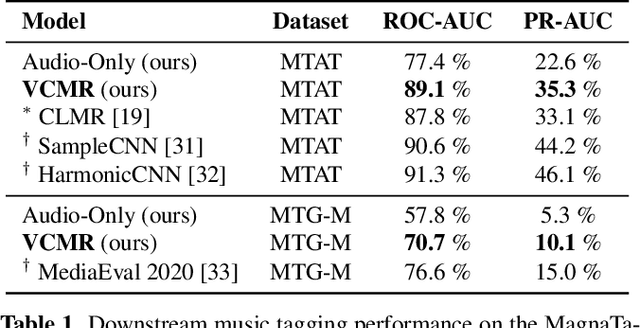

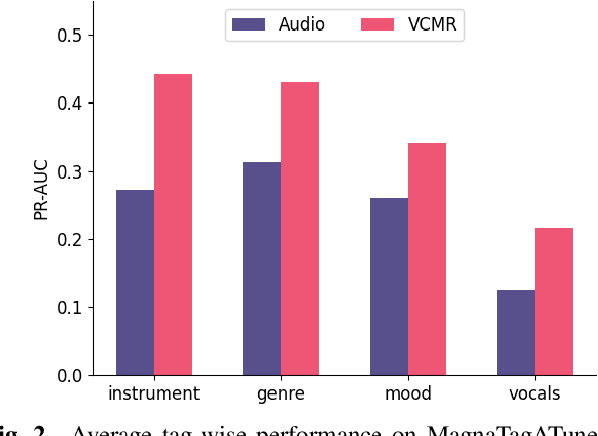

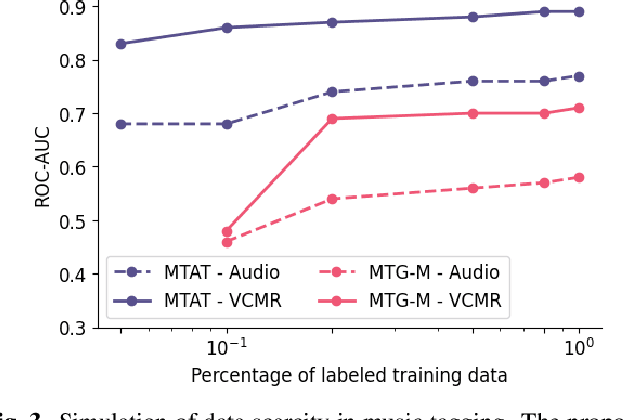

Human perception and experience of music is highly context-dependent. Contextual variability contributes to differences in how we interpret and interact with music, challenging the design of robust models for information retrieval. Incorporating multimodal context from diverse sources provides a promising approach toward modeling this variability. Music presented in media such as movies and music videos provides rich multimodal context that modulates underlying human experiences. However, such context modeling is underexplored, as it requires large amounts of multimodal data along with relevant annotations. Self-supervised learning can help address these challenges by automatically extracting rich, high-level correspondences between different modalities, hence alleviating the need for fine-grained annotations at scale. In this study, we propose VCMR -- Video-Conditioned Music Representations, a contrastive learning framework that learns music representations from audio and the accompanying music videos. The contextual visual information enhances representations of music audio, as evaluated on the downstream task of music tagging. Experimental results show that the proposed framework can contribute additive robustness to audio representations and indicate to what extent musical elements are affected or determined by visual context.
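A contrastive objective between paired audio and video embeddings can be sketched with an InfoNCE-style loss. The abstract does not give the exact loss used by VCMR, so the one-directional (audio-to-video) form, the cosine similarity, and the `temperature` value below are assumptions; embeddings are assumed to be non-zero vectors.

```python
import math


def normalize(vector):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def info_nce(audio, video, temperature=0.1):
    """Mean audio-to-video InfoNCE loss; audio[i] and video[i] form a positive pair."""
    a = [normalize(v) for v in audio]
    b = [normalize(v) for v in video]
    total = 0.0
    for i, ai in enumerate(a):
        # Cosine similarity of clip i's audio to every video in the batch.
        logits = [sum(x * y for x, y in zip(ai, bj)) / temperature for bj in b]
        # Numerically stable log-sum-exp over the batch.
        top = max(logits)
        log_denominator = top + math.log(sum(math.exp(l - top) for l in logits))
        # Cross-entropy with the matching video as the correct class.
        total += log_denominator - logits[i]
    return total / len(a)


aligned = info_nce([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
shuffled = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
```

The loss is small when each audio embedding is closest to its own video and large when the pairs are mismatched, which is what drives the two modalities toward a shared representation.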

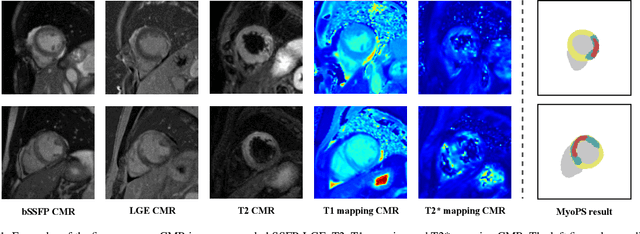

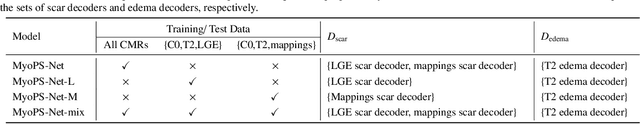

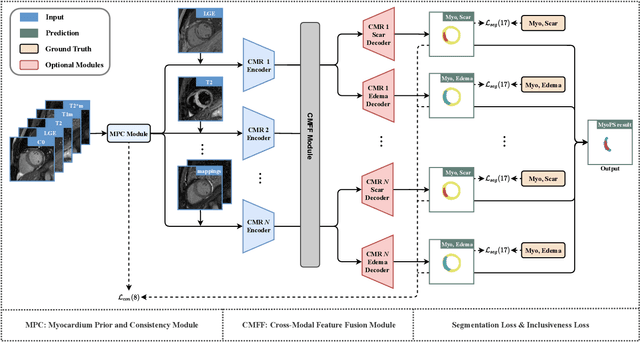

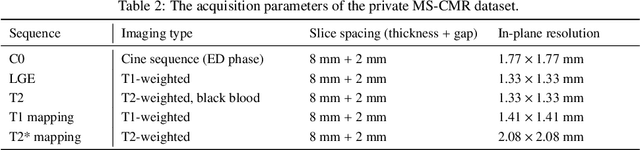

MyoPS-Net: Myocardial Pathology Segmentation with Flexible Combination of Multi-Sequence CMR Images

Nov 06, 2022

Myocardial pathology segmentation (MyoPS) can be a prerequisite for the accurate diagnosis and treatment planning of myocardial infarction. However, achieving this segmentation is challenging, mainly due to the inadequate and indistinct information from an image. In this work, we develop an end-to-end deep neural network, referred to as MyoPS-Net, to flexibly combine five-sequence cardiac magnetic resonance (CMR) images for MyoPS. To extract precise and adequate information, we design an effective yet flexible architecture to extract and fuse cross-modal features. This architecture can tackle different numbers of CMR images and complex combinations of modalities, with output branches targeting specific pathologies. To impose anatomical knowledge on the segmentation results, we first propose a module to regularize myocardium consistency and localize the pathologies, and then introduce an inclusiveness loss to utilize relations between myocardial scars and edema. We evaluated the proposed MyoPS-Net on two datasets, i.e., a private one consisting of 50 paired multi-sequence CMR images and a public one from MICCAI2020 MyoPS Challenge. Experimental results showed that MyoPS-Net could achieve state-of-the-art performance in various scenarios. Note that in practical clinics, the subjects may not have full sequences, such as missing LGE CMR or mapping CMR scans. We therefore conducted extensive experiments to investigate the performance of the proposed method in dealing with such complex combinations of different CMR sequences. Results proved the superiority and generalizability of MyoPS-Net, and more importantly, indicated a practical clinical application.

Depth-based Sampling and Steering Constraints for Memoryless Local Planners

Nov 06, 2022

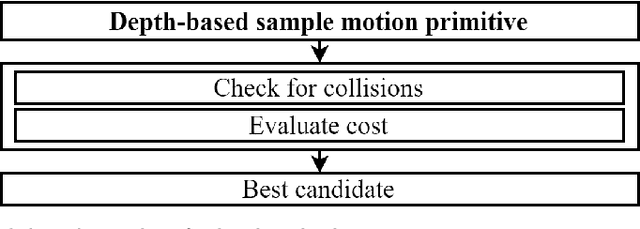

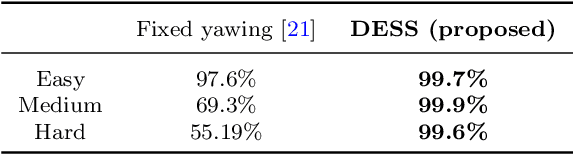

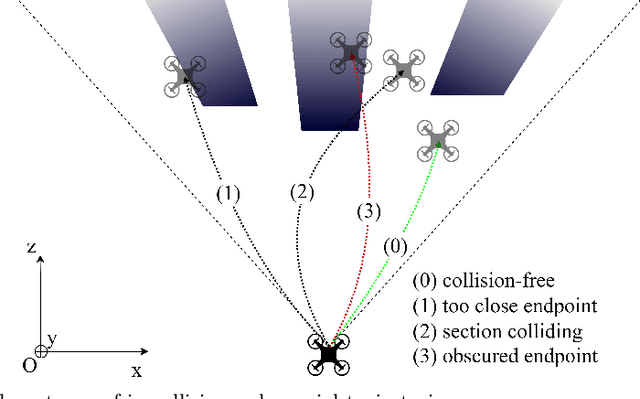

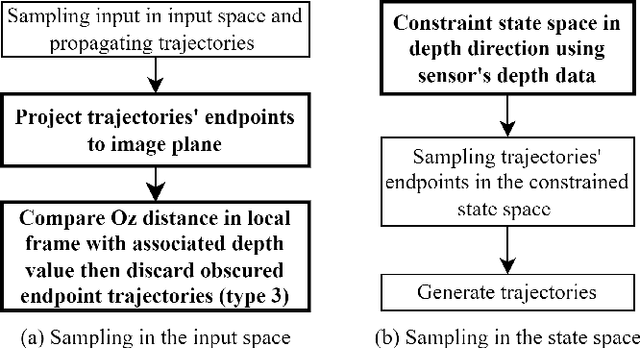

By utilizing only depth information, the paper introduces a novel yet efficient local planning approach that enhances not only computational efficiency but also planning performance for memoryless local planners. The sampling is first proposed to be based on the depth data, which can identify and eliminate a specific type of in-collision trajectories in the sampled motion primitive library. More specifically, all the obscured primitives' endpoints are found through querying the depth values and excluded from the sampled set, which can significantly reduce the computational workload required in collision checking. In addition, we propose a steering mechanism, also based on the depth information, to effectively prevent an autonomous vehicle from getting stuck when facing a large convex obstacle, providing a higher level of autonomy for a planning system. Our steering technique is theoretically proved to be complete in scenarios of convex obstacles. To evaluate the effectiveness of the proposed DEpth based both Sampling and Steering (DESS) methods, we implemented them in synthetic environments where a quadrotor was simulated flying through a cluttered region with multiple obstacles of different sizes. The obtained results demonstrate that the proposed approach can considerably decrease computing time in local planners, where more trajectories can be evaluated while the best path with much lower cost can be found. More importantly, the success rates, measured as the fraction of runs in which the robot successfully navigated to its destination in different testing scenarios, are always higher than 99.6% on average.
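The endpoint filtering step can be illustrated with a small sketch. It assumes a pinhole camera model with intrinsics `fx`, `fy`, `cx`, `cy`, endpoints expressed in the camera frame (z pointing forward), and a depth image stored as a list of rows; none of these details come from the abstract. Endpoints that cannot be projected into the image are kept for the regular collision check, which is also an assumption.

```python
def filter_obscured(endpoints, depth_image, fx, fy, cx, cy):
    """Return indices of primitive endpoints that are NOT hidden behind a surface.

    endpoints:   list of (x, y, z) points in the camera frame
    depth_image: 2D list, depth_image[v][u] is the measured depth at pixel (u, v)
    """
    height = len(depth_image)
    width = len(depth_image[0])
    kept = []
    for index, (x, y, z) in enumerate(endpoints):
        if z <= 0:
            # Behind the camera: depth data says nothing, keep for later checks.
            kept.append(index)
            continue
        # Pinhole projection of the endpoint onto the image plane.
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if not (0 <= u < width and 0 <= v < height):
            # Outside the field of view: keep for later checks.
            kept.append(index)
            continue
        # The endpoint is obscured when a surface lies closer along the same ray.
        if depth_image[v][u] >= z:
            kept.append(index)
    return kept


depth = [[5.0, 5.0, 5.0], [5.0, 1.0, 5.0], [5.0, 5.0, 5.0]]
candidates = [(0.0, 0.0, 2.0), (2.0, 0.0, 2.0), (0.0, 0.0, 0.5)]
visible = filter_obscured(candidates, depth, fx=1.0, fy=1.0, cx=1.0, cy=1.0)
```

Each test is a single lookup per primitive, so discarding obscured endpoints this way is much cheaper than running a full collision check on every trajectory.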

Confidence-Ranked Reconstruction of Census Microdata from Published Statistics

Nov 06, 2022

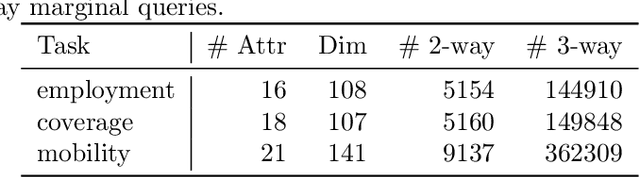

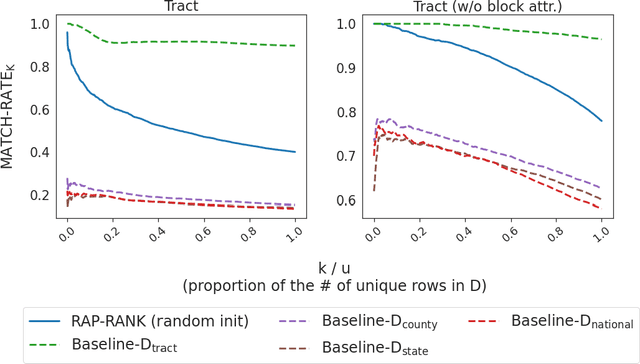

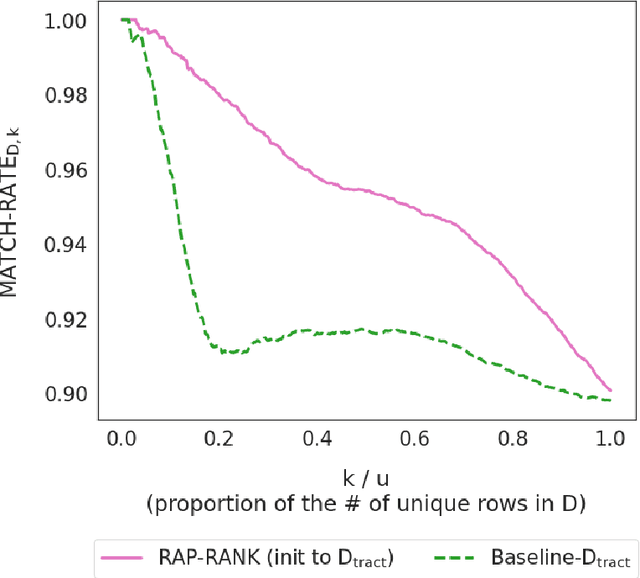

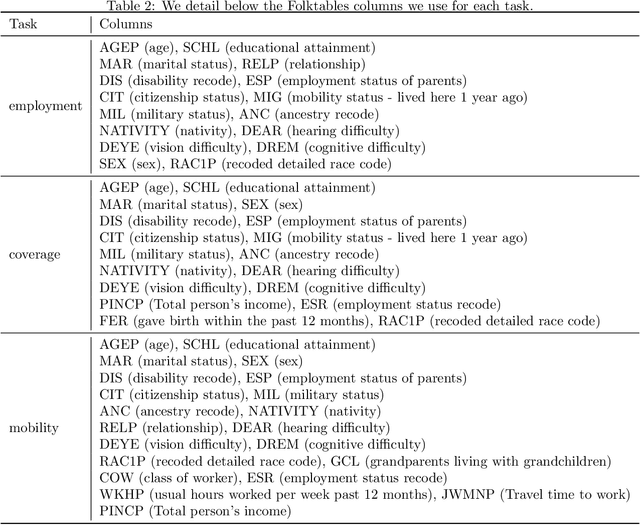

A reconstruction attack on a private dataset $D$ takes as input some publicly accessible information about the dataset and produces a list of candidate elements of $D$. We introduce a new class of data reconstruction attacks based on randomized methods for non-convex optimization. We empirically demonstrate that our attacks can not only reconstruct full rows of $D$ from aggregate query statistics $Q(D)\in \mathbb{R}^m$, but can do so in a way that reliably ranks reconstructed rows by their odds of appearing in the private data, providing a signature that could be used for prioritizing reconstructed rows for further actions such as identity theft or hate crime. We also design a sequence of baselines for evaluating reconstruction attacks. Our attacks significantly outperform those that are based only on access to a public distribution or population from which the private dataset $D$ was sampled, demonstrating that they are exploiting information in the aggregate statistics $Q(D)$, and not simply the overall structure of the distribution. In other words, the queries $Q(D)$ are permitting reconstruction of elements of this dataset, not the distribution from which $D$ was drawn. These findings are established both on 2010 U.S. decennial Census data and queries and Census-derived American Community Survey datasets. Taken together, our methods and experiments illustrate the risks in releasing numerically precise aggregate statistics of a large dataset, and provide further motivation for the careful application of provably private techniques such as differential privacy.

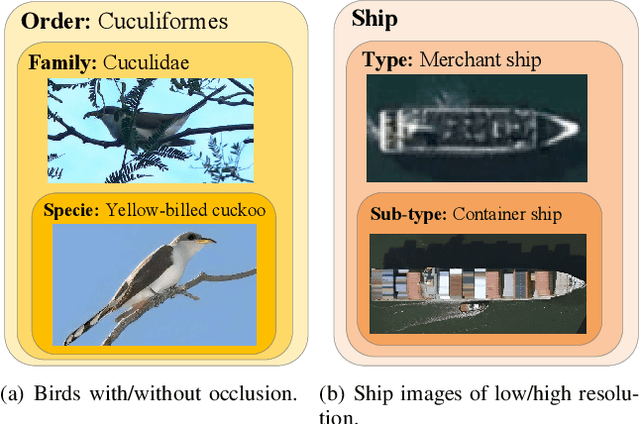

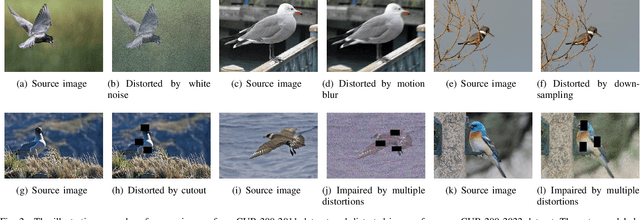

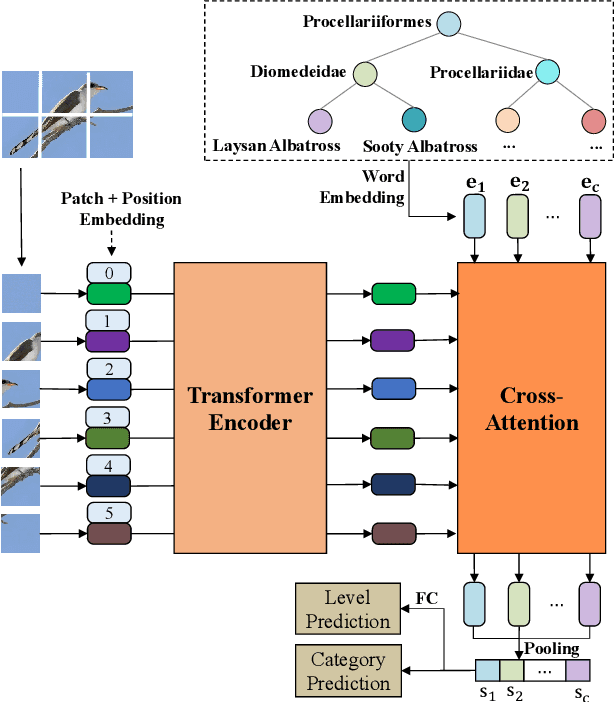

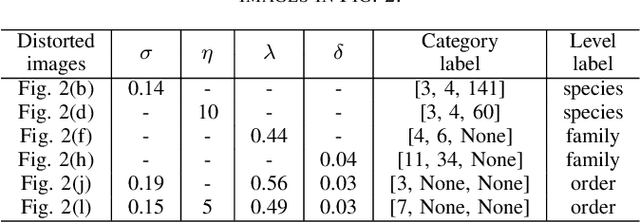

Semantic Guided Level-Category Hybrid Prediction Network for Hierarchical Image Classification

Nov 22, 2022

Hierarchical classification (HC) assigns each object multiple labels organized into a hierarchical structure. The existing deep learning based HC methods usually predict an instance starting from the root node until a leaf node is reached. However, in the real world, images degraded by noise, occlusion, blur, or low resolution may not provide sufficient information for the classification at subordinate levels. To address this issue, we propose a novel semantic guided level-category hybrid prediction network (SGLCHPN) that can jointly perform the level and category prediction in an end-to-end manner. SGLCHPN comprises two modules: a visual transformer that extracts feature vectors from the input images, and a semantic guided cross-attention module that uses category word embeddings as queries to guide learning category-specific representations. In order to evaluate the proposed method, we construct two new datasets in which images span a broad range of quality and thus are labeled to different levels (depths) in the hierarchy according to their individual quality. Experimental results demonstrate the effectiveness of our proposed HC method.
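Using category embeddings as attention queries can be sketched with a single-head scaled dot-product cross-attention. This is a generic illustration under simplifying assumptions: there are no learned projection matrices, the image tokens serve as both keys and values, and queries and tokens share one dimensionality. It is not the SGLCHPN module itself.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of scores."""
    top = max(values)
    exps = [math.exp(v - top) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, tokens):
    """Return one attended feature vector per query.

    queries: category word embeddings, one vector per category
    tokens:  image feature vectors (used here as both keys and values)
    """
    scale = math.sqrt(len(queries[0]))
    dim = len(tokens[0])
    outputs = []
    for query in queries:
        # Similarity of this category to every image token.
        scores = [sum(q * t for q, t in zip(query, token)) / scale for token in tokens]
        weights = softmax(scores)
        # Category-specific representation: weighted sum of image tokens.
        outputs.append(
            [sum(w * token[d] for w, token in zip(weights, tokens)) for d in range(dim)]
        )
    return outputs


attended = cross_attention(
    queries=[[10.0, 0.0], [0.0, 10.0]], tokens=[[1.0, 0.0], [0.0, 1.0]]
)
```

Each category query pulls out the image tokens most similar to it, so the output holds one feature vector per category rather than one per image region.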

Motif-aware temporal GCN for fraud detection in signed cryptocurrency trust networks

Nov 22, 2022

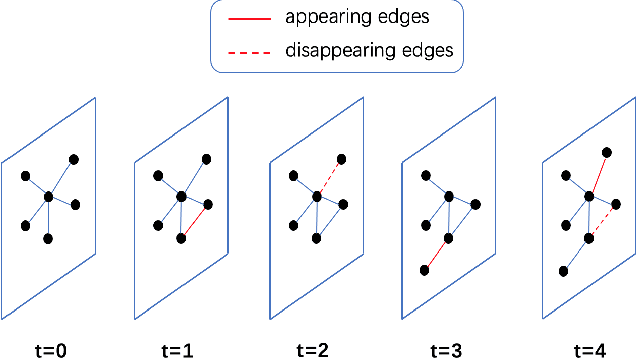

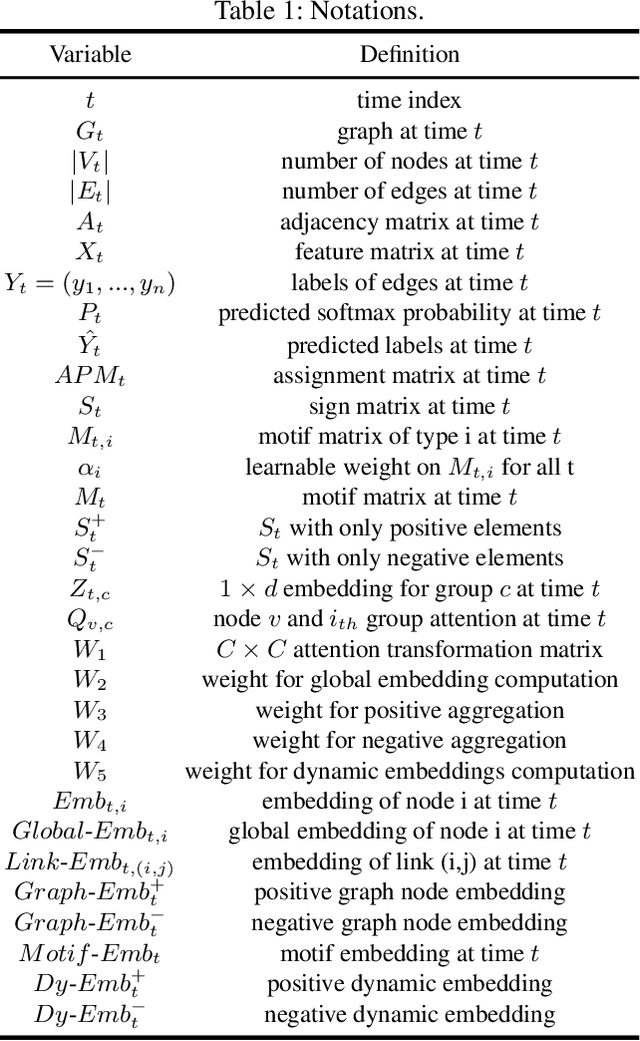

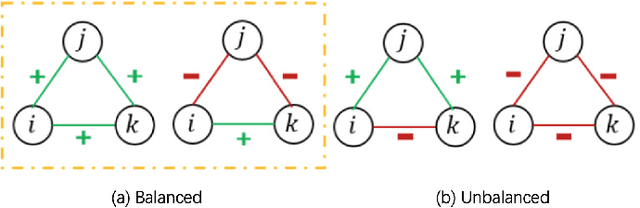

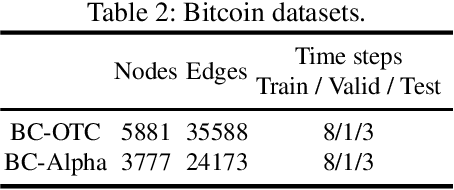

Graph convolutional networks (GCNs) are a class of artificial neural networks for processing data that can be represented as graphs. Since financial transactions can naturally be constructed as graphs, GCNs are widely applied in the financial industry, especially for financial fraud detection. In this paper, we focus on fraud detection on cryptocurrency trust networks. In the literature, most works focus on static networks. In this study, by contrast, we consider the evolving nature of cryptocurrency networks, and use local structure as well as balance theory to guide the training process. More specifically, we compute motif matrices to capture the local topological information, then use them in the GCN aggregation process. The generated embedding at each snapshot is a weighted average of embeddings within a time window, where the weights are learnable parameters. Since each edge of the trust network is signed, balance theory is used to guide the training process. Experimental results on bitcoin-alpha and bitcoin-otc datasets show that the proposed model outperforms those in the literature.
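Two of the ingredients mentioned above can be sketched briefly. The abstract does not say which motifs are used or how the window weights are normalized, so the example assumes the triangle motif on an unsigned, undirected adjacency matrix and a softmax over raw weights; both function names are hypothetical.

```python
import math


def triangle_motif_matrix(adjacency):
    """Entry (i, j) counts the triangles that contain edge (i, j).

    Equivalent to (A @ A) * A element-wise for a symmetric 0/1 matrix A
    with a zero diagonal.
    """
    n = len(adjacency)
    return [
        [
            adjacency[i][j] * sum(adjacency[i][k] * adjacency[k][j] for k in range(n))
            for j in range(n)
        ]
        for i in range(n)
    ]


def window_average(snapshot_embeddings, raw_weights):
    """Weighted average of one node's embeddings over a time window.

    raw_weights would be learnable parameters; softmax keeps them positive
    and summing to one (an assumed normalization).
    """
    top = max(raw_weights)
    exps = [math.exp(w - top) for w in raw_weights]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(snapshot_embeddings[0])
    return [
        sum(w * emb[d] for w, emb in zip(weights, snapshot_embeddings))
        for d in range(dim)
    ]


# Triangle 0-1-2 with an extra node 3 attached to node 2.
graph = [[0, 1, 1, 0], [1, 0, 1, 0], [1, 1, 0, 1], [0, 0, 1, 0]]
motifs = triangle_motif_matrix(graph)
smoothed = window_average([[0.0, 0.0], [2.0, 4.0]], raw_weights=[0.0, 0.0])
```

In the motif matrix, the edge between nodes 2 and 3 gets weight zero because it belongs to no triangle, which shows how such a matrix emphasizes tightly connected local structure during aggregation.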

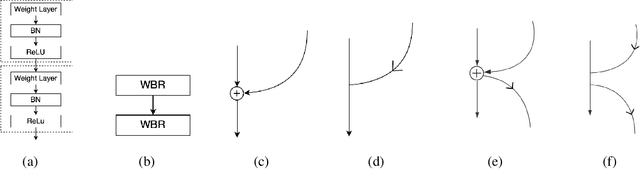

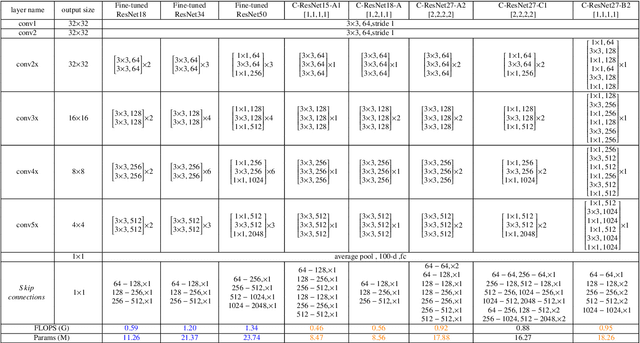

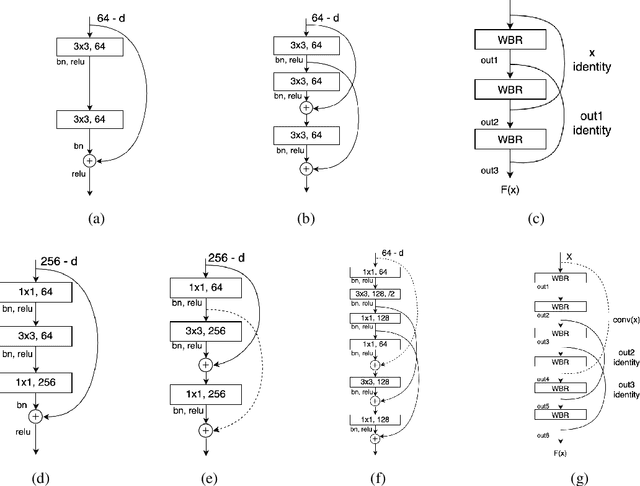

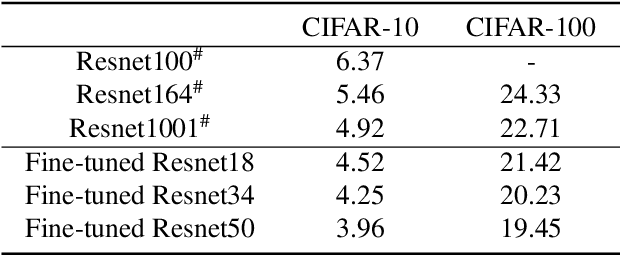

A Cross-Residual Learning for Image Recognition

Nov 22, 2022

ResNets and their variants play an important role in various fields of image recognition. This paper gives another variant of ResNets, a kind of cross-residual learning network called C-ResNets, which requires less computation and fewer parameters than ResNets. C-ResNets increase the information interaction between modules by densifying jumpers and enrich the role of jumpers. In addition, some meticulous designs on jumpers and channel counts can further reduce the resource consumption of C-ResNets and increase their classification performance. In order to test the effectiveness of C-ResNets, we use the same hyperparameter settings as fine-tuned ResNets in the experiments. We test our C-ResNets on the datasets MNIST, Fashion-MNIST, CIFAR-10, CIFAR-100, CALTECH-101 and SVHN. Compared with fine-tuned ResNets, C-ResNets not only maintain the classification performance, but also enormously reduce the amount of computation and the number of parameters, which greatly lowers GPU utilization and GPU memory consumption. Therefore, our C-ResNets are a competitive and viable alternative to ResNets in various scenarios. Code is available at https://github.com/liangjunhello/C-ResNet

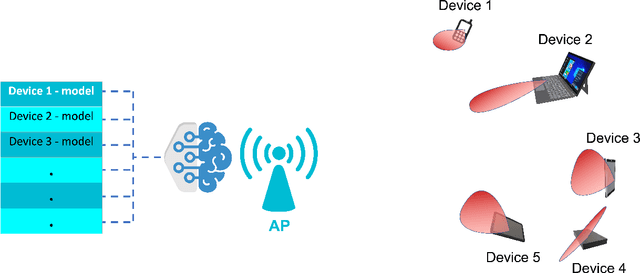

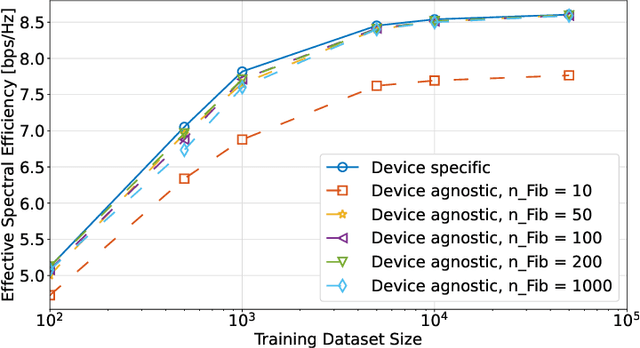

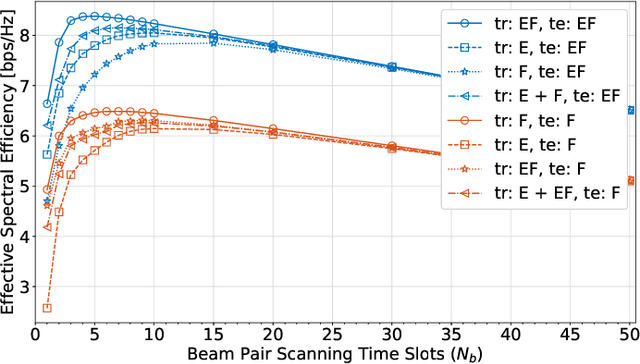

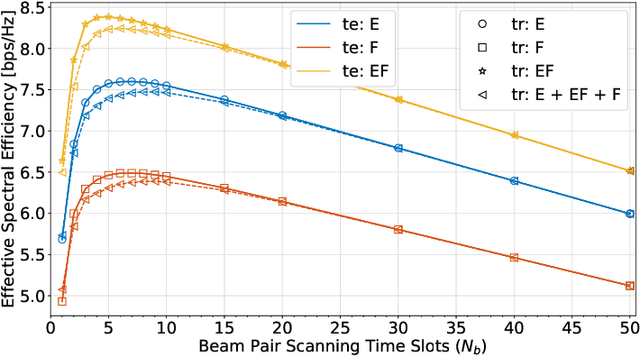

Device-Agnostic Millimeter Wave Beam Selection using Machine Learning

Nov 22, 2022

Most research in the area of machine learning-based user beam selection considers a structure where the model proposes appropriate user beams. However, this design requires a specific model for each user-device beam codebook, where a model learned for a device with a particular codebook cannot be reused for another device with a different codebook. Moreover, this design requires training and test samples for each antenna placement configuration/codebook. This paper proposes a device-agnostic beam selection framework that leverages context information to propose appropriate user beams using a generic model and a post-processing unit. The generic neural network predicts the potential angles of arrival, and the post-processing unit maps these directions to beams based on the specific device's codebook. The proposed beam selection framework works well for user devices with antenna configurations/codebooks unseen in the training dataset. Also, the proposed generic network has the option to be trained with a dataset that mixes samples with different antenna configurations/codebooks, which significantly eases the burden of effective model training.
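The split between a generic angle predictor and a device-specific post-processing unit can be illustrated with a small sketch of the mapping step. It assumes that each codebook entry is described only by a boresight azimuth in degrees and that the nearest beam in angle is the right choice; real codebooks also involve elevation and beam patterns, and the function names are hypothetical.

```python
def angular_distance(a, b):
    """Smallest absolute difference between two azimuths, in degrees."""
    diff = abs(a - b) % 360.0
    return min(diff, 360.0 - diff)


def map_angles_to_beams(predicted_angles, codebook):
    """Map predicted angles of arrival to beam identifiers of one device.

    predicted_angles: azimuths in degrees, e.g. output of the generic network
    codebook:         dict beam_id -> boresight azimuth in degrees (assumed format)
    """
    beams = []
    for angle in predicted_angles:
        # Pick the beam whose boresight is closest to the predicted direction.
        best = min(codebook, key=lambda beam: angular_distance(angle, codebook[beam]))
        if best not in beams:
            beams.append(best)
    return beams


predictions = [350.0, 100.0]
four_beam_device = {0: 0.0, 1: 90.0, 2: 180.0, 3: 270.0}
two_beam_device = {"left": 315.0, "right": 45.0}
beams_a = map_angles_to_beams(predictions, four_beam_device)
beams_b = map_angles_to_beams(predictions, two_beam_device)
```

The same predicted angles are reused for both devices; only the codebook passed to the mapping changes, which is the property that makes the approach device-agnostic.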
