"Image": models, code, and papers

Texture based Prototypical Network for Few-Shot Semantic Segmentation of Forest Cover: Generalizing for Different Geographical Regions

Mar 29, 2022

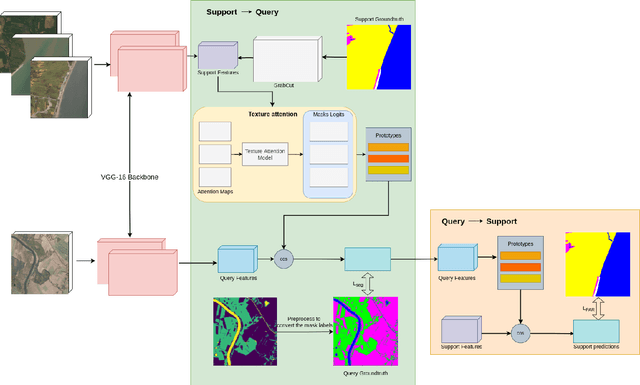

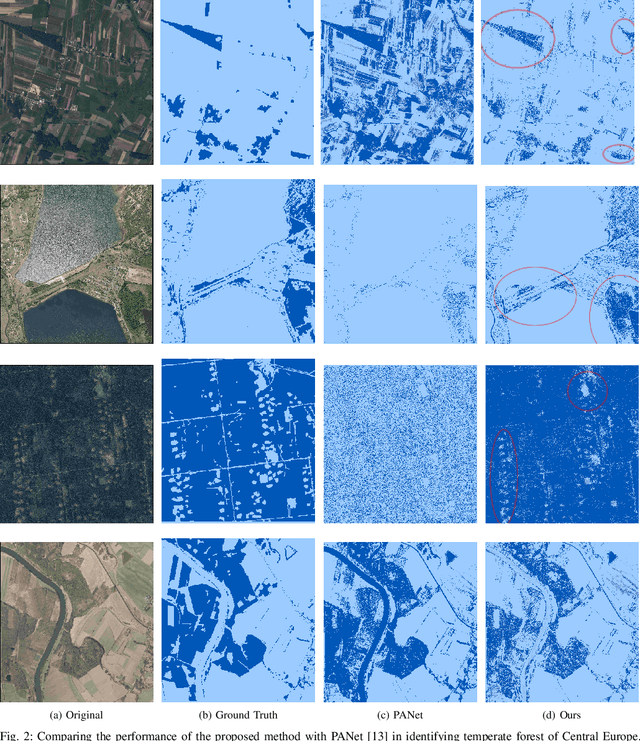

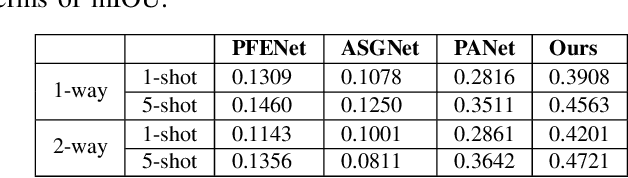

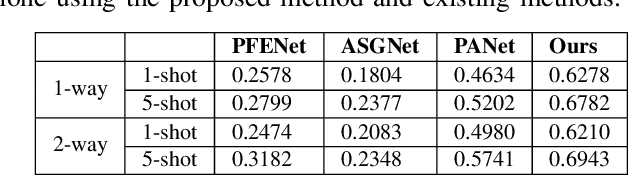

Forests play a vital role in reducing greenhouse gas emissions and mitigating climate change, besides maintaining the world's biodiversity. Existing satellite-based forest monitoring systems use supervised learning approaches that are limited to a particular region and depend on manually annotated data to identify forest. This work frames forest identification as a few-shot semantic segmentation task to achieve generalization across different geographical regions. The proposed few-shot segmentation approach incorporates a texture attention module in the prototypical network to highlight the texture features of the forest, since forest exhibits a characteristic texture different from other classes such as road and water. The proposed approach is trained to identify tropical forests of South Asia and adapted to identify the temperate forest of Central Europe with the help of a few manually annotated support images of the temperate forest (one image for 1-shot). The proposed method obtained an IoU of 0.62 for the forest class (1-way 1-shot), significantly higher than the 0.46 obtained by PANet, an existing few-shot semantic segmentation approach. This result demonstrates that the proposed approach can generalize across geographical regions for forest identification, creating an opportunity to develop a global forest cover identification tool.
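The IoU (intersection over union) metric quoted in this abstract can be illustrated with a minimal sketch. This is not the authors' evaluation code: the function name `iou` and the toy masks are hypothetical, and masks are assumed to be nested lists of 0/1 values where 1 marks a forest pixel.

```python
def iou(pred, target):
    """Intersection over union of two binary masks (nested lists of 0/1).

    Assumption: both masks have the same shape; 1 marks the forest class.
    """
    inter = 0
    union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            inter += 1 if (p and t) else 0   # pixel is forest in both masks
            union += 1 if (p or t) else 0    # pixel is forest in at least one mask
    # Convention chosen here: two empty masks count as a perfect match.
    return inter / union if union else 1.0


# Toy 2x3 masks, purely illustrative.
pred = [[1, 1, 0],
        [0, 1, 0]]
target = [[1, 0, 0],
          [0, 1, 1]]
print(iou(pred, target))  # 2 shared pixels out of 4 in the union
```

On these toy masks the overlap is 2 pixels and the union is 4, so the score is 0.5; the reported 0.62 and 0.46 are the same ratio computed over the test imagery.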

PseudoProp: Robust Pseudo-Label Generation for Semi-Supervised Object Detection in Autonomous Driving Systems

Mar 11, 2022

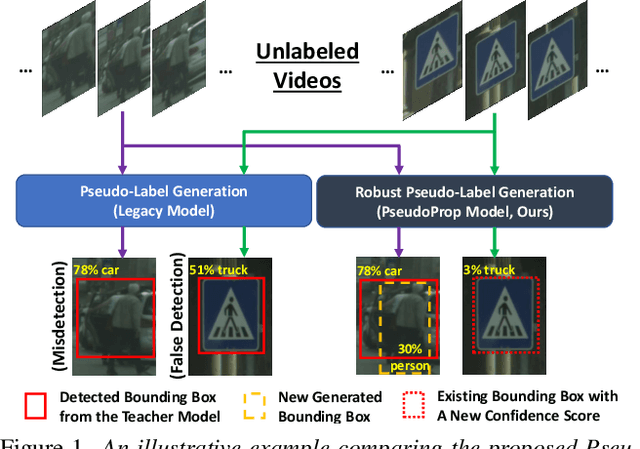

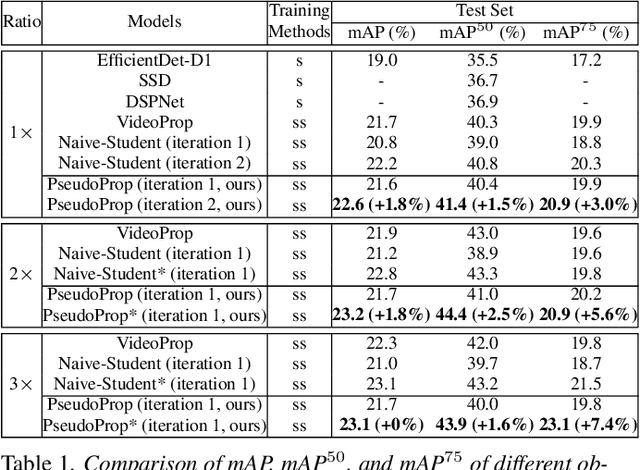

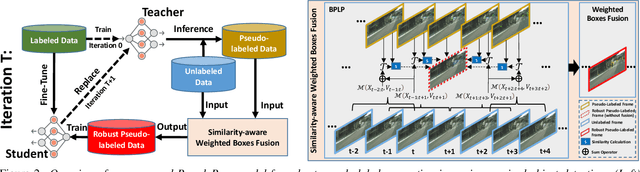

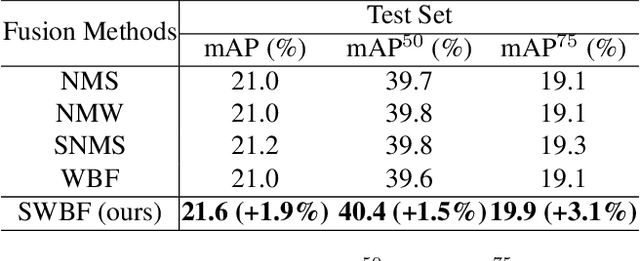

Semi-supervised object detection methods are widely used in autonomous driving systems, where only a fraction of objects are labeled. To propagate information from the labeled objects to the unlabeled ones, pseudo-labels for unlabeled objects must be generated. Although pseudo-labels have been shown to improve the performance of semi-supervised object detection significantly, applying image-based methods to video frames results in numerous missed or false detections when such generated pseudo-labels are used. In this paper, we propose a new approach, PseudoProp, to generate robust pseudo-labels by leveraging motion continuity in video frames. Specifically, PseudoProp uses a novel bidirectional pseudo-label propagation approach to compensate for missed detections. A feature-based fusion technique is also used to suppress inference noise. Extensive experiments on the large-scale Cityscapes dataset demonstrate that our method outperforms the state-of-the-art semi-supervised object detection methods by 7.4% on mAP75.

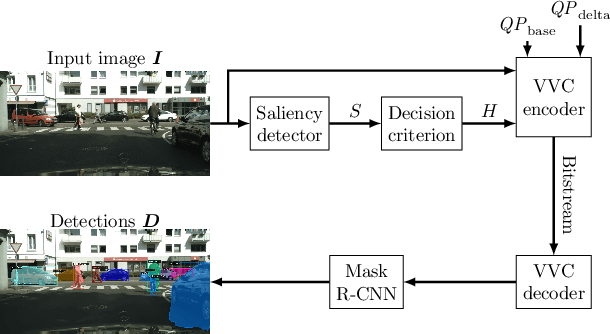

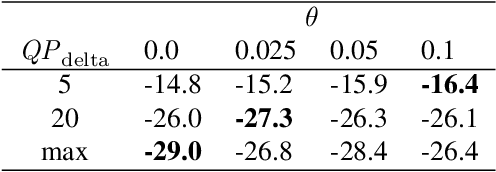

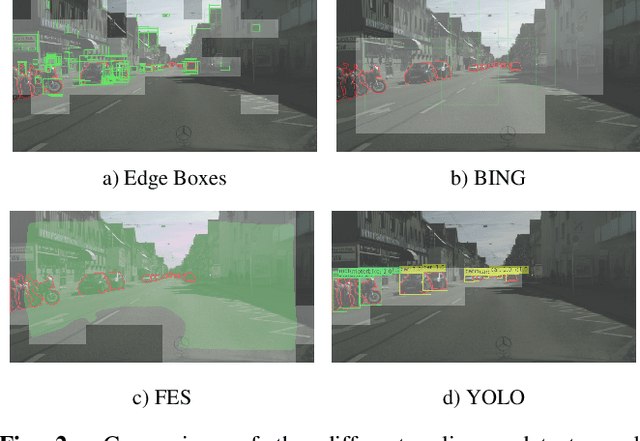

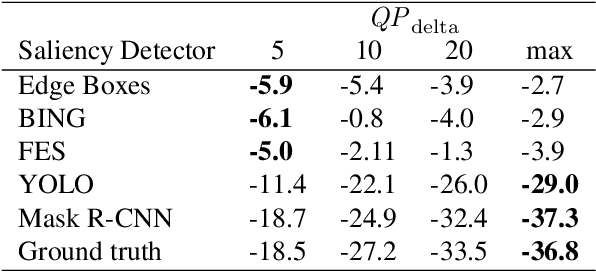

Saliency-Driven Versatile Video Coding for Neural Object Detection

Mar 11, 2022

Saliency-driven image and video coding for humans has gained importance in the recent past. In this paper, we propose such a saliency-driven coding framework for the video coding for machines task using the latest video coding standard Versatile Video Coding (VVC). To determine the salient regions before encoding, we employ the real-time-capable object detection network You Only Look Once (YOLO) in combination with a novel decision criterion. To measure the coding quality for a machine, the state-of-the-art object segmentation network Mask R-CNN was applied to the decoded frame. From extensive simulations we find that, compared to the reference VVC with a constant quality, up to 29% of bitrate can be saved with the same detection accuracy at the decoder side by applying the proposed saliency-driven framework. Besides, we compare YOLO against other, more traditional saliency detection methods.

* 5 pages, 3 figures, 2 tables; Originally submitted at IEEE ICASSP 2021

Semi-supervised Learning for COVID-19 Image Classification via ResNet

Feb 27, 2021

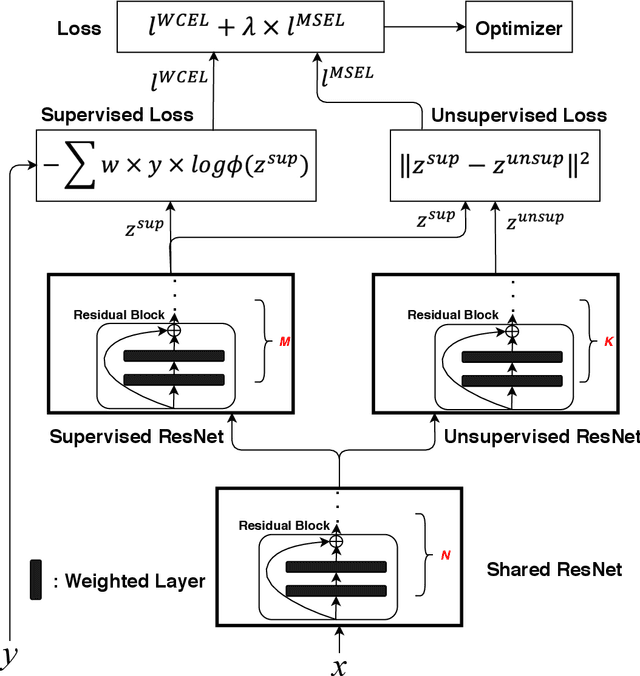

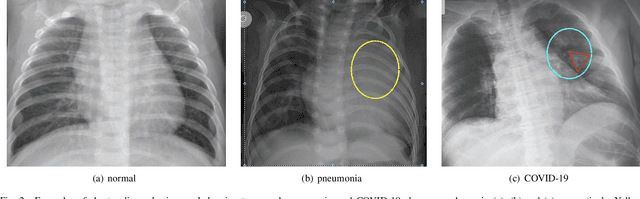

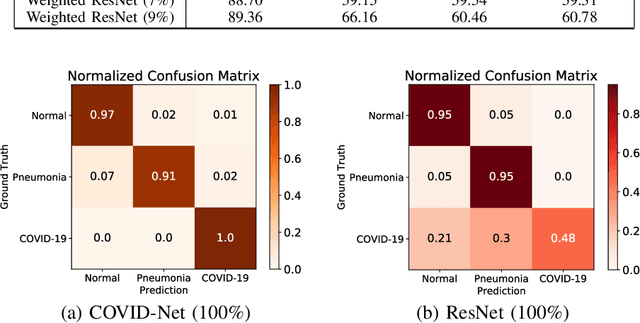

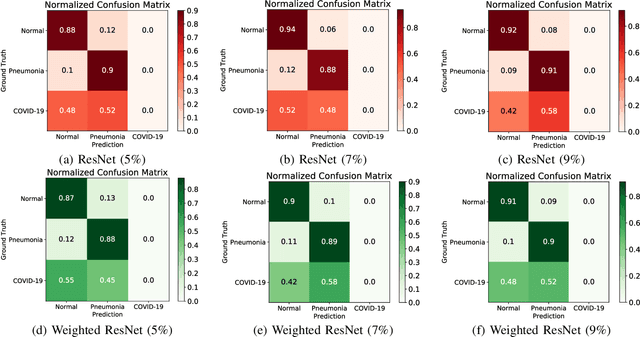

Coronavirus disease 2019 (COVID-19) is an ongoing global pandemic in over 200 countries and territories, which has resulted in a great public health concern across the international community. Analysis of X-ray imaging data can play a critical role in timely and accurate screening and in fighting COVID-19. Supervised deep learning has been successfully applied to recognize COVID-19 pathology from X-ray imaging datasets. However, it requires a substantial amount of annotated X-ray images to train models, which is often not feasible for emerging events such as the COVID-19 outbreak, especially in its early stage. To address this challenge, this paper proposes a two-path semi-supervised deep learning model, ssResNet, based on the Residual Neural Network (ResNet) for COVID-19 image classification, where the two paths are a supervised path and an unsupervised path. Moreover, we design a weighted supervised loss that assigns higher weight to the minority classes during training to address the data imbalance. Experimental results on the large-scale X-ray image dataset COVIDx demonstrate that the proposed model can achieve promising performance even when trained on very few labeled training images.
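The idea of a supervised loss that weights minority classes more heavily can be sketched as follows. The abstract does not give the exact weighting scheme used in ssResNet, so this example assumes inverse-frequency class weights and a weighted cross-entropy; the function names and toy labels are hypothetical.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Assumed scheme: weight_c = N / (K * count_c), so rarer classes weigh more."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * counts[c]) for c in counts}


def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class), normalized by the sum of weights."""
    total = sum(weights[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    norm = sum(weights[y] for y in labels)
    return total / norm


# Toy imbalanced batch: three samples of class 0, one of minority class 1.
labels = [0, 0, 0, 1]
weights = inverse_frequency_weights(labels)

# Predicted class probabilities per sample (illustrative values only).
probs = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.3], [0.4, 0.6]]
loss = weighted_cross_entropy(probs, labels, weights)
print(weights, loss)
```

With these toy labels the minority class receives three times the weight of the majority class, so a mistake on the single class-1 sample moves the loss as much as mistakes on all three class-0 samples combined.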

Multi-institutional Collaborations for Improving Deep Learning-based Magnetic Resonance Image Reconstruction Using Federated Learning

Mar 03, 2021

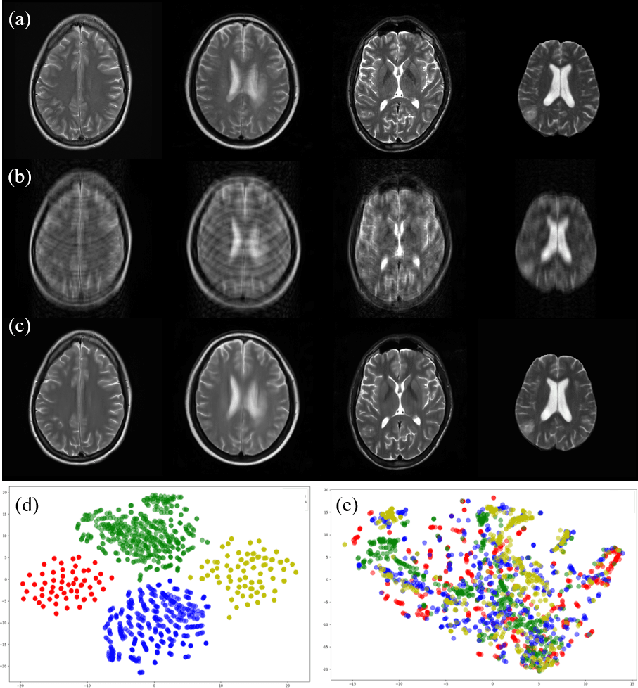

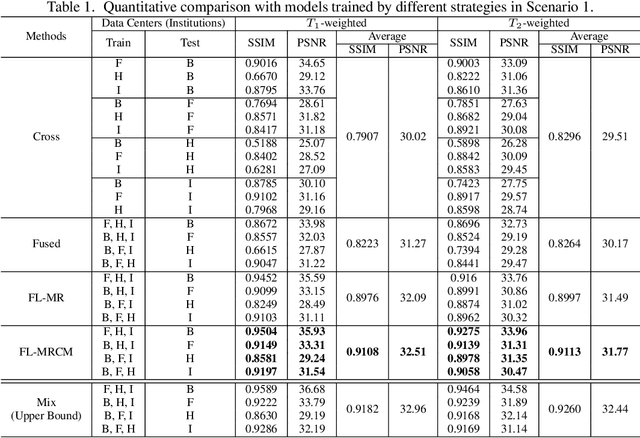

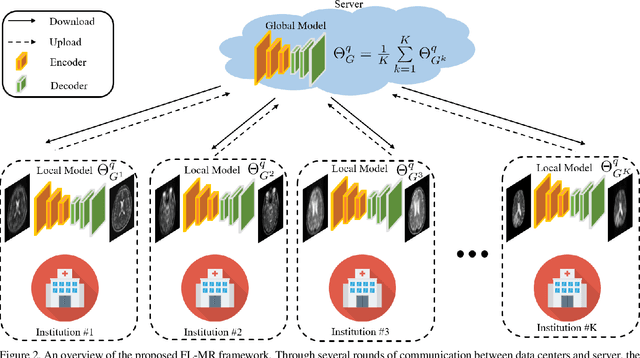

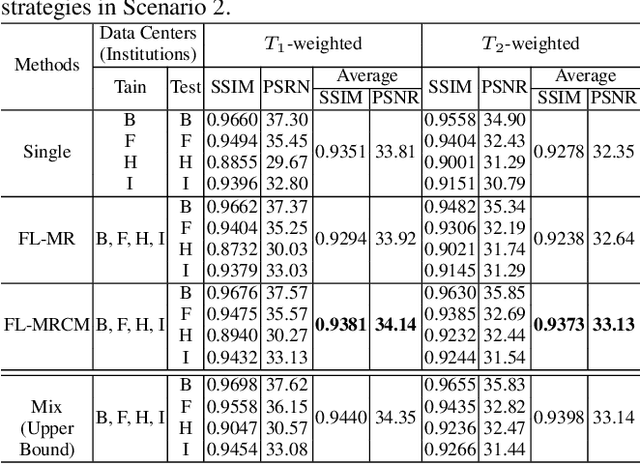

Fast and accurate reconstruction of magnetic resonance (MR) images from under-sampled data is important in many clinical applications. In recent years, deep learning-based methods have been shown to produce superior performance on MR image reconstruction. However, these methods require large amounts of data, which are difficult to collect and share due to the high cost of acquisition and medical data privacy regulations. To overcome this challenge, we propose a federated learning (FL) based solution that takes advantage of the MR data available at different institutions while preserving patients' privacy. However, the generalizability of models trained in the FL setting can still be suboptimal due to domain shift, which arises because data are collected at multiple institutions with different sensors, disease types, acquisition protocols, and so on. To circumvent this challenge, we propose a cross-site modeling approach for MR image reconstruction in which the learned intermediate latent features from different source sites are aligned with the distribution of the latent features at the target site. Extensive experiments are conducted to provide various insights about FL for MR image reconstruction. Experimental results demonstrate that the proposed framework is a promising direction for using multi-institutional data to improve MR image reconstruction without compromising patients' privacy. Our code will be available at https://github.com/guopengf/FLMRCM.

Image Inpainting Guided by Coherence Priors of Semantics and Textures

Dec 15, 2020

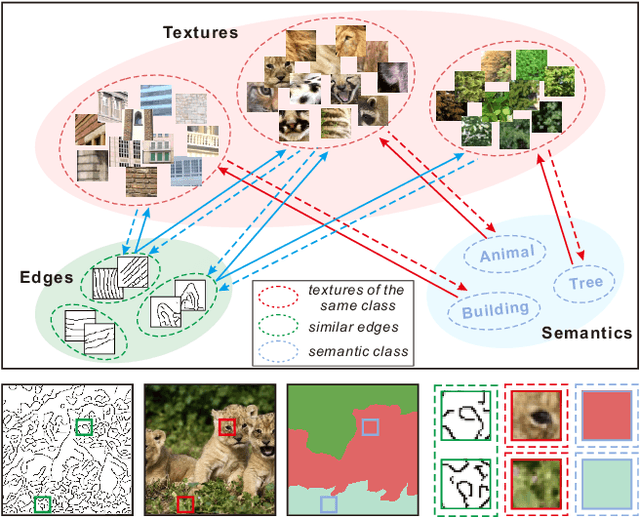

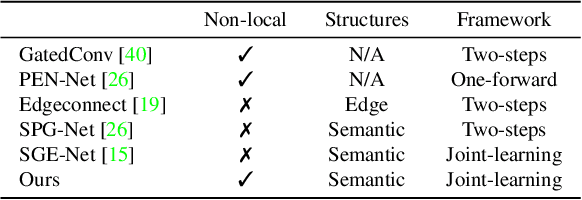

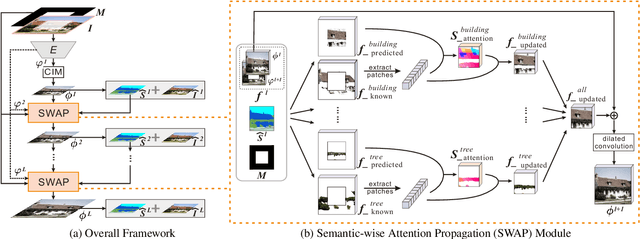

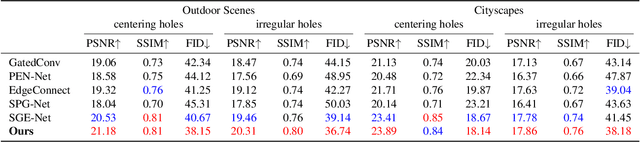

Existing inpainting methods have achieved promising performance in recovering damaged images of specific scenes. However, filling holes involving multiple semantic categories remains challenging due to the obscure semantic boundaries and the mixture of different semantic textures. In this paper, we introduce coherence priors between the semantics and textures, which make it possible to concentrate on completing separate textures in a semantic-wise manner. Specifically, we adopt a multi-scale joint optimization framework to first model the coherence priors and then optimize image inpainting and semantic segmentation in an interleaved, coarse-to-fine manner. A Semantic-Wise Attention Propagation (SWAP) module is devised to refine completed image textures across scales by exploring non-local semantic coherence, which effectively mitigates the mix-up of textures. We also propose two coherence losses to constrain the consistency between the semantics and the inpainted image in terms of the overall structure and detailed textures. Experimental results demonstrate the superiority of our proposed method for challenging cases with complex holes.

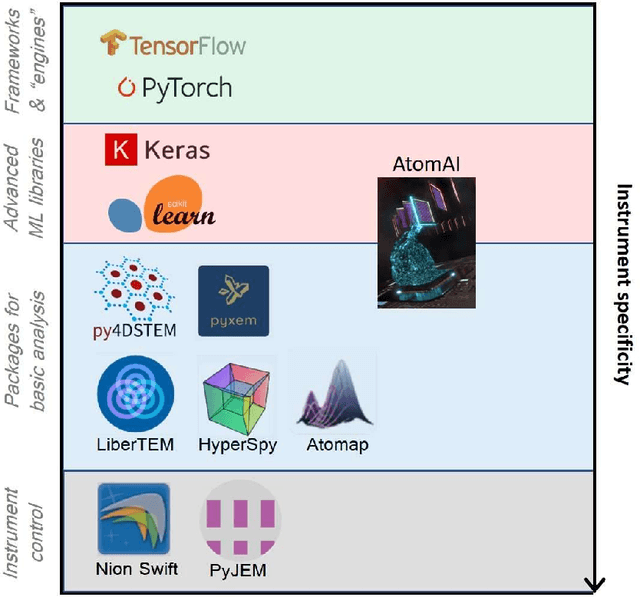

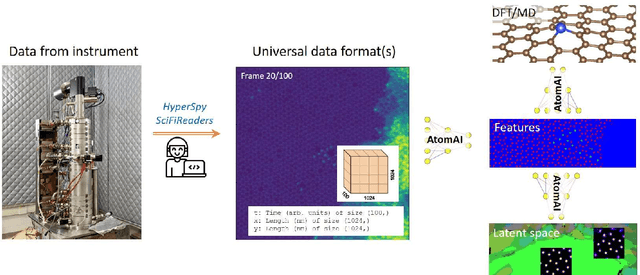

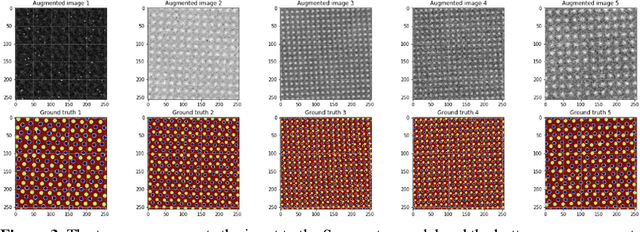

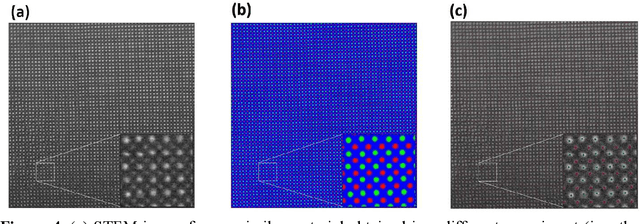

AtomAI: A Deep Learning Framework for Analysis of Image and Spectroscopy Data in (Scanning) Transmission Electron Microscopy and Beyond

May 16, 2021

AtomAI is an open-source software package bridging instrument-specific Python libraries, deep learning, and simulation tools into a single ecosystem. AtomAI allows direct applications of the deep convolutional neural networks for atomic and mesoscopic image segmentation converting image and spectroscopy data into class-based local descriptors for downstream tasks such as statistical and graph analysis. For atomically-resolved imaging data, the output is types and positions of atomic species, with an option for subsequent refinement. AtomAI further allows the implementation of a broad range of image and spectrum analysis functions, including invariant variational autoencoders (VAEs). The latter consists of VAEs with rotational and (optionally) translational invariance for unsupervised and class-conditioned disentanglement of categorical and continuous data representations. In addition, AtomAI provides utilities for mapping structure-property relationships via im2spec and spec2im type of encoder-decoder models. Finally, AtomAI allows seamless connection to the first principles modeling with a Python interface, including molecular dynamics and density functional theory calculations on the inferred atomic position. While the majority of applications to date were based on atomically resolved electron microscopy, the flexibility of AtomAI allows straightforward extension towards the analysis of mesoscopic imaging data once the labels and feature identification workflows are established/available. The source code and example notebooks are available at https://github.com/pycroscopy/atomai.

Automatic image annotation base on Naive Bayes and Decision Tree classifiers using MPEG-7

Jan 27, 2021

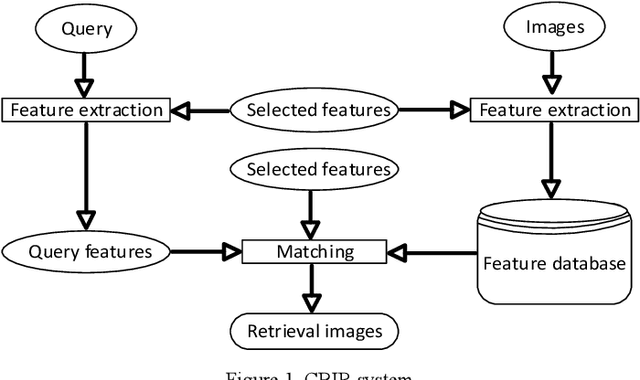

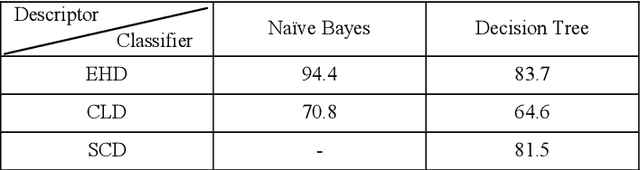

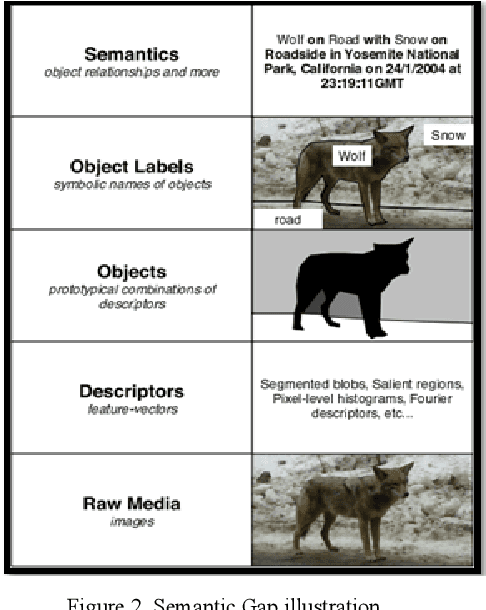

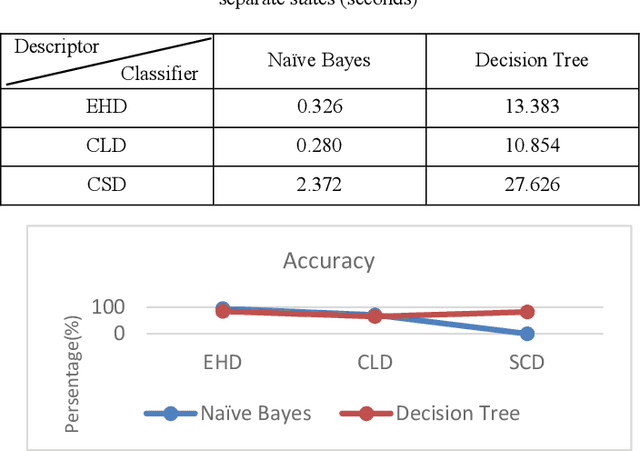

The rapid growth of digital image collections has made it essential to search for and retrieve high-resolution images easily and efficiently. Many current annotation algorithms face a major challenge: the discrepancy between the high-level representation, which captures image semantics, and the low-level representation, which describes image features. This issue is known as the semantic gap. This work uses the MPEG-7 standard to extract features from the images: the color features are extracted with the Scalable Color Descriptor (SCD) and the Color Layout Descriptor (CLD), and the texture features with the Edge Histogram Descriptor (EHD). Because the CLD produces a high-dimensional feature vector, it is reduced by Principal Component Analysis (PCA). The features extracted by these three descriptors are passed to the classifiers (Naive Bayes and Decision Tree) for training, and the trained classifiers then annotate the query image. The TUDarmstadt image bank was used in this study. The tests and comparative performance evaluation indicated better precision and execution time for Naive Bayes classification than for Decision Tree classification.

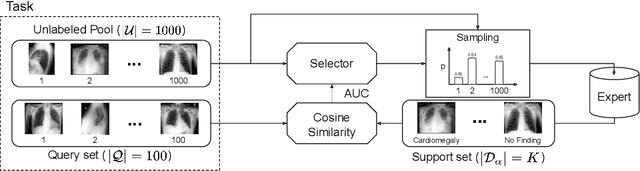

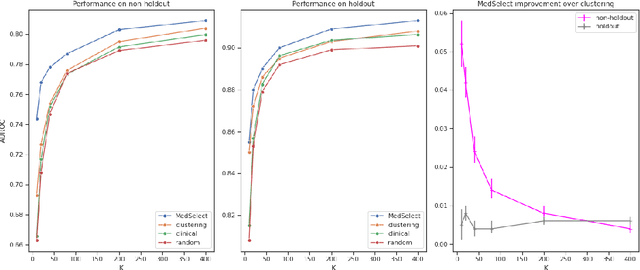

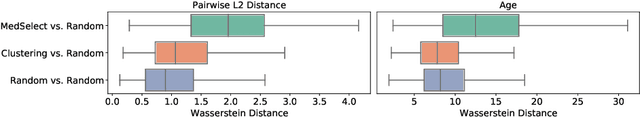

MedSelect: Selective Labeling for Medical Image Classification Combining Meta-Learning with Deep Reinforcement Learning

Mar 26, 2021

We propose a selective learning method using meta-learning and deep reinforcement learning for medical image interpretation in the setting of limited labeling resources. Our method, MedSelect, consists of a trainable deep learning selector that uses image embeddings obtained from contrastive pretraining for determining which images to label, and a non-parametric selector that uses cosine similarity to classify unseen images. We demonstrate that MedSelect learns an effective selection strategy outperforming baseline selection strategies across seen and unseen medical conditions for chest X-ray interpretation. We also perform an analysis of the selections performed by MedSelect comparing the distribution of latent embeddings and clinical features, and find significant differences compared to the strongest performing baseline. We believe that our method may be broadly applicable across medical imaging settings where labels are expensive to acquire.
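A non-parametric classifier based on cosine similarity, as mentioned in this abstract, can be sketched in a few lines. The abstract does not state the exact decision rule, so this example assumes a nearest-neighbour rule over the labeled embeddings; the function names, embeddings, and labels are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def classify(query, labeled):
    """Assumed rule: return the label of the most similar labeled embedding.

    `labeled` is a list of (embedding, label) pairs chosen for labeling.
    """
    best = max(labeled, key=lambda item: cosine_similarity(query, item[0]))
    return best[1]


# Toy 2-D embeddings standing in for image embeddings from pretraining.
labeled = [([1.0, 0.0], "positive"), ([0.0, 1.0], "negative")]
print(classify([0.9, 0.1], labeled))
```

Because the classifier has no trainable parameters, its accuracy depends entirely on which images were selected for labeling, which is the part a learned selector would optimize.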

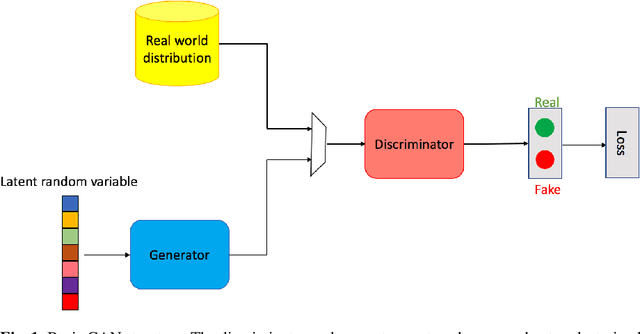

Generative Adversarial Networks

Mar 01, 2022

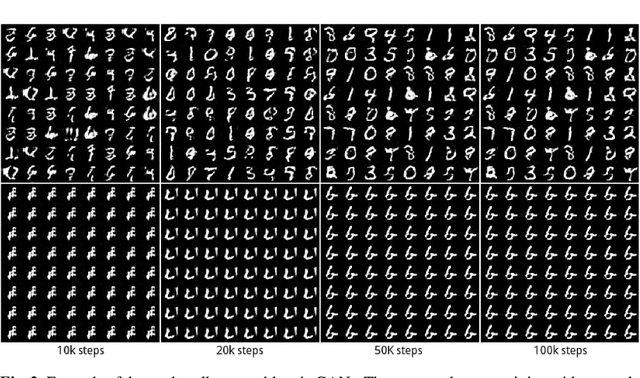

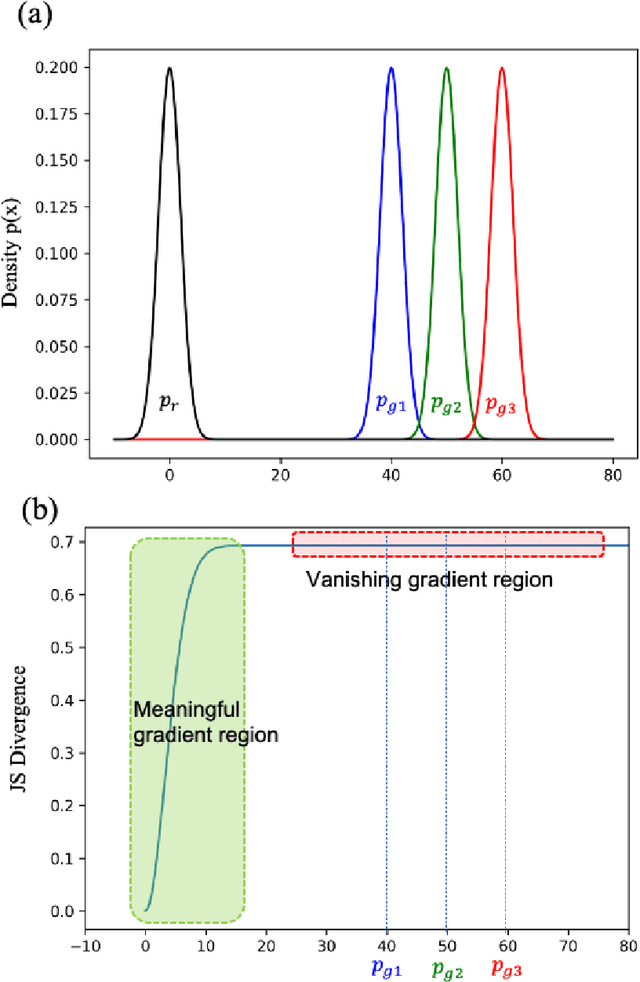

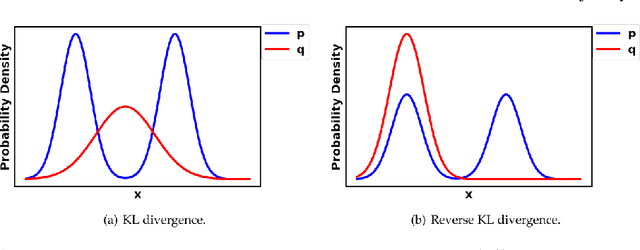

Generative Adversarial Networks (GANs) are very popular frameworks for generating high-quality data, and are widely used in both academia and industry in many domains. Arguably, their most substantial impact has been in the area of computer vision, where they achieve state-of-the-art image generation. This chapter gives an introduction to GANs by discussing their principal mechanism and presenting some of their inherent problems during training and evaluation. We focus on three issues: (1) mode collapse, (2) vanishing gradients, and (3) generation of low-quality images. We then list some architecture-variant and loss-variant GANs that remedy the above challenges. Lastly, we present two examples of GANs in real-world applications: data augmentation and face image generation.
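For context, the abstract does not spell out the training objective. In the original GAN formulation (an assumption about what the chapter covers), a generator $G$ and a discriminator $D$ play the minimax game below, where $p_{\text{data}}$ is the data distribution and $p_z$ is the noise prior:

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

The vanishing-gradient issue listed above is commonly explained from this form: when $D$ confidently rejects generated samples, $D(G(z))$ is close to 0 and the term $\log(1 - D(G(z)))$ saturates, leaving the generator with little gradient to learn from.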
